Update readme


README.md

CHANGED

|

@@ -11,8 +11,6 @@ inference: false

|

|

| 11 |

|

| 12 |

<video src='https://huggingface.co/ByteDance/AnimateDiff-Lightning/resolve/main/animatediff_lightning_samples_t2v.mp4' width="100%" autoplay muted loop></video>

|

| 13 |

|

| 14 |

-

<video src='https://huggingface.co/ByteDance/AnimateDiff-Lightning/resolve/main/animatediff_lightning_samples_v2v.mp4' width="100%" autoplay muted loop></video>

|

| 15 |

-

|

| 16 |

AnimateDiff-Lightning is a lightning-fast text-to-video generation model. It can generate 16-frame 512px videos in a few steps. For more information, please refer to our research paper: [AnimateDiff-Lightning: Cross-Model Diffusion Distillation](https://huggingface.co/ByteDance/AnimateDiff-Lightning/resolve/main/animatediff_lightning_report.pdf). We release the model as part of the research.

|

| 17 |

|

| 18 |

Our models are distilled from [AnimateDiff SD1.5 v2](https://huggingface.co/guoyww/animatediff). This repository contains checkpoints for 1-step, 2-step, 4-step, and 8-step distilled models. The generation quality of our 2-step, 4-step, and 8-step models is great. Our 1-step model is only provided for research purposes.

|

|

@@ -67,15 +65,39 @@ export_to_gif(output.frames[0], "animation.gif")

|

|

| 67 |

|

| 68 |

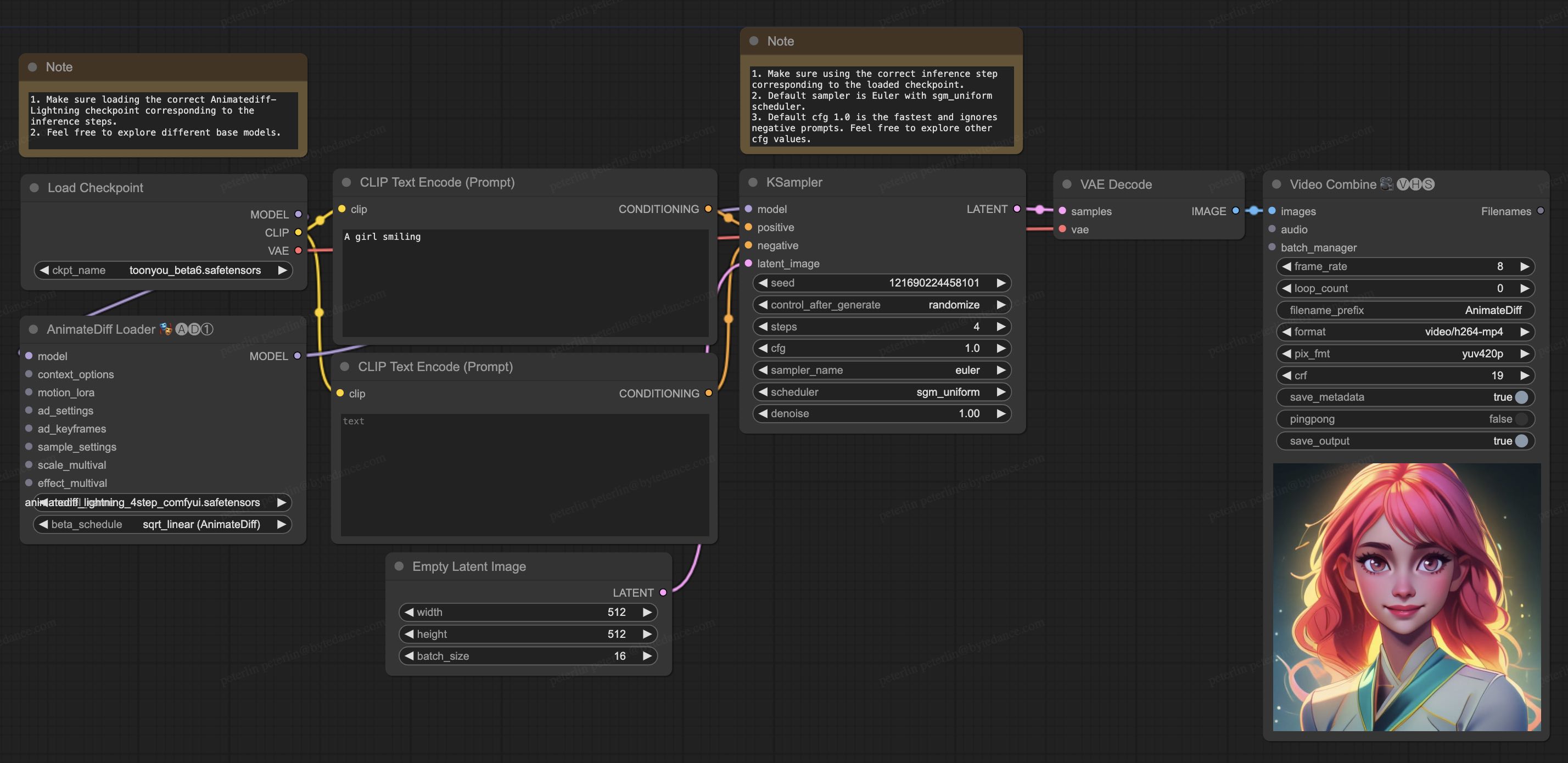

## ComfyUI Usage

|

| 69 |

|

| 70 |

-

1. Download [

|

| 71 |

-

|

| 72 |

-

2. Install nodes. You can install them manually or use [ComfyUI-Manager](https://github.com/ltdrdata/ComfyUI-Manager).

|

| 73 |

* [ComfyUI-AnimateDiff-Evolved](https://github.com/Kosinkadink/ComfyUI-AnimateDiff-Evolved)

|

| 74 |

* [ComfyUI-VideoHelperSuite](https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite)



|

| 15 |

|

| 16 |

Our models are distilled from [AnimateDiff SD1.5 v2](https://huggingface.co/guoyww/animatediff). This repository contains checkpoints for 1-step, 2-step, 4-step, and 8-step distilled models. The generation quality of our 2-step, 4-step, and 8-step models is great. Our 1-step model is only provided for research purposes.

|

|

|

|

| 65 |

|

| 66 |

## ComfyUI Usage

|

| 67 |

|

| 68 |

+

1. Download [animatediff_lightning_workflow.json](https://huggingface.co/ByteDance/AnimateDiff-Lightning/raw/main/comfyui/animatediff_lightning_workflow.json) and import it in ComfyUI.

|

| 69 |

+

1. Install nodes. You can install them manually or use [ComfyUI-Manager](https://github.com/ltdrdata/ComfyUI-Manager).

|

|

|

|

| 70 |

* [ComfyUI-AnimateDiff-Evolved](https://github.com/Kosinkadink/ComfyUI-AnimateDiff-Evolved)

|

| 71 |

* [ComfyUI-VideoHelperSuite](https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite)

|

| 72 |

+

1. Download your favorite base model checkpoint and put it under `/models/checkpoints/`

|

| 73 |

+

1. Download the AnimateDiff-Lightning checkpoint `animatediff_lightning_Nstep_comfyui.safetensors` and put it under `/custom_nodes/ComfyUI-AnimateDiff-Evolved/models/`
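The checkpoint placement in the steps above can be sketched in Python. This is a minimal sketch, assuming that `N` in the file name stands for the step count (1, 2, 4, or 8) and that the paths are relative to the ComfyUI install directory; the function names and the `comfyui_root` argument are hypothetical and not part of any library.

```python
from pathlib import Path

# Step counts for which the README says distilled checkpoints are released.
SUPPORTED_STEPS = (1, 2, 4, 8)


def comfyui_checkpoint_name(steps: int) -> str:
    """Fill in the N of animatediff_lightning_Nstep_comfyui.safetensors.

    Assumption: N is simply the step count written as a decimal number.
    """
    if steps not in SUPPORTED_STEPS:
        raise ValueError(f"steps must be one of {SUPPORTED_STEPS}, got {steps}")
    return f"animatediff_lightning_{steps}step_comfyui.safetensors"


def comfyui_checkpoint_path(comfyui_root: str, steps: int) -> Path:
    """Return where the checkpoint is expected to live.

    Assumption: the README's leading "/" means "relative to the ComfyUI
    install directory", passed here as comfyui_root.
    """
    return (
        Path(comfyui_root)
        / "custom_nodes"
        / "ComfyUI-AnimateDiff-Evolved"
        / "models"
        / comfyui_checkpoint_name(steps)
    )


if __name__ == "__main__":
    # Print the expected location of the 4-step checkpoint.
    print(comfyui_checkpoint_path("ComfyUI", 4))
```

Rejecting unsupported step counts early avoids a silent mismatch between the file name and the number of sampling steps set in the workflow.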


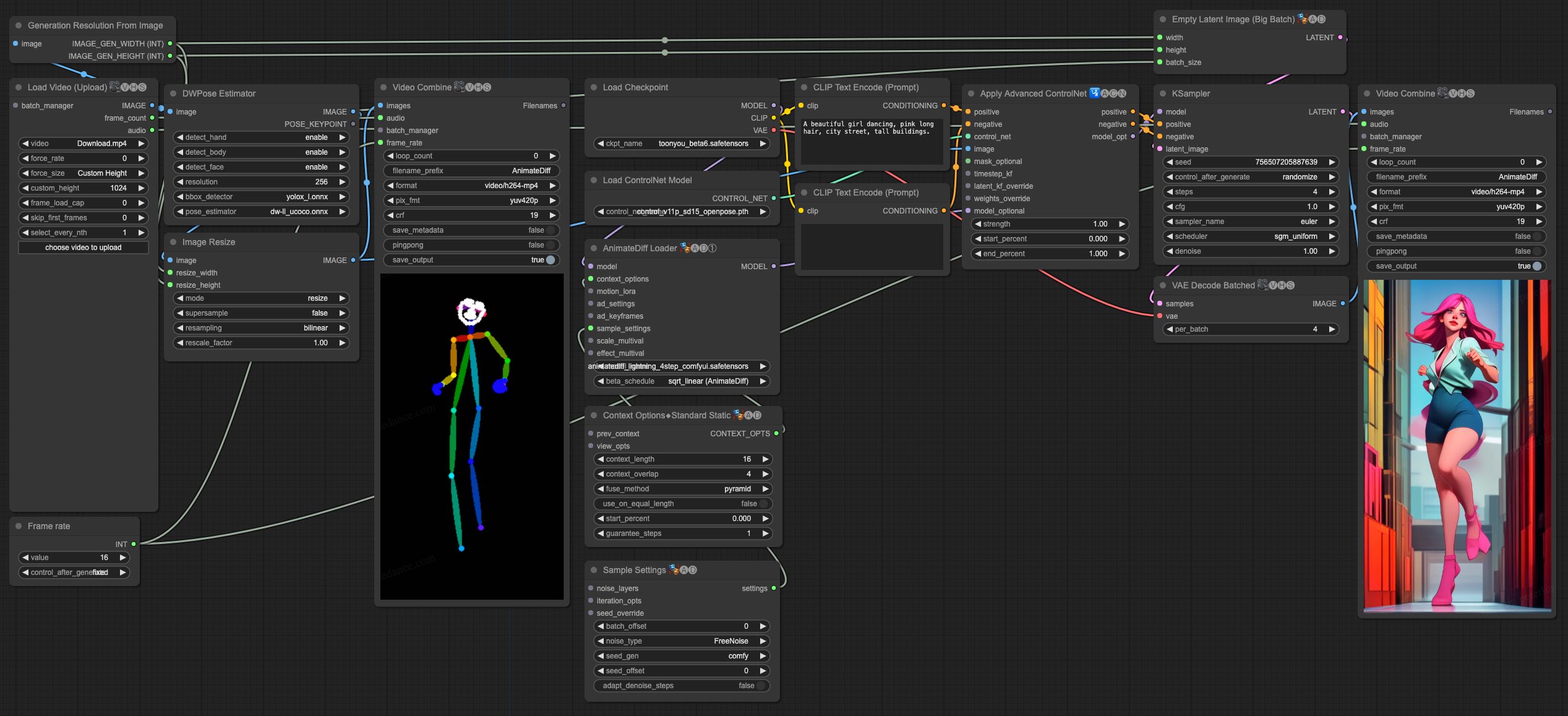

## Video-to-Video Generation

|

| 80 |

+

|

| 81 |

+

<video src='https://huggingface.co/ByteDance/AnimateDiff-Lightning/resolve/main/animatediff_lightning_samples_v2v.mp4' width="100%" autoplay muted loop></video>

|

| 82 |

+

|

| 83 |

+

AnimateDiff-Lightning is great for video-to-video generation. We provide the simplest ComfyUI workflow using ControlNet.

|

| 84 |

+

|

| 85 |

+

1. Download [animatediff_lightning_v2v_openpose_workflow.json](https://huggingface.co/ByteDance/AnimateDiff-Lightning/raw/main/comfyui/animatediff_lightning_v2v_openpose_workflow.json) and import it in ComfyUI.

|

| 86 |

+

1. Install nodes. You can install them manually or use [ComfyUI-Manager](https://github.com/ltdrdata/ComfyUI-Manager).

|

| 87 |

+

* [ComfyUI-AnimateDiff-Evolved](https://github.com/Kosinkadink/ComfyUI-AnimateDiff-Evolved)

|

| 88 |

+

* [ComfyUI-VideoHelperSuite](https://github.com/Kosinkadink/ComfyUI-VideoHelperSuite)

|

| 89 |

+

* [ComfyUI-Advanced-ControlNet](https://github.com/Kosinkadink/ComfyUI-Advanced-ControlNet)

|

| 90 |

+

* [comfyui_controlnet_aux](https://github.com/Fannovel16/comfyui_controlnet_aux)

|

| 91 |

+

1. Download your favorite base model checkpoint and put it under `/models/checkpoints/`

|

| 92 |

+

1. Download the AnimateDiff-Lightning checkpoint `animatediff_lightning_Nstep_comfyui.safetensors` and put it under `/custom_nodes/ComfyUI-AnimateDiff-Evolved/models/`

|

| 93 |

+

1. Download the [ControlNet OpenPose](https://huggingface.co/lllyasviel/ControlNet-v1-1/tree/main) checkpoint `control_v11p_sd15_openpose.pth` to `/models/controlnet/`

|

| 94 |

+

1. Upload your video and run the pipeline.
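Before running the workflow, it can help to confirm that the files from the steps above are in place. The following is a minimal sketch of such a check, assuming the listed paths are relative to the ComfyUI install directory and that `N` in the checkpoint name is the step count; `missing_v2v_files`, `comfyui_root`, and `base_checkpoint` are hypothetical names introduced for illustration.

```python
from pathlib import Path
from typing import List


def missing_v2v_files(comfyui_root: str, steps: int, base_checkpoint: str) -> List[Path]:
    """List the model files named in the steps above that are not present.

    Assumptions: paths are relative to comfyui_root, and base_checkpoint is
    the file name of whichever base model the user chose.
    """
    root = Path(comfyui_root)
    required = [
        # Base model checkpoint chosen by the user.
        root / "models" / "checkpoints" / base_checkpoint,
        # AnimateDiff-Lightning motion module for the chosen step count.
        root / "custom_nodes" / "ComfyUI-AnimateDiff-Evolved" / "models"
        / f"animatediff_lightning_{steps}step_comfyui.safetensors",
        # ControlNet OpenPose checkpoint.
        root / "models" / "controlnet" / "control_v11p_sd15_openpose.pth",
    ]
    return [path for path in required if not path.is_file()]


if __name__ == "__main__":
    # "my_base_model.safetensors" is a placeholder file name.
    for path in missing_v2v_files("ComfyUI", 4, "my_base_model.safetensors"):
        print(f"missing: {path}")
```

The check only covers model files; the custom nodes themselves still have to be installed manually or through ComfyUI-Manager.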

|

| 95 |

|

| 96 |

+

Additional notes:

|

| 97 |

|

| 98 |

+

1. The input video shouldn't be too long or too high-resolution. We used 576x1024, 8-second, 30 fps videos for testing.

|

| 99 |

+

1. Set the frame rate to match your input video. This keeps the audio in sync with the output video.

|

| 100 |

+

1. DWPose will download its checkpoint automatically on its first run.

|

| 101 |

+

1. DWPose may appear stuck in the UI while the pipeline is actually still running in the background. Check the ComfyUI log and your output folder.
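To make the note on video length and resolution concrete, the sketch below computes how many frames a clip contains and compares it with the test setting quoted above (576x1024, 8 seconds, 30 fps). Treating that setting as a reference envelope is an assumption made here for illustration; the notes do not state a hard limit, and both function names are hypothetical.

```python
# Test setting quoted in the notes: 576x1024, 8 seconds, 30 fps.
TESTED_WIDTH = 576
TESTED_HEIGHT = 1024
TESTED_SECONDS = 8
TESTED_FPS = 30


def frame_count(duration_seconds: float, fps: float) -> int:
    """Number of frames in a clip of the given duration and frame rate."""
    return round(duration_seconds * fps)


def within_tested_envelope(width: int, height: int,
                           duration_seconds: float, fps: float) -> bool:
    """True if the clip has no more pixels per frame and no more frames
    than the quoted test setting (an assumed reference, not a hard limit)."""
    pixels_ok = width * height <= TESTED_WIDTH * TESTED_HEIGHT
    frames_ok = frame_count(duration_seconds, fps) <= frame_count(TESTED_SECONDS, TESTED_FPS)
    return pixels_ok and frames_ok


if __name__ == "__main__":
    print(frame_count(8, 30))                        # 240
    print(within_tested_envelope(576, 1024, 8, 30))  # True
    print(within_tested_envelope(1080, 1920, 8, 30)) # False
```

A clip outside this envelope may still work; the check only flags inputs that are larger than what the notes report as tested.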
