Update README.md

Browse files

README.md

CHANGED

|

@@ -3,6 +3,9 @@ language:

|

|

| 3 |

- en

|

| 4 |

datasets:

|

| 5 |

- garage-bAInd/Open-Platypus

|

|

|

|

|

|

|

|

|

|

| 6 |

license: cc-by-nc-4.0

|

| 7 |

---

|

| 8 |

|

|

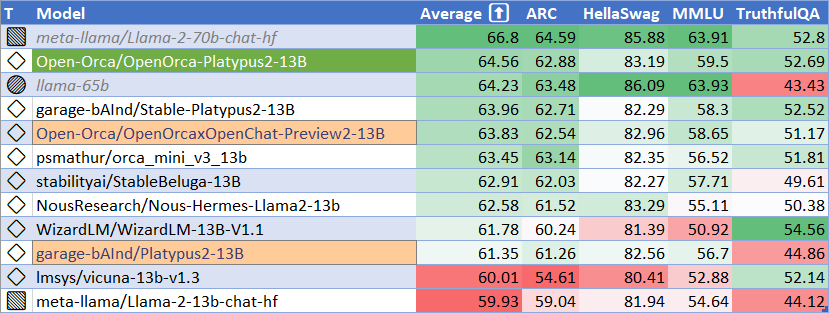

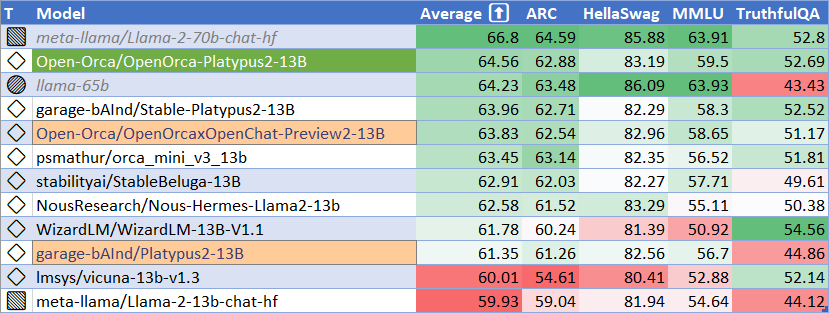

@@ -29,7 +32,7 @@ We will also give sneak-peak announcements on our Discord, which you can find he

|

|

| 29 |

|

| 30 |

https://AlignmentLab.ai

|

| 31 |

|

| 32 |

-

#

|

| 33 |

|

| 34 |

|

| 35 |

|

|

@@ -47,13 +50,14 @@ We use [Language Model Evaluation Harness](https://github.com/EleutherAI/lm-eval

|

|

| 47 |

# Model Details

|

| 48 |

|

| 49 |

* **Trained by**: **Platypus2-13B** trained by Cole Hunter & Ariel Lee; **OpenOrcaxOpenChat-Preview2-13B** trained by Open-Orca

|

| 50 |

-

* **Model type:** **OpenOrca-Platypus2-13B** is an auto-regressive language model based on the

|

| 51 |

* **Language(s)**: English

|

| 52 |

* **License for Platypus2-13B base weights**: Non-Commercial Creative Commons license ([CC BY-NC-4.0](https://creativecommons.org/licenses/by-nc/4.0/))

|

| 53 |

-

* **License for OpenOrcaxOpenChat-Preview2-13B base weights**:

|

| 54 |

|

| 55 |

|

| 56 |

# Prompt Template for base Platypus2-13B

|

|

|

|

| 57 |

```

|

| 58 |

### Instruction:

|

| 59 |

|

|

@@ -79,7 +83,8 @@ Please see our [paper](https://platypus-llm.github.io/Platypus.pdf) and [project

|

|

| 79 |

|

| 80 |

# Training Procedure

|

| 81 |

|

| 82 |

-

`Open-Orca/Platypus2-13B` was instruction fine-tuned using LoRA on

|

|

|

|

| 83 |

|

| 84 |

|

| 85 |

# Reproducing Evaluation Results

|

|

@@ -95,7 +100,7 @@ git checkout b281b0921b636bc36ad05c0b0b0763bd6dd43463

|

|

| 95 |

# install

|

| 96 |

pip install -e .

|

| 97 |

```

|

| 98 |

-

Each task was evaluated on a single A100

|

| 99 |

|

| 100 |

ARC:

|

| 101 |

```

|

|

@@ -127,22 +132,6 @@ Please see the Responsible Use Guide available at https://ai.meta.com/llama/resp

|

|

| 127 |

|

| 128 |

# Citations

|

| 129 |

|

| 130 |

-

```bibtex

|

| 131 |

-

@misc{touvron2023llama,

|

| 132 |

-

title={Llama 2: Open Foundation and Fine-Tuned Chat Models},

|

| 133 |

-

author={Hugo Touvron and Louis Martin and Kevin Stone and Peter Albert and Amjad Almahairi and Yasmine Babaei and Nikolay Bashlykov and Soumya Batra and Prajjwal Bhargava and Shruti Bhosale and Dan Bikel and Lukas Blecher and Cristian Canton Ferrer and Moya Chen and Guillem Cucurull and David Esiobu and Jude Fernandes and Jeremy Fu and Wenyin Fu and Brian Fuller and Cynthia Gao and Vedanuj Goswami and Naman Goyal and Anthony Hartshorn and Saghar Hosseini and Rui Hou and Hakan Inan and Marcin Kardas and Viktor Kerkez and Madian Khabsa and Isabel Kloumann and Artem Korenev and Punit Singh Koura and Marie-Anne Lachaux and Thibaut Lavril and Jenya Lee and Diana Liskovich and Yinghai Lu and Yuning Mao and Xavier Martinet and Todor Mihaylov and Pushkar Mishra and Igor Molybog and Yixin Nie and Andrew Poulton and Jeremy Reizenstein and Rashi Rungta and Kalyan Saladi and Alan Schelten and Ruan Silva and Eric Michael Smith and Ranjan Subramanian and Xiaoqing Ellen Tan and Binh Tang and Ross Taylor and Adina Williams and Jian Xiang Kuan and Puxin Xu and Zheng Yan and Iliyan Zarov and Yuchen Zhang and Angela Fan and Melanie Kambadur and Sharan Narang and Aurelien Rodriguez and Robert Stojnic and Sergey Edunov and Thomas Scialom},

|

| 134 |

-

year={2023},

|

| 135 |

-

eprint= arXiv 2307.09288

|

| 136 |

-

}

|

| 137 |

-

```

|

| 138 |

-

```bibtex

|

| 139 |

-

@article{hu2021lora,

|

| 140 |

-

title={LoRA: Low-Rank Adaptation of Large Language Models},

|

| 141 |

-

author={Hu, Edward J. and Shen, Yelong and Wallis, Phillip and Allen-Zhu, Zeyuan and Li, Yuanzhi and Wang, Shean and Chen, Weizhu},

|

| 142 |

-

journal={CoRR},

|

| 143 |

-

year={2021}

|

| 144 |

-

}

|

| 145 |

-

```

|

| 146 |

```bibtex

|

| 147 |

@software{OpenOrcaxOpenChatPreview2,

|

| 148 |

title = {OpenOrcaxOpenChatPreview2: Llama2-13B Model Instruct-tuned on Filtered OpenOrcaV1 GPT-4 Dataset},

|

|

@@ -152,8 +141,6 @@ Please see the Responsible Use Guide available at https://ai.meta.com/llama/resp

|

|

| 152 |

journal = {HuggingFace repository},

|

| 153 |

howpublished = {\url{https://https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B},

|

| 154 |

}

|

| 155 |

-

```

|

| 156 |

-

```bibtex

|

| 157 |

@software{openchat,

|

| 158 |

title = {{OpenChat: Advancing Open-source Language Models with Imperfect Data}},

|

| 159 |

author = {Wang, Guan and Cheng, Sijie and Yu, Qiying and Liu, Changling},

|

|

@@ -163,4 +150,32 @@ Please see the Responsible Use Guide available at https://ai.meta.com/llama/resp

|

|

| 163 |

year = {2023},

|

| 164 |

month = {7},

|

| 165 |

}

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 166 |

```

|

|

|

|

| 3 |

- en

|

| 4 |

datasets:

|

| 5 |

- garage-bAInd/Open-Platypus

|

| 6 |

+

- Open-Orca/OpenOrca

|

| 7 |

+

library_name: transformers

|

| 8 |

+

pipeline_tag: text-generation

|

| 9 |

license: cc-by-nc-4.0

|

| 10 |

---

|

| 11 |

|

|

|

|

| 32 |

|

| 33 |

https://AlignmentLab.ai

|

| 34 |

|

| 35 |

+

# Evaluation

|

| 36 |

|

| 37 |

|

| 38 |

|

|

|

|

| 50 |

# Model Details

|

| 51 |

|

| 52 |

* **Trained by**: **Platypus2-13B** trained by Cole Hunter & Ariel Lee; **OpenOrcaxOpenChat-Preview2-13B** trained by Open-Orca

|

| 53 |

+

* **Model type:** **OpenOrca-Platypus2-13B** is an auto-regressive language model based on the Lllama 2 transformer architecture.

|

| 54 |

* **Language(s)**: English

|

| 55 |

* **License for Platypus2-13B base weights**: Non-Commercial Creative Commons license ([CC BY-NC-4.0](https://creativecommons.org/licenses/by-nc/4.0/))

|

| 56 |

+

* **License for OpenOrcaxOpenChat-Preview2-13B base weights**: Llama 2 Commercial

|

| 57 |

|

| 58 |

|

| 59 |

# Prompt Template for base Platypus2-13B

|

| 60 |

+

|

| 61 |

```

|

| 62 |

### Instruction:

|

| 63 |

|

|

|

|

| 83 |

|

| 84 |

# Training Procedure

|

| 85 |

|

| 86 |

+

`Open-Orca/Platypus2-13B` was instruction fine-tuned using LoRA on 1x A100-80GB.

|

| 87 |

+

For training details and inference instructions please see the [Platypus](https://github.com/arielnlee/Platypus) GitHub repo.

|

| 88 |

|

| 89 |

|

| 90 |

# Reproducing Evaluation Results

|

|

|

|

| 100 |

# install

|

| 101 |

pip install -e .

|

| 102 |

```

|

| 103 |

+

Each task was evaluated on a single A100-80GB GPU.

|

| 104 |

|

| 105 |

ARC:

|

| 106 |

```

|

|

|

|

| 132 |

|

| 133 |

# Citations

|

| 134 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 135 |

```bibtex

|

| 136 |

@software{OpenOrcaxOpenChatPreview2,

|

| 137 |

title = {OpenOrcaxOpenChatPreview2: Llama2-13B Model Instruct-tuned on Filtered OpenOrcaV1 GPT-4 Dataset},

|

|

|

|

| 141 |

journal = {HuggingFace repository},

|

| 142 |

howpublished = {\url{https://https://huggingface.co/Open-Orca/OpenOrcaxOpenChat-Preview2-13B},

|

| 143 |

}

|

|

|

|

|

|

|

| 144 |

@software{openchat,

|

| 145 |

title = {{OpenChat: Advancing Open-source Language Models with Imperfect Data}},

|

| 146 |

author = {Wang, Guan and Cheng, Sijie and Yu, Qiying and Liu, Changling},

|

|

|

|

| 150 |

year = {2023},

|

| 151 |

month = {7},

|

| 152 |

}

|

| 153 |

+

@misc{mukherjee2023orca,

|

| 154 |

+

title={Orca: Progressive Learning from Complex Explanation Traces of GPT-4},

|

| 155 |

+

author={Subhabrata Mukherjee and Arindam Mitra and Ganesh Jawahar and Sahaj Agarwal and Hamid Palangi and Ahmed Awadallah},

|

| 156 |

+

year={2023},

|

| 157 |

+

eprint={2306.02707},

|

| 158 |

+

archivePrefix={arXiv},

|

| 159 |

+

primaryClass={cs.CL}

|

| 160 |

+

}

|

| 161 |

+

@misc{touvron2023llama,

|

| 162 |

+

title={Llama 2: Open Foundation and Fine-Tuned Chat Models},

|

| 163 |

+

author={Hugo Touvron and Louis Martin and Kevin Stone and Peter Albert and Amjad Almahairi and Yasmine Babaei and Nikolay Bashlykov and Soumya Batra and Prajjwal Bhargava and Shruti Bhosale and Dan Bikel and Lukas Blecher and Cristian Canton Ferrer and Moya Chen and Guillem Cucurull and David Esiobu and Jude Fernandes and Jeremy Fu and Wenyin Fu and Brian Fuller and Cynthia Gao and Vedanuj Goswami and Naman Goyal and Anthony Hartshorn and Saghar Hosseini and Rui Hou and Hakan Inan and Marcin Kardas and Viktor Kerkez and Madian Khabsa and Isabel Kloumann and Artem Korenev and Punit Singh Koura and Marie-Anne Lachaux and Thibaut Lavril and Jenya Lee and Diana Liskovich and Yinghai Lu and Yuning Mao and Xavier Martinet and Todor Mihaylov and Pushkar Mishra and Igor Molybog and Yixin Nie and Andrew Poulton and Jeremy Reizenstein and Rashi Rungta and Kalyan Saladi and Alan Schelten and Ruan Silva and Eric Michael Smith and Ranjan Subramanian and Xiaoqing Ellen Tan and Binh Tang and Ross Taylor and Adina Williams and Jian Xiang Kuan and Puxin Xu and Zheng Yan and Iliyan Zarov and Yuchen Zhang and Angela Fan and Melanie Kambadur and Sharan Narang and Aurelien Rodriguez and Robert Stojnic and Sergey Edunov and Thomas Scialom},

|

| 164 |

+

year={2023},

|

| 165 |

+

eprint= arXiv 2307.09288

|

| 166 |

+

}

|

| 167 |

+

@misc{longpre2023flan,

|

| 168 |

+

title={The Flan Collection: Designing Data and Methods for Effective Instruction Tuning},

|

| 169 |

+

author={Shayne Longpre and Le Hou and Tu Vu and Albert Webson and Hyung Won Chung and Yi Tay and Denny Zhou and Quoc V. Le and Barret Zoph and Jason Wei and Adam Roberts},

|

| 170 |

+

year={2023},

|

| 171 |

+

eprint={2301.13688},

|

| 172 |

+

archivePrefix={arXiv},

|

| 173 |

+

primaryClass={cs.AI}

|

| 174 |

+

}

|

| 175 |

+

@article{hu2021lora,

|

| 176 |

+

title={LoRA: Low-Rank Adaptation of Large Language Models},

|

| 177 |

+

author={Hu, Edward J. and Shen, Yelong and Wallis, Phillip and Allen-Zhu, Zeyuan and Li, Yuanzhi and Wang, Shean and Chen, Weizhu},

|

| 178 |

+

journal={CoRR},

|

| 179 |

+

year={2021}

|

| 180 |

+

}

|

| 181 |

```

|