3dc5eae27faca490b6ca87a0dd7a6bbcad85ccec4f07c3d94e19bff02116206b

- README.md +1 -1
- config.json +1 -1
- plots.png +0 -0
- smash_config.json +5 -5

README.md CHANGED

    @@ -61,7 +61,7 @@ You can run the smashed model with these steps:
     from transformers import AutoModelForCausalLM, AutoTokenizer
     
     model = AutoModelForCausalLM.from_pretrained("PrunaAI/42dot-42dot_LLM-PLM-1.3B-bnb-4bit-smashed",
    -trust_remote_code=True)
    +trust_remote_code=True, device_map='auto')
     tokenizer = AutoTokenizer.from_pretrained("42dot/42dot_LLM-PLM-1.3B")
     
     input_ids = tokenizer("What is the color of prunes?,", return_tensors='pt').to(model.device)["input_ids"]
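For reference, the updated README snippet can be wrapped into a small end-to-end function. This is a sketch, not the README's full instructions: the function name `generate_with_smashed_model`, the `max_new_tokens` default, and the `generate`/`decode` steps after `input_ids` are assumptions, because the diff hunk stops at the tokenization line. Passing `device_map='auto'` also requires the `accelerate` package to be installed alongside `transformers`.

```python
def generate_with_smashed_model(prompt: str = "What is the color of prunes?",
                                max_new_tokens: int = 64) -> str:
    """Hypothetical helper: load the smashed model as in the updated README and generate text."""
    # Imported lazily so the function can be defined without the heavy dependencies installed.
    from transformers import AutoModelForCausalLM, AutoTokenizer

    model = AutoModelForCausalLM.from_pretrained(
        "PrunaAI/42dot-42dot_LLM-PLM-1.3B-bnb-4bit-smashed",
        trust_remote_code=True,
        device_map="auto",  # the argument added by this commit; needs `accelerate`
    )
    tokenizer = AutoTokenizer.from_pretrained("42dot/42dot_LLM-PLM-1.3B")

    # Move the tokenized prompt to whatever device the weights were placed on.
    input_ids = tokenizer(prompt, return_tensors="pt").to(model.device)["input_ids"]

    # Assumed generation step (not part of the diff hunk shown above).
    output_ids = model.generate(input_ids, max_new_tokens=max_new_tokens)
    return tokenizer.decode(output_ids[0], skip_special_tokens=True)
```

Calling `generate_with_smashed_model()` downloads the smashed checkpoint and the 42dot tokenizer from the Hugging Face Hub and needs the same GPU and bitsandbytes setup the README assumes. With `device_map="auto"`, Transformers (through Accelerate) chooses where to place the weights, and `model.device` then tells the caller where the inputs must go.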

config.json CHANGED

    @@ -1,5 +1,5 @@
     {
    -"_name_or_path": "/tmp/
    +"_name_or_path": "/tmp/tmpor5u0g_u",
     "architectures": [
     "LlamaForCausalLM"
     ],

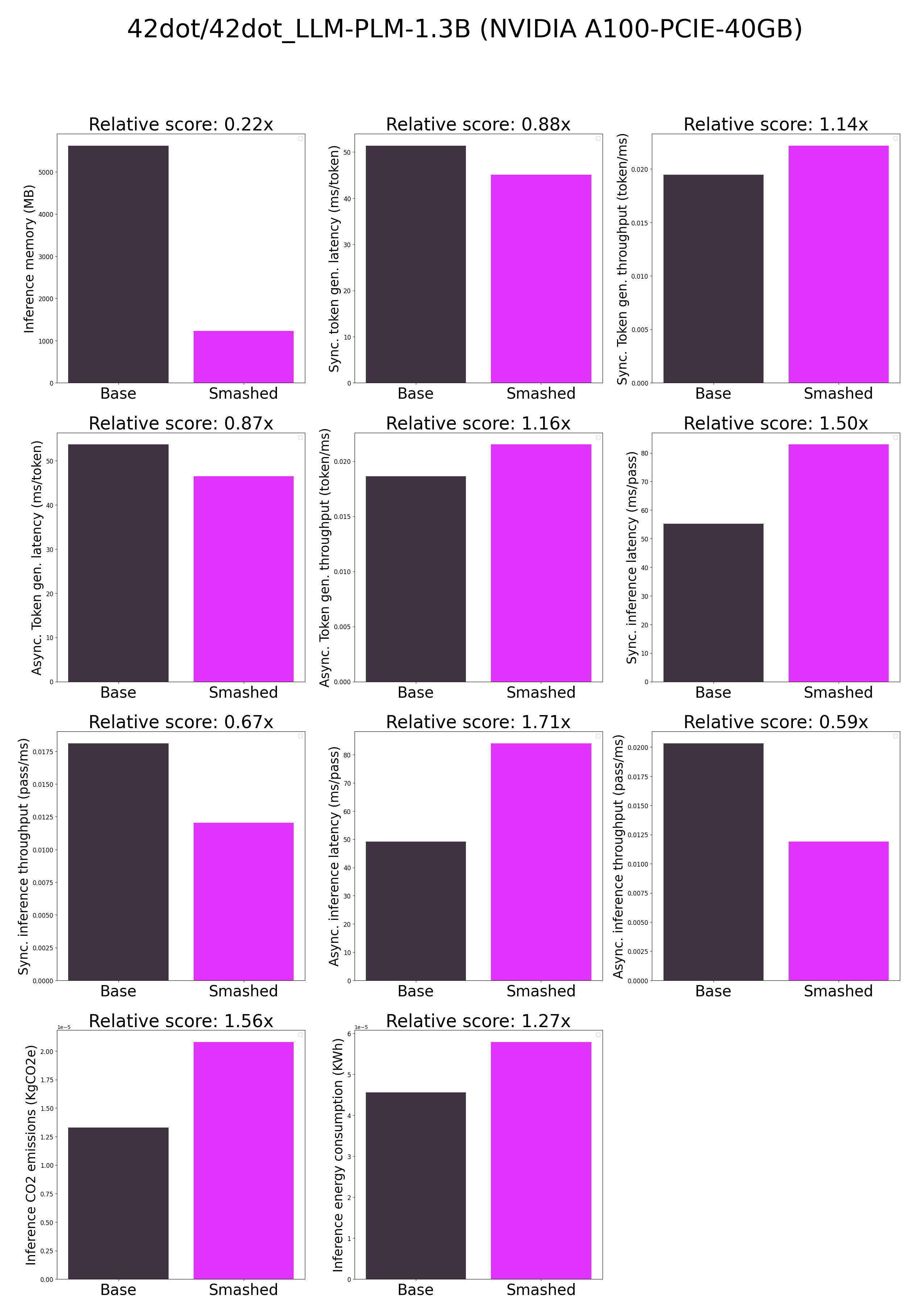

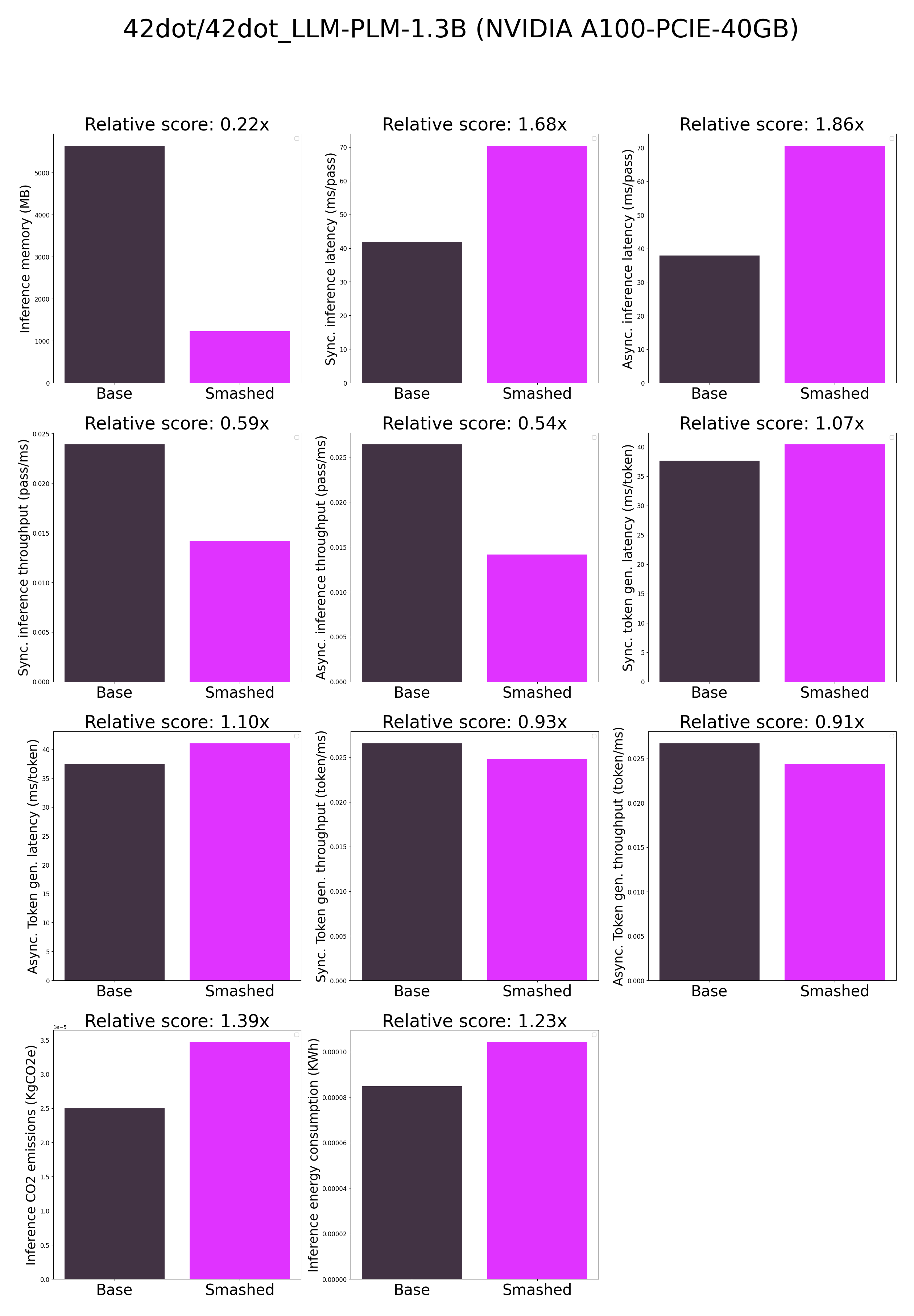

plots.png

CHANGED

|

|

smash_config.json CHANGED

    @@ -2,18 +2,18 @@
     "api_key": null,
     "verify_url": "http://johnrachwan.pythonanywhere.com",
     "smash_config": {
    -"pruners": "
    -"factorizers": "
    +"pruners": "None",
    +"factorizers": "None",
     "quantizers": "['llm-int8']",
    -"compilers": "
    +"compilers": "None",
     "task": "text_text_generation",
     "device": "cuda",
    -"cache_dir": "/ceph/hdd/staff/charpent/.cache/
    +"cache_dir": "/ceph/hdd/staff/charpent/.cache/modelsz_jn2cg7",
     "batch_size": 1,
     "model_name": "42dot/42dot_LLM-PLM-1.3B",
     "pruning_ratio": 0.0,
     "n_quantization_bits": 4,
    -"output_deviation": 0.
    +"output_deviation": 0.005,
     "max_batch_size": 1,
     "qtype_weight": "torch.qint8",
     "qtype_activation": "torch.quint8",
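In the updated smash_config.json, the unused algorithm slots (`pruners`, `factorizers`, `compilers`) hold the string `"None"` and `quantizers` holds a stringified Python list, rather than JSON `null` or a JSON array. A minimal sketch for reading such fields back into Python values, assuming only the fields visible in the diff (the helper `parse_smash_field` is hypothetical and not part of any Pruna API):

```python
import ast
import json

# Fragment of the "smash_config" object from the updated file; outer keys
# (api_key, verify_url) and cache_dir are omitted for brevity.
UPDATED_SMASH_CONFIG = """
{
  "pruners": "None",
  "factorizers": "None",
  "quantizers": "['llm-int8']",
  "compilers": "None",
  "task": "text_text_generation",
  "device": "cuda",
  "batch_size": 1,
  "model_name": "42dot/42dot_LLM-PLM-1.3B",
  "pruning_ratio": 0.0,
  "n_quantization_bits": 4,
  "output_deviation": 0.005,
  "max_batch_size": 1,
  "qtype_weight": "torch.qint8",
  "qtype_activation": "torch.quint8"
}
"""


def parse_smash_field(value):
    """Hypothetical helper: turn stringified Python literals back into values.

    Strings such as "None" or "['llm-int8']" are evaluated safely with
    ast.literal_eval. Strings that are not Python literals (e.g. "cuda",
    "torch.qint8") are returned unchanged, as are non-string JSON values.
    """
    if not isinstance(value, str):
        return value
    try:
        return ast.literal_eval(value)
    except (ValueError, SyntaxError):
        return value


config = {key: parse_smash_field(val) for key, val in json.loads(UPDATED_SMASH_CONFIG).items()}

print(config["pruners"], config["quantizers"], config["qtype_weight"])
# prints: None ['llm-int8'] torch.qint8
```

`ast.literal_eval` accepts only Python literals, so values such as `"cuda"` or `"torch.qint8"` fall through unchanged instead of being executed, which is why it is used here rather than `eval`.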