Upload folder using huggingface_hub (#2)

- 888aa0346f6ad1bfdeb436d8fc737cac86d4c5d19b684432a1b88c8c9fbc61a3 (128a1e53b55e3892e26fd3d7d12a63e3d3a1888a)

- README.md +1 -1

- config.json +2 -2

- model.safetensors +2 -2

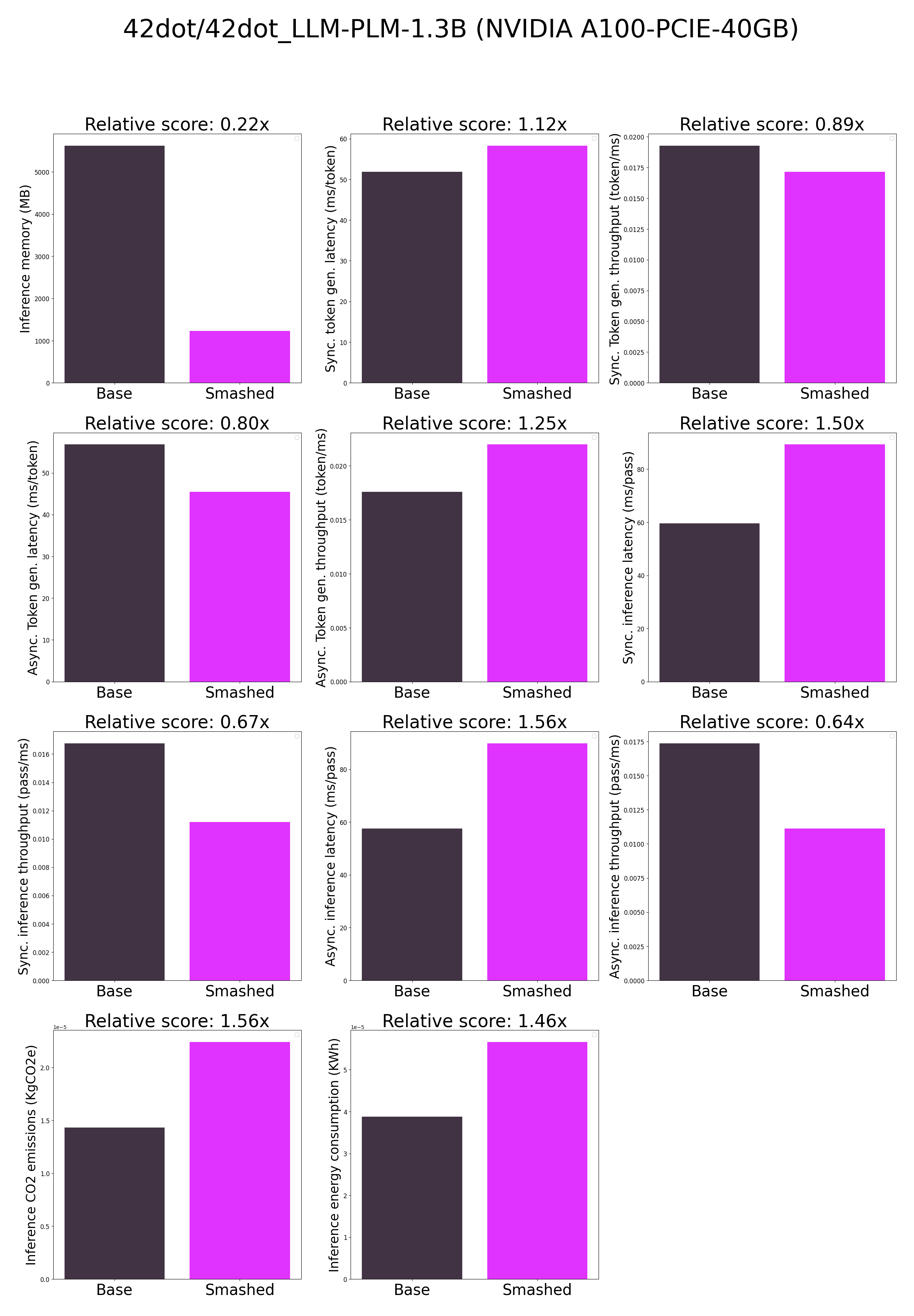

- plots.png +0 -0

- smash_config.json +5 -5

README.md

CHANGED

@@ -34,7 +34,7 @@ tags:

 ## Results

-
+

 **Frequently Asked Questions**
 - ***How does the compression work?*** The model is compressed with llm-int8.

config.json

CHANGED

@@ -1,5 +1,5 @@
 {
-  "_name_or_path": "/tmp/
+  "_name_or_path": "/tmp/tmplq5oo90c",
   "architectures": [
     "LlamaForCausalLM"
   ],
@@ -21,7 +21,7 @@
   "quantization_config": {
     "bnb_4bit_compute_dtype": "bfloat16",
     "bnb_4bit_quant_type": "fp4",
-    "bnb_4bit_use_double_quant":
+    "bnb_4bit_use_double_quant": false,
     "llm_int8_enable_fp32_cpu_offload": false,
     "llm_int8_has_fp16_weight": false,
     "llm_int8_skip_modules": [
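The `quantization_config` keys in this hunk use the same names as the arguments of `transformers.BitsAndBytesConfig`, and they mix two families: `bnb_4bit_*` and `llm_int8_*`. The following is a minimal sketch, assuming only the fields visible in the diff (the real `config.json` has more keys, and `llm_int8_skip_modules` is cut off here, so it is left out). `split_quant_settings` is a hypothetical helper, not part of any library; it only groups the keys by prefix so the two families can be read side by side.

```python
import json

# Fragment rebuilt from the fields visible in the diff. The full config.json
# contains more keys; llm_int8_skip_modules is truncated in the diff and is
# therefore omitted rather than guessed.
CONFIG_FRAGMENT = """
{
  "quantization_config": {
    "bnb_4bit_compute_dtype": "bfloat16",
    "bnb_4bit_quant_type": "fp4",
    "bnb_4bit_use_double_quant": false,
    "llm_int8_enable_fp32_cpu_offload": false,
    "llm_int8_has_fp16_weight": false
  }
}
"""


def split_quant_settings(config: dict) -> dict:
    """Group quantization_config keys by prefix (hypothetical helper)."""
    groups = {"bnb_4bit": {}, "llm_int8": {}, "other": {}}
    for key, value in config.get("quantization_config", {}).items():
        for prefix in ("bnb_4bit", "llm_int8"):
            if key.startswith(prefix + "_"):
                # Strip the prefix so only the setting name remains.
                groups[prefix][key[len(prefix) + 1:]] = value
                break
        else:
            groups["other"][key] = value
    return groups


settings = split_quant_settings(json.loads(CONFIG_FRAGMENT))
for group, values in settings.items():
    print(group, values)
```

Grouping by prefix is only a reading aid; it does not say which quantization mode is active when the model is loaded.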

model.safetensors

CHANGED

@@ -1,3 +1,3 @@
 version https://git-lfs.github.com/spec/v1
-oid sha256:
-size
+oid sha256:647313ff4fcdc83899aeea8184817f407a2e2ffdfd5fd2cf27016d86cfe7a155
+size 1106034112
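The `model.safetensors` entry in the repository is a Git LFS pointer: three `key value` lines giving the spec version, the SHA-256 of the real file, and its size in bytes. As a rough sketch of how a downloaded file could be checked against the new pointer, the helpers below parse the pointer text and compare size and digest. `parse_lfs_pointer` and `matches_pointer` are hypothetical names chosen for illustration, and the check assumes the `oid` uses `sha256`, as it does in this diff.

```python
import hashlib
from pathlib import Path

# Pointer contents copied from the "+" side of the diff.
POINTER_TEXT = """\
version https://git-lfs.github.com/spec/v1
oid sha256:647313ff4fcdc83899aeea8184817f407a2e2ffdfd5fd2cf27016d86cfe7a155
size 1106034112
"""


def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer file."""
    fields = dict(
        line.split(" ", 1) for line in text.splitlines() if line.strip()
    )
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


def matches_pointer(path: Path, pointer: dict, chunk_size: int = 1 << 20) -> bool:
    """Return True if the file has the size and SHA-256 named by the pointer."""
    if pointer["algo"] != "sha256":
        raise ValueError(f"unsupported oid algorithm: {pointer['algo']}")
    if path.stat().st_size != pointer["size"]:
        return False  # cheap check first, before hashing about 1 GB
    hasher = hashlib.sha256()
    with path.open("rb") as handle:
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            hasher.update(chunk)
    return hasher.hexdigest() == pointer["digest"]


pointer = parse_lfs_pointer(POINTER_TEXT)
print(pointer["algo"], pointer["size"])
```

Calling `matches_pointer(Path("model.safetensors"), pointer)` on a local copy would then confirm that the download corresponds to this commit.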

plots.png

ADDED


smash_config.json

CHANGED

@@ -2,18 +2,18 @@
   "api_key": null,
   "verify_url": "http://johnrachwan.pythonanywhere.com",
   "smash_config": {
-    "pruners": "
-    "factorizers": "
+    "pruners": "[]",
+    "factorizers": "[]",
     "quantizers": "['llm-int8']",
-    "compilers": "
+    "compilers": "[]",
     "task": "text_text_generation",
     "device": "cuda",
-    "cache_dir": "/ceph/hdd/staff/charpent/.cache/
+    "cache_dir": "/ceph/hdd/staff/charpent/.cache/models7ldbxd23",
     "batch_size": 1,
     "model_name": "42dot/42dot_LLM-PLM-1.3B",
     "pruning_ratio": 0.0,
     "n_quantization_bits": 4,
-    "output_deviation": 0.
+    "output_deviation": 0.01,
     "max_batch_size": 1,
     "qtype_weight": "torch.qint8",
     "qtype_activation": "torch.quint8",
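In `smash_config.json` the `pruners`, `factorizers`, `quantizers` and `compilers` fields are JSON strings that hold Python list literals (`"[]"`, `"['llm-int8']"`), so `json.loads` alone leaves them as strings, and the single quotes mean the inner value is not valid JSON either. One way to read them, sketched here under the assumption that the values are always plain list literals, is `ast.literal_eval`. `decode_list_fields` is a hypothetical helper written for this example.

```python
import ast

# Values copied from the "+" side of the diff; only the list-valued fields.
SMASH_CONFIG = {
    "pruners": "[]",
    "factorizers": "[]",
    "quantizers": "['llm-int8']",
    "compilers": "[]",
}


def decode_list_fields(config: dict) -> dict:
    """Turn string-encoded Python lists into real lists (hypothetical helper)."""
    decoded = {}
    for key, raw in config.items():
        # literal_eval only evaluates literals, so it will not run code.
        value = ast.literal_eval(raw)
        if not isinstance(value, list):
            raise ValueError(f"{key} is not a list: {raw!r}")
        decoded[key] = value
    return decoded


decoded = decode_list_fields(SMASH_CONFIG)
# Names of the stages that have at least one method configured.
active = [name for name, methods in decoded.items() if methods]
print(active)
```

With the values from this commit, only the quantizer stage has a method listed; the other three stages are empty.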