Upload folder using huggingface_hub (#2)

- 7dcfa8ceefd87824d100303a02dd01a566404f8d774368b2ba3c28071240c2fb (a75d46dd99b327b4fb12027aa665f613e94c1f61)

- 410966bf95d7a764c571b4174b9f0eca23ecfa9be451d687044240ecc312c08e (32f0dfabe528cfc018ac6e08e237c2acd4eaf6d1)

- config.json +1 -1

- plots.png +0 -0

- smash_config.json +1 -1

config.json

CHANGED

@@ -1,5 +1,5 @@

| Line | Change | Content |
| --- | --- | --- |
| 1 | | `{` |
| 2 | - | `"_name_or_path": "/tmp/` |
| 2 | + | `"_name_or_path": "/tmp/tmph5te9wun",` |
| 3 | | `"architectures": [` |
| 4 | | `"LlamaForCausalLM"` |
| 5 | | `],` |

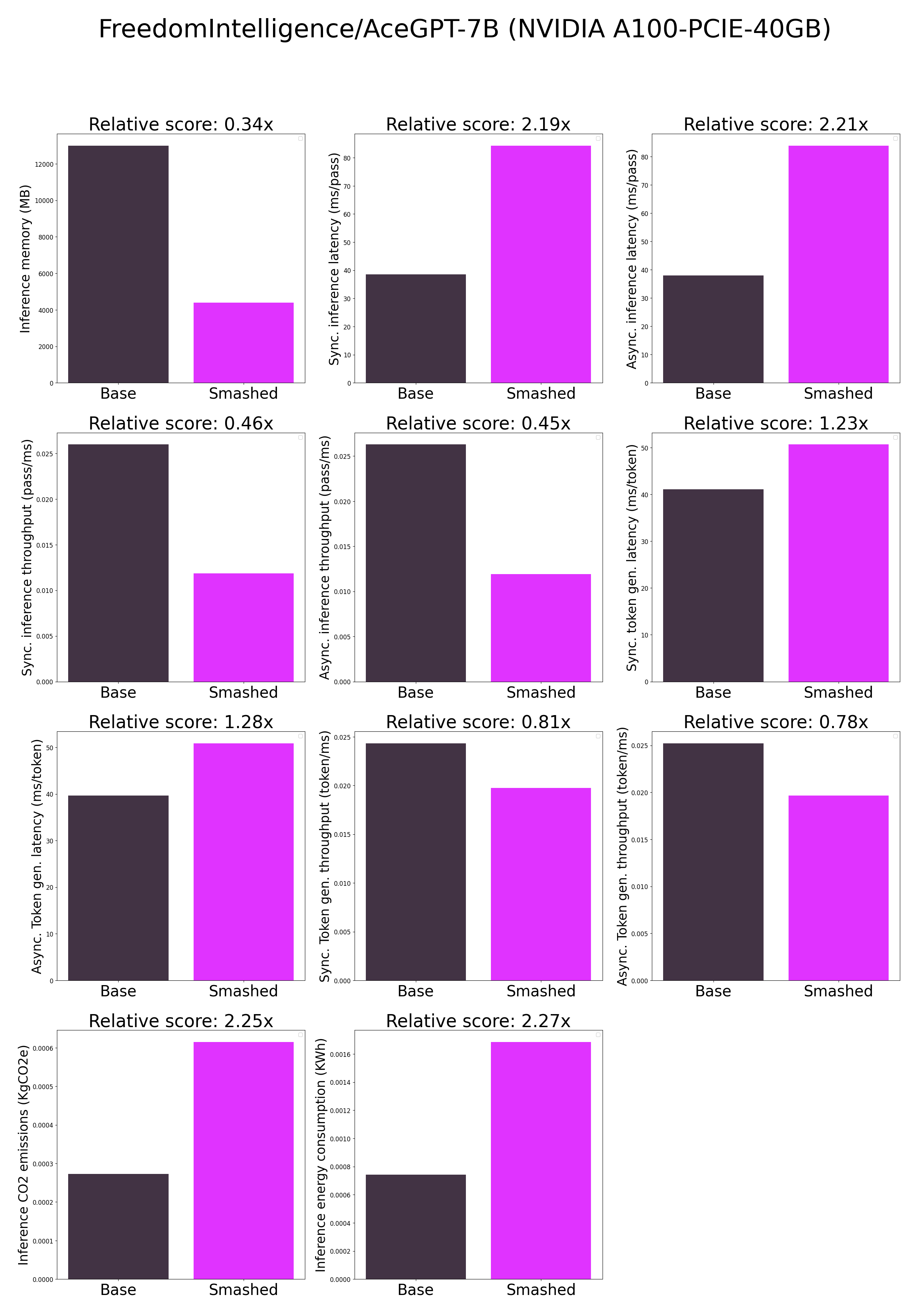

plots.png

CHANGED


smash_config.json

CHANGED

@@ -8,7 +8,7 @@

| Line | Change | Content |
| --- | --- | --- |
| 8 | | `"compilers": "None",` |
| 9 | | `"task": "text_text_generation",` |
| 10 | | `"device": "cuda",` |
| 11 | - | `"cache_dir": "/ceph/hdd/staff/charpent/.cache/` |
| 11 | + | `"cache_dir": "/ceph/hdd/staff/charpent/.cache/models_keh0ynv",` |
| 12 | | `"batch_size": 1,` |
| 13 | | `"model_name": "FreedomIntelligence/AceGPT-7B",` |
| 14 | | `"pruning_ratio": 0.0,` |
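
In both JSON files the only changed values are machine-local paths (`_name_or_path` in config.json, `cache_dir` in smash_config.json). As a minimal sketch, assuming one wanted to spot such values before uploading a folder, the check could look roughly like the following. The names `PATH_KEYS` and `find_local_paths` are hypothetical and are not part of huggingface_hub or of this repository; the choice of keys is an assumption based only on the two diffs in this commit.

```python
import json

# Keys observed in this commit's diffs; treating exactly these two keys
# as "path-like" is an assumption made for illustration.
PATH_KEYS = ("_name_or_path", "cache_dir")


def find_local_paths(config_text: str) -> dict:
    """Return {key: value} for known keys whose value is an absolute path."""
    config = json.loads(config_text)
    return {
        key: value
        for key, value in config.items()
        if key in PATH_KEYS and isinstance(value, str) and value.startswith("/")
    }


# Small hand-written example that reuses values visible in the diff.
example = '{"_name_or_path": "/tmp/tmph5te9wun", "device": "cuda", "batch_size": 1}'
print(find_local_paths(example))
```

A non-empty result only signals that a value looks like a local absolute path; whether that matters for a given repository is left to the uploader.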