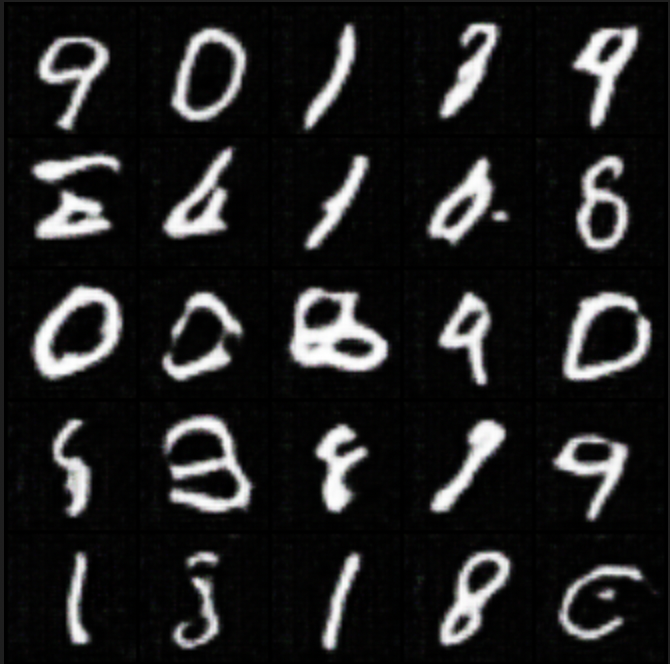

Training in progress, step 1000

This view is limited to 50 files because it contains too many changes.

- $RECYCLE.BIN/S-1-5-21-3928097653-3020120303-4105988984-1001/desktop.ini +3 -0

- .gitattributes +4 -0

- added_tokens.json +1611 -0

- community-events/.gitignore +166 -0

- community-events/README.md +16 -0

- community-events/computer-vision-study-group/Notebooks/HuggingFace_vision_ecosystem_overview_(June_2022).ipynb +0 -0

- community-events/computer-vision-study-group/README.md +15 -0

- community-events/computer-vision-study-group/Sessions/Blip2.md +25 -0

- community-events/computer-vision-study-group/Sessions/Fiber.md +24 -0

- community-events/computer-vision-study-group/Sessions/FlexiViT.md +23 -0

- community-events/computer-vision-study-group/Sessions/HFVisionEcosystem.md +10 -0

- community-events/computer-vision-study-group/Sessions/HowDoVisionTransformersWork.md +27 -0

- community-events/computer-vision-study-group/Sessions/MaskedAutoEncoders.md +24 -0

- community-events/computer-vision-study-group/Sessions/NeuralRadianceFields.md +19 -0

- community-events/computer-vision-study-group/Sessions/PolarizedSelfAttention.md +14 -0

- community-events/computer-vision-study-group/Sessions/SwinTransformer.md +25 -0

- community-events/gradio-blocks/README.md +123 -0

- community-events/huggan/README.md +487 -0

- community-events/huggan/__init__.py +3 -0

- community-events/huggan/assets/cyclegan.png +3 -0

- community-events/huggan/assets/dcgan_mnist.png +0 -0

- community-events/huggan/assets/example_model.png +0 -0

- community-events/huggan/assets/example_space.png +0 -0

- community-events/huggan/assets/huggan_banner.png +0 -0

- community-events/huggan/assets/lightweight_gan_wandb.png +3 -0

- community-events/huggan/assets/metfaces.png +0 -0

- community-events/huggan/assets/pix2pix_maps.png +3 -0

- community-events/huggan/assets/wandb.png +3 -0

- community-events/huggan/model_card_template.md +50 -0

- community-events/huggan/pytorch/README.md +19 -0

- community-events/huggan/pytorch/__init__.py +0 -0

- community-events/huggan/pytorch/cyclegan/README.md +81 -0

- community-events/huggan/pytorch/cyclegan/__init__.py +0 -0

- community-events/huggan/pytorch/cyclegan/modeling_cyclegan.py +108 -0

- community-events/huggan/pytorch/cyclegan/train.py +354 -0

- community-events/huggan/pytorch/cyclegan/utils.py +44 -0

- community-events/huggan/pytorch/dcgan/README.md +155 -0

- community-events/huggan/pytorch/dcgan/__init__.py +0 -0

- community-events/huggan/pytorch/dcgan/modeling_dcgan.py +80 -0

- community-events/huggan/pytorch/dcgan/train.py +346 -0

- community-events/huggan/pytorch/huggan_mixin.py +131 -0

- community-events/huggan/pytorch/lightweight_gan/README.md +89 -0

- community-events/huggan/pytorch/lightweight_gan/__init__.py +0 -0

- community-events/huggan/pytorch/lightweight_gan/cli.py +178 -0

- community-events/huggan/pytorch/lightweight_gan/diff_augment.py +102 -0

- community-events/huggan/pytorch/lightweight_gan/lightweight_gan.py +1598 -0

- community-events/huggan/pytorch/metrics/README.md +39 -0

- community-events/huggan/pytorch/metrics/__init__.py +0 -0

- community-events/huggan/pytorch/metrics/fid_score.py +80 -0

- community-events/huggan/pytorch/metrics/inception.py +328 -0

$RECYCLE.BIN/S-1-5-21-3928097653-3020120303-4105988984-1001/desktop.ini

ADDED

@@ -0,0 +1,3 @@

+version https://git-lfs.github.com/spec/v1

+oid sha256:e1b9ce9b57957b1a0607a72a057d6b7a9b34ea60f3f8aa8f38a3af979bd23066

+size 129
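The three added lines above are a Git LFS pointer: a `version` URL, an `oid` made of a hash algorithm and digest, and the `size` of the real file in bytes. As a minimal sketch of how such a pointer could be read, assuming the simple `key value` layout shown in this hunk, the hypothetical helper `parse_lfs_pointer` below (not part of this commit or of Git LFS itself) splits each line on its first space:

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the `key value` lines of a Git LFS pointer file (illustrative helper)."""
    fields = {}
    for line in text.strip().splitlines():
        # Each pointer line is "<key> <value>", separated by the first space.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid has the form "<algorithm>:<hex digest>".
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size": int(fields["size"]),
    }


# Pointer content copied from the hunk above.
pointer_text = (
    "version https://git-lfs.github.com/spec/v1\n"
    "oid sha256:e1b9ce9b57957b1a0607a72a057d6b7a9b34ea60f3f8aa8f38a3af979bd23066\n"
    "size 129\n"
)
pointer = parse_lfs_pointer(pointer_text)
print(pointer["hash_algorithm"], pointer["size"])
```

The pointer only records the digest and size of the 129-byte file; the file content itself is stored by LFS, which is why the diff shows `+3 -0` for a `desktop.ini` that is not three lines long.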

.gitattributes

CHANGED

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

 *.zip filter=lfs diff=lfs merge=lfs -text

 *.zst filter=lfs diff=lfs merge=lfs -text

 *tfevents* filter=lfs diff=lfs merge=lfs -text

+community-events/huggan/assets/cyclegan.png filter=lfs diff=lfs merge=lfs -text

+community-events/huggan/assets/lightweight_gan_wandb.png filter=lfs diff=lfs merge=lfs -text

+community-events/huggan/assets/pix2pix_maps.png filter=lfs diff=lfs merge=lfs -text

+community-events/huggan/assets/wandb.png filter=lfs diff=lfs merge=lfs -text
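Each added `.gitattributes` line pairs a path pattern with a list of attributes: `filter=lfs`, `diff=lfs` and `merge=lfs` assign values, and `-text` unsets the `text` attribute. The sketch below shows one way to split such a line. The helper `parse_gitattributes_line` is hypothetical, and it assumes a pattern without spaces or quoting and ignores the `!attr` form, so it is an illustration rather than a full gitattributes parser:

```python
from typing import Dict, Tuple, Union


def parse_gitattributes_line(line: str) -> Tuple[str, Dict[str, Union[str, bool]]]:
    """Split one .gitattributes line into (pattern, attributes). Illustrative only."""
    pattern, *raw_attrs = line.split()
    attrs: Dict[str, Union[str, bool]] = {}
    for raw in raw_attrs:
        if raw.startswith("-"):
            # "-name" unsets the attribute.
            attrs[raw[1:]] = False
        elif "=" in raw:
            # "name=value" assigns a string value.
            name, value = raw.split("=", 1)
            attrs[name] = value
        else:
            # A bare "name" sets the attribute.
            attrs[raw] = True
    return pattern, attrs


# Line copied from the hunk above.
pattern, attrs = parse_gitattributes_line(
    "community-events/huggan/assets/cyclegan.png filter=lfs diff=lfs merge=lfs -text"
)
print(pattern, attrs)
```

The four paths added here are the same four PNG assets that appear with `+3 -0` in the file list, which is consistent with each being stored as a three-line LFS pointer.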

added_tokens.json

ADDED

@@ -0,0 +1,1611 @@

|

| 1 |

+

{

|

| 2 |

+

"<|0.00|>": 50365,

|

| 3 |

+

"<|0.02|>": 50366,

|

| 4 |

+

"<|0.04|>": 50367,

|

| 5 |

+

"<|0.06|>": 50368,

|

| 6 |

+

"<|0.08|>": 50369,

|

| 7 |

+

"<|0.10|>": 50370,

|

| 8 |

+

"<|0.12|>": 50371,

|

| 9 |

+

"<|0.14|>": 50372,

|

| 10 |

+

"<|0.16|>": 50373,

|

| 11 |

+

"<|0.18|>": 50374,

|

| 12 |

+

"<|0.20|>": 50375,

|

| 13 |

+

"<|0.22|>": 50376,

|

| 14 |

+

"<|0.24|>": 50377,

|

| 15 |

+

"<|0.26|>": 50378,

|

| 16 |

+

"<|0.28|>": 50379,

|

| 17 |

+

"<|0.30|>": 50380,

|

| 18 |

+

"<|0.32|>": 50381,

|

| 19 |

+

"<|0.34|>": 50382,

|

| 20 |

+

"<|0.36|>": 50383,

|

| 21 |

+

"<|0.38|>": 50384,

|

| 22 |

+

"<|0.40|>": 50385,

|

| 23 |

+

"<|0.42|>": 50386,

|

| 24 |

+

"<|0.44|>": 50387,

|

| 25 |

+

"<|0.46|>": 50388,

|

| 26 |

+

"<|0.48|>": 50389,

|

| 27 |

+

"<|0.50|>": 50390,

|

| 28 |

+

"<|0.52|>": 50391,

|

| 29 |

+

"<|0.54|>": 50392,

|

| 30 |

+

"<|0.56|>": 50393,

|

| 31 |

+

"<|0.58|>": 50394,

|

| 32 |

+

"<|0.60|>": 50395,

|

| 33 |

+

"<|0.62|>": 50396,

|

| 34 |

+

"<|0.64|>": 50397,

|

| 35 |

+

"<|0.66|>": 50398,

|

| 36 |

+

"<|0.68|>": 50399,

|

| 37 |

+

"<|0.70|>": 50400,

|

| 38 |

+

"<|0.72|>": 50401,

|

| 39 |

+

"<|0.74|>": 50402,

|

| 40 |

+

"<|0.76|>": 50403,

|

| 41 |

+

"<|0.78|>": 50404,

|

| 42 |

+

"<|0.80|>": 50405,

|

| 43 |

+

"<|0.82|>": 50406,

|

| 44 |

+

"<|0.84|>": 50407,

|

| 45 |

+

"<|0.86|>": 50408,

|

| 46 |

+

"<|0.88|>": 50409,

|

| 47 |

+

"<|0.90|>": 50410,

|

| 48 |

+

"<|0.92|>": 50411,

|

| 49 |

+

"<|0.94|>": 50412,

|

| 50 |

+

"<|0.96|>": 50413,

|

| 51 |

+

"<|0.98|>": 50414,

|

| 52 |

+

"<|1.00|>": 50415,

|

| 53 |

+

"<|1.02|>": 50416,

|

| 54 |

+

"<|1.04|>": 50417,

|

| 55 |

+

"<|1.06|>": 50418,

|

| 56 |

+

"<|1.08|>": 50419,

|

| 57 |

+

"<|1.10|>": 50420,

|

| 58 |

+

"<|1.12|>": 50421,

|

| 59 |

+

"<|1.14|>": 50422,

|

| 60 |

+

"<|1.16|>": 50423,

|

| 61 |

+

"<|1.18|>": 50424,

|

| 62 |

+

"<|1.20|>": 50425,

|

| 63 |

+

"<|1.22|>": 50426,

|

| 64 |

+

"<|1.24|>": 50427,

|

| 65 |

+

"<|1.26|>": 50428,

|

| 66 |

+

"<|1.28|>": 50429,

|

| 67 |

+

"<|1.30|>": 50430,

|

| 68 |

+

"<|1.32|>": 50431,

|

| 69 |

+

"<|1.34|>": 50432,

|

| 70 |

+

"<|1.36|>": 50433,

|

| 71 |

+

"<|1.38|>": 50434,

|

| 72 |

+

"<|1.40|>": 50435,

|

| 73 |

+

"<|1.42|>": 50436,

|

| 74 |

+

"<|1.44|>": 50437,

|

| 75 |

+

"<|1.46|>": 50438,

|

| 76 |

+

"<|1.48|>": 50439,

|

| 77 |

+

"<|1.50|>": 50440,

|

| 78 |

+

"<|1.52|>": 50441,

|

| 79 |

+

"<|1.54|>": 50442,

|

| 80 |

+

"<|1.56|>": 50443,

|

| 81 |

+

"<|1.58|>": 50444,

|

| 82 |

+

"<|1.60|>": 50445,

|

| 83 |

+

"<|1.62|>": 50446,

|

| 84 |

+

"<|1.64|>": 50447,

|

| 85 |

+

"<|1.66|>": 50448,

|

| 86 |

+

"<|1.68|>": 50449,

|

| 87 |

+

"<|1.70|>": 50450,

|

| 88 |

+

"<|1.72|>": 50451,

|

| 89 |

+

"<|1.74|>": 50452,

|

| 90 |

+

"<|1.76|>": 50453,

|

| 91 |

+

"<|1.78|>": 50454,

|

| 92 |

+

"<|1.80|>": 50455,

|

| 93 |

+

"<|1.82|>": 50456,

|

| 94 |

+

"<|1.84|>": 50457,

|

| 95 |

+

"<|1.86|>": 50458,

|

| 96 |

+

"<|1.88|>": 50459,

|

| 97 |

+

"<|1.90|>": 50460,

|

| 98 |

+

"<|1.92|>": 50461,

|

| 99 |

+

"<|1.94|>": 50462,

|

| 100 |

+

"<|1.96|>": 50463,

|

| 101 |

+

"<|1.98|>": 50464,

|

| 102 |

+

"<|10.00|>": 50865,

|

| 103 |

+

"<|10.02|>": 50866,

|

| 104 |

+

"<|10.04|>": 50867,

|

| 105 |

+

"<|10.06|>": 50868,

|

| 106 |

+

"<|10.08|>": 50869,

|

| 107 |

+

"<|10.10|>": 50870,

|

| 108 |

+

"<|10.12|>": 50871,

|

| 109 |

+

"<|10.14|>": 50872,

|

| 110 |

+

"<|10.16|>": 50873,

|

| 111 |

+

"<|10.18|>": 50874,

|

| 112 |

+

"<|10.20|>": 50875,

|

| 113 |

+

"<|10.22|>": 50876,

|

| 114 |

+

"<|10.24|>": 50877,

|

| 115 |

+

"<|10.26|>": 50878,

|

| 116 |

+

"<|10.28|>": 50879,

|

| 117 |

+

"<|10.30|>": 50880,

|

| 118 |

+

"<|10.32|>": 50881,

|

| 119 |

+

"<|10.34|>": 50882,

|

| 120 |

+

"<|10.36|>": 50883,

|

| 121 |

+

"<|10.38|>": 50884,

|

| 122 |

+

"<|10.40|>": 50885,

|

| 123 |

+

"<|10.42|>": 50886,

|

| 124 |

+

"<|10.44|>": 50887,

|

| 125 |

+

"<|10.46|>": 50888,

|

| 126 |

+

"<|10.48|>": 50889,

|

| 127 |

+

"<|10.50|>": 50890,

|

| 128 |

+

"<|10.52|>": 50891,

|

| 129 |

+

"<|10.54|>": 50892,

|

| 130 |

+

"<|10.56|>": 50893,

|

| 131 |

+

"<|10.58|>": 50894,

|

| 132 |

+

"<|10.60|>": 50895,

|

| 133 |

+

"<|10.62|>": 50896,

|

| 134 |

+

"<|10.64|>": 50897,

|

| 135 |

+

"<|10.66|>": 50898,

|

| 136 |

+

"<|10.68|>": 50899,

|

| 137 |

+

"<|10.70|>": 50900,

|

| 138 |

+

"<|10.72|>": 50901,

|

| 139 |

+

"<|10.74|>": 50902,

|

| 140 |

+

"<|10.76|>": 50903,

|

| 141 |

+

"<|10.78|>": 50904,

|

| 142 |

+

"<|10.80|>": 50905,

|

| 143 |

+

"<|10.82|>": 50906,

|

| 144 |

+

"<|10.84|>": 50907,

|

| 145 |

+

"<|10.86|>": 50908,

|

| 146 |

+

"<|10.88|>": 50909,

|

| 147 |

+

"<|10.90|>": 50910,

|

| 148 |

+

"<|10.92|>": 50911,

|

| 149 |

+

"<|10.94|>": 50912,

|

| 150 |

+

"<|10.96|>": 50913,

|

| 151 |

+

"<|10.98|>": 50914,

|

| 152 |

+

"<|11.00|>": 50915,

|

| 153 |

+

"<|11.02|>": 50916,

|

| 154 |

+

"<|11.04|>": 50917,

|

| 155 |

+

"<|11.06|>": 50918,

|

| 156 |

+

"<|11.08|>": 50919,

|

| 157 |

+

"<|11.10|>": 50920,

|

| 158 |

+

"<|11.12|>": 50921,

|

| 159 |

+

"<|11.14|>": 50922,

|

| 160 |

+

"<|11.16|>": 50923,

|

| 161 |

+

"<|11.18|>": 50924,

|

| 162 |

+

"<|11.20|>": 50925,

|

| 163 |

+

"<|11.22|>": 50926,

|

| 164 |

+

"<|11.24|>": 50927,

|

| 165 |

+

"<|11.26|>": 50928,

|

| 166 |

+

"<|11.28|>": 50929,

|

| 167 |

+

"<|11.30|>": 50930,

|

| 168 |

+

"<|11.32|>": 50931,

|

| 169 |

+

"<|11.34|>": 50932,

|

| 170 |

+

"<|11.36|>": 50933,

|

| 171 |

+

"<|11.38|>": 50934,

|

| 172 |

+

"<|11.40|>": 50935,

|

| 173 |

+

"<|11.42|>": 50936,

|

| 174 |

+

"<|11.44|>": 50937,

|

| 175 |

+

"<|11.46|>": 50938,

|

| 176 |

+

"<|11.48|>": 50939,

|

| 177 |

+

"<|11.50|>": 50940,

|

| 178 |

+

"<|11.52|>": 50941,

|

| 179 |

+

"<|11.54|>": 50942,

|

| 180 |

+

"<|11.56|>": 50943,

|

| 181 |

+

"<|11.58|>": 50944,

|

| 182 |

+

"<|11.60|>": 50945,

|

| 183 |

+

"<|11.62|>": 50946,

|

| 184 |

+

"<|11.64|>": 50947,

|

| 185 |

+

"<|11.66|>": 50948,

|

| 186 |

+

"<|11.68|>": 50949,

|

| 187 |

+

"<|11.70|>": 50950,

|

| 188 |

+

"<|11.72|>": 50951,

|

| 189 |

+

"<|11.74|>": 50952,

|

| 190 |

+

"<|11.76|>": 50953,

|

| 191 |

+

"<|11.78|>": 50954,

|

| 192 |

+

"<|11.80|>": 50955,

|

| 193 |

+

"<|11.82|>": 50956,

|

| 194 |

+

"<|11.84|>": 50957,

|

| 195 |

+

"<|11.86|>": 50958,

|

| 196 |

+

"<|11.88|>": 50959,

|

| 197 |

+

"<|11.90|>": 50960,

|

| 198 |

+

"<|11.92|>": 50961,

|

| 199 |

+

"<|11.94|>": 50962,

|

| 200 |

+

"<|11.96|>": 50963,

|

| 201 |

+

"<|11.98|>": 50964,

|

| 202 |

+

"<|12.00|>": 50965,

|

| 203 |

+

"<|12.02|>": 50966,

|

| 204 |

+

"<|12.04|>": 50967,

|

| 205 |

+

"<|12.06|>": 50968,

|

| 206 |

+

"<|12.08|>": 50969,

|

| 207 |

+

"<|12.10|>": 50970,

|

| 208 |

+

"<|12.12|>": 50971,

|

| 209 |

+

"<|12.14|>": 50972,

|

| 210 |

+

"<|12.16|>": 50973,

|

| 211 |

+

"<|12.18|>": 50974,

|

| 212 |

+

"<|12.20|>": 50975,

|

| 213 |

+

"<|12.22|>": 50976,

|

| 214 |

+

"<|12.24|>": 50977,

|

| 215 |

+

"<|12.26|>": 50978,

|

| 216 |

+

"<|12.28|>": 50979,

|

| 217 |

+

"<|12.30|>": 50980,

|

| 218 |

+

"<|12.32|>": 50981,

|

| 219 |

+

"<|12.34|>": 50982,

|

| 220 |

+

"<|12.36|>": 50983,

|

| 221 |

+

"<|12.38|>": 50984,

|

| 222 |

+

"<|12.40|>": 50985,

|

| 223 |

+

"<|12.42|>": 50986,

|

| 224 |

+

"<|12.44|>": 50987,

|

| 225 |

+

"<|12.46|>": 50988,

|

| 226 |

+

"<|12.48|>": 50989,

|

| 227 |

+

"<|12.50|>": 50990,

|

| 228 |

+

"<|12.52|>": 50991,

|

| 229 |

+

"<|12.54|>": 50992,

|

| 230 |

+

"<|12.56|>": 50993,

|

| 231 |

+

"<|12.58|>": 50994,

|

| 232 |

+

"<|12.60|>": 50995,

|

| 233 |

+

"<|12.62|>": 50996,

|

| 234 |

+

"<|12.64|>": 50997,

|

| 235 |

+

"<|12.66|>": 50998,

|

| 236 |

+

"<|12.68|>": 50999,

|

| 237 |

+

"<|12.70|>": 51000,

|

| 238 |

+

"<|12.72|>": 51001,

|

| 239 |

+

"<|12.74|>": 51002,

|

| 240 |

+

"<|12.76|>": 51003,

|

| 241 |

+

"<|12.78|>": 51004,

|

| 242 |

+

"<|12.80|>": 51005,

|

| 243 |

+

"<|12.82|>": 51006,

|

| 244 |

+

"<|12.84|>": 51007,

|

| 245 |

+

"<|12.86|>": 51008,

|

| 246 |

+

"<|12.88|>": 51009,

|

| 247 |

+

"<|12.90|>": 51010,

|

| 248 |

+

"<|12.92|>": 51011,

|

| 249 |

+

"<|12.94|>": 51012,

|

| 250 |

+

"<|12.96|>": 51013,

|

| 251 |

+

"<|12.98|>": 51014,

|

| 252 |

+

"<|13.00|>": 51015,

|

| 253 |

+

"<|13.02|>": 51016,

|

| 254 |

+

"<|13.04|>": 51017,

|

| 255 |

+

"<|13.06|>": 51018,

|

| 256 |

+

"<|13.08|>": 51019,

|

| 257 |

+

"<|13.10|>": 51020,

|

| 258 |

+

"<|13.12|>": 51021,

|

| 259 |

+

"<|13.14|>": 51022,

|

| 260 |

+

"<|13.16|>": 51023,

|

| 261 |

+

"<|13.18|>": 51024,

|

| 262 |

+

"<|13.20|>": 51025,

|

| 263 |

+

"<|13.22|>": 51026,

|

| 264 |

+

"<|13.24|>": 51027,

|

| 265 |

+

"<|13.26|>": 51028,

|

| 266 |

+

"<|13.28|>": 51029,

|

| 267 |

+

"<|13.30|>": 51030,

|

| 268 |

+

"<|13.32|>": 51031,

|

| 269 |

+

"<|13.34|>": 51032,

|

| 270 |

+

"<|13.36|>": 51033,

|

| 271 |

+

"<|13.38|>": 51034,

|

| 272 |

+

"<|13.40|>": 51035,

|

| 273 |

+

"<|13.42|>": 51036,

|

| 274 |

+

"<|13.44|>": 51037,

|

| 275 |

+

"<|13.46|>": 51038,

|

| 276 |

+

"<|13.48|>": 51039,

|

| 277 |

+

"<|13.50|>": 51040,

|

| 278 |

+

"<|13.52|>": 51041,

|

| 279 |

+

"<|13.54|>": 51042,

|

| 280 |

+

"<|13.56|>": 51043,

|

| 281 |

+

"<|13.58|>": 51044,

|

| 282 |

+

"<|13.60|>": 51045,

|

| 283 |

+

"<|13.62|>": 51046,

|

| 284 |

+

"<|13.64|>": 51047,

|

| 285 |

+

"<|13.66|>": 51048,

|

| 286 |

+

"<|13.68|>": 51049,

|

| 287 |

+

"<|13.70|>": 51050,

|

| 288 |

+

"<|13.72|>": 51051,

|

| 289 |

+

"<|13.74|>": 51052,

|

| 290 |

+

"<|13.76|>": 51053,

|

| 291 |

+

"<|13.78|>": 51054,

|

| 292 |

+

"<|13.80|>": 51055,

|

| 293 |

+

"<|13.82|>": 51056,

|

| 294 |

+

"<|13.84|>": 51057,

|

| 295 |

+

"<|13.86|>": 51058,

|

| 296 |

+

"<|13.88|>": 51059,

|

| 297 |

+

"<|13.90|>": 51060,

|

| 298 |

+

"<|13.92|>": 51061,

|

| 299 |

+

"<|13.94|>": 51062,

|

| 300 |

+

"<|13.96|>": 51063,

|

| 301 |

+

"<|13.98|>": 51064,

|

| 302 |

+

"<|14.00|>": 51065,

|

| 303 |

+

"<|14.02|>": 51066,

|

| 304 |

+

"<|14.04|>": 51067,

|

| 305 |

+

"<|14.06|>": 51068,

|

| 306 |

+

"<|14.08|>": 51069,

|

| 307 |

+

"<|14.10|>": 51070,

|

| 308 |

+

"<|14.12|>": 51071,

|

| 309 |

+

"<|14.14|>": 51072,

|

| 310 |

+

"<|14.16|>": 51073,

+ "<|14.18|>": 51074,
+ "<|14.20|>": 51075,
+ "<|14.22|>": 51076,
+ "<|14.24|>": 51077,
+ "<|14.26|>": 51078,
+ "<|14.28|>": 51079,
+ "<|14.30|>": 51080,
+ "<|14.32|>": 51081,
+ "<|14.34|>": 51082,
+ "<|14.36|>": 51083,
+ "<|14.38|>": 51084,
+ "<|14.40|>": 51085,
+ "<|14.42|>": 51086,
+ "<|14.44|>": 51087,
+ "<|14.46|>": 51088,
+ "<|14.48|>": 51089,
+ "<|14.50|>": 51090,
+ "<|14.52|>": 51091,
+ "<|14.54|>": 51092,
+ "<|14.56|>": 51093,
+ "<|14.58|>": 51094,
+ "<|14.60|>": 51095,
+ "<|14.62|>": 51096,
+ "<|14.64|>": 51097,
+ "<|14.66|>": 51098,
+ "<|14.68|>": 51099,
+ "<|14.70|>": 51100,
+ "<|14.72|>": 51101,
+ "<|14.74|>": 51102,
+ "<|14.76|>": 51103,
+ "<|14.78|>": 51104,
+ "<|14.80|>": 51105,
+ "<|14.82|>": 51106,
+ "<|14.84|>": 51107,
+ "<|14.86|>": 51108,
+ "<|14.88|>": 51109,
+ "<|14.90|>": 51110,
+ "<|14.92|>": 51111,
+ "<|14.94|>": 51112,
+ "<|14.96|>": 51113,
+ "<|14.98|>": 51114,
+ "<|15.00|>": 51115,
+ "<|15.02|>": 51116,
+ "<|15.04|>": 51117,
+ "<|15.06|>": 51118,
+ "<|15.08|>": 51119,
+ "<|15.10|>": 51120,
+ "<|15.12|>": 51121,
+ "<|15.14|>": 51122,
+ "<|15.16|>": 51123,
+ "<|15.18|>": 51124,
+ "<|15.20|>": 51125,
+ "<|15.22|>": 51126,
+ "<|15.24|>": 51127,
+ "<|15.26|>": 51128,
+ "<|15.28|>": 51129,
+ "<|15.30|>": 51130,
+ "<|15.32|>": 51131,
+ "<|15.34|>": 51132,
+ "<|15.36|>": 51133,
+ "<|15.38|>": 51134,
+ "<|15.40|>": 51135,
+ "<|15.42|>": 51136,
+ "<|15.44|>": 51137,
+ "<|15.46|>": 51138,
+ "<|15.48|>": 51139,
+ "<|15.50|>": 51140,
+ "<|15.52|>": 51141,
+ "<|15.54|>": 51142,
+ "<|15.56|>": 51143,
+ "<|15.58|>": 51144,
+ "<|15.60|>": 51145,
+ "<|15.62|>": 51146,
+ "<|15.64|>": 51147,
+ "<|15.66|>": 51148,
+ "<|15.68|>": 51149,
+ "<|15.70|>": 51150,
+ "<|15.72|>": 51151,
+ "<|15.74|>": 51152,
+ "<|15.76|>": 51153,
+ "<|15.78|>": 51154,
+ "<|15.80|>": 51155,
+ "<|15.82|>": 51156,
+ "<|15.84|>": 51157,
+ "<|15.86|>": 51158,
+ "<|15.88|>": 51159,
+ "<|15.90|>": 51160,
+ "<|15.92|>": 51161,
+ "<|15.94|>": 51162,
+ "<|15.96|>": 51163,
+ "<|15.98|>": 51164,
+ "<|16.00|>": 51165,
+ "<|16.02|>": 51166,
+ "<|16.04|>": 51167,
+ "<|16.06|>": 51168,
+ "<|16.08|>": 51169,
+ "<|16.10|>": 51170,
+ "<|16.12|>": 51171,
+ "<|16.14|>": 51172,
+ "<|16.16|>": 51173,
+ "<|16.18|>": 51174,
+ "<|16.20|>": 51175,
+ "<|16.22|>": 51176,
+ "<|16.24|>": 51177,
+ "<|16.26|>": 51178,
+ "<|16.28|>": 51179,
+ "<|16.30|>": 51180,
+ "<|16.32|>": 51181,
+ "<|16.34|>": 51182,
+ "<|16.36|>": 51183,
+ "<|16.38|>": 51184,
+ "<|16.40|>": 51185,
+ "<|16.42|>": 51186,
+ "<|16.44|>": 51187,
+ "<|16.46|>": 51188,
+ "<|16.48|>": 51189,
+ "<|16.50|>": 51190,
+ "<|16.52|>": 51191,
+ "<|16.54|>": 51192,
+ "<|16.56|>": 51193,
+ "<|16.58|>": 51194,
+ "<|16.60|>": 51195,
+ "<|16.62|>": 51196,
+ "<|16.64|>": 51197,
+ "<|16.66|>": 51198,
+ "<|16.68|>": 51199,
+ "<|16.70|>": 51200,
+ "<|16.72|>": 51201,
+ "<|16.74|>": 51202,
+ "<|16.76|>": 51203,
+ "<|16.78|>": 51204,
+ "<|16.80|>": 51205,
+ "<|16.82|>": 51206,
+ "<|16.84|>": 51207,
+ "<|16.86|>": 51208,
+ "<|16.88|>": 51209,
+ "<|16.90|>": 51210,
+ "<|16.92|>": 51211,
+ "<|16.94|>": 51212,
+ "<|16.96|>": 51213,
+ "<|16.98|>": 51214,
+ "<|17.00|>": 51215,
+ "<|17.02|>": 51216,
+ "<|17.04|>": 51217,
+ "<|17.06|>": 51218,
+ "<|17.08|>": 51219,
+ "<|17.10|>": 51220,
+ "<|17.12|>": 51221,
+ "<|17.14|>": 51222,
+ "<|17.16|>": 51223,
+ "<|17.18|>": 51224,
+ "<|17.20|>": 51225,
+ "<|17.22|>": 51226,
+ "<|17.24|>": 51227,
+ "<|17.26|>": 51228,
+ "<|17.28|>": 51229,
+ "<|17.30|>": 51230,
+ "<|17.32|>": 51231,
+ "<|17.34|>": 51232,
+ "<|17.36|>": 51233,
+ "<|17.38|>": 51234,
+ "<|17.40|>": 51235,
+ "<|17.42|>": 51236,
+ "<|17.44|>": 51237,
+ "<|17.46|>": 51238,
+ "<|17.48|>": 51239,
+ "<|17.50|>": 51240,
+ "<|17.52|>": 51241,
+ "<|17.54|>": 51242,
+ "<|17.56|>": 51243,
+ "<|17.58|>": 51244,
+ "<|17.60|>": 51245,
+ "<|17.62|>": 51246,
+ "<|17.64|>": 51247,
+ "<|17.66|>": 51248,
+ "<|17.68|>": 51249,
+ "<|17.70|>": 51250,
+ "<|17.72|>": 51251,
+ "<|17.74|>": 51252,
+ "<|17.76|>": 51253,
+ "<|17.78|>": 51254,
+ "<|17.80|>": 51255,
+ "<|17.82|>": 51256,
+ "<|17.84|>": 51257,
+ "<|17.86|>": 51258,
+ "<|17.88|>": 51259,
+ "<|17.90|>": 51260,
+ "<|17.92|>": 51261,
+ "<|17.94|>": 51262,
+ "<|17.96|>": 51263,
+ "<|17.98|>": 51264,
+ "<|18.00|>": 51265,
+ "<|18.02|>": 51266,
+ "<|18.04|>": 51267,
+ "<|18.06|>": 51268,
+ "<|18.08|>": 51269,
+ "<|18.10|>": 51270,
+ "<|18.12|>": 51271,
+ "<|18.14|>": 51272,
+ "<|18.16|>": 51273,
+ "<|18.18|>": 51274,
+ "<|18.20|>": 51275,
+ "<|18.22|>": 51276,
+ "<|18.24|>": 51277,
+ "<|18.26|>": 51278,
+ "<|18.28|>": 51279,
+ "<|18.30|>": 51280,
+ "<|18.32|>": 51281,
+ "<|18.34|>": 51282,
+ "<|18.36|>": 51283,
+ "<|18.38|>": 51284,
+ "<|18.40|>": 51285,
+ "<|18.42|>": 51286,
+ "<|18.44|>": 51287,
+ "<|18.46|>": 51288,
+ "<|18.48|>": 51289,
+ "<|18.50|>": 51290,
+ "<|18.52|>": 51291,
+ "<|18.54|>": 51292,
+ "<|18.56|>": 51293,
+ "<|18.58|>": 51294,
+ "<|18.60|>": 51295,
+ "<|18.62|>": 51296,
+ "<|18.64|>": 51297,
+ "<|18.66|>": 51298,
+ "<|18.68|>": 51299,
+ "<|18.70|>": 51300,
+ "<|18.72|>": 51301,
+ "<|18.74|>": 51302,
+ "<|18.76|>": 51303,
