Commit 4935da6
Parent(s): 07729be

Update README.md

README.md CHANGED

@@ -2,57 +2,102 @@


license: apache-2.0
base_model: HuggingFaceM4/idefics2-8b
tags:
- multimodal
- lmm
- vlm
- llava
- siglip
- llama3
- mantis
model-index:
- name: mantis-8b-idefics2_8192
  results: []
datasets:
- TIGER-Lab/Mantis-Instruct
language:
- en
---

|

| 21 |

+

# 🔥 Mantis

|

| 22 |

+

|

| 23 |

+

[Paper](https://arxiv.org/abs/2405.01483) | [Website](https://tiger-ai-lab.github.io/Mantis/) | [Github](https://github.com/TIGER-AI-Lab/Mantis) | [Models](https://huggingface.co/collections/TIGER-Lab/mantis-6619b0834594c878cdb1d6e4) | [Demo](https://huggingface.co/spaces/TIGER-Lab/Mantis)

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

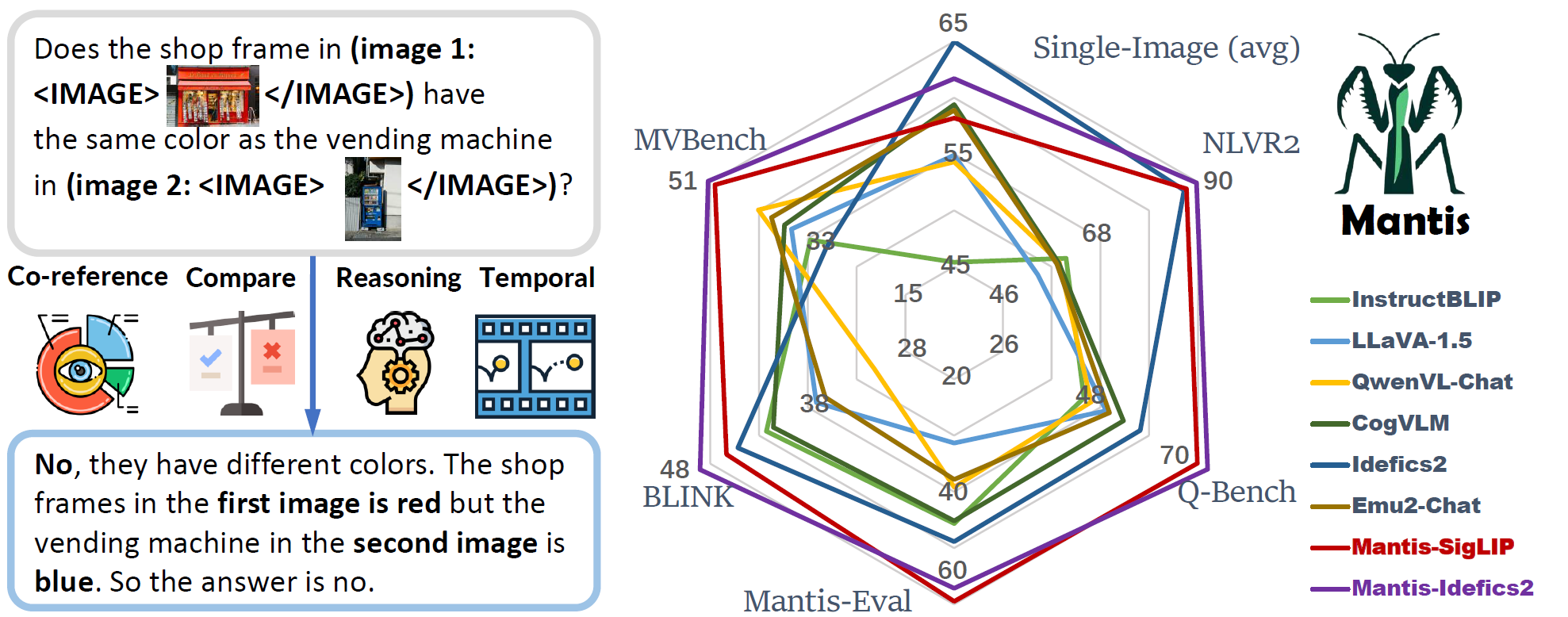

**Excited to announce Mantis-Idefics2, with enhanced ability in multi-image scenarios!**

|

| 28 |

+

It's fine-tuned on [Mantis-Instruct](https://huggingface.co/datasets/TIGER-Lab/Mantis-Instruct) from [Idefics2-8b](https://huggingface.co/HuggingFaceM4/idefics2-8b)

|

| 29 |

+

|

| 30 |

## Summary

- Mantis-Idefics2 is an LMM that takes **interleaved text and images as input**, trained on Mantis-Instruct with academic-level resources (i.e., 36 hours on 16xA100-40G).
- Mantis is trained for multi-image skills, including co-reference, reasoning, comparison, and temporal understanding.
- Mantis reaches state-of-the-art performance on five multi-image benchmarks (NLVR2, Q-Bench, BLINK, MVBench, Mantis-Eval) and also maintains strong single-image performance, on par with CogVLM and Emu2.

## Multi-Image Performance

| Models | Size | Format | NLVR2 | Q-Bench | Mantis-Eval | BLINK | MVBench | Avg |
|--------------------|:----:|:--------:|:-----:|:-------:|:-----------:|:-----:|:-------:|:----:|
| GPT-4V | - | sequence | 88.80 | 76.52 | 62.67 | 51.14 | 43.50 | 64.5 |
| Open Source Models | | | | | | | | |
| Random | - | - | 48.93 | 40.20 | 23.04 | 38.09 | 27.30 | 35.5 |
| Kosmos2 | 1.6B | merge | 49.00 | 35.10 | 30.41 | 37.50 | 21.62 | 34.7 |
| LLaVA-v1.5 | 7B | merge | 53.88 | 49.32 | 31.34 | 37.13 | 36.00 | 41.5 |
| LLaVA-v1.6 | 7B | merge | 58.88 | 54.80 | 45.62 | 39.55 | 40.90 | 48.0 |
| Qwen-VL-Chat | 7B | merge | 58.72 | 45.90 | 39.17 | 31.17 | 42.15 | 43.4 |
| Fuyu | 8B | merge | 51.10 | 49.15 | 27.19 | 36.59 | 30.20 | 38.8 |
| BLIP-2 | 13B | merge | 59.42 | 51.20 | 49.77 | 39.45 | 31.40 | 46.2 |
| InstructBLIP | 13B | merge | 60.26 | 44.30 | 45.62 | 42.24 | 32.50 | 45.0 |
| CogVLM | 17B | merge | 58.58 | 53.20 | 45.16 | 41.54 | 37.30 | 47.2 |
| OpenFlamingo | 9B | sequence | 36.41 | 19.60 | 12.44 | 39.18 | 7.90 | 23.1 |
| Otter-Image | 9B | sequence | 49.15 | 17.50 | 14.29 | 36.26 | 15.30 | 26.5 |
| Idefics1 | 9B | sequence | 54.63 | 30.60 | 28.11 | 24.69 | 26.42 | 32.9 |
| VideoLLaVA | 7B | sequence | 56.48 | 45.70 | 35.94 | 38.92 | 44.30 | 44.3 |
| Emu2-Chat | 37B | sequence | 58.16 | 50.05 | 37.79 | 36.20 | 39.72 | 44.4 |
| Vila | 8B | sequence | 76.45 | 45.70 | 51.15 | 39.30 | 49.40 | 52.4 |
| Idefics2 | 8B | sequence | 86.87 | 57.00 | 48.85 | 45.18 | 29.68 | 53.5 |
| Mantis-CLIP | 8B | sequence | 84.66 | 66.00 | 55.76 | 47.06 | 48.30 | 60.4 |
| Mantis-SigLIP | 8B | sequence | 87.43 | 69.90 | **59.45** | 46.35 | 50.15 | 62.7 |
| Mantis-Flamingo | 9B | sequence | 52.96 | 46.80 | 32.72 | 38.00 | 40.83 | 42.3 |
| Mantis-Idefics2 | 8B | sequence | **89.71** | **75.20** | 57.14 | **49.05** | **51.38** | **64.5** |
| $\Delta$ over SOTA | - | - | +2.84 | +18.20 | +8.30 | +3.87 | +1.98 | +11.0 |
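
The README does not say how the $\Delta$ over SOTA row is computed. One reading that reproduces the listed numbers is: best Mantis score per benchmark minus the best score among the open-source, non-Mantis rows (GPT-4V and Random excluded). The sketch below checks that reading against the table; the function and variable names are illustrative, and the interpretation itself is an assumption inferred from the numbers rather than something stated in the source.

```python
# Columns, in table order.
BENCHMARKS = ["NLVR2", "Q-Bench", "Mantis-Eval", "BLINK", "MVBench"]

# Open-source, non-Mantis rows copied from the multi-image table.
BASELINES = {
    "Kosmos2":      [49.00, 35.10, 30.41, 37.50, 21.62],
    "LLaVA-v1.5":   [53.88, 49.32, 31.34, 37.13, 36.00],
    "LLaVA-v1.6":   [58.88, 54.80, 45.62, 39.55, 40.90],
    "Qwen-VL-Chat": [58.72, 45.90, 39.17, 31.17, 42.15],
    "Fuyu":         [51.10, 49.15, 27.19, 36.59, 30.20],
    "BLIP-2":       [59.42, 51.20, 49.77, 39.45, 31.40],
    "InstructBLIP": [60.26, 44.30, 45.62, 42.24, 32.50],
    "CogVLM":       [58.58, 53.20, 45.16, 41.54, 37.30],
    "OpenFlamingo": [36.41, 19.60, 12.44, 39.18, 7.90],
    "Otter-Image":  [49.15, 17.50, 14.29, 36.26, 15.30],
    "Idefics1":     [54.63, 30.60, 28.11, 24.69, 26.42],
    "VideoLLaVA":   [56.48, 45.70, 35.94, 38.92, 44.30],
    "Emu2-Chat":    [58.16, 50.05, 37.79, 36.20, 39.72],
    "Vila":         [76.45, 45.70, 51.15, 39.30, 49.40],
    "Idefics2":     [86.87, 57.00, 48.85, 45.18, 29.68],
}

# Mantis rows copied from the same table.
MANTIS = {
    "Mantis-CLIP":     [84.66, 66.00, 55.76, 47.06, 48.30],
    "Mantis-SigLIP":   [87.43, 69.90, 59.45, 46.35, 50.15],
    "Mantis-Flamingo": [52.96, 46.80, 32.72, 38.00, 40.83],
    "Mantis-Idefics2": [89.71, 75.20, 57.14, 49.05, 51.38],
}


def row_average(scores):
    """Mean of one row's benchmark scores, rounded to one decimal like the Avg column."""
    return round(sum(scores) / len(scores), 1)


def delta_over_sota(mantis, baselines):
    """Per benchmark: best Mantis score minus best baseline score (assumed definition)."""
    deltas = {}
    for i, name in enumerate(BENCHMARKS):
        best_mantis = max(scores[i] for scores in mantis.values())
        best_baseline = max(scores[i] for scores in baselines.values())
        deltas[name] = round(best_mantis - best_baseline, 2)
    return deltas


if __name__ == "__main__":
    for name, delta in delta_over_sota(MANTIS, BASELINES).items():
        print(f"{name}: +{delta:.2f}")
    print("Mantis-Idefics2 Avg:", row_average(MANTIS["Mantis-Idefics2"]))
```

Under this reading, the Mantis-Eval delta comes from Mantis-SigLIP (59.45) rather than Mantis-Idefics2, which is why that column's bold entry sits on a different row from the others.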

## Single-Image Performance

| Model | Size | TextVQA | VQA | MMB | MMMU | OKVQA | SQA | MathVista | Avg |
|-----------------|:----:|:-------:|:----:|:----:|:----:|:-----:|:----:|:---------:|:----:|
| OpenFlamingo | 9B | 46.3 | 58.0 | 32.4 | 28.7 | 51.4 | 45.7 | 18.6 | 40.2 |
| Idefics1 | 9B | 39.3 | 68.8 | 45.3 | 32.5 | 50.4 | 51.6 | 21.1 | 44.1 |
| InstructBLIP | 7B | 33.6 | 75.2 | 38.3 | 30.6 | 45.2 | 70.6 | 24.4 | 45.4 |
| Yi-VL | 6B | 44.8 | 72.5 | 68.4 | 39.1 | 51.3 | 71.7 | 29.7 | 53.9 |
| Qwen-VL-Chat | 7B | 63.8 | 78.2 | 61.8 | 35.9 | 56.6 | 68.2 | 15.5 | 54.3 |
| LLaVA-1.5 | 7B | 58.2 | 76.6 | 64.8 | 35.3 | 53.4 | 70.4 | 25.6 | 54.9 |
| Emu2-Chat | 37B | __66.6__ | **84.9** | 63.6 | 36.3 | **64.8** | 65.3 | 30.7 | 58.9 |
| CogVLM | 17B | **70.4** | __82.3__ | 65.8 | 32.1 | __64.8__ | 65.6 | 35.0 | 59.4 |
| Idefics2 | 8B | 70.4 | 79.1 | __75.7__ | **43.0** | 53.5 | **86.5** | **51.4** | **65.7** |
| Mantis-CLIP | 8B | 56.4 | 73.0 | 66.0 | 38.1 | 53.0 | 73.8 | 31.7 | 56.0 |
| Mantis-SigLIP | 8B | 59.2 | 74.9 | 68.7 | 40.1 | 55.4 | 74.9 | 34.4 | 58.2 |
| Mantis-Idefics2 | 8B | 63.5 | 77.6 | 75.7 | __41.1__ | 52.6 | __81.3__ | __40.4__ | __61.7__ |

## How to use

### Run example inference:
```python
```
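
As a rough sketch of what multi-image inference with this checkpoint might look like, the snippet below follows the usual Idefics2 pattern in `transformers` (`AutoProcessor`, `AutoModelForVision2Seq`, `apply_chat_template`). Several things here are assumptions rather than facts from this README: the Hub repository id `TIGER-Lab/Mantis-8B-Idefics2`, the helper names `build_messages` and `generate_answer`, the generation settings, and the availability of a `transformers` release that still exports `AutoModelForVision2Seq`, plus `accelerate` for `device_map="auto"`. See the GitHub repository for the authors' own example.

```python
from typing import Any, Dict, List

# Assumption: Hub repository id for this checkpoint; it is not stated in this README.
MODEL_ID = "TIGER-Lab/Mantis-8B-Idefics2"


def build_messages(question: str, num_images: int) -> List[Dict[str, Any]]:
    """Build one user turn: `num_images` image placeholders followed by the question."""
    content: List[Dict[str, Any]] = [{"type": "image"} for _ in range(num_images)]
    content.append({"type": "text", "text": question})
    return [{"role": "user", "content": content}]


def generate_answer(images: list, question: str, max_new_tokens: int = 256) -> str:
    """Answer `question` about a list of PIL images (loads the 8B weights on each call)."""
    # Heavy imports are deferred so build_messages works without them installed.
    from transformers import AutoModelForVision2Seq, AutoProcessor

    processor = AutoProcessor.from_pretrained(MODEL_ID)
    model = AutoModelForVision2Seq.from_pretrained(MODEL_ID, device_map="auto")

    # Turn the interleaved message into a prompt string with image tokens.
    messages = build_messages(question, len(images))
    prompt = processor.apply_chat_template(messages, add_generation_prompt=True)
    inputs = processor(text=prompt, images=images, return_tensors="pt")
    inputs = {name: tensor.to(model.device) for name, tensor in inputs.items()}

    # Greedy decoding; illustrative settings, not the authors' configuration.
    output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens, do_sample=False)
    # Keep only the newly generated tokens, dropping the echoed prompt.
    new_tokens = output_ids[:, inputs["input_ids"].shape[1]:]
    return processor.batch_decode(new_tokens, skip_special_tokens=True)[0]
```

Calling `generate_answer([image1, image2], "What is different between these two images?")` with two `PIL.Image` objects would run a single round of generation. For repeated calls, loading the processor and model once outside the function avoids reloading the weights each time.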

### Training
See [mantis/train](https://github.com/TIGER-AI-Lab/Mantis/tree/main/mantis/train) for details.

### Evaluation
See [mantis/benchmark](https://github.com/TIGER-AI-Lab/Mantis/tree/main/mantis/benchmark) for details.

**Please cite our paper or give a star to our GitHub repo if you find this model useful.**

## Citation
```
@inproceedings{Jiang2024MANTISIM,
  title={MANTIS: Interleaved Multi-Image Instruction Tuning},
  author={Dongfu Jiang and Xuan He and Huaye Zeng and Cong Wei and Max W.F. Ku and Qian Liu and Wenhu Chen},
  publisher={arXiv2405.01483},
  year={2024},
}
```