Upload folder using huggingface_hub

- .gitattributes +4 -0

- MiniCPM-V-2_6-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm +3 -0

- MiniCPM-V-2_6-rk3588-w8a8-opt-0-hybrid-ratio-0.5.rkllm +3 -0

- MiniCPM-V-2_6-rk3588-w8a8-opt-1-hybrid-ratio-0.0.rkllm +3 -0

- MiniCPM-V-2_6-rk3588-w8a8-opt-1-hybrid-ratio-0.5.rkllm +3 -0

- README.md +365 -0

- added_tokens.json +25 -0

- config.json +54 -0

- generation_config.json +6 -0

- model.safetensors.index.json +796 -0

- preprocessor_config.json +24 -0

- special_tokens_map.json +172 -0

- tokenizer.json +0 -0

- tokenizer_config.json +235 -0

- vocab.json +0 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,7 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

MiniCPM-V-2_6-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

MiniCPM-V-2_6-rk3588-w8a8-opt-0-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

MiniCPM-V-2_6-rk3588-w8a8-opt-1-hybrid-ratio-0.0.rkllm filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

MiniCPM-V-2_6-rk3588-w8a8-opt-1-hybrid-ratio-0.5.rkllm filter=lfs diff=lfs merge=lfs -text

|

MiniCPM-V-2_6-rk3588-w8a8-opt-0-hybrid-ratio-0.0.rkllm

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6405908be4df7e4060062228ea1585208ef5e0ecbe3087bbad232b5a4387ca56

|

| 3 |

+

size 8189403140

|

MiniCPM-V-2_6-rk3588-w8a8-opt-0-hybrid-ratio-0.5.rkllm

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:6405908be4df7e4060062228ea1585208ef5e0ecbe3087bbad232b5a4387ca56

|

| 3 |

+

size 8189403140

|

MiniCPM-V-2_6-rk3588-w8a8-opt-1-hybrid-ratio-0.0.rkllm

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:61e8b15a2bb02e47d967edfbca5f29b0d1d489bdd992e8dbc7741a339e22459f

|

| 3 |

+

size 8189403140

|

MiniCPM-V-2_6-rk3588-w8a8-opt-1-hybrid-ratio-0.5.rkllm

ADDED

|

@@ -0,0 +1,3 @@

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:61e8b15a2bb02e47d967edfbca5f29b0d1d489bdd992e8dbc7741a339e22459f

|

| 3 |

+

size 8189403140

|
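Each `.rkllm` entry above is a Git LFS pointer rather than the model binary itself: three `key value` lines giving the spec version, the SHA-256 object id, and the size in bytes. The sketch below shows one way to read such a pointer in Python. `parse_lfs_pointer` is a hypothetical helper written for illustration, not part of `huggingface_hub` or Git LFS, and it assumes the pointer contains only the three keys shown in this commit.

```python
def parse_lfs_pointer(text):
    """Parse the key/value lines of a Git LFS pointer file into a dict."""
    fields = {}
    for line in text.strip().splitlines():
        # Each line is "<key> <value>"; split on the first space only.
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid value has the form "<hash algorithm>:<hex digest>".
    algo, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "algo": algo,
        "digest": digest,
        "size": int(fields["size"]),
    }


# Pointer text copied from the first .rkllm file in this commit.
pointer = """\
version https://git-lfs.github.com/spec/v1
oid sha256:6405908be4df7e4060062228ea1585208ef5e0ecbe3087bbad232b5a4387ca56
size 8189403140
"""

info = parse_lfs_pointer(pointer)
print(info["algo"], info["size"] / 1e9, "GB")
```

Going by the pointers in this commit, the `hybrid-ratio-0.0` and `hybrid-ratio-0.5` files share one oid at each `opt` level, so if the pointers are accurate the two files in each pair are byte-identical.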

README.md

ADDED

|

@@ -0,0 +1,365 @@

| 1 |

+

---

|

| 2 |

+

datasets:

|

| 3 |

+

- openbmb/RLAIF-V-Dataset

|

| 4 |

+

language:

|

| 5 |

+

- multilingual

|

| 6 |

+

library_name: transformers

|

| 7 |

+

pipeline_tag: image-text-to-text

|

| 8 |

+

tags:

|

| 9 |

+

- minicpm-v

|

| 10 |

+

- vision

|

| 11 |

+

- ocr

|

| 12 |

+

- multi-image

|

| 13 |

+

- video

|

| 14 |

+

- custom_code

|

| 15 |

+

---

|

| 16 |

+

# MiniCPM-V-2_6-RK3588-1.1.1

|

| 17 |

+

|

| 18 |

+

This version of MiniCPM-V-2_6 has been converted to run on the RK3588 NPU using w8a8 quantization.

|

| 19 |

+

|

| 20 |

+

This model has been optimized with the following LoRA:

|

| 21 |

+

|

| 22 |

+

Compatible with RKLLM version: 1.1.1

|

| 23 |

+

|

| 24 |

+

### Useful links:

|

| 25 |

+

[Official RKLLM GitHub](https://github.com/airockchip/rknn-llm)

|

| 26 |

+

|

| 27 |

+

[RockchipNPU Reddit](https://reddit.com/r/RockchipNPU)

|

| 28 |

+

|

| 29 |

+

[EZRKNN-LLM](https://github.com/Pelochus/ezrknn-llm/)

|

| 30 |

+

|

| 31 |

+

Pretty much anything by these folks: [marty1885](https://github.com/marty1885) and [happyme531](https://huggingface.co/happyme531)

|

| 32 |

+

|

| 33 |

+

# Original Model Card for base model, MiniCPM-V-2_6, below:

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

<h1>A GPT-4V Level MLLM for Single Image, Multi Image and Video on Your Phone</h1>

|

| 37 |

+

|

| 38 |

+

[GitHub](https://github.com/OpenBMB/MiniCPM-V) | [Demo](http://120.92.209.146:8887/)

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

## MiniCPM-V 2.6

|

| 42 |

+

|

| 43 |

+

**MiniCPM-V 2.6** is the latest and most capable model in the MiniCPM-V series. The model is built on SigLip-400M and Qwen2-7B with a total of 8B parameters. It exhibits a significant performance improvement over MiniCPM-Llama3-V 2.5, and introduces new features for multi-image and video understanding. Notable features of MiniCPM-V 2.6 include:

|

| 44 |

+

|

| 45 |

+

- 🔥 **Leading Performance.**

|

| 46 |

+

MiniCPM-V 2.6 achieves an average score of 65.2 on the latest version of OpenCompass, a comprehensive evaluation over 8 popular benchmarks. **With only 8B parameters, it surpasses widely used proprietary models like GPT-4o mini, GPT-4V, Gemini 1.5 Pro, and Claude 3.5 Sonnet** for single image understanding.

|

| 47 |

+

|

| 48 |

+

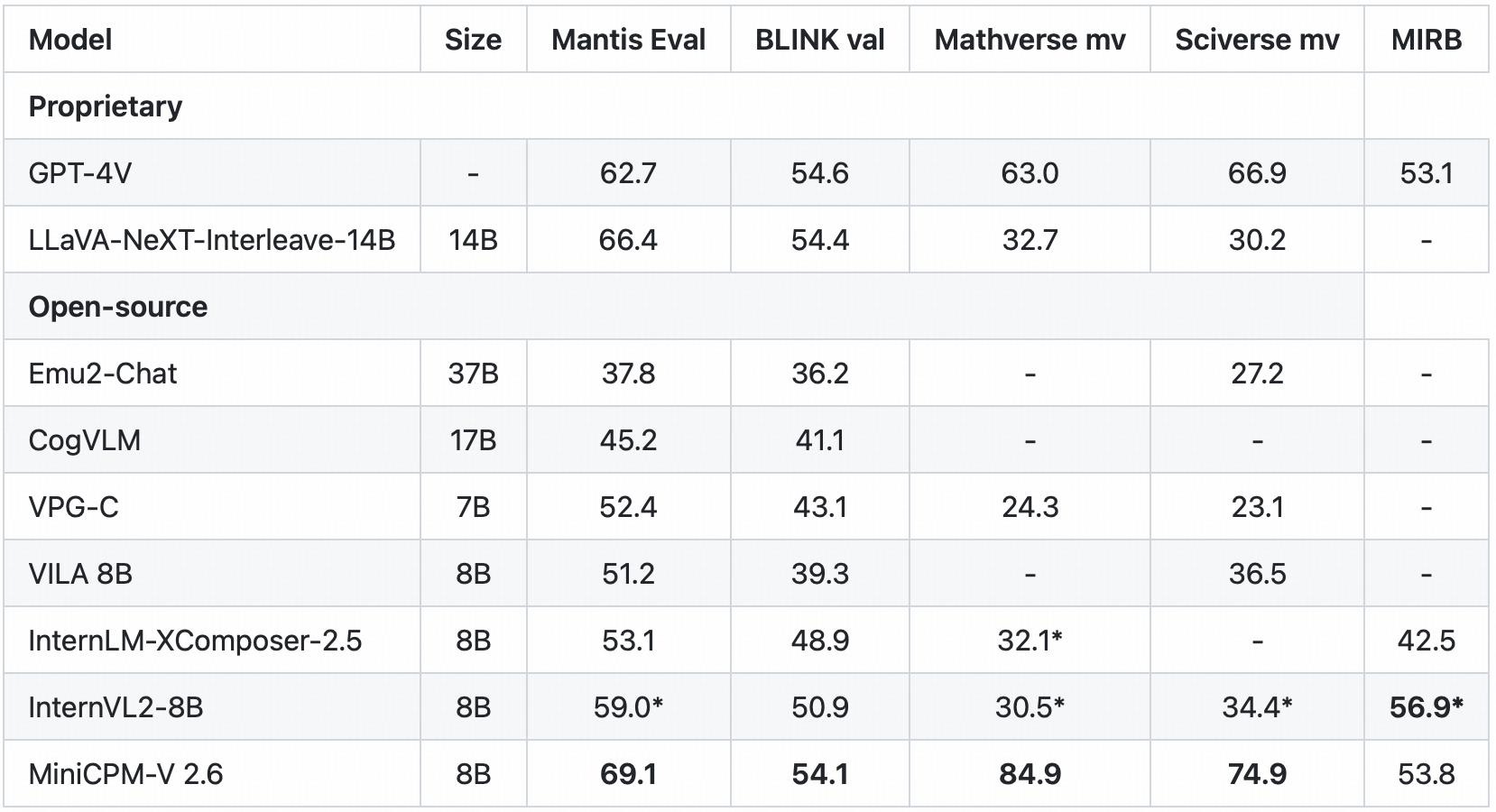

- 🖼️ **Multi Image Understanding and In-context Learning.** MiniCPM-V 2.6 can also perform **conversation and reasoning over multiple images**. It achieves **state-of-the-art performance** on popular multi-image benchmarks such as Mantis-Eval, BLINK, Mathverse mv and Sciverse mv, and also shows promising in-context learning capability.

|

| 49 |

+

|

| 50 |

+

- 🎬 **Video Understanding.** MiniCPM-V 2.6 can also **accept video inputs**, performing conversation and providing dense captions for spatial-temporal information. It outperforms **GPT-4V, Claude 3.5 Sonnet and LLaVA-NeXT-Video-34B** on Video-MME with/without subtitles.

|

| 51 |

+

|

| 52 |

+

- 💪 **Strong OCR Capability and Others.**

|

| 53 |

+

MiniCPM-V 2.6 can process images with any aspect ratio and up to 1.8 million pixels (e.g., 1344x1344). It achieves **state-of-the-art performance on OCRBench, surpassing proprietary models such as GPT-4o, GPT-4V, and Gemini 1.5 Pro**.

|

| 54 |

+

Based on the latest [RLAIF-V](https://github.com/RLHF-V/RLAIF-V/) and [VisCPM](https://github.com/OpenBMB/VisCPM) techniques, it features **trustworthy behaviors**, with significantly lower hallucination rates than GPT-4o and GPT-4V on Object HalBench, and supports **multilingual capabilities** on English, Chinese, German, French, Italian, Korean, etc.

|

| 55 |

+

|

| 56 |

+

- 🚀 **Superior Efficiency.**

|

| 57 |

+

In addition to its friendly size, MiniCPM-V 2.6 also shows **state-of-the-art token density** (i.e., number of pixels encoded into each visual token). **It produces only 640 tokens when processing a 1.8M pixel image, which is 75% fewer than most models**. This directly improves the inference speed, first-token latency, memory usage, and power consumption. As a result, MiniCPM-V 2.6 can efficiently support **real-time video understanding** on end-side devices such as the iPad.

|

| 58 |

+

|

| 59 |

+

- 💫 **Easy Usage.**

|

| 60 |

+

MiniCPM-V 2.6 can be easily used in various ways: (1) [llama.cpp](https://github.com/OpenBMB/llama.cpp/blob/minicpmv-main/examples/llava/README-minicpmv2.6.md) and [ollama](https://github.com/OpenBMB/ollama/tree/minicpm-v2.6) support for efficient CPU inference on local devices, (2) [int4](https://huggingface.co/openbmb/MiniCPM-V-2_6-int4) and [GGUF](https://huggingface.co/openbmb/MiniCPM-V-2_6-gguf) format quantized models in 16 sizes, (3) [vLLM](https://github.com/OpenBMB/MiniCPM-V/tree/main?tab=readme-ov-file#inference-with-vllm) support for high-throughput and memory-efficient inference, (4) fine-tuning on new domains and tasks, (5) quick local WebUI demo setup with [Gradio](https://github.com/OpenBMB/MiniCPM-V/tree/main?tab=readme-ov-file#chat-with-our-demo-on-gradio) and (6) online web [demo](http://120.92.209.146:8887).

|

| 61 |

+

|

| 62 |

+

### Evaluation <!-- omit in toc -->

|

| 63 |

+

<div align="center">

|

| 64 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/radar_final.png" width=66% />

|

| 65 |

+

</div>

|

| 66 |

+

|

| 67 |

+

Single image results on OpenCompass, MME, MMVet, OCRBench, MMMU, MathVista, MMB, AI2D, TextVQA, DocVQA, HallusionBench, Object HalBench:

|

| 68 |

+

<div align="center">

|

| 69 |

+

|

| 70 |

+

|

| 71 |

+

|

| 72 |

+

</div>

|

| 73 |

+

|

| 74 |

+

<sup>*</sup> We evaluate this benchmark using chain-of-thought prompting.

|

| 75 |

+

|

| 76 |

+

<sup>+</sup> Token Density: number of pixels encoded into each visual token at maximum resolution, i.e., # pixels at maximum resolution / # visual tokens.

|

| 77 |

+

|

| 78 |

+

Note: For proprietary models, we calculate token density based on the image encoding charging strategy defined in the official API documentation, which provides an upper-bound estimation.
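The token density definition above can be checked with a few lines of arithmetic. The sketch below applies the formula to the figures quoted earlier in this card (a 1344x1344 image encoded into 640 visual tokens). `token_density` is a hypothetical helper name introduced here for illustration only.

```python
def token_density(width, height, num_visual_tokens):
    """Pixels encoded per visual token: (# pixels) / (# visual tokens)."""
    return (width * height) / num_visual_tokens


# Figures taken from the model card: 1344x1344 input, 640 visual tokens.
pixels = 1344 * 1344
density = token_density(1344, 1344, 640)
print(f"{pixels} pixels / 640 tokens = {density:.1f} pixels per token")
```

With these inputs, each visual token stands for roughly 2,822 pixels.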

|

| 79 |

+

|

| 80 |

+

|

| 81 |

+

<details>

|

| 82 |

+

<summary>Click to view multi-image results on Mantis Eval, BLINK Val, Mathverse mv, Sciverse mv, MIRB.</summary>

|

| 83 |

+

<div align="center">

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

|

| 87 |

+

</div>

|

| 88 |

+

<sup>*</sup> We evaluate the officially released checkpoint by ourselves.

|

| 89 |

+

</details>

|

| 90 |

+

|

| 91 |

+

<details>

|

| 92 |

+

<summary>Click to view video results on Video-MME and Video-ChatGPT.</summary>

|

| 93 |

+

<div align="center">

|

| 94 |

+

|

| 95 |

+

<!--  -->

|

| 96 |

+

|

| 97 |

+

|

| 98 |

+

</div>

|

| 99 |

+

|

| 100 |

+

</details>

|

| 101 |

+

|

| 102 |

+

|

| 103 |

+

<details>

|

| 104 |

+

<summary>Click to view few-shot results on TextVQA, VizWiz, VQAv2, OK-VQA.</summary>

|

| 105 |

+

<div align="center">

|

| 106 |

+

|

| 107 |

+

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

</div>

|

| 111 |

+

* denotes zero image shot and two additional text shots following Flamingo.

|

| 112 |

+

|

| 113 |

+

<sup>+</sup> We evaluate the pretraining ckpt without SFT.

|

| 114 |

+

</details>

|

| 115 |

+

|

| 116 |

+

### Examples <!-- omit in toc -->

|

| 117 |

+

|

| 118 |

+

<div style="display: flex; flex-direction: column; align-items: center;">

|

| 119 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/multi_img-bike.png" alt="Bike" style="margin-bottom: -20px;">

|

| 120 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/multi_img-menu.png" alt="Menu" style="margin-bottom: -20px;">

|

| 121 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/multi_img-code.png" alt="Code" style="margin-bottom: -20px;">

|

| 122 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/ICL-Mem.png" alt="Mem" style="margin-bottom: -20px;">

|

| 123 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/multiling-medal.png" alt="medal" style="margin-bottom: 10px;">

|

| 124 |

+

</div>

|

| 125 |

+

<details>

|

| 126 |

+

<summary>Click to view more cases.</summary>

|

| 127 |

+

<div style="display: flex; flex-direction: column; align-items: center;">

|

| 128 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/ICL-elec.png" alt="elec" style="margin-bottom: -20px;">

|

| 129 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/minicpmv2_6/multiling-olympic.png" alt="Menu" style="margin-bottom: 10px;">

|

| 130 |

+

</div>

|

| 131 |

+

</details>

|

| 132 |

+

|

| 133 |

+

We deploy MiniCPM-V 2.6 on end devices. The demo video is the raw screen recording on an iPad Pro without editing.

|

| 134 |

+

|

| 135 |

+

<div style="display: flex; justify-content: center;">

|

| 136 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/gif_cases/ai.gif" width="48%" style="margin: 0 10px;"/>

|

| 137 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/gif_cases/beer.gif" width="48%" style="margin: 0 10px;"/>

|

| 138 |

+

</div>

|

| 139 |

+

<div style="display: flex; justify-content: center; margin-top: 20px;">

|

| 140 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/gif_cases/ticket.gif" width="48%" style="margin: 0 10px;"/>

|

| 141 |

+

<img src="https://github.com/OpenBMB/MiniCPM-V/raw/main/assets/gif_cases/wfh.gif" width="48%" style="margin: 0 10px;"/>

|

| 142 |

+

</div>

|

| 143 |

+

|

| 144 |

+

<div style="text-align: center;">

|

| 145 |

+

<video controls autoplay src="https://cdn-uploads.huggingface.co/production/uploads/64abc4aa6cadc7aca585dddf/mXAEFQFqNd4nnvPk7r5eX.mp4"></video>

|

| 146 |

+

<!-- <video controls autoplay src="https://cdn-uploads.huggingface.co/production/uploads/64abc4aa6cadc7aca585dddf/fEWzfHUdKnpkM7sdmnBQa.mp4"></video> -->

|

| 147 |

+

|

| 148 |

+

</div>

|

| 149 |

+

|

| 150 |

+

|

| 151 |

+

|

| 152 |

+

## Demo

|

| 153 |

+

Click here to try the Demo of [MiniCPM-V 2.6](http://120.92.209.146:8887/).

|

| 154 |

+

|

| 155 |

+

|

| 156 |

+

## Usage

|

| 157 |

+

Inference using Hugging Face Transformers on NVIDIA GPUs. Requirements tested on Python 3.10:

|

| 158 |

+

```

|

| 159 |

+

Pillow==10.1.0

|

| 160 |

+

torch==2.1.2

|

| 161 |

+

torchvision==0.16.2

|

| 162 |

+

transformers==4.40.0

|

| 163 |

+

sentencepiece==0.1.99

|

| 164 |

+

decord

|

| 165 |

+

```
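Before running the examples, it can help to confirm that the installed packages match the pins listed above. The following is a minimal sketch using only the standard library. `check_pins` and `PINS` are names made up for this example, and ignoring a local build suffix such as `+cu121` is an assumption about how a match should be judged.

```python
from importlib.metadata import PackageNotFoundError, version

# Pins copied from the requirements list above; decord is listed without a version.
PINS = {
    "Pillow": "10.1.0",
    "torch": "2.1.2",
    "torchvision": "0.16.2",
    "transformers": "4.40.0",
    "sentencepiece": "0.1.99",
    "decord": None,
}


def check_pins(pins):
    """Return {package: (installed_version_or_None, status)} for each pin."""
    report = {}
    for name, wanted in pins.items():
        try:
            installed = version(name)
        except PackageNotFoundError:
            report[name] = (None, "missing")
            continue
        # Assumption: drop a local build suffix ("2.1.2+cu121" -> "2.1.2").
        base = installed.split("+")[0]
        status = "ok" if wanted is None or base == wanted else "mismatch"
        report[name] = (installed, status)
    return report


for name, (installed, status) in check_pins(PINS).items():
    print(f"{name}: installed={installed} status={status}")
```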

|

| 166 |

+

|

| 167 |

+

```python

|

| 168 |

+

# test.py

|

| 169 |

+

import torch

|

| 170 |

+

from PIL import Image

|

| 171 |

+

from transformers import AutoModel, AutoTokenizer

|

| 172 |

+

|

| 173 |

+

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True,

|

| 174 |

+

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

|

| 175 |

+

model = model.eval().cuda()

|

| 176 |

+

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True)

|

| 177 |

+

|

| 178 |

+

image = Image.open('xx.jpg').convert('RGB')

|

| 179 |

+

question = 'What is in the image?'

|

| 180 |

+

msgs = [{'role': 'user', 'content': [image, question]}]

|

| 181 |

+

|

| 182 |

+

res = model.chat(

|

| 183 |

+

image=None,

|

| 184 |

+

msgs=msgs,

|

| 185 |

+

tokenizer=tokenizer

|

| 186 |

+

)

|

| 187 |

+

print(res)

|

| 188 |

+

|

| 189 |

+

## if you want to use streaming, please make sure sampling=True and stream=True

|

| 190 |

+

## the model.chat will return a generator

|

| 191 |

+

res = model.chat(

|

| 192 |

+

image=None,

|

| 193 |

+

msgs=msgs,

|

| 194 |

+

tokenizer=tokenizer,

|

| 195 |

+

sampling=True,

|

| 196 |

+

stream=True

|

| 197 |

+

)

|

| 198 |

+

|

| 199 |

+

generated_text = ""

|

| 200 |

+

for new_text in res:

|

| 201 |

+

    generated_text += new_text

|

| 202 |

+

    print(new_text, flush=True, end='')

|

| 203 |

+

```

|

| 204 |

+

|

| 205 |

+

### Chat with multiple images

|

| 206 |

+

<details>

|

| 207 |

+

<summary> Click to show Python code running MiniCPM-V 2.6 with multiple images input. </summary>

|

| 208 |

+

|

| 209 |

+

```python

|

| 210 |

+

import torch

|

| 211 |

+

from PIL import Image

|

| 212 |

+

from transformers import AutoModel, AutoTokenizer

|

| 213 |

+

|

| 214 |

+

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True,

|

| 215 |

+

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

|

| 216 |

+

model = model.eval().cuda()

|

| 217 |

+

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True)

|

| 218 |

+

|

| 219 |

+

image1 = Image.open('image1.jpg').convert('RGB')

|

| 220 |

+

image2 = Image.open('image2.jpg').convert('RGB')

|

| 221 |

+

question = 'Compare image 1 and image 2, tell me about the differences between image 1 and image 2.'

|

| 222 |

+

|

| 223 |

+

msgs = [{'role': 'user', 'content': [image1, image2, question]}]

|

| 224 |

+

|

| 225 |

+

answer = model.chat(

|

| 226 |

+

image=None,

|

| 227 |

+

msgs=msgs,

|

| 228 |

+

tokenizer=tokenizer

|

| 229 |

+

)

|

| 230 |

+

print(answer)

|

| 231 |

+

```

|

| 232 |

+

</details>

|

| 233 |

+

|

| 234 |

+

### In-context few-shot learning

|

| 235 |

+

<details>

|

| 236 |

+

<summary> Click to view Python code running MiniCPM-V 2.6 with few-shot input. </summary>

|

| 237 |

+

|

| 238 |

+

```python

|

| 239 |

+

import torch

|

| 240 |

+

from PIL import Image

|

| 241 |

+

from transformers import AutoModel, AutoTokenizer

|

| 242 |

+

|

| 243 |

+

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True,

|

| 244 |

+

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

|

| 245 |

+

model = model.eval().cuda()

|

| 246 |

+

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True)

|

| 247 |

+

|

| 248 |

+

question = "production date"

|

| 249 |

+

image1 = Image.open('example1.jpg').convert('RGB')

|

| 250 |

+

answer1 = "2023.08.04"

|

| 251 |

+

image2 = Image.open('example2.jpg').convert('RGB')

|

| 252 |

+

answer2 = "2007.04.24"

|

| 253 |

+

image_test = Image.open('test.jpg').convert('RGB')

|

| 254 |

+

|

| 255 |

+

msgs = [

|

| 256 |

+

{'role': 'user', 'content': [image1, question]}, {'role': 'assistant', 'content': [answer1]},

|

| 257 |

+

{'role': 'user', 'content': [image2, question]}, {'role': 'assistant', 'content': [answer2]},

|

| 258 |

+

{'role': 'user', 'content': [image_test, question]}

|

| 259 |

+

]

|

| 260 |

+

|

| 261 |

+

answer = model.chat(

|

| 262 |

+

image=None,

|

| 263 |

+

msgs=msgs,

|

| 264 |

+

tokenizer=tokenizer

|

| 265 |

+

)

|

| 266 |

+

print(answer)

|

| 267 |

+

```

|

| 268 |

+

</details>
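The few-shot prompt above follows a fixed pattern: each solved example is a user turn (image plus question) followed by an assistant turn (the answer), and the list ends with the unanswered test turn. A small helper can build that list for any number of examples. This is a sketch: `build_few_shot_msgs` is a hypothetical function, not part of the MiniCPM-V code, and plain strings stand in for PIL images so it runs without image files.

```python
def build_few_shot_msgs(examples, test_image, question):
    """Build the alternating user/assistant message list for few-shot prompting.

    examples: list of (image, answer) pairs shown to the model as solved cases.
    """
    msgs = []
    for image, answer in examples:
        msgs.append({'role': 'user', 'content': [image, question]})
        msgs.append({'role': 'assistant', 'content': [answer]})
    # The final user turn carries the image the model should answer for.
    msgs.append({'role': 'user', 'content': [test_image, question]})
    return msgs


# Placeholder strings are used instead of Image.open(...) results.
msgs = build_few_shot_msgs(
    [('<example1.jpg>', '2023.08.04'), ('<example2.jpg>', '2007.04.24')],
    '<test.jpg>',
    'production date',
)
print([m['role'] for m in msgs])
```

In real use, the placeholders would be replaced by `Image.open(path).convert('RGB')` objects and the resulting list passed as `msgs` to `model.chat`, as in the example above.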

|

| 269 |

+

|

| 270 |

+

### Chat with video

|

| 271 |

+

<details>

|

| 272 |

+

<summary> Click to view Python code running MiniCPM-V 2.6 with video input. </summary>

|

| 273 |

+

|

| 274 |

+

```python

|

| 275 |

+

import torch

|

| 276 |

+

from PIL import Image

|

| 277 |

+

from transformers import AutoModel, AutoTokenizer

|

| 278 |

+

from decord import VideoReader, cpu # pip install decord

|

| 279 |

+

|

| 280 |

+

model = AutoModel.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True,

|

| 281 |

+

attn_implementation='sdpa', torch_dtype=torch.bfloat16) # sdpa or flash_attention_2, no eager

|

| 282 |

+

model = model.eval().cuda()

|

| 283 |

+

tokenizer = AutoTokenizer.from_pretrained('openbmb/MiniCPM-V-2_6', trust_remote_code=True)

|

| 284 |

+

|

| 285 |

+

MAX_NUM_FRAMES=64 # if cuda OOM set a smaller number

|

| 286 |

+

|

| 287 |

+

def encode_video(video_path):

|

| 288 |

+

    def uniform_sample(l, n):

|

| 289 |

+

        gap = len(l) / n

|

| 290 |

+

        idxs = [int(i * gap + gap / 2) for i in range(n)]

|

| 291 |

+

        return [l[i] for i in idxs]

|

| 292 |

+

|

| 293 |

+

    vr = VideoReader(video_path, ctx=cpu(0))

|

| 294 |

+

    sample_fps = round(vr.get_avg_fps() / 1) # FPS

|

| 295 |

+

    frame_idx = [i for i in range(0, len(vr), sample_fps)]

|

| 296 |

+

    if len(frame_idx) > MAX_NUM_FRAMES:

|

| 297 |

+

        frame_idx = uniform_sample(frame_idx, MAX_NUM_FRAMES)

|

| 298 |

+

    frames = vr.get_batch(frame_idx).asnumpy()

|

| 299 |

+

    frames = [Image.fromarray(v.astype('uint8')) for v in frames]

|

| 300 |

+

    print('num frames:', len(frames))

|

| 301 |

+

    return frames

|

| 302 |

+

|

| 303 |

+

video_path ="video_test.mp4"

|

| 304 |

+

frames = encode_video(video_path)

|

| 305 |

+

question = "Describe the video"

|

| 306 |

+

msgs = [

|

| 307 |

+

{'role': 'user', 'content': frames + [question]},

|

| 308 |

+

]

|

| 309 |

+

|

| 310 |

+

# Set decode params for video

|

| 311 |

+

params={}

|

| 312 |

+

params["use_image_id"] = False

|

| 313 |

+

params["max_slice_nums"] = 2 # use 1 if cuda OOM and video resolution > 448*448

|

| 314 |

+

|

| 315 |

+

answer = model.chat(

|

| 316 |

+

image=None,

|

| 317 |

+

msgs=msgs,

|

| 318 |

+

tokenizer=tokenizer,

|

| 319 |

+

**params

|

| 320 |

+

)

|

| 321 |

+

print(answer)

|

| 322 |

+

```

|

| 323 |

+

</details>
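The frame-selection logic in the video example can be tried on its own, without a GPU, `decord`, or a video file. The sketch below reproduces the two steps (roughly one frame per second, then uniform thinning to a cap) on plain integers. `select_frame_indices` is a hypothetical wrapper introduced here, and the `max(1, ...)` guard is an addition that the original snippet does not have.

```python
def uniform_sample(items, n):
    """Pick n items at the centres of n equal-width bins over the list."""
    gap = len(items) / n
    idxs = [int(i * gap + gap / 2) for i in range(n)]
    return [items[i] for i in idxs]


def select_frame_indices(total_frames, avg_fps, max_frames=64):
    """Take about one frame per second, then thin to max_frames if needed."""
    step = max(1, round(avg_fps))  # guard added for this sketch: avoids a zero step
    frame_idx = list(range(0, total_frames, step))
    if len(frame_idx) > max_frames:
        frame_idx = uniform_sample(frame_idx, max_frames)
    return frame_idx


# A 10-second clip and a 200-second clip, both at 30 fps.
short = select_frame_indices(total_frames=300, avg_fps=30.0)
long = select_frame_indices(total_frames=6000, avg_fps=30.0)
print(len(short), short[:3])
print(len(long), long[:3], long[-1])
```

Short clips keep every one-second sample, while longer clips are capped at `max_frames` indices spread evenly across the video, which is what bounds memory use in the original example.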

|

| 324 |

+

|

| 325 |

+

|

| 326 |

+

Please look at [GitHub](https://github.com/OpenBMB/MiniCPM-V) for more detail about usage.

|

| 327 |

+

|

| 328 |

+

|

| 329 |

+

## Inference with llama.cpp<a id="llamacpp"></a>

|

| 330 |

+

MiniCPM-V 2.6 can run with llama.cpp. See our fork of [llama.cpp](https://github.com/OpenBMB/llama.cpp/tree/minicpm-v2.5/examples/minicpmv) for more detail.

|

| 331 |

+

|

| 332 |

+

|

| 333 |

+

## Int4 quantized version

|

| 334 |

+

Download the int4 quantized version for lower GPU memory (7GB) usage: [MiniCPM-V-2_6-int4](https://huggingface.co/openbmb/MiniCPM-V-2_6-int4).

|

| 335 |

+

|

| 336 |

+

|

| 337 |

+

## License

|

| 338 |

+

#### Model License

|

| 339 |

+

* The code in this repo is released under the [Apache-2.0](https://github.com/OpenBMB/MiniCPM/blob/main/LICENSE) License.

|

| 340 |

+

* The usage of MiniCPM-V series model weights must strictly follow [MiniCPM Model License.md](https://github.com/OpenBMB/MiniCPM/blob/main/MiniCPM%20Model%20License.md).

|

| 341 |

+

* The models and weights of MiniCPM are completely free for academic research. After filling out a ["questionnaire"](https://modelbest.feishu.cn/share/base/form/shrcnpV5ZT9EJ6xYjh3Kx0J6v8g) for registration, MiniCPM-V 2.6 weights are also available for free commercial use.

|

| 342 |

+

|

| 343 |

+

|

| 344 |

+

#### Statement

|

| 345 |

+

* As an LMM, MiniCPM-V 2.6 generates content by learning from a large amount of multimodal corpora, but it cannot comprehend, express personal opinions, or make value judgments. Anything generated by MiniCPM-V 2.6 does not represent the views and positions of the model developers.

|

| 346 |

+

* We will not be liable for any problems arising from the use of the MiniCPM-V models, including but not limited to data security issues, risk of public opinion, or any risks and problems arising from the misdirection, misuse, or dissemination of the model.

|

| 347 |

+

|

| 348 |

+

## Key Techniques and Other Multimodal Projects

|

| 349 |

+

|

| 350 |

+

👏 Welcome to explore key techniques of MiniCPM-V 2.6 and other multimodal projects of our team:

|

| 351 |

+

|

| 352 |

+

[VisCPM](https://github.com/OpenBMB/VisCPM/tree/main) | [RLHF-V](https://github.com/RLHF-V/RLHF-V) | [LLaVA-UHD](https://github.com/thunlp/LLaVA-UHD) | [RLAIF-V](https://github.com/RLHF-V/RLAIF-V)

|

| 353 |

+

|

| 354 |

+

## Citation

|

| 355 |

+

|

| 356 |

+

If you find our work helpful, please consider citing our papers 📝 and liking this project ❤️!

|

| 357 |

+

|

| 358 |

+

```bib

|

| 359 |

+

@article{yao2024minicpm,

|

| 360 |

+

title={MiniCPM-V: A GPT-4V Level MLLM on Your Phone},

|

| 361 |

+

author={Yao, Yuan and Yu, Tianyu and Zhang, Ao and Wang, Chongyi and Cui, Junbo and Zhu, Hongji and Cai, Tianchi and Li, Haoyu and Zhao, Weilin and He, Zhihui and others},

|

| 362 |

+

journal={arXiv preprint arXiv:2408.01800},

|

| 363 |

+

year={2024}

|

| 364 |

+

}

|

| 365 |

+

```

|

added_tokens.json

ADDED

|

@@ -0,0 +1,25 @@

| 1 |

+

{

|

| 2 |

+

"</box>": 151651,

|

| 3 |

+

"</image>": 151647,

|

| 4 |

+

"</image_id>": 151659,

|

| 5 |

+

"</point>": 151655,

|

| 6 |

+

"</quad>": 151653,

|

| 7 |

+

"</ref>": 151649,

|

| 8 |

+

"</slice>": 151657,

|

| 9 |

+

"<box>": 151650,

|

| 10 |

+

"<image>": 151646,

|

| 11 |

+

"<image_id>": 151658,

|

| 12 |

+

"<point>": 151654,

|

| 13 |

+

"<quad>": 151652,

|

| 14 |

+

"<ref>": 151648,

|

| 15 |

+

"<slice>": 151656,

|

| 16 |

+

"<|endoftext|>": 151643,

|

| 17 |

+

"<|im_end|>": 151645,

|

| 18 |

+

"<|im_start|>": 151644,

|

| 19 |

+

"<|reserved_special_token_0|>": 151660,

|

| 20 |

+

"<|reserved_special_token_1|>": 151661,

|

| 21 |

+

"<|reserved_special_token_2|>": 151662,

|

| 22 |

+

"<|reserved_special_token_3|>": 151663,

|

| 23 |

+

"<|reserved_special_token_4|>": 151664,

|

| 24 |

+

"<|reserved_special_token_5|>": 151665

|

| 25 |

+

}

|
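The ids in `added_tokens.json` can be sanity-checked against the `vocab_size` value of 151666 given in `config.json` in this same commit. The sketch below copies the mapping from the hunk above and checks that the ids form one contiguous block ending at `vocab_size - 1`, and that each opening tag is followed by its closing tag. The variable names are illustrative only.

```python
# Mapping copied from added_tokens.json in this commit.
ADDED_TOKENS = {
    "</box>": 151651, "</image>": 151647, "</image_id>": 151659,
    "</point>": 151655, "</quad>": 151653, "</ref>": 151649,
    "</slice>": 151657, "<box>": 151650, "<image>": 151646,
    "<image_id>": 151658, "<point>": 151654, "<quad>": 151652,
    "<ref>": 151648, "<slice>": 151656, "<|endoftext|>": 151643,
    "<|im_end|>": 151645, "<|im_start|>": 151644,
    "<|reserved_special_token_0|>": 151660,
    "<|reserved_special_token_1|>": 151661,
    "<|reserved_special_token_2|>": 151662,
    "<|reserved_special_token_3|>": 151663,
    "<|reserved_special_token_4|>": 151664,
    "<|reserved_special_token_5|>": 151665,
}
VOCAB_SIZE = 151666  # "vocab_size" from config.json in this commit

ids = sorted(ADDED_TOKENS.values())
is_contiguous = ids == list(range(ids[0], ids[-1] + 1))
fills_vocab = ids[-1] + 1 == VOCAB_SIZE

# Each opening tag should be immediately followed by its closing tag in id order.
pairs_ok = all(
    ADDED_TOKENS[f"</{name}>"] == ADDED_TOKENS[f"<{name}>"] + 1
    for name in ("image", "ref", "box", "quad", "point", "slice", "image_id")
)
print(len(ids), ids[0], ids[-1], is_contiguous, fills_vocab, pairs_ok)
```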

config.json

ADDED

|

@@ -0,0 +1,54 @@

| 1 |

+

{

|

| 2 |

+

"_name_or_path": "openbmb/MiniCPM-V-2_6",

|

| 3 |

+

"version": 2.6,

|

| 4 |

+

"architectures": [

|

| 5 |

+

"MiniCPMV"

|

| 6 |

+

],

|

| 7 |

+

"auto_map": {

|

| 8 |

+

"AutoConfig": "configuration_minicpm.MiniCPMVConfig",

|

| 9 |

+

"AutoModel": "modeling_minicpmv.MiniCPMV",

|

| 10 |

+

"AutoModelForCausalLM": "modeling_minicpmv.MiniCPMV"

|

| 11 |

+

},

|

| 12 |

+

"attention_dropout": 0.0,

|

| 13 |

+

"bos_token_id": 151643,

|

| 14 |

+

"eos_token_id": 151645,

|

| 15 |

+

"hidden_act": "silu",

|

| 16 |

+

"hidden_size": 3584,

|

| 17 |

+

"initializer_range": 0.02,

|

| 18 |

+

"intermediate_size": 18944,

|

| 19 |

+

"max_position_embeddings": 32768,

|

| 20 |

+

"max_window_layers": 28,

|

| 21 |

+

"num_attention_heads": 28,

|

| 22 |

+

"num_hidden_layers": 28,

|

| 23 |

+

"num_key_value_heads": 4,

|

| 24 |

+

"rms_norm_eps": 1e-06,

|

| 25 |

+

"rope_theta": 1000000.0,

|

| 26 |

+

"sliding_window": 131072,

|

| 27 |

+

"tie_word_embeddings": false,

|

| 28 |

+

"torch_dtype": "bfloat16",

|

| 29 |

+

"transformers_version": "4.40.0",

|

| 30 |

+

"use_cache": true,

|

| 31 |

+

"use_sliding_window": false,

|

| 32 |

+

"vocab_size": 151666,

|

| 33 |

+

"batch_vision_input": true,

|

| 34 |

+

"drop_vision_last_layer": false,

|

| 35 |

+

"image_size": 448,

|

| 36 |

+

"model_type": "minicpmv",

|

| 37 |

+

"patch_size": 14,

|

| 38 |

+

"query_num": 64,

|

| 39 |

+

"slice_config": {

|

| 40 |

+

"max_slice_nums": 9,

|

| 41 |

+

"patch_size": 14,

|

| 42 |

+

"model_type": "minicpmv"

|

| 43 |

+

},

|

| 44 |

+

"slice_mode": true,

|

| 45 |

+

"vision_config": {

|

| 46 |

+

"hidden_size": 1152,

|

| 47 |

+

"image_size": 980,

|

| 48 |

+

"intermediate_size": 4304,

|

| 49 |

+

"model_type": "siglip",

|

| 50 |

+

"num_attention_heads": 16,

|

| 51 |

+

"num_hidden_layers": 27,

|

| 52 |

+

"patch_size": 14

|

| 53 |

+

}

|

| 54 |

+

}

|
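A few derived quantities follow directly from the `config.json` values above. The sketch below computes the attention head size, the grouped-query ratio, a per-token KV cache estimate, and the vision patch grid. The KV cache figure assumes bfloat16 storage as declared by `torch_dtype` and need not describe the w8a8 RKLLM runtime. The visual-token count assumes one overview image plus up to `max_slice_nums` slices, each compressed to `query_num` tokens; that assumption is not stated in the config itself.

```python
# Values copied from the config.json hunk above.
cfg = {
    "hidden_size": 3584,
    "num_attention_heads": 28,
    "num_key_value_heads": 4,
    "num_hidden_layers": 28,
    "image_size": 448,
    "patch_size": 14,
    "query_num": 64,
    "max_slice_nums": 9,
}

# Size of each attention head and number of query heads sharing one KV head.
head_dim = cfg["hidden_size"] // cfg["num_attention_heads"]
queries_per_kv = cfg["num_attention_heads"] // cfg["num_key_value_heads"]

# KV cache per token: keys and values (2 tensors) per layer, 2 bytes per bfloat16 value.
kv_bytes_per_token = (
    2 * cfg["num_hidden_layers"] * cfg["num_key_value_heads"] * head_dim * 2
)

# Patches along one side of a 448x448 slice.
patches_per_side = cfg["image_size"] // cfg["patch_size"]

# Assumption: one overview image plus max_slice_nums slices, query_num tokens each.
max_visual_tokens = (1 + cfg["max_slice_nums"]) * cfg["query_num"]

print(head_dim, queries_per_kv, kv_bytes_per_token, patches_per_side, max_visual_tokens)
```

Under the stated assumption, the maximum of 640 visual tokens agrees with the figure quoted in the model card for a 1.8M pixel image.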

generation_config.json

ADDED

|

@@ -0,0 +1,6 @@

| 1 |

+

{

|

| 2 |

+

"_from_model_config": true,

|

| 3 |

+

"bos_token_id": 151643,

|

| 4 |

+

"eos_token_id": 151645,

|

| 5 |

+

"transformers_version": "4.40.0"

|

| 6 |

+

}

|

model.safetensors.index.json

ADDED

|

@@ -0,0 +1,796 @@


+{
+  "metadata": {
+    "total_size": 16198350304
+  },
+  "weight_map": {
+    "llm.lm_head.weight": "model-00004-of-00004.safetensors",
+    "llm.model.embed_tokens.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.input_layernorm.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
+    "llm.model.layers.0.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.input_layernorm.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.mlp.down_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.mlp.gate_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.mlp.up_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.post_attention_layernorm.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.k_proj.bias": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.k_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.o_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.q_proj.bias": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.q_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.v_proj.bias": "model-00001-of-00004.safetensors",
+    "llm.model.layers.1.self_attn.v_proj.weight": "model-00001-of-00004.safetensors",
+    "llm.model.layers.10.input_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.10.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.input_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.11.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.input_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.v_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.12.self_attn.v_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.input_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.mlp.down_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.mlp.gate_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.mlp.up_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.post_attention_layernorm.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.self_attn.k_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.self_attn.k_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.self_attn.o_proj.weight": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.self_attn.q_proj.bias": "model-00002-of-00004.safetensors",
+    "llm.model.layers.13.self_attn.q_proj.weight": "model-00002-of-00004.safetensors",

| 78 |

+