Datasets:

TheGreatRambler

committed on

Commit

•

10497ef

1

Parent(s):

98276f2

Create README

README.md

ADDED

|

@@ -0,0 +1,148 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

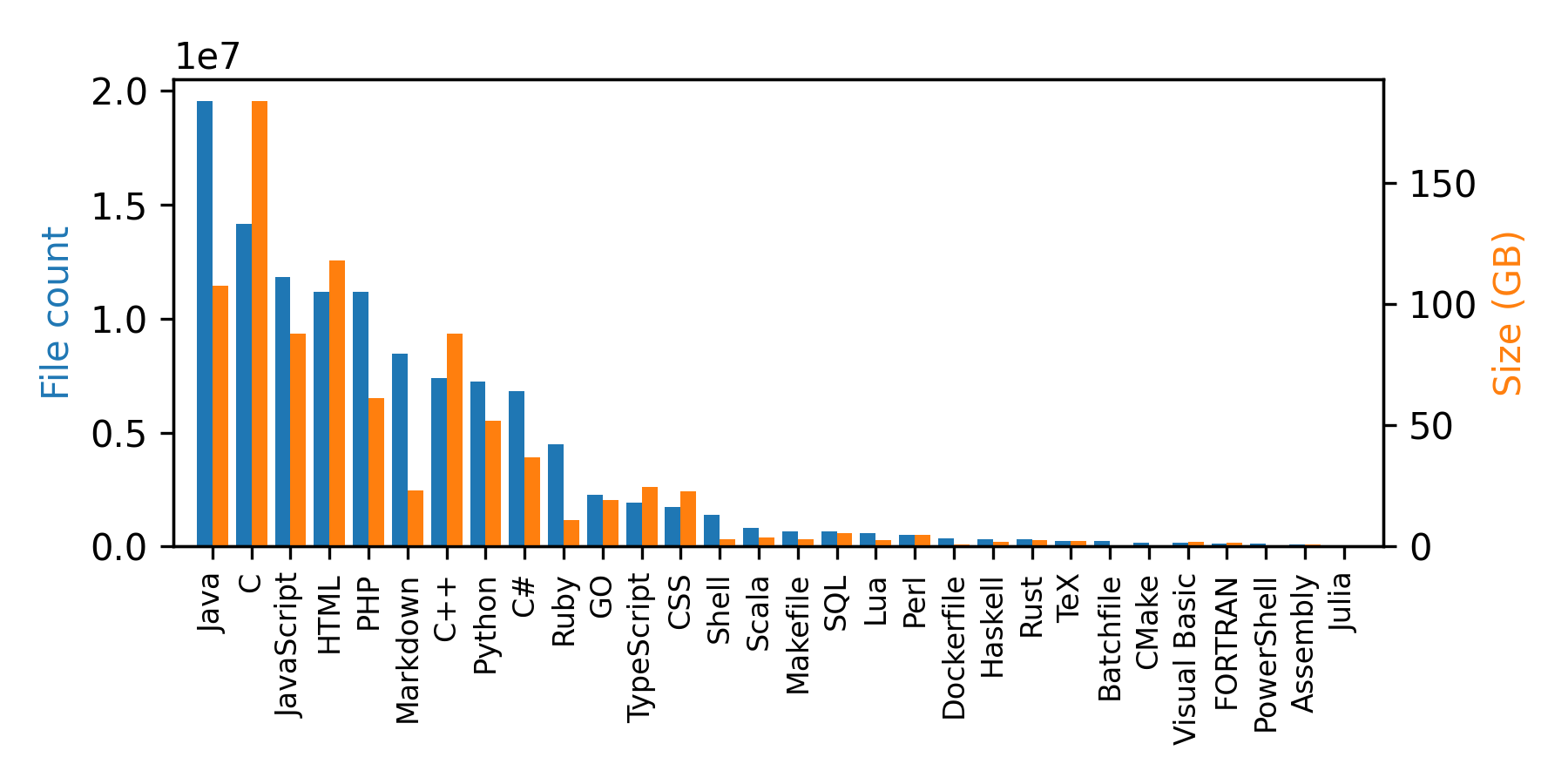

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

---
language:
- multilingual
license:
- cc-by-nc-sa-4.0
multilinguality:
- multilingual
size_categories:
- 1K<n<10K
source_datasets:
- original
task_categories:
- text-generation
- structure-prediction
- object-detection
- text-mining
- information-retrieval
- other
task_ids:
- other
pretty_name: Mario Maker 2 user badges
---

# Mario Maker 2 user badges
Part of the [Mario Maker 2 Dataset Collection](https://tgrcode.com/posts/mario_maker_2_datasets)

## Dataset Description
The Mario Maker 2 user badges dataset consists of 9328 user badges (they are capped at 10k globally) from Nintendo's online service and supplements `TheGreatRambler/mm2_user`. The dataset was created using the self-hosted [Mario Maker 2 API](https://tgrcode.com/posts/mario_maker_2_api) over the course of one month in February 2022.

### How to use it
You can load and iterate through the dataset with the following code:

```python
from datasets import load_dataset

# Load the only available split and print the first row.
ds = load_dataset("TheGreatRambler/mm2_user_badges", split="train")
print(next(iter(ds)))

# OUTPUT:
# {
#     'pid': '1779763691699286988',
#     'type': 4,
#     'rank': 6
# }
```

Each row is a badge awarded to the player identified by `pid`. The players themselves are listed in `TheGreatRambler/mm2_user`.
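
Since one player can hold several badges, it is often convenient to collect the rows per `pid`. The following is a minimal sketch of that grouping, not part of the dataset tooling: the helper name `group_badges_by_player` is hypothetical, and two of the three sample rows are made up for illustration (only the first comes from the sample output above).

```python
from collections import defaultdict


def group_badges_by_player(rows):
    """Map each player ID to the list of (type, rank) badges that player holds."""
    grouped = defaultdict(list)
    for row in rows:
        grouped[row["pid"]].append((row["type"], row["rank"]))
    return dict(grouped)


# The first row is the sample row from this README; the other two are hypothetical.
sample_rows = [
    {"pid": "1779763691699286988", "type": 4, "rank": 6},
    {"pid": "1779763691699286988", "type": 6, "rank": 5},  # hypothetical
    {"pid": "0000000000000000001", "type": 0, "rank": 1},  # hypothetical
]

badges = group_badges_by_player(sample_rows)
print(badges["1779763691699286988"])  # [(4, 6), (6, 5)]
```

The function only needs an iterable of dict-like rows, so it should also accept the `ds` object returned by `load_dataset`. Looking the resulting IDs up in `TheGreatRambler/mm2_user` assumes that dataset exposes a matching `pid` column, which this README does not confirm.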

## Data Structure

### Data Instances

```python
{
    'pid': '1779763691699286988',
    'type': 4,
    'rank': 6
}
```

### Data Fields

|Field|Type|Description|
|---|---|---|
|pid|string|Player ID|
|type|int|The kind of badge; see `BadgeTypes` under Enums|
|rank|int|The rank of the badge; see `BadgeRanks` under Enums|

### Data Splits

The dataset only contains a train split.

## Enums

The dataset contains some enum integer fields. The following dictionaries can be used to convert them back to their string equivalents:

```python
BadgeTypes = {
    0: "Maker Points (All-Time)",
    1: "Endless Challenge (Easy)",
    2: "Endless Challenge (Normal)",
    3: "Endless Challenge (Expert)",
    4: "Endless Challenge (Super Expert)",
    5: "Multiplayer Versus",
    6: "Number of Clears",
    7: "Number of First Clears",
    8: "Number of World Records",
    9: "Maker Points (Weekly)"
}

BadgeRanks = {
    6: "Bronze",
    5: "Silver",
    4: "Gold",
    3: "Bronze Ribbon",
    2: "Silver Ribbon",
    1: "Gold Ribbon"
}
```
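
As an illustration of how these mappings might be applied, here is a small sketch that turns one row into a readable label. The helper `describe_badge` and its fallback text for unknown values are assumptions made for this example; the two dictionaries are repeated from above only so that the block runs on its own.

```python
BadgeTypes = {
    0: "Maker Points (All-Time)",
    1: "Endless Challenge (Easy)",
    2: "Endless Challenge (Normal)",
    3: "Endless Challenge (Expert)",
    4: "Endless Challenge (Super Expert)",
    5: "Multiplayer Versus",
    6: "Number of Clears",
    7: "Number of First Clears",
    8: "Number of World Records",
    9: "Maker Points (Weekly)",
}

BadgeRanks = {
    6: "Bronze",
    5: "Silver",
    4: "Gold",
    3: "Bronze Ribbon",
    2: "Silver Ribbon",
    1: "Gold Ribbon",
}


def describe_badge(row):
    """Return 'badge type: badge rank' for one row (hypothetical helper)."""
    # Fall back to a placeholder instead of raising on values not listed above.
    badge_type = BadgeTypes.get(row["type"], f"Unknown type {row['type']}")
    badge_rank = BadgeRanks.get(row["rank"], f"Unknown rank {row['rank']}")
    return f"{badge_type}: {badge_rank}"


row = {"pid": "1779763691699286988", "type": 4, "rank": 6}
print(describe_badge(row))  # Endless Challenge (Super Expert): Bronze
```

Using `dict.get` with a fallback is a design choice for this sketch: it keeps a long iteration from stopping on an unexpected value, at the cost of hiding such values unless the output is inspected.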

<!-- TODO create detailed statistics -->
<!--
## Dataset Statistics

The dataset contains 115M files and the sum of all the source code file sizes is 873 GB (note that the size of the dataset is larger due to the extra fields). A breakdown per language is given in the plot and table below:

|    | Language     |File Count| Size (GB)|
|---:|:-------------|---------:|-------:|
| 0 | Java | 19548190 | 107.70 |
| 1 | C | 14143113 | 183.83 |
| 2 | JavaScript | 11839883 | 87.82 |
| 3 | HTML | 11178557 | 118.12 |
| 4 | PHP | 11177610 | 61.41 |
| 5 | Markdown | 8464626 | 23.09 |
| 6 | C++ | 7380520 | 87.73 |
| 7 | Python | 7226626 | 52.03 |
| 8 | C# | 6811652 | 36.83 |
| 9 | Ruby | 4473331 | 10.95 |
| 10 | GO | 2265436 | 19.28 |
| 11 | TypeScript | 1940406 | 24.59 |
| 12 | CSS | 1734406 | 22.67 |
| 13 | Shell | 1385648 | 3.01 |
| 14 | Scala | 835755 | 3.87 |
| 15 | Makefile | 679430 | 2.92 |
| 16 | SQL | 656671 | 5.67 |
| 17 | Lua | 578554 | 2.81 |
| 18 | Perl | 497949 | 4.70 |
| 19 | Dockerfile | 366505 | 0.71 |
| 20 | Haskell | 340623 | 1.85 |
| 21 | Rust | 322431 | 2.68 |
| 22 | TeX | 251015 | 2.15 |
| 23 | Batchfile | 236945 | 0.70 |
| 24 | CMake | 175282 | 0.54 |
| 25 | Visual Basic | 155652 | 1.91 |
| 26 | FORTRAN | 142038 | 1.62 |
| 27 | PowerShell | 136846 | 0.69 |
| 28 | Assembly | 82905 | 0.78 |
| 29 | Julia | 58317 | 0.29 |
-->

## Dataset Creation

The dataset was created over a little more than a month in February 2022 using the self-hosted [Mario Maker 2 API](https://tgrcode.com/posts/mario_maker_2_api). Because requests made to Nintendo's servers require authentication, the process had to be done with the utmost care, limiting download speed so as not to overload the API and risk a ban. There are no plans to release an updated version of this dataset.

## Considerations for Using the Data

The dataset contains no harmful language or depictions.