Librarian Bot: Add language metadata for dataset

This pull request aims to enrich the metadata of your dataset by adding language metadata to the YAML block of your dataset card `README.md`.

How did we find this information?

- The librarian-bot downloaded a sample of rows from your dataset using the [dataset-server](https://huggingface.co/docs/datasets-server/) library.

- The librarian-bot used a language detection model to predict the likely language of your dataset. This was done on columns likely to contain text data.

- Predictions for rows are aggregated by language, and a filter is applied to remove languages that are predicted very infrequently.

- A confidence threshold is applied to remove languages that are not confidently predicted.
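The aggregation and filtering steps above can be sketched roughly as follows. This is only an illustration, not the bot's actual code: the function name `aggregate_language_predictions`, the input shape (one `(language, probability)` pair per row), and both threshold values are assumptions made for the example.

```python
from collections import defaultdict


def aggregate_language_predictions(
    predictions,
    min_frequency=0.1,          # assumed value, not taken from the bot
    min_mean_probability=0.8,   # assumed value, not taken from the bot
):
    """Aggregate per-row (language, probability) predictions.

    Returns a dict mapping each surviving language code to its mean
    predicted probability.
    """
    if not predictions:
        return {}

    # Group the predicted probabilities by language code.
    probs_by_lang = defaultdict(list)
    for lang, prob in predictions:
        probs_by_lang[lang].append(prob)

    total_rows = len(predictions)
    kept = {}
    for lang, probs in probs_by_lang.items():
        frequency = len(probs) / total_rows
        mean_prob = sum(probs) / len(probs)
        # Drop languages that are predicted rarely or with low confidence.
        if frequency >= min_frequency and mean_prob >= min_mean_probability:
            kept[lang] = mean_prob
    return kept
```

With these assumed thresholds, a sample in which nearly every row is predicted as `tr` with high probability would keep only `tr`, which matches the single language reported below.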

The following languages were detected with the following mean probabilities:

- Turkish (tr): 99.18%

If this PR is merged, the language metadata will be added to your dataset card. This will allow users to filter datasets by language on the [Hub](https://huggingface.co/datasets).

If the language metadata is incorrect, please feel free to close this PR.

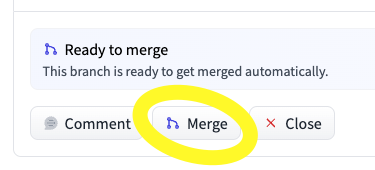

To merge this PR, you can use the merge button below the PR:

This PR comes courtesy of [Librarian Bot](https://huggingface.co/librarian-bots). If you have any feedback, queries, or need assistance, please don't hesitate to reach out to @davanstrien.

    @@ -1,3 +1,7 @@
    +---
    +language:
    +- tr
    +---
     This is the embedded version of [barandinho/wikipedia_tr](https://huggingface.co/datasets/barandinho/wikipedia_tr) the dataset was chunked (chunk_size=2048, chunk_overlap=256) and then put into an embedding model.\
     The embedding model used for this dataset is [sentence-transformers/distiluse-base-multilingual-cased-v1](https://huggingface.co/sentence-transformers/distiluse-base-multilingual-cased-v1) so you have to use it if you wanna do similarity search\
     It is one of the best embedding model for Turkish according to our tests.\
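The chunking parameters mentioned in the README lines of the diff (chunk_size=2048, chunk_overlap=256) can be illustrated with a simple sliding window. This is a sketch under stated assumptions: the dataset card does not say which splitter was used or whether sizes are measured in characters or tokens, so the character-based `chunk_text` helper below is hypothetical.

```python
def chunk_text(text: str, chunk_size: int = 2048, chunk_overlap: int = 256) -> list[str]:
    """Split text into overlapping character windows (hypothetical helper)."""
    if chunk_size <= 0:
        raise ValueError("chunk_size must be positive")
    if not 0 <= chunk_overlap < chunk_size:
        raise ValueError("chunk_overlap must be in [0, chunk_size)")

    # Each new chunk starts this many characters after the previous one,
    # so consecutive chunks share chunk_overlap characters.
    step = chunk_size - chunk_overlap
    chunks = []
    for start in range(0, len(text), step):
        chunks.append(text[start:start + chunk_size])
        # Stop once the current window already reaches the end of the text.
        if start + chunk_size >= len(text):
            break
    return chunks
```

Under these assumptions, each chunk repeats the last 256 characters of the previous one, so a sentence cut at a chunk boundary still appears whole in at least one chunk when it is shorter than the overlap.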