Upload README.md with huggingface_hub

<div align="center">

|

| 2 |

+

|

| 3 |

+

<a href="https://www.youtube.com/watch?v=jlMAX2Oaht0">

|

| 4 |

+

<img width=560 width=315 alt="Making TGI deployment optimal" src="https://huggingface.co/datasets/Narsil/tgi_assets/resolve/main/thumbnail.png">

|

| 5 |

+

</a>

|

| 6 |

+

|

| 7 |

+

# Text Generation Inference

|

| 8 |

+

|

| 9 |

+

<a href="https://github.com/huggingface/text-generation-inference">

|

| 10 |

+

<img alt="GitHub Repo stars" src="https://img.shields.io/github/stars/huggingface/text-generation-inference?style=social">

|

| 11 |

+

</a>

|

| 12 |

+

<a href="https://huggingface.github.io/text-generation-inference">

|

| 13 |

+

<img alt="Swagger API documentation" src="https://img.shields.io/badge/API-Swagger-informational">

|

| 14 |

+

</a>

|

| 15 |

+

|

| 16 |

+

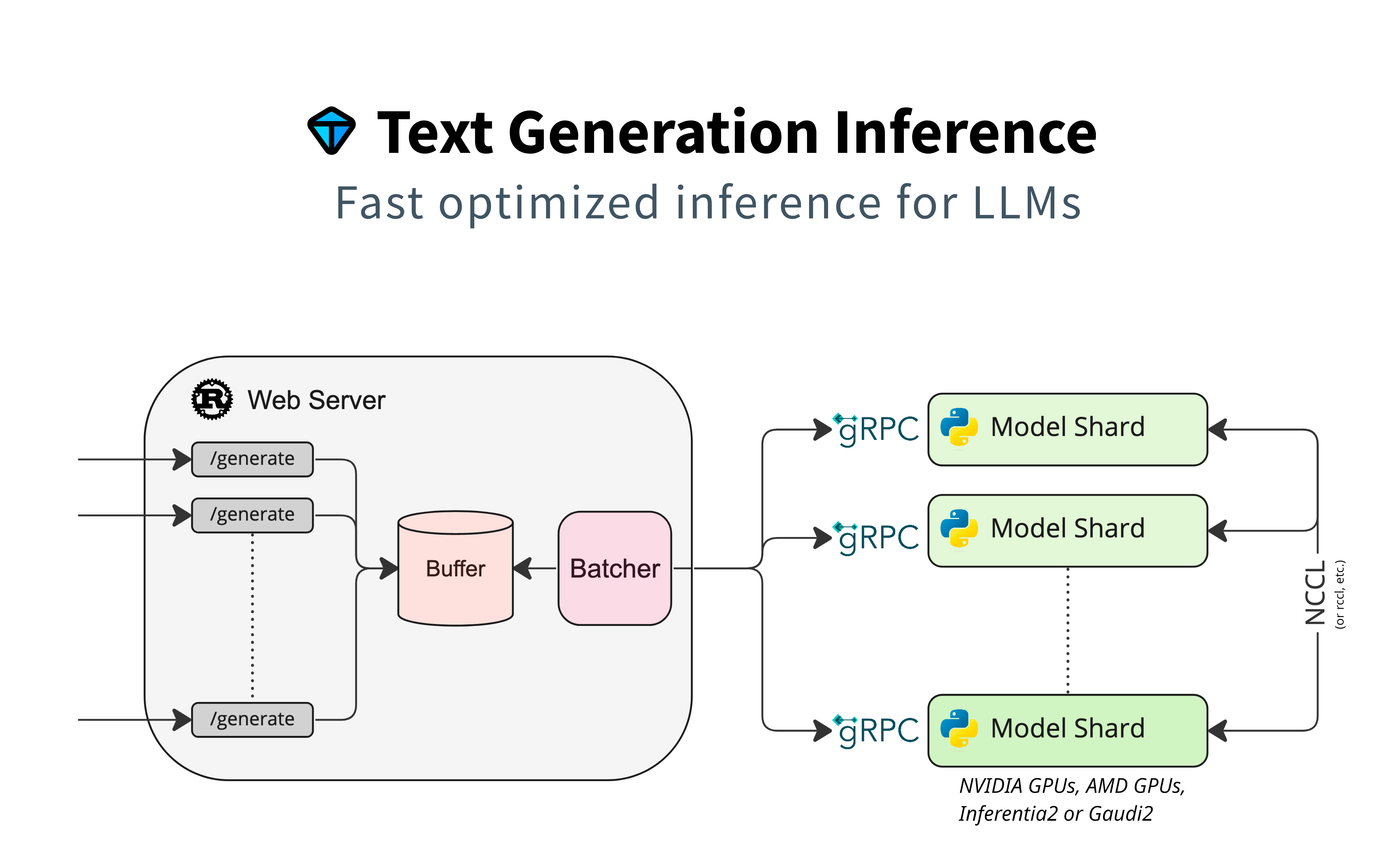

A Rust, Python and gRPC server for text generation inference. Used in production at [HuggingFace](https://huggingface.co)

|

| 17 |

+

to power Hugging Chat, the Inference API and Inference Endpoint.

|

| 18 |

+

|

| 19 |

+

</div>

## Table of contents

- [Get Started](#get-started)
  - [API Documentation](#api-documentation)
  - [Using a private or gated model](#using-a-private-or-gated-model)
  - [A note on Shared Memory](#a-note-on-shared-memory-shm)
  - [Distributed Tracing](#distributed-tracing)
  - [Local Install](#local-install)
- [Optimized architectures](#optimized-architectures)
- [Run locally](#run-locally)
  - [Run](#run)
  - [Quantization](#quantization)
- [Develop](#develop)
- [Testing](#testing)

Text Generation Inference (TGI) is a toolkit for deploying and serving Large Language Models (LLMs). TGI enables high-performance text generation for the most popular open-source LLMs, including Llama, Falcon, StarCoder, BLOOM, GPT-NeoX, and [more](https://huggingface.co/docs/text-generation-inference/supported_models). TGI implements many features, such as:

- Simple launcher to serve most popular LLMs
- Production ready (distributed tracing with OpenTelemetry, Prometheus metrics)
- Tensor Parallelism for faster inference on multiple GPUs
- Token streaming using Server-Sent Events (SSE)
- Continuous batching of incoming requests for increased total throughput
- Optimized transformers code for inference using [Flash Attention](https://github.com/HazyResearch/flash-attention) and [Paged Attention](https://github.com/vllm-project/vllm) on the most popular architectures
- Quantization with:
  - [bitsandbytes](https://github.com/TimDettmers/bitsandbytes)
  - [GPT-Q](https://arxiv.org/abs/2210.17323)
  - [EETQ](https://github.com/NetEase-FuXi/EETQ)
  - [AWQ](https://github.com/casper-hansen/AutoAWQ)
- [Safetensors](https://github.com/huggingface/safetensors) weight loading
- Watermarking with [A Watermark for Large Language Models](https://arxiv.org/abs/2301.10226)
- Logits warper (temperature scaling, top-p, top-k, repetition penalty; for more details, see [transformers.LogitsProcessor](https://huggingface.co/docs/transformers/internal/generation_utils#transformers.LogitsProcessor))
- Stop sequences
- Log probabilities
- [Speculation](https://huggingface.co/docs/text-generation-inference/conceptual/speculation) for roughly 2x lower latency
- [Guidance/JSON](https://huggingface.co/docs/text-generation-inference/conceptual/guidance): specify the output format to speed up inference and make sure the output is valid according to a given spec
- Custom Prompt Generation: Easily generate text by providing custom prompts to guide the model's output
- Fine-tuning Support: Utilize fine-tuned models for specific tasks to achieve higher accuracy and performance

### Hardware support

- [Nvidia](https://github.com/huggingface/text-generation-inference/pkgs/container/text-generation-inference)
- [AMD](https://github.com/huggingface/text-generation-inference/pkgs/container/text-generation-inference) (-rocm)
- [Inferentia](https://github.com/huggingface/optimum-neuron/tree/main/text-generation-inference)
- [Intel GPU](https://github.com/huggingface/text-generation-inference/pull/1475)
- [Gaudi](https://github.com/huggingface/tgi-gaudi)
- [Google TPU](https://huggingface.co/docs/optimum-tpu/howto/serving)

## Get Started

### Docker

For a detailed starting guide, please see the [Quick Tour](https://huggingface.co/docs/text-generation-inference/quicktour). The easiest way of getting started is using the official Docker container:

```shell
model=HuggingFaceH4/zephyr-7b-beta
volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run

docker run --gpus all --shm-size 1g -p 8080:80 -v $volume:/data ghcr.io/huggingface/text-generation-inference:2.0 --model-id $model
```

You can then make requests like this:

```bash
curl 127.0.0.1:8080/generate_stream \
    -X POST \
    -d '{"inputs":"What is Deep Learning?","parameters":{"max_new_tokens":20}}' \
    -H 'Content-Type: application/json'
```
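
The same streaming request can be issued from Python. The sketch below uses only the standard library and mirrors the curl call: it posts the JSON payload to `/generate_stream` and reads the Server-Sent Events line by line. The helper names are hypothetical, and the assumption that each event carries a `token` object with a `text` field should be checked against the `/docs` route of your server.

```python
import json
import urllib.request


def build_stream_request(prompt, max_new_tokens=20, base_url="http://127.0.0.1:8080"):
    """Build the same POST request as the curl example."""
    payload = {"inputs": prompt, "parameters": {"max_new_tokens": max_new_tokens}}
    return urllib.request.Request(
        f"{base_url}/generate_stream",
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )


def parse_sse_line(raw_line):
    """Return the decoded JSON event for a `data:` line, or None for any other line."""
    line = raw_line.decode("utf-8").strip()
    if not line.startswith("data:"):
        return None  # blank separators, comments, or other SSE fields
    return json.loads(line[len("data:"):])


def stream_tokens(prompt, **kwargs):
    """Yield token texts as the server emits them (needs a running server)."""
    with urllib.request.urlopen(build_stream_request(prompt, **kwargs)) as response:
        for raw_line in response:
            event = parse_sse_line(raw_line)
            # Assumed event shape: {"token": {"text": ...}, ...}
            if event and "token" in event:
                yield event["token"]["text"]
```

With a server listening on port 8080, `for text in stream_tokens("What is Deep Learning?"): print(text, end="", flush=True)` would print the tokens as they arrive.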

**Note:** To use NVIDIA GPUs, you need to install the [NVIDIA Container Toolkit](https://docs.nvidia.com/datacenter/cloud-native/container-toolkit/install-guide.html). We also recommend using NVIDIA drivers with CUDA version 12.2 or higher. To run the Docker container on a machine with no GPUs or CUDA support, remove the `--gpus all` flag and add `--disable-custom-kernels`. Note that CPU is not the intended platform for this project, so performance might be subpar.

**Note:** TGI supports AMD Instinct MI210 and MI250 GPUs. Details can be found in the [Supported Hardware documentation](https://huggingface.co/docs/text-generation-inference/supported_models#supported-hardware). To use AMD GPUs, use `docker run --device /dev/kfd --device /dev/dri --shm-size 1g -p 8080:80 -v $volume:/data ghcr.io/huggingface/text-generation-inference:2.0-rocm --model-id $model` instead of the command above.
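
The three variants described in this section (NVIDIA, AMD, and CPU-only) differ only in a few flags. As a rough sketch, a small Python helper can assemble the right `docker run` argument list. The function name and its `hardware` parameter are hypothetical; the flags and image tags are the ones quoted in this section.

```python
import shlex

IMAGE = "ghcr.io/huggingface/text-generation-inference"


def docker_run_args(model, volume, hardware="nvidia", tag="2.0", port=8080):
    """Return the `docker run` argument list for one of the documented setups."""
    if hardware not in ("nvidia", "amd", "cpu"):
        raise ValueError(f"unknown hardware: {hardware!r}")
    args = ["docker", "run"]
    if hardware == "nvidia":
        args += ["--gpus", "all"]
    elif hardware == "amd":
        args += ["--device", "/dev/kfd", "--device", "/dev/dri"]
        tag = f"{tag}-rocm"  # AMD uses the -rocm image variant
    args += ["--shm-size", "1g", "-p", f"{port}:80", "-v", f"{volume}:/data"]
    args += [f"{IMAGE}:{tag}", "--model-id", model]
    if hardware == "cpu":
        # No GPU flags; custom kernels are disabled as the note recommends.
        args.append("--disable-custom-kernels")
    return args
```

`shlex.join(docker_run_args(model, volume))` gives a string that can be pasted into a shell, and the list itself can be passed directly to `subprocess.run`.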

To see all options to serve your models (in the [code](https://github.com/huggingface/text-generation-inference/blob/main/launcher/src/main.rs) or in the CLI):

```shell
text-generation-launcher --help
```

### API documentation

You can consult the OpenAPI documentation of the `text-generation-inference` REST API using the `/docs` route.
The Swagger UI is also available at: [https://huggingface.github.io/text-generation-inference](https://huggingface.github.io/text-generation-inference).

### Using a private or gated model

You can use the `HUGGING_FACE_HUB_TOKEN` environment variable to configure the token used by
`text-generation-inference`. This gives you access to protected resources.

For example, if you want to serve the gated Llama 2 model variants:

1. Go to https://huggingface.co/settings/tokens
2. Copy your CLI READ token
3. Export `HUGGING_FACE_HUB_TOKEN=<your CLI READ token>`

or with Docker:

```shell
model=meta-llama/Llama-2-7b-chat-hf
volume=$PWD/data # share a volume with the Docker container to avoid downloading weights every run
token=<your cli READ token>

docker run --gpus all --shm-size 1g -e HUGGING_FACE_HUB_TOKEN=$token -p 8080:80 -v $volume:/data ghcr.io/huggingface/text-generation-inference:2.0 --model-id $model
```
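
When the container is started from a script, it can help to fail early if the token is missing rather than discover it when the gated download is refused. The sketch below is one hypothetical way to do that in Python. It relies on Docker's behaviour of forwarding a variable from the calling environment when `-e NAME` is given without a value, which keeps the token out of the command line.

```python
import os


def hub_token_docker_args(environ=os.environ):
    """Return the `-e` arguments that forward the Hub token, or raise if it is unset."""
    token = environ.get("HUGGING_FACE_HUB_TOKEN", "").strip()
    if not token:
        raise RuntimeError(
            "HUGGING_FACE_HUB_TOKEN is not set; private or gated models cannot be downloaded"
        )
    # Name only: Docker copies the value from the environment it is launched in.
    return ["-e", "HUGGING_FACE_HUB_TOKEN"]
```

The returned list can be spliced into the `docker run` arguments in place of `-e HUGGING_FACE_HUB_TOKEN=$token`.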

### A note on Shared Memory (shm)

[`NCCL`](https://docs.nvidia.com/deeplearning/nccl/user-guide/docs/index.html) is a communication framework used by
`PyTorch` to do distributed training/inference. `text-generation-inference` makes
use of `NCCL` to enable Tensor Parallelism to dramatically speed up inference for large language models.

In order to share data between the different devices of a `NCCL` group, `NCCL` might fall back to using the host memory if
peer-to-peer using NVLink or PCI is not possible.

To allow the container to use 1G of Shared Memory and support SHM sharing, we add `--shm-size 1g` to the commands above.

If you are running `text-generation-inference` inside `Kubernetes`, you can also add Shared Memory to the container by
creating a volume with:

```yaml
- name: shm
  emptyDir:
    medium: Memory
    sizeLimit: 1Gi
```

and mounting it to `/dev/shm`.

Finally, you can also disable SHM sharing by using the `NCCL_SHM_DISABLE=1` environment variable. However, note that
this will impact performance.
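
To confirm that a container or pod actually received the shared memory it was configured with, the size of the `/dev/shm` mount can be checked from inside it. This is a minimal sketch with a hypothetical helper name; the 1 GiB threshold simply mirrors the value used in this section.

```python
import shutil

ONE_GIB = 1 << 30


def shm_is_large_enough(path="/dev/shm", required_bytes=ONE_GIB):
    """Return True if the filesystem mounted at `path` has at least `required_bytes` in total."""
    return shutil.disk_usage(path).total >= required_bytes
```

Calling `shm_is_large_enough()` at startup and logging a warning when it returns `False` is one way to surface a forgotten `--shm-size` flag or volume mount.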

### Distributed Tracing

`text-generation-inference` is instrumented with distributed tracing using OpenTelemetry. You can use this feature
by setting the address of an OTLP collector with the `--otlp-endpoint` argument.

### Architecture

### Local install

You can also opt to install `text-generation-inference` locally.

First [install Rust](https://rustup.rs/) and create a Python virtual environment with at least
Python 3.9, e.g. using `conda`:

```shell
curl --proto '=https' --tlsv1.2 -sSf https://sh.rustup.rs | sh

conda create -n text-generation-inference python=3.11
conda activate text-generation-inference
```

You may also need to install Protoc.

On Linux:

```shell
PROTOC_ZIP=protoc-21.12-linux-x86_64.zip
curl -OL https://github.com/protocolbuffers/protobuf/releases/download/v21.12/$PROTOC_ZIP
sudo unzip -o $PROTOC_ZIP -d /usr/local bin/protoc
sudo unzip -o $PROTOC_ZIP -d /usr/local 'include/*'
rm -f $PROTOC_ZIP
```

On macOS, using Homebrew:

```shell
brew install protobuf
```

Then run:

```shell
BUILD_EXTENSIONS=True make install # Install repository and HF/transformer fork with CUDA kernels
text-generation-launcher --model-id mistralai/Mistral-7B-Instruct-v0.2
```

**Note:** on some machines, you may also need the OpenSSL libraries and gcc. On Linux machines, run:

```shell
sudo apt-get install libssl-dev gcc -y
```

## Optimized architectures

TGI works out of the box with optimized implementations of the modern architectures in [this list](https://huggingface.co/docs/text-generation-inference/supported_models).

Other architectures are supported on a best-effort basis using:

`AutoModelForCausalLM.from_pretrained(<model>, device_map="auto")`

or

`AutoModelForSeq2SeqLM.from_pretrained(<model>, device_map="auto")`

## Run locally

### Run

```shell
text-generation-launcher --model-id mistralai/Mistral-7B-Instruct-v0.2
```

### Quantization

You can also quantize the weights with bitsandbytes to reduce the VRAM requirement:

```shell
text-generation-launcher --model-id mistralai/Mistral-7B-Instruct-v0.2 --quantize bitsandbytes
```

4-bit quantization is available using the [NF4 and FP4 data types from bitsandbytes](https://arxiv.org/pdf/2305.14314.pdf). It can be enabled by providing `--quantize bitsandbytes-nf4` or `--quantize bitsandbytes-fp4` as a command line argument to `text-generation-launcher`.
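
When the launcher is driven from a script, the quantization mode can be validated before the process starts. The helper below is a hypothetical sketch that only knows the bitsandbytes modes discussed in this section. The plain `bitsandbytes` value is assumed to select the default 8-bit mode, and the launcher may accept further values listed by `text-generation-launcher --help`.

```python
# Modes discussed in this section; the launcher itself may accept others.
BITSANDBYTES_MODES = ("bitsandbytes", "bitsandbytes-nf4", "bitsandbytes-fp4")


def launcher_args(model_id, quantize=None):
    """Return the text-generation-launcher argument list, optionally with --quantize."""
    args = ["text-generation-launcher", "--model-id", model_id]
    if quantize is not None:
        if quantize not in BITSANDBYTES_MODES:
            raise ValueError(f"unsupported quantize mode in this sketch: {quantize!r}")
        args += ["--quantize", quantize]
    return args
```

For example, `launcher_args("mistralai/Mistral-7B-Instruct-v0.2", quantize="bitsandbytes-nf4")` yields a list that can be handed to `subprocess.run`.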

## Develop

```shell
make server-dev
make router-dev
```

## Testing

```shell
# python
make python-server-tests
make python-client-tests
# or both server and client tests
make python-tests
# rust cargo tests
make rust-tests
# integration tests
make integration-tests
```