Initial release

- .gitattributes +1 -0

- LICENSE +201 -0

- README.md +188 -0

- configs/inference.json +101 -0

- configs/inference_autoencoder.json +149 -0

- configs/logging.conf +21 -0

- configs/metadata.json +119 -0

- configs/multi_gpu_train_autoencoder.json +40 -0

- configs/multi_gpu_train_diffusion.json +16 -0

- configs/train_autoencoder.json +206 -0

- configs/train_diffusion.json +157 -0

- docs/README.md +181 -0

- docs/data_license.txt +49 -0

- models/model.pt +3 -0

- models/model_autoencoder.pt +3 -0

- models/model_autoencoder.ts +3 -0

- scripts/__init__.py +12 -0

- scripts/ldm_sampler.py +60 -0

- scripts/ldm_trainer.py +380 -0

- scripts/losses.py +50 -0

- scripts/utils.py +20 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

models/model_autoencoder.ts filter=lfs diff=lfs merge=lfs -text

|

LICENSE

ADDED

|

@@ -0,0 +1,201 @@

|

| 1 |

+

Apache License

|

| 2 |

+

Version 2.0, January 2004

|

| 3 |

+

http://www.apache.org/licenses/

|

| 4 |

+

|

| 5 |

+

TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

|

| 6 |

+

|

| 7 |

+

1. Definitions.

|

| 8 |

+

|

| 9 |

+

"License" shall mean the terms and conditions for use, reproduction,

|

| 10 |

+

and distribution as defined by Sections 1 through 9 of this document.

|

| 11 |

+

|

| 12 |

+

"Licensor" shall mean the copyright owner or entity authorized by

|

| 13 |

+

the copyright owner that is granting the License.

|

| 14 |

+

|

| 15 |

+

"Legal Entity" shall mean the union of the acting entity and all

|

| 16 |

+

other entities that control, are controlled by, or are under common

|

| 17 |

+

control with that entity. For the purposes of this definition,

|

| 18 |

+

"control" means (i) the power, direct or indirect, to cause the

|

| 19 |

+

direction or management of such entity, whether by contract or

|

| 20 |

+

otherwise, or (ii) ownership of fifty percent (50%) or more of the

|

| 21 |

+

outstanding shares, or (iii) beneficial ownership of such entity.

|

| 22 |

+

|

| 23 |

+

"You" (or "Your") shall mean an individual or Legal Entity

|

| 24 |

+

exercising permissions granted by this License.

|

| 25 |

+

|

| 26 |

+

"Source" form shall mean the preferred form for making modifications,

|

| 27 |

+

including but not limited to software source code, documentation

|

| 28 |

+

source, and configuration files.

|

| 29 |

+

|

| 30 |

+

"Object" form shall mean any form resulting from mechanical

|

| 31 |

+

transformation or translation of a Source form, including but

|

| 32 |

+

not limited to compiled object code, generated documentation,

|

| 33 |

+

and conversions to other media types.

|

| 34 |

+

|

| 35 |

+

"Work" shall mean the work of authorship, whether in Source or

|

| 36 |

+

Object form, made available under the License, as indicated by a

|

| 37 |

+

copyright notice that is included in or attached to the work

|

| 38 |

+

(an example is provided in the Appendix below).

|

| 39 |

+

|

| 40 |

+

"Derivative Works" shall mean any work, whether in Source or Object

|

| 41 |

+

form, that is based on (or derived from) the Work and for which the

|

| 42 |

+

editorial revisions, annotations, elaborations, or other modifications

|

| 43 |

+

represent, as a whole, an original work of authorship. For the purposes

|

| 44 |

+

of this License, Derivative Works shall not include works that remain

|

| 45 |

+

separable from, or merely link (or bind by name) to the interfaces of,

|

| 46 |

+

the Work and Derivative Works thereof.

|

| 47 |

+

|

| 48 |

+

"Contribution" shall mean any work of authorship, including

|

| 49 |

+

the original version of the Work and any modifications or additions

|

| 50 |

+

to that Work or Derivative Works thereof, that is intentionally

|

| 51 |

+

submitted to Licensor for inclusion in the Work by the copyright owner

|

| 52 |

+

or by an individual or Legal Entity authorized to submit on behalf of

|

| 53 |

+

the copyright owner. For the purposes of this definition, "submitted"

|

| 54 |

+

means any form of electronic, verbal, or written communication sent

|

| 55 |

+

to the Licensor or its representatives, including but not limited to

|

| 56 |

+

communication on electronic mailing lists, source code control systems,

|

| 57 |

+

and issue tracking systems that are managed by, or on behalf of, the

|

| 58 |

+

Licensor for the purpose of discussing and improving the Work, but

|

| 59 |

+

excluding communication that is conspicuously marked or otherwise

|

| 60 |

+

designated in writing by the copyright owner as "Not a Contribution."

|

| 61 |

+

|

| 62 |

+

"Contributor" shall mean Licensor and any individual or Legal Entity

|

| 63 |

+

on behalf of whom a Contribution has been received by Licensor and

|

| 64 |

+

subsequently incorporated within the Work.

|

| 65 |

+

|

| 66 |

+

2. Grant of Copyright License. Subject to the terms and conditions of

|

| 67 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 68 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 69 |

+

copyright license to reproduce, prepare Derivative Works of,

|

| 70 |

+

publicly display, publicly perform, sublicense, and distribute the

|

| 71 |

+

Work and such Derivative Works in Source or Object form.

|

| 72 |

+

|

| 73 |

+

3. Grant of Patent License. Subject to the terms and conditions of

|

| 74 |

+

this License, each Contributor hereby grants to You a perpetual,

|

| 75 |

+

worldwide, non-exclusive, no-charge, royalty-free, irrevocable

|

| 76 |

+

(except as stated in this section) patent license to make, have made,

|

| 77 |

+

use, offer to sell, sell, import, and otherwise transfer the Work,

|

| 78 |

+

where such license applies only to those patent claims licensable

|

| 79 |

+

by such Contributor that are necessarily infringed by their

|

| 80 |

+

Contribution(s) alone or by combination of their Contribution(s)

|

| 81 |

+

with the Work to which such Contribution(s) was submitted. If You

|

| 82 |

+

institute patent litigation against any entity (including a

|

| 83 |

+

cross-claim or counterclaim in a lawsuit) alleging that the Work

|

| 84 |

+

or a Contribution incorporated within the Work constitutes direct

|

| 85 |

+

or contributory patent infringement, then any patent licenses

|

| 86 |

+

granted to You under this License for that Work shall terminate

|

| 87 |

+

as of the date such litigation is filed.

|

| 88 |

+

|

| 89 |

+

4. Redistribution. You may reproduce and distribute copies of the

|

| 90 |

+

Work or Derivative Works thereof in any medium, with or without

|

| 91 |

+

modifications, and in Source or Object form, provided that You

|

| 92 |

+

meet the following conditions:

|

| 93 |

+

|

| 94 |

+

(a) You must give any other recipients of the Work or

|

| 95 |

+

Derivative Works a copy of this License; and

|

| 96 |

+

|

| 97 |

+

(b) You must cause any modified files to carry prominent notices

|

| 98 |

+

stating that You changed the files; and

|

| 99 |

+

|

| 100 |

+

(c) You must retain, in the Source form of any Derivative Works

|

| 101 |

+

that You distribute, all copyright, patent, trademark, and

|

| 102 |

+

attribution notices from the Source form of the Work,

|

| 103 |

+

excluding those notices that do not pertain to any part of

|

| 104 |

+

the Derivative Works; and

|

| 105 |

+

|

| 106 |

+

(d) If the Work includes a "NOTICE" text file as part of its

|

| 107 |

+

distribution, then any Derivative Works that You distribute must

|

| 108 |

+

include a readable copy of the attribution notices contained

|

| 109 |

+

within such NOTICE file, excluding those notices that do not

|

| 110 |

+

pertain to any part of the Derivative Works, in at least one

|

| 111 |

+

of the following places: within a NOTICE text file distributed

|

| 112 |

+

as part of the Derivative Works; within the Source form or

|

| 113 |

+

documentation, if provided along with the Derivative Works; or,

|

| 114 |

+

within a display generated by the Derivative Works, if and

|

| 115 |

+

wherever such third-party notices normally appear. The contents

|

| 116 |

+

of the NOTICE file are for informational purposes only and

|

| 117 |

+

do not modify the License. You may add Your own attribution

|

| 118 |

+

notices within Derivative Works that You distribute, alongside

|

| 119 |

+

or as an addendum to the NOTICE text from the Work, provided

|

| 120 |

+

that such additional attribution notices cannot be construed

|

| 121 |

+

as modifying the License.

|

| 122 |

+

|

| 123 |

+

You may add Your own copyright statement to Your modifications and

|

| 124 |

+

may provide additional or different license terms and conditions

|

| 125 |

+

for use, reproduction, or distribution of Your modifications, or

|

| 126 |

+

for any such Derivative Works as a whole, provided Your use,

|

| 127 |

+

reproduction, and distribution of the Work otherwise complies with

|

| 128 |

+

the conditions stated in this License.

|

| 129 |

+

|

| 130 |

+

5. Submission of Contributions. Unless You explicitly state otherwise,

|

| 131 |

+

any Contribution intentionally submitted for inclusion in the Work

|

| 132 |

+

by You to the Licensor shall be under the terms and conditions of

|

| 133 |

+

this License, without any additional terms or conditions.

|

| 134 |

+

Notwithstanding the above, nothing herein shall supersede or modify

|

| 135 |

+

the terms of any separate license agreement you may have executed

|

| 136 |

+

with Licensor regarding such Contributions.

|

| 137 |

+

|

| 138 |

+

6. Trademarks. This License does not grant permission to use the trade

|

| 139 |

+

names, trademarks, service marks, or product names of the Licensor,

|

| 140 |

+

except as required for reasonable and customary use in describing the

|

| 141 |

+

origin of the Work and reproducing the content of the NOTICE file.

|

| 142 |

+

|

| 143 |

+

7. Disclaimer of Warranty. Unless required by applicable law or

|

| 144 |

+

agreed to in writing, Licensor provides the Work (and each

|

| 145 |

+

Contributor provides its Contributions) on an "AS IS" BASIS,

|

| 146 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or

|

| 147 |

+

implied, including, without limitation, any warranties or conditions

|

| 148 |

+

of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A

|

| 149 |

+

PARTICULAR PURPOSE. You are solely responsible for determining the

|

| 150 |

+

appropriateness of using or redistributing the Work and assume any

|

| 151 |

+

risks associated with Your exercise of permissions under this License.

|

| 152 |

+

|

| 153 |

+

8. Limitation of Liability. In no event and under no legal theory,

|

| 154 |

+

whether in tort (including negligence), contract, or otherwise,

|

| 155 |

+

unless required by applicable law (such as deliberate and grossly

|

| 156 |

+

negligent acts) or agreed to in writing, shall any Contributor be

|

| 157 |

+

liable to You for damages, including any direct, indirect, special,

|

| 158 |

+

incidental, or consequential damages of any character arising as a

|

| 159 |

+

result of this License or out of the use or inability to use the

|

| 160 |

+

Work (including but not limited to damages for loss of goodwill,

|

| 161 |

+

work stoppage, computer failure or malfunction, or any and all

|

| 162 |

+

other commercial damages or losses), even if such Contributor

|

| 163 |

+

has been advised of the possibility of such damages.

|

| 164 |

+

|

| 165 |

+

9. Accepting Warranty or Additional Liability. While redistributing

|

| 166 |

+

the Work or Derivative Works thereof, You may choose to offer,

|

| 167 |

+

and charge a fee for, acceptance of support, warranty, indemnity,

|

| 168 |

+

or other liability obligations and/or rights consistent with this

|

| 169 |

+

License. However, in accepting such obligations, You may act only

|

| 170 |

+

on Your own behalf and on Your sole responsibility, not on behalf

|

| 171 |

+

of any other Contributor, and only if You agree to indemnify,

|

| 172 |

+

defend, and hold each Contributor harmless for any liability

|

| 173 |

+

incurred by, or claims asserted against, such Contributor by reason

|

| 174 |

+

of your accepting any such warranty or additional liability.

|

| 175 |

+

|

| 176 |

+

END OF TERMS AND CONDITIONS

|

| 177 |

+

|

| 178 |

+

APPENDIX: How to apply the Apache License to your work.

|

| 179 |

+

|

| 180 |

+

To apply the Apache License to your work, attach the following

|

| 181 |

+

boilerplate notice, with the fields enclosed by brackets "[]"

|

| 182 |

+

replaced with your own identifying information. (Don't include

|

| 183 |

+

the brackets!) The text should be enclosed in the appropriate

|

| 184 |

+

comment syntax for the file format. We also recommend that a

|

| 185 |

+

file or class name and description of purpose be included on the

|

| 186 |

+

same "printed page" as the copyright notice for easier

|

| 187 |

+

identification within third-party archives.

|

| 188 |

+

|

| 189 |

+

Copyright [yyyy] [name of copyright owner]

|

| 190 |

+

|

| 191 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 192 |

+

you may not use this file except in compliance with the License.

|

| 193 |

+

You may obtain a copy of the License at

|

| 194 |

+

|

| 195 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 196 |

+

|

| 197 |

+

Unless required by applicable law or agreed to in writing, software

|

| 198 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 199 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 200 |

+

See the License for the specific language governing permissions and

|

| 201 |

+

limitations under the License.

|

README.md

ADDED

|

@@ -0,0 +1,188 @@

|

| 1 |

+

---

|

| 2 |

+

tags:

|

| 3 |

+

- monai

|

| 4 |

+

- medical

|

| 5 |

+

library_name: monai

|

| 6 |

+

license: apache-2.0

|

| 7 |

+

---

|

| 8 |

+

# Model Overview

|

| 9 |

+

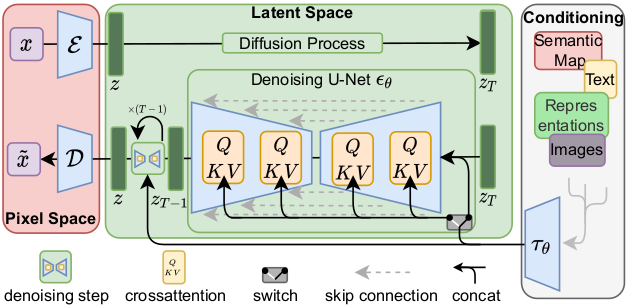

A pre-trained 3D latent diffusion generative model for volumetric (3D) BraTS MRI.

|

| 10 |

+

|

| 11 |

+

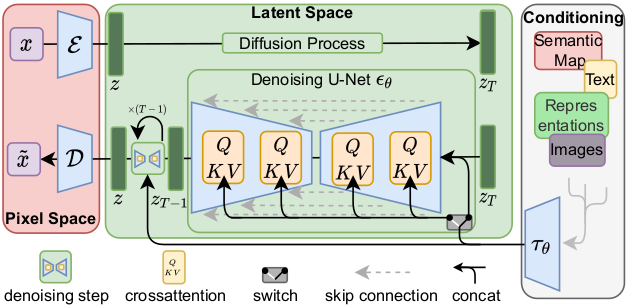

This model is trained on BraTS 2016 and 2017 data from [Medical Decathlon](http://medicaldecathlon.com/), using a latent diffusion model [1].

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

This model is a generator for creating images like the FLAIR MRIs in the BraTS 2016 and 2017 data. It was trained as a 3D latent diffusion model and takes Gaussian random noise as input to produce an image. The `train_autoencoder.json` file describes the training process of the variational autoencoder with GAN loss. The `train_diffusion.json` file describes the training process of the 3D latent diffusion model.

|

| 16 |

+

|

| 17 |

+

In this bundle, the autoencoder uses perceptual loss, which is based on ResNet50 with pre-trained weights (the network is frozen and is not trained in the bundle). By default, the `pretrained` parameter is set to `False` in `train_autoencoder.json`. To train correctly, you must change this default. There are two ways to use pretrained weights:

|

| 18 |

+

1. If `pretrained` is set to `True`, ImageNet pretrained weights from [torchvision](https://pytorch.org/vision/stable/_modules/torchvision/models/resnet.html#ResNet50_Weights) will be used. However, these weights are for non-commercial use only.

|

| 19 |

+

2. If `pretrained` is set to `True` and the `perceptual_loss_model_weights_path` parameter is specified, the weights are loaded from a local path. This is how this bundle was trained; its pre-trained weights come from internal data.

|

| 20 |

+

|

| 21 |

+

Please note that each user is responsible for checking the data source of the pre-trained models and the applicable licenses, and for determining whether they are suitable for the intended use.

|

| 22 |

+

|

| 23 |

+

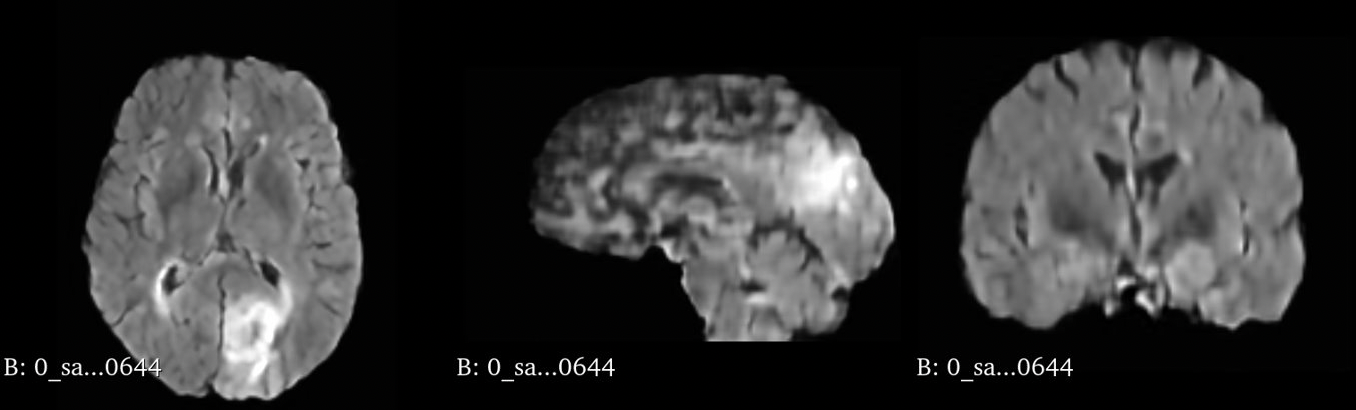

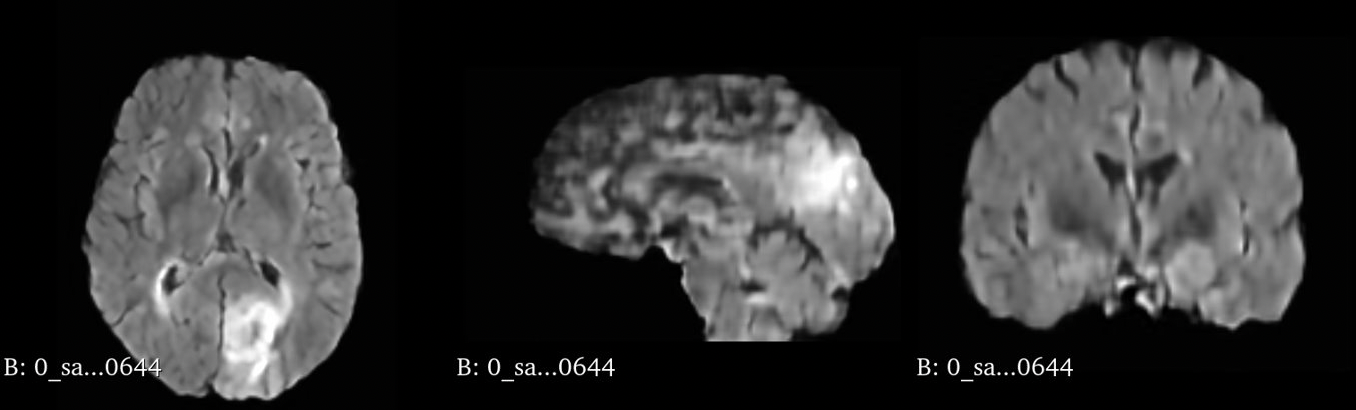

#### Example synthetic image

|

| 24 |

+

An example result from inference is shown below:

|

| 25 |

+

|

| 26 |

+

|

| 27 |

+

**This is a demonstration network meant only to show the training process for this sort of network with MONAI. To achieve better performance, users need a larger dataset such as [BraTS 2021](https://www.synapse.org/#!Synapse:syn25829067/wiki/610865) and a GPU with more than 32 GB of memory to enable larger networks and attention layers.**

|

| 28 |

+

|

| 29 |

+

## MONAI Generative Model Dependencies

|

| 30 |

+

[MONAI generative models](https://github.com/Project-MONAI/GenerativeModels) can be installed by

|

| 31 |

+

```

|

| 32 |

+

pip install lpips==0.1.4

|

| 33 |

+

git clone https://github.com/Project-MONAI/GenerativeModels.git

|

| 34 |

+

cd GenerativeModels/

|

| 35 |

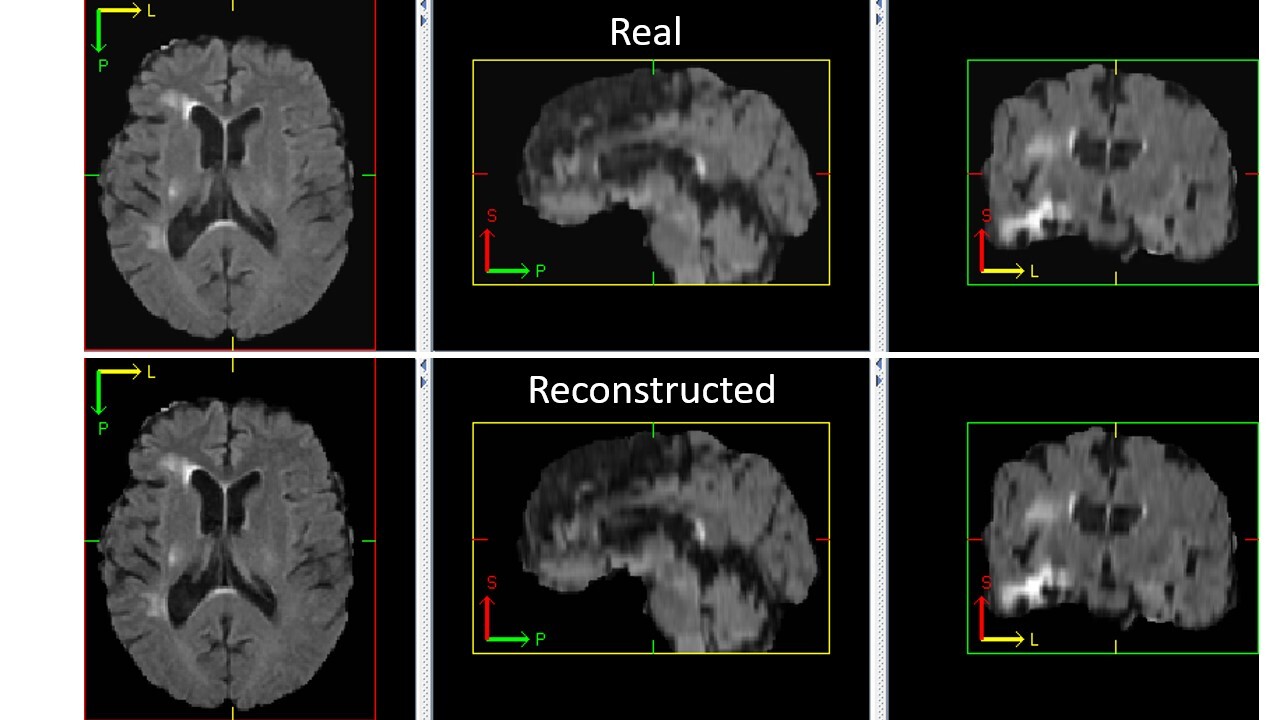

+

git checkout f969c24f88d013dc0045fb7b2885a01fb219992b

|

| 36 |

+

python setup.py install

|

| 37 |

+

cd ..

|

| 38 |

+

```

|

| 39 |

+

|

| 40 |

+

## Data

|

| 41 |

+

The training data is BraTS 2016 and 2017 from the Medical Segmentation Decathlon. Users can find more details on the dataset (`Task01_BrainTumour`) at http://medicaldecathlon.com/.

|

| 42 |

+

|

| 43 |

+

- Target: Image Generation

|

| 44 |

+

- Task: Synthesis

|

| 45 |

+

- Modality: MRI

|

| 46 |

+

- Size: 388 3D volumes (1 channel used)

|

| 47 |

+

|

| 48 |

+

## Training Configuration

|

| 49 |

+

If you have a GPU with less than 32 GB of memory, you may need to decrease the batch size when training. To do so, modify the `train_batch_size` parameter in the [configs/train_autoencoder.json](../configs/train_autoencoder.json) and [configs/train_diffusion.json](../configs/train_diffusion.json) configuration files.

|

| 50 |

+

|

| 51 |

+

### Training Configuration of Autoencoder

|

| 52 |

+

The autoencoder was trained using the following configuration:

|

| 53 |

+

|

| 54 |

+

- GPU: at least 32GB GPU memory

|

| 55 |

+

- Actual Model Input: 112 x 128 x 80

|

| 56 |

+

- AMP: False

|

| 57 |

+

- Optimizer: Adam

|

| 58 |

+

- Learning Rate: 1e-5

|

| 59 |

+

- Loss: L1 loss, perceptual loss, KL divergence loss, adversarial loss, GAN BCE loss

|

| 60 |

+

|

| 61 |

+

#### Input

|

| 62 |

+

1 channel 3D MRI Flair patches

|

| 63 |

+

|

| 64 |

+

#### Output

|

| 65 |

+

- 1 channel 3D MRI reconstructed patches

|

| 66 |

+

- 8 channel mean of latent features

|

| 67 |

+

- 8 channel standard deviation of latent features
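
The mean and standard deviation outputs listed above are the parameters of a diagonal Gaussian over the latent space. As a minimal sketch, assuming the usual variational-autoencoder formulation (the function names `sample_latent` and `kl_to_standard_normal` are illustrative and not part of the bundle), a latent can be drawn with the reparameterization trick and regularized with a KL term:

```python
import math
import random


def sample_latent(mean, std, rng=random):
    """Draw z = mean + std * eps with eps ~ N(0, 1), element by element."""
    return [m + s * rng.gauss(0.0, 1.0) for m, s in zip(mean, std)]


def kl_to_standard_normal(mean, std):
    """KL divergence between N(mean, std^2) and N(0, 1), summed over elements."""
    return 0.5 * sum(m * m + s * s - math.log(s * s) - 1.0 for m, s in zip(mean, std))


# Toy 8-element example standing in for the 8 latent channels at one voxel.
mean = [0.1] * 8
std = [1.0] * 8
z = sample_latent(mean, std, random.Random(0))
kl = kl_to_standard_normal(mean, std)
```

The KL term is zero only when the mean is 0 and the standard deviation is 1, which is what pushes the latent distribution toward a standard normal.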

|

| 68 |

+

|

| 69 |

+

### Training Configuration of Diffusion Model

|

| 70 |

+

The latent diffusion model was trained using the following configuration:

|

| 71 |

+

|

| 72 |

+

- GPU: at least 32GB GPU memory

|

| 73 |

+

- Actual Model Input: 36 x 44 x 28

|

| 74 |

+

- AMP: False

|

| 75 |

+

- Optimizer: Adam

|

| 76 |

+

- Learning Rate: 1e-5

|

| 77 |

+

- Loss: MSE loss
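
The diffusion model's input size follows from the autoencoder's downsampling. As a rough sketch, assuming the autoencoder halves each spatial dimension once per level except the last (a factor of 4 for three levels; this factor is an assumption, not something stated in this README), the latent size for a given image size can be estimated as follows. `latent_size` is a hypothetical helper.

```python
def latent_size(image_size, num_levels=3):
    """Estimate the latent spatial size, assuming 2x downsampling per level except the last."""
    factor = 2 ** (num_levels - 1)
    if any(dim % factor for dim in image_size):
        raise ValueError(f"each dimension must be divisible by {factor}")
    return tuple(dim // factor for dim in image_size)


# The autoencoder training patch size from this README maps to a smaller latent patch.
patch_latent = latent_size((112, 128, 80))
# Under the same assumption, a 144 x 176 x 112 volume would map to 36 x 44 x 28.
volume_latent = latent_size((144, 176, 112))
```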

|

| 78 |

+

|

| 79 |

+

#### Training Input

|

| 80 |

+

- 8 channel noisy latent features

|

| 81 |

+

- an int that indicates the time step

|

| 82 |

+

|

| 83 |

+

#### Training Output

|

| 84 |

+

8 channel predicted added noise
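
The training input and output above correspond to the standard noise-prediction objective. The following is a minimal sketch under that assumption, using plain Python lists in place of tensors; `add_noise` and `mse` are illustrative names, and `alpha_bar_t` stands for the cumulative signal fraction at the sampled time step.

```python
import math
import random


def add_noise(latent, noise, alpha_bar_t):
    """Forward diffusion: x_t = sqrt(alpha_bar_t) * x_0 + sqrt(1 - alpha_bar_t) * eps."""
    a = math.sqrt(alpha_bar_t)
    b = math.sqrt(1.0 - alpha_bar_t)
    return [a * x + b * e for x, e in zip(latent, noise)]


def mse(prediction, target):
    """Mean squared error between the predicted and the true noise."""
    return sum((p - t) ** 2 for p, t in zip(prediction, target)) / len(target)


rng = random.Random(0)
latent = [rng.gauss(0.0, 1.0) for _ in range(8)]   # stand-in for clean latent features
noise = [rng.gauss(0.0, 1.0) for _ in range(8)]    # the noise the network should predict
noisy = add_noise(latent, noise, alpha_bar_t=0.5)  # what the network receives with the time step
loss = mse(noise, noise)                           # a perfect prediction gives zero loss
```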

|

| 85 |

+

|

| 86 |

+

#### Inference Input

|

| 87 |

+

8 channel noise

|

| 88 |

+

|

| 89 |

+

#### Inference Output

|

| 90 |

+

8 channel denoised latent features

|

| 91 |

+

|

| 92 |

+

### Memory Consumption Warning

|

| 93 |

+

|

| 94 |

+

If you face memory issues with data loading, you can lower the caching rate `cache_rate` in the configurations within range [0, 1] to minimize the System RAM requirements.
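
As a rough illustration of the effect of `cache_rate`, assuming the dataset caches approximately `cache_rate` times the number of items (the helper below is hypothetical and ignores any other caching limits):

```python
def cached_items(num_items, cache_rate):
    """Number of items held in RAM for a given caching rate in [0, 1]."""
    if not 0.0 <= cache_rate <= 1.0:
        raise ValueError("cache_rate must be within [0, 1]")
    return int(num_items * cache_rate)


# With the 388 volumes listed under Data:
full = cached_items(388, 1.0)   # everything cached
half = cached_items(388, 0.5)   # about half as much RAM used for cached data
none = cached_items(388, 0.0)   # nothing cached, each item loaded on demand
```

Lower values trade RAM for repeated loading and preprocessing time in each epoch.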

|

| 95 |

+

|

| 96 |

+

## Performance

|

| 97 |

+

|

| 98 |

+

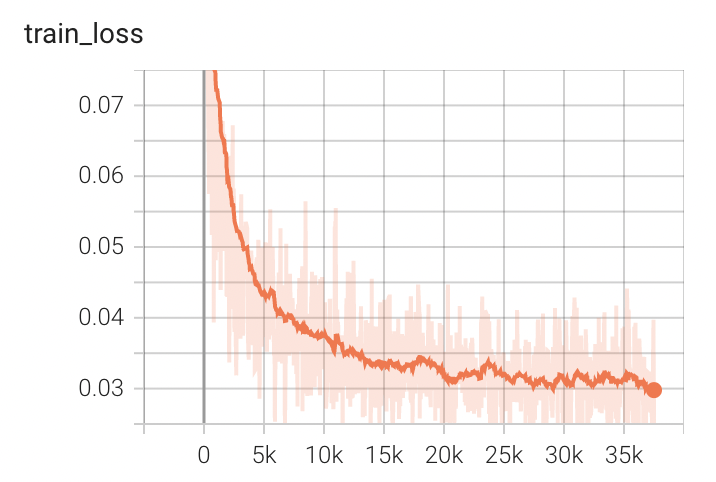

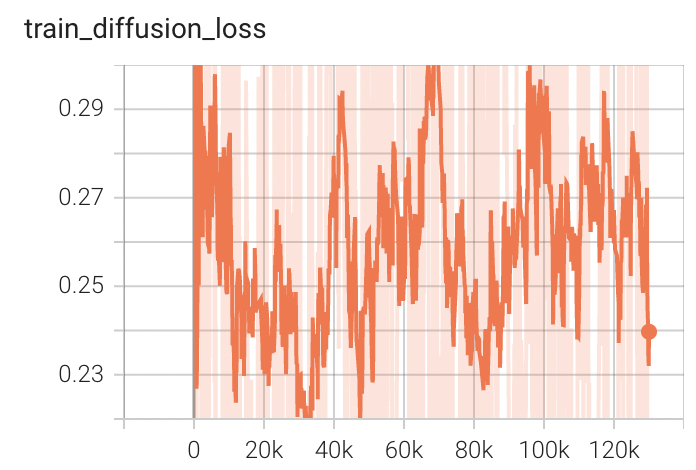

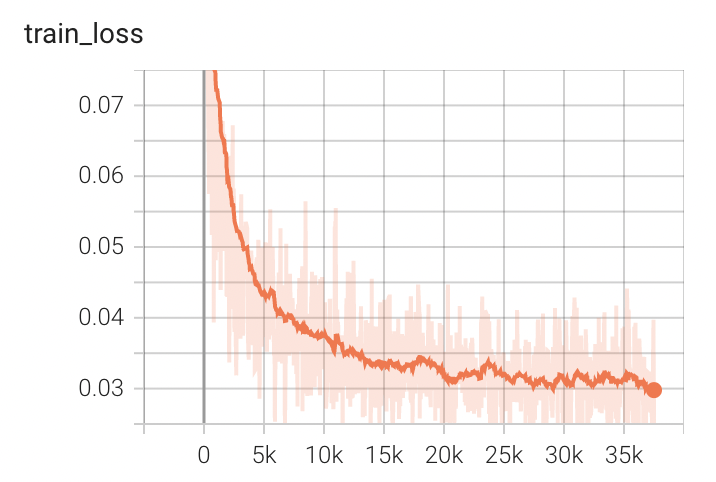

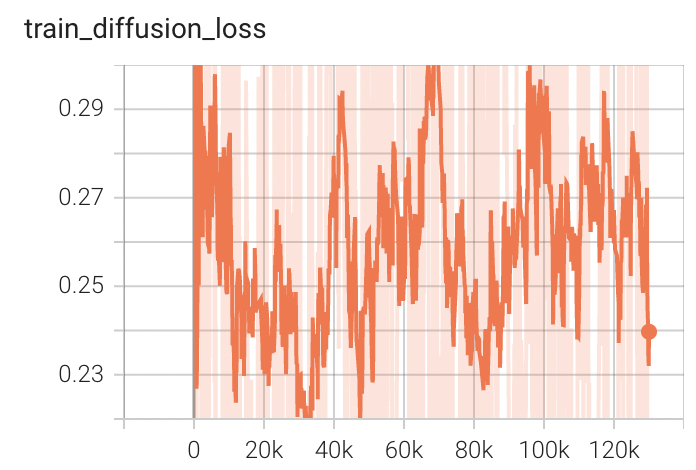

#### Training Loss

|

| 99 |

+

|

| 100 |

+

|

| 101 |

+

|

| 102 |

+

|

| 103 |

+

## MONAI Bundle Commands

|

| 104 |

+

|

| 105 |

+

In addition to the Pythonic APIs, a few command line interfaces (CLI) are provided to interact with the bundle. The CLI supports flexible use cases, such as overriding configs at runtime and predefining arguments in a file.

|

| 106 |

+

|

| 107 |

+

For more detailed usage instructions, visit the [MONAI Bundle Configuration Page](https://docs.monai.io/en/latest/config_syntax.html).

|

| 108 |

+

|

| 109 |

+

### Execute Autoencoder Training

|

| 110 |

+

|

| 111 |

+

#### Execute Autoencoder Training on single GPU

|

| 112 |

+

|

| 113 |

+

```

|

| 114 |

+

python -m monai.bundle run --config_file configs/train_autoencoder.json

|

| 115 |

+

```

|

| 116 |

+

|

| 117 |

+

Please note that if the default dataset path in the bundle config files has not been changed to the actual path (the directory that contains `Task01_BrainTumour`), you can override it with `--dataset_dir`:

|

| 118 |

+

|

| 119 |

+

```

|

| 120 |

+

python -m monai.bundle run --config_file configs/train_autoencoder.json --dataset_dir <actual dataset path>

|

| 121 |

+

```

|

| 122 |

+

|

| 123 |

+

#### Override the `train` config to execute multi-GPU training for Autoencoder

|

| 124 |

+

To train with multiple GPUs, use the following command, which requires scaling up the learning rate according to the number of GPUs.

|

| 125 |

+

|

| 126 |

+

```

|

| 127 |

+

torchrun --standalone --nnodes=1 --nproc_per_node=8 -m monai.bundle run --config_file "['configs/train_autoencoder.json','configs/multi_gpu_train_autoencoder.json']" --lr 8e-5

|

| 128 |

+

```
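
The `--lr 8e-5` value in the command above is consistent with multiplying the single-GPU learning rate of 1e-5 by the 8 processes. A minimal sketch, assuming linear scaling is what is intended (`scaled_lr` is a hypothetical helper):

```python
def scaled_lr(base_lr, num_gpus):
    """Linearly scale the single-GPU learning rate by the number of GPUs."""
    if num_gpus < 1:
        raise ValueError("num_gpus must be at least 1")
    return base_lr * num_gpus


lr = scaled_lr(1e-5, 8)
print(f"--lr {lr:g}")
```

If you change `--nproc_per_node`, adjust `--lr` in the same proportion.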

|

| 129 |

+

|

| 130 |

+

#### Check the Autoencoder Training result

|

| 131 |

+

The following command generates a reconstructed image from a random input image.

|

| 132 |

+

We can visualize it to see if the autoencoder is trained correctly.

|

| 133 |

+

```

|

| 134 |

+

python -m monai.bundle run --config_file configs/inference_autoencoder.json

|

| 135 |

+

```

|

| 136 |

+

|

| 137 |

+

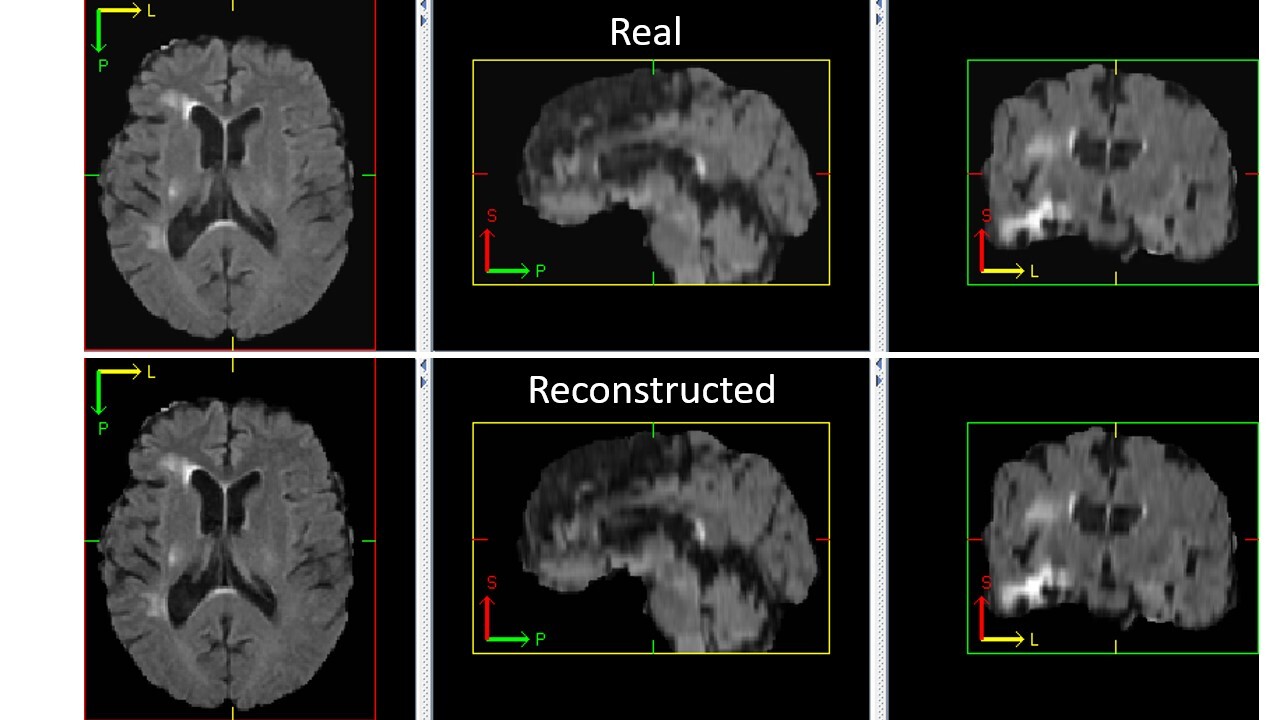

An example of a reconstructed image from inference is shown below. If the autoencoder is trained correctly, the reconstructed image should look similar to the original image.

|

| 138 |

+

|

| 139 |

+

|

| 140 |

+

|

| 141 |

+

|

| 142 |

+

### Execute Latent Diffusion Training

|

| 143 |

+

|

| 144 |

+

#### Execute Latent Diffusion Model Training on single GPU

|

| 145 |

+

After training the autoencoder, run the following command to train the latent diffusion model. This command will print out the scale factor of the latent feature space. If your autoencoder is well trained, this value should be close to 1.0.

|

| 146 |

+

|

| 147 |

+

```

|

| 148 |

+

python -m monai.bundle run --config_file "['configs/train_autoencoder.json','configs/train_diffusion.json']"

|

| 149 |

+

```
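
The scale factor printed by this command normalizes the latent features before diffusion training. The following is a minimal sketch, assuming the common convention from the latent diffusion paper [1] of using the reciprocal of the latent standard deviation. The bundle's own computation is defined in its training config and scripts, which are not reproduced here, and `latent_scale_factor` is a hypothetical name.

```python
import random
import statistics


def latent_scale_factor(latent_values):
    """Return 1 / std of the latent values, so the scaled latents have unit std."""
    return 1.0 / statistics.pstdev(latent_values)


rng = random.Random(0)
# Stand-in latents drawn from N(0, 1); a well-regularized autoencoder should
# produce latents with a standard deviation near 1.
latents = [rng.gauss(0.0, 1.0) for _ in range(10_000)]
scale = latent_scale_factor(latents)
```

Under this convention, a value far from 1.0 suggests the latent distribution has drifted away from a standard normal.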

|

| 150 |

+

|

| 151 |

+

#### Override the `train` config to execute multi-GPU training for Latent Diffusion Model

|

| 152 |

+

To train with multiple GPUs, use the following command, which requires scaling up the learning rate according to the number of GPUs.

|

| 153 |

+

|

| 154 |

+

```

|

| 155 |

+

torchrun --standalone --nnodes=1 --nproc_per_node=8 -m monai.bundle run --config_file "['configs/train_autoencoder.json','configs/train_diffusion.json','configs/multi_gpu_train_autoencoder.json','configs/multi_gpu_train_diffusion.json']" --lr 8e-5

|

| 156 |

+

```
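
When several config files are listed, as in the command above, later files can override entries from earlier ones, and command-line arguments such as `--lr` override both. The sketch below illustrates that precedence with plain dictionaries. It assumes simple top-level key replacement, and the keys and values are made up for illustration rather than taken from the bundle's configs.

```python
def merge_configs(config_files, **cli_overrides):
    """Merge configs in order, then apply command-line overrides last."""
    merged = {}
    for config in config_files:
        merged.update(config)
    merged.update(cli_overrides)
    return merged


# Hypothetical stand-ins for a base config and a multi-GPU config.
base = {"lr": 1e-5, "device": "cuda:0", "train_batch_size": 2}
multi_gpu = {"device": "cuda:local_rank"}

final = merge_configs([base, multi_gpu], lr=8e-5)
```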

|

| 157 |

+

|

| 158 |

+

#### Execute inference

|

| 159 |

+

The following command generates a synthetic image from randomly sampled noise.

|

| 160 |

+

```

|

| 161 |

+

python -m monai.bundle run --config_file configs/inference.json

|

| 162 |

+

```
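
`configs/inference.json` in this commit configures a DDIM scheduler with 1000 training steps, a `scaled_linear` beta schedule from 0.0015 to 0.0195, and 50 inference steps. The sketch below shows what those numbers mean, assuming the common definition of `scaled_linear` (linear in the square root of beta) and evenly spaced inference steps. It is an illustration in plain Python, not the scheduler's actual code.

```python
import math


def scaled_linear_betas(beta_start, beta_end, num_train_timesteps):
    """Betas spaced linearly in sqrt(beta), then squared."""
    lo, hi = math.sqrt(beta_start), math.sqrt(beta_end)
    step = (hi - lo) / (num_train_timesteps - 1)
    return [(lo + i * step) ** 2 for i in range(num_train_timesteps)]


def inference_timesteps(num_train_timesteps, num_inference_steps):
    """Evenly spaced time steps, visited from most noisy to least noisy."""
    ratio = num_train_timesteps // num_inference_steps
    return [i * ratio for i in reversed(range(num_inference_steps))]


betas = scaled_linear_betas(0.0015, 0.0195, 1000)
timesteps = inference_timesteps(1000, 50)

# Cumulative product of (1 - beta): the fraction of signal left at each step.
alphas_cumprod = []
running = 1.0
for beta in betas:
    running *= 1.0 - beta
    alphas_cumprod.append(running)
```

Sampling with 50 steps instead of 1000 means the denoising network is called 50 times per generated volume.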

|

| 163 |

+

|

| 164 |

+

#### Export checkpoint to TorchScript file

|

| 165 |

+

|

| 166 |

+

The Autoencoder can be exported into a TorchScript file.

|

| 167 |

+

|

| 168 |

+

```

|

| 169 |

+

python -m monai.bundle ckpt_export autoencoder_def --filepath models/model_autoencoder.ts --ckpt_file models/model_autoencoder.pt --meta_file configs/metadata.json --config_file configs/inference.json

|

| 170 |

+

```

|

| 171 |

+

|

| 172 |

+

# References

|

| 173 |

+

[1] Rombach, Robin, et al. "High-resolution image synthesis with latent diffusion models." Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition. 2022. https://openaccess.thecvf.com/content/CVPR2022/papers/Rombach_High-Resolution_Image_Synthesis_With_Latent_Diffusion_Models_CVPR_2022_paper.pdf

|

| 174 |

+

|

| 175 |

+

# License

|

| 176 |

+

Copyright (c) MONAI Consortium

|

| 177 |

+

|

| 178 |

+

Licensed under the Apache License, Version 2.0 (the "License");

|

| 179 |

+

you may not use this file except in compliance with the License.

|

| 180 |

+

You may obtain a copy of the License at

|

| 181 |

+

|

| 182 |

+

http://www.apache.org/licenses/LICENSE-2.0

|

| 183 |

+

|

| 184 |

+

Unless required by applicable law or agreed to in writing, software

|

| 185 |

+

distributed under the License is distributed on an "AS IS" BASIS,

|

| 186 |

+

WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.

|

| 187 |

+

See the License for the specific language governing permissions and

|

| 188 |

+

limitations under the License.

|

configs/inference.json

ADDED

|

@@ -0,0 +1,101 @@

+{
+    "imports": [
+        "$import torch",
+        "$from datetime import datetime",
+        "$from pathlib import Path"
+    ],
+    "bundle_root": ".",
+    "model_dir": "$@bundle_root + '/models'",
+    "output_dir": "$@bundle_root + '/output'",
+    "create_output_dir": "$Path(@output_dir).mkdir(exist_ok=True)",
+    "device": "$torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')",
+    "output_postfix": "$datetime.now().strftime('sample_%Y%m%d_%H%M%S')",
+    "spatial_dims": 3,
+    "image_channels": 1,
+    "latent_channels": 8,
+    "latent_shape": [
+        8,
+        36,
+        44,
+        28
+    ],
+    "autoencoder_def": {
+        "_target_": "generative.networks.nets.AutoencoderKL",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@image_channels",
+        "out_channels": "@image_channels",
+        "latent_channels": "@latent_channels",
+        "num_channels": [
+            64,
+            128,
+            256
+        ],
+        "num_res_blocks": 2,
+        "norm_num_groups": 32,
+        "norm_eps": 1e-06,
+        "attention_levels": [
+            false,
+            false,
+            false
+        ],
+        "with_encoder_nonlocal_attn": false,
+        "with_decoder_nonlocal_attn": false
+    },
+    "network_def": {
+        "_target_": "generative.networks.nets.DiffusionModelUNet",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@latent_channels",
+        "out_channels": "@latent_channels",
+        "num_channels": [
+            256,
+            256,
+            512
+        ],
+        "attention_levels": [
+            false,
+            true,
+            true
+        ],
+        "num_head_channels": [
+            0,
+            64,
+            64
+        ],
+        "num_res_blocks": 2
+    },
+    "load_autoencoder_path": "$@bundle_root + '/models/model_autoencoder.pt'",
+    "load_autoencoder": "$@autoencoder_def.load_state_dict(torch.load(@load_autoencoder_path))",
+    "autoencoder": "$@autoencoder_def.to(@device)",
+    "load_diffusion_path": "$@model_dir + '/model.pt'",
+    "load_diffusion": "$@network_def.load_state_dict(torch.load(@load_diffusion_path))",
+    "diffusion": "$@network_def.to(@device)",
+    "noise_scheduler": {
+        "_target_": "generative.networks.schedulers.DDIMScheduler",
+        "_requires_": [
+            "@load_diffusion",
+            "@load_autoencoder"
+        ],
+        "num_train_timesteps": 1000,
+        "beta_start": 0.0015,
+        "beta_end": 0.0195,
+        "beta_schedule": "scaled_linear",
+        "clip_sample": false
+    },
+    "noise": "$torch.randn([1]+@latent_shape).to(@device)",
+    "set_timesteps": "$@noise_scheduler.set_timesteps(num_inference_steps=50)",
+    "inferer": {
+        "_target_": "scripts.ldm_sampler.LDMSampler",
+        "_requires_": "@set_timesteps"
+    },
+    "sample": "$@inferer.sampling_fn(@noise, @autoencoder, @diffusion, @noise_scheduler)",
+    "saver": {
+        "_target_": "SaveImage",
+        "_requires_": "@create_output_dir",
+        "output_dir": "@output_dir",
+        "output_postfix": "@output_postfix"
+    },
+    "generated_image": "$@sample",
+    "run": [
+        "$@saver(@generated_image[0])"
+    ]
+}
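
The `noise_scheduler` entry in configs/inference.json asks for a `scaled_linear` beta schedule running from `beta_start` 0.0015 to `beta_end` 0.0195 over 1000 training timesteps. The following is a minimal pure-Python sketch of what such a schedule looks like, assuming the common definition of `scaled_linear` (interpolate linearly in sqrt(beta), then square). It is not taken from the bundle's scheduler code, and the helper names `scaled_linear_betas` and `alphas_cumprod` are hypothetical.

```python
import math


def scaled_linear_betas(beta_start: float, beta_end: float, num_steps: int) -> list[float]:
    """Interpolate linearly between sqrt(beta_start) and sqrt(beta_end), then square.

    Assumed definition of "scaled_linear"; hypothetical helper, not bundle code.
    """
    lo, hi = math.sqrt(beta_start), math.sqrt(beta_end)
    if num_steps == 1:
        return [beta_start]
    step = (hi - lo) / (num_steps - 1)
    return [(lo + i * step) ** 2 for i in range(num_steps)]


def alphas_cumprod(betas: list[float]) -> list[float]:
    """Running product of (1 - beta_t): the share of signal variance kept at step t."""
    out, running = [], 1.0
    for beta in betas:
        running *= 1.0 - beta
        out.append(running)
    return out


# Values copied from the "noise_scheduler" entry of configs/inference.json.
betas = scaled_linear_betas(0.0015, 0.0195, 1000)
signal = alphas_cumprod(betas)
print(f"beta[0]={betas[0]:.4f}  beta[-1]={betas[-1]:.4f}  alpha_bar[-1]={signal[-1]:.2e}")
```

The cumulative product shrinks towards zero by the last timestep, which is why sampling can start from pure Gaussian noise, as the `noise` entry does.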

configs/inference_autoencoder.json

ADDED

|

@@ -0,0 +1,149 @@

+{
+    "imports": [
+        "$import torch",
+        "$from datetime import datetime",
+        "$from pathlib import Path"
+    ],
+    "bundle_root": ".",
+    "model_dir": "$@bundle_root + '/models'",
+    "dataset_dir": "@bundle_root",
+    "output_dir": "$@bundle_root + '/output'",
+    "create_output_dir": "$Path(@output_dir).mkdir(exist_ok=True)",
+    "device": "$torch.device('cuda:0' if torch.cuda.is_available() else 'cpu')",
+    "output_orig_postfix": "orig",
+    "output_recon_postfix": "recon",
+    "channel": 0,
+    "spacing": [
+        1.1,
+        1.1,
+        1.1
+    ],
+    "spatial_dims": 3,
+    "image_channels": 1,
+    "latent_channels": 8,
+    "infer_patch_size": [
+        144,
+        176,
+        112
+    ],
+    "autoencoder_def": {
+        "_target_": "generative.networks.nets.AutoencoderKL",
+        "spatial_dims": "@spatial_dims",
+        "in_channels": "@image_channels",
+        "out_channels": "@image_channels",
+        "latent_channels": "@latent_channels",
+        "num_channels": [
+            64,
+            128,
+            256
+        ],
+        "num_res_blocks": 2,
+        "norm_num_groups": 32,
+        "norm_eps": 1e-06,
+        "attention_levels": [
+            false,
+            false,
+            false
+        ],
+        "with_encoder_nonlocal_attn": false,
+        "with_decoder_nonlocal_attn": false
+    },
+    "load_autoencoder_path": "$@bundle_root + '/models/model_autoencoder.pt'",
+    "load_autoencoder": "$@autoencoder_def.load_state_dict(torch.load(@load_autoencoder_path))",
+    "autoencoder": "$@autoencoder_def.to(@device)",
+    "preprocessing_transforms": [
+        {
+            "_target_": "LoadImaged",
+            "keys": "image"
+        },
+        {
+            "_target_": "EnsureChannelFirstd",
+            "keys": "image"
+        },
+        {
+            "_target_": "Lambdad",
+            "keys": "image",
+            "func": "$lambda x: x[@channel, :, :, :]"
+        },
+        {
+            "_target_": "AddChanneld",
+            "keys": "image"
+        },
+        {
+            "_target_": "EnsureTyped",
+            "keys": "image"
+        },
+        {
+            "_target_": "Orientationd",
+            "keys": "image",
+            "axcodes": "RAS"
+        },
+        {
+            "_target_": "Spacingd",
+            "keys": "image",
+            "pixdim": "@spacing",
+            "mode": "bilinear"
+        }
+    ],
+    "crop_transforms": [
+        {
+            "_target_": "CenterSpatialCropd",
+            "keys": "image",
+            "roi_size": "@infer_patch_size"
+        }
+    ],
+    "final_transforms": [
+        {
+            "_target_": "ScaleIntensityRangePercentilesd",
+            "keys": "image",
+            "lower": 0,
+            "upper": 99.5,
+            "b_min": 0,
+            "b_max": 1
+        }
+    ],
+    "preprocessing": {
+        "_target_": "Compose",
+        "transforms": "$@preprocessing_transforms + @crop_transforms + @final_transforms"
+    },
+    "dataset": {
+        "_target_": "monai.apps.DecathlonDataset",
+        "root_dir": "@dataset_dir",
+        "task": "Task01_BrainTumour",
+        "section": "validation",
+        "cache_rate": 0.0,
+        "num_workers": 8,
+        "download": false,
+        "transform": "@preprocessing"
+    },
+    "dataloader": {
+        "_target_": "DataLoader",
+        "dataset": "@dataset",
+        "batch_size": 1,
+        "shuffle": true,
+        "num_workers": 0
+    },
+    "saver_orig": {
+        "_target_": "SaveImage",
+        "_requires_": "@create_output_dir",
+        "output_dir": "@output_dir",
+        "output_postfix": "@output_orig_postfix",
+        "resample": false,
+        "padding_mode": "zeros"
+    },
+    "saver_recon": {
+        "_target_": "SaveImage",
+        "_requires_": "@create_output_dir",
+        "output_dir": "@output_dir",
+        "output_postfix": "@output_recon_postfix",
+        "resample": false,
+        "padding_mode": "zeros"
+    },
+    "input_img": "$monai.utils.first(@dataloader)['image'].to(@device)",
+    "recon_img": "$@autoencoder(@input_img)[0][0]",
+    "run": [
+        "$@load_autoencoder",
+        "$@saver_orig(@input_img[0][0])",
+        "$@saver_recon(@recon_img)"
+    ]
+}
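
The `final_transforms` entry in configs/inference_autoencoder.json rescales intensities so that the 0th percentile maps to `b_min` 0 and the 99.5th percentile maps to `b_max` 1. The arithmetic can be sketched in pure Python as below, assuming linear-interpolation percentiles and no clipping (the config does not set a `clip` argument). This is an illustration, not the MONAI implementation, and the helper names `percentile` and `scale_by_percentiles` are hypothetical.

```python
def percentile(values: list[float], q: float) -> float:
    """q-th percentile (0-100) with linear interpolation between neighbouring ranks."""
    ordered = sorted(values)
    pos = (len(ordered) - 1) * q / 100.0
    lower = int(pos)
    upper = min(lower + 1, len(ordered) - 1)
    frac = pos - lower
    return ordered[lower] * (1.0 - frac) + ordered[upper] * frac


def scale_by_percentiles(values, lower=0.0, upper=99.5, b_min=0.0, b_max=1.0):
    """Map the [lower, upper] percentile range of `values` linearly onto [b_min, b_max].

    Hypothetical helper; values outside the percentile range are not clipped.
    """
    a_min, a_max = percentile(values, lower), percentile(values, upper)
    if a_max == a_min:
        return [b_min for _ in values]  # constant input: nothing to scale
    scale = (b_max - b_min) / (a_max - a_min)
    return [(v - a_min) * scale + b_min for v in values]


voxels = [float(v) for v in range(201)]  # toy intensities 0..200
scaled = scale_by_percentiles(voxels)
print(scaled[0], scaled[199], scaled[200])
```

In this toy input the 99.5th percentile is 199, so the single brighter voxel ends up slightly above 1. Using a high percentile instead of the maximum keeps a few very bright voxels from compressing the rest of the intensity range.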

configs/logging.conf

ADDED

|

@@ -0,0 +1,21 @@

+[loggers]
+keys=root
+
+[handlers]
+keys=consoleHandler
+
+[formatters]
+keys=fullFormatter
+
+[logger_root]
+level=INFO
+handlers=consoleHandler
+
+[handler_consoleHandler]
+class=StreamHandler
+level=INFO
+formatter=fullFormatter
+args=(sys.stdout,)
+
+[formatter_fullFormatter]
+format=%(asctime)s - %(name)s - %(levelname)s - %(message)s
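
configs/logging.conf uses the standard library's `fileConfig` format: one root logger at INFO level, one console handler writing to stdout, and one formatter. A minimal sketch of loading it with `logging.config.fileConfig` follows. The helper name `configure_logging` and the temporary file are only for illustration; how the bundle itself loads the file is not shown in this commit.

```python
import logging
import logging.config
import tempfile
from pathlib import Path

# Same content as configs/logging.conf in this commit.
LOGGING_CONF = """\
[loggers]
keys=root

[handlers]
keys=consoleHandler

[formatters]
keys=fullFormatter

[logger_root]
level=INFO
handlers=consoleHandler

[handler_consoleHandler]
class=StreamHandler
level=INFO
formatter=fullFormatter
args=(sys.stdout,)

[formatter_fullFormatter]
format=%(asctime)s - %(name)s - %(levelname)s - %(message)s
"""


def configure_logging(conf_text: str) -> logging.Logger:
    """Write the config to a temporary file, apply it, and return the root logger."""
    with tempfile.TemporaryDirectory() as tmp:
        conf_path = Path(tmp) / "logging.conf"
        conf_path.write_text(conf_text)
        # Keep loggers created earlier alive instead of disabling them.
        logging.config.fileConfig(str(conf_path), disable_existing_loggers=False)
    return logging.getLogger()


root = configure_logging(LOGGING_CONF)
root.info("bundle logging configured")
# Child loggers inherit the root level, so DEBUG records are dropped.
logging.getLogger("scripts.ldm_trainer").debug("not shown: below INFO")
```

Passing `disable_existing_loggers=False` is a choice made for this sketch; the default of `True` would disable loggers created before the call.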

configs/metadata.json

ADDED

|

@@ -0,0 +1,119 @@

+{
+    "schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_generator_ldm_20230507.json",
+    "version": "1.0.0",
+    "changelog": {
+        "1.0.0": "Initial release"
+    },
+    "monai_version": "1.2.0rc5",
+    "pytorch_version": "1.13.1",
+    "numpy_version": "1.22.2",
+    "optional_packages_version": {
+        "nibabel": "5.1.0",
+        "lpips": "0.1.4"
+    },
+    "name": "BraTS MRI image latent diffusion generation",
+    "task": "BraTS MRI image synthesis",
+    "description": "A generative model for creating 3D brain MRI from Gaussian noise based on BraTS dataset",
+    "authors": "MONAI team",
+    "copyright": "Copyright (c) MONAI Consortium",
+    "data_source": "http://medicaldecathlon.com/",
+    "data_type": "nibabel",
+    "image_classes": "Flair brain MRI with 1.1x1.1x1.1 mm voxel size",
+    "eval_metrics": {},
+    "intended_use": "This is a research tool/prototype and not to be used clinically",
+    "references": [],
+    "autoencoder_data_format": {
+        "inputs": {
+            "image": {
+                "type": "image",
+                "format": "image",
+                "num_channels": 1,
+                "spatial_shape": [
+                    112,
+                    128,
+                    80
+                ],
+                "dtype": "float32",
+                "value_range": [
+                    0,
+                    1
+                ],
+                "is_patch_data": true
+            }
+        },
+        "outputs": {
+            "pred": {
+                "type": "image",
+                "format": "image",
+                "num_channels": 1,
+                "spatial_shape": [
+                    112,
+                    128,
+                    80
+                ],
+                "dtype": "float32",
+                "value_range": [
+                    0,
+                    1
+                ],
+                "is_patch_data": true,
+                "channel_def": {
+                    "0": "image"
+                }
+            }
+        }
+    },
+    "generator_data_format": {
+        "inputs": {
+            "latent": {
+                "type": "noise",
+                "format": "image",
+                "num_channels": 8,
+                "spatial_shape": [
+                    36,
+                    44,
+                    28
+                ],
+                "dtype": "float32",
+                "value_range": [
+                    0,
+                    1
+                ],
+                "is_patch_data": true
+            },
+            "condition": {
+                "type": "timesteps",
+                "format": "timesteps",
+                "num_channels": 1,
+                "spatial_shape": [],
+                "dtype": "long",
+                "value_range": [
+                    0,
+                    1000
+                ],
+                "is_patch_data": false
+            }
+        },
+        "outputs": {
+            "pred": {
+                "type": "feature",
+                "format": "image",
+                "num_channels": 8,
+                "spatial_shape": [
+                    36,
+                    44,
+                    28
+                ],
+                "dtype": "float32",
+                "value_range": [
+                    0,
+                    1
+                ],
+                "is_patch_data": true,
+                "channel_def": {
+                    "0": "image"
+                }
+            }
+        }
+    }
+}
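
The `generator_data_format` latent in configs/metadata.json (8 channels at 36x44x28) matches `latent_shape` in configs/inference.json, where the `noise` entry prepends a batch dimension of 1. The sketch below works through the shapes. It assumes the autoencoder halves each spatial dimension once between consecutive entries of `num_channels`, giving two downsamplings and a factor of 4 for three levels. That is an assumption about `AutoencoderKL`, not something this commit states, and the helper `decoded_spatial_shape` is hypothetical.

```python
def decoded_spatial_shape(latent_spatial: list[int], num_levels: int) -> list[int]:
    """Spatial size after undoing (num_levels - 1) assumed 2x downsamplings."""
    factor = 2 ** (num_levels - 1)
    return [size * factor for size in latent_spatial]


latent_shape = [8, 36, 44, 28]          # "latent_shape" in configs/inference.json
autoencoder_channels = [64, 128, 256]   # "num_channels" of autoencoder_def

noise_shape = [1] + latent_shape        # mirrors torch.randn([1] + @latent_shape)
image_shape = decoded_spatial_shape(latent_shape[1:], len(autoencoder_channels))

print("noise tensor shape:", noise_shape)
print("decoded spatial shape (assumed 4x upsampling):", image_shape)
```

Under that assumption the decoded volume is 144x176x112, the same as `infer_patch_size` in configs/inference_autoencoder.json. The 112x128x80 shape listed under `autoencoder_data_format` is also divisible by 4.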

configs/multi_gpu_train_autoencoder.json

ADDED

|

@@ -0,0 +1,40 @@

|

| 1 |

+

{

|

| 2 |

+

"device": "$torch.device(f'cuda:{dist.get_rank()}')",

|

| 3 |

+

"gnetwork": {

|

| 4 |

+

"_target_": "torch.nn.parallel.DistributedDataParallel",

|

| 5 |

+