add support for TensorRT conversion and inference

- README.md +37 -0
- configs/inference_trt.yaml +8 -0
- configs/metadata.json +2 -1
- docs/README.md +37 -0

README.md

CHANGED

|

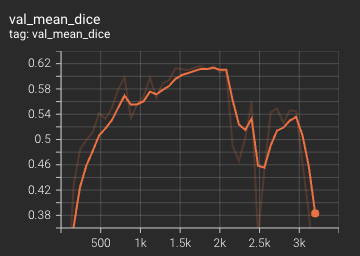

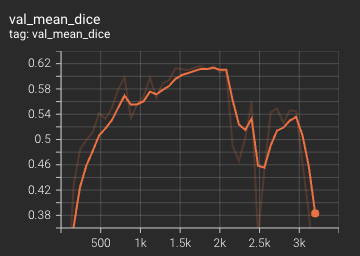

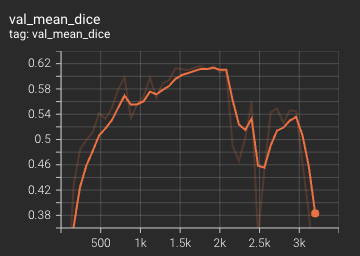

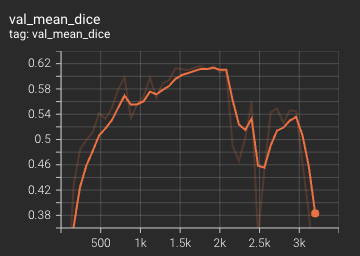

@@ -86,6 +86,31 @@ The mean dice score over 3200 epochs (the bright curve is smoothed, and the dark

#### TensorRT speedup

This bundle supports acceleration with TensorRT. The table below shows the latencies and speedup ratios measured on an A100 80G GPU.

| method | torch_fp32(ms) | torch_amp(ms) | trt_fp32(ms) | trt_fp16(ms) | speedup amp | speedup fp32 | speedup fp16 | amp vs fp16 |
| :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: |
| model computation | 54611.72 | 19240.66 | 16104.8 | 11443.57 | 2.84 | 3.39 | 4.77 | 1.68 |
| end2end | 133.93 | 43.41 | 35.65 | 26.63 | 3.09 | 3.76 | 5.03 | 1.63 |

Where:

- `model computation` measures the model's inference on a random input, without preprocessing or postprocessing.
- `end2end` measures a full end-to-end run of the bundle with the corresponding model.
- `torch_fp32` and `torch_amp` are the PyTorch model run without and with `amp` mode, respectively.
- `trt_fp32` and `trt_fp16` are the TensorRT-based models converted with the corresponding precision.
- `speedup amp`, `speedup fp32` and `speedup fp16` are the speedup ratios of the corresponding models relative to the PyTorch float32 model.
- `amp vs fp16` is the speedup ratio of the TensorRT float16 model relative to the PyTorch amp model.

These results were benchmarked with:

- TensorRT: 8.6.1+cuda12.0
- Torch-TensorRT version: 1.4.0
- CPU architecture: x86-64
- OS: Ubuntu 20.04
- Python version: 3.8.10
- CUDA version: 12.1
- GPU models and configuration: A100 80G

### Searched Architecture Visualization

Users can install Graphviz to visualize searched architectures (needed by [decode_plot.py](https://github.com/Project-MONAI/tutorials/blob/main/automl/DiNTS/decode_plot.py)). The edges between nodes indicate the global structure, and the numbers next to the edges denote different operations in the cell search space. An example of a searched architecture is shown as follows:

@@ -144,6 +169,18 @@ python -m monai.bundle run --config_file configs/inference.yaml

```
python -m monai.bundle ckpt_export network_def --filepath models/model.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.yaml
```

#### Export checkpoint to TensorRT-based models with fp32 or fp16 precision:

```
python -m monai.bundle trt_export --net_id network_def --filepath models/model_trt.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.yaml --precision <fp32/fp16> --use_trace "True" --dynamic_batchsize "[1, 4, 8]" --converter_kwargs "{'truncate_long_and_double':True, 'torch_executed_ops': ['aten::upsample_trilinear3d']}"
```

#### Execute inference with the TensorRT model:

```
python -m monai.bundle run --config_file "['configs/inference.yaml', 'configs/inference_trt.yaml']"
```

# References

[1] He, Y., Yang, D., Roth, H., Zhao, C. and Xu, D., 2021. DiNTS: Differentiable neural network topology search for 3D medical image segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 5841-5850).

configs/inference_trt.yaml

ADDED

@@ -0,0 +1,8 @@

---
imports:
- "$import glob"
- "$import os"
- "$import torch_tensorrt"
handlers#0#_disabled_: true
network_def: "$torch.jit.load(@bundle_root + '/models/model_trt.ts')"
evaluator#amp: false
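`configs/inference_trt.yaml` is meant to be layered on top of `configs/inference.yaml`, since the TensorRT `run` command passes both files. The sketch below is a simplified, stdlib-only model of that layering. It assumes that later files override earlier ones key by key and that a `#` in a key addresses a nested item, so `handlers#0#_disabled_` means the `_disabled_` field of the first entry in `handlers`. It is not MONAI's `ConfigParser` implementation. `merge_configs`, `set_by_path` and the contents of the base config are hypothetical, and `$`/`@` expressions are left as unevaluated strings.

```python
from copy import deepcopy
from typing import Any


def set_by_path(config: dict, key: str, value: Any) -> None:
    """Assign `value` at a '#'-separated path such as 'handlers#0#_disabled_'."""
    *parents, last = key.split("#")
    node: Any = config
    for part in parents:
        # Integer parts index into lists, other parts into dicts.
        node = node[int(part)] if isinstance(node, list) else node[part]
    if isinstance(node, list):
        node[int(last)] = value
    else:
        node[last] = value


def merge_configs(*configs: dict) -> dict:
    """Apply each config on top of the previous ones; later keys win."""
    merged: dict = {}
    for config in configs:
        for key, value in config.items():
            set_by_path(merged, key, deepcopy(value))
    return merged


# Hypothetical, heavily abridged stand-in for configs/inference.yaml.
base = {
    "imports": ["$import glob", "$import os"],
    "network_def": {"_target_": "..."},
    "handlers": [{"_target_": "...", "load_path": "..."}],
    "evaluator": {"_target_": "...", "amp": True},
}
# Contents of configs/inference_trt.yaml, with expressions kept as plain strings.
trt_override = {
    "imports": ["$import glob", "$import os", "$import torch_tensorrt"],
    "handlers#0#_disabled_": True,
    "network_def": "$torch.jit.load(@bundle_root + '/models/model_trt.ts')",
    "evaluator#amp": False,
}

merged = merge_configs(base, trt_override)
print(merged["handlers"][0]["_disabled_"], merged["evaluator"]["amp"])
```

In this sketch, a value assigned to a plain key such as `imports` or `network_def` replaces the earlier value as a whole, while `#` paths change a single nested field and leave its siblings intact.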

configs/metadata.json

CHANGED

@@ -1,7 +1,8 @@

 {
 "schema": "https://github.com/Project-MONAI/MONAI-extra-test-data/releases/download/0.8.1/meta_schema_20220324.json",
-"version": "0.4.
+"version": "0.4.3",
 "changelog": {
+"0.4.3": "add support for TensorRT conversion and inference",
 "0.4.2": "update search function to match monai 1.2",
 "0.4.1": "fix the wrong GPU index issue of multi-node",
 "0.4.0": "remove error dollar symbol in readme",
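The metadata change bumps `version` and adds a matching `changelog` entry. The following is a small consistency check for that pairing, using the standard `json` module. It assumes that `version` should equal the highest version listed in `changelog`. The check (`check_version`) is hypothetical and not part of MONAI, and the JSON string is an abridged excerpt of the updated `metadata.json`.

```python
import json

# Abridged excerpt of the updated configs/metadata.json (other fields omitted).
METADATA = """
{
    "version": "0.4.3",
    "changelog": {
        "0.4.3": "add support for TensorRT conversion and inference",
        "0.4.2": "update search function to match monai 1.2",
        "0.4.1": "fix the wrong GPU index issue of multi-node",
        "0.4.0": "remove error dollar symbol in readme"
    }
}
"""


def version_key(version: str) -> tuple:
    """Turn '0.4.3' into (0, 4, 3) so versions compare numerically, not as text."""
    return tuple(int(part) for part in version.split("."))


def check_version(metadata: dict) -> bool:
    """Return True if `version` is the highest version listed in `changelog`."""
    latest = max(metadata["changelog"], key=version_key)
    return latest == metadata["version"]


print(check_version(json.loads(METADATA)))
```

Comparing numeric tuples rather than strings keeps a future `0.4.10` ordered after `0.4.9`.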

docs/README.md

CHANGED

@@ -79,6 +79,31 @@ The mean dice score over 3200 epochs (the bright curve is smoothed, and the dark

#### TensorRT speedup

This bundle supports acceleration with TensorRT. The table below shows the latencies and speedup ratios measured on an A100 80G GPU.

| method | torch_fp32(ms) | torch_amp(ms) | trt_fp32(ms) | trt_fp16(ms) | speedup amp | speedup fp32 | speedup fp16 | amp vs fp16 |
| :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: | :---: |
| model computation | 54611.72 | 19240.66 | 16104.8 | 11443.57 | 2.84 | 3.39 | 4.77 | 1.68 |
| end2end | 133.93 | 43.41 | 35.65 | 26.63 | 3.09 | 3.76 | 5.03 | 1.63 |

Where:

- `model computation` measures the model's inference on a random input, without preprocessing or postprocessing.
- `end2end` measures a full end-to-end run of the bundle with the corresponding model.
- `torch_fp32` and `torch_amp` are the PyTorch model run without and with `amp` mode, respectively.
- `trt_fp32` and `trt_fp16` are the TensorRT-based models converted with the corresponding precision.
- `speedup amp`, `speedup fp32` and `speedup fp16` are the speedup ratios of the corresponding models relative to the PyTorch float32 model.
- `amp vs fp16` is the speedup ratio of the TensorRT float16 model relative to the PyTorch amp model.

These results were benchmarked with:

- TensorRT: 8.6.1+cuda12.0
- Torch-TensorRT version: 1.4.0
- CPU architecture: x86-64
- OS: Ubuntu 20.04
- Python version: 3.8.10
- CUDA version: 12.1
- GPU models and configuration: A100 80G

### Searched Architecture Visualization

Users can install Graphviz to visualize searched architectures (needed by [decode_plot.py](https://github.com/Project-MONAI/tutorials/blob/main/automl/DiNTS/decode_plot.py)). The edges between nodes indicate the global structure, and the numbers next to the edges denote different operations in the cell search space. An example of a searched architecture is shown as follows:

@@ -137,6 +162,18 @@ python -m monai.bundle run --config_file configs/inference.yaml

```
python -m monai.bundle ckpt_export network_def --filepath models/model.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.yaml
```

#### Export checkpoint to TensorRT-based models with fp32 or fp16 precision:

```
python -m monai.bundle trt_export --net_id network_def --filepath models/model_trt.ts --ckpt_file models/model.pt --meta_file configs/metadata.json --config_file configs/inference.yaml --precision <fp32/fp16> --use_trace "True" --dynamic_batchsize "[1, 4, 8]" --converter_kwargs "{'truncate_long_and_double':True, 'torch_executed_ops': ['aten::upsample_trilinear3d']}"
```

#### Execute inference with the TensorRT model:

```
python -m monai.bundle run --config_file "['configs/inference.yaml', 'configs/inference_trt.yaml']"
```

# References

[1] He, Y., Yang, D., Roth, H., Zhao, C. and Xu, D., 2021. DiNTS: Differentiable neural network topology search for 3D medical image segmentation. In Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (pp. 5841-5850).
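The `trt_export` command passes its options as quoted strings on the command line. The sketch below decodes those strings with `ast.literal_eval` to show the Python values they stand for. It also expands `--dynamic_batchsize "[1, 4, 8]"` into the minimum, optimal and maximum input shapes of a TensorRT optimization profile. It assumes that the three values are read as `[MIN_BATCH, OPT_BATCH, MAX_BATCH]` and that the bundle CLI decodes these strings to the same values as `literal_eval`. `profile_shapes` and the per-sample shape `(1, 96, 96, 96)` are hypothetical and not taken from this bundle.

```python
import ast

# Option strings copied from the trt_export command.
DYNAMIC_BATCHSIZE = "[1, 4, 8]"
USE_TRACE = "True"
CONVERTER_KWARGS = "{'truncate_long_and_double':True, 'torch_executed_ops': ['aten::upsample_trilinear3d']}"


def profile_shapes(batch_sizes: list, sample_shape: tuple) -> dict:
    """Expand [MIN_BATCH, OPT_BATCH, MAX_BATCH] into full input shapes."""
    if len(batch_sizes) != 3:
        raise ValueError("expected [MIN_BATCH, OPT_BATCH, MAX_BATCH]")
    min_b, opt_b, max_b = batch_sizes
    if not min_b <= opt_b <= max_b:
        raise ValueError("batch sizes must satisfy MIN <= OPT <= MAX")
    return {
        name: (batch, *sample_shape)
        for name, batch in (("min", min_b), ("opt", opt_b), ("max", max_b))
    }


batch_sizes = ast.literal_eval(DYNAMIC_BATCHSIZE)
converter_kwargs = ast.literal_eval(CONVERTER_KWARGS)
use_trace = ast.literal_eval(USE_TRACE)

# Hypothetical single-channel 96^3 patch; the bundle's real input shape may differ.
shapes = profile_shapes(batch_sizes, (1, 96, 96, 96))
print(shapes["min"], shapes["opt"], shapes["max"])
print(use_trace, converter_kwargs["torch_executed_ops"])
```

In Torch-TensorRT, `truncate_long_and_double` allows 64-bit integer and float values to be truncated to 32-bit. `torch_executed_ops` keeps the listed operators (here, trilinear upsampling) running in PyTorch instead of converting them to TensorRT.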