Commit

•

e9e2aaf

0

Parent(s):

Duplicate from ayushtues/blipdiffusion-controlnet

Co-authored-by: Ayush Mangal <ayushtues@users.noreply.huggingface.co>

- .gitattributes +35 -0

- README.md +198 -0

- controlnet/config.json +45 -0

- controlnet/diffusion_pytorch_model.bin +3 -0

- image_processor/preprocessor_config.json +24 -0

- model_index.json +47 -0

- qformer/config.json +29 -0

- qformer/pytorch_model.bin +3 -0

- scheduler/scheduler_config.json +14 -0

- text_encoder/config.json +25 -0

- text_encoder/pytorch_model.bin +3 -0

- tokenizer/merges.txt +0 -0

- tokenizer/special_tokens_map.json +24 -0

- tokenizer/tokenizer_config.json +33 -0

- tokenizer/vocab.json +0 -0

- unet/config.json +65 -0

- unet/diffusion_pytorch_model.bin +3 -0

- vae/config.json +31 -0

- vae/diffusion_pytorch_model.bin +3 -0

.gitattributes

ADDED

|

@@ -0,0 +1,35 @@


| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

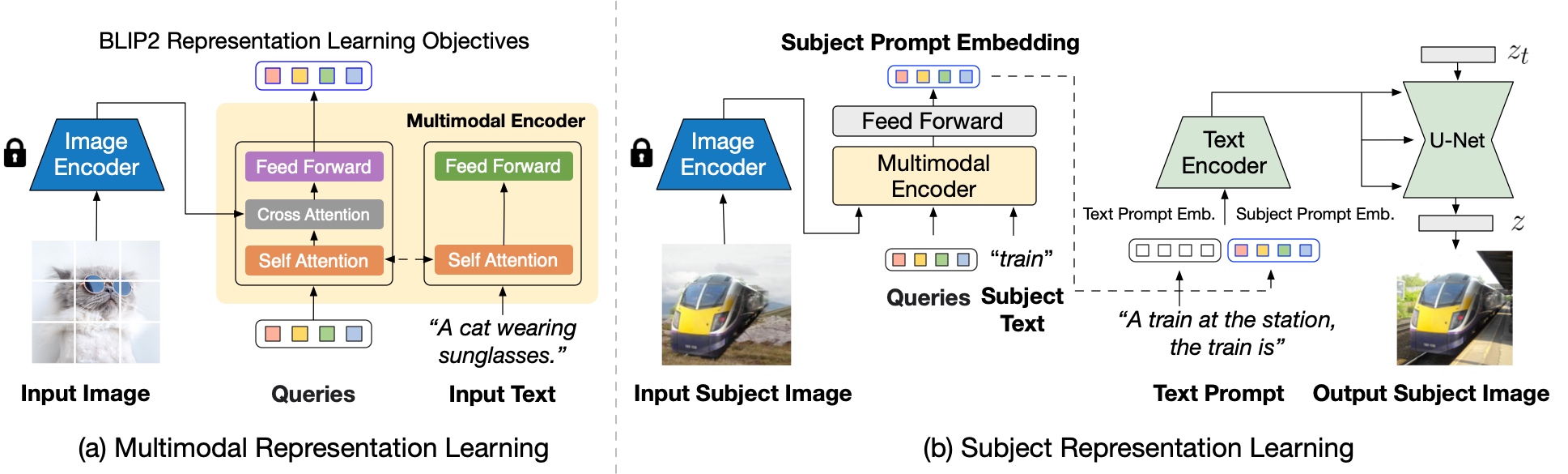

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|
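
As a rough illustration of what these patterns do, the sketch below checks which of the commit's paths would be routed through Git LFS. It is only an approximation: it uses Python's `fnmatch` on the file's basename rather than Git's real attribute matching (which has extra rules, for example for `**`), it copies just a subset of the patterns, and the helper name `is_lfs_tracked` is hypothetical.

```python
from fnmatch import fnmatch
from pathlib import PurePosixPath

# A subset of the patterns added to .gitattributes in this commit.
LFS_PATTERNS = ["*.bin", "*.ckpt", "*.h5", "*.safetensors", "*.pt", "*.pth"]


def is_lfs_tracked(path: str, patterns=LFS_PATTERNS) -> bool:
    """Approximate check: slash-free patterns are matched against the basename."""
    name = PurePosixPath(path).name
    return any(fnmatch(name, pattern) for pattern in patterns)


for path in ["unet/diffusion_pytorch_model.bin", "unet/config.json"]:
    print(path, is_lfs_tracked(path))
```

This is consistent with the file list above, where each `.bin` file shows up as a 3-line diff (an LFS pointer) while the JSON configs are stored in full.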

README.md

ADDED

|

@@ -0,0 +1,198 @@


| 1 |

+

---

|

| 2 |

+

license: apache-2.0

|

| 3 |

+

language:

|

| 4 |

+

- en

|

| 5 |

+

library_name: diffusers

|

| 6 |

+

---

|

| 7 |

+

# BLIP-Diffusion: Pre-trained Subject Representation for Controllable Text-to-Image Generation and Editing

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

<!-- Provide a quick summary of what the model is/does. -->

|

| 11 |

+

|

| 12 |

+

Model card for BLIP-Diffusion, a text-to-image diffusion model that enables zero-shot subject-driven generation and control-guided zero-shot generation.

|

| 13 |

+

|

| 14 |

+

The abstract from the paper is:

|

| 15 |

+

|

| 16 |

+

*Subject-driven text-to-image generation models create novel renditions of an input subject based on text prompts. Existing models suffer from lengthy fine-tuning and difficulties preserving the subject fidelity. To overcome these limitations, we introduce BLIP-Diffusion, a new subject-driven image generation model that supports multimodal control which consumes inputs of subject images and text prompts. Unlike other subject-driven generation models, BLIP-Diffusion introduces a new multimodal encoder which is pre-trained to provide subject representation. We first pre-train the multimodal encoder following BLIP-2 to produce visual representation aligned with the text. Then we design a subject representation learning task which enables a diffusion model to leverage such visual representation and generates new subject renditions. Compared with previous methods such as DreamBooth, our model enables zero-shot subject-driven generation, and efficient fine-tuning for customized subject with up to 20x speedup. We also demonstrate that BLIP-Diffusion can be flexibly combined with existing techniques such as ControlNet and prompt-to-prompt to enable novel subject-driven generation and editing applications.*

|

| 17 |

+

|

| 18 |

+

The model was created by Dongxu Li, Junnan Li, and Steven C.H. Hoi.

|

| 19 |

+

|

| 20 |

+

### Model Sources

|

| 21 |

+

|

| 22 |

+

<!-- Provide the basic links for the model. -->

|

| 23 |

+

|

| 24 |

+

- **Original Repository:** https://github.com/salesforce/LAVIS/tree/main

|

| 25 |

+

- **Project Page:** https://dxli94.github.io/BLIP-Diffusion-website/

|

| 26 |

+

|

| 27 |

+

## Uses

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

### Zero-Shot Subject-Driven Generation

|

| 31 |

+

```python

|

| 32 |

+

from diffusers.pipelines import BlipDiffusionPipeline

|

| 33 |

+

from diffusers.utils import load_image

|

| 34 |

+

import torch

|

| 35 |

+

|

| 36 |

+

blip_diffusion_pipe = BlipDiffusionPipeline.from_pretrained(

|

| 37 |

+

"Salesforce/blipdiffusion", torch_dtype=torch.float16

|

| 38 |

+

).to("cuda")

|

| 39 |

+

|

| 40 |

+

cond_subject = "dog"

|

| 41 |

+

tgt_subject = "dog"

|

| 42 |

+

text_prompt_input = "swimming underwater"

|

| 43 |

+

|

| 44 |

+

cond_image = load_image(

|

| 45 |

+

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/dog.jpg"

|

| 46 |

+

)

|

| 47 |

+

|

| 48 |

+

iter_seed = 88888

|

| 49 |

+

guidance_scale = 7.5

|

| 50 |

+

num_inference_steps = 25

|

| 51 |

+

negative_prompt = "over-exposure, under-exposure, saturated, duplicate, out of frame, lowres, cropped, worst quality, low quality, jpeg artifacts, morbid, mutilated, out of frame, ugly, bad anatomy, bad proportions, deformed, blurry, duplicate"

|

| 52 |

+

|

| 53 |

+

output = blip_diffusion_pipe(

|

| 54 |

+

text_prompt_input,

|

| 55 |

+

cond_image,

|

| 56 |

+

cond_subject,

|

| 57 |

+

tgt_subject,

|

| 58 |

+

guidance_scale=guidance_scale,

|

| 59 |

+

num_inference_steps=num_inference_steps,

|

| 60 |

+

neg_prompt=negative_prompt,

|

| 61 |

+

height=512,

|

| 62 |

+

width=512,

|

| 63 |

+

).images

|

| 64 |

+

output[0].save("image.png")

|

| 65 |

+

```

|

| 66 |

+

Input Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/dog.jpg" style="width:500px;"/>

|

| 67 |

+

|

| 68 |

+

Generated Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/dog_underwater.png" style="width:500px;"/>

|

| 69 |

+

|

| 70 |

+

### Controlled subject-driven generation

|

| 71 |

+

|

| 72 |

+

```python

|

| 73 |

+

from diffusers.pipelines import BlipDiffusionControlNetPipeline

|

| 74 |

+

from diffusers.utils import load_image

|

| 75 |

+

from controlnet_aux import CannyDetector
import torch  # needed for torch.float16 below

|

| 76 |

+

|

| 77 |

+

blip_diffusion_pipe = BlipDiffusionControlNetPipeline.from_pretrained(

|

| 78 |

+

"Salesforce/blipdiffusion-controlnet", torch_dtype=torch.float16

|

| 79 |

+

).to("cuda")

|

| 80 |

+

|

| 81 |

+

style_subject = "flower" # subject that defines the style

|

| 82 |

+

tgt_subject = "teapot" # subject to generate.

|

| 83 |

+

text_prompt = "on a marble table"

|

| 84 |

+

|

| 85 |

+

cldm_cond_image = load_image(

|

| 86 |

+

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/kettle.jpg"

|

| 87 |

+

).resize((512, 512))

|

| 88 |

+

canny = CannyDetector()

|

| 89 |

+

cldm_cond_image = canny(cldm_cond_image, 30, 70, output_type="pil")

|

| 90 |

+

style_image = load_image(

|

| 91 |

+

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg"

|

| 92 |

+

)

|

| 93 |

+

|

| 94 |

+

guidance_scale = 7.5

|

| 95 |

+

num_inference_steps = 50

|

| 96 |

+

negative_prompt = "over-exposure, under-exposure, saturated, duplicate, out of frame, lowres, cropped, worst quality, low quality, jpeg artifacts, morbid, mutilated, out of frame, ugly, bad anatomy, bad proportions, deformed, blurry, duplicate"

|

| 97 |

+

|

| 98 |

+

output = blip_diffusion_pipe(

|

| 99 |

+

text_prompt,

|

| 100 |

+

style_image,

|

| 101 |

+

cldm_cond_image,

|

| 102 |

+

style_subject,

|

| 103 |

+

tgt_subject,

|

| 104 |

+

guidance_scale=guidance_scale,

|

| 105 |

+

num_inference_steps=num_inference_steps,

|

| 106 |

+

neg_prompt=negative_prompt,

|

| 107 |

+

height=512,

|

| 108 |

+

width=512,

|

| 109 |

+

).images

|

| 110 |

+

output[0].save("image.png")

|

| 111 |

+

```

|

| 112 |

+

|

| 113 |

+

Input Style Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg" style="width:500px;"/>

|

| 114 |

+

Canny Edge Input : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/kettle.jpg" style="width:500px;"/>

|

| 115 |

+

Generated Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/canny_generated.png" style="width:500px;"/>

|

| 116 |

+

|

| 117 |

+

### Controlled subject-driven generation (scribble)

|

| 118 |

+

```python

|

| 119 |

+

from diffusers import ControlNetModel  # used below to load the scribble ControlNet
from diffusers.pipelines import BlipDiffusionControlNetPipeline

|

| 120 |

+

from diffusers.utils import load_image

|

| 121 |

+

from controlnet_aux import HEDdetector

|

| 122 |

+

|

| 123 |

+

blip_diffusion_pipe = BlipDiffusionControlNetPipeline.from_pretrained(

|

| 124 |

+

"Salesforce/blipdiffusion-controlnet"

|

| 125 |

+

)

|

| 126 |

+

controlnet = ControlNetModel.from_pretrained("lllyasviel/sd-controlnet-scribble")

|

| 127 |

+

blip_diffusion_pipe.controlnet = controlnet

|

| 128 |

+

blip_diffusion_pipe.to("cuda")

|

| 129 |

+

|

| 130 |

+

style_subject = "flower" # subject that defines the style

|

| 131 |

+

tgt_subject = "bag" # subject to generate.

|

| 132 |

+

text_prompt = "on a table"

|

| 133 |

+

cldm_cond_image = load_image(

|

| 134 |

+

"https://huggingface.co/lllyasviel/sd-controlnet-scribble/resolve/main/images/bag.png"

|

| 135 |

+

).resize((512, 512))

|

| 136 |

+

hed = HEDdetector.from_pretrained("lllyasviel/Annotators")

|

| 137 |

+

cldm_cond_image = hed(cldm_cond_image)

|

| 138 |

+

style_image = load_image(

|

| 139 |

+

"https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg"

|

| 140 |

+

)

|

| 141 |

+

|

| 142 |

+

guidance_scale = 7.5

|

| 143 |

+

num_inference_steps = 50

|

| 144 |

+

negative_prompt = "over-exposure, under-exposure, saturated, duplicate, out of frame, lowres, cropped, worst quality, low quality, jpeg artifacts, morbid, mutilated, out of frame, ugly, bad anatomy, bad proportions, deformed, blurry, duplicate"

|

| 145 |

+

|

| 146 |

+

output = blip_diffusion_pipe(

|

| 147 |

+

text_prompt,

|

| 148 |

+

style_image,

|

| 149 |

+

cldm_cond_image,

|

| 150 |

+

style_subject,

|

| 151 |

+

tgt_subject,

|

| 152 |

+

guidance_scale=guidance_scale,

|

| 153 |

+

num_inference_steps=num_inference_steps,

|

| 154 |

+

neg_prompt=negative_prompt,

|

| 155 |

+

height=512,

|

| 156 |

+

width=512,

|

| 157 |

+

).images

|

| 158 |

+

output[0].save("image.png")

|

| 159 |

+

```

|

| 160 |

+

|

| 161 |

+

Input Style Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/flower.jpg" style="width:500px;"/>

|

| 162 |

+

Scribble Input : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/scribble.png" style="width:500px;"/>

|

| 163 |

+

Generated Image : <img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/scribble_output.png" style="width:500px;"/>

|

| 164 |

+

|

| 165 |

+

## Model Architecture

|

| 166 |

+

|

| 167 |

+

BLIP-Diffusion learns a **pre-trained subject representation**. This representation aligns with text embeddings while also encoding the subject's appearance. This allows efficient fine-tuning of the model for high-fidelity subject-driven applications, such as text-to-image generation, editing and style transfer.

|

| 168 |

+

|

| 169 |

+

To this end, the authors design a two-stage pre-training strategy to learn a generic subject representation. In the first pre-training stage, they perform multimodal representation learning, which trains BLIP-2 to produce text-aligned visual features based on the input image. In the second pre-training stage, they design a subject representation learning task, called prompted context generation, where the diffusion model learns to generate novel subject renditions based on the input visual features.

|

| 170 |

+

|

| 171 |

+

To achieve this, they curate pairs of input-target images with the same subject appearing in different contexts. Specifically, they synthesize input images by composing the subject with a random background. During pre-training, they feed the synthetic input image and the subject class label through BLIP-2 to obtain the multimodal embeddings as subject representation. The subject representation is then combined with a text prompt to guide the generation of the target image.

|

| 172 |

+

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

The architecture can also be integrated with established techniques built on top of the diffusion model, such as ControlNet.

|

| 176 |

+

|

| 177 |

+

They attach the U-Net of the pre-trained ControlNet to that of BLIP-Diffusion via residuals. In this way, the model takes into account the input structure condition, such as edge maps and depth maps, in addition to the subject cues. Since the model inherits the architecture of the original latent diffusion model, they observe satisfying generations using off-the-shelf integration with pre-trained ControlNet without further training.

|

| 178 |

+

|

| 179 |

+

<img src="https://huggingface.co/datasets/ayushtues/blipdiffusion_images/resolve/main/arch_controlnet.png" style="width:50%;"/>

|

| 180 |

+

|

| 181 |

+

## Citation

|

| 182 |

+

|

| 183 |

+

|

| 184 |

+

**BibTeX:**

|

| 185 |

+

|

| 186 |

+

If you find this repository useful in your research, please cite:

|

| 187 |

+

|

| 188 |

+

```

|

| 189 |

+

@misc{li2023blipdiffusion,

|

| 190 |

+

title={BLIP-Diffusion: Pre-trained Subject Representation for Controllable Text-to-Image Generation and Editing},

|

| 191 |

+

author={Dongxu Li and Junnan Li and Steven C. H. Hoi},

|

| 192 |

+

year={2023},

|

| 193 |

+

eprint={2305.14720},

|

| 194 |

+

archivePrefix={arXiv},

|

| 195 |

+

primaryClass={cs.CV}

|

| 196 |

+

}

|

| 197 |

+

```

|

| 198 |

+

|

controlnet/config.json

ADDED

|

@@ -0,0 +1,45 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "ControlNetModel",

|

| 3 |

+

"_diffusers_version": "0.18.0.dev0",

|

| 4 |

+

"_name_or_path": "lllyasviel/sd-controlnet-canny",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"attention_head_dim": 8,

|

| 7 |

+

"block_out_channels": [

|

| 8 |

+

320,

|

| 9 |

+

640,

|

| 10 |

+

1280,

|

| 11 |

+

1280

|

| 12 |

+

],

|

| 13 |

+

"class_embed_type": null,

|

| 14 |

+

"conditioning_channels": 3,

|

| 15 |

+

"conditioning_embedding_out_channels": [

|

| 16 |

+

16,

|

| 17 |

+

32,

|

| 18 |

+

96,

|

| 19 |

+

256

|

| 20 |

+

],

|

| 21 |

+

"controlnet_conditioning_channel_order": "rgb",

|

| 22 |

+

"cross_attention_dim": 768,

|

| 23 |

+

"down_block_types": [

|

| 24 |

+

"CrossAttnDownBlock2D",

|

| 25 |

+

"CrossAttnDownBlock2D",

|

| 26 |

+

"CrossAttnDownBlock2D",

|

| 27 |

+

"DownBlock2D"

|

| 28 |

+

],

|

| 29 |

+

"downsample_padding": 1,

|

| 30 |

+

"flip_sin_to_cos": true,

|

| 31 |

+

"freq_shift": 0,

|

| 32 |

+

"global_pool_conditions": false,

|

| 33 |

+

"in_channels": 4,

|

| 34 |

+

"layers_per_block": 2,

|

| 35 |

+

"mid_block_scale_factor": 1,

|

| 36 |

+

"norm_eps": 1e-05,

|

| 37 |

+

"norm_num_groups": 32,

|

| 38 |

+

"num_attention_heads": null,

|

| 39 |

+

"num_class_embeds": null,

|

| 40 |

+

"only_cross_attention": false,

|

| 41 |

+

"projection_class_embeddings_input_dim": null,

|

| 42 |

+

"resnet_time_scale_shift": "default",

|

| 43 |

+

"upcast_attention": false,

|

| 44 |

+

"use_linear_projection": false

|

| 45 |

+

}

|

controlnet/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:da137629679b3714e8a47009ec8f657e48ee6b7d741c05c9c9607a218621f9df

|

| 3 |

+

size 1445259705

|
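
The three lines above are a Git LFS pointer, not the weights themselves. As a minimal sketch of how such a pointer can be read, the snippet below splits it into its fields; the function name `parse_lfs_pointer` and the returned dictionary keys are illustrative choices, not part of any Git LFS tooling.

```python
def parse_lfs_pointer(text: str) -> dict:
    """Parse the 'key value' lines of a Git LFS pointer file."""
    fields = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    # The oid field has the form "<hash algorithm>:<hex digest>".
    algorithm, _, digest = fields["oid"].partition(":")
    return {
        "version": fields["version"],
        "hash_algorithm": algorithm,
        "digest": digest,
        "size_bytes": int(fields["size"]),
    }


# Pointer content copied from controlnet/diffusion_pytorch_model.bin above.
POINTER = """\
version https://git-lfs.github.com/spec/v1
oid sha256:da137629679b3714e8a47009ec8f657e48ee6b7d741c05c9c9607a218621f9df
size 1445259705
"""

info = parse_lfs_pointer(POINTER)
print(info["hash_algorithm"], round(info["size_bytes"] / 1e9, 2), "GB")
```

The `size` field is in bytes, so the ControlNet checkpoint referenced here is roughly 1.4 GB.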

image_processor/preprocessor_config.json

ADDED

|

@@ -0,0 +1,24 @@


| 1 |

+

{

|

| 2 |

+

"do_center_crop": true,

|

| 3 |

+

"do_convert_rgb": true,

|

| 4 |

+

"do_normalize": true,

|

| 5 |

+

"do_rescale": true,

|

| 6 |

+

"do_resize": true,

|

| 7 |

+

"image_mean": [

|

| 8 |

+

0.48145466,

|

| 9 |

+

0.4578275,

|

| 10 |

+

0.40821073

|

| 11 |

+

],

|

| 12 |

+

"image_processor_type": "BlipImageProcessor",

|

| 13 |

+

"image_std": [

|

| 14 |

+

0.26862954,

|

| 15 |

+

0.26130258,

|

| 16 |

+

0.27577711

|

| 17 |

+

],

|

| 18 |

+

"resample": 3,

|

| 19 |

+

"rescale_factor": 0.00392156862745098,

|

| 20 |

+

"size": {

|

| 21 |

+

"height": 224,

|

| 22 |

+

"width": 224

|

| 23 |

+

}

|

| 24 |

+

}

|
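
To make the numeric fields in this config concrete, here is a small sketch of the per-pixel arithmetic they imply: rescale 8-bit values by `rescale_factor` (1/255), then standardize each channel with `image_mean` and `image_std`. The order of operations is an assumption based on how such preprocessors are conventionally applied; resizing and center cropping to 224x224 are omitted, and `normalize_pixel` is a hypothetical helper, not the `BlipImageProcessor` API.

```python
# Values copied from image_processor/preprocessor_config.json above.
IMAGE_MEAN = [0.48145466, 0.4578275, 0.40821073]
IMAGE_STD = [0.26862954, 0.26130258, 0.27577711]
RESCALE_FACTOR = 0.00392156862745098  # 1 / 255


def normalize_pixel(rgb):
    """Rescale an 8-bit RGB pixel to [0, 1], then standardize each channel."""
    return [
        (value * RESCALE_FACTOR - mean) / std
        for value, mean, std in zip(rgb, IMAGE_MEAN, IMAGE_STD)
    ]


print(normalize_pixel((255, 255, 255)))  # brightest pixel
print(normalize_pixel((0, 0, 0)))        # darkest pixel
```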

model_index.json

ADDED

|

@@ -0,0 +1,47 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "BlipDiffusionControlNetPipeline",

|

| 3 |

+

"_diffusers_version": "0.18.0.dev0",

|

| 4 |

+

"controlnet": [

|

| 5 |

+

"diffusers",

|

| 6 |

+

"ControlNetModel"

|

| 7 |

+

],

|

| 8 |

+

"ctx_begin_pos": 2,

|

| 9 |

+

"image_processor": [

|

| 10 |

+

"blip_diffusion",

|

| 11 |

+

"BlipImageProcessor"

|

| 12 |

+

],

|

| 13 |

+

"mean": [

|

| 14 |

+

0.48145466,

|

| 15 |

+

0.4578275,

|

| 16 |

+

0.40821073

|

| 17 |

+

],

|

| 18 |

+

"qformer": [

|

| 19 |

+

"blip_diffusion",

|

| 20 |

+

"Blip2QFormerModel"

|

| 21 |

+

],

|

| 22 |

+

"scheduler": [

|

| 23 |

+

"diffusers",

|

| 24 |

+

"PNDMScheduler"

|

| 25 |

+

],

|

| 26 |

+

"std": [

|

| 27 |

+

0.26862954,

|

| 28 |

+

0.26130258,

|

| 29 |

+

0.27577711

|

| 30 |

+

],

|

| 31 |

+

"text_encoder": [

|

| 32 |

+

"blip_diffusion",

|

| 33 |

+

"ContextCLIPTextModel"

|

| 34 |

+

],

|

| 35 |

+

"tokenizer": [

|

| 36 |

+

"transformers",

|

| 37 |

+

"CLIPTokenizer"

|

| 38 |

+

],

|

| 39 |

+

"unet": [

|

| 40 |

+

"diffusers",

|

| 41 |

+

"UNet2DConditionModel"

|

| 42 |

+

],

|

| 43 |

+

"vae": [

|

| 44 |

+

"diffusers",

|

| 45 |

+

"AutoencoderKL"

|

| 46 |

+

]

|

| 47 |

+

}

|
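
The layout of `model_index.json` can be read as a map from component name to a `[library, class]` pair, alongside a few scalar or numeric settings. The sketch below lists the component entries from an abbreviated copy of the file; it only mirrors the file's layout and does not claim to reproduce how `diffusers` itself parses it. The helper name `list_components` is hypothetical.

```python
import json

# Abbreviated copy of model_index.json from this commit.
MODEL_INDEX = json.loads("""
{
  "_class_name": "BlipDiffusionControlNetPipeline",
  "_diffusers_version": "0.18.0.dev0",
  "controlnet": ["diffusers", "ControlNetModel"],
  "ctx_begin_pos": 2,
  "mean": [0.48145466, 0.4578275, 0.40821073],
  "qformer": ["blip_diffusion", "Blip2QFormerModel"],
  "scheduler": ["diffusers", "PNDMScheduler"],
  "unet": ["diffusers", "UNet2DConditionModel"],
  "vae": ["diffusers", "AutoencoderKL"]
}
""")


def list_components(index: dict) -> dict:
    """Keep only entries shaped like [library_name, class_name]."""
    return {
        name: tuple(value)
        for name, value in index.items()
        if isinstance(value, list)
        and len(value) == 2
        and all(isinstance(item, str) for item in value)
    }


for name, (library, class_name) in list_components(MODEL_INDEX).items():
    print(f"{name}: {library}.{class_name}")
```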

qformer/config.json

ADDED

|

@@ -0,0 +1,29 @@


| 1 |

+

{

|

| 2 |

+

"_name_or_path": "ayushtues/blipdiffusion",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"Blip2QFormerModel"

|

| 5 |

+

],

|

| 6 |

+

"initializer_factor": 1.0,

|

| 7 |

+

"initializer_range": 0.02,

|

| 8 |

+

"model_type": "blip-2",

|

| 9 |

+

"num_query_tokens": 16,

|

| 10 |

+

"qformer_config": {

|

| 11 |

+

"cross_attention_frequency": 1,

|

| 12 |

+

"encoder_hidden_size": 1024,

|

| 13 |

+

"model_type": "blip_2_qformer",

|

| 14 |

+

"vocab_size": 30523

|

| 15 |

+

},

|

| 16 |

+

"text_config": {

|

| 17 |

+

"model_type": "opt"

|

| 18 |

+

},

|

| 19 |

+

"torch_dtype": "float32",

|

| 20 |

+

"transformers_version": "4.33.0.dev0",

|

| 21 |

+

"use_decoder_only_language_model": true,

|

| 22 |

+

"vision_config": {

|

| 23 |

+

"hidden_act": "quick_gelu",

|

| 24 |

+

"hidden_size": 1024,

|

| 25 |

+

"intermediate_size": 4096,

|

| 26 |

+

"model_type": "blip_2_vision_model",

|

| 27 |

+

"num_hidden_layers": 23

|

| 28 |

+

}

|

| 29 |

+

}

|

qformer/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2beb7ccb198585f2da2e7e8699aaea821274eeb946baf82d2b181139dedd5b2e

|

| 3 |

+

size 1976172201

|

scheduler/scheduler_config.json

ADDED

|

@@ -0,0 +1,14 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "PNDMScheduler",

|

| 3 |

+

"_diffusers_version": "0.18.0.dev0",

|

| 4 |

+

"beta_end": 0.012,

|

| 5 |

+

"beta_schedule": "scaled_linear",

|

| 6 |

+

"beta_start": 0.00085,

|

| 7 |

+

"num_train_timesteps": 1000,

|

| 8 |

+

"prediction_type": "epsilon",

|

| 9 |

+

"set_alpha_to_one": false,

|

| 10 |

+

"skip_prk_steps": true,

|

| 11 |

+

"steps_offset": 0,

|

| 12 |

+

"timestep_spacing": "leading",

|

| 13 |

+

"trained_betas": null

|

| 14 |

+

}

|
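
For readers unfamiliar with the `scaled_linear` setting, the sketch below reconstructs the beta schedule these values describe: betas are spaced linearly between the square roots of `beta_start` and `beta_end`, then squared. It is a plain-Python approximation for illustration, not the scheduler's own code, and the function name `scaled_linear_betas` is made up for this example.

```python
def scaled_linear_betas(beta_start=0.00085, beta_end=0.012, num_train_timesteps=1000):
    """Betas spaced linearly in sqrt-space, then squared ("scaled_linear")."""
    low, high = beta_start ** 0.5, beta_end ** 0.5
    step = (high - low) / (num_train_timesteps - 1)
    return [(low + i * step) ** 2 for i in range(num_train_timesteps)]


betas = scaled_linear_betas()

# Cumulative product of (1 - beta_t): the fraction of signal left at step t.
alphas_cumprod = []
running = 1.0
for beta in betas:
    running *= 1.0 - beta
    alphas_cumprod.append(running)

print(betas[0], betas[-1], alphas_cumprod[-1])
```

The cumulative product shrinks monotonically toward zero, which is what lets the final training timestep be treated as nearly pure noise.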

text_encoder/config.json

ADDED

|

@@ -0,0 +1,25 @@


| 1 |

+

{

|

| 2 |

+

"_name_or_path": "ayushtues/blipdiffusion",

|

| 3 |

+

"architectures": [

|

| 4 |

+

"ContextCLIPTextModel"

|

| 5 |

+

],

|

| 6 |

+

"attention_dropout": 0.0,

|

| 7 |

+

"bos_token_id": 0,

|

| 8 |

+

"dropout": 0.0,

|

| 9 |

+

"eos_token_id": 2,

|

| 10 |

+

"hidden_act": "quick_gelu",

|

| 11 |

+

"hidden_size": 768,

|

| 12 |

+

"initializer_factor": 1.0,

|

| 13 |

+

"initializer_range": 0.02,

|

| 14 |

+

"intermediate_size": 3072,

|

| 15 |

+

"layer_norm_eps": 1e-05,

|

| 16 |

+

"max_position_embeddings": 77,

|

| 17 |

+

"model_type": "clip_text_model",

|

| 18 |

+

"num_attention_heads": 12,

|

| 19 |

+

"num_hidden_layers": 12,

|

| 20 |

+

"pad_token_id": 1,

|

| 21 |

+

"projection_dim": 768,

|

| 22 |

+

"torch_dtype": "float32",

|

| 23 |

+

"transformers_version": "4.33.0.dev0",

|

| 24 |

+

"vocab_size": 49408

|

| 25 |

+

}

|

text_encoder/pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:2579756b867c003d1b8d06b05b8e1a0bd781cc4551c0ca2c8d43544cb2ef0d8e

|

| 3 |

+

size 492309793

|

tokenizer/merges.txt

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

tokenizer/special_tokens_map.json

ADDED

|

@@ -0,0 +1,24 @@


| 1 |

+

{

|

| 2 |

+

"bos_token": {

|

| 3 |

+

"content": "<|startoftext|>",

|

| 4 |

+

"lstrip": false,

|

| 5 |

+

"normalized": true,

|

| 6 |

+

"rstrip": false,

|

| 7 |

+

"single_word": false

|

| 8 |

+

},

|

| 9 |

+

"eos_token": {

|

| 10 |

+

"content": "<|endoftext|>",

|

| 11 |

+

"lstrip": false,

|

| 12 |

+

"normalized": true,

|

| 13 |

+

"rstrip": false,

|

| 14 |

+

"single_word": false

|

| 15 |

+

},

|

| 16 |

+

"pad_token": "<|endoftext|>",

|

| 17 |

+

"unk_token": {

|

| 18 |

+

"content": "<|endoftext|>",

|

| 19 |

+

"lstrip": false,

|

| 20 |

+

"normalized": true,

|

| 21 |

+

"rstrip": false,

|

| 22 |

+

"single_word": false

|

| 23 |

+

}

|

| 24 |

+

}

|

tokenizer/tokenizer_config.json

ADDED

|

@@ -0,0 +1,33 @@


| 1 |

+

{

|

| 2 |

+

"add_prefix_space": false,

|

| 3 |

+

"bos_token": {

|

| 4 |

+

"__type": "AddedToken",

|

| 5 |

+

"content": "<|startoftext|>",

|

| 6 |

+

"lstrip": false,

|

| 7 |

+

"normalized": true,

|

| 8 |

+

"rstrip": false,

|

| 9 |

+

"single_word": false

|

| 10 |

+

},

|

| 11 |

+

"clean_up_tokenization_spaces": true,

|

| 12 |

+

"do_lower_case": true,

|

| 13 |

+

"eos_token": {

|

| 14 |

+

"__type": "AddedToken",

|

| 15 |

+

"content": "<|endoftext|>",

|

| 16 |

+

"lstrip": false,

|

| 17 |

+

"normalized": true,

|

| 18 |

+

"rstrip": false,

|

| 19 |

+

"single_word": false

|

| 20 |

+

},

|

| 21 |

+

"errors": "replace",

|

| 22 |

+

"model_max_length": 77,

|

| 23 |

+

"pad_token": "<|endoftext|>",

|

| 24 |

+

"tokenizer_class": "CLIPTokenizer",

|

| 25 |

+

"unk_token": {

|

| 26 |

+

"__type": "AddedToken",

|

| 27 |

+

"content": "<|endoftext|>",

|

| 28 |

+

"lstrip": false,

|

| 29 |

+

"normalized": true,

|

| 30 |

+

"rstrip": false,

|

| 31 |

+

"single_word": false

|

| 32 |

+

}

|

| 33 |

+

}

|

tokenizer/vocab.json

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

unet/config.json

ADDED

|

@@ -0,0 +1,65 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "UNet2DConditionModel",

|

| 3 |

+

"_diffusers_version": "0.18.0.dev0",

|

| 4 |

+

"_name_or_path": "ayushtues/blipdiffusion",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"addition_embed_type": null,

|

| 7 |

+

"addition_embed_type_num_heads": 64,

|

| 8 |

+

"addition_time_embed_dim": null,

|

| 9 |

+

"attention_head_dim": 8,

|

| 10 |

+

"block_out_channels": [

|

| 11 |

+

320,

|

| 12 |

+

640,

|

| 13 |

+

1280,

|

| 14 |

+

1280

|

| 15 |

+

],

|

| 16 |

+

"center_input_sample": false,

|

| 17 |

+

"class_embed_type": null,

|

| 18 |

+

"class_embeddings_concat": false,

|

| 19 |

+

"conv_in_kernel": 3,

|

| 20 |

+

"conv_out_kernel": 3,

|

| 21 |

+

"cross_attention_dim": 768,

|

| 22 |

+

"cross_attention_norm": null,

|

| 23 |

+

"down_block_types": [

|

| 24 |

+

"CrossAttnDownBlock2D",

|

| 25 |

+

"CrossAttnDownBlock2D",

|

| 26 |

+

"CrossAttnDownBlock2D",

|

| 27 |

+

"DownBlock2D"

|

| 28 |

+

],

|

| 29 |

+

"downsample_padding": 1,

|

| 30 |

+

"dual_cross_attention": false,

|

| 31 |

+

"encoder_hid_dim": null,

|

| 32 |

+

"encoder_hid_dim_type": null,

|

| 33 |

+

"flip_sin_to_cos": true,

|

| 34 |

+

"freq_shift": 0,

|

| 35 |

+

"in_channels": 4,

|

| 36 |

+

"layers_per_block": 2,

|

| 37 |

+

"mid_block_only_cross_attention": null,

|

| 38 |

+

"mid_block_scale_factor": 1,

|

| 39 |

+

"mid_block_type": "UNetMidBlock2DCrossAttn",

|

| 40 |

+

"norm_eps": 1e-05,

|

| 41 |

+

"norm_num_groups": 32,

|

| 42 |

+

"num_attention_heads": null,

|

| 43 |

+

"num_class_embeds": null,

|

| 44 |

+

"only_cross_attention": false,

|

| 45 |

+

"out_channels": 4,

|

| 46 |

+

"projection_class_embeddings_input_dim": null,

|

| 47 |

+

"resnet_out_scale_factor": 1.0,

|

| 48 |

+

"resnet_skip_time_act": false,

|

| 49 |

+

"resnet_time_scale_shift": "default",

|

| 50 |

+

"sample_size": 64,

|

| 51 |

+

"time_cond_proj_dim": null,

|

| 52 |

+

"time_embedding_act_fn": null,

|

| 53 |

+

"time_embedding_dim": null,

|

| 54 |

+

"time_embedding_type": "positional",

|

| 55 |

+

"timestep_post_act": null,

|

| 56 |

+

"transformer_layers_per_block": 1,

|

| 57 |

+

"up_block_types": [

|

| 58 |

+

"UpBlock2D",

|

| 59 |

+

"CrossAttnUpBlock2D",

|

| 60 |

+

"CrossAttnUpBlock2D",

|

| 61 |

+

"CrossAttnUpBlock2D"

|

| 62 |

+

],

|

| 63 |

+

"upcast_attention": false,

|

| 64 |

+

"use_linear_projection": false

|

| 65 |

+

}

|

unet/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:4f789a4d491581750b69ba3d8f83560c9dd85ee93d9f80006d9e9bcf24de0da5

|

| 3 |

+

size 3438375973

|

vae/config.json

ADDED

|

@@ -0,0 +1,31 @@


| 1 |

+

{

|

| 2 |

+

"_class_name": "AutoencoderKL",

|

| 3 |

+

"_diffusers_version": "0.18.0.dev0",

|

| 4 |

+

"_name_or_path": "ayushtues/blipdiffusion",

|

| 5 |

+

"act_fn": "silu",

|

| 6 |

+

"block_out_channels": [

|

| 7 |

+

128,

|

| 8 |

+

256,

|

| 9 |

+

512,

|

| 10 |

+

512

|

| 11 |

+

],

|

| 12 |

+

"down_block_types": [

|

| 13 |

+

"DownEncoderBlock2D",

|

| 14 |

+

"DownEncoderBlock2D",

|

| 15 |

+

"DownEncoderBlock2D",

|

| 16 |

+

"DownEncoderBlock2D"

|

| 17 |

+

],

|

| 18 |

+

"in_channels": 3,

|

| 19 |

+

"latent_channels": 4,

|

| 20 |

+

"layers_per_block": 2,

|

| 21 |

+

"norm_num_groups": 32,

|

| 22 |

+

"out_channels": 3,

|

| 23 |

+

"sample_size": 512,

|

| 24 |

+

"scaling_factor": 0.18215,

|

| 25 |

+

"up_block_types": [

|

| 26 |

+

"UpDecoderBlock2D",

|

| 27 |

+

"UpDecoderBlock2D",

|

| 28 |

+

"UpDecoderBlock2D",

|

| 29 |

+

"UpDecoderBlock2D"

|

| 30 |

+

]

|

| 31 |

+

}

|
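
The VAE and U-Net configs in this commit are sized to fit together, and a short calculation shows how. The sketch assumes that every encoder block except the last halves the spatial resolution, which is the usual arrangement for this autoencoder family but is not stated in the config itself; `latent_shape` is a hypothetical helper.

```python
# Values copied from vae/config.json and unet/config.json above.
VAE_BLOCK_OUT_CHANNELS = [128, 256, 512, 512]
VAE_SAMPLE_SIZE = 512
VAE_LATENT_CHANNELS = 4
UNET_SAMPLE_SIZE = 64
UNET_IN_CHANNELS = 4


def latent_shape(image_size, block_out_channels, latent_channels):
    """Return (channels, height, width) of the latent for a square image."""
    # Assumption: each encoder block except the last downsamples by 2.
    downsample_factor = 2 ** (len(block_out_channels) - 1)
    side = image_size // downsample_factor
    return (latent_channels, side, side)


shape = latent_shape(VAE_SAMPLE_SIZE, VAE_BLOCK_OUT_CHANNELS, VAE_LATENT_CHANNELS)
print(shape, shape == (UNET_IN_CHANNELS, UNET_SAMPLE_SIZE, UNET_SAMPLE_SIZE))
```

Under that assumption, a 512x512 image maps to a 4x64x64 latent, matching the U-Net's `in_channels` of 4 and `sample_size` of 64, and the `height=512, width=512` arguments used in the README examples.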

vae/diffusion_pytorch_model.bin

ADDED

|

@@ -0,0 +1,3 @@


| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:11a6fc35e77a2d5696ae6a494f797f01b7ab97b08b5f8f2f17e19d0ef169b1ca

|

| 3 |

+

size 334715569

|