---

license: mit
tags:
- vision
- image-classification
datasets:
- imagenet-1k
widget:
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg
  example_title: Tiger
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/teapot.jpg
  example_title: Teapot
- src: https://huggingface.co/datasets/mishig/sample_images/resolve/main/palace.jpg
  example_title: Palace

---

# DiNAT (small variant)

DiNAT-Small trained on ImageNet-1K at 224x224 resolution.

It was introduced in the paper [Dilated Neighborhood Attention Transformer](https://arxiv.org/abs/2209.15001) by Hassani et al. and first released in [this repository](https://github.com/SHI-Labs/Neighborhood-Attention-Transformer).

## Model description

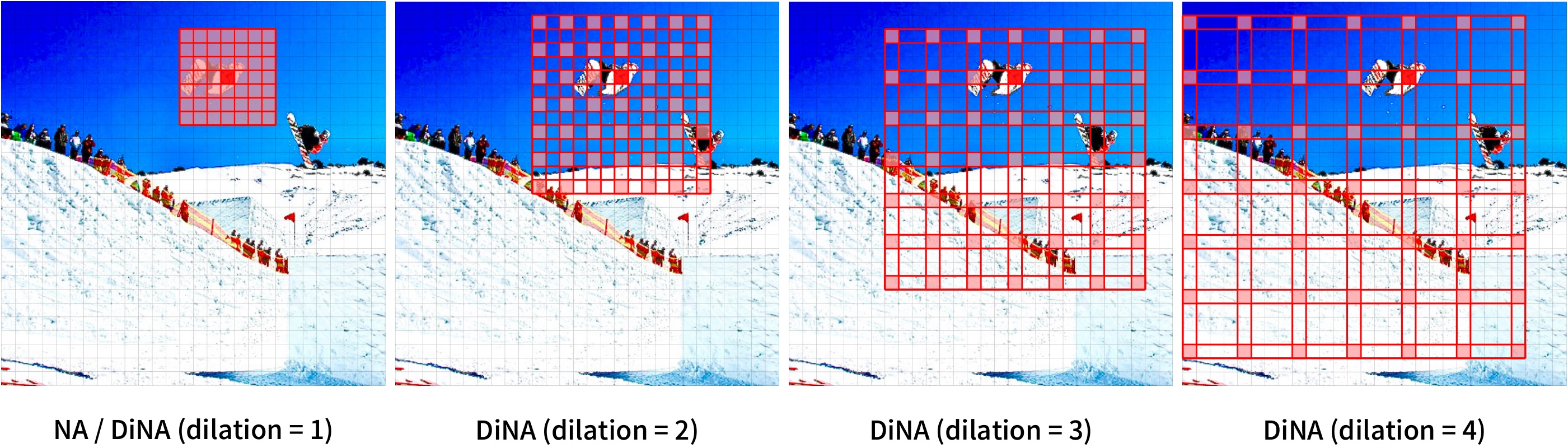

DiNAT is a hierarchical vision transformer based on Neighborhood Attention (NA) and its dilated variant (DiNA).

Neighborhood Attention is a restricted self-attention pattern in which each token's receptive field is limited to its nearest neighboring pixels.

NA and DiNA are therefore sliding-window attention patterns, and as a result are highly flexible and maintain translational equivariance.

They come with PyTorch implementations through the [NATTEN](https://github.com/SHI-Labs/NATTEN/) package.

[Source](https://paperswithcode.com/paper/dilated-neighborhood-attention-transformer)
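To make the sliding-window idea concrete, the following is a minimal 1-D sketch of which positions a single token attends to under NA and DiNA. It is an illustration only, not NATTEN's implementation: the function name `neighborhood_indices` is hypothetical, and the edge handling (shifting the window so it stays in bounds) and the treatment of dilation (tokens sharing `i % dilation` form one group) are assumptions made for this example.

```python
def neighborhood_indices(i, length, kernel_size, dilation=1):
    """Return the positions token i attends to in a 1-D sequence (illustrative)."""
    if kernel_size % 2 == 0:
        raise ValueError("kernel_size must be odd")
    if kernel_size * dilation > length:
        raise ValueError("kernel_size * dilation must not exceed length")

    # Assumption: with dilation d, tokens sharing i % d form one group,
    # and plain neighborhood attention is applied inside that group.
    group = i % dilation
    pos = i // dilation  # position of token i inside its group
    group_len = (length - group + dilation - 1) // dilation

    # Assumption: near the edges the window is shifted, not padded,
    # so every token still sees exactly kernel_size neighbors.
    half = kernel_size // 2
    start = min(max(pos - half, 0), group_len - kernel_size)

    # Map group-local positions back to positions in the full sequence.
    return [group + (start + k) * dilation for k in range(kernel_size)]


print(neighborhood_indices(0, 8, 3))              # [0, 1, 2]
print(neighborhood_indices(4, 8, 3, dilation=2))  # [2, 4, 6]
```

With `dilation=1` the window covers adjacent positions; a larger dilation keeps the same number of neighbors but spreads them further apart, which is how DiNA widens the receptive field at the same cost.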

## Intended uses & limitations

You can use the raw model for image classification. See the [model hub](https://huggingface.co/models?search=dinat) to look for fine-tuned versions on a task that interests you.

### Example

Here is how to use this model to classify an image from the COCO 2017 dataset into one of the 1,000 ImageNet classes:

```python
import requests
import torch
from PIL import Image
from transformers import AutoImageProcessor, DinatForImageClassification

# Download a sample image from the COCO 2017 validation set
url = "http://images.cocodataset.org/val2017/000000039769.jpg"
image = Image.open(requests.get(url, stream=True).raw)

# Load the image processor and the pretrained classifier
image_processor = AutoImageProcessor.from_pretrained("shi-labs/dinat-small-in1k-224")
model = DinatForImageClassification.from_pretrained("shi-labs/dinat-small-in1k-224")

# Preprocess the image and run inference without tracking gradients
inputs = image_processor(images=image, return_tensors="pt")
with torch.no_grad():
    outputs = model(**inputs)
logits = outputs.logits

# The model predicts one of the 1,000 ImageNet classes
predicted_class_idx = logits.argmax(-1).item()
print("Predicted class:", model.config.id2label[predicted_class_idx])
```
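If you want more than the single best class, the logits can be turned into ranked probabilities. The helper below is a small sketch in plain Python; `top_k_predictions` is a hypothetical name, not part of `transformers`, and the toy logits and labels are made up for illustration.

```python
import math


def top_k_predictions(logits, id2label, k=5):
    """Return the k most likely (label, probability) pairs from raw logits."""
    # Numerically stable softmax: subtract the maximum before exponentiating
    peak = max(logits)
    exps = [math.exp(x - peak) for x in logits]
    total = sum(exps)
    probs = [e / total for e in exps]

    # Rank class indices by probability, highest first
    ranked = sorted(range(len(probs)), key=probs.__getitem__, reverse=True)
    return [(id2label[i], probs[i]) for i in ranked[:k]]


# Toy example with three made-up classes
toy_labels = {0: "tiger", 1: "teapot", 2: "palace"}
for label, prob in top_k_predictions([1.0, 3.0, 2.0], toy_labels, k=2):
    print(f"{label}: {prob:.3f}")
```

With the model from the example above, this could be called as `top_k_predictions(outputs.logits[0].tolist(), model.config.id2label)`.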

For more examples, please refer to the [documentation](https://huggingface.co/transformers/model_doc/dinat.html#).

### Requirements

In addition to `transformers`, this model requires the [NATTEN](https://shi-labs.com/natten) package.

If you're on Linux, you can refer to [shi-labs.com/natten](https://shi-labs.com/natten) for instructions on installing with pre-compiled binaries (just select your torch build to get the correct wheel URL).

You can alternatively use `pip install natten` to compile on your device, which may take up to a few minutes.

Mac users only have the latter option (no pre-compiled binaries).

Refer to [NATTEN's GitHub](https://github.com/SHI-Labs/NATTEN/) for more information.
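Before loading the model, it can help to confirm that NATTEN is actually importable in the current environment. The check below is a minimal sketch using only the standard library; `natten_available` is a hypothetical helper name, and the printed message is only a suggestion.

```python
import importlib.util


def natten_available():
    """Return True if the natten package can be found in this environment."""
    return importlib.util.find_spec("natten") is not None


if not natten_available():
    print("NATTEN not found; install it before loading DiNAT models.")
```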

### BibTeX entry and citation info

```bibtex
@article{hassani2022dilated,
  title         = {Dilated Neighborhood Attention Transformer},
  author        = {Ali Hassani and Humphrey Shi},
  year          = 2022,
  url           = {https://arxiv.org/abs/2209.15001},
  eprint        = {2209.15001},
  archiveprefix = {arXiv},
  primaryclass  = {cs.CV}
}
```