Spaces:

Sleeping

Sleeping

Upload 49 files

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +6 -0

- TripoSR +0 -1

- TripoSR/.gitignore +164 -0

- TripoSR/LICENSE +21 -0

- TripoSR/README.md +80 -0

- TripoSR/__pycache__/obj_gen.cpython-310.pyc +0 -0

- TripoSR/examples/captured.jpeg +3 -0

- TripoSR/examples/captured_p.png +3 -0

- TripoSR/examples/chair.png +0 -0

- TripoSR/examples/flamingo.png +0 -0

- TripoSR/examples/hamburger.png +0 -0

- TripoSR/examples/horse.png +0 -0

- TripoSR/examples/iso_house.png +3 -0

- TripoSR/examples/marble.png +0 -0

- TripoSR/examples/police_woman.png +0 -0

- TripoSR/examples/poly_fox.png +0 -0

- TripoSR/examples/robot.png +0 -0

- TripoSR/examples/stripes.png +0 -0

- TripoSR/examples/teapot.png +0 -0

- TripoSR/examples/tiger_girl.png +0 -0

- TripoSR/examples/unicorn.png +0 -0

- TripoSR/figures/comparison800.gif +3 -0

- TripoSR/figures/scatter-comparison.png +0 -0

- TripoSR/figures/teaser800.gif +3 -0

- TripoSR/figures/visual_comparisons.jpg +3 -0

- TripoSR/gradio_app.py +187 -0

- TripoSR/obj_gen.py +92 -0

- TripoSR/output/0/input.png +0 -0

- TripoSR/output/0/mesh.obj +0 -0

- TripoSR/requirements.txt +9 -0

- TripoSR/run.py +162 -0

- TripoSR/tsr/__pycache__/system.cpython-310.pyc +0 -0

- TripoSR/tsr/__pycache__/utils.cpython-310.pyc +0 -0

- TripoSR/tsr/models/__pycache__/isosurface.cpython-310.pyc +0 -0

- TripoSR/tsr/models/__pycache__/nerf_renderer.cpython-310.pyc +0 -0

- TripoSR/tsr/models/__pycache__/network_utils.cpython-310.pyc +0 -0

- TripoSR/tsr/models/isosurface.py +52 -0

- TripoSR/tsr/models/nerf_renderer.py +180 -0

- TripoSR/tsr/models/network_utils.py +124 -0

- TripoSR/tsr/models/tokenizers/__pycache__/image.cpython-310.pyc +0 -0

- TripoSR/tsr/models/tokenizers/__pycache__/triplane.cpython-310.pyc +0 -0

- TripoSR/tsr/models/tokenizers/image.py +66 -0

- TripoSR/tsr/models/tokenizers/triplane.py +45 -0

- TripoSR/tsr/models/transformer/__pycache__/attention.cpython-310.pyc +0 -0

- TripoSR/tsr/models/transformer/__pycache__/basic_transformer_block.cpython-310.pyc +0 -0

- TripoSR/tsr/models/transformer/__pycache__/transformer_1d.cpython-310.pyc +0 -0

- TripoSR/tsr/models/transformer/attention.py +653 -0

- TripoSR/tsr/models/transformer/basic_transformer_block.py +334 -0

- TripoSR/tsr/models/transformer/transformer_1d.py +219 -0

- TripoSR/tsr/system.py +203 -0

.gitattributes

CHANGED

|

@@ -34,3 +34,9 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

wheel/torchmcubes-0.1.0-cp310-cp310-linux_x86_64.whl filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

wheel/torchmcubes-0.1.0-cp310-cp310-linux_x86_64.whl filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

TripoSR/examples/captured_p.png filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

TripoSR/examples/captured.jpeg filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

TripoSR/examples/iso_house.png filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

TripoSR/figures/comparison800.gif filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

TripoSR/figures/teaser800.gif filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

TripoSR/figures/visual_comparisons.jpg filter=lfs diff=lfs merge=lfs -text

|

TripoSR

DELETED

|

@@ -1 +0,0 @@

|

|

| 1 |

-

Subproject commit 8e51fec8095c9eae20e6ea7c9aef6368c5631a21

|

|

|

|

|

|

TripoSR/.gitignore

ADDED

|

@@ -0,0 +1,164 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Byte-compiled / optimized / DLL files

|

| 2 |

+

__pycache__/

|

| 3 |

+

*.py[cod]

|

| 4 |

+

*$py.class

|

| 5 |

+

|

| 6 |

+

# C extensions

|

| 7 |

+

*.so

|

| 8 |

+

|

| 9 |

+

# Distribution / packaging

|

| 10 |

+

.Python

|

| 11 |

+

build/

|

| 12 |

+

develop-eggs/

|

| 13 |

+

dist/

|

| 14 |

+

downloads/

|

| 15 |

+

eggs/

|

| 16 |

+

.eggs/

|

| 17 |

+

lib/

|

| 18 |

+

lib64/

|

| 19 |

+

parts/

|

| 20 |

+

sdist/

|

| 21 |

+

var/

|

| 22 |

+

wheels/

|

| 23 |

+

share/python-wheels/

|

| 24 |

+

*.egg-info/

|

| 25 |

+

.installed.cfg

|

| 26 |

+

*.egg

|

| 27 |

+

MANIFEST

|

| 28 |

+

|

| 29 |

+

# PyInstaller

|

| 30 |

+

# Usually these files are written by a python script from a template

|

| 31 |

+

# before PyInstaller builds the exe, so as to inject date/other infos into it.

|

| 32 |

+

*.manifest

|

| 33 |

+

*.spec

|

| 34 |

+

|

| 35 |

+

# Installer logs

|

| 36 |

+

pip-log.txt

|

| 37 |

+

pip-delete-this-directory.txt

|

| 38 |

+

|

| 39 |

+

# Unit test / coverage reports

|

| 40 |

+

htmlcov/

|

| 41 |

+

.tox/

|

| 42 |

+

.nox/

|

| 43 |

+

.coverage

|

| 44 |

+

.coverage.*

|

| 45 |

+

.cache

|

| 46 |

+

nosetests.xml

|

| 47 |

+

coverage.xml

|

| 48 |

+

*.cover

|

| 49 |

+

*.py,cover

|

| 50 |

+

.hypothesis/

|

| 51 |

+

.pytest_cache/

|

| 52 |

+

cover/

|

| 53 |

+

|

| 54 |

+

# Translations

|

| 55 |

+

*.mo

|

| 56 |

+

*.pot

|

| 57 |

+

|

| 58 |

+

# Django stuff:

|

| 59 |

+

*.log

|

| 60 |

+

local_settings.py

|

| 61 |

+

db.sqlite3

|

| 62 |

+

db.sqlite3-journal

|

| 63 |

+

|

| 64 |

+

# Flask stuff:

|

| 65 |

+

instance/

|

| 66 |

+

.webassets-cache

|

| 67 |

+

|

| 68 |

+

# Scrapy stuff:

|

| 69 |

+

.scrapy

|

| 70 |

+

|

| 71 |

+

# Sphinx documentation

|

| 72 |

+

docs/_build/

|

| 73 |

+

|

| 74 |

+

# PyBuilder

|

| 75 |

+

.pybuilder/

|

| 76 |

+

target/

|

| 77 |

+

|

| 78 |

+

# Jupyter Notebook

|

| 79 |

+

.ipynb_checkpoints

|

| 80 |

+

|

| 81 |

+

# IPython

|

| 82 |

+

profile_default/

|

| 83 |

+

ipython_config.py

|

| 84 |

+

|

| 85 |

+

# pyenv

|

| 86 |

+

# For a library or package, you might want to ignore these files since the code is

|

| 87 |

+

# intended to run in multiple environments; otherwise, check them in:

|

| 88 |

+

# .python-version

|

| 89 |

+

|

| 90 |

+

# pipenv

|

| 91 |

+

# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.

|

| 92 |

+

# However, in case of collaboration, if having platform-specific dependencies or dependencies

|

| 93 |

+

# having no cross-platform support, pipenv may install dependencies that don't work, or not

|

| 94 |

+

# install all needed dependencies.

|

| 95 |

+

#Pipfile.lock

|

| 96 |

+

|

| 97 |

+

# poetry

|

| 98 |

+

# Similar to Pipfile.lock, it is generally recommended to include poetry.lock in version control.

|

| 99 |

+

# This is especially recommended for binary packages to ensure reproducibility, and is more

|

| 100 |

+

# commonly ignored for libraries.

|

| 101 |

+

# https://python-poetry.org/docs/basic-usage/#commit-your-poetrylock-file-to-version-control

|

| 102 |

+

#poetry.lock

|

| 103 |

+

|

| 104 |

+

# pdm

|

| 105 |

+

# Similar to Pipfile.lock, it is generally recommended to include pdm.lock in version control.

|

| 106 |

+

#pdm.lock

|

| 107 |

+

# pdm stores project-wide configurations in .pdm.toml, but it is recommended to not include it

|

| 108 |

+

# in version control.

|

| 109 |

+

# https://pdm.fming.dev/#use-with-ide

|

| 110 |

+

.pdm.toml

|

| 111 |

+

|

| 112 |

+

# PEP 582; used by e.g. github.com/David-OConnor/pyflow and github.com/pdm-project/pdm

|

| 113 |

+

__pypackages__/

|

| 114 |

+

|

| 115 |

+

# Celery stuff

|

| 116 |

+

celerybeat-schedule

|

| 117 |

+

celerybeat.pid

|

| 118 |

+

|

| 119 |

+

# SageMath parsed files

|

| 120 |

+

*.sage.py

|

| 121 |

+

|

| 122 |

+

# Environments

|

| 123 |

+

.env

|

| 124 |

+

.venv

|

| 125 |

+

env/

|

| 126 |

+

venv/

|

| 127 |

+

ENV/

|

| 128 |

+

env.bak/

|

| 129 |

+

venv.bak/

|

| 130 |

+

|

| 131 |

+

# Spyder project settings

|

| 132 |

+

.spyderproject

|

| 133 |

+

.spyproject

|

| 134 |

+

|

| 135 |

+

# Rope project settings

|

| 136 |

+

.ropeproject

|

| 137 |

+

|

| 138 |

+

# mkdocs documentation

|

| 139 |

+

/site

|

| 140 |

+

|

| 141 |

+

# mypy

|

| 142 |

+

.mypy_cache/

|

| 143 |

+

.dmypy.json

|

| 144 |

+

dmypy.json

|

| 145 |

+

|

| 146 |

+

# Pyre type checker

|

| 147 |

+

.pyre/

|

| 148 |

+

|

| 149 |

+

# pytype static type analyzer

|

| 150 |

+

.pytype/

|

| 151 |

+

|

| 152 |

+

# Cython debug symbols

|

| 153 |

+

cython_debug/

|

| 154 |

+

|

| 155 |

+

# PyCharm

|

| 156 |

+

# JetBrains specific template is maintained in a separate JetBrains.gitignore that can

|

| 157 |

+

# be found at https://github.com/github/gitignore/blob/main/Global/JetBrains.gitignore

|

| 158 |

+

# and can be added to the global gitignore or merged into this file. For a more nuclear

|

| 159 |

+

# option (not recommended) you can uncomment the following to ignore the entire idea folder.

|

| 160 |

+

#.idea/

|

| 161 |

+

|

| 162 |

+

# default output directory

|

| 163 |

+

output/

|

| 164 |

+

outputs/

|

TripoSR/LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2024 Tripo AI & Stability AI

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

TripoSR/README.md

ADDED

|

@@ -0,0 +1,80 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# TripoSR <a href="https://huggingface.co/stabilityai/TripoSR"><img src="https://img.shields.io/badge/%F0%9F%A4%97%20Model_Card-Huggingface-orange"></a> <a href="https://huggingface.co/spaces/stabilityai/TripoSR"><img src="https://img.shields.io/badge/%F0%9F%A4%97%20Gradio%20Demo-Huggingface-orange"></a> <a href="https://arxiv.org/abs/2403.02151"><img src="https://img.shields.io/badge/Arxiv-2403.02151-B31B1B.svg"></a>

|

| 2 |

+

|

| 3 |

+

<div align="center">

|

| 4 |

+

<img src="figures/teaser800.gif" alt="Teaser Video">

|

| 5 |

+

</div>

|

| 6 |

+

|

| 7 |

+

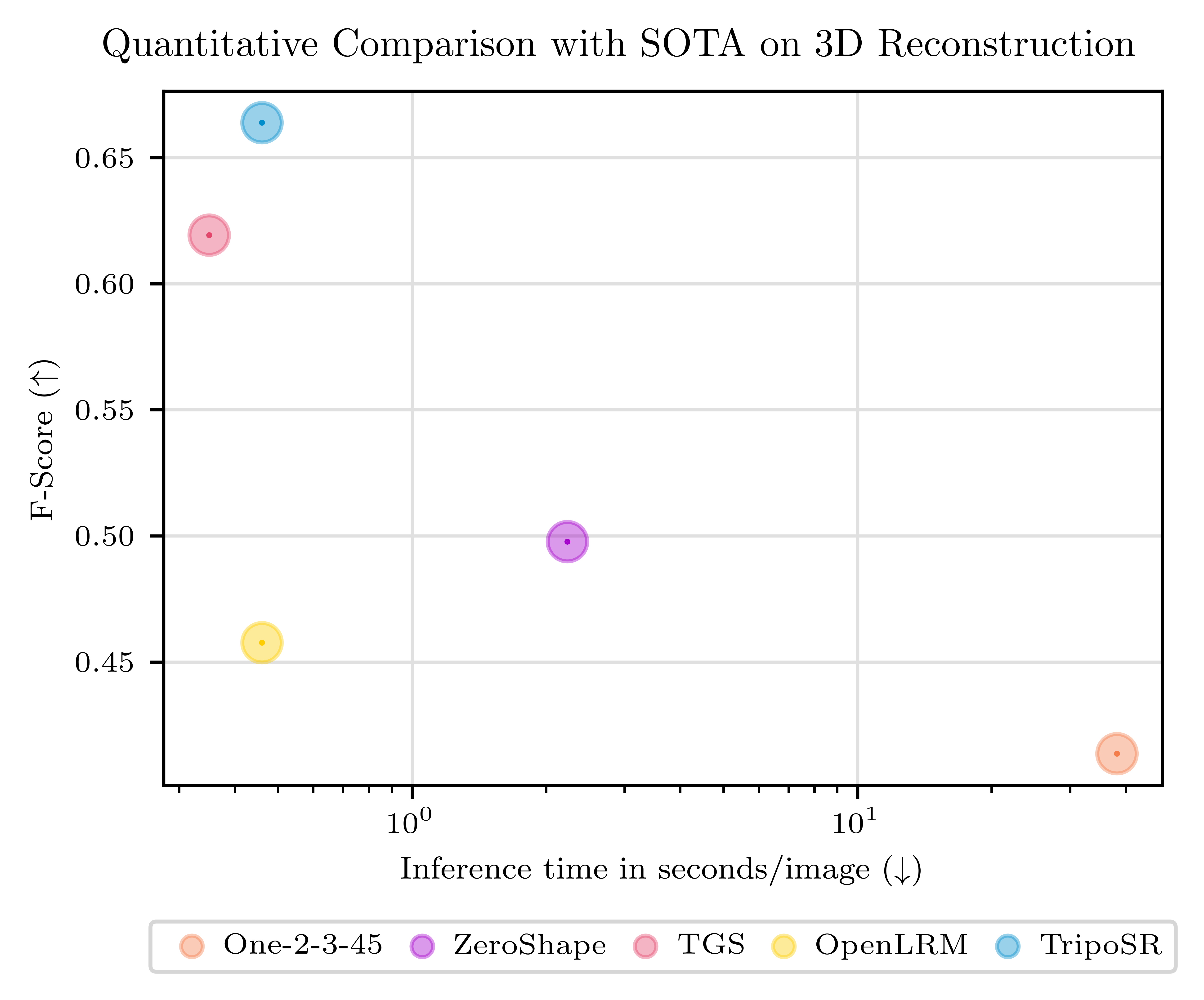

This is the official codebase for **TripoSR**, a state-of-the-art open-source model for **fast** feedforward 3D reconstruction from a single image, collaboratively developed by [Tripo AI](https://www.tripo3d.ai/) and [Stability AI](https://stability.ai/).

|

| 8 |

+

<br><br>

|

| 9 |

+

Leveraging the principles of the [Large Reconstruction Model (LRM)](https://yiconghong.me/LRM/), TripoSR brings to the table key advancements that significantly boost both the speed and quality of 3D reconstruction. Our model is distinguished by its ability to rapidly process inputs, generating high-quality 3D models in less than 0.5 seconds on an NVIDIA A100 GPU. TripoSR has exhibited superior performance in both qualitative and quantitative evaluations, outperforming other open-source alternatives across multiple public datasets. The figures below illustrate visual comparisons and metrics showcasing TripoSR's performance relative to other leading models. Details about the model architecture, training process, and comparisons can be found in this [technical report](https://arxiv.org/abs/2403.02151).

|

| 10 |

+

|

| 11 |

+

<!--

|

| 12 |

+

<div align="center">

|

| 13 |

+

<img src="figures/comparison800.gif" alt="Teaser Video">

|

| 14 |

+

</div>

|

| 15 |

+

-->

|

| 16 |

+

<p align="center">

|

| 17 |

+

<img width="800" src="figures/visual_comparisons.jpg"/>

|

| 18 |

+

</p>

|

| 19 |

+

|

| 20 |

+

<p align="center">

|

| 21 |

+

<img width="450" src="figures/scatter-comparison.png"/>

|

| 22 |

+

</p>

|

| 23 |

+

|

| 24 |

+

|

| 25 |

+

The model is released under the MIT license, which includes the source code, pretrained models, and an interactive online demo. Our goal is to empower researchers, developers, and creatives to push the boundaries of what's possible in 3D generative AI and 3D content creation.

|

| 26 |

+

|

| 27 |

+

## Getting Started

|

| 28 |

+

### Installation

|

| 29 |

+

- Python >= 3.8

|

| 30 |

+

- Install CUDA if available

|

| 31 |

+

- Install PyTorch according to your platform: [https://pytorch.org/get-started/locally/](https://pytorch.org/get-started/locally/) **[Please make sure that the locally-installed CUDA major version matches the PyTorch-shipped CUDA major version. For example if you have CUDA 11.x installed, make sure to install PyTorch compiled with CUDA 11.x.]**

|

| 32 |

+

- Update setuptools by `pip install --upgrade setuptools`

|

| 33 |

+

- Install other dependencies by `pip install -r requirements.txt`

|

| 34 |

+

|

| 35 |

+

### Manual Inference

|

| 36 |

+

```sh

|

| 37 |

+

python run.py examples/chair.png --output-dir output/

|

| 38 |

+

```

|

| 39 |

+

This will save the reconstructed 3D model to `output/`. You can also specify more than one image path separated by spaces. The default options takes about **6GB VRAM** for a single image input.

|

| 40 |

+

|

| 41 |

+

For detailed usage of this script, use `python run.py --help`.

|

| 42 |

+

|

| 43 |

+

### Local Gradio App

|

| 44 |

+

Install Gradio:

|

| 45 |

+

```sh

|

| 46 |

+

pip install gradio

|

| 47 |

+

```

|

| 48 |

+

Start the Gradio App:

|

| 49 |

+

```sh

|

| 50 |

+

python gradio_app.py

|

| 51 |

+

```

|

| 52 |

+

|

| 53 |

+

## Troubleshooting

|

| 54 |

+

> AttributeError: module 'torchmcubes_module' has no attribute 'mcubes_cuda'

|

| 55 |

+

|

| 56 |

+

or

|

| 57 |

+

|

| 58 |

+

> torchmcubes was not compiled with CUDA support, use CPU version instead.

|

| 59 |

+

|

| 60 |

+

This is because `torchmcubes` is compiled without CUDA support. Please make sure that

|

| 61 |

+

|

| 62 |

+

- The locally-installed CUDA major version matches the PyTorch-shipped CUDA major version. For example if you have CUDA 11.x installed, make sure to install PyTorch compiled with CUDA 11.x.

|

| 63 |

+

- `setuptools>=49.6.0`. If not, upgrade by `pip install --upgrade setuptools`.

|

| 64 |

+

|

| 65 |

+

Then re-install `torchmcubes` by:

|

| 66 |

+

|

| 67 |

+

```sh

|

| 68 |

+

pip uninstall torchmcubes

|

| 69 |

+

pip install git+https://github.com/tatsy/torchmcubes.git

|

| 70 |

+

```

|

| 71 |

+

|

| 72 |

+

## Citation

|

| 73 |

+

```BibTeX

|

| 74 |

+

@article{TripoSR2024,

|

| 75 |

+

title={TripoSR: Fast 3D Object Reconstruction from a Single Image},

|

| 76 |

+

author={Tochilkin, Dmitry and Pankratz, David and Liu, Zexiang and Huang, Zixuan and and Letts, Adam and Li, Yangguang and Liang, Ding and Laforte, Christian and Jampani, Varun and Cao, Yan-Pei},

|

| 77 |

+

journal={arXiv preprint arXiv:2403.02151},

|

| 78 |

+

year={2024}

|

| 79 |

+

}

|

| 80 |

+

```

|

TripoSR/__pycache__/obj_gen.cpython-310.pyc

ADDED

|

Binary file (2.39 kB). View file

|

|

|

TripoSR/examples/captured.jpeg

ADDED

|

Git LFS Details

|

TripoSR/examples/captured_p.png

ADDED

|

Git LFS Details

|

TripoSR/examples/chair.png

ADDED

|

TripoSR/examples/flamingo.png

ADDED

|

TripoSR/examples/hamburger.png

ADDED

|

TripoSR/examples/horse.png

ADDED

|

TripoSR/examples/iso_house.png

ADDED

|

Git LFS Details

|

TripoSR/examples/marble.png

ADDED

|

TripoSR/examples/police_woman.png

ADDED

|

TripoSR/examples/poly_fox.png

ADDED

|

TripoSR/examples/robot.png

ADDED

|

TripoSR/examples/stripes.png

ADDED

|

TripoSR/examples/teapot.png

ADDED

|

TripoSR/examples/tiger_girl.png

ADDED

|

TripoSR/examples/unicorn.png

ADDED

|

TripoSR/figures/comparison800.gif

ADDED

|

Git LFS Details

|

TripoSR/figures/scatter-comparison.png

ADDED

|

TripoSR/figures/teaser800.gif

ADDED

|

Git LFS Details

|

TripoSR/figures/visual_comparisons.jpg

ADDED

|

Git LFS Details

|

TripoSR/gradio_app.py

ADDED

|

@@ -0,0 +1,187 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import logging

|

| 2 |

+

import os

|

| 3 |

+

import tempfile

|

| 4 |

+

import time

|

| 5 |

+

|

| 6 |

+

import gradio as gr

|

| 7 |

+

import numpy as np

|

| 8 |

+

import rembg

|

| 9 |

+

import torch

|

| 10 |

+

from PIL import Image

|

| 11 |

+

from functools import partial

|

| 12 |

+

|

| 13 |

+

from tsr.system import TSR

|

| 14 |

+

from tsr.utils import remove_background, resize_foreground, to_gradio_3d_orientation

|

| 15 |

+

|

| 16 |

+

import argparse

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

if torch.cuda.is_available():

|

| 20 |

+

device = "cuda:0"

|

| 21 |

+

else:

|

| 22 |

+

device = "cpu"

|

| 23 |

+

|

| 24 |

+

model = TSR.from_pretrained(

|

| 25 |

+

"stabilityai/TripoSR",

|

| 26 |

+

config_name="config.yaml",

|

| 27 |

+

weight_name="model.ckpt",

|

| 28 |

+

)

|

| 29 |

+

|

| 30 |

+

# adjust the chunk size to balance between speed and memory usage

|

| 31 |

+

model.renderer.set_chunk_size(8192)

|

| 32 |

+

model.to(device)

|

| 33 |

+

|

| 34 |

+

rembg_session = rembg.new_session()

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

def check_input_image(input_image):

|

| 38 |

+

if input_image is None:

|

| 39 |

+

raise gr.Error("No image uploaded!")

|

| 40 |

+

|

| 41 |

+

|

| 42 |

+

def preprocess(input_image, do_remove_background, foreground_ratio):

|

| 43 |

+

def fill_background(image):

|

| 44 |

+

image = np.array(image).astype(np.float32) / 255.0

|

| 45 |

+

image = image[:, :, :3] * image[:, :, 3:4] + (1 - image[:, :, 3:4]) * 0.5

|

| 46 |

+

image = Image.fromarray((image * 255.0).astype(np.uint8))

|

| 47 |

+

return image

|

| 48 |

+

|

| 49 |

+

if do_remove_background:

|

| 50 |

+

image = input_image.convert("RGB")

|

| 51 |

+

image = remove_background(image, rembg_session)

|

| 52 |

+

image = resize_foreground(image, foreground_ratio)

|

| 53 |

+

image = fill_background(image)

|

| 54 |

+

else:

|

| 55 |

+

image = input_image

|

| 56 |

+

if image.mode == "RGBA":

|

| 57 |

+

image = fill_background(image)

|

| 58 |

+

return image

|

| 59 |

+

|

| 60 |

+

|

| 61 |

+

def generate(image, mc_resolution, formats=["obj", "glb"]):

|

| 62 |

+

scene_codes = model(image, device=device)

|

| 63 |

+

mesh = model.extract_mesh(scene_codes, resolution=mc_resolution)[0]

|

| 64 |

+

mesh = to_gradio_3d_orientation(mesh)

|

| 65 |

+

rv = []

|

| 66 |

+

for format in formats:

|

| 67 |

+

mesh_path = tempfile.NamedTemporaryFile(suffix=f".{format}", delete=False)

|

| 68 |

+

mesh.export(mesh_path.name)

|

| 69 |

+

rv.append(mesh_path.name)

|

| 70 |

+

return rv

|

| 71 |

+

|

| 72 |

+

|

| 73 |

+

def run_example(image_pil):

|

| 74 |

+

preprocessed = preprocess(image_pil, False, 0.9)

|

| 75 |

+

mesh_name_obj, mesh_name_glb = generate(preprocessed, 256, ["obj", "glb"])

|

| 76 |

+

return preprocessed, mesh_name_obj, mesh_name_glb

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

with gr.Blocks(title="TripoSR") as interface:

|

| 80 |

+

gr.Markdown(

|

| 81 |

+

"""

|

| 82 |

+

# TripoSR Demo

|

| 83 |

+

[TripoSR](https://github.com/VAST-AI-Research/TripoSR) is a state-of-the-art open-source model for **fast** feedforward 3D reconstruction from a single image, collaboratively developed by [Tripo AI](https://www.tripo3d.ai/) and [Stability AI](https://stability.ai/).

|

| 84 |

+

|

| 85 |

+

**Tips:**

|

| 86 |

+

1. If you find the result is unsatisfied, please try to change the foreground ratio. It might improve the results.

|

| 87 |

+

2. You can disable "Remove Background" for the provided examples since they have been already preprocessed.

|

| 88 |

+

3. Otherwise, please disable "Remove Background" option only if your input image is RGBA with transparent background, image contents are centered and occupy more than 70% of image width or height.

|

| 89 |

+

"""

|

| 90 |

+

)

|

| 91 |

+

with gr.Row(variant="panel"):

|

| 92 |

+

with gr.Column():

|

| 93 |

+

with gr.Row():

|

| 94 |

+

input_image = gr.Image(

|

| 95 |

+

label="Input Image",

|

| 96 |

+

image_mode="RGBA",

|

| 97 |

+

sources="upload",

|

| 98 |

+

type="pil",

|

| 99 |

+

elem_id="content_image",

|

| 100 |

+

)

|

| 101 |

+

processed_image = gr.Image(label="Processed Image", interactive=False)

|

| 102 |

+

with gr.Row():

|

| 103 |

+

with gr.Group():

|

| 104 |

+

do_remove_background = gr.Checkbox(

|

| 105 |

+

label="Remove Background", value=True

|

| 106 |

+

)

|

| 107 |

+

foreground_ratio = gr.Slider(

|

| 108 |

+

label="Foreground Ratio",

|

| 109 |

+

minimum=0.5,

|

| 110 |

+

maximum=1.0,

|

| 111 |

+

value=0.85,

|

| 112 |

+

step=0.05,

|

| 113 |

+

)

|

| 114 |

+

mc_resolution = gr.Slider(

|

| 115 |

+

label="Marching Cubes Resolution",

|

| 116 |

+

minimum=32,

|

| 117 |

+

maximum=320,

|

| 118 |

+

value=256,

|

| 119 |

+

step=32

|

| 120 |

+

)

|

| 121 |

+

with gr.Row():

|

| 122 |

+

submit = gr.Button("Generate", elem_id="generate", variant="primary")

|

| 123 |

+

with gr.Column():

|

| 124 |

+

with gr.Tab("OBJ"):

|

| 125 |

+

output_model_obj = gr.Model3D(

|

| 126 |

+

label="Output Model (OBJ Format)",

|

| 127 |

+

interactive=False,

|

| 128 |

+

)

|

| 129 |

+

gr.Markdown("Note: The model shown here is flipped. Download to get correct results.")

|

| 130 |

+

with gr.Tab("GLB"):

|

| 131 |

+

output_model_glb = gr.Model3D(

|

| 132 |

+

label="Output Model (GLB Format)",

|

| 133 |

+

interactive=False,

|

| 134 |

+

)

|

| 135 |

+

gr.Markdown("Note: The model shown here has a darker appearance. Download to get correct results.")

|

| 136 |

+

with gr.Row(variant="panel"):

|

| 137 |

+

gr.Examples(

|

| 138 |

+

examples=[

|

| 139 |

+

"examples/hamburger.png",

|

| 140 |

+

"examples/poly_fox.png",

|

| 141 |

+

"examples/robot.png",

|

| 142 |

+

"examples/teapot.png",

|

| 143 |

+

"examples/tiger_girl.png",

|

| 144 |

+

"examples/horse.png",

|

| 145 |

+

"examples/flamingo.png",

|

| 146 |

+

"examples/unicorn.png",

|

| 147 |

+

"examples/chair.png",

|

| 148 |

+

"examples/iso_house.png",

|

| 149 |

+

"examples/marble.png",

|

| 150 |

+

"examples/police_woman.png",

|

| 151 |

+

"examples/captured_p.png",

|

| 152 |

+

],

|

| 153 |

+

inputs=[input_image],

|

| 154 |

+

outputs=[processed_image, output_model_obj, output_model_glb],

|

| 155 |

+

cache_examples=False,

|

| 156 |

+

fn=partial(run_example),

|

| 157 |

+

label="Examples",

|

| 158 |

+

examples_per_page=20,

|

| 159 |

+

)

|

| 160 |

+

submit.click(fn=check_input_image, inputs=[input_image]).success(

|

| 161 |

+

fn=preprocess,

|

| 162 |

+

inputs=[input_image, do_remove_background, foreground_ratio],

|

| 163 |

+

outputs=[processed_image],

|

| 164 |

+

).success(

|

| 165 |

+

fn=generate,

|

| 166 |

+

inputs=[processed_image, mc_resolution],

|

| 167 |

+

outputs=[output_model_obj, output_model_glb],

|

| 168 |

+

)

|

| 169 |

+

|

| 170 |

+

|

| 171 |

+

|

| 172 |

+

if __name__ == '__main__':

|

| 173 |

+

parser = argparse.ArgumentParser()

|

| 174 |

+

parser.add_argument('--username', type=str, default=None, help='Username for authentication')

|

| 175 |

+

parser.add_argument('--password', type=str, default=None, help='Password for authentication')

|

| 176 |

+

parser.add_argument('--port', type=int, default=7860, help='Port to run the server listener on')

|

| 177 |

+

parser.add_argument("--listen", action='store_true', help="launch gradio with 0.0.0.0 as server name, allowing to respond to network requests")

|

| 178 |

+

parser.add_argument("--share", action='store_true', help="use share=True for gradio and make the UI accessible through their site")

|

| 179 |

+

parser.add_argument("--queuesize", type=int, default=1, help="launch gradio queue max_size")

|

| 180 |

+

args = parser.parse_args()

|

| 181 |

+

interface.queue(max_size=args.queuesize)

|

| 182 |

+

interface.launch(

|

| 183 |

+

auth=(args.username, args.password) if (args.username and args.password) else None,

|

| 184 |

+

share=args.share,

|

| 185 |

+

server_name="0.0.0.0" if args.listen else None,

|

| 186 |

+

server_port=args.port

|

| 187 |

+

)

|

TripoSR/obj_gen.py

ADDED

|

@@ -0,0 +1,92 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import logging

|

| 2 |

+

import os

|

| 3 |

+

import tempfile

|

| 4 |

+

import time

|

| 5 |

+

|

| 6 |

+

import numpy as np

|

| 7 |

+

import rembg

|

| 8 |

+

import torch

|

| 9 |

+

from PIL import Image

|

| 10 |

+

from functools import partial

|

| 11 |

+

|

| 12 |

+

from tsr.system import TSR

|

| 13 |

+

from tsr.utils import remove_background, resize_foreground, to_gradio_3d_orientation

|

| 14 |

+

|

| 15 |

+

import argparse

|

| 16 |

+

from dotenv import load_dotenv

|

| 17 |

+

|

| 18 |

+

load_dotenv()

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

device = "cpu"

|

| 23 |

+

|

| 24 |

+

model = TSR.from_pretrained(

|

| 25 |

+

"stabilityai/TripoSR",

|

| 26 |

+

config_name="config.yaml",

|

| 27 |

+

weight_name="model.ckpt",

|

| 28 |

+

)

|

| 29 |

+

|

| 30 |

+

# adjust the chunk size to balance between speed and memory usage

|

| 31 |

+

model.renderer.set_chunk_size(8192)

|

| 32 |

+

model.to(device)

|

| 33 |

+

|

| 34 |

+

rembg_session = rembg.new_session()

|

| 35 |

+

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

def preprocess(input_image, do_remove_background, foreground_ratio):

|

| 40 |

+

def fill_background(image):

|

| 41 |

+

image = np.array(image).astype(np.float32) / 255.0

|

| 42 |

+

image = image[:, :, :3] * image[:, :, 3:4] + (1 - image[:, :, 3:4]) * 0.5

|

| 43 |

+

image = Image.fromarray((image * 255.0).astype(np.uint8))

|

| 44 |

+

return image

|

| 45 |

+

|

| 46 |

+

if do_remove_background:

|

| 47 |

+

image = input_image.convert("RGB")

|

| 48 |

+

image = remove_background(image, rembg_session)

|

| 49 |

+

image = resize_foreground(image, foreground_ratio)

|

| 50 |

+

image = fill_background(image)

|

| 51 |

+

else:

|

| 52 |

+

image = input_image

|

| 53 |

+

if image.mode == "RGBA":

|

| 54 |

+

image = fill_background(image)

|

| 55 |

+

return image

|

| 56 |

+

|

| 57 |

+

|

| 58 |

+

def generate(image, mc_resolution, formats=["obj", "glb"], path="output.obj"):

|

| 59 |

+

scene_codes = model(image, device=device)

|

| 60 |

+

mesh = model.extract_mesh(scene_codes, resolution=mc_resolution)[0]

|

| 61 |

+

mesh = to_gradio_3d_orientation(mesh)

|

| 62 |

+

rv = []

|

| 63 |

+

for format in formats:

|

| 64 |

+

mesh_path = path.replace(".obj", f".{format}")

|

| 65 |

+

mesh.export(mesh_path)

|

| 66 |

+

rv.append(mesh_path)

|

| 67 |

+

return rv

|

| 68 |

+

|

| 69 |

+

|

| 70 |

+

def run_example(image_pil):

|

| 71 |

+

preprocessed = preprocess(image_pil, False, 0.9)

|

| 72 |

+

mesh_name_obj, mesh_name_glb = generate(preprocessed, 256, ["obj", "glb"])

|

| 73 |

+

return preprocessed, mesh_name_obj, mesh_name_glb

|

| 74 |

+

|

| 75 |

+

def generate_obj_from_image(image_pil, path="output.obj"):

|

| 76 |

+

# Preprocess the image without removing the background and with a foreground ratio of 0.9

|

| 77 |

+

preprocessed = preprocess(image_pil, True, 0.9)

|

| 78 |

+

|

| 79 |

+

# Generate the mesh and get the paths to the .obj and .glb files

|

| 80 |

+

mesh_paths = generate(preprocessed, 256, ["obj"], path)

|

| 81 |

+

|

| 82 |

+

# Return the path to the .obj file

|

| 83 |

+

return mesh_paths[0]

|

| 84 |

+

|

| 85 |

+

if __name__ == "__main__":

|

| 86 |

+

# run a test

|

| 87 |

+

image_path = "output.png"

|

| 88 |

+

image = Image.open(image_path)

|

| 89 |

+

generate_obj_from_image(image, "output.obj")

|

| 90 |

+

# move the .obj file to the output directory

|

| 91 |

+

|

| 92 |

+

|

TripoSR/output/0/input.png

ADDED

|

TripoSR/output/0/mesh.obj

ADDED

|

The diff for this file is too large to render.

See raw diff

|

|

|

TripoSR/requirements.txt

ADDED

|

@@ -0,0 +1,9 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

omegaconf==2.3.0

|

| 2 |

+

Pillow==10.1.0

|

| 3 |

+

einops==0.7.0

|

| 4 |

+

git+https://github.com/tatsy/torchmcubes.git

|

| 5 |

+

transformers==4.35.0

|

| 6 |

+

trimesh==4.0.5

|

| 7 |

+

rembg

|

| 8 |

+

huggingface-hub

|

| 9 |

+

imageio[ffmpeg]

|

TripoSR/run.py

ADDED

|

@@ -0,0 +1,162 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import argparse

|

| 2 |

+

import logging

|

| 3 |

+

import os

|

| 4 |

+

import time

|

| 5 |

+

|

| 6 |

+

import numpy as np

|

| 7 |

+

import rembg

|

| 8 |

+

import torch

|

| 9 |

+

from PIL import Image

|

| 10 |

+

|

| 11 |

+

from tsr.system import TSR

|

| 12 |

+

from tsr.utils import remove_background, resize_foreground, save_video

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

class Timer:

|

| 16 |

+

def __init__(self):

|

| 17 |

+

self.items = {}

|

| 18 |

+

self.time_scale = 1000.0 # ms

|

| 19 |

+

self.time_unit = "ms"

|

| 20 |

+

|

| 21 |

+

def start(self, name: str) -> None:

|

| 22 |

+

if torch.cuda.is_available():

|

| 23 |

+

torch.cuda.synchronize()

|

| 24 |

+

self.items[name] = time.time()

|

| 25 |

+

logging.info(f"{name} ...")

|

| 26 |

+

|

| 27 |

+

def end(self, name: str) -> float:

|

| 28 |

+

if name not in self.items:

|

| 29 |

+

return

|

| 30 |

+

if torch.cuda.is_available():

|

| 31 |

+

torch.cuda.synchronize()

|

| 32 |

+

start_time = self.items.pop(name)

|

| 33 |

+

delta = time.time() - start_time

|

| 34 |

+

t = delta * self.time_scale

|

| 35 |

+

logging.info(f"{name} finished in {t:.2f}{self.time_unit}.")

|

| 36 |

+

|

| 37 |

+

|

| 38 |

+

timer = Timer()

|

| 39 |

+

|

| 40 |

+

|

| 41 |

+

logging.basicConfig(

|

| 42 |

+

format="%(asctime)s - %(levelname)s - %(message)s", level=logging.INFO

|

| 43 |

+

)

|

| 44 |

+

parser = argparse.ArgumentParser()

|

| 45 |

+

parser.add_argument("image", type=str, nargs="+", help="Path to input image(s).")

|

| 46 |

+

parser.add_argument(

|

| 47 |

+

"--device",

|

| 48 |

+

default="cuda:0",

|

| 49 |

+

type=str,

|

| 50 |

+

help="Device to use. If no CUDA-compatible device is found, will fallback to 'cpu'. Default: 'cuda:0'",

|

| 51 |

+

)

|

| 52 |

+

parser.add_argument(

|

| 53 |

+

"--pretrained-model-name-or-path",

|

| 54 |

+

default="stabilityai/TripoSR",

|

| 55 |

+

type=str,

|

| 56 |

+

help="Path to the pretrained model. Could be either a huggingface model id is or a local path. Default: 'stabilityai/TripoSR'",

|

| 57 |

+

)

|

| 58 |

+

parser.add_argument(

|

| 59 |

+

"--chunk-size",

|

| 60 |

+

default=8192,

|

| 61 |

+

type=int,

|

| 62 |

+

help="Evaluation chunk size for surface extraction and rendering. Smaller chunk size reduces VRAM usage but increases computation time. 0 for no chunking. Default: 8192",

|

| 63 |

+

)

|

| 64 |

+

parser.add_argument(

|

| 65 |

+

"--mc-resolution",

|

| 66 |

+

default=256,

|

| 67 |

+

type=int,

|

| 68 |

+

help="Marching cubes grid resolution. Default: 256"

|

| 69 |

+

)

|

| 70 |

+

parser.add_argument(

|

| 71 |

+

"--no-remove-bg",

|

| 72 |

+

action="store_true",

|

| 73 |

+

help="If specified, the background will NOT be automatically removed from the input image, and the input image should be an RGB image with gray background and properly-sized foreground. Default: false",

|

| 74 |

+

)

|

| 75 |

+

parser.add_argument(

|

| 76 |

+

"--foreground-ratio",

|

| 77 |

+

default=0.85,

|

| 78 |

+

type=float,

|

| 79 |

+

help="Ratio of the foreground size to the image size. Only used when --no-remove-bg is not specified. Default: 0.85",

|

| 80 |

+

)

|

| 81 |

+

parser.add_argument(

|

| 82 |

+

"--output-dir",

|

| 83 |

+

default="output/",

|

| 84 |

+

type=str,

|

| 85 |

+

help="Output directory to save the results. Default: 'output/'",

|

| 86 |

+

)

|

| 87 |

+

parser.add_argument(

|

| 88 |

+

"--model-save-format",

|

| 89 |

+

default="obj",

|

| 90 |

+

type=str,

|

| 91 |

+

choices=["obj", "glb"],

|

| 92 |

+

help="Format to save the extracted mesh. Default: 'obj'",

|

| 93 |

+

)

|

| 94 |

+

parser.add_argument(

|

| 95 |

+

"--render",

|

| 96 |

+

action="store_true",

|

| 97 |

+

help="If specified, save a NeRF-rendered video. Default: false",

|

| 98 |

+

)

|

| 99 |

+

args = parser.parse_args()

|

| 100 |

+

|

| 101 |

+

output_dir = args.output_dir

|

| 102 |

+

os.makedirs(output_dir, exist_ok=True)

|

| 103 |

+

|

| 104 |

+

device = args.device

|

| 105 |

+

if not torch.cuda.is_available():

|

| 106 |

+

device = "cpu"

|

| 107 |

+

|

| 108 |

+

timer.start("Initializing model")

|

| 109 |

+

model = TSR.from_pretrained(

|

| 110 |

+

args.pretrained_model_name_or_path,

|

| 111 |

+

config_name="config.yaml",

|

| 112 |

+

weight_name="model.ckpt",

|

| 113 |

+

)

|

| 114 |

+

model.renderer.set_chunk_size(args.chunk_size)

|

| 115 |

+

model.to(device)

|

| 116 |

+

timer.end("Initializing model")

|

| 117 |

+

|

| 118 |

+

timer.start("Processing images")

|

| 119 |

+

images = []

|

| 120 |

+

|

| 121 |

+

if args.no_remove_bg:

|

| 122 |

+

rembg_session = None

|

| 123 |

+

else:

|

| 124 |

+

rembg_session = rembg.new_session()

|

| 125 |

+

|

| 126 |

+

for i, image_path in enumerate(args.image):

|

| 127 |

+

if args.no_remove_bg:

|

| 128 |

+

image = np.array(Image.open(image_path).convert("RGB"))

|

| 129 |

+

else:

|

| 130 |

+

image = remove_background(Image.open(image_path), rembg_session)

|

| 131 |

+

image = resize_foreground(image, args.foreground_ratio)

|

| 132 |

+

image = np.array(image).astype(np.float32) / 255.0

|

| 133 |

+

image = image[:, :, :3] * image[:, :, 3:4] + (1 - image[:, :, 3:4]) * 0.5

|

| 134 |

+

image = Image.fromarray((image * 255.0).astype(np.uint8))

|

| 135 |

+

if not os.path.exists(os.path.join(output_dir, str(i))):

|

| 136 |

+

os.makedirs(os.path.join(output_dir, str(i)))

|

| 137 |

+

image.save(os.path.join(output_dir, str(i), f"input.png"))

|

| 138 |

+

images.append(image)

|

| 139 |

+

timer.end("Processing images")

|

| 140 |

+

|

| 141 |

+

for i, image in enumerate(images):

|

| 142 |

+

logging.info(f"Running image {i + 1}/{len(images)} ...")

|

| 143 |

+

|

| 144 |

+

timer.start("Running model")

|

| 145 |

+

with torch.no_grad():

|

| 146 |

+

scene_codes = model([image], device=device)

|

| 147 |

+

timer.end("Running model")

|

| 148 |

+

|

| 149 |

+

if args.render:

|

| 150 |

+

timer.start("Rendering")

|

| 151 |

+

render_images = model.render(scene_codes, n_views=30, return_type="pil")

|

| 152 |

+

for ri, render_image in enumerate(render_images[0]):

|

| 153 |

+

render_image.save(os.path.join(output_dir, str(i), f"render_{ri:03d}.png"))

|

| 154 |

+

save_video(

|

| 155 |

+

render_images[0], os.path.join(output_dir, str(i), f"render.mp4"), fps=30

|

| 156 |

+

)

|

| 157 |

+

timer.end("Rendering")

|

| 158 |

+

|

| 159 |

+

timer.start("Exporting mesh")

|

| 160 |

+

meshes = model.extract_mesh(scene_codes, resolution=args.mc_resolution)

|

| 161 |

+

meshes[0].export(os.path.join(output_dir, str(i), f"mesh.{args.model_save_format}"))

|

| 162 |

+

timer.end("Exporting mesh")

|

TripoSR/tsr/__pycache__/system.cpython-310.pyc

ADDED

|

Binary file (5.15 kB). View file

|

|

|

TripoSR/tsr/__pycache__/utils.cpython-310.pyc

ADDED

|

Binary file (13.5 kB). View file

|

|

|

TripoSR/tsr/models/__pycache__/isosurface.cpython-310.pyc

ADDED

|

Binary file (2.23 kB). View file

|

|

|

TripoSR/tsr/models/__pycache__/nerf_renderer.cpython-310.pyc

ADDED

|

Binary file (5.28 kB). View file

|

|

|

TripoSR/tsr/models/__pycache__/network_utils.cpython-310.pyc

ADDED

|

Binary file (3.41 kB). View file

|

|

|

TripoSR/tsr/models/isosurface.py

ADDED

|

@@ -0,0 +1,52 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from typing import Callable, Optional, Tuple

|

| 2 |

+

|

| 3 |

+

import numpy as np

|

| 4 |

+

import torch

|

| 5 |

+

import torch.nn as nn

|

| 6 |

+

from torchmcubes import marching_cubes

|

| 7 |

+

|

| 8 |

+

|

| 9 |

+

class IsosurfaceHelper(nn.Module):

|

| 10 |

+

points_range: Tuple[float, float] = (0, 1)

|

| 11 |

+

|

| 12 |

+

@property

|

| 13 |

+

def grid_vertices(self) -> torch.FloatTensor:

|

| 14 |

+

raise NotImplementedError

|

| 15 |

+

|

| 16 |

+

|

| 17 |

+

class MarchingCubeHelper(IsosurfaceHelper):

|

| 18 |

+

def __init__(self, resolution: int) -> None:

|

| 19 |

+

super().__init__()

|

| 20 |

+

self.resolution = resolution

|

| 21 |

+

self.mc_func: Callable = marching_cubes

|

| 22 |

+

self._grid_vertices: Optional[torch.FloatTensor] = None

|

| 23 |

+

|

| 24 |

+

@property

|

| 25 |

+

def grid_vertices(self) -> torch.FloatTensor:

|

| 26 |

+

if self._grid_vertices is None:

|

| 27 |

+

# keep the vertices on CPU so that we can support very large resolution

|

| 28 |

+

x, y, z = (

|

| 29 |

+

torch.linspace(*self.points_range, self.resolution),

|

| 30 |

+

torch.linspace(*self.points_range, self.resolution),

|

| 31 |

+

torch.linspace(*self.points_range, self.resolution),

|

| 32 |

+

)

|

| 33 |

+

x, y, z = torch.meshgrid(x, y, z, indexing="ij")

|

| 34 |

+

verts = torch.cat(

|

| 35 |

+

[x.reshape(-1, 1), y.reshape(-1, 1), z.reshape(-1, 1)], dim=-1

|

| 36 |

+

).reshape(-1, 3)

|

| 37 |

+

self._grid_vertices = verts

|

| 38 |

+

return self._grid_vertices

|

| 39 |

+

|

| 40 |

+

def forward(

|

| 41 |

+

self,

|

| 42 |

+

level: torch.FloatTensor,

|

| 43 |

+

) -> Tuple[torch.FloatTensor, torch.LongTensor]:

|

| 44 |

+

level = -level.view(self.resolution, self.resolution, self.resolution)

|

| 45 |

+

try:

|

| 46 |

+

v_pos, t_pos_idx = self.mc_func(level.detach(), 0.0)

|

| 47 |

+

except AttributeError:

|

| 48 |

+

print("torchmcubes was not compiled with CUDA support, use CPU version instead.")

|

| 49 |

+

v_pos, t_pos_idx = self.mc_func(level.detach().cpu(), 0.0)

|

| 50 |

+

v_pos = v_pos[..., [2, 1, 0]]

|

| 51 |

+

v_pos = v_pos / (self.resolution - 1.0)

|

| 52 |

+

return v_pos.to(level.device), t_pos_idx.to(level.device)

|

TripoSR/tsr/models/nerf_renderer.py

ADDED

|

@@ -0,0 +1,180 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|