initial commit

- app.py +145 -0

- images.png +0 -0

- packages.txt +1 -0

- requirements.txt +16 -0

- scholarly_text.jpg +0 -0

app.py

ADDED


@@ -0,0 +1,145 @@

+"""
+## App: NLP App with Streamlit
+Credits: Streamlit Team,Marc Skov Madsen(For Awesome-streamlit gallery)
+Description
+This is a Natural Language Processing(NLP) Based App useful for basic NLP concepts such as follows;
+
++ Tokenization & Lemmatization using Spacy
+
++ Named Entity Recognition(NER) using SpaCy
+
++ Sentiment Analysis using TextBlob
+
++ Document/Text Summarization using Gensim/T5
+
+This is built with Streamlit Framework, an awesome framework for building ML and NLP tools.
+Purpose
+To perform basic and useful NLP task with Streamlit, Spacy, Textblob and Gensim
+"""
+# Core Pkgs
+import streamlit as st
+import os
+import torch
+from transformers import AutoTokenizer, AutoModelWithLMHead
+
+# NLP Pkgs
+from textblob import TextBlob
+import spacy
+from gensim.summarization import summarize
+import requests
+import cv2
+import numpy as np
+import pytesseract
+pytesseract.pytesseract.tesseract_cmd = r"C:\Program Files\Tesseract-OCR\tesseract.exe"
+from PIL import Image
+# Function to Analyse Tokens and Lemma
+tokenizer = AutoTokenizer.from_pretrained('t5-base')
+model = AutoModelWithLMHead.from_pretrained('t5-base', return_dict=True)
+@st.cache
+def text_analyzer(my_text):
+    nlp = spacy.load('en_core_web_sm')
+    docx = nlp(my_text)
+    # tokens = [ token.text for token in docx]
+    allData = [('"Token":{},\n"Lemma":{}'.format(token.text,token.lemma_))for token in docx ]
+    return allData
+
+# Function For Extracting Entities
+@st.cache
+def entity_analyzer(my_text):
+    nlp = spacy.load('en_core_web_sm')
+    docx = nlp(my_text)
+    tokens = [ token.text for token in docx]
+    entities = [(entity.text,entity.label_)for entity in docx.ents]
+    allData = ['"Token":{},\n"Entities":{}'.format(tokens,entities)]
+    return allData
+
+
+def main():
+    """ NLP Based App with Streamlit """
+
+    # Title
+    st.title("Streamlit NLP APP")
+    st.markdown("""
+    #### Description
+    + This is a Natural Language Processing(NLP) Based App useful for basic NLP task
+    NER,Sentiment, Spell Corrections and Summarization
+    """)
+
+
+    # Entity Extraction
+    if st.checkbox("Show Named Entities"):
+        st.subheader("Analyze Your Text")
+
+        message = st.text_area("Enter your Text","Typing Here ..")
+        if st.button("Extract"):
+            entity_result = entity_analyzer(message)
+            st.json(entity_result)
+
+    # Sentiment Analysis
+    elif st.checkbox("Show Sentiment Analysis"):
+        st.subheader("Analyse Your Text")
+        message = st.text_area("Enter Text plz","Type Here .")
+        if st.button("Analyze"):
+            blob = TextBlob(message)
+            result_sentiment = blob.sentiment
+            st.success(result_sentiment)
+    #Text Corrections
+    elif st.checkbox("Spell Corrections"):
+        st.subheader("Correct Your Text")
+        message = st.text_area("Enter the Text","Type please ..")
+        if st.button("Spell Corrections"):
+            st.text("Using TextBlob ..")
+            st.success(TextBlob(message).correct())
+    def change_photo_state():
+        st.session_state["photo"]="done"
+    st.subheader("Summary section, feed your image!")
+    camera_photo = st.camera_input("Take a photo", on_change=change_photo_state)
+    uploaded_photo = st.file_uploader("Upload Image",type=['jpg','png','jpeg'], on_change=change_photo_state)
+    message = st.text_input("Or, drop your text here!")
+    if "photo" not in st.session_state:
+        st.session_state["photo"]="not done"
+
+    if st.session_state["photo"]=="done" or message:
+        if uploaded_photo:
+            img = Image.open(uploaded_photo)
+            img = img.save("img.png")
+            img = cv2.imread("img.png")
+            text = pytesseract.image_to_string(img)
+            st.success(text)
+        if camera_photo:
+            img = Image.open(camera_photo)
+            img = img.save("img.png")
+            img = cv2.imread("img.png")
+            text = pytesseract.image_to_string(img)
+            st.success(text)
+        if uploaded_photo==None and camera_photo==None:
+            #our_image=load_image("image.jpg")
+            #img = cv2.imread("scholarly_text.jpg")
+            text = message
+        # Summarization
+        if st.checkbox("Show Text Summarization Genism"):
+            st.subheader("Summarize Your Text")
+            #message = st.text_area("Enter the Text","Type please ..")
+            st.text("Using Gensim Summarizer ..")
+            #st.success(mess)
+            summary_result = summarize(text)
+            st.success(summary_result)
+
+        elif st.checkbox("Show Text Summarization T5"):
+            st.subheader("Summarize Your Text")
+            #message = st.text_area("Enter the Text","Type please ..")
+            st.text("Using Google T5 Transformer ..")
+            inputs = tokenizer.encode("summarize: " + text,
+                                      return_tensors='pt',
+                                      max_length=512,
+                                      truncation=True)
+            summary_ids = model.generate(inputs, max_length=150, min_length=80, length_penalty=5., num_beams=2)
+            summary = tokenizer.decode(summary_ids[0])
+            st.success(summary)
+
+    st.sidebar.subheader("About App")
+    st.sidebar.subheader("By")
+    st.sidebar.text("Soumen Sarker")
+
+if __name__ == '__main__':
+    main()
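In the diff above, `entity_analyzer` builds a single formatted string (`'"Token":...,\n"Entities":...'`), wraps it in a list, and hands that list to `st.json`, so the viewer receives text rather than structured data. A possible alternative, sketched below, returns plain dictionaries instead. The `Token` and `Entity` tuples are hypothetical stand-ins for spaCy's token and entity objects (only the `text`, `lemma_`, and `label_` attributes used in `app.py` are modelled), and the helper names `token_records` and `entity_records` are not part of the commit.

```python
import json
from collections import namedtuple

# Hypothetical stand-ins for spaCy objects; they carry only the
# attributes that app.py reads (text, lemma_, label_).
Token = namedtuple("Token", ["text", "lemma_"])
Entity = namedtuple("Entity", ["text", "label_"])


def token_records(tokens):
    """Return one {"token", "lemma"} dict per token."""
    return [{"token": t.text, "lemma": t.lemma_} for t in tokens]


def entity_records(tokens, entities):
    """Return a dict with the token texts and the labelled entities."""
    return {
        "tokens": [t.text for t in tokens],
        "entities": [{"text": e.text, "label": e.label_} for e in entities],
    }


if __name__ == "__main__":
    toks = [Token("Apples", "apple"), Token("fell", "fall")]
    ents = [Entity("Apples", "ORG")]
    # The result serializes cleanly because it contains only dicts,
    # lists, and strings.
    print(json.dumps(entity_records(toks, ents), indent=2))
```

With spaCy, `token_records(docx)` and `entity_records(docx, docx.ents)` would take the place of the string formatting, and the returned objects could be passed to `st.json` directly.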

images.png

ADDED


packages.txt

ADDED


@@ -0,0 +1 @@

+tesseract-ocr-all
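`packages.txt` lists `tesseract-ocr-all`, which looks like a Debian/Ubuntu system package, while `app.py` sets `pytesseract.pytesseract.tesseract_cmd` to a Windows install path. On a Linux host that path would not exist. One way to reconcile the two, sketched here, is to resolve the executable at start-up. The `TESSERACT_CMD` environment variable and the `resolve_tesseract_cmd` helper are assumptions made for this example and do not appear in the commit.

```python
import os
import shutil

# The hard-coded path from app.py, kept as a last-resort fallback.
WINDOWS_DEFAULT = r"C:\Program Files\Tesseract-OCR\tesseract.exe"


def resolve_tesseract_cmd(env=None, which=shutil.which, exists=os.path.exists):
    """Pick a Tesseract executable path, or return None if none is found.

    Lookup order:
      1. TESSERACT_CMD environment variable (hypothetical override name),
      2. a `tesseract` binary on PATH,
      3. the Windows default path used in app.py, if it exists.
    The `which` and `exists` parameters are injectable for testing.
    """
    env = os.environ if env is None else env
    override = env.get("TESSERACT_CMD")
    if override:
        return override
    on_path = which("tesseract")
    if on_path:
        return on_path
    if exists(WINDOWS_DEFAULT):
        return WINDOWS_DEFAULT
    return None


if __name__ == "__main__":
    print(resolve_tesseract_cmd())
```

In `app.py` the returned value would be assigned to `pytesseract.pytesseract.tesseract_cmd` only when it is not `None`, leaving pytesseract's own default in place otherwise.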

requirements.txt

ADDED


@@ -0,0 +1,16 @@


+torch
+transformers
+nltk==3.6.5
+wordnet
+gensim==3.8.3
+joblib==1.1.0
+numpy==1.21.4
+pandas==1.3.4
+scikit-learn==1.0.1
+spacy==3.2.0
+streamlit==1.2.0
+textblob==0.17.1
+request
+pytesseract
+opencv-python
+Pillow
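Two things in `requirements.txt` look worth a second check: `app.py` imports `requests`, but the file lists `request`, and `spacy.load('en_core_web_sm')` needs a model package that is not listed, so it would have to be installed some other way. A small script along the following lines could surface the first kind of gap. The `IMPORT_TO_DIST` mapping and the `missing_distributions` helper are illustrative assumptions that cover only the third-party imports seen in `app.py`; nothing here queries PyPI, and the spaCy model is outside what this check can detect.

```python
import re

# Import name -> PyPI distribution name, for the third-party imports in app.py.
IMPORT_TO_DIST = {
    "streamlit": "streamlit",
    "torch": "torch",
    "transformers": "transformers",
    "textblob": "textblob",
    "spacy": "spacy",
    "gensim": "gensim",
    "requests": "requests",
    "cv2": "opencv-python",
    "numpy": "numpy",
    "pytesseract": "pytesseract",
    "PIL": "Pillow",
}

# The requirements.txt content from this commit.
REQUIREMENTS = """\
torch
transformers
nltk==3.6.5
wordnet
gensim==3.8.3
joblib==1.1.0
numpy==1.21.4
pandas==1.3.4
scikit-learn==1.0.1
spacy==3.2.0
streamlit==1.2.0
textblob==0.17.1
request
pytesseract
opencv-python
Pillow
"""


def requirement_names(text):
    """Return the lower-cased distribution names found in requirements text."""
    names = set()
    for line in text.splitlines():
        line = line.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        # Keep everything before the first version operator, extras, or marker.
        name = re.split(r"[=<>!~\[; ]", line, maxsplit=1)[0]
        names.add(name.lower())
    return names


def missing_distributions(imports, requirements_text):
    """List distributions needed by `imports` that the requirements omit."""
    listed = requirement_names(requirements_text)
    return sorted(
        IMPORT_TO_DIST[name]
        for name in imports
        if IMPORT_TO_DIST[name].lower() not in listed
    )


if __name__ == "__main__":
    print(missing_distributions(IMPORT_TO_DIST, REQUIREMENTS))
```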

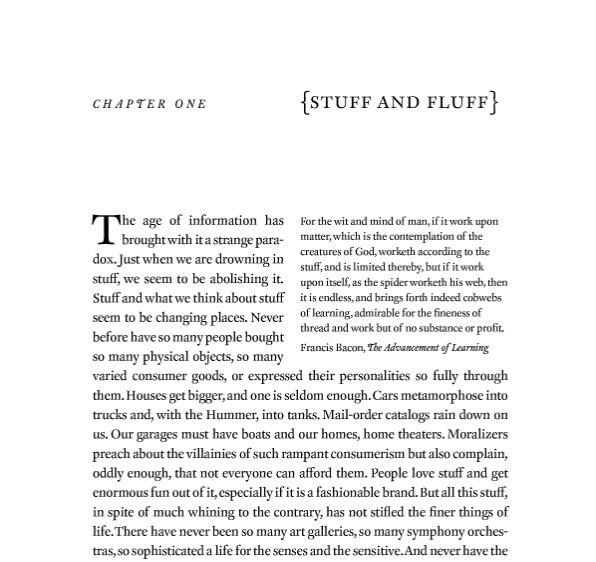

scholarly_text.jpg

ADDED
