MiniGPT-Med

This view is limited to 50 files because it contains too many changes.

- .gitattributes +1 -0

- Med_examples_v2/1.2.276.0.7230010.3.1.4.8323329.1495.1517874291.249176.jpg +0 -0

- Med_examples_v2/1.2.276.0.7230010.3.1.4.8323329.16254.1517874395.786150.jpg +0 -0

- Med_examples_v2/1.2.840.113654.2.55.48339325922382839066544590341580673064.png +0 -0

- Med_examples_v2/1.3.6.1.4.1.14519.5.2.1.7009.9004.242286124999058976921785904029.png +0 -0

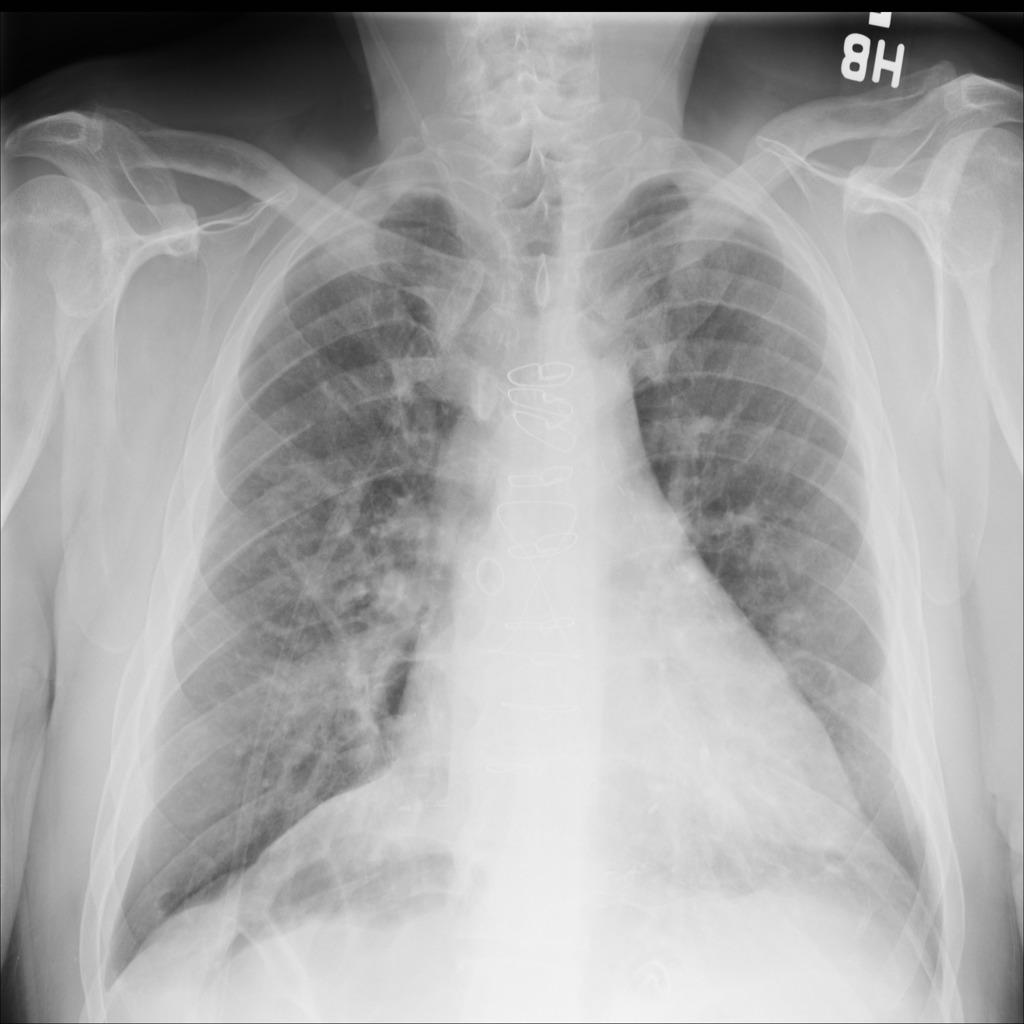

- Med_examples_v2/5f4e8079-8225a5d2-1b0c3c46-4394a094-f285db0e.jpg +3 -0

- Med_examples_v2/synpic33889.jpg +0 -0

- Med_examples_v2/synpic50958.jpg +0 -0

- Med_examples_v2/synpic56061.jpg +0 -0

- Med_examples_v2/synpic58547.jpg +0 -0

- Med_examples_v2/synpic60423.jpg +0 -0

- Med_examples_v2/synpic676.jpg +0 -0

- Med_examples_v2/xmlab149/source.jpg +0 -0

- Med_examples_v2/xmlab589/source.jpg +0 -0

- README.md +51 -13

- dcgm/bash/34649895/dcgm-gpu-stats-gpu202-02-r-34649895.out +39 -0

- dcgm/bash/34673507/dcgm-gpu-stats-gpu201-23-l-34673507.out +39 -0

- dcgm/bash/34676162/dcgm-gpu-stats-gpu201-23-l-34676162.out +39 -0

- dcgm/bash/34691276/dcgm-gpu-stats-gpu201-09-l-34691276.out +42 -0

- dcgm/bash/34709014/dcgm-gpu-stats-gpu109-16-l-34709014.out +39 -0

- dcgm/bash/34721198/dcgm-gpu-stats-gpu203-23-r-34721198.out +57 -0

- dcgm/bash/34734121/dcgm-gpu-stats-gpu201-23-l-34734121.out +35 -0

- dcgm/bash/34738689/dcgm-gpu-stats-gpu201-16-r-34738689.out +35 -0

- dcgm/bash/34757693/dcgm-gpu-stats-gpu202-16-r-34757693.out +42 -0

- demo_v2.py +648 -0

- environment.yml +35 -0

- eval_configs/minigptv2_benchmark_evaluation.yaml +69 -0

- eval_configs/minigptv2_eval.yaml +24 -0

- eval_scripts/.DS_Store +0 -0

- eval_scripts/__pycache__/IoU.cpython-39.pyc +0 -0

- eval_scripts/__pycache__/clean_json.cpython-39.pyc +0 -0

- eval_scripts/__pycache__/metrics.cpython-39.pyc +0 -0

- eval_scripts/clean_json.py +74 -0

- eval_scripts/metrics.py +164 -0

- eval_scripts/model_evaluation.py +274 -0

- miniGPTV2.yml +35 -0

- miniGPT_Med_.pth +3 -0

- minigpt4/.DS_Store +0 -0

- minigpt4/__init__.py +31 -0

- minigpt4/__pycache__/__init__.cpython-310.pyc +0 -0

- minigpt4/__pycache__/__init__.cpython-39.pyc +0 -0

- minigpt4/common/.DS_Store +0 -0

- minigpt4/common/__init__.py +0 -0

- minigpt4/common/__pycache__/__init__.cpython-310.pyc +0 -0

- minigpt4/common/__pycache__/__init__.cpython-39.pyc +0 -0

- minigpt4/common/__pycache__/config.cpython-310.pyc +0 -0

- minigpt4/common/__pycache__/config.cpython-39.pyc +0 -0

- minigpt4/common/__pycache__/dist_utils.cpython-310.pyc +0 -0

- minigpt4/common/__pycache__/dist_utils.cpython-39.pyc +0 -0

- minigpt4/common/__pycache__/eval_utils.cpython-39.pyc +0 -0

.gitattributes

CHANGED

@@ -34,3 +34,4 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
 *tfevents* filter=lfs diff=lfs merge=lfs -text
 MiniGPT-Med-github/Med_examples_v2/5f4e8079-8225a5d2-1b0c3c46-4394a094-f285db0e.jpg filter=lfs diff=lfs merge=lfs -text
+Med_examples_v2/5f4e8079-8225a5d2-1b0c3c46-4394a094-f285db0e.jpg filter=lfs diff=lfs merge=lfs -text
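Each `.gitattributes` line in this hunk is a path pattern followed by attribute tokens. As a rough illustration of how such a line breaks down, here is a minimal Python sketch. The helper names `parse_gitattributes_line` and `is_lfs_tracked` are hypothetical, and the parser is deliberately simplified: it does not handle quoted patterns, macro definitions, or the `!attr` form.

```python
def parse_gitattributes_line(line):
    """Split one .gitattributes line into (pattern, attributes).

    Simplified sketch: quoted patterns, macros and "!attr" are not handled.
    """
    stripped = line.strip()
    if not stripped or stripped.startswith("#"):
        return None  # blank line or comment
    pattern, *tokens = stripped.split()
    attrs = {}
    for token in tokens:
        if token.startswith("-"):
            attrs[token[1:]] = False  # "-text" unsets the attribute
        elif "=" in token:
            key, value = token.split("=", 1)
            attrs[key] = value  # "filter=lfs" assigns a value
        else:
            attrs[token] = True  # a bare name sets the attribute
    return pattern, attrs


def is_lfs_tracked(attrs):
    """True when matching files are routed through the LFS filter."""
    return attrs.get("filter") == "lfs"


line = (
    "Med_examples_v2/5f4e8079-8225a5d2-1b0c3c46-4394a094-f285db0e.jpg "
    "filter=lfs diff=lfs merge=lfs -text"
)
pattern, attrs = parse_gitattributes_line(line)
print(pattern, attrs, is_lfs_tracked(attrs))
```

Read this way, the added line routes one example image through the LFS filter and unsets `text` for it.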

Med_examples_v2/1.2.276.0.7230010.3.1.4.8323329.1495.1517874291.249176.jpg

ADDED

Med_examples_v2/1.2.276.0.7230010.3.1.4.8323329.16254.1517874395.786150.jpg

ADDED

Med_examples_v2/1.2.840.113654.2.55.48339325922382839066544590341580673064.png

ADDED

Med_examples_v2/1.3.6.1.4.1.14519.5.2.1.7009.9004.242286124999058976921785904029.png

ADDED

Med_examples_v2/5f4e8079-8225a5d2-1b0c3c46-4394a094-f285db0e.jpg

ADDED

Med_examples_v2/synpic33889.jpg

ADDED

Med_examples_v2/synpic50958.jpg

ADDED

Med_examples_v2/synpic56061.jpg

ADDED

Med_examples_v2/synpic58547.jpg

ADDED

Med_examples_v2/synpic60423.jpg

ADDED

Med_examples_v2/synpic676.jpg

ADDED

Med_examples_v2/xmlab149/source.jpg

ADDED

Med_examples_v2/xmlab589/source.jpg

ADDED

README.md

CHANGED

@@ -1,13 +1,51 @@

# MiniGPT-Med: Large Language Model as a General Interface for Radiology Diagnosis

Asma Alkhaldi, Raneem Alnajim, Layan Alabdullatef, Rawan Alyahya, Jun Chen, Deyao Zhu, Ahmed Alsinan, Mohamed Elhoseiny

*Saudi Data and Artificial Intelligence Authority (SDAIA) and King Abdullah University of Science and Technology (KAUST)*

## Installation

```
git clone https://github.com/Vision-CAIR/MiniGPT-Med
cd MiniGPT-Med
conda env create -f environment.yml
conda activate miniGPT-Med
```

## Download miniGPT-Med trained model weights

* Download the miniGPT-Med weights: [miniGPT-Med Model](https://drive.google.com/file/d/1kjGLk6s9LsBmXfLWQFCdlwF3aul08Cl8/view?usp=sharing)
* Then set line 8 of miniGPT-Med/eval_configs/minigptv2_eval.yaml to the path of the downloaded miniGPT-Med weights.

## Prepare weights for LLMs

### Llama2 Version

```shell
git clone https://huggingface.co/meta-llama/Llama-2-13b-chat-hf
```

Then set line 14 of miniGPT-Med/minigpt4/configs/models/minigpt_v2.yaml to the path of Llama-2-13b-chat-hf.
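The README identifies both settings only by line number (line 8 of `eval_configs/minigptv2_eval.yaml` and line 14 of `minigpt4/configs/models/minigpt_v2.yaml`). The sketch below shows one way to script such an edit in Python. It assumes the target line is a plain `key: value` pair and keeps whatever key and indentation are already there; the helper name `set_yaml_value_on_line` and the example paths are hypothetical, and the sketch assumes POSIX-style paths without characters that need YAML escaping.

```python
from pathlib import Path


def set_yaml_value_on_line(config_path, line_no, new_value):
    """Replace the value of the `key: value` pair on a 1-indexed line.

    The key and indentation already on that line are kept; only the value
    changes. Raises ValueError if the line is not a `key: value` pair.
    """
    path = Path(config_path)
    lines = path.read_text().splitlines()
    original = lines[line_no - 1]
    key, sep, _old_value = original.partition(":")
    if not sep or not key.strip():
        raise ValueError(f"line {line_no} is not a 'key: value' pair: {original!r}")
    lines[line_no - 1] = f'{key}: "{new_value}"'
    path.write_text("\n".join(lines) + "\n")
    return key.strip()  # report which key was changed, as a sanity check


# Hypothetical local paths; substitute your own before uncommenting.
# set_yaml_value_on_line("eval_configs/minigptv2_eval.yaml", 8,
#                        "/path/to/miniGPT_Med_.pth")
# set_yaml_value_on_line("minigpt4/configs/models/minigpt_v2.yaml", 14,
#                        "/path/to/Llama-2-13b-chat-hf")
```

Returning the key name lets the caller confirm that the numbered line really holds the expected setting, since line numbers can drift if the config files change.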

## Launching Demo Locally

```
python demo.py --cfg-path eval_configs/minigptv2_eval.yaml --gpu-id 0
```

## Dataset

| Dataset | Images | JSON file |
|---------|--------|-----------|
| MIMIC | [Download](https://physionet.org/content/mimiciii/1.4/) | [Download](https://drive.google.com/drive/folders/1nZhdfNoh7fkx7CWvf0_47_OLv3tA2m3o?usp=sharing) |
| NLST | [Download](https://wiki.cancerimagingarchive.net/display/NLST) | [Download](https://drive.google.com/drive/folders/1OKgMTaGLu_dWRuco6JipYzezw3oNwgaz?usp=sharing) |
| SLAKE | [Download](https://www.med-vqa.com/slake/) | [Download](https://drive.google.com/drive/folders/1vstjmfRbKahSAsi_b6FmTQiuolvgO8oC?usp=sharing) |
| RSNA | [Download](https://www.rsna.org/rsnai/ai-image-challenge/rsna-pneumonia-detection-challenge-2018) | [Download](https://drive.google.com/drive/folders/1wkXPvUNqda6jWAIduyiVJkS3Tx7P7td8?usp=sharing) |
| Rad-VQA | [Download](https://osf.io/89kps/) | [Download](https://drive.google.com/drive/folders/1ING6Dodwk2DU_t4GHQYudNFMMg9OMfBQ?usp=sharing) |

## Acknowledgement

- MiniGPT-4
- Lavis
- Vicuna
- Falcon
- Llama 2

dcgm/bash/34649895/dcgm-gpu-stats-gpu202-02-r-34649895.out

ADDED

@@ -0,0 +1,39 @@
Successfully retrieved statistics for job: 34649895.
+------------------------------------------------------------------------------+
| GPU ID: 0 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Tue Jul 9 09:29:46 2024 |
| End Time | Wed Jul 10 09:30:32 2024 |
| Total Execution Time (sec) | 86445.3 |
| No. of Processes | 1 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 232291 |
| Power Usage (Watts) | Avg: 65.6704, Max: 84.315, Min: 61.555 |
| Max GPU Memory Used (bytes) | 10104078336 |
| SM Clock (MHz) | Avg: 595, Max: 1155, Min: 210 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 1548651 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+
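The `dcgm-gpu-stats-*.out` reports in this commit are pipe-delimited `| key | value |` rows between border lines. A minimal Python sketch for reading such a report into a dictionary and converting a few values to friendlier units might look like the following. The function names are hypothetical, the row layout is assumed from the reports shown in this diff rather than from any DCGM specification, and repeated keys (such as per-process rows) keep only their first value.

```python
import re


def parse_dcgm_report(text):
    """Collect the two-column `| key | value |` rows of a report into a dict."""
    stats = {}
    for raw in text.splitlines():
        line = raw.strip()
        if not (line.startswith("|") and line.endswith("|")):
            continue  # border lines and the status sentence
        cells = [cell.strip() for cell in line[1:-1].split("|")]
        if len(cells) != 2:
            continue  # section headers and the single-cell "GPU ID" row
        # Drop the "Due to - " / "- " prefixes used in the slowdown section.
        key = re.sub(r"^(Due to\s+)?-\s*", "", cells[0])
        stats.setdefault(key, cells[1])  # keep the first value for repeated keys
    return stats


def parse_avg_max_min(value):
    """Turn 'Avg: 65.6704, Max: 84.315, Min: 61.555' into a dict of floats."""
    return {name: float(num) for name, num in re.findall(r"(\w+):\s*([\d.]+)", value)}


sample = """\
| Total Execution Time (sec) | 86445.3 |
| Energy Consumed (Joules) | 232291 |
| Power Usage (Watts) | Avg: 65.6704, Max: 84.315, Min: 61.555 |
| Max GPU Memory Used (bytes) | 10104078336 |
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
"""
stats = parse_dcgm_report(sample)
hours = float(stats["Total Execution Time (sec)"]) / 3600
gib = int(stats["Max GPU Memory Used (bytes)"]) / 2**30
power = parse_avg_max_min(stats["Power Usage (Watts)"])
print(f"{hours:.2f} h, {gib:.2f} GiB peak memory, avg {power['Avg']} W")
```

Values reported as `N/A` yield an empty dictionary from `parse_avg_max_min`, so callers should check for missing keys before using the result.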

dcgm/bash/34673507/dcgm-gpu-stats-gpu201-23-l-34673507.out

ADDED

@@ -0,0 +1,39 @@
Successfully retrieved statistics for job: 34673507.
+------------------------------------------------------------------------------+
| GPU ID: 1 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Fri Jul 12 11:48:45 2024 |
| End Time | Sat Jul 13 11:49:39 2024 |
| Total Execution Time (sec) | 86454.5 |
| No. of Processes | 1 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 252136 |
| Power Usage (Watts) | Avg: 69.7762, Max: 70.022, Min: 69.151 |
| Max GPU Memory Used (bytes) | 10104078336 |
| SM Clock (MHz) | Avg: 1157, Max: 1410, Min: 1155 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 2527521 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+

dcgm/bash/34676162/dcgm-gpu-stats-gpu201-23-l-34676162.out

ADDED

@@ -0,0 +1,39 @@
Successfully retrieved statistics for job: 34676162.
+------------------------------------------------------------------------------+
| GPU ID: 3 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Sun Jul 14 07:57:08 2024 |
| End Time | Mon Jul 15 07:57:59 2024 |
| Total Execution Time (sec) | 86450.6 |
| No. of Processes | 1 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 249997 |
| Power Usage (Watts) | Avg: 82.8167, Max: 86.615, Min: 70.491 |
| Max GPU Memory Used (bytes) | 10104078336 |
| SM Clock (MHz) | Avg: 1352, Max: 1410, Min: 1080 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 3048225 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+

dcgm/bash/34691276/dcgm-gpu-stats-gpu201-09-l-34691276.out

ADDED

@@ -0,0 +1,42 @@
Successfully retrieved statistics for job: 34691276.
+------------------------------------------------------------------------------+
| GPU ID: 0 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Tue Jul 16 08:21:43 2024 |
| End Time | Tue Jul 16 21:44:34 2024 |
| Total Execution Time (sec) | 48170.9 |
| No. of Processes | 2 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 222759 |
| Power Usage (Watts) | Avg: 61.4158, Max: 61.683, Min: 61.349 |
| Max GPU Memory Used (bytes) | 10806624256 |
| SM Clock (MHz) | Avg: 210, Max: 225, Min: 210 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 1958147 |
| Avg SM Utilization (%) | 1 |
| Avg Memory Utilization (%) | 0 |
| PID | 2068287 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+
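When a report lists more than one process, the `PID`, `Avg SM Utilization (%)` and `Avg Memory Utilization (%)` rows repeat once per process, so a flat key-to-value mapping would lose all but one of them. The Python sketch below groups those rows by PID instead. The function name `per_process_utilization` is hypothetical and the layout is assumed from the reports in this diff: a `| PID | n |` row followed by that process's integer-valued rows, ending at the next border line.

```python
def per_process_utilization(text):
    """Group the rows that follow each `| PID | n |` row by process ID."""
    processes = {}
    current = None
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("+"):
            current = None  # a border or section title ends the process block
            continue
        if not (line.startswith("|") and line.endswith("|")):
            continue
        cells = [cell.strip() for cell in line[1:-1].split("|")]
        if len(cells) != 2:
            current = None  # a section title written with pipes
            continue
        key, value = cells
        if key == "PID":
            current = processes.setdefault(int(value), {})
        elif current is not None:
            current[key] = int(value)
    return processes


sample = """\
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 1958147 |
| Avg SM Utilization (%) | 1 |
| Avg Memory Utilization (%) | 0 |
| PID | 2068287 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
"""
for pid, usage in per_process_utilization(sample).items():
    print(pid, usage)
```

Rows outside a process block, such as `Overall Health`, are skipped because no PID is active when they are read.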

dcgm/bash/34709014/dcgm-gpu-stats-gpu109-16-l-34709014.out

ADDED

@@ -0,0 +1,39 @@
Successfully retrieved statistics for job: 34709014.
+------------------------------------------------------------------------------+
| GPU ID: 3 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Thu Jul 18 07:54:11 2024 |
| End Time | Fri Jul 19 07:55:07 2024 |
| Total Execution Time (sec) | 86456.3 |
| No. of Processes | 1 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 245376 |
| Power Usage (Watts) | Avg: 67.8347, Max: 68.156, Min: 67.563 |
| Max GPU Memory Used (bytes) | 10582228992 |
| SM Clock (MHz) | Avg: 1161, Max: 1410, Min: 1155 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 4005887 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+

dcgm/bash/34721198/dcgm-gpu-stats-gpu203-23-r-34721198.out

ADDED

@@ -0,0 +1,57 @@
Successfully retrieved statistics for job: 34721198.
+------------------------------------------------------------------------------+
| GPU ID: 1 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Fri Jul 19 21:34:44 2024 |
| End Time | Sat Jul 20 00:01:06 2024 |
| Total Execution Time (sec) | 8782.23 |
| No. of Processes | 7 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 225540 |
| Power Usage (Watts) | Avg: 75.9496, Max: 87.541, Min: 65.792 |
| Max GPU Memory Used (bytes) | 13356761088 |
| SM Clock (MHz) | Avg: 210, Max: 210, Min: 210 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+-- Compute Process Utilization ---+-----------------------------------------+
| PID | 866309 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
| PID | 866955 |
| Avg SM Utilization (%) | 1 |
| Avg Memory Utilization (%) | 0 |
| PID | 868076 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
| PID | 868638 |
| Avg SM Utilization (%) | 5 |
| Avg Memory Utilization (%) | 0 |
| PID | 869519 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
| PID | 871043 |
| Avg SM Utilization (%) | 1 |
| Avg Memory Utilization (%) | 0 |
| PID | 871322 |
| Avg SM Utilization (%) | 0 |
| Avg Memory Utilization (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+

dcgm/bash/34734121/dcgm-gpu-stats-gpu201-23-l-34734121.out

ADDED

@@ -0,0 +1,35 @@
Successfully retrieved statistics for job: 34734121.
+------------------------------------------------------------------------------+
| GPU ID: 3 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Tue Jul 23 11:47:49 2024 |
| End Time | Tue Jul 23 13:47:51 2024 |
| Total Execution Time (sec) | 7202.22 |
| No. of Processes | 0 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 226384 |
| Power Usage (Watts) | Avg: 62.6807, Max: 81.445, Min: 62.015 |
| Max GPU Memory Used (bytes) | 0 |
| SM Clock (MHz) | Avg: 220, Max: 1410, Min: 210 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+

dcgm/bash/34738689/dcgm-gpu-stats-gpu201-16-r-34738689.out

ADDED

@@ -0,0 +1,35 @@
Successfully retrieved statistics for job: 34738689.
+------------------------------------------------------------------------------+
| GPU ID: 3 |
+====================================+=========================================+
|----- Execution Stats ------------+-----------------------------------------|
| Start Time | Wed Jul 24 10:14:38 2024 |
| End Time | Wed Jul 24 11:45:33 2024 |
| Total Execution Time (sec) | 5454.69 |
| No. of Processes | 0 |
+----- Performance Stats ----------+-----------------------------------------+
| Energy Consumed (Joules) | 232516 |
| Power Usage (Watts) | Avg: 64.2532, Max: 64.329, Min: 63.938 |
| Max GPU Memory Used (bytes) | 0 |
| SM Clock (MHz) | Avg: 210, Max: 210, Min: 210 |
| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+----- Event Stats ----------------+-----------------------------------------+
| Single Bit ECC Errors | 0 |
| Double Bit ECC Errors | 0 |
| PCIe Replay Warnings | 0 |
| Critical XID Errors | 0 |
+----- Slowdown Stats -------------+-----------------------------------------+
| Due to - Power (%) | 0 |
| - Thermal (%) | 0 |
| - Reliability (%) | Not Supported |
| - Board Limit (%) | Not Supported |
| - Low Utilization (%) | Not Supported |
| - Sync Boost (%) | 0 |
+----- Overall Health -------------+-----------------------------------------+
| Overall Health | Healthy |
+------------------------------------+-----------------------------------------+

dcgm/bash/34757693/dcgm-gpu-stats-gpu202-16-r-34757693.out

ADDED

|

@@ -0,0 +1,42 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

+Successfully retrieved statistics for job: 34757693.
++------------------------------------------------------------------------------+
+| GPU ID: 2 |
++====================================+=========================================+
+|----- Execution Stats ------------+-----------------------------------------|
+| Start Time | Thu Jul 25 15:38:16 2024 |
+| End Time | Thu Jul 25 17:08:59 2024 |
+| Total Execution Time (sec) | 5442.54 |
+| No. of Processes | 2 |
++----- Performance Stats ----------+-----------------------------------------+
+| Energy Consumed (Joules) | 214029 |
+| Power Usage (Watts) | Avg: 59.2012, Max: 67.659, Min: 59.026 |
+| Max GPU Memory Used (bytes) | 7616856064 |
+| SM Clock (MHz) | Avg: 243, Max: 1080, Min: 210 |
+| Memory Clock (MHz) | Avg: 1593, Max: 1593, Min: 1593 |
+| SM Utilization (%) | Avg: 0, Max: 0, Min: 0 |
+| Memory Utilization (%) | Avg: 0, Max: 0, Min: 0 |
+| PCIe Rx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
+| PCIe Tx Bandwidth (megabytes) | Avg: N/A, Max: N/A, Min: N/A |
++----- Event Stats ----------------+-----------------------------------------+
+| Single Bit ECC Errors | 0 |
+| Double Bit ECC Errors | 0 |
+| PCIe Replay Warnings | 0 |
+| Critical XID Errors | 0 |
++----- Slowdown Stats -------------+-----------------------------------------+
+| Due to - Power (%) | 0 |
+| - Thermal (%) | 0 |
+| - Reliability (%) | Not Supported |
+| - Board Limit (%) | Not Supported |
+| - Low Utilization (%) | Not Supported |
+| - Sync Boost (%) | 0 |
++-- Compute Process Utilization ---+-----------------------------------------+
+| PID | 1095606 |
+| Avg SM Utilization (%) | 3 |
+| Avg Memory Utilization (%) | 0 |
+| PID | 1096190 |
+| Avg SM Utilization (%) | 14 |
+| Avg Memory Utilization (%) | 2 |
++----- Overall Health -------------+-----------------------------------------+
+| Overall Health | Healthy |
++------------------------------------+-----------------------------------------+
+
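The figures in the job 34757693 report can be cross-checked with a little arithmetic. The following is a minimal sketch, not part of the commit: `parse_dcgm_rows` is a hypothetical helper that assumes rows have the two-cell `| key | value |` shape shown in the report, and the sample rows are copied by hand from it.

```python
def parse_dcgm_rows(text):
    """Collect two-cell `| key | value |` rows into a dict (hypothetical helper)."""
    stats = {}
    for raw in text.splitlines():
        line = raw.strip()
        if line.startswith("+|"):  # drop a leading diff marker, if present
            line = line[1:]
        if not line.startswith("|"):
            continue  # borders and free text are skipped
        cells = [c.strip() for c in line.strip("|").split("|")]
        if len(cells) == 2:
            # Repeated keys (e.g. per-process PID rows) keep their first value.
            stats.setdefault(cells[0], cells[1])
    return stats


# Rows copied from the report for job 34757693.
sample = """\
| Total Execution Time (sec) | 5442.54 |
| Energy Consumed (Joules) | 214029 |
| Max GPU Memory Used (bytes) | 7616856064 |
"""

stats = parse_dcgm_rows(sample)
seconds = float(stats["Total Execution Time (sec)"])
joules = float(stats["Energy Consumed (Joules)"])
mem_bytes = int(stats["Max GPU Memory Used (bytes)"])

mean_power_w = joules / seconds  # energy divided by wall-clock time
mem_mib = mem_bytes / 2**20      # bytes to MiB

print(f"{mean_power_w:.2f} W, {mem_mib:.0f} MiB")
```

The energy-derived mean comes out near 39.3 W, below the 59.2012 W average in the Power Usage row, so the two figures are evidently not computed the same way; the report itself does not say why.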

demo_v2.py

ADDED


@@ -0,0 +1,648 @@


+# python demo_v2.py --cfg-path eval_configs/minigptv2_eval.yaml --gpu-id 0
+
+import argparse
+import os
+import random
+from collections import defaultdict
+
+import cv2
+import re
+
+import numpy as np
+from PIL import Image
+import torch
+import html
+import gradio as gr
+
+import torchvision.transforms as T
+import torch.backends.cudnn as cudnn
+
+from minigpt4.common.config import Config
+
+from minigpt4.common.registry import registry
+from minigpt4.conversation.conversation import Conversation, SeparatorStyle, Chat
+
+# imports modules for registration
+from minigpt4.datasets.builders import *
+from minigpt4.models import *
+from minigpt4.processors import *
+from minigpt4.runners import *
+from minigpt4.tasks import *
+
+
+def parse_args():
+    parser = argparse.ArgumentParser(description="Demo")
+    parser.add_argument("--cfg-path", default='eval_configs/minigptv2_eval.yaml',
+                        help="path to configuration file.")
+    parser.add_argument("--gpu-id", type=int, default=0, help="specify the gpu to load the model.")
+    parser.add_argument(
+        "--options",
+        nargs="+",
+        help="override some settings in the used config, the key-value pair "
+             "in xxx=yyy format will be merged into config file (deprecated), "
+             "change to --cfg-options instead.",
+    )
+    args = parser.parse_args()
+    return args
+
+

+random.seed(42)
+np.random.seed(42)
+torch.manual_seed(42)
+
+cudnn.benchmark = False
+cudnn.deterministic = True
+
+print('Initializing Chat')
+args = parse_args()
+cfg = Config(args)
+
+device = 'cuda:{}'.format(args.gpu_id)
+
+model_config = cfg.model_cfg
+model_config.device_8bit = args.gpu_id
+model_cls = registry.get_model_class(model_config.arch)
+model = model_cls.from_config(model_config).to(device)
+bounding_box_size = 100
+
+vis_processor_cfg = cfg.datasets_cfg.cc_sbu_align.vis_processor.train
+vis_processor = registry.get_processor_class(vis_processor_cfg.name).from_config(vis_processor_cfg)
+
+model = model.eval()
+
+CONV_VISION = Conversation(
+    system="",
+    roles=(r"<s>[INST] ", r" [/INST]"),
+    messages=[],
+    offset=2,
+    sep_style=SeparatorStyle.SINGLE,
+    sep="",
+)
+
+

+def extract_substrings(string):
+    # first check whether there is an unfinished bracket
+    index = string.rfind('}')
+    if index != -1:
+        string = string[:index + 1]
+
+    pattern = r'<p>(.*?)\}(?!<)'
+    matches = re.findall(pattern, string)
+    substrings = [match for match in matches]
+
+    return substrings
+
+
+def is_overlapping(rect1, rect2):
+    x1, y1, x2, y2 = rect1
+    x3, y3, x4, y4 = rect2
+    return not (x2 < x3 or x1 > x4 or y2 < y3 or y1 > y4)
+
+

+def computeIoU(bbox1, bbox2):
+    x1, y1, x2, y2 = bbox1
+    x3, y3, x4, y4 = bbox2
+    intersection_x1 = max(x1, x3)
+    intersection_y1 = max(y1, y3)
+    intersection_x2 = min(x2, x4)
+    intersection_y2 = min(y2, y4)
+    intersection_area = max(0, intersection_x2 - intersection_x1 + 1) * max(0, intersection_y2 - intersection_y1 + 1)
+    bbox1_area = (x2 - x1 + 1) * (y2 - y1 + 1)
+    bbox2_area = (x4 - x3 + 1) * (y4 - y3 + 1)
+    union_area = bbox1_area + bbox2_area - intersection_area
+    iou = intersection_area / union_area
+    return iou
+
+
+def save_tmp_img(visual_img):
+    file_name = "".join([str(random.randint(0, 9)) for _ in range(5)]) + ".jpg"
+    file_path = "/tmp/gradio" + file_name
+    visual_img.save(file_path)
+    return file_path
+
+

+def mask2bbox(mask):
+    if mask is None:
+        return ''
+    mask = mask.resize([100, 100], resample=Image.NEAREST)
+    mask = np.array(mask)[:, :, 0]
+
+    rows = np.any(mask, axis=1)
+    cols = np.any(mask, axis=0)
+
+    if rows.sum():
+        # Get the top, bottom, left, and right boundaries
+        rmin, rmax = np.where(rows)[0][[0, -1]]
+        cmin, cmax = np.where(cols)[0][[0, -1]]
+        bbox = '{{<{}><{}><{}><{}>}}'.format(cmin, rmin, cmax, rmax)
+    else:
+        bbox = ''
+
+    return bbox
+
+
+def escape_markdown(text):
+    # List of Markdown special characters that need to be escaped
+    md_chars = ['<', '>']
+
+    # Escape each special character
+    for char in md_chars:
+        text = text.replace(char, '\\' + char)
+
+    return text
+
+
+def reverse_escape(text):
+    md_chars = ['\\<', '\\>']
+
+    for char in md_chars:
+        text = text.replace(char, char[1:])
+
+    return text
+
+

+colors = [
+    (255, 0, 0),
+    (0, 255, 0),
+    (0, 0, 255),
+    (210, 210, 0),
+    (255, 0, 255),
+    (0, 255, 255),
+    (114, 128, 250),
+    (0, 165, 255),
+    (0, 128, 0),
+    (144, 238, 144),
+    (238, 238, 175),

| 176 |

+