ZhengChong committed on commit 6a6227f (parent: 82131f3)

chore: Add SCHP model and detectron2 dependencies

This view is limited to 50 files because it contains too many changes.

- app.py +373 -0

- model/DensePose/__init__.py +158 -0

- model/DensePose/__pycache__/__init__.cpython-310.pyc +0 -0

- model/DensePose/__pycache__/__init__.cpython-312.pyc +0 -0

- model/DensePose/__pycache__/__init__.cpython-39.pyc +0 -0

- model/SCHP/LICENSE +21 -0

- model/SCHP/README.md +129 -0

- model/SCHP/__init__.py +163 -0

- model/SCHP/__pycache__/__init__.cpython-310.pyc +0 -0

- model/SCHP/__pycache__/__init__.cpython-39.pyc +0 -0

- model/SCHP/datasets/__init__.py +0 -0

- model/SCHP/datasets/__pycache__/__init__.cpython-39.pyc +0 -0

- model/SCHP/datasets/__pycache__/simple_extractor_dataset.cpython-39.pyc +0 -0

- model/SCHP/datasets/datasets.py +205 -0

- model/SCHP/datasets/simple_extractor_dataset.py +92 -0

- model/SCHP/datasets/target_generation.py +40 -0

- model/SCHP/environment.yaml +49 -0

- model/SCHP/evaluate.py +210 -0

- model/SCHP/file_list.txt +0 -0

- model/SCHP/mhp_extension/.ipynb_checkpoints/demo-checkpoint.ipynb +0 -0

- model/SCHP/mhp_extension/README.md +38 -0

- model/SCHP/mhp_extension/coco_style_annotation_creator/__pycache__/pycococreatortools.cpython-37.pyc +0 -0

- model/SCHP/mhp_extension/coco_style_annotation_creator/human_to_coco.py +166 -0

- model/SCHP/mhp_extension/coco_style_annotation_creator/pycococreatortools.py +114 -0

- model/SCHP/mhp_extension/coco_style_annotation_creator/test_human2coco_format.py +74 -0

- model/SCHP/mhp_extension/data/DemoDataset/global_pic/demo.jpg +0 -0

- model/SCHP/mhp_extension/demo.ipynb +0 -0

- model/SCHP/mhp_extension/demo/demo.jpg +0 -0

- model/SCHP/mhp_extension/demo/demo_global_human_parsing.png +0 -0

- model/SCHP/mhp_extension/demo/demo_instance_human_mask.png +0 -0

- model/SCHP/mhp_extension/demo/demo_multiple_human_parsing.png +0 -0

- model/SCHP/mhp_extension/detectron2/.circleci/config.yml +179 -0

- model/SCHP/mhp_extension/detectron2/.clang-format +85 -0

- model/SCHP/mhp_extension/detectron2/.flake8 +9 -0

- model/SCHP/mhp_extension/detectron2/.github/CODE_OF_CONDUCT.md +5 -0

- model/SCHP/mhp_extension/detectron2/.github/CONTRIBUTING.md +49 -0

- model/SCHP/mhp_extension/detectron2/.github/Detectron2-Logo-Horz.svg +1 -0

- model/SCHP/mhp_extension/detectron2/.github/ISSUE_TEMPLATE.md +5 -0

- model/SCHP/mhp_extension/detectron2/.github/ISSUE_TEMPLATE/bugs.md +36 -0

- model/SCHP/mhp_extension/detectron2/.github/ISSUE_TEMPLATE/config.yml +9 -0

- model/SCHP/mhp_extension/detectron2/.github/ISSUE_TEMPLATE/feature-request.md +31 -0

- model/SCHP/mhp_extension/detectron2/.github/ISSUE_TEMPLATE/questions-help-support.md +26 -0

- model/SCHP/mhp_extension/detectron2/.github/ISSUE_TEMPLATE/unexpected-problems-bugs.md +45 -0

- model/SCHP/mhp_extension/detectron2/.github/pull_request_template.md +9 -0

- model/SCHP/mhp_extension/detectron2/.gitignore +46 -0

- model/SCHP/mhp_extension/detectron2/GETTING_STARTED.md +79 -0

- model/SCHP/mhp_extension/detectron2/INSTALL.md +184 -0

- model/SCHP/mhp_extension/detectron2/LICENSE +201 -0

- model/SCHP/mhp_extension/detectron2/MODEL_ZOO.md +903 -0

- model/SCHP/mhp_extension/detectron2/README.md +56 -0

app.py (ADDED, @@ -0,0 +1,373 @@)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

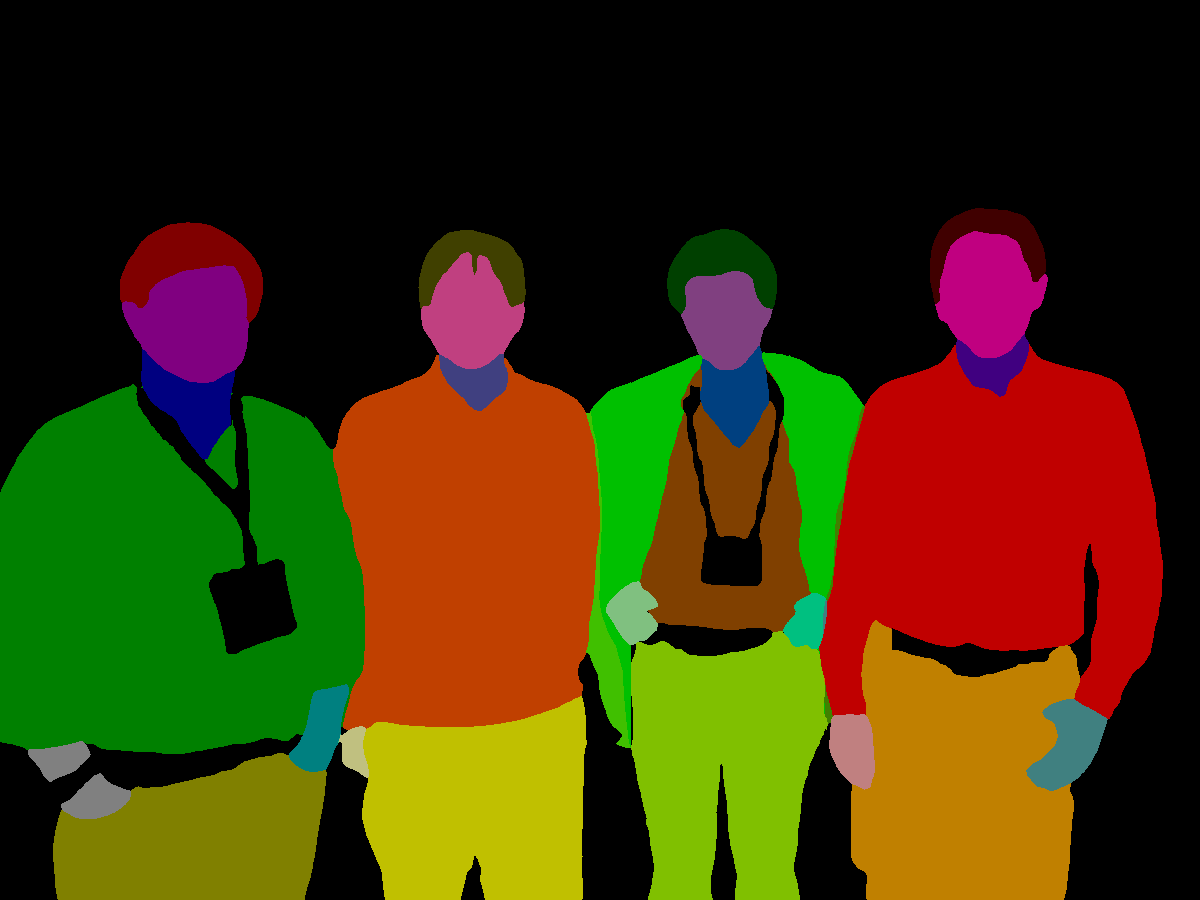

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

```python
import argparse
import os
from datetime import datetime

import gradio as gr
import numpy as np
import torch
from diffusers.image_processor import VaeImageProcessor
from huggingface_hub import snapshot_download
from PIL import Image

from model.cloth_masker import AutoMasker, vis_mask
from model.pipeline import CatVTONPipeline
from utils import init_weight_dtype, resize_and_crop, resize_and_padding


def parse_args():
    parser = argparse.ArgumentParser(description="Simple example of a training script.")
    parser.add_argument(
        "--base_model_path",
        type=str,
        default="runwayml/stable-diffusion-inpainting",
        help=(
            "The path to the base model to use for evaluation. This can be a local path or a model identifier from the Model Hub."
        ),
    )
    parser.add_argument(
        "--resume_path",
        type=str,
        default="zhengchong/CatVTON",
        help=(
            "The Path to the checkpoint of trained tryon model."
        ),
    )
    parser.add_argument(
        "--output_dir",
        type=str,
        default="resource/demo/output",
        help="The output directory where the model predictions will be written.",
    )

    parser.add_argument(
        "--width",
        type=int,
        default=768,
        help=(
            "The resolution for input images, all the images in the train/validation dataset will be resized to this"
            " resolution"
        ),
    )
    parser.add_argument(
        "--height",
        type=int,
        default=1024,
        help=(
            "The resolution for input images, all the images in the train/validation dataset will be resized to this"
            " resolution"
        ),
    )
    parser.add_argument(
        "--repaint",
        action="store_true",
        help="Whether to repaint the result image with the original background."
    )
    parser.add_argument(
        "--allow_tf32",
        action="store_true",
        default=True,
        help=(
            "Whether or not to allow TF32 on Ampere GPUs. Can be used to speed up training. For more information, see"
            " https://pytorch.org/docs/stable/notes/cuda.html#tensorfloat-32-tf32-on-ampere-devices"
        ),
    )
    parser.add_argument(
        "--mixed_precision",
        type=str,
        default="bf16",
        choices=["no", "fp16", "bf16"],
        help=(
            "Whether to use mixed precision. Choose between fp16 and bf16 (bfloat16). Bf16 requires PyTorch >="
            " 1.10.and an Nvidia Ampere GPU. Default to the value of accelerate config of the current system or the"
            " flag passed with the `accelerate.launch` command. Use this argument to override the accelerate config."
        ),
    )
    # parser.add_argument(
    #     "--enable_condition_noise",
    #     action="store_true",
    #     default=True,
    #     help="Whether or not to enable condition noise.",
    # )

    args = parser.parse_args()
    env_local_rank = int(os.environ.get("LOCAL_RANK", -1))
    if env_local_rank != -1 and env_local_rank != args.local_rank:
        args.local_rank = env_local_rank

    return args

def image_grid(imgs, rows, cols):
    assert len(imgs) == rows * cols

    w, h = imgs[0].size
    grid = Image.new("RGB", size=(cols * w, rows * h))

    for i, img in enumerate(imgs):
        grid.paste(img, box=(i % cols * w, i // cols * h))
    return grid


args = parse_args()
repo_path = snapshot_download(repo_id=args.resume_path)
# Pipeline
pipeline = CatVTONPipeline(
    base_ckpt=args.base_model_path,
    attn_ckpt=repo_path,
    attn_ckpt_version="mix",
    weight_dtype=init_weight_dtype(args.mixed_precision),
    use_tf32=args.allow_tf32,
    device='cuda'
)
# AutoMasker
mask_processor = VaeImageProcessor(vae_scale_factor=8, do_normalize=False, do_binarize=True, do_convert_grayscale=True)
automasker = AutoMasker(
    densepose_ckpt=os.path.join(repo_path, "DensePose"),
    schp_ckpt=os.path.join(repo_path, "SCHP"),
    device='cuda',
)


def submit_function(
    person_image,
    cloth_image,
    cloth_type,
    num_inference_steps,
    guidance_scale,
    seed,
    show_type
):
    person_image, mask = person_image["background"], person_image["layers"][0]
    mask = Image.open(mask).convert("L")
    if len(np.unique(np.array(mask))) == 1:
        mask = None
    else:
        mask = np.array(mask)
        mask[mask > 0] = 255
        mask = Image.fromarray(mask)

    tmp_folder = args.output_dir
    date_str = datetime.now().strftime("%Y%m%d%H%M%S")
    result_save_path = os.path.join(tmp_folder, date_str[:8], date_str[8:] + ".png")
    if not os.path.exists(os.path.join(tmp_folder, date_str[:8])):
        os.makedirs(os.path.join(tmp_folder, date_str[:8]))

    generator = None
    if seed != -1:
        generator = torch.Generator(device='cuda').manual_seed(seed)

    person_image = Image.open(person_image).convert("RGB")
    cloth_image = Image.open(cloth_image).convert("RGB")
    person_image = resize_and_crop(person_image, (args.width, args.height))
    cloth_image = resize_and_padding(cloth_image, (args.width, args.height))

    # Process mask
    if mask is not None:
        mask = resize_and_crop(mask, (args.width, args.height))
    else:
        mask = automasker(
            person_image,
            cloth_type
        )['mask']
    mask = mask_processor.blur(mask, blur_factor=9)

    # Inference
    # try:
    result_image = pipeline(
        image=person_image,
        condition_image=cloth_image,
        mask=mask,
        num_inference_steps=num_inference_steps,
        guidance_scale=guidance_scale,
        generator=generator
    )[0]
    # except Exception as e:
    #     raise gr.Error(
    #         "An error occurred. Please try again later: {}".format(e)
    #     )

    # Post-process
    masked_person = vis_mask(person_image, mask)
    save_result_image = image_grid([person_image, masked_person, cloth_image, result_image], 1, 4)
    save_result_image.save(result_save_path)
    if show_type == "result only":
        return result_image
    else:
        width, height = person_image.size
        if show_type == "input & result":
            condition_width = width // 2
            conditions = image_grid([person_image, cloth_image], 2, 1)
        else:
            condition_width = width // 3
            conditions = image_grid([person_image, masked_person , cloth_image], 3, 1)
        conditions = conditions.resize((condition_width, height), Image.NEAREST)
        new_result_image = Image.new("RGB", (width + condition_width + 5, height))
        new_result_image.paste(conditions, (0, 0))
        new_result_image.paste(result_image, (condition_width + 5, 0))
        return new_result_image


def person_example_fn(image_path):
    return image_path

HEADER = """
<h1 style="text-align: center;"> 🐈 CatVTON: Concatenation Is All You Need for Virtual Try-On with Diffusion Models </h1>
<div style="display: flex; justify-content: center; align-items: center;">
  <a href="http://arxiv.org/abs/2407.15886" style="margin: 0 2px;">
    <img src='https://img.shields.io/badge/arXiv-2407.15886-red?style=flat&logo=arXiv&logoColor=red' alt='arxiv'>
  </a>
  <a href='https://huggingface.co/zhengchong/CatVTON' style="margin: 0 2px;">
    <img src='https://img.shields.io/badge/Hugging Face-ckpts-orange?style=flat&logo=HuggingFace&logoColor=orange' alt='huggingface'>
  </a>
  <a href="https://github.com/Zheng-Chong/CatVTON" style="margin: 0 2px;">
    <img src='https://img.shields.io/badge/GitHub-Repo-blue?style=flat&logo=GitHub' alt='GitHub'>
  </a>
  <a href="http://120.76.142.206:8888" style="margin: 0 2px;">
    <img src='https://img.shields.io/badge/Demo-Gradio-gold?style=flat&logo=Gradio&logoColor=red' alt='Demo'>
  </a>
  <a href='https://zheng-chong.github.io/CatVTON/' style="margin: 0 2px;">
    <img src='https://img.shields.io/badge/Webpage-Project-silver?style=flat&logo=&logoColor=orange' alt='webpage'>
  </a>
  <a href="https://github.com/Zheng-Chong/CatVTON/LICENCE" style="margin: 0 2px;">
    <img src='https://img.shields.io/badge/License-CC BY--NC--SA--4.0-lightgreen?style=flat&logo=Lisence' alt='License'>
  </a>
</div>
"""

def app_gradio():
    with gr.Blocks(title="CatVTON") as demo:
        gr.Markdown(HEADER)
        with gr.Row():
            with gr.Column(scale=1, min_width=350):
                with gr.Row():
                    image_path = gr.Image(
                        type="filepath",
                        interactive=True,
                        visible=False,
                    )
                    person_image = gr.ImageEditor(
                        interactive=True, label="Person Image", type="filepath"
                    )

                with gr.Row():
                    with gr.Column(scale=1, min_width=230):
                        cloth_image = gr.Image(
                            interactive=True, label="Condition Image", type="filepath"
                        )
                    with gr.Column(scale=1, min_width=120):
                        gr.Markdown(
                            '<span style="color: #808080; font-size: small;">Two ways to provide Mask:<br>1. Upload the person image and use the `🖌️` above to draw the Mask (higher priority)<br>2. Select the `Try-On Cloth Type` to generate automatically </span>'
                        )
                        cloth_type = gr.Radio(
                            label="Try-On Cloth Type",
                            choices=["upper", "lower", "overall"],
                            value="upper",
                        )


                submit = gr.Button("Submit")
                gr.Markdown(
                    '<center><span style="color: #FF0000">!!! Click only Once, Wait for Delay !!!</span></center>'
                )

                gr.Markdown(
                    '<span style="color: #808080; font-size: small;">Advanced options can adjust details:<br>1. `Inference Step` may enhance details;<br>2. `CFG` is highly correlated with saturation;<br>3. `Random seed` may improve pseudo-shadow.</span>'
                )
                with gr.Accordion("Advanced Options", open=False):
                    num_inference_steps = gr.Slider(
                        label="Inference Step", minimum=10, maximum=100, step=5, value=50
                    )
                    # Guidence Scale
                    guidance_scale = gr.Slider(
                        label="CFG Strenth", minimum=0.0, maximum=7.5, step=0.5, value=2.5
                    )
                    # Random Seed
                    seed = gr.Slider(
                        label="Seed", minimum=-1, maximum=10000, step=1, value=42
                    )
                    show_type = gr.Radio(
                        label="Show Type",
                        choices=["result only", "input & result", "input & mask & result"],
                        value="input & mask & result",
                    )

            with gr.Column(scale=2, min_width=500):
                result_image = gr.Image(interactive=False, label="Result")
                with gr.Row():
                    # Photo Examples
                    root_path = "resource/demo/example"
                    with gr.Column():
                        men_exm = gr.Examples(
                            examples=[
                                os.path.join(root_path, "person", "men", _)
                                for _ in os.listdir(os.path.join(root_path, "person", "men"))
                            ],
                            examples_per_page=4,
                            inputs=image_path,
                            label="Person Examples ①",
                        )
                        women_exm = gr.Examples(
                            examples=[
                                os.path.join(root_path, "person", "women", _)
                                for _ in os.listdir(os.path.join(root_path, "person", "women"))
                            ],
                            examples_per_page=4,
                            inputs=image_path,
                            label="Person Examples ②",
                        )
                        gr.Markdown(
                            '<span style="color: #808080; font-size: small;">*Person examples come from the demos of <a href="https://huggingface.co/spaces/levihsu/OOTDiffusion">OOTDiffusion</a> and <a href="https://www.outfitanyone.org">OutfitAnyone</a>. </span>'
                        )
                    with gr.Column():
                        condition_upper_exm = gr.Examples(
                            examples=[
                                os.path.join(root_path, "condition", "upper", _)
                                for _ in os.listdir(os.path.join(root_path, "condition", "upper"))
                            ],
                            examples_per_page=4,
                            inputs=cloth_image,
                            label="Condition Upper Examples",
                        )
                        condition_overall_exm = gr.Examples(
                            examples=[
                                os.path.join(root_path, "condition", "overall", _)
                                for _ in os.listdir(os.path.join(root_path, "condition", "overall"))
                            ],
                            examples_per_page=4,
                            inputs=cloth_image,
                            label="Condition Overall Examples",
                        )
                        condition_person_exm = gr.Examples(
                            examples=[
                                os.path.join(root_path, "condition", "person", _)
                                for _ in os.listdir(os.path.join(root_path, "condition", "person"))
                            ],
                            examples_per_page=4,
                            inputs=cloth_image,
                            label="Condition Reference Person Examples",
                        )
                        gr.Markdown(
                            '<span style="color: #808080; font-size: small;">*Condition examples come from the Internet. </span>'
                        )

            image_path.change(
                person_example_fn, inputs=image_path, outputs=person_image
            )

            submit.click(
                submit_function,
                [
                    person_image,
                    cloth_image,
                    cloth_type,
                    num_inference_steps,
                    guidance_scale,
                    seed,
                    show_type,
                ],
                result_image,
            )
    demo.queue().launch(share=True, show_error=True)


if __name__ == "__main__":
    app_gradio()
```
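The mask branch at the top of `submit_function` decides which masking path runs: a drawn layer that holds a single value everywhere (nothing painted) is discarded so that `AutoMasker` generates the mask, and otherwise every non-zero pixel is promoted to 255. A minimal sketch of that normalization in isolation, assuming the layer is already loaded as a PIL image; `normalize_user_mask` is a hypothetical name, not a function in this commit:

```python
import numpy as np
from PIL import Image


def normalize_user_mask(layer: Image.Image):
    """Return None for a single-valued layer, else a binary 0/255 'L' mask.

    Mirrors submit_function: any non-zero pixel counts as painted.
    """
    arr = np.array(layer.convert("L"))  # np.array copies, so the result is writable
    if len(np.unique(arr)) == 1:
        # Nothing drawn: the caller falls back to the automatic masker.
        return None
    arr[arr > 0] = 255  # even faint brush strokes become fully masked
    return Image.fromarray(arr)
```

Binarizing before `resize_and_crop` and the later blur means a light brush stroke is treated exactly like a solid one.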

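For the "input & result" and "input & mask & result" display modes, `submit_function` stacks the n inputs into one column with `image_grid(..., n, 1)` and resizes that column to `(width // n, height)`. Stacking n images of size W x H gives a W x nH column, so this resize keeps the aspect ratio; the column is then pasted left of the result with a 5-pixel gap. The sketch below reproduces that composition, assuming every input has the same size as the result; `compose_side_by_side` is a hypothetical helper, and `image_grid` is copied from `app.py`:

```python
from PIL import Image


def image_grid(imgs, rows, cols):
    # Same helper as in app.py: tiles equally sized images in row-major order.
    assert len(imgs) == rows * cols
    w, h = imgs[0].size
    grid = Image.new("RGB", size=(cols * w, rows * h))
    for i, img in enumerate(imgs):
        grid.paste(img, box=(i % cols * w, i // cols * h))
    return grid


def compose_side_by_side(inputs, result, gap=5):
    """Place a shrunken column of inputs to the left of the result image."""
    width, height = inputs[0].size  # app.py reads the size from person_image
    n = len(inputs)
    condition_width = width // n  # W x nH column -> (W/n) x H keeps the aspect ratio
    column = image_grid(inputs, n, 1).resize((condition_width, height), Image.NEAREST)
    canvas = Image.new("RGB", (width + condition_width + gap, height))
    canvas.paste(column, (0, 0))
    canvas.paste(result, (condition_width + gap, 0))
    return canvas
```

With the default 768 x 1024 resolution, three inputs produce a 256-pixel column and a 1029-pixel-wide canvas; two inputs produce a 384-pixel column.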

model/DensePose/__init__.py (ADDED, @@ -0,0 +1,158 @@)


```python
import glob
import os
from random import randint
import shutil
import time

import cv2
import numpy as np
import torch
from PIL import Image
from densepose import add_densepose_config
from densepose.vis.base import CompoundVisualizer
from densepose.vis.densepose_results import DensePoseResultsFineSegmentationVisualizer
from densepose.vis.extractor import create_extractor, CompoundExtractor
from detectron2.config import get_cfg
from detectron2.data.detection_utils import read_image
from detectron2.engine.defaults import DefaultPredictor


class DensePose:
    """
    DensePose used in this project is from Detectron2 (https://github.com/facebookresearch/detectron2).
    These codes are modified from https://github.com/facebookresearch/detectron2/tree/main/projects/DensePose.
    The checkpoint is downloaded from https://github.com/facebookresearch/detectron2/blob/main/projects/DensePose/doc/DENSEPOSE_IUV.md#ModelZoo.

    We use the model R_50_FPN_s1x with id 165712039, but other models should also work.
    The config file is downloaded from https://github.com/facebookresearch/detectron2/tree/main/projects/DensePose/configs.
    Noted that the config file should match the model checkpoint and Base-DensePose-RCNN-FPN.yaml is also needed.
    """

    def __init__(self, model_path="./checkpoints/densepose_", device="cuda"):
        self.device = device
        self.config_path = os.path.join(model_path, 'densepose_rcnn_R_50_FPN_s1x.yaml')
        self.model_path = os.path.join(model_path, 'model_final_162be9.pkl')
        self.visualizations = ["dp_segm"]
        self.VISUALIZERS = {"dp_segm": DensePoseResultsFineSegmentationVisualizer}
        self.min_score = 0.8

        self.cfg = self.setup_config()
        self.predictor = DefaultPredictor(self.cfg)
        self.predictor.model.to(self.device)

    def setup_config(self):
        opts = ["MODEL.ROI_HEADS.SCORE_THRESH_TEST", str(self.min_score)]
        cfg = get_cfg()
        add_densepose_config(cfg)
        cfg.merge_from_file(self.config_path)
        cfg.merge_from_list(opts)
        cfg.MODEL.WEIGHTS = self.model_path
        cfg.freeze()
        return cfg

    @staticmethod
    def _get_input_file_list(input_spec: str):
        if os.path.isdir(input_spec):
            file_list = [os.path.join(input_spec, fname) for fname in os.listdir(input_spec)
                         if os.path.isfile(os.path.join(input_spec, fname))]
        elif os.path.isfile(input_spec):
            file_list = [input_spec]
        else:
            file_list = glob.glob(input_spec)
        return file_list

    def create_context(self, cfg, output_path):
        vis_specs = self.visualizations
        visualizers = []
        extractors = []
        for vis_spec in vis_specs:
            texture_atlas = texture_atlases_dict = None
            vis = self.VISUALIZERS[vis_spec](
                cfg=cfg,
                texture_atlas=texture_atlas,
                texture_atlases_dict=texture_atlases_dict,
                alpha=1.0
            )
            visualizers.append(vis)
            extractor = create_extractor(vis)
            extractors.append(extractor)
        visualizer = CompoundVisualizer(visualizers)
        extractor = CompoundExtractor(extractors)
        context = {
            "extractor": extractor,
            "visualizer": visualizer,
            "out_fname": output_path,
            "entry_idx": 0,
        }
        return context

    def execute_on_outputs(self, context, entry, outputs):
        extractor = context["extractor"]

        data = extractor(outputs)

        H, W, _ = entry["image"].shape
        result = np.zeros((H, W), dtype=np.uint8)

        data, box = data[0]
        x, y, w, h = [int(_) for _ in box[0].cpu().numpy()]
        i_array = data[0].labels[None].cpu().numpy()[0]
        result[y:y + h, x:x + w] = i_array
        result = Image.fromarray(result)
        result.save(context["out_fname"])

    def __call__(self, image_or_path, resize=512) -> Image.Image:
        """
        :param image_or_path: Path of the input image.
        :param resize: Resize the input image if its max size is larger than this value.
        :return: Dense pose image.
        """
        # random tmp path with timestamp
        tmp_path = f"./densepose_/tmp/"
        if not os.path.exists(tmp_path):
            os.makedirs(tmp_path)

        image_path = os.path.join(tmp_path, f"{int(time.time())}-{self.device}-{randint(0, 100000)}.png")
        if isinstance(image_or_path, str):
            assert image_or_path.split(".")[-1] in ["jpg", "png"], "Only support jpg and png images."
            shutil.copy(image_or_path, image_path)
        elif isinstance(image_or_path, Image.Image):
            image_or_path.save(image_path)
        else:
            shutil.rmtree(tmp_path)
            raise TypeError("image_path must be str or PIL.Image.Image")

        output_path = image_path.replace(".png", "_dense.png").replace(".jpg", "_dense.png")
        w, h = Image.open(image_path).size

        file_list = self._get_input_file_list(image_path)
        assert len(file_list), "No input images found!"
        context = self.create_context(self.cfg, output_path)
        for file_name in file_list:
            img = read_image(file_name, format="BGR")  # predictor expects BGR image.
            # resize
            if (_ := max(img.shape)) > resize:
                scale = resize / _
                img = cv2.resize(img, (int(img.shape[1] * scale), int(img.shape[0] * scale)))

            with torch.no_grad():
                outputs = self.predictor(img)["instances"]
                try:
                    self.execute_on_outputs(context, {"file_name": file_name, "image": img}, outputs)
                except Exception as e:
                    null_gray = Image.new('L', (1, 1))
                    null_gray.save(output_path)

        dense_gray = Image.open(output_path).convert("L")
        dense_gray = dense_gray.resize((w, h), Image.NEAREST)
        # remove image_path and output_path
        os.remove(image_path)
        os.remove(output_path)


        return dense_gray


if __name__ == '__main__':
    pass
```

|
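The resize step in `__call__` shrinks the input so that its longest side is at most `resize` pixels while keeping the aspect ratio. The size computation can be sketched on its own as below; `scaled_size` is a hypothetical helper, not part of the repository, and it compares only the two spatial dimensions (the original compares `max(img.shape)`, which also includes the channel count, a difference that does not matter for ordinary 3-channel images).

```python
from typing import Tuple


def scaled_size(height: int, width: int, resize: int) -> Tuple[int, int]:
    """Hypothetical helper: target (width, height) with the longer side <= `resize`."""
    longest = max(height, width)
    if longest <= resize:
        return width, height  # already small enough: keep the original size
    scale = resize / longest
    # int() truncates like the original code; cv2.resize takes dsize as (width, height)
    return int(width * scale), int(height * scale)


if __name__ == "__main__":
    print(scaled_size(1024, 768, 512))  # (384, 512)
```

With OpenCV this would be used as `img = cv2.resize(img, scaled_size(img.shape[0], img.shape[1], resize))`; returning `(width, height)` matches the argument order `cv2.resize` expects for `dsize`.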

model/DensePose/__pycache__/__init__.cpython-310.pyc

ADDED

Binary file (5.85 kB).

model/DensePose/__pycache__/__init__.cpython-312.pyc

ADDED

Binary file (8.91 kB).

model/DensePose/__pycache__/__init__.cpython-39.pyc

ADDED

Binary file (5.83 kB).

model/SCHP/LICENSE

ADDED

@@ -0,0 +1,21 @@

MIT License

Copyright (c) 2020 Peike Li

Permission is hereby granted, free of charge, to any person obtaining a copy
of this software and associated documentation files (the "Software"), to deal
in the Software without restriction, including without limitation the rights
to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
copies of the Software, and to permit persons to whom the Software is
furnished to do so, subject to the following conditions:

The above copyright notice and this permission notice shall be included in all
copies or substantial portions of the Software.

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
SOFTWARE.

model/SCHP/README.md

ADDED

@@ -0,0 +1,129 @@

# Self Correction for Human Parsing

[License: MIT](https://opensource.org/licenses/MIT)

An out-of-box human parsing representation extractor.

Our solution ranks 1st for all human parsing tracks (including single, multiple and video) in the third LIP challenge!

Features:
- [x] Out-of-box human parsing extractor for other downstream applications.
- [x] Pretrained models on three popular single-person human parsing datasets.
- [x] Training and inference code.
- [x] Simple yet effective extension to multi-person and video human parsing tasks.

## Requirements

```
conda env create -f environment.yaml
conda activate schp
pip install -r requirements.txt
```

## Simple Out-of-Box Extractor

The easiest way to get started is to use our trained SCHP models on your own images to extract human parsing representations. Here we provide state-of-the-art [trained models](https://drive.google.com/drive/folders/1uOaQCpNtosIjEL2phQKEdiYd0Td18jNo?usp=sharing) on three popular datasets. These three datasets use different label systems, so choose the one that best fits your own task.

**LIP** ([exp-schp-201908261155-lip.pth](https://drive.google.com/file/d/1k4dllHpu0bdx38J7H28rVVLpU-kOHmnH/view?usp=sharing))

* mIoU on LIP validation: **59.36%**.

* LIP is the largest single-person human parsing dataset, with 50,000+ images. It focuses on complicated real-world scenarios. LIP has 20 labels: 'Background', 'Hat', 'Hair', 'Glove', 'Sunglasses', 'Upper-clothes', 'Dress', 'Coat', 'Socks', 'Pants', 'Jumpsuits', 'Scarf', 'Skirt', 'Face', 'Left-arm', 'Right-arm', 'Left-leg', 'Right-leg', 'Left-shoe', 'Right-shoe'.

**ATR** ([exp-schp-201908301523-atr.pth](https://drive.google.com/file/d/1ruJg4lqR_jgQPj-9K0PP-L2vJERYOxLP/view?usp=sharing))

* mIoU on ATR test: **82.29%**.

* ATR is a large single-person human parsing dataset with 17,000+ images. It focuses on fashion AI. ATR has 18 labels: 'Background', 'Hat', 'Hair', 'Sunglasses', 'Upper-clothes', 'Skirt', 'Pants', 'Dress', 'Belt', 'Left-shoe', 'Right-shoe', 'Face', 'Left-leg', 'Right-leg', 'Left-arm', 'Right-arm', 'Bag', 'Scarf'.

**Pascal-Person-Part** ([exp-schp-201908270938-pascal-person-part.pth](https://drive.google.com/file/d/1E5YwNKW2VOEayK9mWCS3Kpsxf-3z04ZE/view?usp=sharing))

* mIoU on Pascal-Person-Part validation: **71.46%**.

* Pascal-Person-Part is a small single-person human parsing dataset with 3,000+ images. It focuses on body-part segmentation. Pascal-Person-Part has 7 labels: 'Background', 'Head', 'Torso', 'Upper Arms', 'Lower Arms', 'Upper Legs', 'Lower Legs'.

Choose one and have fun on your own task!

To extract human parsing representations, put your own images in the `INPUT_PATH` folder, download a pretrained model, and run the following command. Output images with the same file names will be saved in `OUTPUT_PATH`.

```
python simple_extractor.py --dataset [DATASET] --model-restore [CHECKPOINT_PATH] --input-dir [INPUT_PATH] --output-dir [OUTPUT_PATH]
```

**[Updated]** A [Colab demo](https://colab.research.google.com/drive/1JOwOPaChoc9GzyBi5FUEYTSaP2qxJl10?usp=sharing) for quick inference is also available, provided by [@levindabhi](https://github.com/levindabhi).

The `DATASET` argument has three options: 'lip', 'atr' and 'pascal'. Note that each pixel in the output images stores the predicted label number, and the output images have the same size as the inputs. For better visualization, a palette is attached to the output images. We suggest reading them with `PIL`.
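Because each output pixel stores a label id and the palette only affects display, the parsing result can be read back as a label map with `PIL` and NumPy. The sketch below assumes the extractor writes palettized (mode "P") PNGs, as described above; `save_label_map` is a hypothetical helper that only fakes an output file so the snippet runs without the model, and the LIP label order is copied from the list above.

```python
import os
import tempfile

import numpy as np
from PIL import Image

# LIP label list copied from the README; the list index is the label id.
LIP_LABELS = ['Background', 'Hat', 'Hair', 'Glove', 'Sunglasses', 'Upper-clothes', 'Dress', 'Coat',
              'Socks', 'Pants', 'Jumpsuits', 'Scarf', 'Skirt', 'Face', 'Left-arm', 'Right-arm',
              'Left-leg', 'Right-leg', 'Left-shoe', 'Right-shoe']


def save_label_map(label_map: np.ndarray, path: str) -> None:
    """Hypothetical stand-in for an extractor output: a uint8 label map saved as a palettized PNG."""
    img = Image.fromarray(label_map.astype(np.uint8))  # mode "L"
    # putpalette switches the image to mode "P"; a full 256-entry palette keeps
    # Pillow from packing the PNG into fewer bits per pixel.
    img.putpalette([0] * (256 * 3))
    img.save(path)


def load_label_map(path: str) -> np.ndarray:
    """Return per-pixel label ids from a palettized parsing PNG."""
    # For a mode "P" image, np.array yields palette indices (the labels);
    # calling .convert("RGB") first would yield the visualization colors instead.
    return np.array(Image.open(path))


def class_mask(label_map: np.ndarray, name: str, labels=LIP_LABELS) -> np.ndarray:
    """Boolean mask of the pixels predicted as `name`."""
    return label_map == labels.index(name)


if __name__ == "__main__":
    fake = np.zeros((4, 4), dtype=np.uint8)
    fake[1:3, 1:3] = LIP_LABELS.index('Upper-clothes')  # label id 5
    path = os.path.join(tempfile.mkdtemp(), "demo.png")
    save_label_map(fake, path)
    print(int(class_mask(load_label_map(path), 'Upper-clothes').sum()))  # 4
```

For the ATR or Pascal-Person-Part checkpoints, pass the matching label list instead of `LIP_LABELS`, since the same index means a different class in each label system.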

If you need the feature map representations in addition to the final parsing images, add the `--logits` flag to save the output feature maps. These feature maps are the logits before the softmax layer.
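Since the saved feature maps are pre-softmax logits, per-pixel class probabilities and the label map can be recovered from them. The sketch below assumes the logits have been loaded as a NumPy array laid out as (height, width, num_classes); that layout and the helper names are assumptions, not something this README specifies, so check the array shape of your own output first.

```python
import numpy as np


def softmax(logits: np.ndarray, axis: int = -1) -> np.ndarray:
    """Numerically stable softmax along `axis`."""
    shifted = logits - logits.max(axis=axis, keepdims=True)
    exp = np.exp(shifted)
    return exp / exp.sum(axis=axis, keepdims=True)


def logits_to_parsing(logits: np.ndarray):
    """Assumes an (H, W, num_classes) array; returns (label map, per-pixel confidence)."""
    probs = softmax(logits, axis=-1)
    labels = probs.argmax(axis=-1).astype(np.uint8)
    confidence = probs.max(axis=-1)
    return labels, confidence


if __name__ == "__main__":
    rng = np.random.default_rng(0)
    fake_logits = rng.normal(size=(2, 3, 20))  # hypothetical 2x3 image, 20 LIP classes
    labels, conf = logits_to_parsing(fake_logits)
    # softmax is monotonic per pixel, so argmax over logits and over probabilities agree
    assert np.array_equal(labels, fake_logits.argmax(axis=-1))
    print(labels.shape, conf.shape)  # (2, 3) (2, 3)
```

If only the label map is needed, `logits.argmax(axis=-1)` alone is enough; the softmax is useful when you also want a confidence value per pixel.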

## Dataset Preparation

Please download the [LIP](http://sysu-hcp.net/lip/) dataset and organize it with the following structure.

```commandline
data/LIP
|--- train_images # 30462 training single person images
|--- val_images # 10000 validation single person images
|--- train_segmentations # 30462 training annotations
|--- val_segmentations # 10000 validation annotations
|--- train_id.txt # training image list
|--- val_id.txt # validation image list
```
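Before training, it can help to confirm that every id in the split lists has both an image and an annotation. A minimal sketch, assuming each `*_id.txt` holds one image id per line without an extension, images are stored as `<id>.jpg` and annotations as `<id>.png`; these file-naming conventions and the helper names `lip_pairs` and `missing_files` are assumptions for illustration, not taken from this repository.

```python
import os
from typing import List, Tuple


def lip_pairs(root: str, split: str = "train") -> List[Tuple[str, str]]:
    """Hypothetical helper: (image_path, segmentation_path) pairs for a LIP split.

    Assumes `<split>_id.txt` lists one id per line, images are `<id>.jpg` in
    `<split>_images`, and annotations are `<id>.png` in `<split>_segmentations`.
    """
    with open(os.path.join(root, f"{split}_id.txt")) as f:
        ids = [line.strip() for line in f if line.strip()]  # skip blank lines
    return [
        (os.path.join(root, f"{split}_images", f"{i}.jpg"),
         os.path.join(root, f"{split}_segmentations", f"{i}.png"))
        for i in ids
    ]


def missing_files(pairs: List[Tuple[str, str]]) -> List[str]:
    """Paths from `pairs` that do not exist on disk."""
    return [p for pair in pairs for p in pair if not os.path.isfile(p)]


if __name__ == "__main__":
    if os.path.isdir("data/LIP"):
        pairs = lip_pairs("data/LIP", "val")
        print(len(pairs), "pairs,", len(missing_files(pairs)), "missing files")
```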

## Training

```
python train.py
```
By default, the trained model is saved in the `./log` directory. See the script's arguments for more details.

## Evaluation
```
python evaluate.py --model-restore [CHECKPOINT_PATH]
```
`CHECKPOINT_PATH` should be the path to the trained model.

## Extension on Multiple Human Parsing

Please read [MultipleHumanParsing.md](./mhp_extension/README.md) for more details.

## Citation

Please cite our work if you find this repo useful in your research.

```latex
@article{li2020self,
  title={Self-Correction for Human Parsing},
  author={Li, Peike and Xu, Yunqiu and Wei, Yunchao and Yang, Yi},
  journal={IEEE Transactions on Pattern Analysis and Machine Intelligence},
  year={2020},
  doi={10.1109/TPAMI.2020.3048039}}
```

## Visualization

* Source Image.

* LIP Parsing Result.

* ATR Parsing Result.

* Pascal-Person-Part Parsing Result.

* Source Image.

* Instance Human Mask.

* Global Human Parsing Result.

* Multiple Human Parsing Result.

## Related
Our code adopts [InplaceSyncBN](https://github.com/mapillary/inplace_abn) to reduce GPU memory usage.

There is also a [PaddlePaddle](https://github.com/PaddlePaddle/PaddleSeg/tree/develop/contrib/ACE2P) implementation of this project.

model/SCHP/__init__.py

ADDED

@@ -0,0 +1,163 @@

from model.SCHP import networks
from model.SCHP.utils.transforms import get_affine_transform, transform_logits

from collections import OrderedDict
import torch
import numpy as np
import cv2
from PIL import Image
from torchvision import transforms

def get_palette(num_cls):
    """ Returns the color map for visualizing the segmentation mask.
    Args:
        num_cls: Number of classes
    Returns:
        The color map
    """
    n = num_cls
    palette = [0] * (n * 3)
    for j in range(0, n):
        lab = j
        palette[j * 3 + 0] = 0
        palette[j * 3 + 1] = 0
        palette[j * 3 + 2] = 0
        i = 0
        while lab:
            palette[j * 3 + 0] |= (((lab >> 0) & 1) << (7 - i))
            palette[j * 3 + 1] |= (((lab >> 1) & 1) << (7 - i))
            palette[j * 3 + 2] |= (((lab >> 2) & 1) << (7 - i))
            i += 1
            lab >>= 3
    return palette
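`get_palette` builds a flat `[R0, G0, B0, R1, G1, B1, ...]` list by spreading the label's bits across the three channels, three bits at a time, starting from the most significant bit of each channel (the same scheme as the PASCAL VOC color map). The standalone sketch below copies that logic (dropping the redundant zeroing, since the list already starts at zero) so it runs without the `model.SCHP` package, and adds a hypothetical `colorize` helper that attaches the palette to a label map.

```python
import numpy as np
from PIL import Image


def get_palette(num_cls):
    """Equivalent copy of get_palette above (flat RGB list, 3 values per class)."""
    palette = [0] * (num_cls * 3)
    for j in range(num_cls):
        lab, i = j, 0
        while lab:
            # label bits 0/1/2 go to R/G/B, filling each channel from bit 7 downward
            palette[j * 3 + 0] |= ((lab >> 0) & 1) << (7 - i)
            palette[j * 3 + 1] |= ((lab >> 1) & 1) << (7 - i)
            palette[j * 3 + 2] |= ((lab >> 2) & 1) << (7 - i)
            i += 1
            lab >>= 3
    return palette


def colorize(label_map: np.ndarray, num_cls: int) -> Image.Image:
    """Hypothetical helper: attach the palette to a label map (mode "L" becomes "P")."""
    img = Image.fromarray(label_map.astype(np.uint8))
    img.putpalette(get_palette(num_cls))
    return img


if __name__ == "__main__":
    print(get_palette(5))  # [0, 0, 0, 128, 0, 0, 0, 128, 0, 128, 128, 0, 0, 0, 128]
```

Class 0 (background) maps to black and classes 1 to 4 to dark red, green, yellow and blue; class 8 is the first to use the next bit down, giving `(64, 0, 0)`.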

dataset_settings = {
    'lip': {
        'input_size': [473, 473],
        'num_classes': 20,
        'label': ['Background', 'Hat', 'Hair', 'Glove', 'Sunglasses', 'Upper-clothes', 'Dress', 'Coat',
                  'Socks', 'Pants', 'Jumpsuits', 'Scarf', 'Skirt', 'Face', 'Left-arm', 'Right-arm',
                  'Left-leg', 'Right-leg', 'Left-shoe', 'Right-shoe']
    },
    'atr': {
        'input_size': [512, 512],
        'num_classes': 18,
        'label': ['Background', 'Hat', 'Hair', 'Sunglasses', 'Upper-clothes', 'Skirt', 'Pants', 'Dress', 'Belt',
                  'Left-shoe', 'Right-shoe', 'Face', 'Left-leg', 'Right-leg', 'Left-arm', 'Right-arm', 'Bag', 'Scarf']
    },
    'pascal': {
        'input_size': [512, 512],
        'num_classes': 7,
        'label': ['Background', 'Head', 'Torso', 'Upper Arms', 'Lower Arms', 'Upper Legs', 'Lower Legs'],
    }
}
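The `SCHP` constructor that follows chooses one of these settings by substring-matching the whole checkpoint path, testing `'lip'` first, so a directory name such as `/home/philip/` would select the LIP settings regardless of the file name. A possible variant, sketched here as a hypothetical helper that is not part of the repository, matches only the file name while keeping the same priority order.

```python
import os


def infer_dataset_type(ckpt_path: str) -> str:
    """Hypothetical helper: guess 'lip', 'atr' or 'pascal' from the checkpoint file name only."""
    name = os.path.basename(ckpt_path).lower()
    for key in ("lip", "atr", "pascal"):  # same priority order as SCHP.__init__
        if key in name:
            return key
    raise ValueError(f"Dataset type not found in checkpoint name: {name}")


if __name__ == "__main__":
    # The directory contains "lip", but only the file name is inspected.
    print(infer_dataset_type("/home/philip/ckpt/exp-schp-201908301523-atr.pth"))  # atr
```

Raising `ValueError` instead of using `assert` keeps the check active even when Python runs with `-O`, which strips assertions.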

class SCHP:
    def __init__(self, ckpt_path, device):
        dataset_type = None
        if 'lip' in ckpt_path:
            dataset_type = 'lip'
        elif 'atr' in ckpt_path:
            dataset_type = 'atr'
        elif 'pascal' in ckpt_path:
            dataset_type = 'pascal'
        assert dataset_type is not None, 'Dataset type not found in checkpoint path'
        self.device = device
        self.num_classes = dataset_settings[dataset_type]['num_classes']
        self.input_size = dataset_settings[dataset_type]['input_size']
        self.aspect_ratio = self.input_size[1] * 1.0 / self.input_size[0]
        self.palette = get_palette(self.num_classes)

        self.label = dataset_settings[dataset_type]['label']
        self.model = networks.init_model('resnet101', num_classes=self.num_classes, pretrained=None).to(device)
        self.load_ckpt(ckpt_path)
        self.model.eval()