Commit 5282eae, committed by Li
1 parent: 6eb2745

init

- app.py +225 -0

- open_flamingo/.github/workflows/black.yml +10 -0

- open_flamingo/.gitignore +149 -0

- open_flamingo/HISTORY.md +3 -0

- open_flamingo/LICENSE +21 -0

- open_flamingo/MODEL_CARD.md +44 -0

- open_flamingo/Makefile +19 -0

- open_flamingo/README.md +233 -0

- open_flamingo/TERMS_AND_CONDITIONS.md +15 -0

- open_flamingo/docs/flamingo.png +0 -0

- open_flamingo/environment.yml +10 -0

- open_flamingo/open_flamingo/__init__.py +2 -0

- open_flamingo/open_flamingo/eval/__init__.py +1 -0

- open_flamingo/open_flamingo/eval/classification.py +147 -0

- open_flamingo/open_flamingo/eval/coco_metric.py +23 -0

- open_flamingo/open_flamingo/eval/eval_datasets.py +138 -0

- open_flamingo/open_flamingo/eval/evaluate.py +1094 -0

- open_flamingo/open_flamingo/eval/evaluate2.py +1113 -0

- open_flamingo/open_flamingo/eval/imagenet_utils.py +1007 -0

- open_flamingo/open_flamingo/eval/ok_vqa_utils.py +213 -0

- open_flamingo/open_flamingo/eval/vqa_metric.py +594 -0

- open_flamingo/open_flamingo/src/__init__.py +0 -0

- open_flamingo/open_flamingo/src/factory.py +278 -0

- open_flamingo/open_flamingo/src/flamingo.py +236 -0

- open_flamingo/open_flamingo/src/flamingo_lm.py +203 -0

- open_flamingo/open_flamingo/src/helpers.py +263 -0

- open_flamingo/open_flamingo/src/utils.py +31 -0

- open_flamingo/open_flamingo/train/__init__.py +1 -0

- open_flamingo/open_flamingo/train/data.deprecated.py +812 -0

- open_flamingo/open_flamingo/train/data2.py +573 -0

- open_flamingo/open_flamingo/train/distributed.py +128 -0

- open_flamingo/open_flamingo/train/train.py +587 -0

- open_flamingo/open_flamingo/train/train_utils.py +371 -0

- open_flamingo/requirements-dev.txt +5 -0

- open_flamingo/requirements.txt +16 -0

- open_flamingo/setup.py +58 -0

- open_flamingo/tests/test_flamingo_model.py +77 -0

- open_flamingo/tools/check_refcoco.py +14 -0

- open_flamingo/tools/convert_mmc4_to_wds.py +124 -0

- open_flamingo/tools/make_gqa_val.py +0 -0

- open_flamingo/tools/make_mmc4_global_table.py +31 -0

- open_flamingo/tools/make_soft_link.py +26 -0

- open_flamingo/tools/make_soft_link_blip2_data.py +30 -0

- open_flamingo/tools/make_soft_link_laion.py +23 -0

- open_flamingo/tools/make_vqav2_ft_dataset.py +24 -0

- open_flamingo/tools/prepare_mini_blip2_dataset.py +178 -0

- open_flamingo/tools/prepare_pile.py +31 -0

app.py

ADDED

@@ -0,0 +1,225 @@
+import os
+os.system("cd open_flamingo && pip install .")
+import numpy as np
+import torch
+from PIL import Image
+
+from open_flamingo.train.distributed import init_distributed_device, world_info_from_env
+import string
+
+
+
+import gradio as gr
+import torch
+from PIL import Image
+# from huggingface_hub import hf_hub_download, login
+
+from open_flamingo.src.factory import create_model_and_transforms
+flamingo, image_processor, tokenizer, vis_embed_size = create_model_and_transforms(
+    "ViT-L-14",
+    "datacomp_xl_s13b_b90k",
+    "facebook/opt-350m",
+    "facebook/opt-350m",
+    add_visual_grounding=True,
+    location_token_num=1000,
+    add_visual_token = True,
+    use_format_v2 = True,
+)
+
+checkpoint_path = hf_hub_download("chendl/mm", "checkpoint_opt350m.pt")
+checkpoint = torch.load(args.checkpoint_path, map_location="cpu")
+model_state_dict = {}
+for key in checkpoint["model_state_dict"].keys():
+    model_state_dict[key.replace("module.", "")] = checkpoint["model_state_dict"][key]
+if "vision_encoder.logit_scale"in model_state_dict:
+    # previous checkpoint has some unnecessary weights
+    del model_state_dict["vision_encoder.logit_scale"]
+    del model_state_dict["vision_encoder.visual.proj"]
+    del model_state_dict["vision_encoder.visual.ln_post.weight"]
+    del model_state_dict["vision_encoder.visual.ln_post.bias"]
+flamingo.load_state_dict(model_state_dict, strict=True)
+

+
+def generate(
+    idx,
+    image,
+    text,
+    tsvfile,
+    vis_embed_size=256,
+    rank=0,
+    world_size=1,
+):
+    if image is None:
+        raise gr.Error("Please upload an image.")
+    flamingo.eval().cuda()
+    loc_token_ids = []
+    for i in range(1000):
+        loc_token_ids.append(int(tokenizer(f"<loc_{i}>", add_special_tokens=False)["input_ids"][-1]))
+    media_token_id = tokenizer("<|#image#|>", add_special_tokens=False)["input_ids"][-1]
+    endofchunk_token_id = tokenizer("<|endofchunk|>", add_special_tokens=False)["input_ids"][-1]
+    endofmedia_token_id = tokenizer("<|#endofimage#|>", add_special_tokens=False)["input_ids"][-1]
+    pad_token_id = tokenizer(tokenizer.pad_token, add_special_tokens=False)["input_ids"][-1]
+    bos_token_id = tokenizer(tokenizer.bos_token, add_special_tokens=False)["input_ids"][-1]
+    all_ids = set(range(flamingo.lang_encoder.lm_head.out_features))
+    bad_words_ids = list(all_ids - set(loc_token_ids))
+    bad_words_ids = [[b] for b in bad_words_ids]
+    min_loc_token_id = min(loc_token_ids)
+    max_loc_token_id = max(loc_token_ids)
+    image = Image.open(image).convert("RGB")
+    width = image.width
+    height = image.height
+    image = image.resize((224, 224))
+    batch_images = image_processor(image).unsqueeze(0).unsqueeze(1).unsqueeze(0)
+    prompt = [f"<|#image#|>{tokenizer.pad_token*vis_embed_size}<|#endofimage#|><|#obj#|>{text.rstrip('.')}"]
+    encodings = tokenizer(
+        prompt,
+        padding="longest",
+        truncation=True,
+        return_tensors="pt",
+        max_length=2000,
+    )
+    input_ids = encodings["input_ids"]
+    attention_mask = encodings["attention_mask"]
+    image_start_index_list = ((input_ids == media_token_id).nonzero(as_tuple=True)[-1] + 1).tolist()
+    image_start_index_list = [[x] for x in image_start_index_list]
+    image_nums = [1] * len(input_ids)
+    outputs = get_outputs(
+        model=flamingo,
+        batch_images=batch_images.cuda(),
+        attention_mask=attention_mask.cuda(),
+        max_generation_length=5,
+        min_generation_length=4,
+        num_beams=1,
+        length_penalty=1.0,
+        input_ids=input_ids.cuda(),
+        bad_words_ids=bad_words_ids,
+        image_start_index_list=image_start_index_list,
+        image_nums=image_nums,
+    )
+    box = []
+    for o in outputs[0]:
+        if o >= min_loc_token_id and o <= max_loc_token_id:
+            box.append(o.item() - min_loc_token_id)
+        if len(box) == 4:
+            break
+    # else:
+    #     tqdm.write(f"output: {tokenizer.batch_decode(outputs)}")
+    #     tqdm.write(f"prompt: {prompt}")
+
+    gen_text = tokenizer.batch_decode(outputs)
+    return (
+        f"Output:{gen_text}"
+        if idx != 2
+        else f"Question: {text.strip()} Answer: {gen_text}"
+    )
+
+

+with gr.Blocks() as demo:
+    gr.Markdown(
+        """
+    # 🦩 OpenFlamingo Demo
+
+    Blog posts: #1 [Announcing OpenFlamingo: An open-source framework for training vision-language models with in-context learning](https://laion.ai/blog/open-flamingo/) // #2 [OpenFlamingo v2: New Models and Enhanced Training Setup](https://laion.ai/blog/open-flamingo-v2/)
+
+    GitHub: [open_flamingo](https://github.com/mlfoundations/open_flamingo)
+
+    In this demo we showcase the in-context learning capabilities of the OpenFlamingo-9B model, a large multimodal model trained on top of mpt-7b. Note that we add two additional demonstrations to the ones presented to improve the demo experience.
+    The model is trained on an interleaved mixture of text and images and is able to generate text conditioned on sequences of images/text. To safeguard against harmful generations, we detect toxic text in the model output and reject it. However, we understand that this is not a perfect solution and we encourage you to use this demo responsibly. If you find that the model is generating harmful text, please report it using this [form](https://forms.gle/StbcPvyyW2p3Pc7z6).
+    """
+    )
+
+    with gr.Accordion("See terms and conditions"):
+        gr.Markdown("""**Please read the following information carefully before proceeding.**
+
+        [OpenFlamingo-9B](https://huggingface.co/openflamingo/OpenFlamingo-9B-vitl-mpt7b) is a **research prototype** that aims to enable users to interact with AI through both language and images. AI agents equipped with both language and visual understanding can be useful on a larger variety of tasks compared to models that communicate solely via language. By releasing an open-source research prototype, we hope to help the research community better understand the risks and limitations of modern visual-language AI models and accelerate the development of safer and more reliable methods.
+        **Limitations.** OpenFlamingo-9B is built on top of the [MPT-7B](https://huggingface.co/mosaicml/mpt-7b) large language model developed by Together.xyz. Large language models are trained on mostly unfiltered internet data, and have been shown to be able to produce toxic, unethical, inaccurate, and harmful content. On top of this, OpenFlamingo’s ability to support visual inputs creates additional risks, since it can be used in a wider variety of applications; image+text models may carry additional risks specific to multimodality. Please use discretion when assessing the accuracy or appropriateness of the model’s outputs, and be mindful before sharing its results.
+        **Privacy and data collection.** This demo does NOT store any personal information on its users, and it does NOT store user queries.""")
+
+
+    with gr.Tab("📷 Image Captioning"):
+        with gr.Row():
+
+
+            query_image = gr.Image(type="pil")
+        with gr.Row():
+            chat_input = gr.Textbox(lines=1, label="Chat Input")
+        text_output = gr.Textbox(value="Output:", label="Model output")
+
+        run_btn = gr.Button("Run model")
+
+
+
+        def on_click_fn(img,text): return generate(0, img, text)
+
+        run_btn.click(on_click_fn, inputs=[query_image,chat_input], outputs=[text_output])
+
+    with gr.Tab("🦓 Grounding"):
+        with gr.Row():
+            query_image = gr.Image(type="pil")
+        with gr.Row():
+            chat_input = gr.Textbox(lines=1, label="Chat Input")
+        text_output = gr.Textbox(value="Output:", label="Model output")
+
+        run_btn = gr.Button("Run model")
+
+
+
+        def on_click_fn(img,text): return generate(0, img, text)
+
+        run_btn.click(on_click_fn, inputs=[query_image,chat_input], outputs=[text_output])
+
+    with gr.Tab("🔢 Counting objects"):
+        with gr.Row():
+            query_image = gr.Image(type="pil")
+        with gr.Row():
+            chat_input = gr.Textbox(lines=1, label="Chat Input")
+        text_output = gr.Textbox(value="Output:", label="Model output")
+
+        run_btn = gr.Button("Run model")
+
+
+        def on_click_fn(img,text): return generate(0, img, text)
+
+
+        run_btn.click(on_click_fn, inputs=[query_image, chat_input], outputs=[text_output])
+
+    with gr.Tab("🕵️ Visual Question Answering"):
+        with gr.Row():
+            query_image = gr.Image(type="pil")
+        with gr.Row():
+            question = gr.Textbox(lines=1, label="Question")
+        text_output = gr.Textbox(value="Output:", label="Model output")
+
+        run_btn = gr.Button("Run model")
+
+
+        def on_click_fn(img, txt): return generate(2, img, txt)
+
+
+        run_btn.click(
+            on_click_fn, inputs=[query_image, question], outputs=[text_output]
+        )
+
+    with gr.Tab("🌎 Custom"):
+        gr.Markdown(
+            """### Customize the demonstration by uploading your own images and text samples.
+            ### **Note: Any text prompt you use will be prepended with an 'Output:', so you don't need to include it in your prompt.**"""
+        )
+        with gr.Row():
+            query_image = gr.Image(type="pil")
+        with gr.Row():
+            question = gr.Textbox(lines=1, label="Question")
+        text_output = gr.Textbox(value="Output:", label="Model output")
+
+        run_btn = gr.Button("Run model")
+
+
+        def on_click_fn(img, txt): return generate(2, img, txt)
+
+
+        run_btn.click(
+            on_click_fn, inputs=[query_image, question], outputs=[text_output]
+        )
+
+demo.queue(concurrency_count=1)
+demo.launch()
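Two steps in `app.py` above are easy to check in isolation: the clean-up of checkpoint keys before `load_state_dict`, and the loop that collects four location tokens into `box`. The following is a minimal sketch of both on plain Python values, not the code the Space runs. The function names `clean_state_dict` and `extract_box` are hypothetical. Unlike the commit, the sketch drops each unused key independently (rather than only when `vision_encoder.logit_scale` is present) and returns `None` when fewer than four location tokens are found. It also assumes, as the commit's range check does, that the `<loc_i>` token ids are contiguous.

```python
from typing import Any, Dict, Iterable, List, Optional, Sequence

# Keys that app.py deletes as "unnecessary weights" from older checkpoints.
UNUSED_VISION_KEYS = (
    "vision_encoder.logit_scale",
    "vision_encoder.visual.proj",
    "vision_encoder.visual.ln_post.weight",
    "vision_encoder.visual.ln_post.bias",
)


def clean_state_dict(
    raw: Dict[str, Any], drop: Iterable[str] = UNUSED_VISION_KEYS
) -> Dict[str, Any]:
    """Remove every "module." substring from the keys, then drop unused keys."""
    # Same renaming as the commit: str.replace removes all occurrences.
    cleaned = {key.replace("module.", ""): value for key, value in raw.items()}
    for key in drop:
        # Assumption: a missing key is fine (the commit uses `del`, which would raise).
        cleaned.pop(key, None)
    return cleaned


def extract_box(
    token_ids: Sequence[int], min_loc_id: int, max_loc_id: int
) -> Optional[List[int]]:
    """Return the first four location-bin indices in token_ids, or None."""
    box: List[int] = []
    for token_id in token_ids:
        if min_loc_id <= token_id <= max_loc_id:
            # Bin index relative to the lowest location token id.
            box.append(token_id - min_loc_id)
            if len(box) == 4:
                return box
    return None  # hypothetical choice: signal an incomplete box explicitly


if __name__ == "__main__":
    raw = {"module.lang_encoder.weight": 1, "module.vision_encoder.logit_scale": 2}
    print(clean_state_dict(raw))  # {'lang_encoder.weight': 1}
    # Made-up ids: location tokens occupy 1000..1999 in this toy vocabulary.
    print(extract_box([7, 1000, 1250, 1500, 1999, 3], 1000, 1999))  # [0, 250, 500, 999]
```

Working on plain values keeps the sketch checkable without a GPU or the checkpoint file; with tensor outputs, each id would first need `.item()`, as the commit does when appending to `box`.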

open_flamingo/.github/workflows/black.yml

ADDED

@@ -0,0 +1,10 @@
+name: Lint
+
+on: [push, pull_request]
+
+jobs:
+  lint:
+    runs-on: ubuntu-latest
+    steps:
+      - uses: actions/checkout@v2
+      - uses: psf/black@stable

open_flamingo/.gitignore

ADDED

@@ -0,0 +1,149 @@
+*.pt
+GRiT/
+temp/
+eval_*.sh
+*.json
+eval_results/
+pycocoevalcap/
+checkpoints_align_*
+segment-anything/
+
+wandb/
+checkpoints*/
+
+# Byte-compiled / optimized / DLL files
+__pycache__/
+*.py[cod]
+*$py.class
+
+# C extensions
+*.so
+
+# Distribution / packaging
+.Python
+build/
+develop-eggs/
+dist/
+downloads/
+eggs/
+.eggs/
+lib/
+lib64/
+parts/
+sdist/
+var/
+wheels/
+pip-wheel-metadata/
+share/python-wheels/
+*.egg-info/
+.installed.cfg
+*.egg
+MANIFEST
+
+# PyInstaller
+# Usually these files are written by a python script from a template
+# before PyInstaller builds the exe, so as to inject date/other infos into it.
+*.manifest
+*.spec
+
+# Installer logs
+pip-log.txt
+pip-delete-this-directory.txt
+
+# Unit test / coverage reports
+htmlcov/
+.tox/
+.nox/
+.coverage
+.coverage.*
+.cache
+nosetests.xml
+coverage.xml
+*.cover
+*.py,cover
+.hypothesis/
+.pytest_cache/
+
+# Translations
+*.mo
+*.pot
+
+# Django stuff:
+*.log
+local_settings.py
+db.sqlite3
+db.sqlite3-journal
+
+# Flask stuff:
+instance/
+.webassets-cache
+
+# Scrapy stuff:
+.scrapy
+
+# Sphinx documentation
+docs/_build/
+
+# PyBuilder
+target/
+
+# Jupyter Notebook
+.ipynb_checkpoints
+
+# IPython
+profile_default/
+ipython_config.py
+
+# pyenv
+.python-version
+
+# pipenv
+# According to pypa/pipenv#598, it is recommended to include Pipfile.lock in version control.
+# However, in case of collaboration, if having platform-specific dependencies or dependencies
+# having no cross-platform support, pipenv may install dependencies that don't work, or not
+# install all needed dependencies.
+#Pipfile.lock
+
+# PEP 582; used by e.g. github.com/David-OConnor/pyflow
+__pypackages__/
+
+# Celery stuff
+celerybeat-schedule
+celerybeat.pid
+
+# SageMath parsed files
+*.sage.py
+
+# Environments
+.env
+.venv
+env/
+venv/
+ENV/
+env.bak/
+venv.bak/
+
+# Pycharm project settings
+.idea
+
+# Spyder project settings
+.spyderproject
+.spyproject
+
+# Rope project settings
+.ropeproject
+
+# mkdocs documentation
+/site
+
+# mypy
+.mypy_cache/
+.dmypy.json
+dmypy.json
+
+*.out
+src/wandb
+wandb
+
+# Pyre type checker
+.pyre/

open_flamingo/HISTORY.md

ADDED

@@ -0,0 +1,3 @@
+## 1.0.0
+
+* it works

open_flamingo/LICENSE

ADDED

@@ -0,0 +1,21 @@
+MIT License
+
+Copyright (c) 2023 Anas Awadalla, Irena Gao, Joshua Gardner, Jack Hessel, Yusuf Hanafy, Wanrong Zhu, Kalyani Marathe, Yonatan Bitton, Samir Gadre, Jenia Jitsev, Simon Kornblith, Pang Wei Koh, Gabriel Ilharco, Mitchell Wortsman, Ludwig Schmidt.
+
+Permission is hereby granted, free of charge, to any person obtaining a copy
+of this software and associated documentation files (the "Software"), to deal
+in the Software without restriction, including without limitation the rights
+to use, copy, modify, merge, publish, distribute, sublicense, and/or sell
+copies of the Software, and to permit persons to whom the Software is
+furnished to do so, subject to the following conditions:
+
+The above copyright notice and this permission notice shall be included in all
+copies or substantial portions of the Software.
+
+THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR
+IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,
+FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE
+AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER
+LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,
+OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE
+SOFTWARE.

open_flamingo/MODEL_CARD.md

ADDED

@@ -0,0 +1,44 @@
+---
+language: en
+datasets:
+- laion2b
+---
+
+# OpenFlamingo-9B
+
+[Blog post]() | [Code](https://github.com/mlfoundations/open_flamingo) | [Demo](https://7164d2142d11.ngrok.app)
+
+OpenFlamingo is an open source implementation of DeepMind's [Flamingo](https://www.deepmind.com/blog/tackling-multiple-tasks-with-a-single-visual-language-model) models.
+OpenFlamingo-9B is built off of [CLIP ViT-L/14](https://huggingface.co/openai/clip-vit-large-patch14) and [LLaMA-7B](https://ai.facebook.com/blog/large-language-model-llama-meta-ai/).
+
+
+## Model Details
+We freeze the pretrained vision encoder and language model, and then we train connecting Perceiver modules and cross-attention layers, following the original Flamingo paper.
+
+Our training data is a mixture of [LAION 2B](https://huggingface.co/datasets/laion/laion2B-en) and a large interleaved image-text dataset called Multimodal C4, which will be released soon.
+
+The current model is an early checkpoint of an ongoing effort. This checkpoint has seen 5 million interleaved image-text examples from Multimodal C4 and 10 million samples from LAION 2B.
+
+## Uses
+OpenFlamingo-9B is intended to be used **for academic research purposes only.** Commercial use is prohibited, in line with LLaMA's non-commercial license.
+
+### Bias, Risks, and Limitations
+This model may generate inaccurate or offensive outputs, reflecting biases in its training data and pretrained priors.
+
+In an effort to mitigate current potential biases and harms, we have deployed a text content filter on model outputs in the OpenFlamingo demo. We continue to red-team the model to understand and improve its safety.
+
+## Evaluation
+We've evaluated this checkpoint on the validation sets for two vision-language tasks: COCO captioning and VQAv2. Results are displayed below.
+
+**COCO (CIDEr)**
+
+|0-shot|4-shot|8-shot|16-shot|32-shot|
+|--|--|--|--|--|
+|65.52|74.28|79.26|81.84|84.52|
+
+
+**VQAv2 (VQA accuracy)**
+
+|0-shot|4-shot|8-shot|16-shot|32-shot|
+|---|---|---|---|---|
+|43.55|44.05|47.5|48.87|50.34|
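The VQAv2 row above reports VQA accuracy. As a rough illustration of what that number measures, the sketch below uses the commonly cited simplified form of the metric, in which a predicted answer scores min(matches / 3, 1) against the human answers collected for a question. It is an assumption that this matches the evaluation behind the table: the repository's own `vqa_metric.py` is not shown in this diff excerpt and may additionally normalize answers or average over annotator subsets. The function name `vqa_accuracy` is hypothetical.

```python
from typing import Sequence


def vqa_accuracy(prediction: str, human_answers: Sequence[str]) -> float:
    """Simplified VQA accuracy: full credit once three annotators agree."""
    # Count exact string matches; real evaluations usually normalize text first.
    matches = sum(1 for answer in human_answers if answer == prediction)
    return min(matches / 3.0, 1.0)


if __name__ == "__main__":
    answers = ["2"] * 2 + ["3"] * 8  # ten made-up annotator answers
    print(vqa_accuracy("3", answers))  # 1.0
    print(vqa_accuracy("4", answers))  # 0.0
```

A dataset-level score such as the 43.55 in the 0-shot column would then be the mean of this per-question value, expressed as a percentage.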

open_flamingo/Makefile

ADDED

@@ -0,0 +1,19 @@
+install: ## [Local development] Upgrade pip, install requirements, install package.
+	python -m pip install -U pip
+	python -m pip install -e .
+
+install-dev: ## [Local development] Install test requirements
+	python -m pip install -r requirements-test.txt
+
+lint: ## [Local development] Run mypy, pylint and black
+	python -m mypy open_flamingo
+	python -m pylint open_flamingo
+	python -m black --check -l 120 open_flamingo
+
+black: ## [Local development] Auto-format python code using black
+	python -m black -l 120 .
+
+.PHONY: help
+
+help: # Run `make help` to get help on the make commands
+	@grep -E '^[0-9a-zA-Z_-]+:.*?## .*$$' $(MAKEFILE_LIST) | sort | awk 'BEGIN {FS = ":.*?## "}; {printf "\033[36m%-30s\033[0m %s\n", $$1, $$2}'
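The `help` target above lists targets by scanning the Makefile for lines of the form `target: ... ## description`. A small Python sketch of the same filtering follows, for illustration only. The function name `list_documented_targets` is hypothetical, and the sketch uses Python's `re` and `sorted` rather than `grep`, `sort`, and `awk`, so ordering and edge cases may differ slightly from what `make help` prints.

```python
import re
from typing import List, Tuple

# Same shape as the grep pattern in the Makefile: a target name, a colon,
# then a "## " marker that introduces the description.
TARGET_LINE = re.compile(r"^([0-9a-zA-Z_-]+):.*?## (.*)$")


def list_documented_targets(makefile_text: str) -> List[Tuple[str, str]]:
    """Return sorted (target, description) pairs for lines with a '## ' comment."""
    pairs = []
    for line in makefile_text.splitlines():
        match = TARGET_LINE.match(line)
        if match:
            pairs.append((match.group(1), match.group(2)))
    return sorted(pairs)


if __name__ == "__main__":
    sample = (
        "install: ## [Local development] Upgrade pip, install requirements, install package.\n"
        "\tpython -m pip install -U pip\n"
        "help: # Run `make help` to get help on the make commands\n"
    )
    for target, description in list_documented_targets(sample):
        # Pad the target name to 30 columns, as the awk printf does.
        print(f"{target:<30} {description}")
```

With the Makefile as committed, `help` itself would not appear in the listing, because its comment uses a single `#` rather than `##`.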

open_flamingo/README.md

ADDED

@@ -0,0 +1,233 @@
+# 🦩 OpenFlamingo
+
+[](https://badge.fury.io/py/open_flamingo)
+
+[Blog post](https://laion.ai/blog/open-flamingo/) | Paper (coming soon)
+
+Welcome to our open source version of DeepMind's [Flamingo](https://www.deepmind.com/blog/tackling-multiple-tasks-with-a-single-visual-language-model) model! In this repository, we provide a PyTorch implementation for training and evaluating OpenFlamingo models. We also provide an initial [OpenFlamingo 9B model](https://huggingface.co/openflamingo/OpenFlamingo-9B) trained on a new Multimodal C4 dataset (coming soon). Please refer to our blog post for more details.
+
+This repo is still under development, and we hope to release better performing and larger OpenFlamingo models soon. If you have any questions, please feel free to open an issue. We also welcome contributions!
+
+# Table of Contents
+- [Installation](#installation)
+- [Approach](#approach)
+  * [Model architecture](#model-architecture)
+- [Usage](#usage)
+  * [Initializing an OpenFlamingo model](#initializing-an-openflamingo-model)
+  * [Generating text](#generating-text)
+- [Training](#training)
+  * [Dataset](#dataset)
+- [Evaluation](#evaluation)
+- [Future plans](#future-plans)
+- [Team](#team)
+- [Acknowledgments](#acknowledgments)
+- [Citing](#citing)
+
+# Installation
+
+To install the package in an existing environment, run
+```
+pip install open-flamingo
+```
+
+or to create a conda environment for running OpenFlamingo, run
+```
+conda env create -f environment.yml
+```
+
+# Usage
+We provide an initial [OpenFlamingo 9B model](https://huggingface.co/openflamingo/OpenFlamingo-9B) using a CLIP ViT-Large vision encoder and a LLaMA-7B language model. In general, we support any [CLIP vision encoder](https://huggingface.co/models?search=clip). For the language model, we support [LLaMA](https://huggingface.co/models?search=llama), [OPT](https://huggingface.co/models?search=opt), [GPT-Neo](https://huggingface.co/models?search=gpt-neo), [GPT-J](https://huggingface.co/models?search=gptj), and [Pythia](https://huggingface.co/models?search=pythia) models.
+
+#### NOTE: To use LLaMA models, you will need to install the latest version of transformers via
+```
+pip install git+https://github.com/huggingface/transformers
+```
+Use this [script](https://github.com/huggingface/transformers/blob/main/src/transformers/models/llama/convert_llama_weights_to_hf.py) for converting LLaMA weights to HuggingFace format.

|

| 46 |

+

|

| 47 |

+

## Initializing an OpenFlamingo model

|

| 48 |

+

``` python

|

| 49 |

+

from open_flamingo import create_model_and_transforms

|

| 50 |

+

|

| 51 |

+

model, image_processor, tokenizer = create_model_and_transforms(

|

| 52 |

+

clip_vision_encoder_path="ViT-L-14",

|

| 53 |

+

clip_vision_encoder_pretrained="openai",

|

| 54 |

+

lang_encoder_path="<path to llama weights in HuggingFace format>",

|

| 55 |

+

tokenizer_path="<path to llama tokenizer in HuggingFace format>",

|

| 56 |

+

cross_attn_every_n_layers=4

|

| 57 |

+

)

|

| 58 |

+

|

| 59 |

+

# grab model checkpoint from huggingface hub

|

| 60 |

+

from huggingface_hub import hf_hub_download

|

| 61 |

+

import torch

|

| 62 |

+

|

| 63 |

+

checkpoint_path = hf_hub_download("openflamingo/OpenFlamingo-9B", "checkpoint.pt")

|

| 64 |

+

model.load_state_dict(torch.load(checkpoint_path), strict=False)

|

| 65 |

+

```

|

| 66 |

+

|

| 67 |

+

## Generating text

|

| 68 |

+

Here is an example of generating text conditioned on interleaved images and text. In this case, we do few-shot image captioning.

|

| 69 |

+

|

| 70 |

+

``` python

|

| 71 |

+

from PIL import Image

|

| 72 |

+

import requests

|

| 73 |

+

|

| 74 |

+

"""

|

| 75 |

+

Step 1: Load images

|

| 76 |

+

"""

|

| 77 |

+

demo_image_one = Image.open(

|

| 78 |

+

requests.get(

|

| 79 |

+

"http://images.cocodataset.org/val2017/000000039769.jpg", stream=True

|

| 80 |

+

).raw

|

| 81 |

+

)

|

| 82 |

+

|

| 83 |

+

demo_image_two = Image.open(

|

| 84 |

+

requests.get(

|

| 85 |

+

"http://images.cocodataset.org/test-stuff2017/000000028137.jpg",

|

| 86 |

+

stream=True

|

| 87 |

+

).raw

|

| 88 |

+

)

|

| 89 |

+

|

| 90 |

+

query_image = Image.open(

|

| 91 |

+

requests.get(

|

| 92 |

+

"http://images.cocodataset.org/test-stuff2017/000000028352.jpg",

|

| 93 |

+

stream=True

|

| 94 |

+

).raw

|

| 95 |

+

)

|

| 96 |

+

|

| 97 |

+

|

| 98 |

+

"""

|

| 99 |

+

Step 2: Preprocessing images

|

| 100 |

+

Details: For OpenFlamingo, we expect the image to be a torch tensor of shape

|

| 101 |

+

batch_size x num_media x num_frames x channels x height x width.

|

| 102 |

+

In this case batch_size = 1, num_media = 3, num_frames = 1

|

| 103 |

+

(this will always be one except for video, which we don't support yet),

|

| 104 |

+

channels = 3, height = 224, width = 224.

|

| 105 |

+

"""

|

| 106 |

+

vision_x = [image_processor(demo_image_one).unsqueeze(0), image_processor(demo_image_two).unsqueeze(0), image_processor(query_image).unsqueeze(0)]

|

| 107 |

+

vision_x = torch.cat(vision_x, dim=0)

|

| 108 |

+

vision_x = vision_x.unsqueeze(1).unsqueeze(0)

|

| 109 |

+

|

| 110 |

+

"""

|

| 111 |

+

Step 3: Preprocessing text

|

| 112 |

+

Details: In the text we expect an <|#image#|> special token to indicate where an image is.

|

| 113 |

+

We also expect an <|endofchunk|> special token to indicate the end of the text

|

| 114 |

+

portion associated with an image.

|

| 115 |

+

"""

|

| 116 |

+

tokenizer.padding_side = "left" # For generation padding tokens should be on the left

|

| 117 |

+

lang_x = tokenizer(

|

| 118 |

+

["<|#image#|>An image of two cats.<|endofchunk|><|#image#|>An image of a bathroom sink.<|endofchunk|><|#image#|>An image of"],

|

| 119 |

+

return_tensors="pt",

|

| 120 |

+

)

|

| 121 |

+

|

| 122 |

+

|

| 123 |

+

"""

|

| 124 |

+

Step 4: Generate text

|

| 125 |

+

"""

|

| 126 |

+

generated_text = model.generate(

|

| 127 |

+

vision_x=vision_x,

|

| 128 |

+

lang_x=lang_x["input_ids"],

|

| 129 |

+

attention_mask=lang_x["attention_mask"],

|

| 130 |

+

max_new_tokens=20,

|

| 131 |

+

num_beams=3,

|

| 132 |

+

)

|

| 133 |

+

|

| 134 |

+

print("Generated text: ", tokenizer.decode(generated_text[0]))

|

| 135 |

+

```
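The prompt format described in Step 3 can also be assembled programmatically. The following is a minimal sketch, not part of the OpenFlamingo API: the helper names `build_fewshot_prompt` and `extract_last_caption` are hypothetical, and the sketch assumes the decoded output echoes the prompt and that the model does not emit a further `<|#image#|>` token of its own.

```python
# Special tokens named in the README's Step 3.
IMAGE_TOKEN = "<|#image#|>"
END_OF_CHUNK = "<|endofchunk|>"


def build_fewshot_prompt(demo_captions, query_prefix="An image of"):
    """Hypothetical helper: one closed chunk per demonstration caption,
    followed by an open chunk for the query image."""
    demos = "".join(
        f"{IMAGE_TOKEN}{caption}{END_OF_CHUNK}" for caption in demo_captions
    )
    return f"{demos}{IMAGE_TOKEN}{query_prefix}"


def extract_last_caption(decoded):
    """Hypothetical helper: keep the text after the last image token and
    cut it at the first end-of-chunk token, if one was generated."""
    tail = decoded.rsplit(IMAGE_TOKEN, 1)[-1]
    return tail.split(END_OF_CHUNK, 1)[0].strip()


prompt = build_fewshot_prompt(
    ["An image of two cats.", "An image of a bathroom sink."]
)
```

Passing `[prompt]` to the tokenizer reproduces the input used above, and `extract_last_caption(tokenizer.decode(generated_text[0]))` would then isolate the caption for the query image under the stated assumptions.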

|

| 136 |

+

|

| 137 |

+

# Approach

|

| 138 |

+

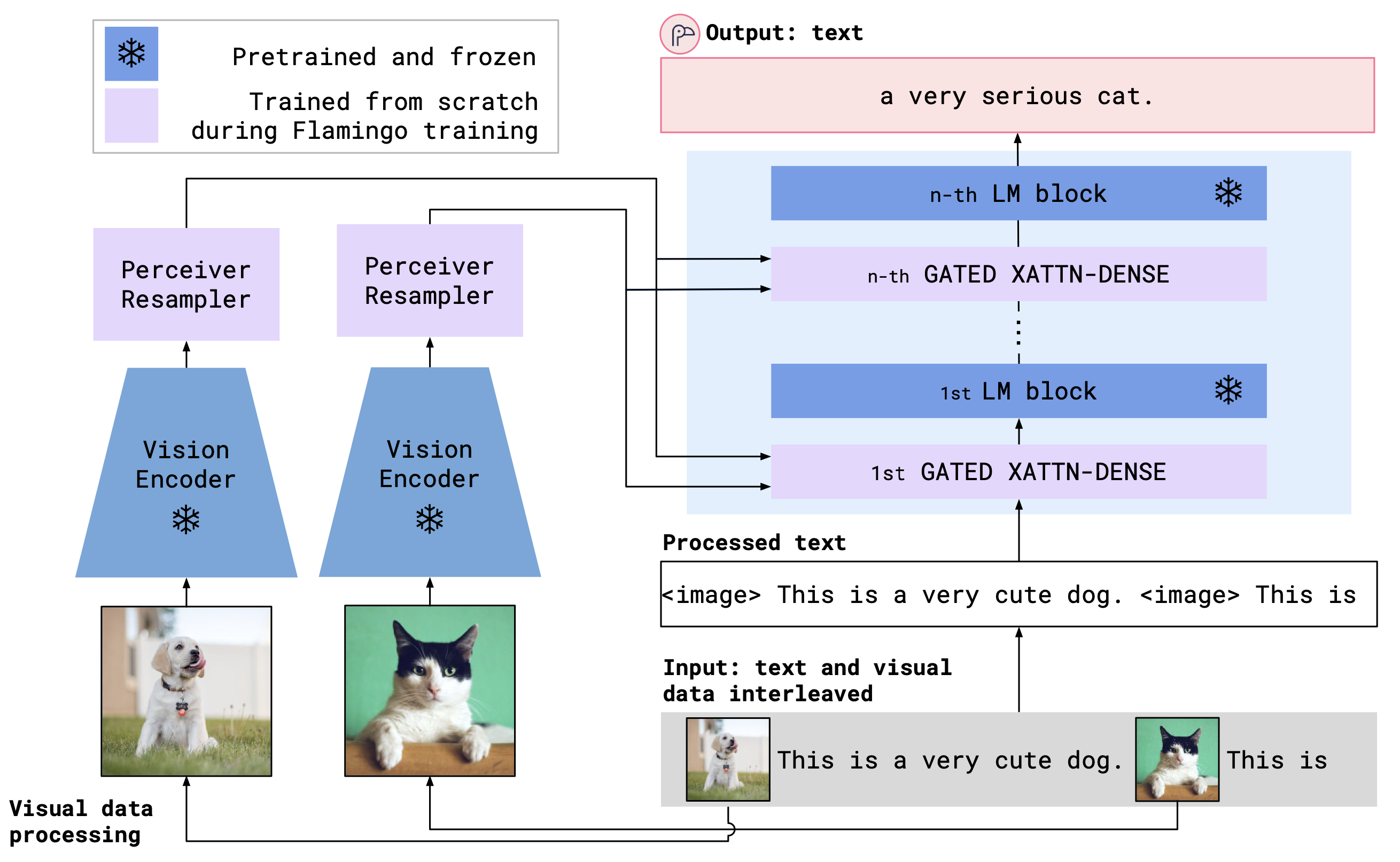

OpenFlamingo is a multimodal language model that can be used for a variety of tasks. It is trained on a large multimodal dataset (e.g. Multimodal C4) and can be used to generate text conditioned on interleaved images/text. For example, OpenFlamingo can be used to generate a caption for an image, or to generate a question given an image and a text passage. The benefit of this approach is that we are able to rapidly adapt to new tasks using in-context learning.

|

| 139 |

+

|

| 140 |

+

## Model architecture

|

| 141 |

+

OpenFlamingo seeks to fuse a pretrained vision encoder and a language model using cross attention layers. The model architecture is shown below.

|

| 142 |

+

|

| 143 |

+

|

| 144 |

+

Credit: [Flamingo](https://www.deepmind.com/blog/tackling-multiple-tasks-with-a-single-visual-language-model)

|

| 145 |

+

|

| 146 |

+

# Training

|

| 147 |

+

To train a model, modify the following example command, which uses OPT 1.3B as an example LM:

|

| 148 |

+

```

|

| 149 |

+

torchrun --nnodes=1 --nproc_per_node=4 train.py \

|

| 150 |

+

--run_name flamingo3B \

|

| 151 |

+

--lm_path facebook/opt-1.3b \

|

| 152 |

+

--tokenizer_path facebook/opt-1.3b \

|

| 153 |

+

--dataset_resampled \

|

| 154 |

+

--laion_shards "/path/to/shards/shard-{0000..0999}.tar" \

|

| 155 |

+

--mmc4_shards "/path/to/shards/shard-{0000..0999}.tar" \

|

| 156 |

+

--batch_size_mmc4 4 \

|

| 157 |

+

--batch_size_laion 8 \

|

| 158 |

+

--train_num_samples_mmc4 125000 \

|

| 159 |

+

--train_num_samples_laion 250000 \

|

| 160 |

+

--loss_multiplier_laion 0.2 \

|

| 161 |

+

--workers=6 \

|

| 162 |

+

--num_epochs 250 \

|

| 163 |

+

--lr_scheduler constant \

|

| 164 |

+

--warmup_steps 5000 \

|

| 165 |

+

--use_media_placement_augmentation \

|

| 166 |

+

--mmc4_textsim_threshold 30

|

| 167 |

+

```

|

| 168 |

+

|

| 169 |

+

## Dataset

|

| 170 |

+

We expect all our training datasets to be [WebDataset](https://github.com/webdataset/webdataset) shards.

|

| 171 |

+

We train our models on the [LAION 2B](https://huggingface.co/datasets/laion/laion2B-en) and Multimodal C4 (coming soon) datasets. By default the LAION 2B dataset is in WebDataset format if it is downloaded using the [img2dataset tool](https://github.com/rom1504/img2dataset) and Multimodal C4 comes packaged in the WebDataset format.
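The `--laion_shards` and `--mmc4_shards` arguments in the training command use brace notation such as `shard-{0000..0999}.tar` to name a range of shard files. The data loader is expected to expand this notation itself; the sketch below is only a hypothetical helper (`expand_shard_range` is not part of this repository) for checking how many shards a pattern covers before launching a run. It assumes a single zero-padded numeric range per pattern.

```python
import re


def expand_shard_range(pattern):
    """Expand one '{start..end}' numeric range into explicit shard paths.

    Hypothetical sanity-check helper; handles a single range only.
    """
    match = re.search(r"\{(\d+)\.\.(\d+)\}", pattern)
    if match is None:
        # No range present: the pattern names a single shard.
        return [pattern]
    start, end = match.group(1), match.group(2)
    width = len(start)  # preserve zero padding, e.g. 0000 -> 4 digits
    prefix, suffix = pattern[: match.start()], pattern[match.end():]
    return [
        f"{prefix}{str(i).zfill(width)}{suffix}"
        for i in range(int(start), int(end) + 1)
    ]


shards = expand_shard_range("/path/to/shards/shard-{0000..0999}.tar")
```

For the example pattern, `len(shards)` is 1000, running from `shard-0000.tar` through `shard-0999.tar`.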

|

| 172 |

+

|

| 173 |

+

|

| 174 |

+

# Evaluation

|

| 175 |

+

We currently support running evaluations on [COCO](https://cocodataset.org/#home), [VQAv2](https://visualqa.org/index.html), [OKVQA](https://okvqa.allenai.org), [Flickr30k](https://www.kaggle.com/datasets/hsankesara/flickr-image-dataset), and [ImageNet](https://image-net.org/index.php). Note that these evaluations are currently run in validation mode (as specified in the Flamingo paper). We will add support for running evaluations in test mode in the future.

|

| 176 |

+

|

| 177 |

+

Before evaluating the model, you will need to install the coco evaluation package by running the following command:

|

| 178 |

+

```

|

| 179 |

+

pip install pycocoevalcap

|

| 180 |

+

```

|

| 181 |

+

|

| 182 |

+

To run evaluations on OKVQA, you will also need to download the WordNet corpus by running the following Python snippet:

|

| 183 |

+

```

|

| 184 |

+

import nltk

|

| 185 |

+

nltk.download('wordnet')

|

| 186 |

+

```

|

| 187 |

+

|

| 188 |

+

To evaluate the model, run the script at `open_flamingo/scripts/run_eval.sh`

|

| 189 |

+

|

| 190 |

+

# Future plans

|

| 191 |

+

- [ ] Add support for video input

|

| 192 |

+

- [ ] Release better performing and larger OpenFlamingo models

|

| 193 |

+

- [ ] Expand our evaluation suite

|

| 194 |

+

- [ ] Add support for FSDP training

|

| 195 |

+

|

| 196 |

+

# Team

|

| 197 |

+

|

| 198 |

+

OpenFlamingo is developed by:

|

| 199 |

+

|

| 200 |

+

[Anas Awadalla](https://anas-awadalla.streamlit.app/), [Irena Gao](https://i-gao.github.io/), [Joshua Gardner](https://homes.cs.washington.edu/~jpgard/), [Jack Hessel](https://jmhessel.com/), [Yusuf Hanafy](https://www.linkedin.com/in/yusufhanafy/), [Wanrong Zhu](https://wanrong-zhu.com/), [Kalyani Marathe](https://sites.google.com/uw.edu/kalyanimarathe/home?authuser=0), [Yonatan Bitton](https://yonatanbitton.github.io/), [Samir Gadre](https://sagadre.github.io/), [Jenia Jitsev](https://scholar.google.de/citations?user=p1FuAMkAAAAJ&hl=en), [Simon Kornblith](https://simonster.com/), [Pang Wei Koh](https://koh.pw/), [Gabriel Ilharco](https://gabrielilharco.com/), [Mitchell Wortsman](https://mitchellnw.github.io/), [Ludwig Schmidt](https://people.csail.mit.edu/ludwigs/).

|

| 201 |

+

|

| 202 |

+

The team is primarily from the University of Washington, Stanford, AI2, UCSB, and Google.

|

| 203 |

+

|

| 204 |

+

# Acknowledgments

|

| 205 |

+

This code is based on Lucidrains' [flamingo implementation](https://github.com/lucidrains/flamingo-pytorch) and David Hansmair's [flamingo-mini repo](https://github.com/dhansmair/flamingo-mini). Thank you for making your code public! We also thank the [OpenCLIP](https://github.com/mlfoundations/open_clip) team as we use their data loading code and take inspiration from their library design.

|

| 206 |

+

|

| 207 |

+

We would also like to thank [Jean-Baptiste Alayrac](https://www.jbalayrac.com) and [Antoine Miech](https://antoine77340.github.io) for their advice; [Rohan Taori](https://www.rohantaori.com/), [Nicholas Schiefer](https://nicholasschiefer.com/), [Deep Ganguli](https://hai.stanford.edu/people/deep-ganguli), [Thomas Liao](https://thomasliao.com/), [Tatsunori Hashimoto](https://thashim.github.io/), and [Nicholas Carlini](https://nicholas.carlini.com/) for their help with assessing the safety risks of our release; and [Stability AI](https://stability.ai) for providing us with compute resources to train these models.

|

| 208 |

+

|

| 209 |

+

# Citing

|

| 210 |

+

If you found this repository useful, please consider citing:

|

| 211 |

+

|

| 212 |

+

```

|

| 213 |

+

@software{anas_awadalla_2023_7733589,

|

| 214 |

+

author = {Awadalla, Anas and Gao, Irena and Gardner, Joshua and Hessel, Jack and Hanafy, Yusuf and Zhu, Wanrong and Marathe, Kalyani and Bitton, Yonatan and Gadre, Samir and Jitsev, Jenia and Kornblith, Simon and Koh, Pang Wei and Ilharco, Gabriel and Wortsman, Mitchell and Schmidt, Ludwig},

|

| 215 |

+

title = {OpenFlamingo},

|

| 216 |

+

month = mar,

|

| 217 |

+

year = 2023,

|

| 218 |

+

publisher = {Zenodo},

|

| 219 |

+

version = {v0.1.1},

|

| 220 |

+

doi = {10.5281/zenodo.7733589},

|

| 221 |

+

url = {https://doi.org/10.5281/zenodo.7733589}

|

| 222 |

+

}

|

| 223 |

+

```

|

| 224 |

+

|

| 225 |

+

```

|

| 226 |

+

@article{Alayrac2022FlamingoAV,

|

| 227 |

+

title={Flamingo: a Visual Language Model for Few-Shot Learning},

|

| 228 |

+

author={Jean-Baptiste Alayrac and Jeff Donahue and Pauline Luc and Antoine Miech and Iain Barr and Yana Hasson and Karel Lenc and Arthur Mensch and Katie Millican and Malcolm Reynolds and Roman Ring and Eliza Rutherford and Serkan Cabi and Tengda Han and Zhitao Gong and Sina Samangooei and Marianne Monteiro and Jacob Menick and Sebastian Borgeaud and Andy Brock and Aida Nematzadeh and Sahand Sharifzadeh and Mikolaj Binkowski and Ricardo Barreira and Oriol Vinyals and Andrew Zisserman and Karen Simonyan},

|

| 229 |

+

journal={ArXiv},

|

| 230 |

+

year={2022},

|

| 231 |

+

volume={abs/2204.14198}

|

| 232 |

+

}

|

| 233 |

+

```

|

open_flamingo/TERMS_AND_CONDITIONS.md

ADDED

|

@@ -0,0 +1,15 @@

|

| 1 |

+

**Please read the following information carefully before proceeding.**

|

| 2 |

+

|

| 3 |

+

OpenFlamingo is a **research prototype** that aims to enable users to interact with AI through both language and images. AI agents equipped with both language and visual understanding can be useful on a larger variety of tasks compared to models that communicate solely via language. By releasing an open-source research prototype, we hope to help the research community better understand the risks and limitations of modern visual-language AI models and accelerate the development of safer and more reliable methods.

|

| 4 |

+

|

| 5 |

+

- [ ] I understand that OpenFlamingo is a research prototype and I will only use it for non-commercial research purposes.

|

| 6 |

+

|

| 7 |

+

**Limitations.** OpenFlamingo is built on top of the LLaMA large language model developed by Meta AI. Large language models, including LLaMA, are trained on mostly unfiltered internet data, and have been shown to be able to produce toxic, unethical, inaccurate, and harmful content. On top of this, OpenFlamingo’s ability to support visual inputs creates additional risks, since it can be used in a wider variety of applications; image+text models may carry additional risks specific to multimodality. Please use discretion when assessing the accuracy or appropriateness of the model’s outputs, and be mindful before sharing its results.

|

| 8 |

+

|

| 9 |

+

- [ ] I understand that OpenFlamingo may produce unintended, inappropriate, offensive, and/or inaccurate results. I agree to take full responsibility for any use of the OpenFlamingo outputs that I generate.

|

| 10 |

+

|

| 11 |

+

**Privacy and data collection.** This demo does NOT store any personal information on its users, and it does NOT store user queries.

|

| 12 |

+

|

| 13 |

+

**Licensing.** As OpenFlamingo is built on top of the LLaMA large language model from Meta AI, the LLaMA license agreement (as documented in the Meta request form) also applies.

|

| 14 |

+

|

| 15 |

+

- [ ] I have read and agree to the terms of the LLaMA license agreement.

|

open_flamingo/docs/flamingo.png

ADDED

|

open_flamingo/environment.yml

ADDED

|

@@ -0,0 +1,10 @@

|

| 1 |

+

name: mm

|

| 2 |

+

channels:

|

| 3 |

+

- defaults

|

| 4 |

+

dependencies:

|

| 5 |

+

- python=3.9

|

| 6 |

+

- conda-forge::openjdk

|

| 7 |

+

- pip

|

| 8 |

+

- pip:

|

| 9 |

+

- -r requirements.txt

|

| 10 |

+

- -e .

|

open_flamingo/open_flamingo/__init__.py

ADDED

|

@@ -0,0 +1,2 @@

|

| 1 |

+

from .src.flamingo import Flamingo

|

| 2 |

+

from .src.factory import create_model_and_transforms

|

open_flamingo/open_flamingo/eval/__init__.py

ADDED

|

@@ -0,0 +1 @@

|

| 1 |

+

|

open_flamingo/open_flamingo/eval/classification.py

ADDED

|

@@ -0,0 +1,147 @@

|
