Spaces:

Runtime error

Runtime error

Upload 244 files

Browse filesThis view is limited to 50 files because it contains too many changes.

See raw diff

- .gitattributes +16 -0

- LICENSE +21 -0

- assets/13 00_00_00-00_00_30.gif +3 -0

- assets/5 00_00_00-00_00_30.gif +3 -0

- assets/6 00_00_00-00_00_30.gif +3 -0

- assets/7 00_00_00-00_00_30.gif +3 -0

- assets/dpvj8-y3ubn.gif +3 -0

- assets/framework.jpg +0 -0

- assets/i1ude-11d4e.gif +3 -0

- assets/kntw7-iuluy.gif +3 -0

- assets/nr2a2-oe6qj.gif +3 -0

- assets/ns4et-xj8ax.gif +3 -0

- assets/open-sora-plan.png +3 -0

- assets/ozg76-g1aqh.gif +3 -0

- assets/pvvm5-5hm65.gif +3 -0

- assets/rrdqk-puoud.gif +3 -0

- assets/we_want_you.jpg +0 -0

- assets/y70q9-y5tip.gif +3 -0

- docker/LICENSE +21 -0

- docker/README.md +87 -0

- docker/build_docker.png +0 -0

- docker/docker_build.sh +8 -0

- docker/docker_run.sh +45 -0

- docker/dockerfile.base +24 -0

- docker/packages.txt +3 -0

- docker/ports.txt +1 -0

- docker/postinstallscript.sh +3 -0

- docker/requirements.txt +40 -0

- docker/run_docker.png +0 -0

- docker/setup_env.sh +11 -0

- docs/Contribution_Guidelines.md +87 -0

- docs/Data.md +35 -0

- docs/EVAL.md +110 -0

- docs/Report-v1.0.0.md +131 -0

- docs/VQVAE.md +57 -0

- examples/get_latents_std.py +38 -0

- examples/prompt_list_0.txt +16 -0

- examples/rec_image.py +57 -0

- examples/rec_imvi_vae.py +159 -0

- examples/rec_video.py +120 -0

- examples/rec_video_ae.py +120 -0

- examples/rec_video_vae.py +275 -0

- opensora/__init__.py +1 -0

- opensora/dataset/__init__.py +99 -0

- opensora/dataset/extract_feature_dataset.py +64 -0

- opensora/dataset/feature_datasets.py +213 -0

- opensora/dataset/landscope.py +90 -0

- opensora/dataset/sky_datasets.py +128 -0

- opensora/dataset/t2v_datasets.py +111 -0

- opensora/dataset/transform.py +489 -0

.gitattributes

CHANGED

|

@@ -33,3 +33,19 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 33 |

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

| 36 |

+

assets/13[[:space:]]00_00_00-00_00_30.gif filter=lfs diff=lfs merge=lfs -text

|

| 37 |

+

assets/5[[:space:]]00_00_00-00_00_30.gif filter=lfs diff=lfs merge=lfs -text

|

| 38 |

+

assets/6[[:space:]]00_00_00-00_00_30.gif filter=lfs diff=lfs merge=lfs -text

|

| 39 |

+

assets/7[[:space:]]00_00_00-00_00_30.gif filter=lfs diff=lfs merge=lfs -text

|

| 40 |

+

assets/dpvj8-y3ubn.gif filter=lfs diff=lfs merge=lfs -text

|

| 41 |

+

assets/i1ude-11d4e.gif filter=lfs diff=lfs merge=lfs -text

|

| 42 |

+

assets/kntw7-iuluy.gif filter=lfs diff=lfs merge=lfs -text

|

| 43 |

+

assets/nr2a2-oe6qj.gif filter=lfs diff=lfs merge=lfs -text

|

| 44 |

+

assets/ns4et-xj8ax.gif filter=lfs diff=lfs merge=lfs -text

|

| 45 |

+

assets/open-sora-plan.png filter=lfs diff=lfs merge=lfs -text

|

| 46 |

+

assets/ozg76-g1aqh.gif filter=lfs diff=lfs merge=lfs -text

|

| 47 |

+

assets/pvvm5-5hm65.gif filter=lfs diff=lfs merge=lfs -text

|

| 48 |

+

assets/rrdqk-puoud.gif filter=lfs diff=lfs merge=lfs -text

|

| 49 |

+

assets/y70q9-y5tip.gif filter=lfs diff=lfs merge=lfs -text

|

| 50 |

+

opensora/models/captioner/caption_refiner/dataset/test_videos/video1.gif filter=lfs diff=lfs merge=lfs -text

|

| 51 |

+

opensora/models/captioner/caption_refiner/dataset/test_videos/video2.gif filter=lfs diff=lfs merge=lfs -text

|

LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2024 PKU-YUAN's Group (袁粒课题组-北大信工)

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

assets/13 00_00_00-00_00_30.gif

ADDED

|

Git LFS Details

|

assets/5 00_00_00-00_00_30.gif

ADDED

|

Git LFS Details

|

assets/6 00_00_00-00_00_30.gif

ADDED

|

Git LFS Details

|

assets/7 00_00_00-00_00_30.gif

ADDED

|

Git LFS Details

|

assets/dpvj8-y3ubn.gif

ADDED

|

Git LFS Details

|

assets/framework.jpg

ADDED

|

assets/i1ude-11d4e.gif

ADDED

|

Git LFS Details

|

assets/kntw7-iuluy.gif

ADDED

|

Git LFS Details

|

assets/nr2a2-oe6qj.gif

ADDED

|

Git LFS Details

|

assets/ns4et-xj8ax.gif

ADDED

|

Git LFS Details

|

assets/open-sora-plan.png

ADDED

|

Git LFS Details

|

assets/ozg76-g1aqh.gif

ADDED

|

Git LFS Details

|

assets/pvvm5-5hm65.gif

ADDED

|

Git LFS Details

|

assets/rrdqk-puoud.gif

ADDED

|

Git LFS Details

|

assets/we_want_you.jpg

ADDED

|

assets/y70q9-y5tip.gif

ADDED

|

Git LFS Details

|

docker/LICENSE

ADDED

|

@@ -0,0 +1,21 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

MIT License

|

| 2 |

+

|

| 3 |

+

Copyright (c) 2024 SimonLee

|

| 4 |

+

|

| 5 |

+

Permission is hereby granted, free of charge, to any person obtaining a copy

|

| 6 |

+

of this software and associated documentation files (the "Software"), to deal

|

| 7 |

+

in the Software without restriction, including without limitation the rights

|

| 8 |

+

to use, copy, modify, merge, publish, distribute, sublicense, and/or sell

|

| 9 |

+

copies of the Software, and to permit persons to whom the Software is

|

| 10 |

+

furnished to do so, subject to the following conditions:

|

| 11 |

+

|

| 12 |

+

The above copyright notice and this permission notice shall be included in all

|

| 13 |

+

copies or substantial portions of the Software.

|

| 14 |

+

|

| 15 |

+

THE SOFTWARE IS PROVIDED "AS IS", WITHOUT WARRANTY OF ANY KIND, EXPRESS OR

|

| 16 |

+

IMPLIED, INCLUDING BUT NOT LIMITED TO THE WARRANTIES OF MERCHANTABILITY,

|

| 17 |

+

FITNESS FOR A PARTICULAR PURPOSE AND NONINFRINGEMENT. IN NO EVENT SHALL THE

|

| 18 |

+

AUTHORS OR COPYRIGHT HOLDERS BE LIABLE FOR ANY CLAIM, DAMAGES OR OTHER

|

| 19 |

+

LIABILITY, WHETHER IN AN ACTION OF CONTRACT, TORT OR OTHERWISE, ARISING FROM,

|

| 20 |

+

OUT OF OR IN CONNECTION WITH THE SOFTWARE OR THE USE OR OTHER DEALINGS IN THE

|

| 21 |

+

SOFTWARE.

|

docker/README.md

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

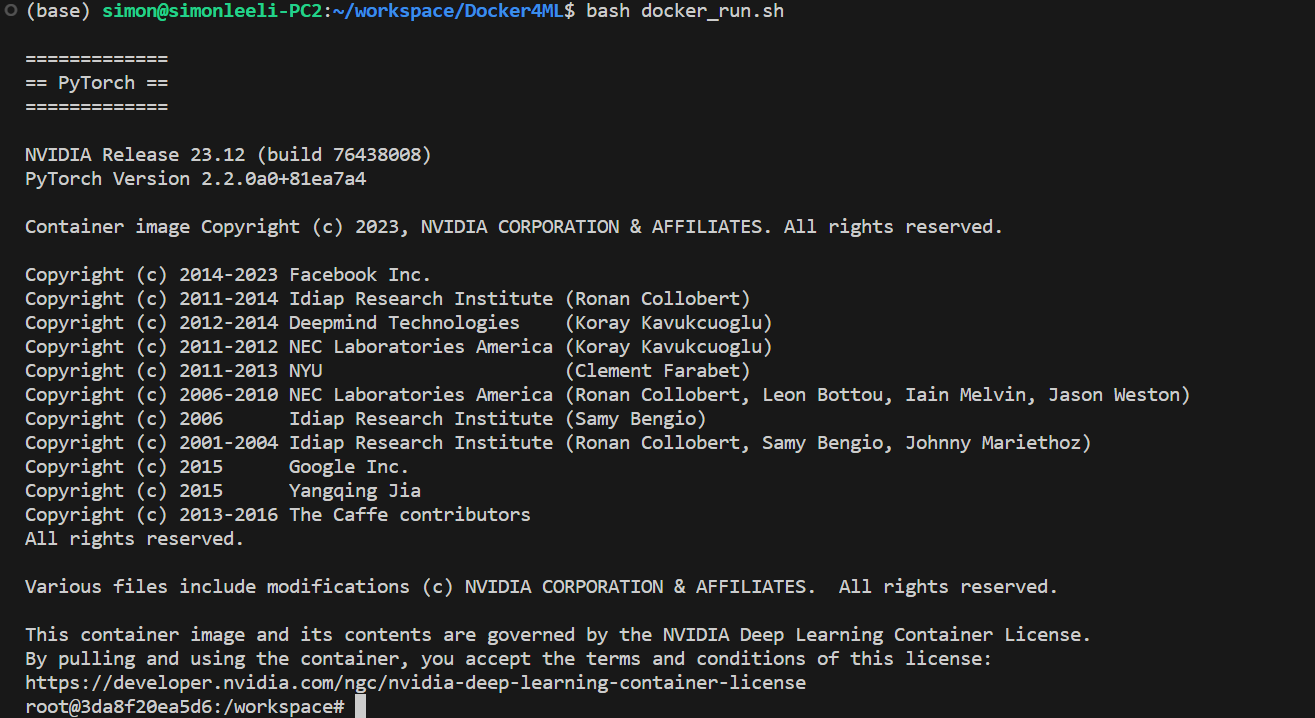

# Docker4ML

|

| 2 |

+

|

| 3 |

+

Useful docker scripts for ML developement.

|

| 4 |

+

[https://github.com/SimonLeeGit/Docker4ML](https://github.com/SimonLeeGit/Docker4ML)

|

| 5 |

+

|

| 6 |

+

## Build Docker Image

|

| 7 |

+

|

| 8 |

+

```bash

|

| 9 |

+

bash docker_build.sh

|

| 10 |

+

```

|

| 11 |

+

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

## Run Docker Container as Development Envirnoment

|

| 15 |

+

|

| 16 |

+

```bash

|

| 17 |

+

bash docker_run.sh

|

| 18 |

+

```

|

| 19 |

+

|

| 20 |

+

|

| 21 |

+

|

| 22 |

+

## Custom Docker Config

|

| 23 |

+

|

| 24 |

+

### Config [setup_env.sh](./setup_env.sh)

|

| 25 |

+

|

| 26 |

+

You can modify this file to custom your settings.

|

| 27 |

+

|

| 28 |

+

```bash

|

| 29 |

+

TAG=ml:dev

|

| 30 |

+

BASE_TAG=nvcr.io/nvidia/pytorch:23.12-py3

|

| 31 |

+

```

|

| 32 |

+

|

| 33 |

+

#### TAG

|

| 34 |

+

|

| 35 |

+

Your built docker image tag, you can set it as what you what.

|

| 36 |

+

|

| 37 |

+

#### BASE_TAG

|

| 38 |

+

|

| 39 |

+

The base docker image tag for your built docker image, here we use nvidia pytorch images.

|

| 40 |

+

You can check it from [https://catalog.ngc.nvidia.com/orgs/nvidia/containers/pytorch/tags](https://catalog.ngc.nvidia.com/orgs/nvidia/containers/pytorch/tags)

|

| 41 |

+

|

| 42 |

+

Also, you can use other docker image as base, such as: [ubuntu](https://hub.docker.com/_/ubuntu/tags)

|

| 43 |

+

|

| 44 |

+

### USER_NAME

|

| 45 |

+

|

| 46 |

+

Your user name used in docker container.

|

| 47 |

+

|

| 48 |

+

### USER_PASSWD

|

| 49 |

+

|

| 50 |

+

Your user password used in docker container.

|

| 51 |

+

|

| 52 |

+

### Config [requriements.txt](./requirements.txt)

|

| 53 |

+

|

| 54 |

+

You can add your default installed python libraries here.

|

| 55 |

+

|

| 56 |

+

```txt

|

| 57 |

+

transformers==4.27.1

|

| 58 |

+

```

|

| 59 |

+

|

| 60 |

+

By default, it has some libs installed, you can check it from [https://docs.nvidia.com/deeplearning/frameworks/pytorch-release-notes/rel-24-01.html](https://docs.nvidia.com/deeplearning/frameworks/pytorch-release-notes/rel-24-01.html)

|

| 61 |

+

|

| 62 |

+

### Config [packages.txt](./packages.txt)

|

| 63 |

+

|

| 64 |

+

You can add your default apt-get installed packages here.

|

| 65 |

+

|

| 66 |

+

```txt

|

| 67 |

+

wget

|

| 68 |

+

curl

|

| 69 |

+

git

|

| 70 |

+

```

|

| 71 |

+

|

| 72 |

+

### Config [ports.txt](./ports.txt)

|

| 73 |

+

|

| 74 |

+

You can add some ports enabled for docker container here.

|

| 75 |

+

|

| 76 |

+

```txt

|

| 77 |

+

-p 6006:6006

|

| 78 |

+

-p 8080:8080

|

| 79 |

+

```

|

| 80 |

+

|

| 81 |

+

### Config [postinstallscript.sh](./postinstallscript.sh)

|

| 82 |

+

|

| 83 |

+

You can add your custom script to run when build docker image.

|

| 84 |

+

|

| 85 |

+

## Q&A

|

| 86 |

+

|

| 87 |

+

If you have any use problems, please contact to <simonlee235@gmail.com>.

|

docker/build_docker.png

ADDED

|

docker/docker_build.sh

ADDED

|

@@ -0,0 +1,8 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

#!/usr/bin/env bash

|

| 2 |

+

|

| 3 |

+

WORK_DIR=$(dirname "$(readlink -f "$0")")

|

| 4 |

+

cd $WORK_DIR

|

| 5 |

+

|

| 6 |

+

source setup_env.sh

|

| 7 |

+

|

| 8 |

+

docker build -t $TAG --build-arg BASE_TAG=$BASE_TAG --build-arg USER_NAME=$USER_NAME --build-arg USER_PASSWD=$USER_PASSWD . -f dockerfile.base

|

docker/docker_run.sh

ADDED

|

@@ -0,0 +1,45 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

#!/usr/bin/env bash

|

| 2 |

+

|

| 3 |

+

WORK_DIR=$(dirname "$(readlink -f "$0")")

|

| 4 |

+

source $WORK_DIR/setup_env.sh

|

| 5 |

+

|

| 6 |

+

RUNNING_IDS="$(docker ps --filter ancestor=$TAG --format "{{.ID}}")"

|

| 7 |

+

|

| 8 |

+

if [ -n "$RUNNING_IDS" ]; then

|

| 9 |

+

# Initialize an array to hold the container IDs

|

| 10 |

+

declare -a container_ids=($RUNNING_IDS)

|

| 11 |

+

|

| 12 |

+

# Get the first container ID using array indexing

|

| 13 |

+

ID=${container_ids[0]}

|

| 14 |

+

|

| 15 |

+

# Print the first container ID

|

| 16 |

+

echo ' '

|

| 17 |

+

echo "The running container ID is: $ID, enter it!"

|

| 18 |

+

else

|

| 19 |

+

echo ' '

|

| 20 |

+

echo "Not found running containers, run it!"

|

| 21 |

+

|

| 22 |

+

# Run a new docker container instance

|

| 23 |

+

ID=$(docker run \

|

| 24 |

+

--rm \

|

| 25 |

+

--gpus all \

|

| 26 |

+

-itd \

|

| 27 |

+

--ipc=host \

|

| 28 |

+

--ulimit memlock=-1 \

|

| 29 |

+

--ulimit stack=67108864 \

|

| 30 |

+

-e DISPLAY=$DISPLAY \

|

| 31 |

+

-v /tmp/.X11-unix/:/tmp/.X11-unix/ \

|

| 32 |

+

-v $PWD:/home/$USER_NAME/workspace \

|

| 33 |

+

-w /home/$USER_NAME/workspace \

|

| 34 |

+

$(cat $WORK_DIR/ports.txt) \

|

| 35 |

+

$TAG)

|

| 36 |

+

fi

|

| 37 |

+

|

| 38 |

+

docker logs $ID

|

| 39 |

+

|

| 40 |

+

echo ' '

|

| 41 |

+

echo ' '

|

| 42 |

+

echo '========================================='

|

| 43 |

+

echo ' '

|

| 44 |

+

|

| 45 |

+

docker exec -it $ID bash

|

docker/dockerfile.base

ADDED

|

@@ -0,0 +1,24 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

ARG BASE_TAG

|

| 2 |

+

FROM ${BASE_TAG}

|

| 3 |

+

ARG USER_NAME=myuser

|

| 4 |

+

ARG USER_PASSWD=111111

|

| 5 |

+

ARG DEBIAN_FRONTEND=noninteractive

|

| 6 |

+

|

| 7 |

+

# Pre-install packages, pip install requirements and run post install script.

|

| 8 |

+

COPY packages.txt .

|

| 9 |

+

COPY requirements.txt .

|

| 10 |

+

COPY postinstallscript.sh .

|

| 11 |

+

RUN apt-get update && apt-get install -y sudo $(cat packages.txt)

|

| 12 |

+

RUN pip install --no-cache-dir -r requirements.txt

|

| 13 |

+

RUN bash postinstallscript.sh

|

| 14 |

+

|

| 15 |

+

# Create a new user and group using the username argument

|

| 16 |

+

RUN groupadd -r ${USER_NAME} && useradd -r -m -g${USER_NAME} ${USER_NAME}

|

| 17 |

+

RUN echo "${USER_NAME}:${USER_PASSWD}" | chpasswd

|

| 18 |

+

RUN usermod -aG sudo ${USER_NAME}

|

| 19 |

+

USER ${USER_NAME}

|

| 20 |

+

ENV USER=${USER_NAME}

|

| 21 |

+

WORKDIR /home/${USER_NAME}/workspace

|

| 22 |

+

|

| 23 |

+

# Set the prompt to highlight the username

|

| 24 |

+

RUN echo "export PS1='\[\033[01;32m\]\u\[\033[00m\]@\[\033[01;34m\]\h\[\033[00m\]:\[\033[01;36m\]\w\[\033[00m\]\$'" >> /home/${USER_NAME}/.bashrc

|

docker/packages.txt

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

wget

|

| 2 |

+

curl

|

| 3 |

+

git

|

docker/ports.txt

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

-p 6006:6006

|

docker/postinstallscript.sh

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

#!/usr/bin/env bash

|

| 2 |

+

# this script will run when build docker image.

|

| 3 |

+

|

docker/requirements.txt

ADDED

|

@@ -0,0 +1,40 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

setuptools>=61.0

|

| 2 |

+

torch==2.0.1

|

| 3 |

+

torchvision==0.15.2

|

| 4 |

+

transformers==4.32.0

|

| 5 |

+

albumentations==1.4.0

|

| 6 |

+

av==11.0.0

|

| 7 |

+

decord==0.6.0

|

| 8 |

+

einops==0.3.0

|

| 9 |

+

fastapi==0.110.0

|

| 10 |

+

accelerate==0.21.0

|

| 11 |

+

gdown==5.1.0

|

| 12 |

+

h5py==3.10.0

|

| 13 |

+

idna==3.6

|

| 14 |

+

imageio==2.34.0

|

| 15 |

+

matplotlib==3.7.5

|

| 16 |

+

numpy==1.24.4

|

| 17 |

+

omegaconf==2.1.1

|

| 18 |

+

opencv-python==4.9.0.80

|

| 19 |

+

opencv-python-headless==4.9.0.80

|

| 20 |

+

pandas==2.0.3

|

| 21 |

+

pillow==10.2.0

|

| 22 |

+

pydub==0.25.1

|

| 23 |

+

pytorch-lightning==1.4.2

|

| 24 |

+

pytorchvideo==0.1.5

|

| 25 |

+

PyYAML==6.0.1

|

| 26 |

+

regex==2023.12.25

|

| 27 |

+

requests==2.31.0

|

| 28 |

+

scikit-learn==1.3.2

|

| 29 |

+

scipy==1.10.1

|

| 30 |

+

six==1.16.0

|

| 31 |

+

tensorboard==2.14.0

|

| 32 |

+

test-tube==0.7.5

|

| 33 |

+

timm==0.9.16

|

| 34 |

+

torchdiffeq==0.2.3

|

| 35 |

+

torchmetrics==0.5.0

|

| 36 |

+

tqdm==4.66.2

|

| 37 |

+

urllib3==2.2.1

|

| 38 |

+

uvicorn==0.27.1

|

| 39 |

+

diffusers==0.24.0

|

| 40 |

+

scikit-video==1.1.11

|

docker/run_docker.png

ADDED

|

docker/setup_env.sh

ADDED

|

@@ -0,0 +1,11 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Docker tag for new build image

|

| 2 |

+

TAG=open_sora_plan:dev

|

| 3 |

+

|

| 4 |

+

# Base docker image tag used by docker build

|

| 5 |

+

BASE_TAG=nvcr.io/nvidia/pytorch:23.05-py3

|

| 6 |

+

|

| 7 |

+

# User name used in docker container

|

| 8 |

+

USER_NAME=developer

|

| 9 |

+

|

| 10 |

+

# User password used in docker container

|

| 11 |

+

USER_PASSWD=666666

|

docs/Contribution_Guidelines.md

ADDED

|

@@ -0,0 +1,87 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Contributing to the Open-Sora Plan Community

|

| 2 |

+

|

| 3 |

+

The Open-Sora Plan open-source community is a collaborative initiative driven by the community, emphasizing a commitment to being free and void of exploitation. Organized spontaneously by community members, we invite you to contribute to the Open-Sora Plan open-source community and help elevate it to new heights!

|

| 4 |

+

|

| 5 |

+

## Submitting a Pull Request (PR)

|

| 6 |

+

|

| 7 |

+

As a contributor, before submitting your request, kindly follow these guidelines:

|

| 8 |

+

|

| 9 |

+

1. Start by checking the [Open-Sora Plan GitHub](https://github.com/PKU-YuanGroup/Open-Sora-Plan/pulls) to see if there are any open or closed pull requests related to your intended submission. Avoid duplicating existing work.

|

| 10 |

+

|

| 11 |

+

2. [Fork](https://github.com/PKU-YuanGroup/Open-Sora-Plan/fork) the [open-sora plan](https://github.com/PKU-YuanGroup/Open-Sora-Plan) repository and download your forked repository to your local machine.

|

| 12 |

+

|

| 13 |

+

```bash

|

| 14 |

+

git clone [your-forked-repository-url]

|

| 15 |

+

```

|

| 16 |

+

|

| 17 |

+

3. Add the original Open-Sora Plan repository as a remote to sync with the latest updates:

|

| 18 |

+

|

| 19 |

+

```bash

|

| 20 |

+

git remote add upstream https://github.com/PKU-YuanGroup/Open-Sora-Plan

|

| 21 |

+

```

|

| 22 |

+

|

| 23 |

+

4. Sync the code from the main repository to your local machine, and then push it back to your forked remote repository.

|

| 24 |

+

|

| 25 |

+

```

|

| 26 |

+

# Pull the latest code from the upstream branch

|

| 27 |

+

git fetch upstream

|

| 28 |

+

|

| 29 |

+

# Switch to the main branch

|

| 30 |

+

git checkout main

|

| 31 |

+

|

| 32 |

+

# Merge the updates from the upstream branch into main, synchronizing the local main branch with the upstream

|

| 33 |

+

git merge upstream/main

|

| 34 |

+

|

| 35 |

+

# Additionally, sync the local main branch to the remote branch of your forked repository

|

| 36 |

+

git push origin main

|

| 37 |

+

```

|

| 38 |

+

|

| 39 |

+

|

| 40 |

+

> Note: Sync the code from the main repository before each submission.

|

| 41 |

+

|

| 42 |

+

5. Create a branch in your forked repository for your changes, ensuring the branch name is meaningful.

|

| 43 |

+

|

| 44 |

+

```bash

|

| 45 |

+

git checkout -b my-docs-branch main

|

| 46 |

+

```

|

| 47 |

+

|

| 48 |

+

6. While making modifications and committing changes, adhere to our [Commit Message Format](#Commit-Message-Format).

|

| 49 |

+

|

| 50 |

+

```bash

|

| 51 |

+

git commit -m "[docs]: xxxx"

|

| 52 |

+

```

|

| 53 |

+

|

| 54 |

+

7. Push your changes to your GitHub repository.

|

| 55 |

+

|

| 56 |

+

```bash

|

| 57 |

+

git push origin my-docs-branch

|

| 58 |

+

```

|

| 59 |

+

|

| 60 |

+

8. Submit a pull request to `Open-Sora-Plan:main` on the GitHub repository page.

|

| 61 |

+

|

| 62 |

+

## Commit Message Format

|

| 63 |

+

|

| 64 |

+

Commit messages must include both `<type>` and `<summary>` sections.

|

| 65 |

+

|

| 66 |

+

```bash

|

| 67 |

+

[<type>]: <summary>

|

| 68 |

+

│ │

|

| 69 |

+

│ └─⫸ Briefly describe your changes, without ending with a period.

|

| 70 |

+

│

|

| 71 |

+

└─⫸ Commit Type: |docs|feat|fix|refactor|

|

| 72 |

+

```

|

| 73 |

+

|

| 74 |

+

### Type

|

| 75 |

+

|

| 76 |

+

* **docs**: Modify or add documents.

|

| 77 |

+

* **feat**: Introduce a new feature.

|

| 78 |

+

* **fix**: Fix a bug.

|

| 79 |

+

* **refactor**: Restructure code, excluding new features or bug fixes.

|

| 80 |

+

|

| 81 |

+

### Summary

|

| 82 |

+

|

| 83 |

+

Describe modifications in English, without ending with a period.

|

| 84 |

+

|

| 85 |

+

> e.g., git commit -m "[docs]: add a contributing.md file"

|

| 86 |

+

|

| 87 |

+

This guideline is borrowed by [minisora](https://github.com/mini-sora/minisora). We sincerely appreciate MiniSora authors for their awesome templates.

|

docs/Data.md

ADDED

|

@@ -0,0 +1,35 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

|

| 2 |

+

**We need more dataset**, please refer to the [open-sora-Dataset](https://github.com/shaodong233/open-sora-Dataset) for details.

|

| 3 |

+

|

| 4 |

+

|

| 5 |

+

## Sky

|

| 6 |

+

|

| 7 |

+

|

| 8 |

+

This is an un-condition datasets. [Link](https://drive.google.com/open?id=1xWLiU-MBGN7MrsFHQm4_yXmfHBsMbJQo)

|

| 9 |

+

|

| 10 |

+

```

|

| 11 |

+

sky_timelapse

|

| 12 |

+

├── readme

|

| 13 |

+

├── sky_test

|

| 14 |

+

├── sky_train

|

| 15 |

+

├── test_videofolder.py

|

| 16 |

+

└── video_folder.py

|

| 17 |

+

```

|

| 18 |

+

|

| 19 |

+

## UCF101

|

| 20 |

+

|

| 21 |

+

We test the code with UCF-101 dataset. In order to download UCF-101 dataset, you can download the necessary files in [here](https://www.crcv.ucf.edu/data/UCF101.php). The code assumes a `ucf101` directory with the following structure

|

| 22 |

+

```

|

| 23 |

+

UCF-101/

|

| 24 |

+

ApplyEyeMakeup/

|

| 25 |

+

v1.avi

|

| 26 |

+

...

|

| 27 |

+

...

|

| 28 |

+

YoYo/

|

| 29 |

+

v1.avi

|

| 30 |

+

...

|

| 31 |

+

```

|

| 32 |

+

|

| 33 |

+

|

| 34 |

+

## Offline feature extraction

|

| 35 |

+

Coming soon...

|

docs/EVAL.md

ADDED

|

@@ -0,0 +1,110 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Evaluate the generated videos quality

|

| 2 |

+

|

| 3 |

+

You can easily calculate the following video quality metrics, which supports the batch-wise process.

|

| 4 |

+

- **CLIP-SCORE**: It uses the pretrained CLIP model to measure the cosine similarity between two modalities.

|

| 5 |

+

- **FVD**: Frechét Video Distance

|

| 6 |

+

- **SSIM**: structural similarity index measure

|

| 7 |

+

- **LPIPS**: learned perceptual image patch similarity

|

| 8 |

+

- **PSNR**: peak-signal-to-noise ratio

|

| 9 |

+

|

| 10 |

+

# Requirement

|

| 11 |

+

## Environment

|

| 12 |

+

- install Pytorch (torch>=1.7.1)

|

| 13 |

+

- install CLIP

|

| 14 |

+

```

|

| 15 |

+

pip install git+https://github.com/openai/CLIP.git

|

| 16 |

+

```

|

| 17 |

+

- install clip-cose from PyPi

|

| 18 |

+

```

|

| 19 |

+

pip install clip-score

|

| 20 |

+

```

|

| 21 |

+

- Other package

|

| 22 |

+

```

|

| 23 |

+

pip install lpips

|

| 24 |

+

pip install scipy (scipy==1.7.3/1.9.3, if you use 1.11.3, **you will calculate a WRONG FVD VALUE!!!**)

|

| 25 |

+

pip install numpy

|

| 26 |

+

pip install pillow

|

| 27 |

+

pip install torchvision>=0.8.2

|

| 28 |

+

pip install ftfy

|

| 29 |

+

pip install regex

|

| 30 |

+

pip install tqdm

|

| 31 |

+

```

|

| 32 |

+

## Pretrain model

|

| 33 |

+

- FVD

|

| 34 |

+

Before you cacluate FVD, you should first download the FVD pre-trained model. You can manually download any of the following and put it into FVD folder.

|

| 35 |

+

- `i3d_torchscript.pt` from [here](https://www.dropbox.com/s/ge9e5ujwgetktms/i3d_torchscript.pt)

|

| 36 |

+

- `i3d_pretrained_400.pt` from [here](https://onedrive.live.com/download?cid=78EEF3EB6AE7DBCB&resid=78EEF3EB6AE7DBCB%21199&authkey=AApKdFHPXzWLNyI)

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

## Other Notices

|

| 40 |

+

1. Make sure the pixel value of videos should be in [0, 1].

|

| 41 |

+

2. We average SSIM when images have 3 channels, ssim is the only metric extremely sensitive to gray being compared to b/w.

|

| 42 |

+

3. Because the i3d model downsamples in the time dimension, `frames_num` should > 10 when calculating FVD, so FVD calculation begins from 10-th frame, like upper example.

|

| 43 |

+

4. For grayscale videos, we multiply to 3 channels

|

| 44 |

+

5. data input specifications for clip_score

|

| 45 |

+

> - Image Files:All images should be stored in a single directory. The image files can be in either .png or .jpg format.

|

| 46 |

+

>

|

| 47 |

+

> - Text Files: All text data should be contained in plain text files in a separate directory. These text files should have the extension .txt.

|

| 48 |

+

>

|

| 49 |

+

> Note: The number of files in the image directory should be exactly equal to the number of files in the text directory. Additionally, the files in the image directory and text directory should be paired by file name. For instance, if there is a cat.png in the image directory, there should be a corresponding cat.txt in the text directory.

|

| 50 |

+

>

|

| 51 |

+

> Directory Structure Example:

|

| 52 |

+

> ```

|

| 53 |

+

> ├── path/to/image

|

| 54 |

+

> │ ├── cat.png

|

| 55 |

+

> │ ├── dog.png

|

| 56 |

+

> │ └── bird.jpg

|

| 57 |

+

> └── path/to/text

|

| 58 |

+

> ├── cat.txt

|

| 59 |

+

> ├── dog.txt

|

| 60 |

+

> └── bird.txt

|

| 61 |

+

> ```

|

| 62 |

+

|

| 63 |

+

6. data input specifications for fvd, psnr, ssim, lpips

|

| 64 |

+

|

| 65 |

+

> Directory Structure Example:

|

| 66 |

+

> ```

|

| 67 |

+

> ├── path/to/generated_image

|

| 68 |

+

> │ ├── cat.mp4

|

| 69 |

+

> │ ├── dog.mp4

|

| 70 |

+

> │ └── bird.mp4

|

| 71 |

+

> └── path/to/real_image

|

| 72 |

+

> ├── cat.mp4

|

| 73 |

+

> ├── dog.mp4

|

| 74 |

+

> └── bird.mp4

|

| 75 |

+

> ```

|

| 76 |

+

|

| 77 |

+

|

| 78 |

+

|

| 79 |

+

# Usage

|

| 80 |

+

|

| 81 |

+

```

|

| 82 |

+

# you change the file path and need to set the frame_num, resolution etc...

|

| 83 |

+

|

| 84 |

+

# clip_score cross modality

|

| 85 |

+

cd opensora/eval

|

| 86 |

+

bash script/cal_clip_score.sh

|

| 87 |

+

|

| 88 |

+

|

| 89 |

+

|

| 90 |

+

# fvd

|

| 91 |

+

cd opensora/eval

|

| 92 |

+

bash script/cal_fvd.sh

|

| 93 |

+

|

| 94 |

+

# psnr

|

| 95 |

+

cd opensora/eval

|

| 96 |

+

bash eval/script/cal_psnr.sh

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

# ssim

|

| 100 |

+

cd opensora/eval

|

| 101 |

+

bash eval/script/cal_ssim.sh

|

| 102 |

+

|

| 103 |

+

|

| 104 |

+

# lpips

|

| 105 |

+

cd opensora/eval

|

| 106 |

+

bash eval/script/cal_lpips.sh

|

| 107 |

+

```

|

| 108 |

+

|

| 109 |

+

# Acknowledgement

|

| 110 |

+

The evaluation codebase refers to [clip-score](https://github.com/Taited/clip-score) and [common_metrics](https://github.com/JunyaoHu/common_metrics_on_video_quality).

|

docs/Report-v1.0.0.md

ADDED

|

@@ -0,0 +1,131 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# Report v1.0.0

|

| 2 |

+

|

| 3 |

+

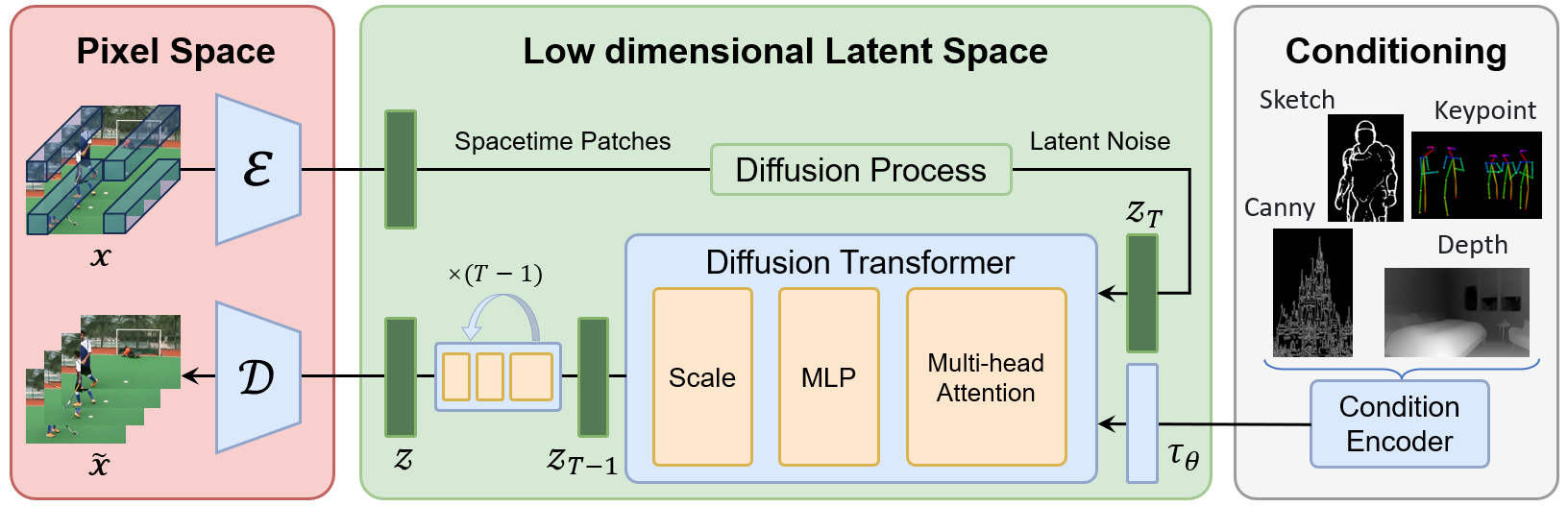

In March 2024, we launched a plan called Open-Sora-Plan, which aims to reproduce the OpenAI [Sora](https://openai.com/sora) through an open-source framework. As a foundational open-source framework, it enables training of video generation models, including Unconditioned Video Generation, Class Video Generation, and Text-to-Video Generation.

|

| 4 |

+

|

| 5 |

+

**Today, we are thrilled to present Open-Sora-Plan v1.0.0, which significantly enhances video generation quality and text control capabilities.**

|

| 6 |

+

|

| 7 |

+

Compared with previous video generation model, Open-Sora-Plan v1.0.0 has several improvements:

|

| 8 |

+

|

| 9 |

+

1. **Efficient training and inference with CausalVideoVAE**. We apply a spatial-temporal compression to the videos by 4×8×8.

|

| 10 |

+

2. **Joint image-video training for better quality**. Our CausalVideoVAE considers the first frame as an image, allowing for the simultaneous encoding of both images and videos in a natural manner. This allows the diffusion model to grasp more spatial-visual details to improve visual quality.

|

| 11 |

+

|

| 12 |

+

### Open-Source Release

|

| 13 |

+

We open-source the Open-Sora-Plan to facilitate future development of Video Generation in the community. Code, data, model will be made publicly available.

|

| 14 |

+

- Demo: Hugging Face demo [here](https://huggingface.co/spaces/LanguageBind/Open-Sora-Plan-v1.0.0). 🤝 Enjoying the [](https://replicate.com/camenduru/open-sora-plan-512x512) and [](https://colab.research.google.com/github/camenduru/Open-Sora-Plan-jupyter/blob/main/Open_Sora_Plan_jupyter.ipynb), created by [@camenduru](https://github.com/camenduru), who generously supports our research!

|

| 15 |

+

- Code: All training scripts and sample scripts.

|

| 16 |

+

- Model: Both Diffusion Model and CausalVideoVAE [here](https://huggingface.co/LanguageBind/Open-Sora-Plan-v1.0.0).

|

| 17 |

+

- Data: Both raw videos and captions [here](https://huggingface.co/datasets/LanguageBind/Open-Sora-Plan-v1.0.0).

|

| 18 |

+

|

| 19 |

+

## Gallery

|

| 20 |

+

|

| 21 |

+

Open-Sora-Plan v1.0.0 supports joint training of images and videos. Here, we present the capabilities of Video/Image Reconstruction and Generation:

|

| 22 |

+

|

| 23 |

+

### CausalVideoVAE Reconstruction

|

| 24 |

+

|

| 25 |

+

**Video Reconstruction** with 720×1280. Since github can't upload large video, we put it here: [1](https://streamable.com/gqojal), [2](https://streamable.com/6nu3j8).

|

| 26 |

+

|

| 27 |

+

https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/88202804/c100bb02-2420-48a3-9d7b-4608a41f14aa

|

| 28 |

+

|

| 29 |

+

https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/88202804/8aa8f587-d9f1-4e8b-8a82-d3bf9ba91d68

|

| 30 |

+

|

| 31 |

+

**Image Reconstruction** in 1536×1024.

|

| 32 |

+

|

| 33 |

+

<img src="https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/88202804/1684c3ec-245d-4a60-865c-b8946d788eb9" width="45%"/> <img src="https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/88202804/46ef714e-3e5b-492c-aec4-3793cb2260b5" width="45%"/>

|

| 34 |

+

|

| 35 |

+

**Text-to-Video Generation** with 65×1024×1024

|

| 36 |

+

|

| 37 |

+

https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/62638829/2641a8aa-66ac-4cda-8279-86b2e6a6e011

|

| 38 |

+

|

| 39 |

+

**Text-to-Video Generation** with 65×512×512

|

| 40 |

+

|

| 41 |

+

https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/62638829/37e3107e-56b3-4b09-8920-fa1d8d144b9e

|

| 42 |

+

|

| 43 |

+

|

| 44 |

+

**Text-to-Image Generation** with 512×512

|

| 45 |

+

|

| 46 |

+

|

| 47 |

+

|

| 48 |

+

## Detailed Technical Report

|

| 49 |

+

|

| 50 |

+

### CausalVideoVAE

|

| 51 |

+

|

| 52 |

+

#### Model Structure

|

| 53 |

+

|

| 54 |

+

|

| 55 |

+

|

| 56 |

+

The CausalVideoVAE architecture inherits from the [Stable-Diffusion Image VAE](https://github.com/CompVis/stable-diffusion/tree/main). To ensure that the pretrained weights of the Image VAE can be seamlessly applied to the Video VAE, the model structure has been designed as follows:

|

| 57 |

+

|

| 58 |

+

1. **CausalConv3D**: Converting Conv2D to CausalConv3D enables joint training of image and video data. CausalConv3D applies a special treatment to the first frame, as it does not have access to subsequent frames. For more specific details, please refer to https://github.com/PKU-YuanGroup/Open-Sora-Plan/pull/145

|

| 59 |

+

|

| 60 |

+

2. **Initialization**: There are two common [methods](https://github.com/hassony2/inflated_convnets_pytorch/blob/master/src/inflate.py#L5) to expand Conv2D to Conv3D: average initialization and center initialization. But we employ a specific initialization method (tail initialization). This initialization method ensures that without any training, the model is capable of directly reconstructing images, and even videos.

|

| 61 |

+

|

| 62 |

+

#### Training Details

|

| 63 |

+

|

| 64 |

+

<img width="833" alt="image" src="https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/88202804/9ffb6dc4-23f6-4274-a066-bbebc7522a14">

|

| 65 |

+

|

| 66 |

+

We present the loss curves for two distinct initialization methods under 17×256×256. The yellow curve represents the loss using tail init, while the blue curve corresponds to the loss from center initialization. As shown in the graph, tail initialization demonstrates better performance on the loss curve. Additionally, we found that center initialization leads to error accumulation, causing the collapse over extended durations.

|

| 67 |

+

|

| 68 |

+

#### Inference Tricks

|

| 69 |

+

Despite the VAE in Diffusion training being frozen, we still find it challenging to afford the cost of the CausalVideoVAE. In our case, with 80GB of GPU memory, we can only infer a video of either 256×512×512 or 32×1024×1024 resolution using half-precision, which limits our ability to scale up to longer and higher-resolution videos. Therefore, we adopt tile convolution, which allows us to infer videos of arbitrary duration or resolution with nearly constant memory usage.

|

| 70 |

+

|

| 71 |

+

### Data Construction

|

| 72 |

+

We define a high-quality video dataset based on two core principles: (1) No content-unrelated watermarks. (2) High-quality and dense captions.

|

| 73 |

+

|

| 74 |

+

**For principles 1**, we crawled approximately 40,000 videos from open-source websites under the CC0 license. Specifically, we obtained 1,244 videos from [mixkit](https://mixkit.co/), 7,408 videos from [pexels](https://www.pexels.com/), and 31,617 videos from [pixabay](https://pixabay.com/). These videos adhere to the principle of having no content-unrelated watermarks. According to the scene transformation and clipping script provided by [Panda70M](https://github.com/snap-research/Panda-70M/blob/main/splitting/README.md), we have divided these videos into approximately 434,000 video clips. In fact, based on our clipping results, 99% of the videos obtained from these online sources are found to contain single scenes. Additionally, we have observed that over 60% of the crawled data comprises landscape videos.

|

| 75 |

+

|

| 76 |

+

**For principles 2**, it is challenging to directly crawl a large quantity of high-quality dense captions from the internet. Therefore, we utilize a mature Image-captioner model to obtain high-quality dense captions. We conducted ablation experiments on two multimodal large models: [ShareGPT4V-Captioner-7B](https://github.com/InternLM/InternLM-XComposer/blob/main/projects/ShareGPT4V/README.md) and [LLaVA-1.6-34B](https://github.com/haotian-liu/LLaVA). The former is specifically designed for caption generation, while the latter is a general-purpose multimodal large model. After conducting our ablation experiments, we found that they are comparable in performance. However, there is a significant difference in their inference speed on the A800 GPU: 40s/it of batch size of 12 for ShareGPT4V-Captioner-7B, 15s/it of batch size of 1 for LLaVA-1.6-34B. We open-source all annotations [here](https://huggingface.co/datasets/LanguageBind/Open-Sora-Plan-v1.0.0)。

|

| 77 |

+

|

| 78 |

+

### Training Diffusion Model

|

| 79 |

+

Similar to previous work, we employ a multi-stage cascaded training approach, which consumes a total of 2,048 A800 GPU hours. We found that joint training with images significantly accelerates model convergence and enhances visual perception, aligning with the findings of [Latte](https://github.com/Vchitect/Latte). Below is our training card:

|

| 80 |

+

|

| 81 |

+

| Name | Stage 1 | Stage 2 | Stage 3 | Stage 4 |

|

| 82 |

+

|---|---|---|---|---|

|

| 83 |

+

| Training Video Size | 17×256×256 | 65×256×256 | 65×512×512 | 65×1024×1024 |

|

| 84 |

+

| Compute (#A800 GPU x #Hours) | 32 × 40 | 32 × 18 | 32 × 6 | Under training |

|

| 85 |

+

| Checkpoint | [HF](https://huggingface.co/LanguageBind/Open-Sora-Plan-v1.0.0/tree/main/17x256x256) | [HF](https://huggingface.co/LanguageBind/Open-Sora-Plan-v1.0.0/tree/main/65x256x256) | [HF](https://huggingface.co/LanguageBind/Open-Sora-Plan-v1.0.0/tree/main/65x512x512) | Under training |

|

| 86 |

+

| Log | [wandb](https://api.wandb.ai/links/linbin/p6n3evym) | [wandb](https://api.wandb.ai/links/linbin/t2g53sew) | [wandb](https://api.wandb.ai/links/linbin/uomr0xzb) | Under training |

|

| 87 |

+

| Training Data | ~40k videos | ~40k videos | ~40k videos | ~40k videos |

|

| 88 |

+

|

| 89 |

+

## Next Release Preview

|

| 90 |

+

### CausalVideoVAE

|

| 91 |

+

Currently, the released version of CausalVideoVAE (v1.0.0) has two main drawbacks: **motion blurring** and **gridding effect**. We have made a series of improvements to CausalVideoVAE to reduce its inference cost and enhance its performance. We are currently referring to this enhanced version as the "preview version," which will be released in the next update. Preview reconstruction is as follows:

|

| 92 |

+

|

| 93 |

+

**1 min Video Reconstruction with 720×1280**. Since github can't put too big video, we put it here: [origin video](https://streamable.com/u4onbb), [reconstruction video](https://streamable.com/qt8ncc).

|

| 94 |

+

|

| 95 |

+

https://github.com/PKU-YuanGroup/Open-Sora-Plan/assets/88202804/cdcfa9a3-4de0-42d4-94c0-0669710e407b

|

| 96 |

+

|

| 97 |

+

We randomly selected 100 samples from the validation set of Kinetics-400 for evaluation, and the results are presented in the following table:

|

| 98 |

+

|

| 99 |

+

| | SSIM↑ | LPIPS↓ | PSNR↑ | FLOLPIPS↓ |

|

| 100 |

+

|---|---|---|---|---|

|

| 101 |

+

| v1.0.0 | 0.829 | 0.106 | 27.171 | 0.119 |

|

| 102 |

+

| Preview | 0.877 | 0.064 | 29.695 | 0.070 |

|

| 103 |

+

|

| 104 |

+

#### Motion Blurring

|

| 105 |

+

|

| 106 |

+

| **v1.0.0** | **Preview** |

|

| 107 |

+

| --- | --- |

|

| 108 |

+

|  |  |

|

| 109 |

+

|

| 110 |

+

#### Gridding effect

|

| 111 |

+

|

| 112 |

+

| **v1.0.0** | **Preview** |

|

| 113 |

+

| --- | --- |

|

| 114 |

+

|  |  |

|

| 115 |

+

|

| 116 |

+

### Data Construction

|

| 117 |

+

|

| 118 |

+

**Data source**. As mentioned earlier, over 60% of our dataset consists of landscape videos. This implies that our ability to generate videos in other domains is limited. However, most of the current large-scale open-source datasets are primarily obtained through web scraping from platforms like YouTube. While these datasets provide a vast quantity of videos, we have concerns about the quality of the videos themselves. Therefore, we will continue to collect high-quality datasets and also welcome recommendations from the open-source community.

|

| 119 |

+

|

| 120 |

+

**Caption Generation Pipeline**. As the video duration increases, we need to consider more efficient methods for video caption generation instead of relying solely on large multimodal image models. We are currently developing a new video caption generation pipeline that provides robust support for long videos. We are excited to share more details with you in the near future. Stay tuned!

|

| 121 |

+

|

| 122 |

+

### Training Diffusion Model

|

| 123 |

+

Although v1.0.0 has shown promising results, we acknowledge that we still have a ways to go to reach the level of Sora. In our upcoming work, we will primarily focus on three aspects:

|

| 124 |

+

|

| 125 |

+

1. **Training support for dynamic resolution and duration**: We aim to develop techniques that enable training models with varying resolutions and durations, allowing for more flexible and adaptable training processes.

|

| 126 |

+

|

| 127 |

+

2. **Support for longer video generation**: We will explore methods to extend the generation capabilities of our models, enabling them to produce longer videos beyond the current limitations.

|

| 128 |

+

|

| 129 |

+

3. **Enhanced conditional control**: We seek to enhance the conditional control capabilities of our models, providing users with more options and control over the generated videos.

|

| 130 |

+

|

| 131 |

+

Furthermore, through careful observation of the generated videos, we have noticed the presence of some non-physiological speckles or abnormal flow. This can be attributed to the limited performance of CausalVideoVAE, as mentioned earlier. In future experiments, we plan to retrain a diffusion model using a more powerful version of CausalVideoVAE to address these issues.

|

docs/VQVAE.md

ADDED

|

@@ -0,0 +1,57 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

# VQVAE Documentation

|

| 2 |

+

|

| 3 |

+

# Introduction

|

| 4 |

+

|

| 5 |

+

Vector Quantized Variational AutoEncoders (VQ-VAE) is a type of autoencoder that uses a discrete latent representation. It is particularly useful for tasks that require discrete latent variables, such as text-to-speech and video generation.

|

| 6 |

+

|

| 7 |

+

# Usage

|

| 8 |

+

|

| 9 |

+

## Initialization

|

| 10 |

+

|

| 11 |

+

To initialize a VQVAE model, you can use the `VideoGPTVQVAE` class. This class is a part of the `opensora.models.ae` module.

|

| 12 |

+

|

| 13 |

+

```python

|

| 14 |

+

from opensora.models.ae import VideoGPTVQVAE

|

| 15 |

+

|

| 16 |

+

vqvae = VideoGPTVQVAE()

|

| 17 |

+

```

|

| 18 |

+

|

| 19 |

+

### Training

|

| 20 |

+

|

| 21 |

+

To train the VQVAE model, you can use the `train_videogpt.sh` script. This script will train the model using the parameters specified in the script.

|

| 22 |

+

|

| 23 |

+

```bash

|

| 24 |

+

bash scripts/videogpt/train_videogpt.sh

|

| 25 |

+

```

|

| 26 |

+

|

| 27 |

+

### Loading Pretrained Models

|

| 28 |

+

|

| 29 |

+

You can load a pretrained model using the `download_and_load_model` method. This method will download the checkpoint file and load the model.

|

| 30 |

+

|

| 31 |

+

```python

|

| 32 |

+

vqvae = VideoGPTVQVAE.download_and_load_model("bair_stride4x2x2")

|

| 33 |

+

```

|

| 34 |

+

|

| 35 |

+

Alternatively, you can load a model from a checkpoint using the `load_from_checkpoint` method.

|

| 36 |

+

|

| 37 |

+

```python

|

| 38 |

+

vqvae = VQVAEModel.load_from_checkpoint("results/VQVAE/checkpoint-1000")

|

| 39 |

+

```

|

| 40 |

+

|

| 41 |

+

### Encoding and Decoding

|

| 42 |

+

|

| 43 |

+

You can encode a video using the `encode` method. This method will return the encodings and embeddings of the video.

|

| 44 |

+

|

| 45 |

+

```python

|

| 46 |

+

encodings, embeddings = vqvae.encode(x_vae, include_embeddings=True)

|

| 47 |

+

```

|

| 48 |

+

|

| 49 |

+

You can reconstruct a video from its encodings using the decode method.

|

| 50 |

+

|

| 51 |

+

```python

|

| 52 |

+

video_recon = vqvae.decode(encodings)

|

| 53 |

+

```

|

| 54 |

+

|

| 55 |

+

## Testing

|

| 56 |

+

|

| 57 |

+

You can test the VQVAE model by reconstructing a video. The `examples/rec_video.py` script provides an example of how to do this.

|

examples/get_latents_std.py

ADDED

|

@@ -0,0 +1,38 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import torch

|

| 2 |

+

from torch.utils.data import DataLoader, Subset

|

| 3 |

+

import sys

|

| 4 |

+

sys.path.append(".")

|

| 5 |

+

from opensora.models.ae.videobase import CausalVAEModel, CausalVAEDataset

|

| 6 |

+

|

| 7 |

+

num_workers = 4

|

| 8 |

+

batch_size = 12

|

| 9 |

+

|

| 10 |

+

torch.manual_seed(0)

|

| 11 |

+

torch.set_grad_enabled(False)

|

| 12 |

+

|

| 13 |

+

device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')

|

| 14 |

+

|

| 15 |

+