Spaces:

Runtime error

Runtime error

lhzstar

commited on

Commit

·

abca9bf

1

Parent(s):

370e3bc

new commits

Browse files- README.md +33 -0

- app.py +1 -1

- celebbot.py +5 -8

- img/flow_chart.jpg +0 -0

- requirements.txt +2 -1

- run_cli.py +2 -1

- run_eval.py +29 -3

- unlimiformer/__init__.py +2 -0

- unlimiformer/configs/data/contract_nli.json +12 -0

- unlimiformer/configs/data/gov_report.json +12 -0

- unlimiformer/configs/data/hotpotqa.json +12 -0

- unlimiformer/configs/data/hotpotqa_second_only.json +12 -0

- unlimiformer/configs/data/narative_qa.json +12 -0

- unlimiformer/configs/data/qasper.json +12 -0

- unlimiformer/configs/data/qmsum.json +12 -0

- unlimiformer/configs/data/quality.json +12 -0

- unlimiformer/configs/data/squad.json +12 -0

- unlimiformer/configs/data/squad_ordered_distractors.json +12 -0

- unlimiformer/configs/data/squad_shuffled_distractors.json +12 -0

- unlimiformer/configs/data/summ_screen_fd.json +12 -0

- unlimiformer/configs/model/bart_base_sled.json +6 -0

- unlimiformer/configs/model/bart_large_sled.json +6 -0

- unlimiformer/configs/model/primera_govreport_sled.json +9 -0

- unlimiformer/configs/training/base_training_args.json +22 -0

- unlimiformer/index_building.py +161 -0

- unlimiformer/metrics/__init__.py +1 -0

- unlimiformer/metrics/metrics.py +182 -0

- unlimiformer/model.py +1157 -0

- unlimiformer/random_training_unlimiformer.py +224 -0

- unlimiformer/run.py +1180 -0

- unlimiformer/run_generation.py +577 -0

- unlimiformer/usage.py +91 -0

- unlimiformer/utils/__init__.py +0 -0

- unlimiformer/utils/config.py +13 -0

- unlimiformer/utils/custom_hf_argument_parser.py +39 -0

- unlimiformer/utils/custom_seq2seq_trainer.py +328 -0

- unlimiformer/utils/decoding.py +59 -0

- unlimiformer/utils/duplicates.py +15 -0

- unlimiformer/utils/override_training_args.py +106 -0

- utils.py +24 -2

README.md

CHANGED

|

@@ -11,3 +11,36 @@ license: apache-2.0

|

|

| 11 |

---

|

| 12 |

|

| 13 |

# CelebChat

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 11 |

---

|

| 12 |

|

| 13 |

# CelebChat

|

| 14 |

+

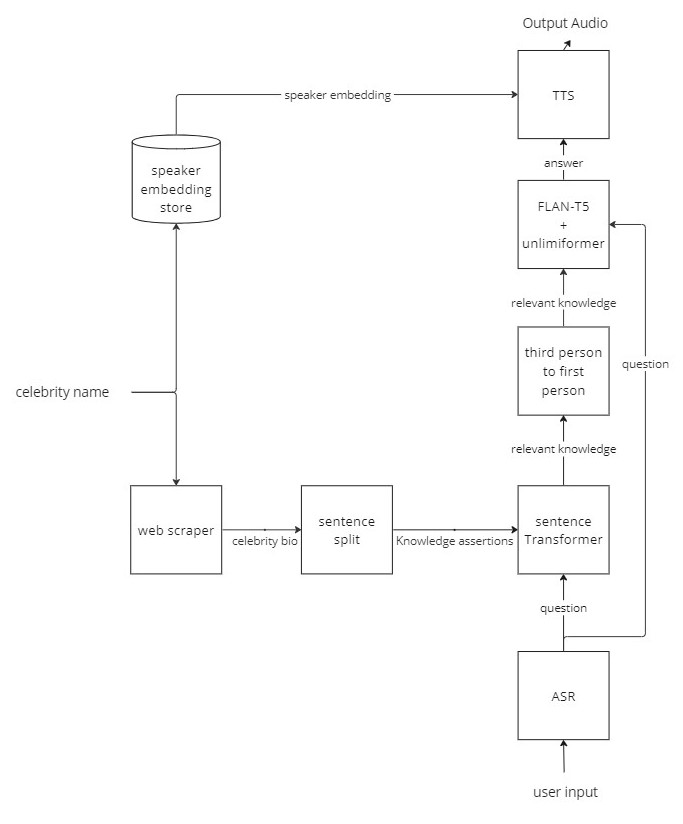

CelebChat is a Hugging Face Space where the user can talk with nearly 50 virtual celebrities.

|

| 15 |

+

|

| 16 |

+

## System details

|

| 17 |

+

|

| 18 |

+

|

| 19 |

+

## Citation

|

| 20 |

+

```

|

| 21 |

+

@article{bertsch2023unlimiformer,

|

| 22 |

+

title={Unlimiformer: Long-Range Transformers with Unlimited Length Input},

|

| 23 |

+

author={Bertsch, Amanda and Alon, Uri and Neubig, Graham and Gormley, Matthew R},

|

| 24 |

+

journal={arXiv preprint arXiv:2305.01625},

|

| 25 |

+

year={2023}

|

| 26 |

+

}

|

| 27 |

+

|

| 28 |

+

@misc{https://doi.org/10.48550/arxiv.2210.11416,

|

| 29 |

+

doi = {10.48550/ARXIV.2210.11416},

|

| 30 |

+

|

| 31 |

+

url = {https://arxiv.org/abs/2210.11416},

|

| 32 |

+

|

| 33 |

+

author = {Chung, Hyung Won and Hou, Le and Longpre, Shayne and Zoph, Barret and Tay, Yi and Fedus, William and Li, Eric and Wang, Xuezhi and Dehghani, Mostafa and Brahma, Siddhartha and Webson, Albert and Gu, Shixiang Shane and Dai, Zhuyun and Suzgun, Mirac and Chen, Xinyun and Chowdhery, Aakanksha and Narang, Sharan and Mishra, Gaurav and Yu, Adams and Zhao, Vincent and Huang, Yanping and Dai, Andrew and Yu, Hongkun and Petrov, Slav and Chi, Ed H. and Dean, Jeff and Devlin, Jacob and Roberts, Adam and Zhou, Denny and Le, Quoc V. and Wei, Jason},

|

| 34 |

+

|

| 35 |

+

keywords = {Machine Learning (cs.LG), Computation and Language (cs.CL), FOS: Computer and information sciences, FOS: Computer and information sciences},

|

| 36 |

+

|

| 37 |

+

title = {Scaling Instruction-Finetuned Language Models},

|

| 38 |

+

|

| 39 |

+

publisher = {arXiv},

|

| 40 |

+

|

| 41 |

+

year = {2022},

|

| 42 |

+

|

| 43 |

+

copyright = {Creative Commons Attribution 4.0 International}

|

| 44 |

+

}

|

| 45 |

+

|

| 46 |

+

```

|

app.py

CHANGED

|

@@ -64,7 +64,7 @@ def main():

|

|

| 64 |

st.session_state["celeb_bot"] = CelebBot(st.session_state["celeb_name"],

|

| 65 |

celeb_gender,

|

| 66 |

get_tokenizer(st.session_state["QA_model_path"]),

|

| 67 |

-

get_seq2seq_model(st.session_state["QA_model_path"]) if "flan-t5" in st.session_state["QA_model_path"] else get_causal_model(st.session_state["QA_model_path"]),

|

| 68 |

get_tokenizer(st.session_state["sentTr_model_path"]),

|

| 69 |

get_auto_model(st.session_state["sentTr_model_path"]),

|

| 70 |

*preprocess_text(st.session_state["celeb_name"], knowledge, "en_core_web_lg")

|

|

|

|

| 64 |

st.session_state["celeb_bot"] = CelebBot(st.session_state["celeb_name"],

|

| 65 |

celeb_gender,

|

| 66 |

get_tokenizer(st.session_state["QA_model_path"]),

|

| 67 |

+

get_seq2seq_model(st.session_state["QA_model_path"], _tokenizer=get_tokenizer(st.session_state["QA_model_path"])) if "flan-t5" in st.session_state["QA_model_path"] else get_causal_model(st.session_state["QA_model_path"]),

|

| 68 |

get_tokenizer(st.session_state["sentTr_model_path"]),

|

| 69 |

get_auto_model(st.session_state["sentTr_model_path"]),

|

| 70 |

*preprocess_text(st.session_state["celeb_name"], knowledge, "en_core_web_lg")

|

celebbot.py

CHANGED

|

@@ -2,21 +2,17 @@ import datetime

|

|

| 2 |

import numpy as np

|

| 3 |

import torch

|

| 4 |

import torch.nn.functional as F

|

| 5 |

-

import os

|

| 6 |

-

import json

|

| 7 |

import speech_recognition as sr

|

| 8 |

import re

|

| 9 |

import time

|

| 10 |

-

import spacy

|

| 11 |

-

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, AutoModel

|

| 12 |

import pickle

|

| 13 |

-

import streamlit as st

|

| 14 |

from sklearn.metrics.pairwise import cosine_similarity

|

| 15 |

|

| 16 |

|

| 17 |

# Build the AI

|

| 18 |

class CelebBot():

|

| 19 |

-

|

|

|

|

| 20 |

self.name = name

|

| 21 |

self.gender = gender

|

| 22 |

print("--- starting up", self.name, self.gender, "---")

|

|

@@ -29,6 +25,7 @@ class CelebBot():

|

|

| 29 |

self.spacy_model = spacy_model

|

| 30 |

|

| 31 |

self.all_knowledge = knowledge_sents

|

|

|

|

| 32 |

|

| 33 |

def speech_to_text(self):

|

| 34 |

recognizer = sr.Recognizer()

|

|

@@ -83,7 +80,7 @@ class CelebBot():

|

|

| 83 |

all_knowledge_embeddings = self.sentence_embeds_inference(self.all_knowledge)

|

| 84 |

similarity = cosine_similarity(all_knowledge_embeddings.cpu(), question_embeddings.cpu())

|

| 85 |

similarity = np.reshape(similarity, (1, -1))[0]

|

| 86 |

-

K = min(

|

| 87 |

top_K = np.sort(np.argpartition(similarity, -K)[-K: ])

|

| 88 |

all_knowledge_assertions = np.array(self.all_knowledge)[top_K]

|

| 89 |

|

|

@@ -175,7 +172,7 @@ class CelebBot():

|

|

| 175 |

knowledge = self.retrieve_knowledge_assertions()

|

| 176 |

|

| 177 |

query = f"Context: {instruction} {knowledge}\n\nChat History: {chat_his}Question: {self.text}\n\nAnswer:"

|

| 178 |

-

input_ids = self.QA_tokenizer(f"{query}", return_tensors="pt").input_ids.to(self.QA_model.device)

|

| 179 |

outputs = self.QA_model.generate(input_ids, max_length=1024, min_length=8, repetition_penalty=2.5)

|

| 180 |

self.text = self.QA_tokenizer.decode(outputs[0], skip_special_tokens=True)

|

| 181 |

return self.text

|

|

|

|

| 2 |

import numpy as np

|

| 3 |

import torch

|

| 4 |

import torch.nn.functional as F

|

|

|

|

|

|

|

| 5 |

import speech_recognition as sr

|

| 6 |

import re

|

| 7 |

import time

|

|

|

|

|

|

|

| 8 |

import pickle

|

|

|

|

| 9 |

from sklearn.metrics.pairwise import cosine_similarity

|

| 10 |

|

| 11 |

|

| 12 |

# Build the AI

|

| 13 |

class CelebBot():

|

| 14 |

+

|

| 15 |

+

def __init__(self, name, gender, QA_tokenizer, QA_model, sentTr_tokenizer, sentTr_model, spacy_model, knowledge_sents, top_k = 8):

|

| 16 |

self.name = name

|

| 17 |

self.gender = gender

|

| 18 |

print("--- starting up", self.name, self.gender, "---")

|

|

|

|

| 25 |

self.spacy_model = spacy_model

|

| 26 |

|

| 27 |

self.all_knowledge = knowledge_sents

|

| 28 |

+

self.top_k = top_k

|

| 29 |

|

| 30 |

def speech_to_text(self):

|

| 31 |

recognizer = sr.Recognizer()

|

|

|

|

| 80 |

all_knowledge_embeddings = self.sentence_embeds_inference(self.all_knowledge)

|

| 81 |

similarity = cosine_similarity(all_knowledge_embeddings.cpu(), question_embeddings.cpu())

|

| 82 |

similarity = np.reshape(similarity, (1, -1))[0]

|

| 83 |

+

K = min(self.top_k, len(self.all_knowledge))

|

| 84 |

top_K = np.sort(np.argpartition(similarity, -K)[-K: ])

|

| 85 |

all_knowledge_assertions = np.array(self.all_knowledge)[top_K]

|

| 86 |

|

|

|

|

| 172 |

knowledge = self.retrieve_knowledge_assertions()

|

| 173 |

|

| 174 |

query = f"Context: {instruction} {knowledge}\n\nChat History: {chat_his}Question: {self.text}\n\nAnswer:"

|

| 175 |

+

input_ids = self.QA_tokenizer(f"{query}", truncation=False, return_tensors="pt").input_ids.to(self.QA_model.device)

|

| 176 |

outputs = self.QA_model.generate(input_ids, max_length=1024, min_length=8, repetition_penalty=2.5)

|

| 177 |

self.text = self.QA_tokenizer.decode(outputs[0], skip_special_tokens=True)

|

| 178 |

return self.text

|

img/flow_chart.jpg

ADDED

|

requirements.txt

CHANGED

|

@@ -30,4 +30,5 @@ sentence-transformers==2.2.2

|

|

| 30 |

evaluate==0.4.1

|

| 31 |

https://huggingface.co/spacy/en_core_web_lg/resolve/main/en_core_web_lg-any-py3-none-any.whl

|

| 32 |

protobuf==3.20

|

| 33 |

-

streamlit_mic_recorder==0.0.2

|

|

|

|

|

|

| 30 |

evaluate==0.4.1

|

| 31 |

https://huggingface.co/spacy/en_core_web_lg/resolve/main/en_core_web_lg-any-py3-none-any.whl

|

| 32 |

protobuf==3.20

|

| 33 |

+

streamlit_mic_recorder==0.0.2

|

| 34 |

+

faiss-cpu==1.7.4

|

run_cli.py

CHANGED

|

@@ -5,7 +5,7 @@ import spacy

|

|

| 5 |

from celebbot import CelebBot

|

| 6 |

from utils import *

|

| 7 |

|

| 8 |

-

DEBUG =

|

| 9 |

QA_MODEL_ID = "google/flan-t5-large"

|

| 10 |

SENTTR_MODEL_ID = "sentence-transformers/all-mpnet-base-v2"

|

| 11 |

|

|

@@ -52,6 +52,7 @@ def main():

|

|

| 52 |

print("me --> ", ai.text)

|

| 53 |

|

| 54 |

answers.append(ai.question_answer())

|

|

|

|

| 55 |

|

| 56 |

if not DEBUG:

|

| 57 |

ai.text_to_speech()

|

|

|

|

| 5 |

from celebbot import CelebBot

|

| 6 |

from utils import *

|

| 7 |

|

| 8 |

+

DEBUG = True

|

| 9 |

QA_MODEL_ID = "google/flan-t5-large"

|

| 10 |

SENTTR_MODEL_ID = "sentence-transformers/all-mpnet-base-v2"

|

| 11 |

|

|

|

|

| 52 |

print("me --> ", ai.text)

|

| 53 |

|

| 54 |

answers.append(ai.question_answer())

|

| 55 |

+

print("bot --> ", ai.text)

|

| 56 |

|

| 57 |

if not DEBUG:

|

| 58 |

ai.text_to_speech()

|

run_eval.py

CHANGED

|

@@ -4,15 +4,19 @@ import spacy

|

|

| 4 |

import json

|

| 5 |

import evaluate

|

| 6 |

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, AutoModel

|

|

|

|

| 7 |

import torch

|

| 8 |

|

| 9 |

from utils import *

|

| 10 |

from celebbot import CelebBot

|

| 11 |

|

| 12 |

-

QA_MODEL_ID = "google/flan-t5-

|

| 13 |

SENTTR_MODEL_ID = "sentence-transformers/all-mpnet-base-v2"

|

| 14 |

celeb_names = ["Cate Blanchett", "David Beckham", "Emma Watson", "Lady Gaga", "Madonna", "Mark Zuckerberg"]

|

| 15 |

|

|

|

|

|

|

|

|

|

|

| 16 |

celeb_data = get_celeb_data("data.json")

|

| 17 |

references = [val['answers'] for key, val in list(celeb_data.items()) if key in celeb_names]

|

| 18 |

references = list(itertools.chain.from_iterable(references))

|

|

@@ -20,7 +24,29 @@ predictions = []

|

|

| 20 |

|

| 21 |

device = 'cpu'

|

| 22 |

QA_tokenizer = AutoTokenizer.from_pretrained(QA_MODEL_ID)

|

| 23 |

-

QA_model = AutoModelForSeq2SeqLM.from_pretrained(QA_MODEL_ID)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 24 |

sentTr_tokenizer = AutoTokenizer.from_pretrained(SENTTR_MODEL_ID)

|

| 25 |

sentTr_model = AutoModel.from_pretrained(SENTTR_MODEL_ID).to(device)

|

| 26 |

|

|

@@ -37,7 +63,7 @@ for celeb_name in celeb_names:

|

|

| 37 |

spacy_model = spacy.load("en_core_web_lg")

|

| 38 |

knowledge_sents = [i.text.strip() for i in spacy_model(knowledge).sents]

|

| 39 |

|

| 40 |

-

ai = CelebBot(celeb_name, gender, QA_tokenizer, QA_model, sentTr_tokenizer, sentTr_model, spacy_model, knowledge_sents)

|

| 41 |

for q in celeb_data[celeb_name]["questions"]:

|

| 42 |

ai.text = q

|

| 43 |

response = ai.question_answer()

|

|

|

|

| 4 |

import json

|

| 5 |

import evaluate

|

| 6 |

from transformers import AutoTokenizer, AutoModelForSeq2SeqLM, AutoModel

|

| 7 |

+

from unlimiformer import Unlimiformer, UnlimiformerArguments

|

| 8 |

import torch

|

| 9 |

|

| 10 |

from utils import *

|

| 11 |

from celebbot import CelebBot

|

| 12 |

|

| 13 |

+

QA_MODEL_ID = "google/flan-t5-xl"

|

| 14 |

SENTTR_MODEL_ID = "sentence-transformers/all-mpnet-base-v2"

|

| 15 |

celeb_names = ["Cate Blanchett", "David Beckham", "Emma Watson", "Lady Gaga", "Madonna", "Mark Zuckerberg"]

|

| 16 |

|

| 17 |

+

USE_UNLIMIFORMER = True

|

| 18 |

+

TOP_K = 8

|

| 19 |

+

|

| 20 |

celeb_data = get_celeb_data("data.json")

|

| 21 |

references = [val['answers'] for key, val in list(celeb_data.items()) if key in celeb_names]

|

| 22 |

references = list(itertools.chain.from_iterable(references))

|

|

|

|

| 24 |

|

| 25 |

device = 'cpu'

|

| 26 |

QA_tokenizer = AutoTokenizer.from_pretrained(QA_MODEL_ID)

|

| 27 |

+

QA_model = AutoModelForSeq2SeqLM.from_pretrained(QA_MODEL_ID)

|

| 28 |

+

if USE_UNLIMIFORMER:

|

| 29 |

+

defaults = UnlimiformerArguments()

|

| 30 |

+

unlimiformer_kwargs = {

|

| 31 |

+

'layer_begin': defaults.layer_begin,

|

| 32 |

+

'layer_end': defaults.layer_end,

|

| 33 |

+

'unlimiformer_head_num': defaults.unlimiformer_head_num,

|

| 34 |

+

'exclude_attention': defaults.unlimiformer_exclude,

|

| 35 |

+

'chunk_overlap': defaults.unlimiformer_chunk_overlap,

|

| 36 |

+

'model_encoder_max_len': defaults.unlimiformer_chunk_size,

|

| 37 |

+

'verbose': defaults.unlimiformer_verbose, 'tokenizer': QA_tokenizer,

|

| 38 |

+

'unlimiformer_training': defaults.unlimiformer_training,

|

| 39 |

+

'use_datastore': defaults.use_datastore,

|

| 40 |

+

'flat_index': defaults.flat_index,

|

| 41 |

+

'test_datastore': defaults.test_datastore,

|

| 42 |

+

'reconstruct_embeddings': defaults.reconstruct_embeddings,

|

| 43 |

+

'gpu_datastore': defaults.gpu_datastore,

|

| 44 |

+

'gpu_index': defaults.gpu_index

|

| 45 |

+

}

|

| 46 |

+

QA_model =Unlimiformer.convert_model(QA_model, **unlimiformer_kwargs).to(device)

|

| 47 |

+

else:

|

| 48 |

+

QA_model = QA_model.to(device)

|

| 49 |

+

|

| 50 |

sentTr_tokenizer = AutoTokenizer.from_pretrained(SENTTR_MODEL_ID)

|

| 51 |

sentTr_model = AutoModel.from_pretrained(SENTTR_MODEL_ID).to(device)

|

| 52 |

|

|

|

|

| 63 |

spacy_model = spacy.load("en_core_web_lg")

|

| 64 |

knowledge_sents = [i.text.strip() for i in spacy_model(knowledge).sents]

|

| 65 |

|

| 66 |

+

ai = CelebBot(celeb_name, gender, QA_tokenizer, QA_model, sentTr_tokenizer, sentTr_model, spacy_model, knowledge_sents, top_k=TOP_K)

|

| 67 |

for q in celeb_data[celeb_name]["questions"]:

|

| 68 |

ai.text = q

|

| 69 |

response = ai.question_answer()

|

unlimiformer/__init__.py

ADDED

|

@@ -0,0 +1,2 @@

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from .model import Unlimiformer

|

| 2 |

+

from .usage import UnlimiformerArguments

|

unlimiformer/configs/data/contract_nli.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "contract_nli",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"generation_max_length": 8,

|

| 8 |

+

"num_train_epochs": 20,

|

| 9 |

+

"metric_names": ["exact_match"],

|

| 10 |

+

"metric_for_best_model": "exact_match",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/gov_report.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "gov_report",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"generation_max_length": 1024,

|

| 6 |

+

"max_prefix_length": 0,

|

| 7 |

+

"pad_prefix": false,

|

| 8 |

+

"num_train_epochs": 10,

|

| 9 |

+

"metric_names": ["rouge"],

|

| 10 |

+

"metric_for_best_model": "rouge/geometric_mean",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/hotpotqa.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "hotpotqa",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"generation_max_length": 128,

|

| 8 |

+

"num_train_epochs": 9,

|

| 9 |

+

"metric_names": ["f1", "exact_match"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/hotpotqa_second_only.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "hotpotqa_second_only",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"generation_max_length": 128,

|

| 8 |

+

"num_train_epochs": 9,

|

| 9 |

+

"metric_names": ["f1", "exact_match"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/narative_qa.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "narrative_qa",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"num_train_epochs": 2,

|

| 8 |

+

"generation_max_length": 128,

|

| 9 |

+

"metric_names": ["f1"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/qasper.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "qasper",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"generation_max_length": 128,

|

| 8 |

+

"num_train_epochs": 20,

|

| 9 |

+

"metric_names": ["f1"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/qmsum.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "qmsum",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"num_train_epochs": 20,

|

| 8 |

+

"generation_max_length": 1024,

|

| 9 |

+

"metric_names": ["rouge"],

|

| 10 |

+

"metric_for_best_model": "rouge/geometric_mean",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/quality.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "quality",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 160,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"num_train_epochs": 20,

|

| 8 |

+

"generation_max_length": 128,

|

| 9 |

+

"metric_names": ["exact_match"],

|

| 10 |

+

"metric_for_best_model": "exact_match",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/squad.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "squad",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"num_train_epochs": 3,

|

| 8 |

+

"generation_max_length": 128,

|

| 9 |

+

"metric_names": ["f1", "exact_match"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/squad_ordered_distractors.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "squad_ordered_distractors",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"num_train_epochs": 3,

|

| 8 |

+

"generation_max_length": 128,

|

| 9 |

+

"metric_names": ["f1", "exact_match"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/squad_shuffled_distractors.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "squad_shuffled_distractors",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 64,

|

| 6 |

+

"pad_prefix": true,

|

| 7 |

+

"num_train_epochs": 3,

|

| 8 |

+

"generation_max_length": 128,

|

| 9 |

+

"metric_names": ["f1", "exact_match"],

|

| 10 |

+

"metric_for_best_model": "f1",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/data/summ_screen_fd.json

ADDED

|

@@ -0,0 +1,12 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"dataset_name": "tau/sled",

|

| 3 |

+

"dataset_config_name": "summ_screen_fd",

|

| 4 |

+

"max_source_length": 16384,

|

| 5 |

+

"max_prefix_length": 0,

|

| 6 |

+

"pad_prefix": false,

|

| 7 |

+

"num_train_epochs": 10,

|

| 8 |

+

"generation_max_length": 1024,

|

| 9 |

+

"metric_names": ["rouge"],

|

| 10 |

+

"metric_for_best_model": "rouge/geometric_mean",

|

| 11 |

+

"greater_is_better": true

|

| 12 |

+

}

|

unlimiformer/configs/model/bart_base_sled.json

ADDED

|

@@ -0,0 +1,6 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"model_name_or_path": "tau/bart-base-sled",

|

| 3 |

+

"use_auth_token": false,

|

| 4 |

+

"max_target_length": 1024,

|

| 5 |

+

"fp16": true

|

| 6 |

+

}

|

unlimiformer/configs/model/bart_large_sled.json

ADDED

|

@@ -0,0 +1,6 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"model_name_or_path": "tau/bart-large-sled",

|

| 3 |

+

"use_auth_token": false,

|

| 4 |

+

"max_target_length": 1024,

|

| 5 |

+

"fp16": true

|

| 6 |

+

}

|

unlimiformer/configs/model/primera_govreport_sled.json

ADDED

|

@@ -0,0 +1,9 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"model_type": "tau/sled",

|

| 3 |

+

"underlying_config": "allenai/PRIMERA",

|

| 4 |

+

"context_size": 4096,

|

| 5 |

+

"window_fraction": 0.5,

|

| 6 |

+

"prepend_prefix": true,

|

| 7 |

+

"encode_prefix": true,

|

| 8 |

+

"sliding_method": "dynamic"

|

| 9 |

+

}

|

unlimiformer/configs/training/base_training_args.json

ADDED

|

@@ -0,0 +1,22 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

{

|

| 2 |

+

"eval_steps_override": 0.5,

|

| 3 |

+

"save_steps_override": 0.5,

|

| 4 |

+

"evaluation_strategy": "steps",

|

| 5 |

+

"eval_fraction": 1000,

|

| 6 |

+

"predict_with_generate": true,

|

| 7 |

+

"gradient_checkpointing": true,

|

| 8 |

+

"do_train": true,

|

| 9 |

+

"do_eval": true,

|

| 10 |

+

"seed": 42,

|

| 11 |

+

"warmup_ratio": 0.1,

|

| 12 |

+

"save_total_limit": 2,

|

| 13 |

+

"preprocessing_num_workers": 1,

|

| 14 |

+

"load_best_model_at_end": true,

|

| 15 |

+

"lr_scheduler": "linear",

|

| 16 |

+

"adam_epsilon": 1e-6,

|

| 17 |

+

"adam_beta1": 0.9,

|

| 18 |

+

"adam_beta2": 0.98,

|

| 19 |

+

"weight_decay": 0.001,

|

| 20 |

+

"patience": 10,

|

| 21 |

+

"extra_metrics": "bertscore"

|

| 22 |

+

}

|

unlimiformer/index_building.py

ADDED

|

@@ -0,0 +1,161 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import faiss

|

| 2 |

+

import faiss.contrib.torch_utils

|

| 3 |

+

import time

|

| 4 |

+

import logging

|

| 5 |

+

|

| 6 |

+

import torch

|

| 7 |

+

import numpy as np

|

| 8 |

+

|

| 9 |

+

code_size = 64

|

| 10 |

+

|

| 11 |

+

class DatastoreBatch():

|

| 12 |

+

def __init__(self, dim, batch_size, flat_index=False, gpu_index=False, verbose=False, index_device=None) -> None:

|

| 13 |

+

self.indices = []

|

| 14 |

+

self.batch_size = batch_size

|

| 15 |

+

self.device = index_device if index_device is not None else torch.device('cuda' if gpu_index else 'cpu')

|

| 16 |

+

for i in range(batch_size):

|

| 17 |

+

self.indices.append(Datastore(dim, use_flat_index=flat_index, gpu_index=gpu_index, verbose=verbose, device=self.device))

|

| 18 |

+

|

| 19 |

+

def move_to_gpu(self):

|

| 20 |

+

for i in range(self.batch_size):

|

| 21 |

+

self.indices[i].move_to_gpu()

|

| 22 |

+

|

| 23 |

+

def add_keys(self, keys, num_keys_to_add_at_a_time=100000):

|

| 24 |

+

for i in range(self.batch_size):

|

| 25 |

+

self.indices[i].add_keys(keys[i], num_keys_to_add_at_a_time)

|

| 26 |

+

|

| 27 |

+

def train_index(self, keys):

|

| 28 |

+

for index, example_keys in zip(self.indices, keys):

|

| 29 |

+

index.train_index(example_keys)

|

| 30 |

+

|

| 31 |

+

def search(self, queries, k):

|

| 32 |

+

found_scores, found_values = [], []

|

| 33 |

+

for i in range(self.batch_size):

|

| 34 |

+

scores, values = self.indices[i].search(queries[i], k)

|

| 35 |

+

found_scores.append(scores)

|

| 36 |

+

found_values.append(values)

|

| 37 |

+

return torch.stack(found_scores, dim=0), torch.stack(found_values, dim=0)

|

| 38 |

+

|

| 39 |

+

def search_and_reconstruct(self, queries, k):

|

| 40 |

+

found_scores, found_values = [], []

|

| 41 |

+

found_vectors = []

|

| 42 |

+

for i in range(self.batch_size):

|

| 43 |

+

scores, values, vectors = self.indices[i].search_and_reconstruct(queries[i], k)

|

| 44 |

+

found_scores.append(scores)

|

| 45 |

+

found_values.append(values)

|

| 46 |

+

found_vectors.append(vectors)

|

| 47 |

+

return torch.stack(found_scores, dim=0), torch.stack(found_values, dim=0), torch.stack(found_vectors, dim=0)

|

| 48 |

+

|

| 49 |

+

class Datastore():

|

| 50 |

+

def __init__(self, dim, use_flat_index=False, gpu_index=False, verbose=False, device=None) -> None:

|

| 51 |

+

self.dimension = dim

|

| 52 |

+

self.device = device if device is not None else torch.device('cuda' if gpu_index else 'cpu')

|

| 53 |

+

self.logger = logging.getLogger('index_building')

|

| 54 |

+

self.logger.setLevel(20)

|

| 55 |

+

self.use_flat_index = use_flat_index

|

| 56 |

+

self.gpu_index = gpu_index

|

| 57 |

+

|

| 58 |

+

# Initialize faiss index

|

| 59 |

+

# TODO: is preprocessing efficient enough to spend time on?

|

| 60 |

+

if not use_flat_index:

|

| 61 |

+

self.index = faiss.IndexFlatIP(self.dimension) # inner product index because we use IP attention

|

| 62 |

+

|

| 63 |

+

# need to wrap in index ID map to enable add_with_ids

|

| 64 |

+

# self.index = faiss.IndexIDMap(self.index)

|

| 65 |

+

|

| 66 |

+

self.index_size = 0

|

| 67 |

+

# if self.gpu_index:

|

| 68 |

+

# self.move_to_gpu()

|

| 69 |

+

|

| 70 |

+

def move_to_gpu(self):

|

| 71 |

+

if self.use_flat_index:

|

| 72 |

+

# self.keys = self.keys.to(self.device)

|

| 73 |

+

return

|

| 74 |

+

else:

|

| 75 |

+

co = faiss.GpuClonerOptions()

|

| 76 |

+

co.useFloat16 = True

|

| 77 |

+

self.index = faiss.index_cpu_to_gpu(faiss.StandardGpuResources(), self.device.index, self.index, co)

|

| 78 |

+

|

| 79 |

+

def train_index(self, keys):

|

| 80 |

+

if self.use_flat_index:

|

| 81 |

+

self.add_keys(keys=keys, index_is_trained=True)

|

| 82 |

+

else:

|

| 83 |

+

keys = keys.cpu().float()

|

| 84 |

+

ncentroids = int(keys.shape[0] / 128)

|

| 85 |

+

self.index = faiss.IndexIVFPQ(self.index, self.dimension,

|

| 86 |

+

ncentroids, code_size, 8)

|

| 87 |

+

self.index.nprobe = min(32, ncentroids)

|

| 88 |

+

# if not self.gpu_index:

|

| 89 |

+

# keys = keys.cpu()

|

| 90 |

+

|

| 91 |

+

self.logger.info('Training index')

|

| 92 |

+

start_time = time.time()

|

| 93 |

+

self.index.train(keys)

|

| 94 |

+

self.logger.info(f'Training took {time.time() - start_time} s')

|

| 95 |

+

self.add_keys(keys=keys, index_is_trained=True)

|

| 96 |

+

# self.keys = None

|

| 97 |

+

if self.gpu_index:

|

| 98 |

+

self.move_to_gpu()

|

| 99 |

+

|

| 100 |

+

def add_keys(self, keys, num_keys_to_add_at_a_time=1000000, index_is_trained=False):

|

| 101 |

+

self.keys = keys

|

| 102 |

+

if not self.use_flat_index and index_is_trained:

|

| 103 |

+

start = 0

|

| 104 |

+

while start < keys.shape[0]:

|

| 105 |

+

end = min(len(keys), start + num_keys_to_add_at_a_time)

|

| 106 |

+

to_add = keys[start:end]

|

| 107 |

+

# if not self.gpu_index:

|

| 108 |

+

# to_add = to_add.cpu()

|

| 109 |

+

# self.index.add_with_ids(to_add, torch.arange(start+self.index_size, end+self.index_size))

|

| 110 |

+

self.index.add(to_add)

|

| 111 |

+

self.index_size += end - start

|

| 112 |

+

start += end

|

| 113 |

+

if (start % 1000000) == 0:

|

| 114 |

+

self.logger.info(f'Added {start} tokens so far')

|

| 115 |

+

# else:

|

| 116 |

+

# self.keys.append(keys)

|

| 117 |

+

|

| 118 |

+

# self.logger.info(f'Adding total {start} keys')

|

| 119 |

+

# self.logger.info(f'Adding took {time.time() - start_time} s')

|

| 120 |

+

|

| 121 |

+

def search_and_reconstruct(self, queries, k):

|

| 122 |

+

if len(queries.shape) == 1: # searching for only 1 vector, add one extra dim

|

| 123 |

+

self.logger.info("Searching for a single vector; unsqueezing")

|

| 124 |

+

queries = queries.unsqueeze(0)

|

| 125 |

+

# self.logger.info("Searching with reconstruct")

|

| 126 |

+

assert queries.shape[-1] == self.dimension # query vectors are same shape as "key" vectors

|

| 127 |

+

scores, values, vectors = self.index.index.search_and_reconstruct(queries.cpu().detach(), k)

|

| 128 |

+

# self.logger.info("Searching done")

|

| 129 |

+

return scores, values, vectors

|

| 130 |

+

|

| 131 |

+

def search(self, queries, k):

|

| 132 |

+

# model_device = queries.device

|

| 133 |

+

# model_dtype = queries.dtype

|

| 134 |

+

if len(queries.shape) == 1: # searching for only 1 vector, add one extra dim

|

| 135 |

+

self.logger.info("Searching for a single vector; unsqueezing")

|

| 136 |

+

queries = queries.unsqueeze(0)

|

| 137 |

+

assert queries.shape[-1] == self.dimension # query vectors are same shape as "key" vectors

|

| 138 |

+

# if not self.gpu_index:

|

| 139 |

+

# queries = queries.cpu()

|

| 140 |

+

# else:

|

| 141 |

+

# queries = queries.to(self.device)

|

| 142 |

+

if self.use_flat_index:

|

| 143 |

+

if self.gpu_index:

|

| 144 |

+

scores, values = faiss.knn_gpu(faiss.StandardGpuResources(), queries, self.keys, k,

|

| 145 |

+

metric=faiss.METRIC_INNER_PRODUCT, device=self.device.index)

|

| 146 |

+

else:

|

| 147 |

+

scores, values = faiss.knn(queries, self.keys, k, metric=faiss.METRIC_INNER_PRODUCT)

|

| 148 |

+

scores = torch.from_numpy(scores).to(queries.dtype)

|

| 149 |

+

values = torch.from_numpy(values) #.to(model_dtype)

|

| 150 |

+

else:

|

| 151 |

+

scores, values = self.index.search(queries.float(), k)

|

| 152 |

+

|

| 153 |

+

# avoid returning -1 as a value

|

| 154 |

+

# TODO: get a handle on the attention mask and mask the values that were -1

|

| 155 |

+

values = torch.where(torch.logical_or(values < 0, values >= self.keys.shape[0]), torch.zeros_like(values), values)

|

| 156 |

+

# self.logger.info("Searching done")

|

| 157 |

+

# return scores.to(model_dtype).to(model_device), values.to(model_device)

|

| 158 |

+

return scores, values

|

| 159 |

+

|

| 160 |

+

|

| 161 |

+

|

unlimiformer/metrics/__init__.py

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

from .metrics import load_metric, download_metric

|

unlimiformer/metrics/metrics.py

ADDED

|

@@ -0,0 +1,182 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from typing import List, Dict

|

| 2 |

+

import os

|

| 3 |

+

import importlib

|

| 4 |

+

from abc import ABC, abstractmethod

|

| 5 |

+

import inspect

|

| 6 |

+

import shutil

|

| 7 |

+

|

| 8 |

+

import numpy as np

|

| 9 |

+

|

| 10 |

+

from utils.decoding import decode

|

| 11 |

+

from datasets import load_metric as hf_load_metric

|

| 12 |

+

from huggingface_hub import hf_hub_download

|

| 13 |

+

|

| 14 |

+

|

| 15 |

+

class Metric(ABC):

|

| 16 |

+

def __init__(self, **kwargs) -> None:

|

| 17 |

+

super().__init__()

|

| 18 |

+

self._kwargs = kwargs

|

| 19 |

+

|

| 20 |

+

self.prefix = os.path.splitext(os.path.basename(inspect.getfile(self.__class__)))[0]

|

| 21 |

+

self.requires_decoded = False

|

| 22 |

+

|

| 23 |

+

def __call__(self, id_to_pred, id_to_labels, is_decoded=False):

|

| 24 |

+

if self.requires_decoded and is_decoded is False:

|

| 25 |

+

id_to_pred = self._decode(id_to_pred)

|

| 26 |

+

id_to_labels = self._decode(id_to_labels)

|

| 27 |

+

return self._compute_metrics(id_to_pred, id_to_labels)

|

| 28 |

+

|

| 29 |

+

@abstractmethod

|

| 30 |

+

def _compute_metrics(self, id_to_pred, id_to_labels) -> Dict[str, float]:

|

| 31 |

+

return

|

| 32 |

+

|

| 33 |

+

def _decode(self, id_to_something):

|

| 34 |

+

tokenizer = self._kwargs.get("tokenizer")

|

| 35 |

+

data_args = self._kwargs.get("data_args")

|

| 36 |

+

return decode(id_to_something, tokenizer, data_args)

|

| 37 |

+

|

| 38 |

+

|

| 39 |

+

class MetricCollection(Metric):

|

| 40 |

+

def __init__(self, metrics: List[Metric], **kwargs):

|

| 41 |

+

super().__init__(**kwargs)

|

| 42 |

+

self._metrics = metrics

|

| 43 |

+

|

| 44 |

+

def __call__(self, id_to_pred, id_to_labels):

|

| 45 |

+

return self._compute_metrics(id_to_pred, id_to_labels)

|

| 46 |

+

|

| 47 |

+

def _compute_metrics(self, id_to_pred, id_to_labels):

|

| 48 |

+

results = {}

|

| 49 |

+

|

| 50 |

+

id_to_pred_decoded = None

|

| 51 |

+

id_to_labels_decoded = None

|

| 52 |

+

for metric in self._metrics:

|

| 53 |

+

metric_prefix = f"{metric.prefix}/" if metric.prefix else ""

|

| 54 |

+

if metric.requires_decoded:

|

| 55 |

+

if id_to_pred_decoded is None:

|

| 56 |

+

id_to_pred_decoded = self._decode(id_to_pred)

|

| 57 |

+

if id_to_labels_decoded is None:

|

| 58 |

+

id_to_labels_decoded = self._decode(id_to_labels)

|

| 59 |

+

|

| 60 |

+

result = metric(id_to_pred_decoded, id_to_labels_decoded, is_decoded=True)

|

| 61 |

+

else:

|

| 62 |

+

result = metric(id_to_pred, id_to_labels)

|

| 63 |

+

|

| 64 |

+

results.update({f"{metric_prefix}{k}": np.mean(v) if type(v) is list else v for k, v in result.items() if type(v) is not str})

|

| 65 |

+

|

| 66 |

+

results["num_predicted"] = len(id_to_pred)

|

| 67 |

+

results["mean_prediction_length_characters"] = np.mean([len(pred) for pred in id_to_pred_decoded.values()])

|

| 68 |

+

|

| 69 |

+

elem = next(iter(id_to_pred.values()))

|

| 70 |

+

if not ((isinstance(elem, list) and isinstance(elem[0], str)) or isinstance(elem, str)):

|

| 71 |

+

tokenizer = self._kwargs["tokenizer"]

|

| 72 |

+

results["mean_prediction_length_tokens"] = np.mean(

|

| 73 |

+

[np.count_nonzero(np.array(pred) != tokenizer.pad_token_id) for pred in id_to_pred.values()]

|

| 74 |

+

) # includes BOS/EOS tokens

|

| 75 |

+

|

| 76 |

+

results = {key: round(value, 4) for key, value in results.items()}

|

| 77 |

+

return results

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

def load_metric(paths: List[str], **kwargs):

|

| 81 |

+

if paths is None or len(paths) == 0:

|

| 82 |

+

return None

|

| 83 |

+

if isinstance(paths, str):

|

| 84 |

+

paths = [paths]

|

| 85 |

+

else:

|

| 86 |

+

paths = [path for path in paths]

|

| 87 |

+

|

| 88 |

+

metric_cls_list = []

|

| 89 |

+

|

| 90 |

+

scrolls_custom_metrics = []

|

| 91 |

+

to_remove = []

|

| 92 |

+

for i, path in enumerate(paths):

|

| 93 |

+

if not os.path.isfile(path):

|

| 94 |

+

scrolls_custom_metrics.append(path)

|

| 95 |

+

to_remove.append(i)

|

| 96 |

+

for i in sorted(to_remove, reverse=True):

|

| 97 |

+

del paths[i]

|

| 98 |

+

if len(scrolls_custom_metrics) > 0:

|

| 99 |

+

scrolls_custom_metrics.insert(0, "") # In order to have an identifying comma in the beginning

|

| 100 |

+

metric_cls_list.append(ScrollsWrapper(",".join(scrolls_custom_metrics), **kwargs))

|

| 101 |

+

|

| 102 |

+

for path in paths:

|

| 103 |

+

path = path.strip()

|

| 104 |

+

if len(path) == 0:

|

| 105 |

+

continue

|

| 106 |

+

if os.path.isfile(path) is False:

|

| 107 |

+

path = os.path.join("src", "metrics", f"{path}.py")

|

| 108 |

+

|

| 109 |

+

module = path[:-3].replace(os.sep, ".")

|

| 110 |

+

|

| 111 |

+

metric_cls = import_main_class(module)

|

| 112 |

+

metric_cls_list.append(metric_cls(**kwargs))

|

| 113 |

+

|

| 114 |

+

return MetricCollection(metric_cls_list, **kwargs)

|

| 115 |

+

|

| 116 |

+

|

| 117 |

+

# Modified from datasets.load

|

| 118 |

+

def import_main_class(module_path):

|

| 119 |

+

"""Import a module at module_path and return its main class"""

|

| 120 |

+

module = importlib.import_module(module_path)

|

| 121 |

+

|

| 122 |

+

main_cls_type = Metric

|

| 123 |

+

|

| 124 |

+

# Find the main class in our imported module

|

| 125 |

+

module_main_cls = None

|

| 126 |

+

for name, obj in module.__dict__.items():

|

| 127 |

+

if isinstance(obj, type) and issubclass(obj, main_cls_type):

|

| 128 |

+

if inspect.isabstract(obj):

|

| 129 |

+

continue

|

| 130 |

+

module_main_cls = obj

|

| 131 |

+

break

|

| 132 |

+

|

| 133 |

+

return module_main_cls

|

| 134 |

+

|

| 135 |

+

|

| 136 |

+

class ScrollsWrapper(Metric):

|

| 137 |

+

def __init__(self, comma_separated_metric_names, **kwargs) -> None:

|

| 138 |

+

super().__init__(**kwargs)

|

| 139 |

+

self.prefix = None

|

| 140 |

+

|

| 141 |

+

self._metric = hf_load_metric(download_metric(), comma_separated_metric_names, keep_in_memory=True)

|

| 142 |

+

|

| 143 |

+

self.requires_decoded = True

|

| 144 |

+

|

| 145 |

+

def _compute_metrics(self, id_to_pred, id_to_labels) -> Dict[str, float]:

|

| 146 |

+

return self._metric.compute(**self._metric.convert_from_map_format(id_to_pred, id_to_labels))

|

| 147 |

+

|

| 148 |

+

class HFMetricWrapper(Metric):

|

| 149 |

+

def __init__(self, metric_name, **kwargs) -> None:

|

| 150 |

+

super().__init__(**kwargs)

|

| 151 |

+

self._metric = hf_load_metric(metric_name)

|

| 152 |

+

self.kwargs = HFMetricWrapper.metric_specific_kwargs.get(metric_name, {})

|

| 153 |

+

self.requires_decoded = True

|

| 154 |

+

self.prefix = metric_name

|

| 155 |

+

self.requires_decoded = True

|

| 156 |

+

|

| 157 |

+

def _compute_metrics(self, id_to_pred, id_to_labels) -> Dict[str, float]:

|

| 158 |

+

return self._metric.compute(**self.convert_from_map_format(id_to_pred, id_to_labels), **self.kwargs)

|

| 159 |

+

|

| 160 |

+

def convert_from_map_format(self, id_to_pred, id_to_labels):

|

| 161 |

+

index_to_id = list(id_to_pred.keys())

|

| 162 |

+

predictions = [id_to_pred[id_] for id_ in index_to_id]

|

| 163 |

+

references = [id_to_labels[id_] for id_ in index_to_id]

|

| 164 |

+

return {"predictions": predictions, "references": references}

|

| 165 |

+

|

| 166 |

+

metric_specific_kwargs = {

|

| 167 |

+

'bertscore': {

|

| 168 |

+

# 'model_type': 'microsoft/deberta-large-mnli' or the larger 'microsoft/deberta-xlarge-mnli'

|

| 169 |

+

'model_type': 'facebook/bart-large-mnli', # has context window of 1024,

|

| 170 |

+

'num_layers': 11 # according to: https://docs.google.com/spreadsheets/d/1RKOVpselB98Nnh_EOC4A2BYn8_201tmPODpNWu4w7xI/edit#gid=0

|

| 171 |

+

}

|

| 172 |

+

}

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

def download_metric():

|

| 176 |

+

# here we load the custom metrics

|

| 177 |

+

scrolls_metric_path = hf_hub_download(repo_id="tau/scrolls", filename="metrics/scrolls.py", repo_type='dataset')

|

| 178 |

+

updated_scrolls_metric_path = (

|

| 179 |

+

os.path.dirname(scrolls_metric_path) + os.path.basename(scrolls_metric_path).replace(".", "_") + ".py"

|

| 180 |

+

)

|

| 181 |

+

shutil.copy(scrolls_metric_path, updated_scrolls_metric_path)

|

| 182 |

+

return updated_scrolls_metric_path

|

unlimiformer/model.py

ADDED

|

@@ -0,0 +1,1157 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|