Runtime error

Upload 43 files

- .gitattributes +15 -1

- LICENSE +201 -0

- cli/SparkTTS.py +236 -0

- cli/inference.py +104 -0

- example/infer.sh +47 -0

- example/prompt_audio.wav +3 -0

- example/results/20250225113521.wav +3 -0

- requirements.txt +13 -0

- sparktts/models/audio_tokenizer.py +163 -0

- sparktts/models/bicodec.py +247 -0

- sparktts/modules/blocks/layers.py +73 -0

- sparktts/modules/blocks/samper.py +115 -0

- sparktts/modules/blocks/vocos.py +373 -0

- sparktts/modules/encoder_decoder/feat_decoder.py +115 -0

- sparktts/modules/encoder_decoder/feat_encoder.py +105 -0

- sparktts/modules/encoder_decoder/wave_generator.py +88 -0

- sparktts/modules/fsq/finite_scalar_quantization.py +251 -0

- sparktts/modules/fsq/residual_fsq.py +355 -0

- sparktts/modules/speaker/ecapa_tdnn.py +267 -0

- sparktts/modules/speaker/perceiver_encoder.py +360 -0

- sparktts/modules/speaker/pooling_layers.py +298 -0

- sparktts/modules/speaker/speaker_encoder.py +136 -0

- sparktts/modules/vq/factorized_vector_quantize.py +187 -0

- sparktts/utils/__init__.py +0 -0

- sparktts/utils/audio.py +271 -0

- sparktts/utils/file.py +221 -0

- sparktts/utils/parse_options.sh +97 -0

- sparktts/utils/token_parser.py +187 -0

- src/demos/trump/trump_en.wav +3 -0

- src/demos/zhongli/zhongli_en.wav +3 -0

- src/demos/余承东/yuchengdong_zh.wav +3 -0

- src/demos/刘德华/dehua_zh.wav +3 -0

- src/demos/哪吒/nezha_zh.wav +3 -0

- src/demos/徐志胜/zhisheng_zh.wav +3 -0

- src/demos/李靖/lijing_zh.wav +3 -0

- src/demos/杨澜/yanglan_zh.wav +3 -0

- src/demos/马云/mayun_zh.wav +3 -0

- src/demos/鲁豫/luyu_zh.wav +3 -0

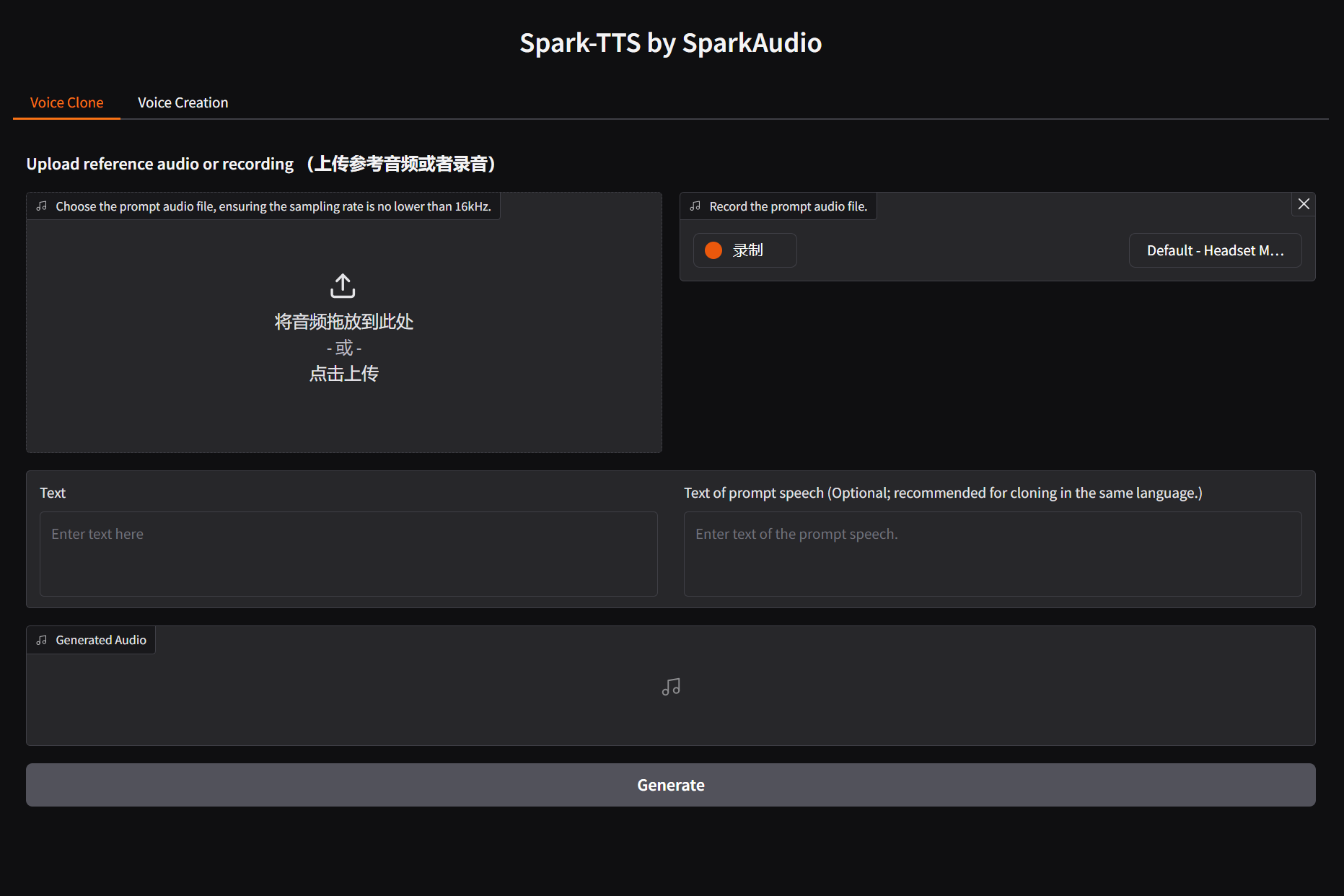

- src/figures/gradio_TTS.png +0 -0

- src/figures/gradio_control.png +0 -0

- src/figures/infer_control.png +3 -0

- src/figures/infer_voice_cloning.png +3 -0

- src/logo.webp +3 -0

- webui.py +192 -0

.gitattributes

CHANGED

```diff
@@ -32,4 +32,18 @@ saved_model/**/* filter=lfs diff=lfs merge=lfs -text
 *.xz filter=lfs diff=lfs merge=lfs -text
 *.zip filter=lfs diff=lfs merge=lfs -text
 *.zst filter=lfs diff=lfs merge=lfs -text
-*tfevents* filter=lfs diff=lfs merge=lfs -text
+*tfevents* filter=lfs diff=lfs merge=lfs -textexample/prompt_audio.wav filter=lfs diff=lfs merge=lfs -text
+example/results/20250225113521.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/trump/trump_en.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/zhongli/zhongli_en.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/余承东/yuchengdong_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/刘德华/dehua_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/哪吒/nezha_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/徐志胜/zhisheng_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/李靖/lijing_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/杨澜/yanglan_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/马云/mayun_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/demos/鲁豫/luyu_zh.wav filter=lfs diff=lfs merge=lfs -text
+src/figures/infer_control.png filter=lfs diff=lfs merge=lfs -text
+src/figures/infer_voice_cloning.png filter=lfs diff=lfs merge=lfs -text
+src/logo.webp filter=lfs diff=lfs merge=lfs -text
```
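Each gitattributes line has the form `pattern attr1 attr2 ...`. To see which paths the hunk above ends up marking for Git LFS, a minimal sketch can split each line into a pattern and its attributes. It assumes plain whitespace-separated syntax and ignores quoted patterns, macro attributes, and the `!attr` (unspecified) form; `parse_gitattributes` and `lfs_patterns` are hypothetical helper names, not part of the repository.

```python
from typing import Dict, List, Tuple


def parse_gitattributes(text: str) -> List[Tuple[str, Dict[str, object]]]:
    """Split each non-empty, non-comment line into (pattern, attributes).

    Simplified: `attr` -> True, `-attr` -> False, `attr=value` -> value.
    Quoting, macros and `!attr` are not handled by this sketch.
    """
    entries = []
    for raw in text.splitlines():
        line = raw.strip()
        if not line or line.startswith("#"):
            continue
        pattern, *tokens = line.split()
        attrs: Dict[str, object] = {}
        for tok in tokens:
            if "=" in tok:
                name, value = tok.split("=", 1)
                attrs[name] = value
            elif tok.startswith("-"):
                attrs[tok[1:]] = False
            else:
                attrs[tok] = True
        entries.append((pattern, attrs))
    return entries


def lfs_patterns(text: str) -> List[str]:
    """Return the patterns whose `filter` attribute is set to `lfs`."""
    return [p for p, attrs in parse_gitattributes(text) if attrs.get("filter") == "lfs"]


# Three of the new lines from the hunk, copied verbatim.
new_lines = """\
*tfevents* filter=lfs diff=lfs merge=lfs -textexample/prompt_audio.wav filter=lfs diff=lfs merge=lfs -text
example/results/20250225113521.wav filter=lfs diff=lfs merge=lfs -text
src/logo.webp filter=lfs diff=lfs merge=lfs -text
"""

print(lfs_patterns(new_lines))
# ['*tfevents*', 'example/results/20250225113521.wav', 'src/logo.webp']
```

Under this simplified parser, the joined text on new line 35 yields a single entry for `*tfevents*`; `example/prompt_audio.wav` becomes part of that entry's attribute list rather than a pattern of its own.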

LICENSE

ADDED

@@ -0,0 +1,201 @@

```text
                                 Apache License
                           Version 2.0, January 2004
                        http://www.apache.org/licenses/

   TERMS AND CONDITIONS FOR USE, REPRODUCTION, AND DISTRIBUTION

   1. Definitions.

      "License" shall mean the terms and conditions for use, reproduction,
      and distribution as defined by Sections 1 through 9 of this document.

      "Licensor" shall mean the copyright owner or entity authorized by
      the copyright owner that is granting the License.

      "Legal Entity" shall mean the union of the acting entity and all
      other entities that control, are controlled by, or are under common
      control with that entity. For the purposes of this definition,
      "control" means (i) the power, direct or indirect, to cause the
      direction or management of such entity, whether by contract or
      otherwise, or (ii) ownership of fifty percent (50%) or more of the
      outstanding shares, or (iii) beneficial ownership of such entity.

      "You" (or "Your") shall mean an individual or Legal Entity
      exercising permissions granted by this License.

      "Source" form shall mean the preferred form for making modifications,
      including but not limited to software source code, documentation
      source, and configuration files.

      "Object" form shall mean any form resulting from mechanical
      transformation or translation of a Source form, including but
      not limited to compiled object code, generated documentation,
      and conversions to other media types.

      "Work" shall mean the work of authorship, whether in Source or
      Object form, made available under the License, as indicated by a
      copyright notice that is included in or attached to the work
      (an example is provided in the Appendix below).

      "Derivative Works" shall mean any work, whether in Source or Object
      form, that is based on (or derived from) the Work and for which the
      editorial revisions, annotations, elaborations, or other modifications
      represent, as a whole, an original work of authorship. For the purposes
      of this License, Derivative Works shall not include works that remain
      separable from, or merely link (or bind by name) to the interfaces of,
      the Work and Derivative Works thereof.

      "Contribution" shall mean any work of authorship, including
      the original version of the Work and any modifications or additions
      to that Work or Derivative Works thereof, that is intentionally
      submitted to Licensor for inclusion in the Work by the copyright owner
      or by an individual or Legal Entity authorized to submit on behalf of
      the copyright owner. For the purposes of this definition, "submitted"
      means any form of electronic, verbal, or written communication sent
      to the Licensor or its representatives, including but not limited to
      communication on electronic mailing lists, source code control systems,
      and issue tracking systems that are managed by, or on behalf of, the
      Licensor for the purpose of discussing and improving the Work, but
      excluding communication that is conspicuously marked or otherwise
      designated in writing by the copyright owner as "Not a Contribution."

      "Contributor" shall mean Licensor and any individual or Legal Entity
      on behalf of whom a Contribution has been received by Licensor and
      subsequently incorporated within the Work.

   2. Grant of Copyright License. Subject to the terms and conditions of
      this License, each Contributor hereby grants to You a perpetual,
      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
      copyright license to reproduce, prepare Derivative Works of,
      publicly display, publicly perform, sublicense, and distribute the
      Work and such Derivative Works in Source or Object form.

   3. Grant of Patent License. Subject to the terms and conditions of
      this License, each Contributor hereby grants to You a perpetual,
      worldwide, non-exclusive, no-charge, royalty-free, irrevocable
      (except as stated in this section) patent license to make, have made,
      use, offer to sell, sell, import, and otherwise transfer the Work,
      where such license applies only to those patent claims licensable
      by such Contributor that are necessarily infringed by their
      Contribution(s) alone or by combination of their Contribution(s)
      with the Work to which such Contribution(s) was submitted. If You
      institute patent litigation against any entity (including a
      cross-claim or counterclaim in a lawsuit) alleging that the Work
      or a Contribution incorporated within the Work constitutes direct
      or contributory patent infringement, then any patent licenses
      granted to You under this License for that Work shall terminate
      as of the date such litigation is filed.

   4. Redistribution. You may reproduce and distribute copies of the
      Work or Derivative Works thereof in any medium, with or without
      modifications, and in Source or Object form, provided that You
      meet the following conditions:

      (a) You must give any other recipients of the Work or
          Derivative Works a copy of this License; and

      (b) You must cause any modified files to carry prominent notices
          stating that You changed the files; and

      (c) You must retain, in the Source form of any Derivative Works
          that You distribute, all copyright, patent, trademark, and
          attribution notices from the Source form of the Work,
          excluding those notices that do not pertain to any part of
          the Derivative Works; and

      (d) If the Work includes a "NOTICE" text file as part of its
          distribution, then any Derivative Works that You distribute must
          include a readable copy of the attribution notices contained
          within such NOTICE file, excluding those notices that do not
          pertain to any part of the Derivative Works, in at least one
          of the following places: within a NOTICE text file distributed
          as part of the Derivative Works; within the Source form or
          documentation, if provided along with the Derivative Works; or,
          within a display generated by the Derivative Works, if and
          wherever such third-party notices normally appear. The contents
          of the NOTICE file are for informational purposes only and
          do not modify the License. You may add Your own attribution
          notices within Derivative Works that You distribute, alongside
          or as an addendum to the NOTICE text from the Work, provided
          that such additional attribution notices cannot be construed
          as modifying the License.

      You may add Your own copyright statement to Your modifications and
      may provide additional or different license terms and conditions
      for use, reproduction, or distribution of Your modifications, or
      for any such Derivative Works as a whole, provided Your use,
      reproduction, and distribution of the Work otherwise complies with
      the conditions stated in this License.

   5. Submission of Contributions. Unless You explicitly state otherwise,
      any Contribution intentionally submitted for inclusion in the Work
      by You to the Licensor shall be under the terms and conditions of
      this License, without any additional terms or conditions.
      Notwithstanding the above, nothing herein shall supersede or modify
      the terms of any separate license agreement you may have executed
      with Licensor regarding such Contributions.

   6. Trademarks. This License does not grant permission to use the trade
      names, trademarks, service marks, or product names of the Licensor,
      except as required for reasonable and customary use in describing the
      origin of the Work and reproducing the content of the NOTICE file.

   7. Disclaimer of Warranty. Unless required by applicable law or
      agreed to in writing, Licensor provides the Work (and each
      Contributor provides its Contributions) on an "AS IS" BASIS,
      WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or
      implied, including, without limitation, any warranties or conditions
      of TITLE, NON-INFRINGEMENT, MERCHANTABILITY, or FITNESS FOR A
      PARTICULAR PURPOSE. You are solely responsible for determining the
      appropriateness of using or redistributing the Work and assume any
      risks associated with Your exercise of permissions under this License.

   8. Limitation of Liability. In no event and under no legal theory,
      whether in tort (including negligence), contract, or otherwise,
      unless required by applicable law (such as deliberate and grossly
      negligent acts) or agreed to in writing, shall any Contributor be
      liable to You for damages, including any direct, indirect, special,
      incidental, or consequential damages of any character arising as a
      result of this License or out of the use or inability to use the
      Work (including but not limited to damages for loss of goodwill,
      work stoppage, computer failure or malfunction, or any and all
      other commercial damages or losses), even if such Contributor
      has been advised of the possibility of such damages.

   9. Accepting Warranty or Additional Liability. While redistributing
      the Work or Derivative Works thereof, You may choose to offer,
      and charge a fee for, acceptance of support, warranty, indemnity,
      or other liability obligations and/or rights consistent with this
      License. However, in accepting such obligations, You may act only
      on Your own behalf and on Your sole responsibility, not on behalf
      of any other Contributor, and only if You agree to indemnify,
      defend, and hold each Contributor harmless for any liability
      incurred by, or claims asserted against, such Contributor by reason
      of your accepting any such warranty or additional liability.

   END OF TERMS AND CONDITIONS

   APPENDIX: How to apply the Apache License to your work.

      To apply the Apache License to your work, attach the following
      boilerplate notice, with the fields enclosed by brackets "[]"
      replaced with your own identifying information. (Don't include
      the brackets!)  The text should be enclosed in the appropriate
      comment syntax for the file format. We also recommend that a
      file or class name and description of purpose be included on the
      same "printed page" as the copyright notice for easier
      identification within third-party archives.

   Copyright [yyyy] [name of copyright owner]

   Licensed under the Apache License, Version 2.0 (the "License");
   you may not use this file except in compliance with the License.
   You may obtain a copy of the License at

       http://www.apache.org/licenses/LICENSE-2.0

   Unless required by applicable law or agreed to in writing, software
   distributed under the License is distributed on an "AS IS" BASIS,
   WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
   See the License for the specific language governing permissions and
   limitations under the License.
```

cli/SparkTTS.py

ADDED

@@ -0,0 +1,236 @@

```python
# Copyright (c) 2025 SparkAudio
#               2025 Xinsheng Wang (w.xinshawn@gmail.com)
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
#   http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.

import re
import torch
from typing import Tuple
from pathlib import Path
from transformers import AutoTokenizer, AutoModelForCausalLM

from sparktts.utils.file import load_config
from sparktts.models.audio_tokenizer import BiCodecTokenizer
from sparktts.utils.token_parser import LEVELS_MAP, GENDER_MAP, TASK_TOKEN_MAP


class SparkTTS:
    """
    Spark-TTS for text-to-speech generation.
    """

    def __init__(self, model_dir: Path, device: torch.device = torch.device("cuda:0")):
        """
        Initializes the SparkTTS model with the provided configurations and device.

        Args:
            model_dir (Path): Directory containing the model and config files.
            device (torch.device): The device (CPU/GPU) to run the model on.
        """
        self.device = device
        self.model_dir = model_dir
        self.configs = load_config(f"{model_dir}/config.yaml")
        self.sample_rate = self.configs["sample_rate"]
        self._initialize_inference()

    def _initialize_inference(self):
        """Initializes the tokenizer, model, and audio tokenizer for inference."""
        self.tokenizer = AutoTokenizer.from_pretrained(f"{self.model_dir}/LLM")
        self.model = AutoModelForCausalLM.from_pretrained(f"{self.model_dir}/LLM")
        self.audio_tokenizer = BiCodecTokenizer(self.model_dir, device=self.device)
        self.model.to(self.device)
```
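`__init__` reads `config.yaml` from `model_dir`, and `_initialize_inference` loads both the tokenizer and the causal LM from its `LLM/` subdirectory. A hypothetical pre-flight check for exactly those two paths might look like this; it covers only what this file reads directly, so whatever `BiCodecTokenizer` needs from `model_dir` is not checked.

```python
import tempfile
from pathlib import Path
from typing import List


def missing_model_files(model_dir: Path) -> List[str]:
    """Hypothetical helper: list the paths read by SparkTTS.__init__ that are absent.

    Only config.yaml and the LLM/ directory are checked; the files
    BiCodecTokenizer loads are outside the scope of this sketch.
    """
    model_dir = Path(model_dir)
    missing = []
    if not (model_dir / "config.yaml").is_file():
        missing.append("config.yaml")
    if not (model_dir / "LLM").is_dir():
        missing.append("LLM/")
    return missing


# Usage: an empty temporary directory lacks both entries.
with tempfile.TemporaryDirectory() as tmp:
    print(missing_model_files(Path(tmp)))  # ['config.yaml', 'LLM/']
    (Path(tmp) / "config.yaml").write_text("")  # empty placeholder file
    (Path(tmp) / "LLM").mkdir()
    print(missing_model_files(Path(tmp)))  # []
```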

```python
    def process_prompt(
        self,
        text: str,
        prompt_speech_path: Path,
        prompt_text: str = None,
    ) -> Tuple[str, torch.Tensor]:
        """
        Process input for voice cloning.

        Args:
            text (str): The text input to be converted to speech.
            prompt_speech_path (Path): Path to the audio file used as a prompt.
            prompt_text (str, optional): Transcript of the prompt audio.

        Return:
            Tuple[str, torch.Tensor]: Input prompt; global tokens
        """

        global_token_ids, semantic_token_ids = self.audio_tokenizer.tokenize(
            prompt_speech_path
        )
        global_tokens = "".join(
            [f"<|bicodec_global_{i}|>" for i in global_token_ids.squeeze()]
        )

        # Prepare the input tokens for the model
        if prompt_text is not None:
            semantic_tokens = "".join(
                [f"<|bicodec_semantic_{i}|>" for i in semantic_token_ids.squeeze()]
            )
            inputs = [
                TASK_TOKEN_MAP["tts"],
                "<|start_content|>",
                prompt_text,
                text,
                "<|end_content|>",
                "<|start_global_token|>",
                global_tokens,
                "<|end_global_token|>",
                "<|start_semantic_token|>",
                semantic_tokens,
            ]
        else:
            inputs = [
                TASK_TOKEN_MAP["tts"],
                "<|start_content|>",
                text,
                "<|end_content|>",
                "<|start_global_token|>",
                global_tokens,
                "<|end_global_token|>",
            ]

        inputs = "".join(inputs)

        return inputs, global_token_ids
```
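The string that `process_prompt` hands to the LLM can be reproduced without loading any model. This sketch mirrors the concatenation order of the method above, using plain integer lists in place of the tensors returned by `audio_tokenizer.tokenize`. The real value of `TASK_TOKEN_MAP["tts"]` lives in `sparktts/utils/token_parser.py`, which is not shown here, so `TTS_TASK_TOKEN` is a placeholder and `build_clone_prompt` is a hypothetical name.

```python
from typing import List, Optional

# Placeholder: the real value is TASK_TOKEN_MAP["tts"] from
# sparktts/utils/token_parser.py, which this sketch does not reproduce.
TTS_TASK_TOKEN = "<|task_tts_placeholder|>"


def build_clone_prompt(
    text: str,
    global_ids: List[int],
    semantic_ids: List[int],
    prompt_text: Optional[str] = None,
) -> str:
    """Mirror of the string assembly in SparkTTS.process_prompt, on plain ints."""
    global_tokens = "".join(f"<|bicodec_global_{i}|>" for i in global_ids)
    parts = [TTS_TASK_TOKEN, "<|start_content|>"]
    if prompt_text is not None:
        # With a transcript, the prompt text directly precedes the target text.
        parts += [prompt_text, text]
    else:
        parts += [text]
    parts += ["<|end_content|>", "<|start_global_token|>", global_tokens, "<|end_global_token|>"]
    if prompt_text is not None:
        # The prompt audio's semantic tokens follow an unclosed start marker.
        semantic_tokens = "".join(f"<|bicodec_semantic_{i}|>" for i in semantic_ids)
        parts += ["<|start_semantic_token|>", semantic_tokens]
    return "".join(parts)


print(build_clone_prompt("Hi.", [3, 7], [11]))
print(build_clone_prompt("Hi.", [3, 7], [11], prompt_text="Hello."))
```

As in the committed code, `prompt_text` and `text` are joined with no separator, and without a transcript the semantic tokens of the prompt audio are not used at all.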

```python
    def process_prompt_control(
        self,
        gender: str,
        pitch: str,
        speed: str,
        text: str,
    ):
        """
        Process input for voice creation.

        Args:
            gender (str): female | male.
            pitch (str): very_low | low | moderate | high | very_high
            speed (str): very_low | low | moderate | high | very_high
            text (str): The text input to be converted to speech.

        Return:
            str: Input prompt
        """
        assert gender in GENDER_MAP.keys()
        assert pitch in LEVELS_MAP.keys()
        assert speed in LEVELS_MAP.keys()

        gender_id = GENDER_MAP[gender]
        pitch_level_id = LEVELS_MAP[pitch]
        speed_level_id = LEVELS_MAP[speed]

        pitch_label_tokens = f"<|pitch_label_{pitch_level_id}|>"
        speed_label_tokens = f"<|speed_label_{speed_level_id}|>"
        gender_tokens = f"<|gender_{gender_id}|>"

        attribte_tokens = "".join(
            [gender_tokens, pitch_label_tokens, speed_label_tokens]
        )

        control_tts_inputs = [
            TASK_TOKEN_MAP["controllable_tts"],
            "<|start_content|>",
            text,
            "<|end_content|>",
            "<|start_style_label|>",
            attribte_tokens,
            "<|end_style_label|>",
        ]

        return "".join(control_tts_inputs)
```
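`process_prompt_control` validates the three style labels and turns each into an indexed token, in the order gender, pitch, speed. The sketch below follows the same layout, but `GENDER_MAP`, `LEVELS_MAP`, and `TASK_TOKEN_MAP["controllable_tts"]` are defined in `sparktts/utils/token_parser.py`, which is not part of this view; the mappings here are hypothetical stand-ins, so the emitted IDs need not match the real ones. It raises `ValueError` instead of using `assert`, because assertions are skipped when Python runs with `-O`.

```python
# Hypothetical stand-ins: the real GENDER_MAP, LEVELS_MAP and
# TASK_TOKEN_MAP["controllable_tts"] are defined in sparktts/utils/token_parser.py.
GENDER_MAP = {"female": 0, "male": 1}
LEVELS_MAP = {"very_low": 0, "low": 1, "moderate": 2, "high": 3, "very_high": 4}
CONTROL_TASK_TOKEN = "<|task_controllable_tts_placeholder|>"


def build_control_prompt(gender: str, pitch: str, speed: str, text: str) -> str:
    """Mirror of SparkTTS.process_prompt_control with explicit validation."""
    if gender not in GENDER_MAP:
        raise ValueError(f"gender must be one of {list(GENDER_MAP)}, got {gender!r}")
    for name, value in (("pitch", pitch), ("speed", speed)):
        if value not in LEVELS_MAP:
            raise ValueError(f"{name} must be one of {list(LEVELS_MAP)}, got {value!r}")

    # Same token order as the committed code: gender, pitch, speed.
    attribute_tokens = (
        f"<|gender_{GENDER_MAP[gender]}|>"
        f"<|pitch_label_{LEVELS_MAP[pitch]}|>"
        f"<|speed_label_{LEVELS_MAP[speed]}|>"
    )
    return "".join([
        CONTROL_TASK_TOKEN,
        "<|start_content|>", text, "<|end_content|>",
        "<|start_style_label|>", attribute_tokens, "<|end_style_label|>",
    ])


print(build_control_prompt("female", "high", "moderate", "Hello."))
```

Unlike the voice-cloning prompt, this one carries no global or semantic tokens; in `inference` the model is expected to generate the global tokens itself, which is why they are parsed back out of the output in that path.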

```python
    @torch.no_grad()
    def inference(
        self,
        text: str,
        prompt_speech_path: Path = None,
        prompt_text: str = None,
        gender: str = None,
        pitch: str = None,
        speed: str = None,
        temperature: float = 0.8,
        top_k: int = 50,
        top_p: float = 0.95,
    ) -> torch.Tensor:
        """
        Performs inference to generate speech from text, incorporating prompt audio and/or text.

        Args:
            text (str): The text input to be converted to speech.
            prompt_speech_path (Path): Path to the audio file used as a prompt.
            prompt_text (str, optional): Transcript of the prompt audio.
            gender (str): female | male.
            pitch (str): very_low | low | moderate | high | very_high
            speed (str): very_low | low | moderate | high | very_high
            temperature (float, optional): Sampling temperature for controlling randomness. Default is 0.8.
            top_k (int, optional): Top-k sampling parameter. Default is 50.
            top_p (float, optional): Top-p (nucleus) sampling parameter. Default is 0.95.

        Returns:
            torch.Tensor: Generated waveform as a tensor.
        """
        if gender is not None:
            prompt = self.process_prompt_control(gender, pitch, speed, text)

        else:
            prompt, global_token_ids = self.process_prompt(
                text, prompt_speech_path, prompt_text
            )
        model_inputs = self.tokenizer([prompt], return_tensors="pt").to(self.device)

        # Generate speech using the model
        generated_ids = self.model.generate(
            **model_inputs,
            max_new_tokens=3000,
            do_sample=True,
            top_k=top_k,
            top_p=top_p,
            temperature=temperature,
        )

        # Trim the output tokens to remove the input tokens
        generated_ids = [
            output_ids[len(input_ids) :]
            for input_ids, output_ids in zip(model_inputs.input_ids, generated_ids)
        ]

        # Decode the generated tokens into text
        predicts = self.tokenizer.batch_decode(generated_ids, skip_special_tokens=True)[0]

        # Extract semantic token IDs from the generated text
        pred_semantic_ids = (
            torch.tensor([int(token) for token in re.findall(r"bicodec_semantic_(\d+)", predicts)])
            .long()
            .unsqueeze(0)
        )

        if gender is not None:
            global_token_ids = (
                torch.tensor([int(token) for token in re.findall(r"bicodec_global_(\d+)", predicts)])
                .long()
                .unsqueeze(0)
                .unsqueeze(0)
            )

        # Convert semantic tokens back to waveform
        wav = self.audio_tokenizer.detokenize(
            global_token_ids.to(self.device).squeeze(0),
            pred_semantic_ids.to(self.device),
        )

        return wav
```
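After `generate`, `inference` drops the prompt prefix from each returned sequence (a decoder-only model in Hugging Face `transformers` returns the prompt followed by the continuation) and then pulls the numeric IDs out of the decoded text with regular expressions. Both steps are plain list and string operations; the sketch below uses made-up token IDs and a hand-written decoded string in place of real model output, and `trim_prompt` and `extract_ids` are hypothetical names.

```python
import re
from typing import List, Sequence


def trim_prompt(
    input_ids: Sequence[Sequence[int]], output_ids: Sequence[Sequence[int]]
) -> List[List[int]]:
    """Drop the first len(prompt) positions of each output row, as inference() does."""
    return [list(out[len(inp):]) for inp, out in zip(input_ids, output_ids)]


def extract_ids(decoded: str, kind: str) -> List[int]:
    """Collect N from every 'bicodec_<kind>_N' in the decoded text, in order."""
    return [int(n) for n in re.findall(rf"bicodec_{kind}_(\d+)", decoded)]


prompt = [[101, 102, 103]]
generated = [[101, 102, 103, 7, 8, 9]]
print(trim_prompt(prompt, generated))  # [[7, 8, 9]]

decoded = "<|bicodec_global_12|><|bicodec_global_5|><|bicodec_semantic_40|><|bicodec_semantic_2|>"
print(extract_ids(decoded, "semantic"))  # [40, 2]
print(extract_ids(decoded, "global"))    # [12, 5]
```

In the committed code these lists become `torch.long` tensors. The global IDs are parsed from the output only in the controllable (gender/pitch/speed) path; voice cloning reuses the IDs returned by `process_prompt`.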

cli/inference.py

ADDED

|

@@ -0,0 +1,104 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

```python
# Copyright (c) 2025 SparkAudio
# 2025 Xinsheng Wang (w.xinshawn@gmail.com)
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.


import os
import argparse
import torch
import soundfile as sf
import logging
from datetime import datetime

from cli.SparkTTS import SparkTTS


def parse_args():
    """Parse command-line arguments."""
    parser = argparse.ArgumentParser(description="Run TTS inference.")

    parser.add_argument(
        "--model_dir",
        type=str,
        default="pretrained_models/Spark-TTS-0.5B",
        help="Path to the model directory",
    )
    parser.add_argument(
        "--save_dir",
        type=str,
        default="example/results",
        help="Directory to save generated audio files",
    )
    parser.add_argument("--device", type=int, default=0, help="CUDA device number")
    parser.add_argument(
        "--text", type=str, required=True, help="Text for TTS generation"
    )
    parser.add_argument("--prompt_text", type=str, help="Transcript of prompt audio")
    parser.add_argument(
        "--prompt_speech_path",
        type=str,
        help="Path to the prompt audio file",
    )
    parser.add_argument("--gender", choices=["male", "female"])
    parser.add_argument(
        "--pitch", choices=["very_low", "low", "moderate", "high", "very_high"]
    )
    parser.add_argument(
        "--speed", choices=["very_low", "low", "moderate", "high", "very_high"]
    )
    return parser.parse_args()


def run_tts(args):
    """Perform TTS inference and save the generated audio."""
    logging.info(f"Using model from: {args.model_dir}")
    logging.info(f"Saving audio to: {args.save_dir}")

    # Ensure the save directory exists
    os.makedirs(args.save_dir, exist_ok=True)

    # Use the requested CUDA device; fall back to CPU when CUDA is unavailable
    if torch.cuda.is_available():
        device = torch.device(f"cuda:{args.device}")
    else:
        device = torch.device("cpu")

    # Initialize the model
    model = SparkTTS(args.model_dir, device)

    # Generate unique filename using timestamp
    timestamp = datetime.now().strftime("%Y%m%d%H%M%S")
    save_path = os.path.join(args.save_dir, f"{timestamp}.wav")

    logging.info("Starting inference...")

    # Perform inference and save the output audio
    with torch.no_grad():
        wav = model.inference(
            args.text,
            args.prompt_speech_path,
            prompt_text=args.prompt_text,
            gender=args.gender,
            pitch=args.pitch,
            speed=args.speed,
        )
        sf.write(save_path, wav, samplerate=16000)

    logging.info(f"Audio saved at: {save_path}")


if __name__ == "__main__":
    logging.basicConfig(
        level=logging.INFO, format="%(asctime)s - %(levelname)s - %(message)s"
    )

    args = parse_args()
    run_tts(args)
```
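`parse_args()` gives the control flags (`--gender`, `--pitch`, `--speed`) no default, so any flag that is omitted arrives as `None`. The sketch below rebuilds a hypothetical subset of those flags to show two consequences. First, omitting `--gender` yields `args.gender is None`, so the `if gender is not None:` branch in the `SparkTTS.py` excerpt is skipped. Second, an out-of-range level such as `--pitch loud` is rejected by argparse. In the real CLI, that rejection happens before the model is loaded.

```python
import argparse

LEVELS = ["very_low", "low", "moderate", "high", "very_high"]


def build_parser() -> argparse.ArgumentParser:
    """Hypothetical subset of the flags defined in cli/inference.py's parse_args()."""
    parser = argparse.ArgumentParser(description="Run TTS inference.")
    parser.add_argument("--text", type=str, required=True)
    parser.add_argument("--prompt_speech_path", type=str)
    parser.add_argument("--gender", choices=["male", "female"])
    parser.add_argument("--pitch", choices=LEVELS)
    parser.add_argument("--speed", choices=LEVELS)
    return parser


parser = build_parser()

# Voice cloning: no control flags, so gender/pitch/speed stay None.
clone_args = parser.parse_args(
    ["--text", "hello", "--prompt_speech_path", "example/prompt_audio.wav"]
)

# Controllable generation: gender plus discrete pitch/speed levels.
control_args = parser.parse_args(
    ["--text", "hello", "--gender", "female", "--pitch", "high", "--speed", "moderate"]
)
```

An invalid choice makes `parse_args` print a usage error and raise `SystemExit`, which is argparse's standard behaviour for `choices`.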

example/infer.sh

ADDED

@@ -0,0 +1,47 @@

```bash
#!/bin/bash

# Copyright (c) 2025 SparkAudio
# 2025 Xinsheng Wang (w.xinshawn@gmail.com)
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.


# Get the absolute path of the script's directory
script_dir=$(dirname "$(realpath "$0")")

# Get the root directory
root_dir=$(dirname "$script_dir")

# Set default parameters
device=0
save_dir='example/results'
model_dir="pretrained_models/Spark-TTS-0.5B"
# Text to synthesize. English: "Immersive, a brand-new experience. Shaping a new
# paradigm for open-source speech synthesis, making intelligent speech more natural."
text="身临其境,换新体验。塑造开源语音合成新范式,让智能语音更自然。"
# Transcript of the prompt audio; it stays in Chinese to match example/prompt_audio.wav.
# English: "For bird's nest, choose Yanzhiwu: this program is title-sponsored by Yanzhiwu,
# focused on high-quality bird's nest for 26 years. Alternate soy milk and cow's milk for
# more balanced nutrition: this program is specially sponsored by Doubendou soy milk."
prompt_text="吃燕窝就选燕之屋,本节目由26年专注高品质燕窝的燕之屋冠名播出。豆奶牛奶换着喝,营养更均衡,本节目由豆本豆豆奶特约播出。"
prompt_speech_path="example/prompt_audio.wav"

# Change directory to the root directory
cd "$root_dir" || exit

source sparktts/utils/parse_options.sh

# Run inference
python -m cli.inference \
    --text "${text}" \
    --device "${device}" \
    --save_dir "${save_dir}" \
    --model_dir "${model_dir}" \
    --prompt_text "${prompt_text}" \
    --prompt_speech_path "${prompt_speech_path}"
```
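`infer.sh` sets its defaults before sourcing `sparktts/utils/parse_options.sh`. Assuming that helper follows the common Kaldi `parse_options.sh` convention, a caller can override any predefined variable with `--name value` (for example `bash example/infer.sh --device 1`). Under that convention, dashes in the option name map to underscores. The Python sketch below approximates that behaviour for illustration. `apply_overrides` is a hypothetical helper, not part of the repository.

```python
from typing import Dict, List


def apply_overrides(defaults: Dict[str, str], argv: List[str]) -> Dict[str, str]:
    """Approximate Kaldi-style `--name value` overrides of predefined variables.

    Option names map to variable names with '-' turned into '_'; unknown names
    are rejected, and parsing stops at the first argument not starting with '--'.
    """
    values = dict(defaults)
    i = 0
    while i < len(argv) and argv[i].startswith("--"):
        name = argv[i][2:].replace("-", "_")
        if name not in values:
            raise ValueError(f"invalid option {argv[i]}")
        if i + 1 >= len(argv):
            raise ValueError(f"missing value for {argv[i]}")
        values[name] = argv[i + 1]
        i += 2
    return values


# Defaults mirroring a few of infer.sh's variables.
defaults = {
    "device": "0",
    "save_dir": "example/results",
    "model_dir": "pretrained_models/Spark-TTS-0.5B",
}
# Roughly equivalent to: bash example/infer.sh --device 1 --save-dir out
overridden = apply_overrides(defaults, ["--device", "1", "--save-dir", "out"])
```

Because only predefined names are accepted, a typo such as `--devise 1` fails loudly instead of being silently ignored.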

example/prompt_audio.wav

ADDED

@@ -0,0 +1,3 @@

```
version https://git-lfs.github.com/spec/v1
oid sha256:335e7f7789b231cd90d9670292d561ecfe6a6bdd5e737a7bc6c29730741852de
size 318550
```

example/results/20250225113521.wav

ADDED

@@ -0,0 +1,3 @@

```
version https://git-lfs.github.com/spec/v1
oid sha256:1d335b2fbd3dbab1897b4637fd2357a91879dc1ac27b1466c63b2728b3bfffa9
size 237484
```
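The two `.wav` entries above are not audio. They are Git LFS pointer files: three `key value` lines giving the spec version, the object ID (a SHA-256 digest) and the size in bytes of the real file. A checkout made without Git LFS installed leaves these pointers in place of the audio. The minimal sketch below reads a pointer and checks downloaded bytes against it. The helper names are illustrative.

```python
import hashlib
from typing import Dict


def parse_lfs_pointer(text: str) -> Dict[str, str]:
    """Split a Git LFS pointer file into its `key value` lines."""
    fields: Dict[str, str] = {}
    for line in text.strip().splitlines():
        key, _, value = line.partition(" ")
        fields[key] = value
    return fields


def matches_pointer(data: bytes, fields: Dict[str, str]) -> bool:
    """Check downloaded bytes against the pointer's size and sha256 oid."""
    algo, _, digest = fields["oid"].partition(":")
    if algo != "sha256":
        raise ValueError(f"unsupported oid algorithm: {algo}")
    return len(data) == int(fields["size"]) and hashlib.sha256(data).hexdigest() == digest


pointer = parse_lfs_pointer("""version https://git-lfs.github.com/spec/v1
oid sha256:335e7f7789b231cd90d9670292d561ecfe6a6bdd5e737a7bc6c29730741852de
size 318550
""")
```

With the real file fetched (e.g. via `git lfs pull`), `matches_pointer(open("example/prompt_audio.wav", "rb").read(), pointer)` should return `True`. A `False` result, or a 3-line text file in place of the audio, indicates the LFS object was never downloaded.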

requirements.txt

ADDED

@@ -0,0 +1,13 @@

```
einops==0.8.1
einx==0.3.0
numpy==2.2.3
omegaconf==2.3.0
packaging==24.2
safetensors==0.5.2
soundfile==0.12.1
soxr==0.5.0.post1
torch==2.5.1
torchaudio==2.5.1
tqdm==4.66.5
transformers==4.46.2
gradio==5.18.0
```
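`requirements.txt` pins every dependency to an exact version, for example `torch==2.5.1` alongside `torchaudio==2.5.1`, and `transformers==4.46.2`. When an environment misbehaves, a quick first check is to compare the pins against what is actually installed. The sketch below does this using only the standard library's `importlib.metadata`. `parse_pins` and `find_mismatches` are hypothetical helpers.

```python
from importlib.metadata import PackageNotFoundError, version
from typing import Dict, Optional, Tuple


def parse_pins(requirements: str) -> Dict[str, str]:
    """Map each `name==version` line to its pinned version."""
    pins: Dict[str, str] = {}
    for raw in requirements.splitlines():
        line = raw.split("#", 1)[0].strip()  # drop comments and whitespace
        if not line:
            continue
        name, sep, pinned = line.partition("==")
        if not sep:
            raise ValueError(f"not an exact pin: {line!r}")
        pins[name.strip().lower()] = pinned.strip()
    return pins


def find_mismatches(pins: Dict[str, str]) -> Dict[str, Tuple[str, Optional[str]]]:
    """Return {name: (pinned, installed)} for packages that differ or are missing."""
    out: Dict[str, Tuple[str, Optional[str]]] = {}
    for name, pinned in pins.items():
        try:
            installed: Optional[str] = version(name)
        except PackageNotFoundError:
            installed = None
        if installed != pinned:
            out[name] = (pinned, installed)
    return out


pins = parse_pins("""
torch==2.5.1
torchaudio==2.5.1
transformers==4.46.2
soxr==0.5.0.post1
""")
print(find_mismatches(pins))
```

The comparison is a plain string equality. A CUDA build reported as `2.5.1+cu121` is therefore flagged, even though pip's `==2.5.1` specifier accepts it because local version labels are ignored.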

sparktts/models/audio_tokenizer.py

ADDED

@@ -0,0 +1,163 @@

```python
# Copyright (c) 2025 SparkAudio
# 2025 Xinsheng Wang (w.xinshawn@gmail.com)
#
# Licensed under the Apache License, Version 2.0 (the "License");
# you may not use this file except in compliance with the License.
# You may obtain a copy of the License at
#
# http://www.apache.org/licenses/LICENSE-2.0
#
# Unless required by applicable law or agreed to in writing, software
# distributed under the License is distributed on an "AS IS" BASIS,
# WITHOUT WARRANTIES OR CONDITIONS OF ANY KIND, either express or implied.
# See the License for the specific language governing permissions and
# limitations under the License.


import torch
import numpy as np

from pathlib import Path
from typing import Any, Dict, Tuple
from transformers import Wav2Vec2FeatureExtractor, Wav2Vec2Model

from sparktts.utils.file import load_config
from sparktts.utils.audio import load_audio
from sparktts.models.bicodec import BiCodec


class BiCodecTokenizer:
    """BiCodec tokenizer for handling audio input and tokenization."""

    def __init__(self, model_dir: Path, device: torch.device = None, **kwargs):
        """
        Args:
            model_dir: Path to the model directory.
            device: Device to run the model on (supplied by the caller; no GPU
                auto-detection is done here).
        """
        super().__init__()
        self.device = device
        self.model_dir = model_dir
        self.config = load_config(f"{model_dir}/config.yaml")
        self._initialize_model()

    def _initialize_model(self):
        """Load and initialize the BiCodec model and Wav2Vec2 feature extractor."""
        self.model = BiCodec.load_from_checkpoint(f"{self.model_dir}/BiCodec").to(
            self.device
        )
        self.processor = Wav2Vec2FeatureExtractor.from_pretrained(
            f"{self.model_dir}/wav2vec2-large-xlsr-53"
        )
        self.feature_extractor = Wav2Vec2Model.from_pretrained(
            f"{self.model_dir}/wav2vec2-large-xlsr-53"
        ).to(self.device)
        self.feature_extractor.config.output_hidden_states = True

    def get_ref_clip(self, wav: np.ndarray) -> np.ndarray:
        """Get reference audio clip for speaker embedding."""
        ref_segment_length = (
            int(self.config["sample_rate"] * self.config["ref_segment_duration"])
            // self.config["latent_hop_length"]
            * self.config["latent_hop_length"]
        )
        wav_length = len(wav)

        if ref_segment_length > wav_length:
            # Repeat enough times to cover the segment, then truncate below
            wav = np.tile(wav, ref_segment_length // wav_length + 1)

        return wav[:ref_segment_length]

    def process_audio(self, wav_path: Path) -> Tuple[np.ndarray, torch.Tensor]:
        """Load audio from wav_path and extract the reference clip."""
        wav = load_audio(
            wav_path,
            sampling_rate=self.config["sample_rate"],
            volume_normalize=self.config["volume_normalize"],
        )

        wav_ref = self.get_ref_clip(wav)

        wav_ref = torch.from_numpy(wav_ref).unsqueeze(0).float()
        return wav, wav_ref

    def extract_wav2vec2_features(self, wavs: torch.Tensor) -> torch.Tensor:
        """Extract wav2vec2 features as the mean of hidden layers 11, 14 and 16."""
        inputs = self.processor(
            wavs,
            sampling_rate=16000,
            return_tensors="pt",
            padding=True,
            output_hidden_states=True,
        ).input_values
        feat = self.feature_extractor(inputs.to(self.feature_extractor.device))
        feats_mix = (
            feat.hidden_states[11] + feat.hidden_states[14] + feat.hidden_states[16]
        ) / 3

        return feats_mix

    def tokenize_batch(self, batch: Dict[str, Any]) -> Tuple[torch.Tensor, torch.Tensor]:
        """Tokenize a batch of audio.

        Args:
            batch:
                wav (List[np.ndarray]): batch of audio
                ref_wav (torch.Tensor): reference audio. shape: (batch_size, seq_len)

        Returns:
            global_tokens: global tokens. shape: (batch_size, seq_len, global_dim)
            semantic_tokens: semantic tokens. shape: (batch_size, seq_len, latent_dim)
        """
        feats = self.extract_wav2vec2_features(batch["wav"])
        batch["feat"] = feats
        semantic_tokens, global_tokens = self.model.tokenize(batch)

        return global_tokens, semantic_tokens

    def tokenize(self, audio_path: str) -> Tuple[torch.Tensor, torch.Tensor]:
        """Tokenize the audio at audio_path into (global_tokens, semantic_tokens)."""
        wav, ref_wav = self.process_audio(audio_path)
        feat = self.extract_wav2vec2_features(wav)
        batch = {
            "wav": torch.from_numpy(wav).unsqueeze(0).float().to(self.device),
            "ref_wav": ref_wav.to(self.device),
            "feat": feat.to(self.device),
        }
        semantic_tokens, global_tokens = self.model.tokenize(batch)

        return global_tokens, semantic_tokens

    def detokenize(
        self, global_tokens: torch.Tensor, semantic_tokens: torch.Tensor
    ) -> np.ndarray:
        """Detokenize the tokens to a waveform.

        Args:
            global_tokens: global tokens. shape: (batch_size, global_dim)
            semantic_tokens: semantic tokens. shape: (batch_size, latent_dim)

        Returns:
            wav_rec: waveform. shape: (batch_size, seq_len) for batch or (seq_len,) for single
        """
        global_tokens = global_tokens.unsqueeze(1)
        wav_rec = self.model.detokenize(semantic_tokens, global_tokens)
        return wav_rec.detach().squeeze().cpu().numpy()


# test
```
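`get_ref_clip` must return exactly `ref_segment_length` samples. That length is first floored to a whole number of latent frames (a multiple of `latent_hop_length`). Tiling a short clip `segment_length // len(wav) + 1` times always yields enough material before truncation. Rounding the other way can fall short: for example, `(1 + segment_length) // len(wav)` gives only 3 copies of a 3-sample clip for a 10-sample segment. The NumPy sketch below isolates that logic and the three-layer averaging in `extract_wav2vec2_features`. Plain arrays stand in for the real model outputs. The config values (16 kHz, 6 s, hop 320) and the count of 25 hidden states (embeddings plus 24 transformer layers, as assumed for wav2vec2-large) are illustrative assumptions.

```python
import numpy as np


def ref_segment_length(sample_rate: int, duration_s: float, hop_length: int) -> int:
    """Samples in the reference clip, floored to a whole number of latent frames."""
    return int(sample_rate * duration_s) // hop_length * hop_length


def get_ref_clip(wav: np.ndarray, segment_length: int) -> np.ndarray:
    """Loop a short clip until it covers segment_length samples, then truncate."""
    if segment_length > len(wav):
        # This repeat count always gives at least segment_length samples after tiling
        wav = np.tile(wav, segment_length // len(wav) + 1)
    return wav[:segment_length]


def mix_hidden_states(hidden_states, layers=(11, 14, 16)) -> np.ndarray:
    """Average the selected hidden-state layers, as extract_wav2vec2_features does."""
    return sum(hidden_states[i] for i in layers) / len(layers)


# Hypothetical config values: 16 kHz audio, 6 s reference, hop length 320.
seg = ref_segment_length(16000, 6.0, 320)
clip = get_ref_clip(np.arange(3, dtype=np.float32), 10)

# Stand-in for 25 hidden states, each shaped (batch, frames, dim).
fake_hidden = [np.full((1, 4, 8), float(i)) for i in range(25)]
mixed = mix_hidden_states(fake_hidden)
```

Here `clip` loops `[0, 1, 2]` into ten samples. Each element of `mixed` equals (11 + 14 + 16) / 3, because every fake layer is filled with its own index.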