Spaces:

Running

Running

Upload 10 files

Browse files- flair_recognizer.py +14 -5

- index.md +8 -4

- openai_fake_data_generator.py +45 -13

- presidio_helpers.py +120 -63

- presidio_nlp_engine_config.py +137 -0

- presidio_streamlit.py +243 -118

- requirements.txt +5 -1

- text_analytics_wrapper.py +123 -0

flair_recognizer.py

CHANGED

|

@@ -1,3 +1,5 @@

|

|

|

|

|

|

|

|

| 1 |

import logging

|

| 2 |

from typing import Optional, List, Tuple, Set

|

| 3 |

|

|

@@ -74,17 +76,24 @@ class FlairRecognizer(EntityRecognizer):

|

|

| 74 |

supported_entities: Optional[List[str]] = None,

|

| 75 |

check_label_groups: Optional[Tuple[Set, Set]] = None,

|

| 76 |

model: SequenceTagger = None,

|

|

|

|

| 77 |

):

|

| 78 |

self.check_label_groups = (

|

| 79 |

check_label_groups if check_label_groups else self.CHECK_LABEL_GROUPS

|

| 80 |

)

|

| 81 |

|

| 82 |

supported_entities = supported_entities if supported_entities else self.ENTITIES

|

| 83 |

-

|

| 84 |

-

|

| 85 |

-

|

| 86 |

-

|

| 87 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 88 |

|

| 89 |

super().__init__(

|

| 90 |

supported_entities=supported_entities,

|

|

|

|

| 1 |

+

## Taken from https://github.com/microsoft/presidio/blob/main/docs/samples/python/flair_recognizer.py

|

| 2 |

+

|

| 3 |

import logging

|

| 4 |

from typing import Optional, List, Tuple, Set

|

| 5 |

|

|

|

|

| 76 |

supported_entities: Optional[List[str]] = None,

|

| 77 |

check_label_groups: Optional[Tuple[Set, Set]] = None,

|

| 78 |

model: SequenceTagger = None,

|

| 79 |

+

model_path: Optional[str] = None

|

| 80 |

):

|

| 81 |

self.check_label_groups = (

|

| 82 |

check_label_groups if check_label_groups else self.CHECK_LABEL_GROUPS

|

| 83 |

)

|

| 84 |

|

| 85 |

supported_entities = supported_entities if supported_entities else self.ENTITIES

|

| 86 |

+

|

| 87 |

+

if model and model_path:

|

| 88 |

+

raise ValueError("Only one of model or model_path should be provided.")

|

| 89 |

+

elif model and not model_path:

|

| 90 |

+

self.model = model

|

| 91 |

+

elif not model and model_path:

|

| 92 |

+

print(f"Loading model from {model_path}")

|

| 93 |

+

self.model = SequenceTagger.load(model_path)

|

| 94 |

+

else:

|

| 95 |

+

print(f"Loading model for language {supported_language}")

|

| 96 |

+

self.model = SequenceTagger.load(self.MODEL_LANGUAGES.get(supported_language))

|

| 97 |

|

| 98 |

super().__init__(

|

| 99 |

supported_entities=supported_entities,

|

index.md

CHANGED

|

@@ -2,15 +2,19 @@

|

|

| 2 |

Here's a simple app, written in pure Python, to create a demo website for Presidio.

|

| 3 |

The app is based on the [streamlit](https://streamlit.io/) package.

|

| 4 |

|

|

|

|

|

|

|

| 5 |

## Requirements

|

|

|

|

| 6 |

1. Install dependencies (preferably in a virtual environment)

|

| 7 |

|

| 8 |

```sh

|

| 9 |

-

pip install

|

| 10 |

```

|

|

|

|

| 11 |

|

| 12 |

-

2.

|

| 13 |

-

3. *Optional*: Update the `analyzer_engine` and `anonymizer_engine` functions for your specific implementation

|

| 14 |

3. Start the app:

|

| 15 |

|

| 16 |

```sh

|

|

@@ -19,4 +23,4 @@ streamlit run presidio_streamlit.py

|

|

| 19 |

|

| 20 |

## Output

|

| 21 |

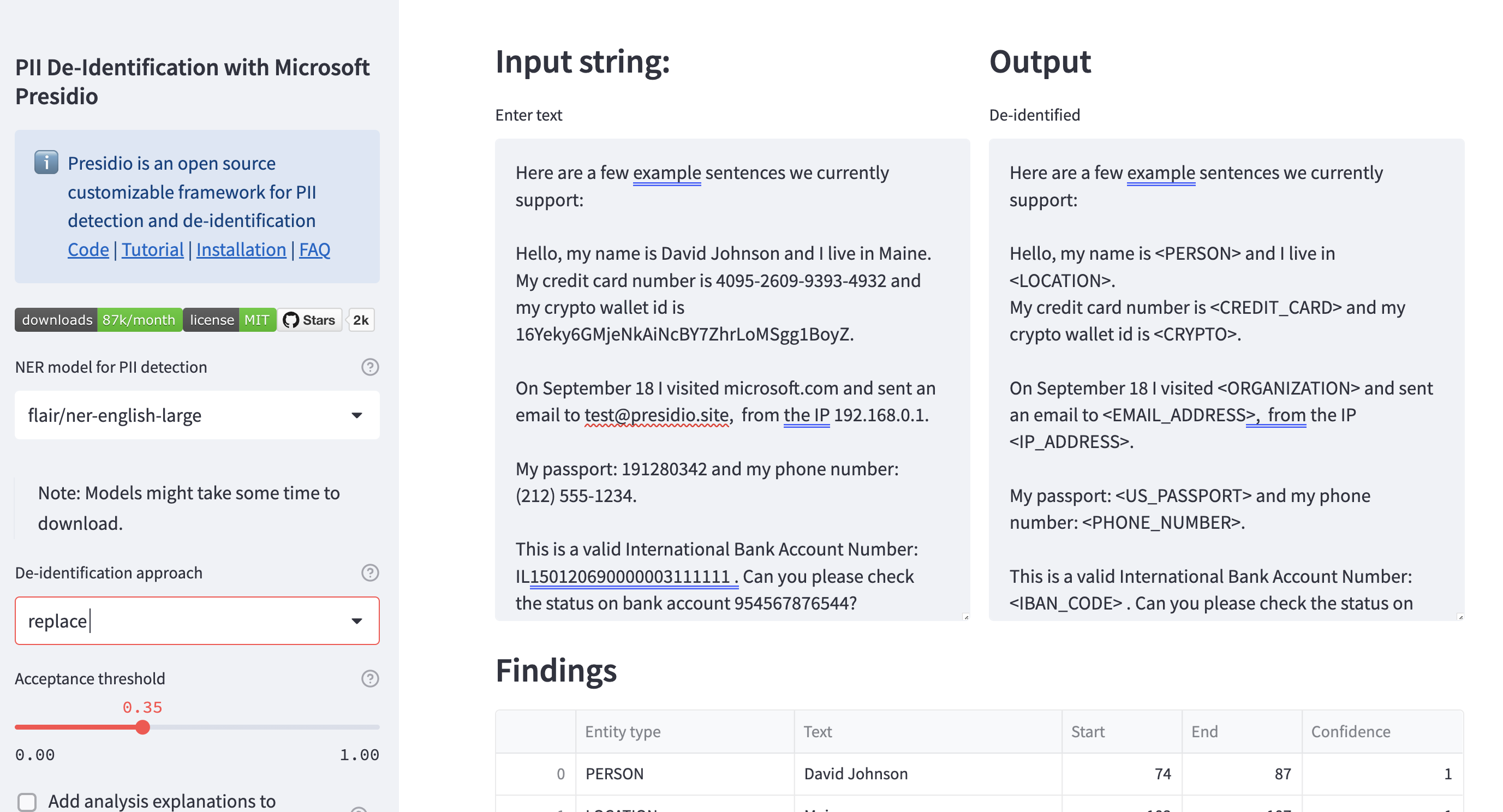

Output should be similar to this screenshot:

|

| 22 |

-

package.

|

| 4 |

|

| 5 |

+

A live version can be found here: https://huggingface.co/spaces/presidio/presidio_demo

|

| 6 |

+

|

| 7 |

## Requirements

|

| 8 |

+

1. Clone the repo and move to the `docs/samples/python/streamlit ` folder

|

| 9 |

1. Install dependencies (preferably in a virtual environment)

|

| 10 |

|

| 11 |

```sh

|

| 12 |

+

pip install -r requirements

|

| 13 |

```

|

| 14 |

+

> Note: This would install additional packages such as `transformers` and `flair` which are not mandatory for using Presidio.

|

| 15 |

|

| 16 |

+

2.

|

| 17 |

+

3. *Optional*: Update the `analyzer_engine` and `anonymizer_engine` functions for your specific implementation (in `presidio_helpers.py`).

|

| 18 |

3. Start the app:

|

| 19 |

|

| 20 |

```sh

|

|

|

|

| 23 |

|

| 24 |

## Output

|

| 25 |

Output should be similar to this screenshot:

|

| 26 |

+

|

openai_fake_data_generator.py

CHANGED

|

@@ -1,25 +1,50 @@

|

|

|

|

|

|

|

|

|

|

|

| 1 |

import openai

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 2 |

|

| 3 |

-

|

|

|

|

| 4 |

"""Set the OpenAI API key.

|

| 5 |

-

:param

|

|

|

|

| 6 |

"""

|

| 7 |

-

openai.api_key = openai_key

|

|

|

|

|

|

|

|

|

|

|

|

|

| 8 |

|

| 9 |

|

| 10 |

def call_completion_model(

|

| 11 |

-

prompt: str,

|

|

|

|

|

|

|

|

|

|

| 12 |

) -> str:

|

| 13 |

"""Creates a request for the OpenAI Completion service and returns the response.

|

| 14 |

|

| 15 |

:param prompt: The prompt for the completion model

|

| 16 |

:param model: OpenAI model name

|

| 17 |

:param max_tokens: Model's max_tokens parameter

|

|

|

|

| 18 |

"""

|

| 19 |

-

|

| 20 |

-

|

| 21 |

-

|

| 22 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

| 23 |

|

| 24 |

return response["choices"][0].text

|

| 25 |

|

|

@@ -32,16 +57,23 @@ def create_prompt(anonymized_text: str) -> str:

|

|

| 32 |

"""

|

| 33 |

|

| 34 |

prompt = f"""

|

| 35 |

-

Your role is to create synthetic text based on de-identified text with placeholders instead of

|

| 36 |

-

Replace the placeholders (e.g.

|

| 37 |

|

| 38 |

Instructions:

|

| 39 |

|

| 40 |

-

Use completely random numbers, so every digit is drawn between 0 and 9.

|

| 41 |

-

Use realistic names that come from diverse genders, ethnicities and countries.

|

| 42 |

-

If there are no placeholders, return the text as is and provide an answer.

|

|

|

|

|

|

|

|

|

|

| 43 |

input: How do I change the limit on my credit card {{credit_card_number}}?

|

| 44 |

output: How do I change the limit on my credit card 2539 3519 2345 1555?

|

|

|

|

|

|

|

|

|

|

|

|

|

| 45 |

input: {anonymized_text}

|

| 46 |

output:

|

| 47 |

"""

|

|

|

|

| 1 |

+

from collections import namedtuple

|

| 2 |

+

from typing import Optional

|

| 3 |

+

|

| 4 |

import openai

|

| 5 |

+

import logging

|

| 6 |

+

|

| 7 |

+

logger = logging.getLogger("presidio-streamlit")

|

| 8 |

+

|

| 9 |

+

OpenAIParams = namedtuple(

|

| 10 |

+

"open_ai_params",

|

| 11 |

+

["openai_key", "model", "api_base", "deployment_name", "api_version", "api_type"],

|

| 12 |

+

)

|

| 13 |

|

| 14 |

+

|

| 15 |

+

def set_openai_params(openai_params: OpenAIParams):

|

| 16 |

"""Set the OpenAI API key.

|

| 17 |

+

:param openai_params: OpenAIParams object with the following fields: key, model, api version, deployment_name,

|

| 18 |

+

The latter only relate to Azure OpenAI deployments.

|

| 19 |

"""

|

| 20 |

+

openai.api_key = openai_params.openai_key

|

| 21 |

+

openai.api_version = openai_params.api_version

|

| 22 |

+

if openai_params.api_base:

|

| 23 |

+

openai.api_base = openai_params.api_base

|

| 24 |

+

openai.api_type = openai_params.api_type

|

| 25 |

|

| 26 |

|

| 27 |

def call_completion_model(

|

| 28 |

+

prompt: str,

|

| 29 |

+

model: str = "text-davinci-003",

|

| 30 |

+

max_tokens: int = 512,

|

| 31 |

+

deployment_id: Optional[str] = None,

|

| 32 |

) -> str:

|

| 33 |

"""Creates a request for the OpenAI Completion service and returns the response.

|

| 34 |

|

| 35 |

:param prompt: The prompt for the completion model

|

| 36 |

:param model: OpenAI model name

|

| 37 |

:param max_tokens: Model's max_tokens parameter

|

| 38 |

+

:param deployment_id: Azure OpenAI deployment ID

|

| 39 |

"""

|

| 40 |

+

if deployment_id:

|

| 41 |

+

response = openai.Completion.create(

|

| 42 |

+

deployment_id=deployment_id, model=model, prompt=prompt, max_tokens=max_tokens

|

| 43 |

+

)

|

| 44 |

+

else:

|

| 45 |

+

response = openai.Completion.create(

|

| 46 |

+

model=model, prompt=prompt, max_tokens=max_tokens

|

| 47 |

+

)

|

| 48 |

|

| 49 |

return response["choices"][0].text

|

| 50 |

|

|

|

|

| 57 |

"""

|

| 58 |

|

| 59 |

prompt = f"""

|

| 60 |

+

Your role is to create synthetic text based on de-identified text with placeholders instead of Personally Identifiable Information (PII).

|

| 61 |

+

Replace the placeholders (e.g. ,<PERSON>, {{DATE}}, {{ip_address}}) with fake values.

|

| 62 |

|

| 63 |

Instructions:

|

| 64 |

|

| 65 |

+

a. Use completely random numbers, so every digit is drawn between 0 and 9.

|

| 66 |

+

b. Use realistic names that come from diverse genders, ethnicities and countries.

|

| 67 |

+

c. If there are no placeholders, return the text as is and provide an answer.

|

| 68 |

+

d. Keep the formatting as close to the original as possible.

|

| 69 |

+

e. If PII exists in the input, replace it with fake values in the output.

|

| 70 |

+

|

| 71 |

input: How do I change the limit on my credit card {{credit_card_number}}?

|

| 72 |

output: How do I change the limit on my credit card 2539 3519 2345 1555?

|

| 73 |

+

input: <PERSON> was the chief science officer at <ORGANIZATION>.

|

| 74 |

+

output: Katherine Buckjov was the chief science officer at NASA.

|

| 75 |

+

input: Cameroon lives in <LOCATION>.

|

| 76 |

+

output: Vladimir lives in Moscow.

|

| 77 |

input: {anonymized_text}

|

| 78 |

output:

|

| 79 |

"""

|

presidio_helpers.py

CHANGED

|

@@ -1,79 +1,85 @@

|

|

| 1 |

"""

|

| 2 |

Helper methods for the Presidio Streamlit app

|

| 3 |

"""

|

| 4 |

-

from typing import List, Optional

|

| 5 |

-

|

| 6 |

-

import spacy

|

| 7 |

import streamlit as st

|

| 8 |

-

from presidio_analyzer import

|

| 9 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 10 |

from presidio_anonymizer import AnonymizerEngine

|

| 11 |

from presidio_anonymizer.entities import OperatorConfig

|

| 12 |

|

| 13 |

-

from flair_recognizer import FlairRecognizer

|

| 14 |

from openai_fake_data_generator import (

|

| 15 |

-

|

| 16 |

call_completion_model,

|

| 17 |

create_prompt,

|

|

|

|

| 18 |

)

|

| 19 |

-

from

|

| 20 |

-

|

| 21 |

-

|

| 22 |

-

|

|

|

|

| 23 |

)

|

| 24 |

|

|

|

|

| 25 |

|

| 26 |

-

@st.cache_resource

|

| 27 |

-

def analyzer_engine(model_path: str):

|

| 28 |

-

"""Return AnalyzerEngine.

|

| 29 |

|

| 30 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 31 |

"StanfordAIMI/stanford-deidentifier-base",

|

| 32 |

"obi/deid_roberta_i2b2",

|

| 33 |

"en_core_web_lg"

|

|

|

|

|

|

|

| 34 |

"""

|

| 35 |

|

| 36 |

-

registry = RecognizerRegistry()

|

| 37 |

-

registry.load_predefined_recognizers()

|

| 38 |

-

|

| 39 |

# Set up NLP Engine according to the model of choice

|

| 40 |

-

if

|

| 41 |

-

|

| 42 |

-

|

| 43 |

-

|

| 44 |

-

|

| 45 |

-

|

| 46 |

-

|

| 47 |

-

|

| 48 |

-

flair_recognizer = FlairRecognizer()

|

| 49 |

-

nlp_configuration = {

|

| 50 |

-

"nlp_engine_name": "spacy",

|

| 51 |

-

"models": [{"lang_code": "en", "model_name": "en_core_web_sm"}],

|

| 52 |

-

}

|

| 53 |

-

registry.add_recognizer(flair_recognizer)

|

| 54 |

-

registry.remove_recognizer("SpacyRecognizer")

|

| 55 |

else:

|

| 56 |

-

|

| 57 |

-

spacy.cli.download("en_core_web_sm")

|

| 58 |

-

# Using a small spaCy model + a HF NER model

|

| 59 |

-

transformers_recognizer = TransformersRecognizer(model_path=model_path)

|

| 60 |

-

registry.remove_recognizer("SpacyRecognizer")

|

| 61 |

-

if model_path == "StanfordAIMI/stanford-deidentifier-base":

|

| 62 |

-

transformers_recognizer.load_transformer(**STANFORD_COFIGURATION)

|

| 63 |

-

elif model_path == "obi/deid_roberta_i2b2":

|

| 64 |

-

transformers_recognizer.load_transformer(**BERT_DEID_CONFIGURATION)

|

| 65 |

-

|

| 66 |

-

# Use small spaCy model, no need for both spacy and HF models

|

| 67 |

-

# The transformers model is used here as a recognizer, not as an NlpEngine

|

| 68 |

-

nlp_configuration = {

|

| 69 |

-

"nlp_engine_name": "spacy",

|

| 70 |

-

"models": [{"lang_code": "en", "model_name": "en_core_web_sm"}],

|

| 71 |

-

}

|

| 72 |

-

|

| 73 |

-

registry.add_recognizer(transformers_recognizer)

|

| 74 |

|

| 75 |

-

nlp_engine = NlpEngineProvider(nlp_configuration=nlp_configuration).create_engine()

|

| 76 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 77 |

analyzer = AnalyzerEngine(nlp_engine=nlp_engine, registry=registry)

|

| 78 |

return analyzer

|

| 79 |

|

|

@@ -85,17 +91,36 @@ def anonymizer_engine():

|

|

| 85 |

|

| 86 |

|

| 87 |

@st.cache_data

|

| 88 |

-

def get_supported_entities(

|

|

|

|

|

|

|

| 89 |

"""Return supported entities from the Analyzer Engine."""

|

| 90 |

-

return analyzer_engine(

|

|

|

|

|

|

|

| 91 |

|

| 92 |

|

| 93 |

@st.cache_data

|

| 94 |

-

def analyze(

|

|

|

|

|

|

|

| 95 |

"""Analyze input using Analyzer engine and input arguments (kwargs)."""

|

| 96 |

if "entities" not in kwargs or "All" in kwargs["entities"]:

|

| 97 |

kwargs["entities"] = None

|

| 98 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 99 |

|

| 100 |

|

| 101 |

def anonymize(

|

|

@@ -184,20 +209,52 @@ def annotate(text: str, analyze_results: List[RecognizerResult]):

|

|

| 184 |

def create_fake_data(

|

| 185 |

text: str,

|

| 186 |

analyze_results: List[RecognizerResult],

|

| 187 |

-

|

| 188 |

-

openai_model_name: str,

|

| 189 |

):

|

| 190 |

"""Creates a synthetic version of the text using OpenAI APIs"""

|

| 191 |

-

if not openai_key:

|

| 192 |

return "Please provide your OpenAI key"

|

| 193 |

results = anonymize(text=text, operator="replace", analyze_results=analyze_results)

|

| 194 |

-

|

| 195 |

prompt = create_prompt(results.text)

|

| 196 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 197 |

return fake

|

| 198 |

|

| 199 |

|

| 200 |

@st.cache_data

|

| 201 |

-

def call_openai_api(

|

| 202 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

| 203 |

return fake_data

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

"""

|

| 2 |

Helper methods for the Presidio Streamlit app

|

| 3 |

"""

|

| 4 |

+

from typing import List, Optional, Tuple

|

| 5 |

+

import logging

|

|

|

|

| 6 |

import streamlit as st

|

| 7 |

+

from presidio_analyzer import (

|

| 8 |

+

AnalyzerEngine,

|

| 9 |

+

RecognizerResult,

|

| 10 |

+

RecognizerRegistry,

|

| 11 |

+

PatternRecognizer,

|

| 12 |

+

Pattern,

|

| 13 |

+

)

|

| 14 |

+

from presidio_analyzer.nlp_engine import NlpEngine

|

| 15 |

from presidio_anonymizer import AnonymizerEngine

|

| 16 |

from presidio_anonymizer.entities import OperatorConfig

|

| 17 |

|

|

|

|

| 18 |

from openai_fake_data_generator import (

|

| 19 |

+

set_openai_params,

|

| 20 |

call_completion_model,

|

| 21 |

create_prompt,

|

| 22 |

+

OpenAIParams,

|

| 23 |

)

|

| 24 |

+

from presidio_nlp_engine_config import (

|

| 25 |

+

create_nlp_engine_with_spacy,

|

| 26 |

+

create_nlp_engine_with_flair,

|

| 27 |

+

create_nlp_engine_with_transformers,

|

| 28 |

+

create_nlp_engine_with_azure_text_analytics,

|

| 29 |

)

|

| 30 |

|

| 31 |

+

logger = logging.getLogger("presidio-streamlit")

|

| 32 |

|

|

|

|

|

|

|

|

|

|

| 33 |

|

| 34 |

+

@st.cache_resource

|

| 35 |

+

def nlp_engine_and_registry(

|

| 36 |

+

model_family: str,

|

| 37 |

+

model_path: str,

|

| 38 |

+

ta_key: Optional[str] = None,

|

| 39 |

+

ta_endpoint: Optional[str] = None,

|

| 40 |

+

) -> Tuple[NlpEngine, RecognizerRegistry]:

|

| 41 |

+

"""Create the NLP Engine instance based on the requested model.

|

| 42 |

+

:param model_family: Which model package to use for NER.

|

| 43 |

+

:param model_path: Which model to use for NER. E.g.,

|

| 44 |

"StanfordAIMI/stanford-deidentifier-base",

|

| 45 |

"obi/deid_roberta_i2b2",

|

| 46 |

"en_core_web_lg"

|

| 47 |

+

:param ta_key: Key to the Text Analytics endpoint (only if model_path = "Azure Text Analytics")

|

| 48 |

+

:param ta_endpoint: Endpoint of the Text Analytics instance (only if model_path = "Azure Text Analytics")

|

| 49 |

"""

|

| 50 |

|

|

|

|

|

|

|

|

|

|

| 51 |

# Set up NLP Engine according to the model of choice

|

| 52 |

+

if "spaCy" in model_family:

|

| 53 |

+

return create_nlp_engine_with_spacy(model_path)

|

| 54 |

+

elif "flair" in model_family:

|

| 55 |

+

return create_nlp_engine_with_flair(model_path)

|

| 56 |

+

elif "HuggingFace" in model_family:

|

| 57 |

+

return create_nlp_engine_with_transformers(model_path)

|

| 58 |

+

elif "Azure Text Analytics" in model_family:

|

| 59 |

+

return create_nlp_engine_with_azure_text_analytics(ta_key, ta_endpoint)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 60 |

else:

|

| 61 |

+

raise ValueError(f"Model family {model_family} not supported")

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 62 |

|

|

|

|

| 63 |

|

| 64 |

+

@st.cache_resource

|

| 65 |

+

def analyzer_engine(

|

| 66 |

+

model_family: str,

|

| 67 |

+

model_path: str,

|

| 68 |

+

ta_key: Optional[str] = None,

|

| 69 |

+

ta_endpoint: Optional[str] = None,

|

| 70 |

+

) -> AnalyzerEngine:

|

| 71 |

+

"""Create the NLP Engine instance based on the requested model.

|

| 72 |

+

:param model_family: Which model package to use for NER.

|

| 73 |

+

:param model_path: Which model to use for NER:

|

| 74 |

+

"StanfordAIMI/stanford-deidentifier-base",

|

| 75 |

+

"obi/deid_roberta_i2b2",

|

| 76 |

+

"en_core_web_lg"

|

| 77 |

+

:param ta_key: Key to the Text Analytics endpoint (only if model_path = "Azure Text Analytics")

|

| 78 |

+

:param ta_endpoint: Endpoint of the Text Analytics instance (only if model_path = "Azure Text Analytics")

|

| 79 |

+

"""

|

| 80 |

+

nlp_engine, registry = nlp_engine_and_registry(

|

| 81 |

+

model_family, model_path, ta_key, ta_endpoint

|

| 82 |

+

)

|

| 83 |

analyzer = AnalyzerEngine(nlp_engine=nlp_engine, registry=registry)

|

| 84 |

return analyzer

|

| 85 |

|

|

|

|

| 91 |

|

| 92 |

|

| 93 |

@st.cache_data

|

| 94 |

+

def get_supported_entities(

|

| 95 |

+

model_family: str, model_path: str, ta_key: str, ta_endpoint: str

|

| 96 |

+

):

|

| 97 |

"""Return supported entities from the Analyzer Engine."""

|

| 98 |

+

return analyzer_engine(

|

| 99 |

+

model_family, model_path, ta_key, ta_endpoint

|

| 100 |

+

).get_supported_entities() + ["GENERIC_PII"]

|

| 101 |

|

| 102 |

|

| 103 |

@st.cache_data

|

| 104 |

+

def analyze(

|

| 105 |

+

model_family: str, model_path: str, ta_key: str, ta_endpoint: str, **kwargs

|

| 106 |

+

):

|

| 107 |

"""Analyze input using Analyzer engine and input arguments (kwargs)."""

|

| 108 |

if "entities" not in kwargs or "All" in kwargs["entities"]:

|

| 109 |

kwargs["entities"] = None

|

| 110 |

+

|

| 111 |

+

if "deny_list" in kwargs and kwargs["deny_list"] is not None:

|

| 112 |

+

ad_hoc_recognizer = create_ad_hoc_deny_list_recognizer(kwargs["deny_list"])

|

| 113 |

+

kwargs["ad_hoc_recognizers"] = [ad_hoc_recognizer] if ad_hoc_recognizer else []

|

| 114 |

+

del kwargs["deny_list"]

|

| 115 |

+

|

| 116 |

+

if "regex_params" in kwargs and len(kwargs["regex_params"]) > 0:

|

| 117 |

+

ad_hoc_recognizer = create_ad_hoc_regex_recognizer(*kwargs["regex_params"])

|

| 118 |

+

kwargs["ad_hoc_recognizers"] = [ad_hoc_recognizer] if ad_hoc_recognizer else []

|

| 119 |

+

del kwargs["regex_params"]

|

| 120 |

+

|

| 121 |

+

return analyzer_engine(model_family, model_path, ta_key, ta_endpoint).analyze(

|

| 122 |

+

**kwargs

|

| 123 |

+

)

|

| 124 |

|

| 125 |

|

| 126 |

def anonymize(

|

|

|

|

| 209 |

def create_fake_data(

|

| 210 |

text: str,

|

| 211 |

analyze_results: List[RecognizerResult],

|

| 212 |

+

openai_params: OpenAIParams,

|

|

|

|

| 213 |

):

|

| 214 |

"""Creates a synthetic version of the text using OpenAI APIs"""

|

| 215 |

+

if not openai_params.openai_key:

|

| 216 |

return "Please provide your OpenAI key"

|

| 217 |

results = anonymize(text=text, operator="replace", analyze_results=analyze_results)

|

| 218 |

+

set_openai_params(openai_params)

|

| 219 |

prompt = create_prompt(results.text)

|

| 220 |

+

print(f"Prompt: {prompt}")

|

| 221 |

+

fake = call_openai_api(

|

| 222 |

+

prompt=prompt,

|

| 223 |

+

openai_model_name=openai_params.model,

|

| 224 |

+

openai_deployment_name=openai_params.deployment_name,

|

| 225 |

+

)

|

| 226 |

return fake

|

| 227 |

|

| 228 |

|

| 229 |

@st.cache_data

|

| 230 |

+

def call_openai_api(

|

| 231 |

+

prompt: str, openai_model_name: str, openai_deployment_name: Optional[str] = None

|

| 232 |

+

) -> str:

|

| 233 |

+

fake_data = call_completion_model(

|

| 234 |

+

prompt, model=openai_model_name, deployment_id=openai_deployment_name

|

| 235 |

+

)

|

| 236 |

return fake_data

|

| 237 |

+

|

| 238 |

+

|

| 239 |

+

def create_ad_hoc_deny_list_recognizer(

|

| 240 |

+

deny_list=Optional[List[str]],

|

| 241 |

+

) -> Optional[PatternRecognizer]:

|

| 242 |

+

if not deny_list:

|

| 243 |

+

return None

|

| 244 |

+

|

| 245 |

+

deny_list_recognizer = PatternRecognizer(

|

| 246 |

+

supported_entity="GENERIC_PII", deny_list=deny_list

|

| 247 |

+

)

|

| 248 |

+

return deny_list_recognizer

|

| 249 |

+

|

| 250 |

+

|

| 251 |

+

def create_ad_hoc_regex_recognizer(

|

| 252 |

+

regex: str, entity_type: str, score: float, context: Optional[List[str]] = None

|

| 253 |

+

) -> Optional[PatternRecognizer]:

|

| 254 |

+

if not regex:

|

| 255 |

+

return None

|

| 256 |

+

pattern = Pattern(name="Regex pattern", regex=regex, score=score)

|

| 257 |

+

regex_recognizer = PatternRecognizer(

|

| 258 |

+

supported_entity=entity_type, patterns=[pattern], context=context

|

| 259 |

+

)

|

| 260 |

+

return regex_recognizer

|

presidio_nlp_engine_config.py

ADDED

|

@@ -0,0 +1,137 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

from typing import Tuple

|

| 2 |

+

import logging

|

| 3 |

+

import spacy

|

| 4 |

+

from presidio_analyzer import RecognizerRegistry

|

| 5 |

+

from presidio_analyzer.nlp_engine import NlpEngine, NlpEngineProvider

|

| 6 |

+

|

| 7 |

+

logger = logging.getLogger("presidio-streamlit")

|

| 8 |

+

|

| 9 |

+

|

| 10 |

+

def create_nlp_engine_with_spacy(

|

| 11 |

+

model_path: str,

|

| 12 |

+

) -> Tuple[NlpEngine, RecognizerRegistry]:

|

| 13 |

+

"""

|

| 14 |

+

Instantiate an NlpEngine with a spaCy model

|

| 15 |

+

:param model_path: spaCy model path.

|

| 16 |

+

"""

|

| 17 |

+

registry = RecognizerRegistry()

|

| 18 |

+

registry.load_predefined_recognizers()

|

| 19 |

+

|

| 20 |

+

if not spacy.util.is_package(model_path):

|

| 21 |

+

spacy.cli.download(model_path)

|

| 22 |

+

|

| 23 |

+

nlp_configuration = {

|

| 24 |

+

"nlp_engine_name": "spacy",

|

| 25 |

+

"models": [{"lang_code": "en", "model_name": model_path}],

|

| 26 |

+

}

|

| 27 |

+

|

| 28 |

+

nlp_engine = NlpEngineProvider(nlp_configuration=nlp_configuration).create_engine()

|

| 29 |

+

|

| 30 |

+

return nlp_engine, registry

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

def create_nlp_engine_with_transformers(

|

| 34 |

+

model_path: str,

|

| 35 |

+

) -> Tuple[NlpEngine, RecognizerRegistry]:

|

| 36 |

+

"""

|

| 37 |

+

Instantiate an NlpEngine with a TransformersRecognizer and a small spaCy model.

|

| 38 |

+

The TransformersRecognizer would return results from Transformers models, the spaCy model

|

| 39 |

+

would return NlpArtifacts such as POS and lemmas.

|

| 40 |

+

:param model_path: HuggingFace model path.

|

| 41 |

+

"""

|

| 42 |

+

|

| 43 |

+

from transformers_rec import (

|

| 44 |

+

STANFORD_COFIGURATION,

|

| 45 |

+

BERT_DEID_CONFIGURATION,

|

| 46 |

+

TransformersRecognizer,

|

| 47 |

+

)

|

| 48 |

+

|

| 49 |

+

registry = RecognizerRegistry()

|

| 50 |

+

registry.load_predefined_recognizers()

|

| 51 |

+

|

| 52 |

+

if not spacy.util.is_package("en_core_web_sm"):

|

| 53 |

+

spacy.cli.download("en_core_web_sm")

|

| 54 |

+

# Using a small spaCy model + a HF NER model

|

| 55 |

+

transformers_recognizer = TransformersRecognizer(model_path=model_path)

|

| 56 |

+

|

| 57 |

+

if model_path == "StanfordAIMI/stanford-deidentifier-base":

|

| 58 |

+

transformers_recognizer.load_transformer(**STANFORD_COFIGURATION)

|

| 59 |

+

elif model_path == "obi/deid_roberta_i2b2":

|

| 60 |

+

transformers_recognizer.load_transformer(**BERT_DEID_CONFIGURATION)

|

| 61 |

+

else:

|

| 62 |

+

print(f"Warning: Model has no configuration, loading default.")

|

| 63 |

+

transformers_recognizer.load_transformer(**BERT_DEID_CONFIGURATION)

|

| 64 |

+

|

| 65 |

+

# Use small spaCy model, no need for both spacy and HF models

|

| 66 |

+

# The transformers model is used here as a recognizer, not as an NlpEngine

|

| 67 |

+

nlp_configuration = {

|

| 68 |

+

"nlp_engine_name": "spacy",

|

| 69 |

+

"models": [{"lang_code": "en", "model_name": "en_core_web_sm"}],

|

| 70 |

+

}

|

| 71 |

+

|

| 72 |

+

registry.add_recognizer(transformers_recognizer)

|

| 73 |

+

registry.remove_recognizer("SpacyRecognizer")

|

| 74 |

+

|

| 75 |

+

nlp_engine = NlpEngineProvider(nlp_configuration=nlp_configuration).create_engine()

|

| 76 |

+

|

| 77 |

+

return nlp_engine, registry

|

| 78 |

+

|

| 79 |

+

|

| 80 |

+

def create_nlp_engine_with_flair(

|

| 81 |

+

model_path: str,

|

| 82 |

+

) -> Tuple[NlpEngine, RecognizerRegistry]:

|

| 83 |

+

"""

|

| 84 |

+

Instantiate an NlpEngine with a FlairRecognizer and a small spaCy model.

|

| 85 |

+

The FlairRecognizer would return results from Flair models, the spaCy model

|

| 86 |

+

would return NlpArtifacts such as POS and lemmas.

|

| 87 |

+

:param model_path: Flair model path.

|

| 88 |

+

"""

|

| 89 |

+

from flair_recognizer import FlairRecognizer

|

| 90 |

+

|

| 91 |

+

registry = RecognizerRegistry()

|

| 92 |

+

registry.load_predefined_recognizers()

|

| 93 |

+

|

| 94 |

+

if not spacy.util.is_package("en_core_web_sm"):

|

| 95 |

+

spacy.cli.download("en_core_web_sm")

|

| 96 |

+

# Using a small spaCy model + a Flair NER model

|

| 97 |

+

flair_recognizer = FlairRecognizer(model_path=model_path)

|

| 98 |

+

nlp_configuration = {

|

| 99 |

+

"nlp_engine_name": "spacy",

|

| 100 |

+

"models": [{"lang_code": "en", "model_name": "en_core_web_sm"}],

|

| 101 |

+

}

|

| 102 |

+

registry.add_recognizer(flair_recognizer)

|

| 103 |

+

registry.remove_recognizer("SpacyRecognizer")

|

| 104 |

+

|

| 105 |

+

nlp_engine = NlpEngineProvider(nlp_configuration=nlp_configuration).create_engine()

|

| 106 |

+

|

| 107 |

+

return nlp_engine, registry

|

| 108 |

+

|

| 109 |

+

|

| 110 |

+

def create_nlp_engine_with_azure_text_analytics(ta_key: str, ta_endpoint: str):

|

| 111 |

+

"""

|

| 112 |

+

Instantiate an NlpEngine with a TextAnalyticsWrapper and a small spaCy model.

|

| 113 |

+

The TextAnalyticsWrapper would return results from calling Azure Text Analytics PII, the spaCy model

|

| 114 |

+

would return NlpArtifacts such as POS and lemmas.

|

| 115 |

+

:param ta_key: Azure Text Analytics key.

|

| 116 |

+

:param ta_endpoint: Azure Text Analytics endpoint.

|

| 117 |

+

"""

|

| 118 |

+

from text_analytics_wrapper import TextAnalyticsWrapper

|

| 119 |

+

|

| 120 |

+

if not ta_key or not ta_endpoint:

|

| 121 |

+

raise RuntimeError("Please fill in the Text Analytics endpoint details")

|

| 122 |

+

|

| 123 |

+

registry = RecognizerRegistry()

|

| 124 |

+

registry.load_predefined_recognizers()

|

| 125 |

+

|

| 126 |

+

ta_recognizer = TextAnalyticsWrapper(ta_endpoint=ta_endpoint, ta_key=ta_key)

|

| 127 |

+

nlp_configuration = {

|

| 128 |

+

"nlp_engine_name": "spacy",

|

| 129 |

+

"models": [{"lang_code": "en", "model_name": "en_core_web_sm"}],

|

| 130 |

+

}

|

| 131 |

+

|

| 132 |

+

nlp_engine = NlpEngineProvider(nlp_configuration=nlp_configuration).create_engine()

|

| 133 |

+

|

| 134 |

+

registry.add_recognizer(ta_recognizer)

|

| 135 |

+

registry.remove_recognizer("SpacyRecognizer")

|

| 136 |

+

|

| 137 |

+

return nlp_engine, registry

|

presidio_streamlit.py

CHANGED

|

@@ -1,13 +1,16 @@

|

|

| 1 |

"""Streamlit app for Presidio."""

|

|

|

|

| 2 |

import os

|

| 3 |

-

|

| 4 |

|

|

|

|

| 5 |

import pandas as pd

|

| 6 |

import streamlit as st

|

| 7 |

import streamlit.components.v1 as components

|

| 8 |

-

|

| 9 |

from annotated_text import annotated_text

|

|

|

|

| 10 |

|

|

|

|

| 11 |

from presidio_helpers import (

|

| 12 |

get_supported_entities,

|

| 13 |

analyze,

|

|

@@ -17,45 +20,86 @@ from presidio_helpers import (

|

|

| 17 |

analyzer_engine,

|

| 18 |

)

|

| 19 |

|

| 20 |

-

st.set_page_config(

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 21 |

|

| 22 |

# Sidebar

|

| 23 |

st.sidebar.header(

|

| 24 |

"""

|

| 25 |

-

PII De-Identification with Microsoft Presidio

|

| 26 |

"""

|

| 27 |

)

|

| 28 |

|

| 29 |

-

st.sidebar.info(

|

| 30 |

-

"Presidio is an open source customizable framework for PII detection and de-identification\n"

|

| 31 |

-

"[Code](https://aka.ms/presidio) | "

|

| 32 |

-

"[Tutorial](https://microsoft.github.io/presidio/tutorial/) | "

|

| 33 |

-

"[Installation](https://microsoft.github.io/presidio/installation/) | "

|

| 34 |

-

"[FAQ](https://microsoft.github.io/presidio/faq/)",

|

| 35 |

-

icon="ℹ️",

|

| 36 |

-

)

|

| 37 |

|

| 38 |

-

|

| 39 |

-

|

| 40 |

-

|

| 41 |

-

|

| 42 |

-

|

|

|

|

| 43 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 44 |

st_model = st.sidebar.selectbox(

|

| 45 |

-

"NER model

|

| 46 |

-

|

| 47 |

-

|

| 48 |

-

|

| 49 |

-

"flair/ner-english-large",

|

| 50 |

-

"en_core_web_lg",

|

| 51 |

-

],

|

| 52 |

-

index=1,

|

| 53 |

-

help="""

|

| 54 |

-

Select which Named Entity Recognition (NER) model to use for PII detection, in parallel to rule-based recognizers.

|

| 55 |

-

Presidio supports multiple NER packages off-the-shelf, such as spaCy, Huggingface, Stanza and Flair.

|

| 56 |

-

""",

|

| 57 |

)

|

| 58 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 59 |

|

| 60 |

st_operator = st.sidebar.selectbox(

|

| 61 |

"De-identification approach",

|

|

@@ -75,8 +119,11 @@ st_operator = st.sidebar.selectbox(

|

|

| 75 |

st_mask_char = "*"

|

| 76 |

st_number_of_chars = 15

|

| 77 |

st_encrypt_key = "WmZq4t7w!z%C&F)J"

|

| 78 |

-

|

| 79 |

-

|

|

|

|

|

|

|

|

|

|

| 80 |

if st_operator == "mask":

|

| 81 |

st_number_of_chars = st.sidebar.number_input(

|

| 82 |

"number of chars", value=st_number_of_chars, min_value=0, max_value=100

|

|

@@ -87,6 +134,22 @@ if st_operator == "mask":

|

|

| 87 |

elif st_operator == "encrypt":

|

| 88 |

st_encrypt_key = st.sidebar.text_input("AES key", value=st_encrypt_key)

|

| 89 |

elif st_operator == "synthesize":

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 90 |

st_openai_key = st.sidebar.text_input(

|

| 91 |

"OPENAI_KEY",

|

| 92 |

value=os.getenv("OPENAI_KEY", default=""),

|

|

@@ -95,36 +158,87 @@ elif st_operator == "synthesize":

|

|

| 95 |

)

|

| 96 |

st_openai_model = st.sidebar.text_input(

|

| 97 |

"OpenAI model for text synthesis",

|

| 98 |

-

value=

|

| 99 |

help="See more here: https://platform.openai.com/docs/models/",

|

| 100 |

)

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 101 |

st_threshold = st.sidebar.slider(

|

| 102 |

label="Acceptance threshold",

|

| 103 |

min_value=0.0,

|

| 104 |

max_value=1.0,

|

| 105 |

value=0.35,

|

| 106 |

-

help="Define the threshold for accepting a detection as PII.",

|

| 107 |

)

|

| 108 |

|

| 109 |

st_return_decision_process = st.sidebar.checkbox(

|

| 110 |

"Add analysis explanations to findings",

|

| 111 |

value=False,

|

| 112 |

help="Add the decision process to the output table. "

|

| 113 |

-

|

| 114 |

)

|

| 115 |

|

| 116 |

-

|

| 117 |

-

|

| 118 |

-

|

| 119 |

-

|

| 120 |

-

help="Limit the list of PII entities detected. "

|

| 121 |

-

"This list is dynamic and based on the NER model and registered recognizers. "

|

| 122 |

-

"More information can be found here: https://microsoft.github.io/presidio/analyzer/adding_recognizers/",

|

| 123 |

)

|

| 124 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 125 |

# Main panel

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 126 |

analyzer_load_state = st.info("Starting Presidio analyzer...")

|

| 127 |

-

|

| 128 |

analyzer_load_state.empty()

|

| 129 |

|

| 130 |

# Read default text

|

|

@@ -135,92 +249,103 @@ with open("demo_text.txt") as f:

|

|

| 135 |

col1, col2 = st.columns(2)

|

| 136 |

|

| 137 |

# Before:

|

| 138 |

-

col1.subheader("Input

|

| 139 |

st_text = col1.text_area(

|

| 140 |

-

label="Enter text",

|

| 141 |

-

value="".join(demo_text),

|

| 142 |

-

height=400,

|

| 143 |

)

|

| 144 |

|

| 145 |

-

|

| 146 |

-

|

| 147 |

-

|

| 148 |

-

|

| 149 |

-

|

| 150 |

-

|

| 151 |

-

|

| 152 |

-

|

| 153 |

-

|

| 154 |

-

|

| 155 |

-

if st_operator not in ("highlight", "synthesize"):

|

| 156 |

-

with col2:

|

| 157 |

-

st.subheader(f"Output")

|

| 158 |

-

st_anonymize_results = anonymize(

|

| 159 |

-

text=st_text,

|

| 160 |

-

operator=st_operator,

|

| 161 |

-

mask_char=st_mask_char,

|

| 162 |

-

number_of_chars=st_number_of_chars,

|

| 163 |

-

encrypt_key=st_encrypt_key,

|

| 164 |

-

analyze_results=st_analyze_results,

|

| 165 |

-

)

|

| 166 |

-

st.text_area(label="De-identified", value=st_anonymize_results.text, height=400)

|

| 167 |

-

elif st_operator == "synthesize":

|

| 168 |

-

with col2:

|

| 169 |

-

st.subheader(f"OpenAI Generated output")

|

| 170 |

-

fake_data = create_fake_data(

|

| 171 |

-

st_text,

|

| 172 |

-

st_analyze_results,

|

| 173 |

-

openai_key=st_openai_key,

|

| 174 |

-

openai_model_name=st_openai_model,

|

| 175 |

-

)

|

| 176 |

-

st.text_area(label="Synthetic data", value=fake_data, height=400)

|

| 177 |

-

else:

|

| 178 |

-

st.subheader("Highlighted")

|

| 179 |

-

annotated_tokens = annotate(

|

| 180 |

-

text=st_text,

|

| 181 |

-

analyze_results=st_analyze_results

|

| 182 |

)

|

| 183 |

-

# annotated_tokens

|

| 184 |

-

annotated_text(*annotated_tokens)

|

| 185 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 186 |

|

| 187 |

-

|

| 188 |

-

|

| 189 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 190 |

|

| 191 |

-

|

| 192 |

-

|

| 193 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 194 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 195 |

|

| 196 |

-

|

| 197 |

-

|

| 198 |

-

|

| 199 |

-

|

| 200 |

-

|

| 201 |

-

|

| 202 |

-

|

| 203 |

-

|

| 204 |

-

|

| 205 |

-

{

|

| 206 |

-

"entity_type": "Entity type",

|

| 207 |

-

"text": "Text",

|

| 208 |

-

"start": "Start",

|

| 209 |

-

"end": "End",

|

| 210 |

-

"score": "Confidence",

|

| 211 |

-

},

|

| 212 |

-

axis=1,

|

| 213 |

-

)

|

| 214 |

-

df_subset["Text"] = [st_text[res.start: res.end] for res in st_analyze_results]

|

| 215 |

-

if st_return_decision_process:

|

| 216 |

-

analysis_explanation_df = pd.DataFrame.from_records(

|

| 217 |

-

[r.analysis_explanation.to_dict() for r in st_analyze_results]

|

| 218 |

)

|

| 219 |

-

df_subset =

|

| 220 |

-

|

| 221 |

-

|

| 222 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 223 |

|

|

|

|

|

|

|

|

|

|

|

|

|

| 224 |

|

| 225 |

components.html(

|

| 226 |

"""

|

|

|

|

| 1 |

"""Streamlit app for Presidio."""

|

| 2 |

+

import logging

|

| 3 |

import os

|

| 4 |

+

import traceback

|

| 5 |

|

| 6 |

+

import dotenv

|

| 7 |

import pandas as pd

|

| 8 |

import streamlit as st

|

| 9 |

import streamlit.components.v1 as components

|

|

|

|

| 10 |

from annotated_text import annotated_text

|

| 11 |

+

from streamlit_tags import st_tags

|

| 12 |

|

| 13 |

+

from openai_fake_data_generator import OpenAIParams

|

| 14 |

from presidio_helpers import (

|

| 15 |

get_supported_entities,

|

| 16 |

analyze,

|

|

|

|

| 20 |

analyzer_engine,

|

| 21 |

)

|

| 22 |

|

| 23 |

+

st.set_page_config(

|

| 24 |

+

page_title="Presidio demo",

|

| 25 |

+

layout="wide",

|

| 26 |

+

initial_sidebar_state="expanded",

|

| 27 |

+

menu_items={

|

| 28 |

+

"About": "https://microsoft.github.io/presidio/",

|

| 29 |

+

},

|

| 30 |

+

)

|

| 31 |

+

|

| 32 |

+

dotenv.load_dotenv()

|

| 33 |

+

logger = logging.getLogger("presidio-streamlit")

|

| 34 |

+

|

| 35 |

+

|

| 36 |

+

allow_other_models = os.getenv("ALLOW_OTHER_MODELS", False)

|

| 37 |

+

|

| 38 |

|

| 39 |

# Sidebar

|

| 40 |

st.sidebar.header(

|

| 41 |

"""

|

| 42 |

+

PII De-Identification with [Microsoft Presidio](https://microsoft.github.io/presidio/)

|

| 43 |

"""

|

| 44 |

)

|

| 45 |

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 46 |

|

| 47 |

+

model_help_text = """

|

| 48 |

+

Select which Named Entity Recognition (NER) model to use for PII detection, in parallel to rule-based recognizers.

|

| 49 |

+

Presidio supports multiple NER packages off-the-shelf, such as spaCy, Huggingface, Stanza and Flair,

|

| 50 |

+

as well as service such as Azure Text Analytics PII.

|

| 51 |

+

"""

|

| 52 |

+

st_ta_key = st_ta_endpoint = ""

|

| 53 |

|

| 54 |

+

model_list = [

|

| 55 |

+

"spaCy/en_core_web_lg",

|

| 56 |

+

"flair/ner-english-large",

|

| 57 |

+

"HuggingFace/obi/deid_roberta_i2b2",

|

| 58 |

+

"HuggingFace/StanfordAIMI/stanford-deidentifier-base",

|

| 59 |

+

"Azure Text Analytics PII",

|

| 60 |

+

"Other",

|

| 61 |

+

]

|

| 62 |

+

if not allow_other_models:

|

| 63 |

+

model_list.pop()

|

| 64 |

+

# Select model

|

| 65 |

st_model = st.sidebar.selectbox(

|

| 66 |

+

"NER model package",

|

| 67 |

+

model_list,

|

| 68 |

+

index=2,

|

| 69 |

+

help=model_help_text,

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 70 |

)

|

| 71 |

+

|

| 72 |

+

# Extract model package.

|

| 73 |

+

st_model_package = st_model.split("/")[0]

|

| 74 |

+

|

| 75 |

+

# Remove package prefix (if needed)

|

| 76 |

+

st_model = (

|

| 77 |

+

st_model

|

| 78 |

+

if st_model_package not in ("spaCy", "HuggingFace")

|

| 79 |

+

else "/".join(st_model.split("/")[1:])

|

| 80 |

+

)

|

| 81 |

+

|

| 82 |

+

if st_model == "Other":

|

| 83 |

+

st_model_package = st.sidebar.selectbox(

|

| 84 |

+

"NER model OSS package", options=["spaCy", "Flair", "HuggingFace"]

|

| 85 |

+

)

|

| 86 |

+

st_model = st.sidebar.text_input(f"NER model name", value="")

|

| 87 |

+

|

| 88 |

+

if st_model == "Azure Text Analytics PII":

|

| 89 |

+

st_ta_key = st.sidebar.text_input(

|

| 90 |

+

f"Text Analytics key", value=os.getenv("TA_KEY", ""), type="password"

|

| 91 |

+

)

|

| 92 |

+

st_ta_endpoint = st.sidebar.text_input(

|

| 93 |

+

f"Text Analytics endpoint",

|

| 94 |

+

value=os.getenv("TA_ENDPOINT", default=""),

|

| 95 |

+

help="For more info: https://learn.microsoft.com/en-us/azure/cognitive-services/language-service/personally-identifiable-information/overview", # noqa: E501

|

| 96 |

+

)

|

| 97 |

+

|

| 98 |

+

|

| 99 |

+

st.sidebar.warning("Note: Models might take some time to download. ")

|

| 100 |

+

|

| 101 |

+

analyzer_params = (st_model_package, st_model, st_ta_key, st_ta_endpoint)

|

| 102 |

+

logger.debug(f"analyzer_params: {analyzer_params}")

|

| 103 |

|

| 104 |

st_operator = st.sidebar.selectbox(

|

| 105 |

"De-identification approach",

|

|

|

|

| 119 |

st_mask_char = "*"

|

| 120 |

st_number_of_chars = 15

|

| 121 |

st_encrypt_key = "WmZq4t7w!z%C&F)J"

|

| 122 |

+

|

| 123 |

+

open_ai_params = None

|

| 124 |

+

|

| 125 |

+

logger.debug(f"st_operator: {st_operator}")

|

| 126 |

+

|

| 127 |

if st_operator == "mask":

|

| 128 |

st_number_of_chars = st.sidebar.number_input(

|

| 129 |

"number of chars", value=st_number_of_chars, min_value=0, max_value=100

|

|

|

|

| 134 |

elif st_operator == "encrypt":

|

| 135 |

st_encrypt_key = st.sidebar.text_input("AES key", value=st_encrypt_key)

|

| 136 |

elif st_operator == "synthesize":

|

| 137 |

+

if os.getenv("OPENAI_TYPE", default="openai") == "Azure":

|

| 138 |

+

openai_api_type = "azure"

|

| 139 |

+

st_openai_api_base = st.sidebar.text_input(

|

| 140 |

+

"Azure OpenAI base URL",

|

| 141 |

+

value=os.getenv("AZURE_OPENAI_ENDPOINT", default=""),

|

| 142 |

+

)

|

| 143 |

+

st_deployment_name = st.sidebar.text_input(

|

| 144 |

+

"Deployment name", value=os.getenv("AZURE_OPENAI_DEPLOYMENT", default="")

|

| 145 |

+

)

|

| 146 |

+

st_openai_version = st.sidebar.text_input(

|

| 147 |

+

"OpenAI version",

|

| 148 |

+

value=os.getenv("OPENAI_API_VERSION", default="2023-05-15"),

|

| 149 |

+

)

|

| 150 |

+

else:

|

| 151 |

+

st_openai_version = openai_api_type = st_openai_api_base = None

|

| 152 |

+

st_deployment_name = ""

|

| 153 |

st_openai_key = st.sidebar.text_input(

|

| 154 |

"OPENAI_KEY",

|

| 155 |

value=os.getenv("OPENAI_KEY", default=""),

|

|

|

|

| 158 |

)

|

| 159 |

st_openai_model = st.sidebar.text_input(

|

| 160 |

"OpenAI model for text synthesis",

|

| 161 |

+

value=os.getenv("OPENAI_MODEL", default="text-davinci-003"),

|

| 162 |

help="See more here: https://platform.openai.com/docs/models/",

|

| 163 |

)

|

| 164 |

+

|

| 165 |

+

open_ai_params = OpenAIParams(

|

| 166 |

+

openai_key=st_openai_key,

|

| 167 |

+

model=st_openai_model,

|

| 168 |

+

api_base=st_openai_api_base,

|

| 169 |

+

deployment_name=st_deployment_name,

|

| 170 |

+

api_version=st_openai_version,

|

| 171 |

+

api_type=openai_api_type,

|

| 172 |

+

)

|

| 173 |

+

|

| 174 |

st_threshold = st.sidebar.slider(

|

| 175 |

label="Acceptance threshold",

|

| 176 |

min_value=0.0,

|

| 177 |

max_value=1.0,

|

| 178 |

value=0.35,

|

| 179 |

+

help="Define the threshold for accepting a detection as PII. See more here: ",

|

| 180 |

)

|

| 181 |

|

| 182 |

st_return_decision_process = st.sidebar.checkbox(

|

| 183 |

"Add analysis explanations to findings",

|

| 184 |

value=False,

|

| 185 |

help="Add the decision process to the output table. "

|

| 186 |

+

"More information can be found here: https://microsoft.github.io/presidio/analyzer/decision_process/",

|

| 187 |

)

|

| 188 |

|

| 189 |

+

# Allow and deny lists

|

| 190 |

+

st_deny_allow_expander = st.sidebar.expander(

|

| 191 |

+

"Allowlists and denylists",

|

| 192 |

+

expanded=False,

|

|

|

|

|

|

|

|

|

|

| 193 |

)

|

| 194 |

|

| 195 |

+

with st_deny_allow_expander:

|

| 196 |

+

st_allow_list = st_tags(

|

| 197 |

+

label="Add words to the allowlist", text="Enter word and press enter."

|

| 198 |

+

)

|

| 199 |

+

st.caption(

|

| 200 |

+

"Allowlists contain words that are not considered PII, but are detected as such."

|

| 201 |

+

)

|

| 202 |

+

|

| 203 |

+

st_deny_list = st_tags(

|

| 204 |

+

label="Add words to the denylist", text="Enter word and press enter."

|

| 205 |

+

)

|

| 206 |

+

st.caption(

|

| 207 |

+

"Denylists contain words that are considered PII, but are not detected as such."

|

| 208 |

+

)

|

| 209 |

# Main panel

|

| 210 |

+

|

| 211 |

+

with st.expander("About this demo", expanded=False):

|

| 212 |

+

st.info(

|

| 213 |

+

"""Presidio is an open source customizable framework for PII detection and de-identification.

|

| 214 |

+

\n\n[Code](https://aka.ms/presidio) |

|

| 215 |

+

[Tutorial](https://microsoft.github.io/presidio/tutorial/) |

|

| 216 |

+

[Installation](https://microsoft.github.io/presidio/installation/) |

|

| 217 |

+

[FAQ](https://microsoft.github.io/presidio/faq/) |"""

|

| 218 |

+

)

|

| 219 |

+

|

| 220 |

+

st.info(

|

| 221 |

+

"""

|

| 222 |

+

Use this demo to:

|

| 223 |

+

- Experiment with different off-the-shelf models and NLP packages.

|

| 224 |

+

- Explore the different de-identification options, including redaction, masking, encryption and more.

|

| 225 |

+

- Generate synthetic text with Microsoft Presidio and OpenAI.

|

| 226 |

+

- Configure allow and deny lists.

|

| 227 |

+

|

| 228 |

+

This demo website shows some of Presidio's capabilities.

|

| 229 |

+

[Visit our website](https://microsoft.github.io/presidio) for more info,

|

| 230 |

+

samples and deployment options.

|

| 231 |

+

"""

|

| 232 |

+

)

|

| 233 |

+

|

| 234 |

+

st.markdown(

|

| 235 |

+

"[](https://img.shields.io/pypi/dm/presidio-analyzer.svg)" # noqa

|

| 236 |

+

"[](https://opensource.org/licenses/MIT)"

|

| 237 |

+

""

|

| 238 |

+

)

|

| 239 |

+

|

| 240 |

analyzer_load_state = st.info("Starting Presidio analyzer...")

|

| 241 |

+

|

| 242 |

analyzer_load_state.empty()

|

| 243 |

|

| 244 |

# Read default text

|

|

|

|

| 249 |

col1, col2 = st.columns(2)

|

| 250 |

|

| 251 |

# Before:

|

| 252 |

+

col1.subheader("Input")

|

| 253 |

st_text = col1.text_area(

|

| 254 |

+

label="Enter text", value="".join(demo_text), height=400, key="text_input"

|

|

|

|

|

|

|

| 255 |

)

|

| 256 |

|

| 257 |

+

try:

|

| 258 |

+

# Choose entities

|

| 259 |

+

st_entities_expander = st.sidebar.expander("Choose entities to look for")

|

| 260 |

+