Spaces:

Configuration error

Configuration error

Add files

Browse files- LICENSE +107 -0

- README.md +84 -13

- checkpoints/.gitattributes +35 -0

- checkpoints/README.md +5 -0

- checkpoints/ckpt.txt +1 -0

- checkpoints/cloth_segm.pth +3 -0

- checkpoints/ipadapter_faceid/ckpt.txt +1 -0

- checkpoints/oms_diffusion_768_200000.safetensors +3 -0

- garment_adapter/__pycache__/attention_processor.cpython-310.pyc +0 -0

- garment_adapter/__pycache__/garment_diffusion.cpython-310.pyc +0 -0

- garment_adapter/attention_processor.py +682 -0

- garment_adapter/garment_diffusion.py +248 -0

- garment_adapter/garment_ipadapter_faceid.py +673 -0

- garment_seg/__pycache__/network.cpython-310.pyc +0 -0

- garment_seg/__pycache__/process.cpython-310.pyc +0 -0

- garment_seg/network.py +560 -0

- garment_seg/process.py +99 -0

- gradio_animatediff.py +38 -0

- gradio_controlnet_inpainting.py +76 -0

- gradio_controlnet_openpose.py +72 -0

- gradio_generate.py +61 -0

- gradio_ipadapter_faceid.py +97 -0

- gradio_ipadapter_openpose.py +109 -0

- gradio_sd_inpainting.py +62 -0

- images/workflow.png +0 -0

- inference.py +41 -0

- nohup.out +1 -0

- output_img/out_0.png +0 -0

- output_img/out_1.png +0 -0

- output_img/out_2.png +0 -0

- output_img/out_3.png +0 -0

- pipelines/OmsAnimateDiffusionPipeline.py +306 -0

- pipelines/OmsDiffusionControlNetPipeline.py +437 -0

- pipelines/OmsDiffusionInpaintPipeline.py +502 -0

- pipelines/OmsDiffusionPipeline.py +294 -0

- pipelines/__pycache__/OmsDiffusionPipeline.cpython-310.pyc +0 -0

- run.log +2 -0

- utils/__pycache__/utils.cpython-310.pyc +0 -0

- utils/resampler.py +158 -0

- utils/utils.py +72 -0

- valid_cloth/t1.png +0 -0

- valid_cloth/t2.jpg +0 -0

- valid_cloth/t3.jpg +0 -0

- valid_cloth/t4.jpg +0 -0

LICENSE

ADDED

|

@@ -0,0 +1,107 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International

|

| 2 |

+

|

| 3 |

+

Creative Commons Corporation ("Creative Commons") is not a law firm and does not provide legal services or legal advice. Distribution of Creative Commons public licenses does not create a lawyer-client or other relationship. Creative Commons makes its licenses and related information available on an "as-is" basis. Creative Commons gives no warranties regarding its licenses, any material licensed under their terms and conditions, or any related information. Creative Commons disclaims all liability for damages resulting from their use to the fullest extent possible.

|

| 4 |

+

|

| 5 |

+

Using Creative Commons Public Licenses

|

| 6 |

+

|

| 7 |

+

Creative Commons public licenses provide a standard set of terms and conditions that creators and other rights holders may use to share original works of authorship and other material subject to copyright and certain other rights specified in the public license below. The following considerations are for informational purposes only, are not exhaustive, and do not form part of our licenses.

|

| 8 |

+

|

| 9 |

+

Considerations for licensors: Our public licenses are intended for use by those authorized to give the public permission to use material in ways otherwise restricted by copyright and certain other rights. Our licenses are irrevocable. Licensors should read and understand the terms and conditions of the license they choose before applying it. Licensors should also secure all rights necessary before applying our licenses so that the public can reuse the material as expected. Licensors should clearly mark any material not subject to the license. This includes other CC-licensed material, or material used under an exception or limitation to copyright. More considerations for licensors : wiki.creativecommons.org/Considerations_for_licensors

|

| 10 |

+

|

| 11 |

+

Considerations for the public: By using one of our public licenses, a licensor grants the public permission to use the licensed material under specified terms and conditions. If the licensor's permission is not necessary for any reason–for example, because of any applicable exception or limitation to copyright–then that use is not regulated by the license. Our licenses grant only permissions under copyright and certain other rights that a licensor has authority to grant. Use of the licensed material may still be restricted for other reasons, including because others have copyright or other rights in the material. A licensor may make special requests, such as asking that all changes be marked or described. Although not required by our licenses, you are encouraged to respect those requests where reasonable. More considerations for the public : wiki.creativecommons.org/Considerations_for_licensees

|

| 12 |

+

|

| 13 |

+

Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Public License

|

| 14 |

+

|

| 15 |

+

By exercising the Licensed Rights (defined below), You accept and agree to be bound by the terms and conditions of this Creative Commons Attribution-NonCommercial-ShareAlike 4.0 International Public License ("Public License"). To the extent this Public License may be interpreted as a contract, You are granted the Licensed Rights in consideration of Your acceptance of these terms and conditions, and the Licensor grants You such rights in consideration of benefits the Licensor receives from making the Licensed Material available under these terms and conditions.

|

| 16 |

+

|

| 17 |

+

Section 1 – Definitions.

|

| 18 |

+

|

| 19 |

+

a. Adapted Material means material subject to Copyright and Similar Rights that is derived from or based upon the Licensed Material and in which the Licensed Material is translated, altered, arranged, transformed, or otherwise modified in a manner requiring permission under the Copyright and Similar Rights held by the Licensor. For purposes of this Public License, where the Licensed Material is a musical work, performance, or sound recording, Adapted Material is always produced where the Licensed Material is synched in timed relation with a moving image.

|

| 20 |

+

b. Adapter's License means the license You apply to Your Copyright and Similar Rights in Your contributions to Adapted Material in accordance with the terms and conditions of this Public License.

|

| 21 |

+

c. BY-NC-SA Compatible License means a license listed at creativecommons.org/compatiblelicenses, approved by Creative Commons as essentially the equivalent of this Public License.

|

| 22 |

+

d. Copyright and Similar Rights means copyright and/or similar rights closely related to copyright including, without limitation, performance, broadcast, sound recording, and Sui Generis Database Rights, without regard to how the rights are labeled or categorized. For purposes of this Public License, the rights specified in Section 2(b)(1)-(2) are not Copyright and Similar Rights.

|

| 23 |

+

e. Effective Technological Measures means those measures that, in the absence of proper authority, may not be circumvented under laws fulfilling obligations under Article 11 of the WIPO Copyright Treaty adopted on December 20, 1996, and/or similar international agreements.

|

| 24 |

+

f. Exceptions and Limitations means fair use, fair dealing, and/or any other exception or limitation to Copyright and Similar Rights that applies to Your use of the Licensed Material.

|

| 25 |

+

g. License Elements means the license attributes listed in the name of a Creative Commons Public License. The License Elements of this Public License are Attribution, NonCommercial, and ShareAlike.

|

| 26 |

+

h. Licensed Material means the artistic or literary work, database, or other material to which the Licensor applied this Public License.

|

| 27 |

+

i. Licensed Rights means the rights granted to You subject to the terms and conditions of this Public License, which are limited to all Copyright and Similar Rights that apply to Your use of the Licensed Material and that the Licensor has authority to license.

|

| 28 |

+

j. Licensor means the individual(s) or entity(ies) granting rights under this Public License.

|

| 29 |

+

k. NonCommercial means not primarily intended for or directed towards commercial advantage or monetary compensation. For purposes of this Public License, the exchange of the Licensed Material for other material subject to Copyright and Similar Rights by digital file-sharing or similar means is NonCommercial provided there is no payment of monetary compensation in connection with the exchange.

|

| 30 |

+

l. Share means to provide material to the public by any means or process that requires permission under the Licensed Rights, such as reproduction, public display, public performance, distribution, dissemination, communication, or importation, and to make material available to the public including in ways that members of the public may access the material from a place and at a time individually chosen by them.

|

| 31 |

+

m. Sui Generis Database Rights means rights other than copyright resulting from Directive 96/9/EC of the European Parliament and of the Council of 11 March 1996 on the legal protection of databases, as amended and/or succeeded, as well as other essentially equivalent rights anywhere in the world.

|

| 32 |

+

n. You means the individual or entity exercising the Licensed Rights under this Public License. Your has a corresponding meaning.

|

| 33 |

+

Section 2 – Scope.

|

| 34 |

+

|

| 35 |

+

a. License grant.

|

| 36 |

+

1. Subject to the terms and conditions of this Public License, the Licensor hereby grants You a worldwide, royalty-free, non-sublicensable, non-exclusive, irrevocable license to exercise the Licensed Rights in the Licensed Material to:

|

| 37 |

+

A. reproduce and Share the Licensed Material, in whole or in part, for NonCommercial purposes only; and

|

| 38 |

+

B. produce, reproduce, and Share Adapted Material for NonCommercial purposes only.

|

| 39 |

+

2. Exceptions and Limitations. For the avoidance of doubt, where Exceptions and Limitations apply to Your use, this Public License does not apply, and You do not need to comply with its terms and conditions.

|

| 40 |

+

3. Term. The term of this Public License is specified in Section 6(a).

|

| 41 |

+

4. Media and formats; technical modifications allowed. The Licensor authorizes You to exercise the Licensed Rights in all media and formats whether now known or hereafter created, and to make technical modifications necessary to do so. The Licensor waives and/or agrees not to assert any right or authority to forbid You from making technical modifications necessary to exercise the Licensed Rights, including technical modifications necessary to circumvent Effective Technological Measures. For purposes of this Public License, simply making modifications authorized by this Section 2(a)(4) never produces Adapted Material.

|

| 42 |

+

5. Downstream recipients.

|

| 43 |

+

A. Offer from the Licensor – Licensed Material. Every recipient of the Licensed Material automatically receives an offer from the Licensor to exercise the Licensed Rights under the terms and conditions of this Public License.

|

| 44 |

+

B. Additional offer from the Licensor – Adapted Material. Every recipient of Adapted Material from You automatically receives an offer from the Licensor to exercise the Licensed Rights in the Adapted Material under the conditions of the Adapter's License You apply.

|

| 45 |

+

C. No downstream restrictions. You may not offer or impose any additional or different terms or conditions on, or apply any Effective Technological Measures to, the Licensed Material if doing so restricts exercise of the Licensed Rights by any recipient of the Licensed Material.

|

| 46 |

+

6. No endorsement. Nothing in this Public License constitutes or may be construed as permission to assert or imply that You are, or that Your use of the Licensed Material is, connected with, or sponsored, endorsed, or granted official status by, the Licensor or others designated to receive attribution as provided in Section 3(a)(1)(A)(i).

|

| 47 |

+

b. Other rights.

|

| 48 |

+

1. Moral rights, such as the right of integrity, are not licensed under this Public License, nor are publicity, privacy, and/or other similar personality rights; however, to the extent possible, the Licensor waives and/or agrees not to assert any such rights held by the Licensor to the limited extent necessary to allow You to exercise the Licensed Rights, but not otherwise.

|

| 49 |

+

2. Patent and trademark rights are not licensed under this Public License.

|

| 50 |

+

3. To the extent possible, the Licensor waives any right to collect royalties from You for the exercise of the Licensed Rights, whether directly or through a collecting society under any voluntary or waivable statutory or compulsory licensing scheme. In all other cases the Licensor expressly reserves any right to collect such royalties, including when the Licensed Material is used other than for NonCommercial purposes.

|

| 51 |

+

Section 3 – License Conditions.

|

| 52 |

+

|

| 53 |

+

Your exercise of the Licensed Rights is expressly made subject to the following conditions.

|

| 54 |

+

|

| 55 |

+

a. Attribution.

|

| 56 |

+

1. If You Share the Licensed Material (including in modified form), You must:

|

| 57 |

+

A. retain the following if it is supplied by the Licensor with the Licensed Material:

|

| 58 |

+

i. identification of the creator(s) of the Licensed Material and any others designated to receive attribution, in any reasonable manner requested by the Licensor (including by pseudonym if designated);

|

| 59 |

+

ii. a copyright notice;

|

| 60 |

+

iii. a notice that refers to this Public License;

|

| 61 |

+

iv. a notice that refers to the disclaimer of warranties;

|

| 62 |

+

v. a URI or hyperlink to the Licensed Material to the extent reasonably practicable;

|

| 63 |

+

|

| 64 |

+

B. indicate if You modified the Licensed Material and retain an indication of any previous modifications; and

|

| 65 |

+

C. indicate the Licensed Material is licensed under this Public License, and include the text of, or the URI or hyperlink to, this Public License.

|

| 66 |

+

2. You may satisfy the conditions in Section 3(a)(1) in any reasonable manner based on the medium, means, and context in which You Share the Licensed Material. For example, it may be reasonable to satisfy the conditions by providing a URI or hyperlink to a resource that includes the required information.

|

| 67 |

+

3. If requested by the Licensor, You must remove any of the information required by Section 3(a)(1)(A) to the extent reasonably practicable.

|

| 68 |

+

b. ShareAlike.In addition to the conditions in Section 3(a), if You Share Adapted Material You produce, the following conditions also apply.

|

| 69 |

+

1. The Adapter's License You apply must be a Creative Commons license with the same License Elements, this version or later, or a BY-NC-SA Compatible License.

|

| 70 |

+

2. You must include the text of, or the URI or hyperlink to, the Adapter's License You apply. You may satisfy this condition in any reasonable manner based on the medium, means, and context in which You Share Adapted Material.

|

| 71 |

+

3. You may not offer or impose any additional or different terms or conditions on, or apply any Effective Technological Measures to, Adapted Material that restrict exercise of the rights granted under the Adapter's License You apply.

|

| 72 |

+

Section 4 – Sui Generis Database Rights.

|

| 73 |

+

|

| 74 |

+

Where the Licensed Rights include Sui Generis Database Rights that apply to Your use of the Licensed Material:

|

| 75 |

+

|

| 76 |

+

a. for the avoidance of doubt, Section 2(a)(1) grants You the right to extract, reuse, reproduce, and Share all or a substantial portion of the contents of the database for NonCommercial purposes only;

|

| 77 |

+

b. if You include all or a substantial portion of the database contents in a database in which You have Sui Generis Database Rights, then the database in which You have Sui Generis Database Rights (but not its individual contents) is Adapted Material, including for purposes of Section 3(b); and

|

| 78 |

+

c. You must comply with the conditions in Section 3(a) if You Share all or a substantial portion of the contents of the database.

|

| 79 |

+

For the avoidance of doubt, this Section 4 supplements and does not replace Your obligations under this Public License where the Licensed Rights include other Copyright and Similar Rights.

|

| 80 |

+

Section 5 – Disclaimer of Warranties and Limitation of Liability.

|

| 81 |

+

|

| 82 |

+

a. Unless otherwise separately undertaken by the Licensor, to the extent possible, the Licensor offers the Licensed Material as-is and as-available, and makes no representations or warranties of any kind concerning the Licensed Material, whether express, implied, statutory, or other. This includes, without limitation, warranties of title, merchantability, fitness for a particular purpose, non-infringement, absence of latent or other defects, accuracy, or the presence or absence of errors, whether or not known or discoverable. Where disclaimers of warranties are not allowed in full or in part, this disclaimer may not apply to You.

|

| 83 |

+

b. To the extent possible, in no event will the Licensor be liable to You on any legal theory (including, without limitation, negligence) or otherwise for any direct, special, indirect, incidental, consequential, punitive, exemplary, or other losses, costs, expenses, or damages arising out of this Public License or use of the Licensed Material, even if the Licensor has been advised of the possibility of such losses, costs, expenses, or damages. Where a limitation of liability is not allowed in full or in part, this limitation may not apply to You.

|

| 84 |

+

c. The disclaimer of warranties and limitation of liability provided above shall be interpreted in a manner that, to the extent possible, most closely approximates an absolute disclaimer and waiver of all liability.

|

| 85 |

+

Section 6 – Term and Termination.

|

| 86 |

+

|

| 87 |

+

a. This Public License applies for the term of the Copyright and Similar Rights licensed here. However, if You fail to comply with this Public License, then Your rights under this Public License terminate automatically.

|

| 88 |

+

b. Where Your right to use the Licensed Material has terminated under Section 6(a), it reinstates:

|

| 89 |

+

1. automatically as of the date the violation is cured, provided it is cured within 30 days of Your discovery of the violation; or

|

| 90 |

+

2. upon express reinstatement by the Licensor.

|

| 91 |

+

For the avoidance of doubt, this Section 6(b) does not affect any right the Licensor may have to seek remedies for Your violations of this Public License.

|

| 92 |

+

|

| 93 |

+

c. For the avoidance of doubt, the Licensor may also offer the Licensed Material under separate terms or conditions or stop distributing the Licensed Material at any time; however, doing so will not terminate this Public License.

|

| 94 |

+

d. Sections 1, 5, 6, 7, and 8 survive termination of this Public License.

|

| 95 |

+

Section 7 – Other Terms and Conditions.

|

| 96 |

+

|

| 97 |

+

a. The Licensor shall not be bound by any additional or different terms or conditions communicated by You unless expressly agreed.

|

| 98 |

+

b. Any arrangements, understandings, or agreements regarding the Licensed Material not stated herein are separate from and independent of the terms and conditions of this Public License.

|

| 99 |

+

Section 8 – Interpretation.

|

| 100 |

+

|

| 101 |

+

a. For the avoidance of doubt, this Public License does not, and shall not be interpreted to, reduce, limit, restrict, or impose conditions on any use of the Licensed Material that could lawfully be made without permission under this Public License.

|

| 102 |

+

b. To the extent possible, if any provision of this Public License is deemed unenforceable, it shall be automatically reformed to the minimum extent necessary to make it enforceable. If the provision cannot be reformed, it shall be severed from this Public License without affecting the enforceability of the remaining terms and conditions.

|

| 103 |

+

c. No term or condition of this Public License will be waived and no failure to comply consented to unless expressly agreed to by the Licensor.

|

| 104 |

+

d. Nothing in this Public License constitutes or may be interpreted as a limitation upon, or waiver of, any privileges and immunities that apply to the Licensor or You, including from the legal processes of any jurisdiction or authority.

|

| 105 |

+

Creative Commons is not a party to its public licenses. Notwithstanding, Creative Commons may elect to apply one of its public licenses to material it publishes and in those instances will be considered the "Licensor." The text of the Creative Commons public licenses is dedicated to the public domain under the CC0 Public Domain Dedication. Except for the limited purpose of indicating that material is shared under a Creative Commons public license or as otherwise permitted by the Creative Commons policies published at creativecommons.org/policies, Creative Commons does not authorize the use of the trademark "Creative Commons" or any other trademark or logo of Creative Commons without its prior written consent including, without limitation, in connection with any unauthorized modifications to any of its public licenses or any other arrangements, understandings, or agreements concerning use of licensed material. For the avoidance of doubt, this paragraph does not form part of the public licenses.

|

| 106 |

+

|

| 107 |

+

Creative Commons may be contacted at creativecommons.org.

|

README.md

CHANGED

|

@@ -1,13 +1,84 @@

|

|

| 1 |

-

|

| 2 |

-

|

| 3 |

-

|

| 4 |

-

|

| 5 |

-

|

| 6 |

-

|

| 7 |

-

|

| 8 |

-

|

| 9 |

-

|

| 10 |

-

|

| 11 |

-

|

| 12 |

-

|

| 13 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

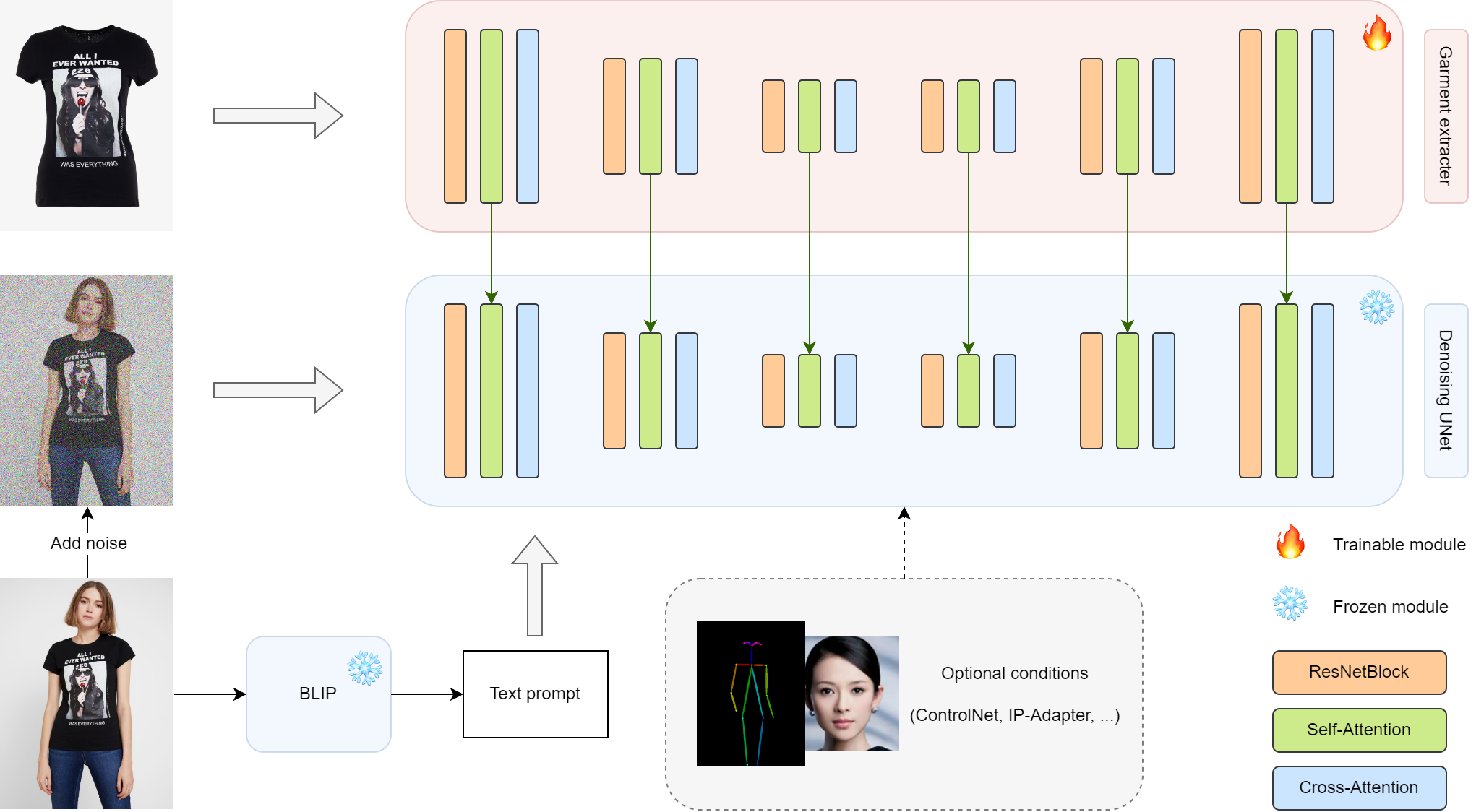

# Magic Clothing

|

| 2 |

+

This repository is the official implementation of Magic Clothing

|

| 3 |

+

|

| 4 |

+

Magic Clothing is a branch version of [OOTDiffusion](https://github.com/levihsu/OOTDiffusion), focusing on controllable garment-driven image synthesis

|

| 5 |

+

|

| 6 |

+

Please refer to our [previous paper](https://arxiv.org/abs/2403.01779) for more details

|

| 7 |

+

|

| 8 |

+

> **Magic Clothing: Controllable Garment-Driven Image Synthesis** (coming soon)<br>

|

| 9 |

+

> [Weifeng Chen](https://github.com/ShineChen1024)\*, [Tao Gu](https://github.com/T-Gu)\*, [Yuhao Xu](http://levihsu.github.io/), [Chengcai Chen](https://www.researchgate.net/profile/Chengcai-Chen)<br>

|

| 10 |

+

> \* Equal contribution<br>

|

| 11 |

+

> Xiao-i Research

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

## News

|

| 15 |

+

|

| 16 |

+

🔥 [2024/3/8] We released the model weights trained on the 768 resolution. The strength of clothing and text prompts can be independently adjusted.

|

| 17 |

+

|

| 18 |

+

🤗 [Hugging Face link](https://huggingface.co/ShineChen1024/MagicClothing)

|

| 19 |

+

|

| 20 |

+

🔥 [2024/2/28] We support [IP-Adapter-FaceID](https://huggingface.co/h94/IP-Adapter-FaceID) with [ControlNet-Openpose](https://github.com/lllyasviel/ControlNet-v1-1-nightly)! A portrait and a reference pose image can be used as additional conditions.

|

| 21 |

+

|

| 22 |

+

Have fun with **gradio_ipadapter_openpose.py**

|

| 23 |

+

|

| 24 |

+

🔥 [2024/2/23] We support [IP-Adapter-FaceID](https://huggingface.co/h94/IP-Adapter-FaceID) now! A portrait image can be used as an additional condition.

|

| 25 |

+

|

| 26 |

+

Have fun with **gradio_ipadapter_faceid.py**

|

| 27 |

+

|

| 28 |

+

|

| 29 |

+

|

| 30 |

+

|

| 31 |

+

|

| 32 |

+

|

| 33 |

+

## Installation

|

| 34 |

+

|

| 35 |

+

1. Clone the repository

|

| 36 |

+

|

| 37 |

+

```sh

|

| 38 |

+

git clone https://github.com/ShineChen1024/MagicClothing.git

|

| 39 |

+

```

|

| 40 |

+

|

| 41 |

+

2. Create a conda environment and install the required packages

|

| 42 |

+

|

| 43 |

+

```sh

|

| 44 |

+

conda create -n magicloth python==3.10

|

| 45 |

+

conda activate magicloth

|

| 46 |

+

pip install torch==2.0.1 torchvision==0.15.2 numpy==1.25.1 diffusers==0.25.1 opencv-python==4.9.0.80 transformers==4.31.0 gradio==4.16.0 safetensors==0.3.1 controlnet-aux==0.0.6 accelerate==0.21.0

|

| 47 |

+

```

|

| 48 |

+

|

| 49 |

+

## Inference

|

| 50 |

+

|

| 51 |

+

1. Python demo

|

| 52 |

+

|

| 53 |

+

> 512 weights

|

| 54 |

+

|

| 55 |

+

```sh

|

| 56 |

+

python inference.py --cloth_path [your cloth path] --model_path [your model path]

|

| 57 |

+

```

|

| 58 |

+

|

| 59 |

+

> 768 weights

|

| 60 |

+

|

| 61 |

+

```sh

|

| 62 |

+

python inference.py --cloth_path [your cloth path] --model_path [your model path] --enable_cloth_guidance

|

| 63 |

+

```

|

| 64 |

+

|

| 65 |

+

2. Gradio demo

|

| 66 |

+

|

| 67 |

+

> 512 weights

|

| 68 |

+

|

| 69 |

+

```sh

|

| 70 |

+

python gradio_generate.py --model_path [your model path]

|

| 71 |

+

```

|

| 72 |

+

|

| 73 |

+

> 768 weights

|

| 74 |

+

|

| 75 |

+

```sh

|

| 76 |

+

python gradio_generate.py --model_path [your model path] --enable_cloth_guidance

|

| 77 |

+

```

|

| 78 |

+

|

| 79 |

+

## TODO List

|

| 80 |

+

- [ ] Paper

|

| 81 |

+

- [x] Gradio demo

|

| 82 |

+

- [x] Inference code

|

| 83 |

+

- [x] Model weights

|

| 84 |

+

- [ ] Training code

|

checkpoints/.gitattributes

ADDED

|

@@ -0,0 +1,35 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

*.7z filter=lfs diff=lfs merge=lfs -text

|

| 2 |

+

*.arrow filter=lfs diff=lfs merge=lfs -text

|

| 3 |

+

*.bin filter=lfs diff=lfs merge=lfs -text

|

| 4 |

+

*.bz2 filter=lfs diff=lfs merge=lfs -text

|

| 5 |

+

*.ckpt filter=lfs diff=lfs merge=lfs -text

|

| 6 |

+

*.ftz filter=lfs diff=lfs merge=lfs -text

|

| 7 |

+

*.gz filter=lfs diff=lfs merge=lfs -text

|

| 8 |

+

*.h5 filter=lfs diff=lfs merge=lfs -text

|

| 9 |

+

*.joblib filter=lfs diff=lfs merge=lfs -text

|

| 10 |

+

*.lfs.* filter=lfs diff=lfs merge=lfs -text

|

| 11 |

+

*.mlmodel filter=lfs diff=lfs merge=lfs -text

|

| 12 |

+

*.model filter=lfs diff=lfs merge=lfs -text

|

| 13 |

+

*.msgpack filter=lfs diff=lfs merge=lfs -text

|

| 14 |

+

*.npy filter=lfs diff=lfs merge=lfs -text

|

| 15 |

+

*.npz filter=lfs diff=lfs merge=lfs -text

|

| 16 |

+

*.onnx filter=lfs diff=lfs merge=lfs -text

|

| 17 |

+

*.ot filter=lfs diff=lfs merge=lfs -text

|

| 18 |

+

*.parquet filter=lfs diff=lfs merge=lfs -text

|

| 19 |

+

*.pb filter=lfs diff=lfs merge=lfs -text

|

| 20 |

+

*.pickle filter=lfs diff=lfs merge=lfs -text

|

| 21 |

+

*.pkl filter=lfs diff=lfs merge=lfs -text

|

| 22 |

+

*.pt filter=lfs diff=lfs merge=lfs -text

|

| 23 |

+

*.pth filter=lfs diff=lfs merge=lfs -text

|

| 24 |

+

*.rar filter=lfs diff=lfs merge=lfs -text

|

| 25 |

+

*.safetensors filter=lfs diff=lfs merge=lfs -text

|

| 26 |

+

saved_model/**/* filter=lfs diff=lfs merge=lfs -text

|

| 27 |

+

*.tar.* filter=lfs diff=lfs merge=lfs -text

|

| 28 |

+

*.tar filter=lfs diff=lfs merge=lfs -text

|

| 29 |

+

*.tflite filter=lfs diff=lfs merge=lfs -text

|

| 30 |

+

*.tgz filter=lfs diff=lfs merge=lfs -text

|

| 31 |

+

*.wasm filter=lfs diff=lfs merge=lfs -text

|

| 32 |

+

*.xz filter=lfs diff=lfs merge=lfs -text

|

| 33 |

+

*.zip filter=lfs diff=lfs merge=lfs -text

|

| 34 |

+

*.zst filter=lfs diff=lfs merge=lfs -text

|

| 35 |

+

*tfevents* filter=lfs diff=lfs merge=lfs -text

|

checkpoints/README.md

ADDED

|

@@ -0,0 +1,5 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

---

|

| 2 |

+

license: cc-by-nc-sa-4.0

|

| 3 |

+

---

|

| 4 |

+

|

| 5 |

+

Model weights of [Magic Clothing](https://github.com/ShineChen1024/MagicClothing)

|

checkpoints/ckpt.txt

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

# put cloth_segm.pth here

|

checkpoints/cloth_segm.pth

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:f71fad2bc11789a996acc507d1a5a1602ae0edefc2b9aba1cd198be5cc9c1a44

|

| 3 |

+

size 176625341

|

checkpoints/ipadapter_faceid/ckpt.txt

ADDED

|

@@ -0,0 +1 @@

|

|

|

|

|

|

|

| 1 |

+

download ckpt from https://huggingface.co/h94/IP-Adapter-FaceID, put the weights here

|

checkpoints/oms_diffusion_768_200000.safetensors

ADDED

|

@@ -0,0 +1,3 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

version https://git-lfs.github.com/spec/v1

|

| 2 |

+

oid sha256:763bf6f0c2484162901c523dbb0cb310b301535594f05e64c173238b24034191

|

| 3 |

+

size 3438118560

|

garment_adapter/__pycache__/attention_processor.cpython-310.pyc

ADDED

|

Binary file (12.8 kB). View file

|

|

|

garment_adapter/__pycache__/garment_diffusion.cpython-310.pyc

ADDED

|

Binary file (6.86 kB). View file

|

|

|

garment_adapter/attention_processor.py

ADDED

|

@@ -0,0 +1,682 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import pdb

|

| 2 |

+

|

| 3 |

+

import torch

|

| 4 |

+

from typing import Optional

|

| 5 |

+

import torch.nn.functional as F

|

| 6 |

+

from diffusers.utils import USE_PEFT_BACKEND

|

| 7 |

+

import torch.nn as nn

|

| 8 |

+

from diffusers.models.attention_processor import Attention

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

class AttnProcessor(nn.Module):

|

| 12 |

+

r"""

|

| 13 |

+

Default processor for performing attention-related computations.

|

| 14 |

+

"""

|

| 15 |

+

|

| 16 |

+

def __init__(self):

|

| 17 |

+

super().__init__()

|

| 18 |

+

|

| 19 |

+

def __call__(

|

| 20 |

+

self,

|

| 21 |

+

attn: Attention,

|

| 22 |

+

hidden_states: torch.FloatTensor,

|

| 23 |

+

encoder_hidden_states: Optional[torch.FloatTensor] = None,

|

| 24 |

+

attention_mask: Optional[torch.FloatTensor] = None,

|

| 25 |

+

temb: Optional[torch.FloatTensor] = None,

|

| 26 |

+

scale: float = 1.0,

|

| 27 |

+

attn_store=None,

|

| 28 |

+

do_classifier_free_guidance=None,

|

| 29 |

+

enable_cloth_guidance=None

|

| 30 |

+

) -> torch.Tensor:

|

| 31 |

+

residual = hidden_states

|

| 32 |

+

|

| 33 |

+

args = () if USE_PEFT_BACKEND else (scale,)

|

| 34 |

+

|

| 35 |

+

if attn.spatial_norm is not None:

|

| 36 |

+

hidden_states = attn.spatial_norm(hidden_states, temb)

|

| 37 |

+

|

| 38 |

+

input_ndim = hidden_states.ndim

|

| 39 |

+

|

| 40 |

+

if input_ndim == 4:

|

| 41 |

+

batch_size, channel, height, width = hidden_states.shape

|

| 42 |

+

hidden_states = hidden_states.view(batch_size, channel, height * width).transpose(1, 2)

|

| 43 |

+

|

| 44 |

+

batch_size, sequence_length, _ = (

|

| 45 |

+

hidden_states.shape if encoder_hidden_states is None else encoder_hidden_states.shape

|

| 46 |

+

)

|

| 47 |

+

attention_mask = attn.prepare_attention_mask(attention_mask, sequence_length, batch_size)

|

| 48 |

+

|

| 49 |

+

if attn.group_norm is not None:

|

| 50 |

+

hidden_states = attn.group_norm(hidden_states.transpose(1, 2)).transpose(1, 2)

|

| 51 |

+

|

| 52 |

+

query = attn.to_q(hidden_states, *args)

|

| 53 |

+

|

| 54 |

+

if encoder_hidden_states is None:

|

| 55 |

+

encoder_hidden_states = hidden_states

|

| 56 |

+

elif attn.norm_cross:

|

| 57 |

+

encoder_hidden_states = attn.norm_encoder_hidden_states(encoder_hidden_states)

|

| 58 |

+

|

| 59 |

+

key = attn.to_k(encoder_hidden_states, *args)

|

| 60 |

+

value = attn.to_v(encoder_hidden_states, *args)

|

| 61 |

+

|

| 62 |

+

query = attn.head_to_batch_dim(query)

|

| 63 |

+

key = attn.head_to_batch_dim(key)

|

| 64 |

+

value = attn.head_to_batch_dim(value)

|

| 65 |

+

|

| 66 |

+

attention_probs = attn.get_attention_scores(query, key, attention_mask)

|

| 67 |

+

hidden_states = torch.bmm(attention_probs, value)

|

| 68 |

+

hidden_states = attn.batch_to_head_dim(hidden_states)

|

| 69 |

+

|

| 70 |

+

# linear proj

|

| 71 |

+

hidden_states = attn.to_out[0](hidden_states, *args)

|

| 72 |

+

# dropout

|

| 73 |

+

hidden_states = attn.to_out[1](hidden_states)

|

| 74 |

+

|

| 75 |

+

if input_ndim == 4:

|

| 76 |

+

hidden_states = hidden_states.transpose(-1, -2).reshape(batch_size, channel, height, width)

|

| 77 |

+

|

| 78 |

+

if attn.residual_connection:

|

| 79 |

+

hidden_states = hidden_states + residual

|

| 80 |

+

|

| 81 |

+

hidden_states = hidden_states / attn.rescale_output_factor

|

| 82 |

+

|

| 83 |

+

return hidden_states

|

| 84 |

+

|

| 85 |

+

|

| 86 |

+

class REFAttnProcessor(nn.Module):

|

| 87 |

+

def __init__(self, name, type="read"):

|

| 88 |

+

super().__init__()

|

| 89 |

+

self.name = name

|

| 90 |

+

self.type = type

|

| 91 |

+

|

| 92 |

+

def __call__(

|

| 93 |

+

self,

|

| 94 |

+

attn: Attention,

|

| 95 |

+

hidden_states: torch.FloatTensor,

|

| 96 |

+

encoder_hidden_states: Optional[torch.FloatTensor] = None,

|

| 97 |

+

attention_mask: Optional[torch.FloatTensor] = None,

|

| 98 |

+

temb: Optional[torch.FloatTensor] = None,

|

| 99 |

+

scale: float = 1.0,

|

| 100 |

+

attn_store=None,

|

| 101 |

+

do_classifier_free_guidance=None,

|

| 102 |

+

enable_cloth_guidance=None

|

| 103 |

+

) -> torch.Tensor:

|

| 104 |

+

if self.type == "read":

|

| 105 |

+

attn_store[self.name] = hidden_states

|

| 106 |

+

elif self.type == "write":

|

| 107 |

+

ref_hidden_states = attn_store[self.name]

|

| 108 |

+

if do_classifier_free_guidance:

|

| 109 |

+

empty_copy = torch.zeros_like(ref_hidden_states)

|

| 110 |

+

if enable_cloth_guidance:

|

| 111 |

+

ref_hidden_states = torch.cat([empty_copy, ref_hidden_states, ref_hidden_states])

|

| 112 |

+

else:

|

| 113 |

+

ref_hidden_states = torch.cat([empty_copy, ref_hidden_states])

|

| 114 |

+

hidden_states = torch.cat([hidden_states, ref_hidden_states], dim=1)

|

| 115 |

+

else:

|

| 116 |

+

raise ValueError("unsupport type")

|

| 117 |

+

residual = hidden_states

|

| 118 |

+

|

| 119 |

+

args = () if USE_PEFT_BACKEND else (scale,)

|

| 120 |

+

|

| 121 |

+

if attn.spatial_norm is not None:

|

| 122 |

+

hidden_states = attn.spatial_norm(hidden_states, temb)

|

| 123 |

+

|

| 124 |

+

input_ndim = hidden_states.ndim

|

| 125 |

+

|

| 126 |

+

if input_ndim == 4:

|

| 127 |

+

batch_size, channel, height, width = hidden_states.shape

|

| 128 |

+

hidden_states = hidden_states.view(batch_size, channel, height * width).transpose(1, 2)

|

| 129 |

+

|

| 130 |

+

batch_size, sequence_length, _ = (

|

| 131 |

+

hidden_states.shape if encoder_hidden_states is None else encoder_hidden_states.shape

|

| 132 |

+

)

|

| 133 |

+

attention_mask = attn.prepare_attention_mask(attention_mask, sequence_length, batch_size)

|

| 134 |

+

|

| 135 |

+

if attn.group_norm is not None:

|

| 136 |

+

hidden_states = attn.group_norm(hidden_states.transpose(1, 2)).transpose(1, 2)

|

| 137 |

+

|

| 138 |

+

query = attn.to_q(hidden_states, *args)

|

| 139 |

+

|

| 140 |

+

if encoder_hidden_states is None:

|

| 141 |

+

encoder_hidden_states = hidden_states

|

| 142 |

+

elif attn.norm_cross:

|

| 143 |

+

encoder_hidden_states = attn.norm_encoder_hidden_states(encoder_hidden_states)

|

| 144 |

+

|

| 145 |

+

key = attn.to_k(encoder_hidden_states, *args)

|

| 146 |

+

value = attn.to_v(encoder_hidden_states, *args)

|

| 147 |

+

|

| 148 |

+

query = attn.head_to_batch_dim(query)

|

| 149 |

+

key = attn.head_to_batch_dim(key)

|

| 150 |

+

value = attn.head_to_batch_dim(value)

|

| 151 |

+

|

| 152 |

+

attention_probs = attn.get_attention_scores(query, key, attention_mask)

|

| 153 |

+

hidden_states = torch.bmm(attention_probs, value)

|

| 154 |

+

hidden_states = attn.batch_to_head_dim(hidden_states)

|

| 155 |

+

|

| 156 |

+

if self.type == "write":

|

| 157 |

+

hidden_states, _ = torch.chunk(hidden_states, 2, dim=1)

|

| 158 |

+

|

| 159 |

+

# linear proj

|

| 160 |

+

hidden_states = attn.to_out[0](hidden_states, *args)

|

| 161 |

+

# dropout

|

| 162 |

+

hidden_states = attn.to_out[1](hidden_states)

|

| 163 |

+

|

| 164 |

+

if input_ndim == 4:

|

| 165 |

+

hidden_states = hidden_states.transpose(-1, -2).reshape(batch_size, channel, height, width)

|

| 166 |

+

|

| 167 |

+

if attn.residual_connection:

|

| 168 |

+

hidden_states = hidden_states + residual

|

| 169 |

+

|

| 170 |

+

hidden_states = hidden_states / attn.rescale_output_factor

|

| 171 |

+

|

| 172 |

+

return hidden_states

|

| 173 |

+

|

| 174 |

+

|

| 175 |

+

class AttnProcessor2_0(nn.Module):

|

| 176 |

+

r"""

|

| 177 |

+

Processor for implementing scaled dot-product attention (enabled by default if you're using PyTorch 2.0).

|

| 178 |

+

"""

|

| 179 |

+

|

| 180 |

+

def __init__(self):

|

| 181 |

+

super().__init__()

|

| 182 |

+

if not hasattr(F, "scaled_dot_product_attention"):

|

| 183 |

+

raise ImportError("AttnProcessor2_0 requires PyTorch 2.0, to use it, please upgrade PyTorch to 2.0.")

|

| 184 |

+

|

| 185 |

+

def __call__(

|

| 186 |

+

self,

|

| 187 |

+

attn: Attention,

|

| 188 |

+

hidden_states: torch.FloatTensor,

|

| 189 |

+

encoder_hidden_states: Optional[torch.FloatTensor] = None,

|

| 190 |

+

attention_mask: Optional[torch.FloatTensor] = None,

|

| 191 |

+

temb: Optional[torch.FloatTensor] = None,

|

| 192 |

+

scale: float = 1.0,

|

| 193 |

+

attn_store=None,

|

| 194 |

+

do_classifier_free_guidance=None,

|

| 195 |

+

enable_cloth_guidance=None

|

| 196 |

+

) -> torch.FloatTensor:

|

| 197 |

+

residual = hidden_states

|

| 198 |

+

if attn.spatial_norm is not None:

|

| 199 |

+

hidden_states = attn.spatial_norm(hidden_states, temb)

|

| 200 |

+

|

| 201 |

+

input_ndim = hidden_states.ndim

|

| 202 |

+

|

| 203 |

+

if input_ndim == 4:

|

| 204 |

+

batch_size, channel, height, width = hidden_states.shape

|

| 205 |

+

hidden_states = hidden_states.view(batch_size, channel, height * width).transpose(1, 2)

|

| 206 |

+

|

| 207 |

+

batch_size, sequence_length, _ = (

|

| 208 |

+

hidden_states.shape if encoder_hidden_states is None else encoder_hidden_states.shape

|

| 209 |

+

)

|

| 210 |

+

|

| 211 |

+

if attention_mask is not None:

|

| 212 |

+

attention_mask = attn.prepare_attention_mask(attention_mask, sequence_length, batch_size)

|

| 213 |

+

# scaled_dot_product_attention expects attention_mask shape to be

|

| 214 |

+

# (batch, heads, source_length, target_length)

|

| 215 |

+

attention_mask = attention_mask.view(batch_size, attn.heads, -1, attention_mask.shape[-1])

|

| 216 |

+

|

| 217 |

+

if attn.group_norm is not None:

|

| 218 |

+

hidden_states = attn.group_norm(hidden_states.transpose(1, 2)).transpose(1, 2)

|

| 219 |

+

|

| 220 |

+

args = () if USE_PEFT_BACKEND else (scale,)

|

| 221 |

+

query = attn.to_q(hidden_states, *args)

|

| 222 |

+

|

| 223 |

+

if encoder_hidden_states is None:

|

| 224 |

+

encoder_hidden_states = hidden_states

|

| 225 |

+

elif attn.norm_cross:

|

| 226 |

+

encoder_hidden_states = attn.norm_encoder_hidden_states(encoder_hidden_states)

|

| 227 |

+

|

| 228 |

+

key = attn.to_k(encoder_hidden_states, *args)

|

| 229 |

+

value = attn.to_v(encoder_hidden_states, *args)

|

| 230 |

+

|

| 231 |

+

inner_dim = key.shape[-1]

|

| 232 |

+

head_dim = inner_dim // attn.heads

|

| 233 |

+

|

| 234 |

+

query = query.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

|

| 235 |

+

|

| 236 |

+

key = key.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

|

| 237 |

+

value = value.view(batch_size, -1, attn.heads, head_dim).transpose(1, 2)

|

| 238 |

+

|

| 239 |

+

# the output of sdp = (batch, num_heads, seq_len, head_dim)

|

| 240 |

+

# TODO: add support for attn.scale when we move to Torch 2.1

|

| 241 |

+

hidden_states = F.scaled_dot_product_attention(

|

| 242 |

+

query, key, value, attn_mask=attention_mask, dropout_p=0.0, is_causal=False

|

| 243 |

+

)

|

| 244 |

+

|

| 245 |

+

hidden_states = hidden_states.transpose(1, 2).reshape(batch_size, -1, attn.heads * head_dim)

|

| 246 |

+

hidden_states = hidden_states.to(query.dtype)

|

| 247 |

+

|

| 248 |

+

# linear proj

|

| 249 |

+

hidden_states = attn.to_out[0](hidden_states, *args)

|

| 250 |

+

# dropout

|

| 251 |

+

hidden_states = attn.to_out[1](hidden_states)

|

| 252 |

+

|

| 253 |

+

if input_ndim == 4:

|

| 254 |

+

hidden_states = hidden_states.transpose(-1, -2).reshape(batch_size, channel, height, width)

|

| 255 |

+

|

| 256 |

+

if attn.residual_connection:

|

| 257 |

+

hidden_states = hidden_states + residual

|

| 258 |

+

|

| 259 |

+

hidden_states = hidden_states / attn.rescale_output_factor

|

| 260 |

+

|

| 261 |

+

return hidden_states

|

| 262 |

+

|

| 263 |

+

|

| 264 |

+

class REFAttnProcessor2_0(nn.Module):

|

| 265 |

+

def __init__(self, name, type="read"):

|

| 266 |

+

super().__init__()

|

| 267 |

+

if not hasattr(F, "scaled_dot_product_attention"):

|

| 268 |

+

raise ImportError("AttnProcessor2_0 requires PyTorch 2.0, to use it, please upgrade PyTorch to 2.0.")

|

| 269 |

+

self.name = name

|

| 270 |

+

self.type = type

|

| 271 |

+

|

| 272 |

+

def __call__(

|

| 273 |

+

self,

|

| 274 |

+

attn: Attention,

|

| 275 |

+

hidden_states: torch.FloatTensor,

|

| 276 |

+

encoder_hidden_states: Optional[torch.FloatTensor] = None,

|

| 277 |

+

attention_mask: Optional[torch.FloatTensor] = None,

|

| 278 |

+

temb: Optional[torch.FloatTensor] = None,

|

| 279 |

+

scale: float = 1.0,

|

| 280 |

+

attn_store=None,

|

| 281 |

+

do_classifier_free_guidance=False,

|

| 282 |

+

enable_cloth_guidance=True

|

| 283 |

+

) -> torch.FloatTensor:

|

| 284 |

+

if self.type == "read":

|

| 285 |

+

attn_store[self.name] = hidden_states

|

| 286 |

+

elif self.type == "write":

|

| 287 |

+

ref_hidden_states = attn_store[self.name]

|

| 288 |

+

if do_classifier_free_guidance:

|

| 289 |

+

empty_copy = torch.zeros_like(ref_hidden_states)

|

| 290 |

+

if enable_cloth_guidance:

|

| 291 |

+

ref_hidden_states = torch.cat([empty_copy, ref_hidden_states, ref_hidden_states])

|

| 292 |

+

else:

|

| 293 |

+

ref_hidden_states = torch.cat([empty_copy, ref_hidden_states])

|

| 294 |

+

hidden_states = torch.cat([hidden_states, ref_hidden_states], dim=1)

|

| 295 |

+

else:

|

| 296 |

+

raise ValueError("unsupport type")

|

| 297 |

+

residual = hidden_states

|

| 298 |

+

if attn.spatial_norm is not None:

|

| 299 |

+

hidden_states = attn.spatial_norm(hidden_states, temb)

|

| 300 |

+

|

| 301 |

+

input_ndim = hidden_states.ndim

|

| 302 |

+

|

| 303 |

+

if input_ndim == 4:

|

| 304 |

+