license: apache-2.0

language:

- bg

- ca

- cs

- cy

- da

- de

- el

- en

- es

- et

- eu

- fi

- fr

- ga

- gl

- hr

- hu

- it

- lt

- lv

- mt

- nl

- nb

- 'no'

- nn

- oc

- pl

- pt

- ro

- ru

- sl

- sk

- sr

- sv

- uk

- ast

- an

Salamandra Model Card

SalamandraTA-7b-instruct is a translation LLM that has been instruction-tuned from SalamandraTA-7b-base. The base model results from continually pre-training Salamandra-7b on parallel data. The model is proficient in 37 European languages and supports translation-related tasks, namely: sentence-level translation, paragraph-level translation, document-level translation, automatic post-editing, machine translation evaluation, multi-reference translation, named-entity recognition and context-aware translation.

DISCLAIMER: This version of Salamandra is tailored exclusively for translation tasks. It lacks chat capabilities and has not been trained with any chat instructions.

Hardware and Software

Training Framework

SalamandraTA-7b-base was continually pre-trained using NVIDIA’s NeMo Framework, which leverages PyTorch Lightning for efficient model training in highly distributed settings.

SalamandraTA-7b-instruct was produced with FastChat.

Compute Infrastructure

All models were trained on MareNostrum 5, a pre-exascale EuroHPC supercomputer hosted and operated by Barcelona Supercomputing Center.

The accelerated partition is composed of 1,120 nodes with the following specifications:

- 4x Nvidia Hopper GPUs with 64GB HBM2 memory

- 2x Intel Sapphire Rapids 8460Y+ at 2.3 GHz and 32c each (64 cores)

- 4x NDR200 (BW per node 800Gb/s)

- 512 GB of Main memory (DDR5)

- 460GB on NVMe storage

How to use

You can translate between the following 37 languages:

Aragonese, Aranese, Asturian, Basque, Bulgarian, Croatian, Czech, Danish, Dutch, English, Estonian, Finnish, French, Galician, German, Greek, Hungarian, Irish, Italian, Latvian, Lithuanian, Maltese, Norwegian Bokmål, Norwegian Nynorsk, Occitan, Polish, Portuguese, Romanian, Russian, Serbian, Slovak, Slovenian, Spanish, Swedish, Ukrainian, Valencian, Welsh.

The instruction-following model uses the commonly adopted ChatML template:

<|im_start|>system

{SYSTEM PROMPT}<|im_end|>

<|im_start|>user

{USER PROMPT}<|im_end|>

<|im_start|>assistant

{MODEL RESPONSE}<|im_end|>

<|im_start|>user

[...]

The easiest way to apply it is by using the tokenizer's built-in functions, as shown in the following snippet.

from datetime import datetime

from transformers import AutoTokenizer, AutoModelForCausalLM

import transformers

import torch

model_id = "BSC-LT/salamandraTA-7b-instruct"

source = 'Spanish'

target = 'Catalan'

sentence = "Ayer se fue, tomó sus cosas y se puso a navegar. Una camisa, un pantalón vaquero y una canción, dónde irá, dónde irá. Se despidió, y decidió batirse en duelo con el mar. Y recorrer el mundo en su velero. Y navegar, nai-na-na, navegar"

text = f"Translate the following text from {source} into {target}.\n{source}: {sentence} \n{target}:"

tokenizer = AutoTokenizer.from_pretrained(model_id)

stop_sequence = '<|im_end|>'

eos_tokens = [tokenizer.eos_token_id,tokenizer.convert_tokens_to_ids(stop_sequence)]

model = AutoModelForCausalLM.from_pretrained(
    model_id,
    device_map="auto",
    torch_dtype=torch.bfloat16
)

message = [ { "role": "user", "content": text } ]

date_string = datetime.today().strftime('%Y-%m-%d')

prompt = tokenizer.apply_chat_template(
    message,
    tokenize=False,
    add_generation_prompt=True,
    date_string=date_string
)

inputs = tokenizer.encode(prompt, add_special_tokens=False, return_tensors="pt")

input_length = inputs.shape[1]

outputs = model.generate(
    input_ids=inputs.to(model.device),
    max_new_tokens=400,
    early_stopping=True,
    eos_token_id=eos_tokens,
    pad_token_id=tokenizer.eos_token_id,
    num_beams=5
)

print(tokenizer.decode(outputs[0, input_length:], skip_special_tokens=True))

# Ahir se'n va anar, va recollir les seves coses i es va fer a la mar. Una camisa, uns texans i una cançó, on anirà, on anirà. Es va acomiadar i va decidir batre's en duel amb el mar. I fer la volta al món en el seu veler. I navegar, nai-na-na, navegar

Using this template, each turn is preceded by a <|im_start|> delimiter and the role of the entity (either user, for content supplied by the user, or assistant, for LLM responses), and ends with the <|im_end|> token.
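As a rough illustration of the turn structure described above, the following sketch renders a list of messages into a ChatML string by hand. The function name `render_chatml` and its parameters are hypothetical and not part of the model's tooling. The tokenizer's own chat template may add details not shown here (for example a system turn), so `apply_chat_template` remains the reference way to build prompts.

```python
IM_START = "<|im_start|>"
IM_END = "<|im_end|>"


def render_chatml(messages, add_generation_prompt=True):
    """Render role/content messages as a ChatML string (illustrative sketch only)."""
    parts = []
    for message in messages:
        # Each turn: start delimiter, role, newline, content, end token.
        parts.append(f"{IM_START}{message['role']}\n{message['content']}{IM_END}\n")
    if add_generation_prompt:
        # Open an assistant turn so the model continues with its response.
        parts.append(f"{IM_START}assistant\n")
    return "".join(parts)


if __name__ == "__main__":
    demo = [{"role": "user", "content": "Translate the following text from Spanish into Catalan.\nSpanish: Hola \nCatalan:"}]
    print(render_chatml(demo))
```

Comparing the output of this sketch with the string returned by the tokenizer is a quick way to see what the built-in template adds.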

Post-editing

For post-editing tasks you can try using the following prompt template:

source = 'Catalan'

target = 'English'

source_sentence = 'Necessite saber qui son Rafael Nadal i Maria Magdalena.'

machine_translation = 'I need to know who is Rafael Christmas and Maria the Muffin.'

text = f"Please fix any mistakes in the following {source}-{target} machine translation or keep it unedited if it's correct.\nSource: {source_sentence} \nMT: {machine_translation} \nCorrected:"

# I need to know who is Rafael Nadal and Maria Magdalena.
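If the post-editing prompt is needed in several places, it can be wrapped in a small helper. This is only a sketch: the function name `build_post_edit_prompt` is hypothetical, and the template string is copied from the example above, including its spacing. The returned text would then be passed as the user message to the chat template in the same way as a plain translation request.

```python
def build_post_edit_prompt(source, target, source_sentence, machine_translation):
    """Build the post-editing prompt shown in the model card (illustrative helper)."""
    return (
        f"Please fix any mistakes in the following {source}-{target} machine translation "
        f"or keep it unedited if it's correct.\n"
        f"Source: {source_sentence} \n"
        f"MT: {machine_translation} \n"
        f"Corrected:"
    )


if __name__ == "__main__":
    text = build_post_edit_prompt(
        "Catalan",
        "English",
        "Necessite saber qui son Rafael Nadal i Maria Magdalena.",
        "I need to know who is Rafael Christmas and Maria the Muffin.",
    )
    # The prompt becomes the content of a single user turn.
    message = [{"role": "user", "content": text}]
    print(message[0]["content"])
```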

Data

Pretraining Data

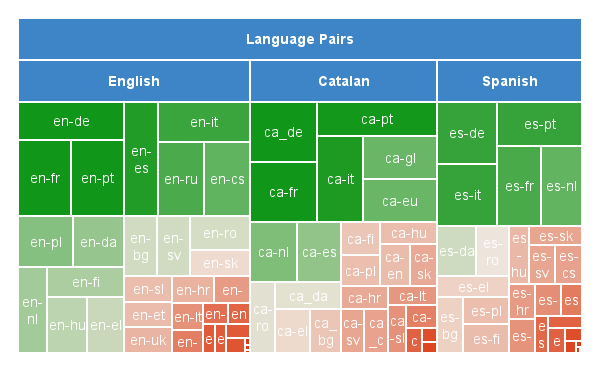

The training corpus consists of 252 billion tokens of Catalan-centric, Spanish-centric, and English-centric parallel data, including all of the official European languages plus Catalan, Basque, Galician, Asturian, Aragonese and Aranese. It amounts to 6,574,251,526 parallel sentence pairs.

This highly multilingual corpus is predominantly composed of data sourced from OPUS, with additional data taken from the NTEU project, Project Aina’s existing corpora, and our own curated datasets. Where little parallel Catalan <-> xx data could be found, synthetic Catalan data was generated from the Spanish side of the collected Spanish <-> xx corpora using Projecte Aina’s Spanish-Catalan model. The final distribution of languages is shown in the figure in this section.

The full list of corpora included in the training data is given in the table below.

Data Sources

| Dataset | Ca-xx Languages | Es-xx Languages | En-xx Languages |

|---|---|---|---|

| AINA | en | ||

| ARANESE-SYNTH-CORPUS-BSC | arn | ||

| BOUA-BSC | val | ||

| BOUMH | val | ||

| BOUA-PILAR | val | ||

| CCMatrix | eu | ga | |

| DGT | bg,cs,da,de,el,et,fi,fr,ga,hr,hu,lt,lv,mt,nl,pl,pt,ro,sk,sl,sv | da,et,ga,hr,hu,lt,lv,mt,sh,sl | |

| DOGV-BSC | val | ||

| DOGV-PILAR | val | ||

| ELRC-EMEA | bg,cs,da,hu,lt,lv,mt,pl,ro,sk,sl | et,hr,lv,ro,sk,sl | |

| EMEA | bg,cs,da,el,fi,hu,lt,mt,nl,pl,ro,sk,sl,sv | et,mt | |

| EUBookshop | lt,pl,pt | cs,da,de,el,fi,fr,ga,it,lv,mt,nl,pl,pt,ro,sk,sl,sv | cy,ga |

| Europarl | bg,cs,da,el,en,fi,fr,hu,lt,lv,nl,pl,pt,ro,sk,sl,sv | ||

| Europat | en,hr | no | |

| GAITU | eu | ||

| KDE4 | bg,cs,da,de,el,et,eu,fi,fr,ga,gl,hr,it,lt,lv,nl,pl,pt,ro,sk,sl,sv | bg,ga,hr | cy,ga,nn,oc |

| GlobalVoices | bg,de,fr,it,nl,pl,pt | bg,de,fr,pt | |

| GNOME | eu,fr,ga,gl,pt | ga | cy,ga,nn |

| JRC-Acquis | cs,da,et,fr,lt,lv,mt,nl,pl,ro,sv | et | |

| LES-CORTS-VALENCIANES-BSC | val | ||

| MaCoCu | en | hr,mt,uk | |

| MultiCCAligned | bg,cs,de,el,et,fi,fr,hr,hu,it,lt,lv,nl,pl,ro,sk,sv | bg,fi,fr,hr,it,lv,nl,pt | bg,cy,da,et,fi,hr,hu,lt,lv,no,sl,sr,uk |

| MultiHPLT | en,et,fi,ga,hr,mt | fi,ga,gl,hr,mt,nn,sr | |

| MultiParaCrawl | bg,da | de,en,fr,ga,hr,hu,it,mt,pt | bg,cs,da,de,el,et,fi,fr,ga,hr,hu,lt,lv,mt,nn,pl,ro,sk,sl,uk |

| MultiUN | fr | ||

| News-Commentary | fr | ||

| NLLB | bg,da,el,en,et,fi,fr,gl,hu,it,lt,lv,pt,ro,sk,sl | bg,cs,da,de,el,et,fi,fr,hu,it,lt,lv,nl,pl,pt,ro,sk,sl,sv | bg,cs,cy,da,de,el,et,fi,fr,ga,hr,hu,it,lt,lv,mt,nl,no,oc,pl,pt,ro,ru,sk,sl,sr,sv,uk |

| NÓS | gl | ||

| NÓS-SYN | gl | ||

| NTEU | bg,cs,da,de,el,en,et,fi,fr,ga,hr,hu,it,lt,lv,mt,nl,pl,pt,ro,sk,sl,sv | da,et,ga,hr,lt,lv,mt,ro,sk,sl,sv | |

| OpenSubtitles | bg,cs,da,de,el,et,eu,fi,gl,hr,hu,lt,lv,nl,pl,pt,ro,sk,sl,sv | da,de,fi,fr,hr,hu,it,lv,nl | bg,cs,de,el,et,hr,fi,fr,hr,hu,no,sl,sr |

| OPUS-100 | en | gl | |

| StanfordNLP-NMT | cs | ||

| Tatoeba | de,pt | pt | |

| TildeModel | bg | et,hr,lt,lv,mt | |

| UNPC | en,fr | ru | |

| VALENCIAN-AUTH | val | ||

| VALENCIAN-SYNTH | val | ||

| WikiMatrix | bg,cs,da,de,el,et,eu,fi,fr,gl,hr,hu,it,lt,nl,pl,pt,ro,sk,sl,sv | bg,en,fr,hr,it,pt | oc,sh |

| Wikimedia | cy,nn | ||

| XLENT | eu,ga,gl | ga | cy,et,ga,gl,hr,oc,sh |

To consult the data summary document with the respective licences, please send an e-mail to ipr@bsc.es.

References

- Aulamo, M., Sulubacak, U., Virpioja, S., & Tiedemann, J. (2020). OpusTools and Parallel Corpus Diagnostics. In N. Calzolari, F. Béchet, P. Blache, K. Choukri, C. Cieri, T. Declerck, S. Goggi, H. Isahara, B. Maegaard, J. Mariani, H. Mazo, A. Moreno, J. Odijk, & S. Piperidis (Eds.), Proceedings of the Twelfth Language Resources and Evaluation Conference (pp. 3782–3789). European Language Resources Association. https://aclanthology.org/2020.lrec-1.467

- Chaudhary, V., Tang, Y., Guzmán, F., Schwenk, H., & Koehn, P. (2019). Low-Resource Corpus Filtering Using Multilingual Sentence Embeddings. In O. Bojar, R. Chatterjee, C. Federmann, M. Fishel, Y. Graham, B. Haddow, M. Huck, A. J. Yepes, P. Koehn, A. Martins, C. Monz, M. Negri, A. Névéol, M. Neves, M. Post, M. Turchi, & K. Verspoor (Eds.), Proceedings of the Fourth Conference on Machine Translation (Volume 3: Shared Task Papers, Day 2) (pp. 261–266). Association for Computational Linguistics. https://doi.org/10.18653/v1/W19-5435

- DGT-Translation Memory—European Commission. (n.d.). Retrieved November 4, 2024, from https://joint-research-centre.ec.europa.eu/language-technology-resources/dgt-translation-memory_en

- Eisele, A., & Chen, Y. (2010). MultiUN: A Multilingual Corpus from United Nation Documents. In N. Calzolari, K. Choukri, B. Maegaard, J. Mariani, J. Odijk, S. Piperidis, M. Rosner, & D. Tapias (Eds.), Proceedings of the Seventh International Conference on Language Resources and Evaluation (LREC’10). European Language Resources Association (ELRA). http://www.lrec-conf.org/proceedings/lrec2010/pdf/686_Paper.pdf

- El-Kishky, A., Chaudhary, V., Guzmán, F., & Koehn, P. (2020). CCAligned: A Massive Collection of Cross-Lingual Web-Document Pairs. Proceedings of the 2020 Conference on Empirical Methods in Natural Language Processing (EMNLP), 5960–5969. https://doi.org/10.18653/v1/2020.emnlp-main.480

- El-Kishky, A., Renduchintala, A., Cross, J., Guzmán, F., & Koehn, P. (2021). XLEnt: Mining a Large Cross-lingual Entity Dataset with Lexical-Semantic-Phonetic Word Alignment. Proceedings of the 2021 Conference on Empirical Methods in Natural Language Processing, 10424–10430. https://doi.org/10.18653/v1/2021.emnlp-main.814

- Fan, A., Bhosale, S., Schwenk, H., Ma, Z., El-Kishky, A., Goyal, S., Baines, M., Celebi, O., Wenzek, G., Chaudhary, V., Goyal, N., Birch, T., Liptchinsky, V., Edunov, S., Grave, E., Auli, M., & Joulin, A. (2020). Beyond English-Centric Multilingual Machine Translation (No. arXiv:2010.11125). arXiv. https://doi.org/10.48550/arXiv.2010.11125

- García-Martínez, M., Bié, L., Cerdà, A., Estela, A., Herranz, M., Krišlauks, R., Melero, M., O’Dowd, T., O’Gorman, S., Pinnis, M., Stafanovič, A., Superbo, R., & Vasiļevskis, A. (2021). Neural Translation for European Union (NTEU). 316–334. https://aclanthology.org/2021.mtsummit-up.23

- Gibert, O. de, Nail, G., Arefyev, N., Bañón, M., Linde, J. van der, Ji, S., Zaragoza-Bernabeu, J., Aulamo, M., Ramírez-Sánchez, G., Kutuzov, A., Pyysalo, S., Oepen, S., & Tiedemann, J. (2024). A New Massive Multilingual Dataset for High-Performance Language Technologies (No. arXiv:2403.14009). arXiv. http://arxiv.org/abs/2403.14009

- Koehn, P. (2005). Europarl: A Parallel Corpus for Statistical Machine Translation. Proceedings of Machine Translation Summit X: Papers, 79–86. https://aclanthology.org/2005.mtsummit-papers.11

- Kreutzer, J., Caswell, I., Wang, L., Wahab, A., Van Esch, D., Ulzii-Orshikh, N., Tapo, A., Subramani, N., Sokolov, A., Sikasote, C., Setyawan, M., Sarin, S., Samb, S., Sagot, B., Rivera, C., Rios, A., Papadimitriou, I., Osei, S., Suarez, P. O., … Adeyemi, M. (2022). Quality at a Glance: An Audit of Web-Crawled Multilingual Datasets. Transactions of the Association for Computational Linguistics, 10, 50–72. https://doi.org/10.1162/tacl_a_00447

- Rozis, R., & Skadiņš, R. (2017). Tilde MODEL - Multilingual Open Data for EU Languages. https://aclanthology.org/W17-0235

- Schwenk, H., Chaudhary, V., Sun, S., Gong, H., & Guzmán, F. (2019). WikiMatrix: Mining 135M Parallel Sentences in 1620 Language Pairs from Wikipedia (No. arXiv:1907.05791). arXiv. https://doi.org/10.48550/arXiv.1907.05791

- Schwenk, H., Wenzek, G., Edunov, S., Grave, E., & Joulin, A. (2020). CCMatrix: Mining Billions of High-Quality Parallel Sentences on the WEB (No. arXiv:1911.04944). arXiv. https://doi.org/10.48550/arXiv.1911.04944

- Steinberger, R., Pouliquen, B., Widiger, A., Ignat, C., Erjavec, T., Tufiş, D., & Varga, D. (n.d.). The JRC-Acquis: A Multilingual Aligned Parallel Corpus with 20+ Languages. http://www.lrec-conf.org/proceedings/lrec2006/pdf/340_pdf

- Subramani, N., Luccioni, S., Dodge, J., & Mitchell, M. (2023). Detecting Personal Information in Training Corpora: An Analysis. In A. Ovalle, K.-W. Chang, N. Mehrabi, Y. Pruksachatkun, A. Galystan, J. Dhamala, A. Verma, T. Cao, A. Kumar, & R. Gupta (Eds.), Proceedings of the 3rd Workshop on Trustworthy Natural Language Processing (TrustNLP 2023) (pp. 208–220). Association for Computational Linguistics. https://doi.org/10.18653/v1/2023.trustnlp-1.18

- Tiedemann, J. (2012). Parallel Data, Tools and Interfaces in OPUS. In N. C. (Conference Chair), K. Choukri, T. Declerck, M. U. Doğan, B. Maegaard, J. Mariani, A. Moreno, J. Odijk, & S. Piperidis (Eds.), Proceedings of the Eight International Conference on Language Resources and Evaluation (LREC’12). European Language Resources Association (ELRA). http://www.lrec-conf.org/proceedings/lrec2012/pdf/463_Paper

- Ziemski, M., Junczys-Dowmunt, M., & Pouliquen, B. (n.d.). The United Nations Parallel Corpus v1.0. https://aclanthology.org/L16-1561

Instruction Tuning Data

This model has been fine-tuned on ~135k instructions, primarily targeting machine translation performance for Catalan, English, and Spanish. Additional instruction data for other European and closely related Iberian languages was also included, as it yielded a positive impact on the languages of interest. That said, the performance in these additional languages is not guaranteed due to the limited amount of available data and the lack of resources for thorough testing.

A portion of our fine-tuning data comes directly from, or is sampled from, TowerBlocks. We also created additional datasets for our main languages of interest. While tasks relating to machine translation are included, it’s important to note that no chat data was used in the fine-tuning process.

The full list of tasks included in the fine-tuning data is given in the table below.

Data Sources

| Task | Source | Languages | Count |

|---|---|---|---|

| Multi-reference Translation | TowerBlocks: Tatoeba Dev (filtered) | mixed | 10000 |

| Paraphrase | TowerBlocks: PAWS-X Dev | mixed | 3521 |

| Named-entity Recognition | AnCora-Ca-NER | ca | 12059 |

| Named-entity Recognition | BasqueGLUE, EusIE | eu | 4304 |

| Named-entity Recognition | SLI NERC Galician Gold Corpus | gl | 6483 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | pt | 854 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | nl | 800 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | es | 1654 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | en | 1671 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | ru | 800 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | it | 858 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | fr | 857 |

| Named-entity Recognition | TowerBlocks: MultiCoNER 2022 and 2023 Dev | de | 1312 |

| Terminology-aware Translation | TowerBlocks: WMT21 Terminology Dev (filtered) | en-ru | 50 |

| Terminology-aware Translation | TowerBlocks: WMT21 Terminology Dev (filtered) | en-fr | 29 |

| Automatic Post Edition | TowerBlocks: QT21, ApeQuest | en-fr | 6133 |

| Automatic Post Edition | TowerBlocks: QT21, ApeQuest | en-nl | 9077 |

| Automatic Post Edition | TowerBlocks: QT21, ApeQuest | en-pt | 5762 |

| Automatic Post Edition | TowerBlocks: QT21, ApeQuest | de-en | 10000 |

| Automatic Post Edition | TowerBlocks: QT21, ApeQuest | en-de | 10000 |

| Machine Translation Evaluation | TowerBlocks-sample: WMT20 to WMT22 Metrics MQM, WMT17 to WMT22 Metrics Direct Assessments | en-ru, en-pl, ru-en, en-de, en-ru, de-fr, de-en, en-de | 353 |

| Machine Translation Evaluation | Non-public | four pivot languages (eu, es, ca, gl) paired with European languages (bg, cs, da, de, el, en, et, fi, fr, ga, hr, hu, it, lt, lv, mt, nl, pl, pt, ro, sk, sl, sv) | 9700 |

| General Machine Translation | TowerBlocks: WMT14 to WMT21, NTREX, Flores Dev, FRMT, QT21, ApeQuest, OPUS (Quality Filtered), MT-GenEval | nl-en, en-ru, it-en, fr-en, es-en, en-fr, ru-en, fr-de, en-nl, de-fr | 500 |

| General Machine Translation | Non-public | three pivot languages (es, ca, en) paired with European languages (ast, arn, arg, bg, cs, cy, da, de, el, et, fi, ga, gl, hr, it, lt, lv, mt, nb, nn, nl, oc, pl, pt, ro, ru, sk, sl, sr, sv, uk, eu) | 9350 |

| Fill-in-the-Blank | Non-public | five pivot languages (ca, es, eu, gl, en) paired with European languages (cs, da, de, el, et, fi, fr, ga, hr, hu, it, lt, lv, mt, nl, pl, pt, ro, sk, sl, sv) | 11500 |

| Document-level Translation | Non-public | two pivot languages (es, en) paired with European languages (bg, cs, da, de, el, et, fi, fr, hu, it, lt, lv, nl, pl, pt, ro, ru, sk, sv) | 7600 |

| Paragraph-level Translation | Non-public | two pivot languages (es, en) paired with European languages (bg, cs, da, de, el, et, fi, fr, hu, it, lt, lv, nl, pl, pt, ro, ru, sk, sv) | 7600 |

| Context-Aware Translation | TowerBlocks: MT-GenEval | en-it | 348 |

| Context-Aware Translation | TowerBlocks: MT-GenEval | en-ru | 454 |

| Context-Aware Translation | TowerBlocks: MT-GenEval | en-fr | 369 |

| Context-Aware Translation | TowerBlocks: MT-GenEval | en-nl | 417 |

| Context-Aware Translation | TowerBlocks: MT-GenEval | en-es | 431 |

| Context-Aware Translation | TowerBlocks: MT-GenEval | en-de | 558 |

| **Total** | | | 135,404 |

The non-public portion of this dataset was jointly created by BSC, HiTZ, and CiTIUS. For further information regarding the instruction-tuning data, please contact langtech@bsc.es.
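To see how the instruction data is distributed across task types, the per-row counts from the table above can be tallied with a few lines of Python. This is only a bookkeeping sketch: the dictionary name `TASK_COUNTS` is made up for illustration, and the numbers are copied from the Count column, one entry per table row.

```python
# Counts copied from the "Count" column of the table above, grouped by task.
TASK_COUNTS = {
    "Multi-reference Translation": [10000],
    "Paraphrase": [3521],
    "Named-entity Recognition": [12059, 4304, 6483, 854, 800, 1654, 1671, 800, 858, 857, 1312],
    "Terminology-aware Translation": [50, 29],
    "Automatic Post Edition": [6133, 9077, 5762, 10000, 10000],
    "Machine Translation Evaluation": [353, 9700],
    "General Machine Translation": [500, 9350],
    "Fill-in-the-Blank": [11500],
    "Document-level Translation": [7600],
    "Paragraph-level Translation": [7600],
    "Context-Aware Translation": [348, 454, 369, 417, 431, 558],
}

# Sum the rows of each task, then sum across tasks.
per_task = {task: sum(counts) for task, counts in TASK_COUNTS.items()}
total = sum(per_task.values())

# Print tasks from largest to smallest with their share of the total.
for task, count in sorted(per_task.items(), key=lambda item: item[1], reverse=True):
    print(f"{task:32s} {count:>7,d} {count / total:6.1%}")
print(f"{'Total':32s} {total:>7,d}")
```

The sum matches the 135,404 reported in the Total row, with automatic post-editing and named-entity recognition as the two largest groups.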

Evaluation

Below are the evaluation results on the Flores+200 devtest set, compared against the state-of-the-art MADLAD400-7B model (Kudugunta, S., et al.) and the SalamandraTA-7b-base model. These results cover the translation directions CA-XX, ES-XX, and EN-XX, as well as XX-CA, XX-ES, and XX-EN. The metrics have been computed excluding Asturian, Aranese, and Aragonese, as we report them separately. The evaluation was conducted using MT Lens following the standard setting (beam search with beam size 5, limiting the translation length to 500 tokens). We report the following metrics:

- BLEU: Sacrebleu implementation. Signature: nrefs:1|case:mixed|eff:no|tok:13a|smooth:exp|version:2.3.1

- TER: Sacrebleu implementation.

- ChrF: Sacrebleu implementation.

- Comet: Model checkpoint: "Unbabel/wmt22-comet-da".

- Comet-kiwi: Model checkpoint: "Unbabel/wmt22-cometkiwi-da".

- Bleurt: Model checkpoint: "lucadiliello/BLEURT-20".

- MetricX: Model checkpoint: "google/metricx-23-xl-v2p0".

- MetricX-QE: Model checkpoint: "google/metricx-23-qe-xl-v2p0".

English

This section presents the evaluation metrics for the English translation tasks.

| Model | Bleu↑ | Ter↓ | ChrF↑ | Comet↑ | Comet-kiwi↑ | Bleurt↑ | MetricX↓ | MetricX-QE↓ |

|---|---|---|---|---|---|---|---|---|

| EN-XX | ||||||||

| SalamandraTA-7b-instruct | 36.29 | 50.62 | 63.3 | 0.89 | 0.85 | 0.79 | 1.02 | 0.94 |

| MADLAD400-7B | 35.73 | 51.87 | 63.46 | 0.88 | 0.85 | 0.79 | 1.16 | 1.1 |

| SalamandraTA-7b-base | 34.99 | 52.64 | 62.58 | 0.87 | 0.84 | 0.77 | 1.45 | 1.23 |

| XX-EN | ||||||||

| SalamandraTA-7b-instruct | 44.69 | 41.72 | 68.17 | 0.89 | 0.85 | 0.8 | 1.09 | 1.11 |

| SalamandraTA-7b-base | 44.12 | 43 | 68.43 | 0.89 | 0.85 | 0.8 | 1.13 | 1.22 |

| MADLAD400-7B | 43.2 | 43.33 | 67.98 | 0.89 | 0.86 | 0.8 | 1.13 | 1.15 |
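The arrows in the table header indicate whether a higher (↑) or lower (↓) value is better. As a reading aid, the sketch below applies those directions to the EN-XX rows above and lists the leading system for each metric. The names `HIGHER_IS_BETTER`, `EN_XX`, and `best_systems` are illustrative. The scores are the rounded values from the table, so ties may simply reflect rounding.

```python
# Metric direction as marked by the arrows in the table header:
# True means higher is better, False means lower is better.
HIGHER_IS_BETTER = {
    "Bleu": True, "Ter": False, "ChrF": True, "Comet": True,
    "Comet-kiwi": True, "Bleurt": True, "MetricX": False, "MetricX-QE": False,
}

# EN-XX scores copied from the table above, in header order.
EN_XX = {
    "SalamandraTA-7b-instruct": [36.29, 50.62, 63.3, 0.89, 0.85, 0.79, 1.02, 0.94],
    "MADLAD400-7B": [35.73, 51.87, 63.46, 0.88, 0.85, 0.79, 1.16, 1.1],
    "SalamandraTA-7b-base": [34.99, 52.64, 62.58, 0.87, 0.84, 0.77, 1.45, 1.23],
}


def best_systems(scores, directions):
    """Return, for each metric, the systems with the best score (ties are kept)."""
    winners = {}
    for index, (metric, higher) in enumerate(directions.items()):
        column = {name: values[index] for name, values in scores.items()}
        best = max(column.values()) if higher else min(column.values())
        winners[metric] = [name for name, value in column.items() if value == best]
    return winners


if __name__ == "__main__":
    for metric, names in best_systems(EN_XX, HIGHER_IS_BETTER).items():
        print(f"{metric}: {', '.join(names)}")
```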

Spanish

This section presents the evaluation metrics for the Spanish translation tasks.

| Model | Bleu↑ | Ter↓ | ChrF↑ | Comet↑ | Comet-kiwi↑ | Bleurt↑ | MetricX↓ | MetricX-QE↓ |

|---|---|---|---|---|---|---|---|---|

| ES-XX | ||||||||

| SalamandraTA-7b-instruct | 23.67 | 65.71 | 53.55 | 0.87 | 0.82 | 0.75 | 1.04 | 1.05 |

| MADLAD400-7B | 22.48 | 68.91 | 53.93 | 0.86 | 0.83 | 0.75 | 1.09 | 1.14 |

| SalamandraTA-7b-base | 21.63 | 70.08 | 52.98 | 0.86 | 0.83 | 0.74 | 1.24 | 1.12 |

| XX-ES | ||||||||

| SalamandraTA-7b-instruct | 25.56 | 62.51 | 52.69 | 0.85 | 0.83 | 0.73 | 0.94 | 1.33 |

| MADLAD400-7B | 24.85 | 61.82 | 53 | 0.85 | 0.84 | 0.74 | 1.05 | 1.5 |

| SalamandraTA-7b-base | 24.71 | 62.33 | 52.96 | 0.85 | 0.84 | 0.73 | 1.06 | 1.37 |

Catalan

This section presents the evaluation metrics for the Catalan translation tasks.

| Model | Bleu↑ | Ter↓ | ChrF↑ | Comet↑ | Comet-kiwi↑ | Bleurt↑ | MetricX↓ | MetricX-QE↓ |

|---|---|---|---|---|---|---|---|---|

| CA-XX | ||||||||

| MADLAD400-7B | 29.37 | 59.01 | 58.47 | 0.87 | 0.81 | 0.77 | 1.08 | 1.31 |

| SalamandraTA-7b-instruct | 29.23 | 58.32 | 57.76 | 0.87 | 0.81 | 0.77 | 1.08 | 1.22 |

| SalamandraTA-7b-base | 29.06 | 59.32 | 58 | 0.87 | 0.81 | 0.76 | 1.23 | 1.28 |

| XX-CA | ||||||||

| SalamandraTA-7b-instruct | 33.64 | 54.49 | 59.03 | 0.86 | 0.8 | 0.75 | 1.07 | 1.6 |

| MADLAD400-7B | 33.02 | 55.01 | 59.38 | 0.86 | 0.81 | 0.75 | 1.18 | 1.79 |

| SalamandraTA-7b-base | 32.75 | 55.78 | 59.42 | 0.86 | 0.81 | 0.75 | 1.17 | 1.63 |

Low-Resource Languages of Spain

The tables below summarize the performance metrics for English, Spanish, and Catalan to Asturian, Aranese and Aragonese.

English-XX

| Model | Source | Target | Bleu↑ | Ter↓ | ChrF↑ |

|---|---|---|---|---|---|

| SalamandraTA-7b-instruct | en | ast | 31.49 | 54.01 | 60.65 |

| SalamandraTA-7b-base | en | ast | 26.4 | 64.02 | 57.35 |

| nllb-3.3B | en | ast | 22.02 | 77.26 | 51.4 |

| SalamandraTA-7b-instruct | en | arn | 13.04 | 87.13 | 37.56 |

| SalamandraTA-7b-base | en | arn | 8.36 | 90.85 | 34.06 |

| SalamandraTA-7b-instruct | en | arg | 20.43 | 65.62 | 50.79 |

| SalamandraTA-7b-base | en | arg | 12.24 | 73.48 | 44.75 |

Spanish-XX

| Model | Source | Target | Bleu↑ | Ter↓ | ChrF↑ |

|---|---|---|---|---|---|

| SalamandraTA-7b-instruct | es | ast | 21.28 | 68.11 | 52.73 |

| SalamandraTA-7b-base | es | ast | 17.65 | 75.78 | 51.05 |

| salamandraTA2B | es | ast | 16.68 | 77.29 | 49.46 |

| nllb-3.3B | es | ast | 11.85 | 100.86 | 40.27 |

| SalamandraTA-7b-base | es | arn | 29.19 | 71.85 | 49.42 |

| SalamandraTA-7b-instruct | es | arn | 26.82 | 74.04 | 47.55 |

| salamandraTA2B | es | arn | 25.41 | 74.71 | 47.33 |

| SalamandraTA-7b-base | es | arg | 53.96 | 31.51 | 76.08 |

| SalamandraTA-7b-instruct | es | arg | 47.54 | 36.57 | 72.38 |

| salamandraTA2B | es | arg | 44.57 | 37.93 | 71.32 |

Catalan-XX

| Model | Source | Target | Bleu↑ | Ter↓ | ChrF↑ |

|---|---|---|---|---|---|

| SalamandraTA-7b-instruct | ca | ast | 27.86 | 58.19 | 57.98 |

| SalamandraTA-7b-base | ca | ast | 26.11 | 63.63 | 58.08 |

| salamandraTA2B | ca | ast | 25.32 | 62.59 | 55.98 |

| nllb-3.3B | ca | ast | 17.17 | 91.47 | 45.83 |

| SalamandraTA-7b-base | ca | arn | 17.77 | 80.88 | 42.12 |

| SalamandraTA-7b-instruct | ca | arn | 16.45 | 82.01 | 41.04 |

| salamandraTA2B | ca | arn | 15.37 | 82.76 | 40.53 |

| SalamandraTA-7b-base | ca | arg | 22.53 | 62.37 | 54.32 |

| SalamandraTA-7b-instruct | ca | arg | 21.62 | 63.38 | 53.01 |

| salamandraTA2B | ca | arg | 18.6 | 65.82 | 51.21 |

Ethical Considerations and Limitations

Detailed information on the work done to examine the presence of unwanted social and cognitive biases in the base model can be found in the Salamandra-7B model card. With regard to MT models, no specific analysis has yet been carried out to evaluate potential biases or limitations in translation accuracy across different languages, dialects, or domains. However, we recognize the importance of identifying and addressing any harmful stereotypes, cultural inaccuracies, or systematic performance discrepancies that may arise in Machine Translation. As such, we plan to perform more analyses as soon as we have implemented the necessary metrics and methods within our evaluation framework MT Lens. Note that the model has only undergone preliminary instruction tuning. We urge developers to consider potential limitations and conduct safety testing and tuning tailored to their specific applications.

Additional information

Author

The Language Technologies Unit from Barcelona Supercomputing Center.

Contact

For further information, please send an email to langtech@bsc.es.

Copyright

Copyright (c) 2024 by Language Technologies Unit, Barcelona Supercomputing Center.

Funding

This work has been promoted and financed by the Government of Catalonia through the Aina Project.

This work is funded by the Ministerio para la Transformación Digital y de la Función Pública - Funded by EU – NextGenerationEU within the framework of ILENIA Project with reference 2022/TL22/00215337.

Acknowledgements

This project has benefited from the contributions of numerous teams and institutions, mainly through data contributions, knowledge transfer or technical support.

In Catalonia, many institutions have been involved in the project. Our thanks to Òmnium Cultural, Parlament de Catalunya, Institut d'Estudis Aranesos, Racó Català, Vilaweb, ACN, Nació Digital, El món and Aquí Berguedà.

At the national level, we are especially grateful to our ILENIA project partners: CENID, HiTZ and CiTIUS for their participation. We also extend our genuine gratitude to the Spanish Senate and Congress, Fundación Dialnet, and the ‘Instituto Universitario de Sistemas Inteligentes y Aplicaciones Numéricas en Ingeniería (SIANI)’ of the University of Las Palmas de Gran Canaria.

At the international level, we thank the Welsh government, DFKI, the Occiglot project, especially Malte Ostendorff, and The Common Crawl Foundation, especially Pedro Ortiz, for their collaboration. We would also like to give special thanks to the NVIDIA team, with whom we have met regularly, especially to Ignacio Sarasua, Adam Henryk Grzywaczewski, Oleg Sudakov, Sergio Perez, Miguel Martinez, Felipes Soares and Meriem Bendris. Their constant support has been especially appreciated throughout the entire process.

Their valuable efforts have been instrumental in the development of this work.

Disclaimer

Be aware that the model may contain biases or other unintended distortions. When third parties deploy systems or provide services based on this model, or use the model themselves, they bear the responsibility for mitigating any associated risks and ensuring compliance with applicable regulations, including those governing the use of Artificial Intelligence.

The Barcelona Supercomputing Center, as the owner and creator of the model, shall not be held liable for any outcomes resulting from third-party use.