metadata

license: apache-2.0

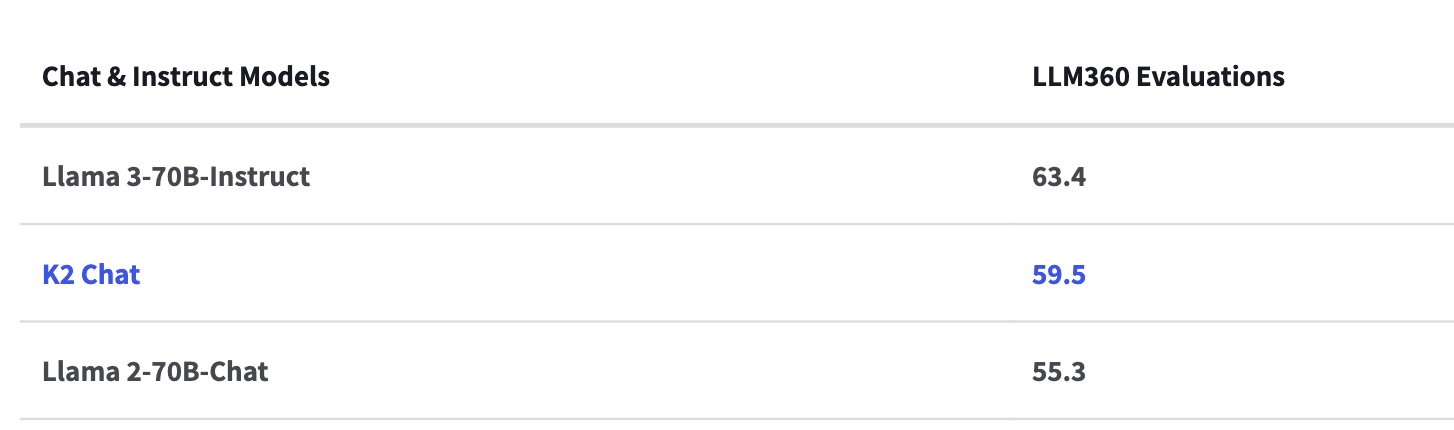

K2-Chat: a fully-reproducible large language model outperforming Llama 2 70B using 35% less compute

blurb

Loading K2-Chat

Loading K2

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("LLM360/K2-Chat")

model = AutoModelForCausalLM.from_pretrained("LLM360/K2-Chat")

prompt = 'hi how are you doing'

input_ids = tokenizer(prompt, return_tensors="pt").input_ids

gen_tokens = model.generate(input_ids, do_sample=True, max_length=128)

print("-"*20 + "Output for model" + 20 * '-')

print(tokenizer.batch_decode(gen_tokens)[0])