Update README.md

#2

by

danelcsb

- opened

README.md

CHANGED

|

@@ -1,8 +1,27 @@

|

|

| 1 |

---

|

| 2 |

library_name: transformers

|

| 3 |

-

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

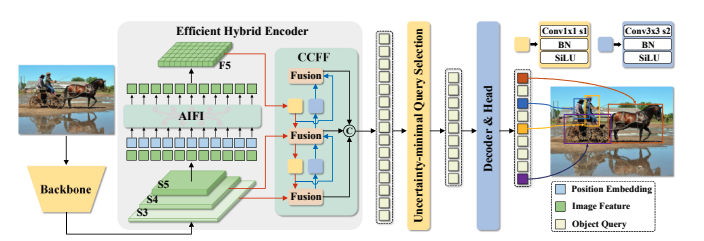

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 4 |

---

|

| 5 |

|

|

|

|

| 6 |

# Model Card for Model ID

|

| 7 |

|

| 8 |

<!-- Provide a quick summary of what the model is/does. -->

|

|

@@ -13,24 +32,41 @@ tags: []

|

|

| 13 |

|

| 14 |

### Model Description

|

| 15 |

|

| 16 |

-

| 17 |

|

| 18 |

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

|

| 19 |

|

| 20 |

-

- **Developed by:**

|

| 21 |

-

- **Funded by [optional]:**

|

| 22 |

-

|

| 23 |

-

|

| 24 |

-

- **

|

| 25 |

-

- **

|

| 26 |

-

- **

|

|

|

|

|

|

|

| 27 |

|

| 28 |

### Model Sources [optional]

|

| 29 |

|

| 30 |

<!-- Provide the basic links for the model. -->

|

| 31 |

|

| 32 |

-

- **Repository:**

|

| 33 |

-

- **Paper [optional]:**

|

| 34 |

- **Demo [optional]:** [More Information Needed]

|

| 35 |

|

| 36 |

## Uses

|

|

@@ -41,26 +77,20 @@ This is the model card of a 🤗 transformers model that has been pushed on the

|

|

| 41 |

|

| 42 |

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

|

| 43 |

|

| 44 |

-

[

|

| 45 |

|

| 46 |

### Downstream Use [optional]

|

| 47 |

|

| 48 |

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

|

| 49 |

|

| 50 |

-

[More Information Needed]

|

| 51 |

-

|

| 52 |

### Out-of-Scope Use

|

| 53 |

|

| 54 |

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

|

| 55 |

|

| 56 |

-

[More Information Needed]

|

| 57 |

-

|

| 58 |

## Bias, Risks, and Limitations

|

| 59 |

|

| 60 |

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

|

| 61 |

|

| 62 |

-

[More Information Needed]

|

| 63 |

-

|

| 64 |

### Recommendations

|

| 65 |

|

| 66 |

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

|

|

@@ -71,7 +101,32 @@ Users (both direct and downstream) should be made aware of the risks, biases and

|

|

| 71 |

|

| 72 |

Use the code below to get started with the model.

|

| 73 |

|

| 74 |

-

| 75 |

|

| 76 |

## Training Details

|

| 77 |

|

|

@@ -79,55 +134,55 @@ Use the code below to get started with the model.

|

|

| 79 |

|

| 80 |

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

|

| 81 |

|

| 82 |

-

[

|

| 83 |

|

| 84 |

### Training Procedure

|

| 85 |

|

| 86 |

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

|

| 87 |

|

| 88 |

-

| 89 |

|

| 90 |

-

|

| 91 |

|

|

|

|

| 92 |

|

| 93 |

#### Training Hyperparameters

|

| 94 |

|

| 95 |

-

- **Training regime:**

|

|

|

|

|

|

|

| 96 |

|

| 97 |

#### Speeds, Sizes, Times [optional]

|

| 98 |

|

| 99 |

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

|

| 100 |

|

| 101 |

-

[More Information Needed]

|

| 102 |

-

|

| 103 |

## Evaluation

|

| 104 |

|

| 105 |

<!-- This section describes the evaluation protocols and provides the results. -->

|

| 106 |

|

|

|

|

|

|

|

| 107 |

### Testing Data, Factors & Metrics

|

| 108 |

|

| 109 |

#### Testing Data

|

| 110 |

|

| 111 |

<!-- This should link to a Dataset Card if possible. -->

|

| 112 |

|

| 113 |

-

[More Information Needed]

|

| 114 |

-

|

| 115 |

#### Factors

|

| 116 |

|

| 117 |

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

|

| 118 |

|

| 119 |

-

[More Information Needed]

|

| 120 |

-

|

| 121 |

#### Metrics

|

| 122 |

|

| 123 |

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

|

| 124 |

|

| 125 |

-

[More Information Needed]

|

| 126 |

-

|

| 127 |

### Results

|

| 128 |

|

| 129 |

-

[More Information Needed]

|

| 130 |

-

|

| 131 |

#### Summary

|

| 132 |

|

| 133 |

|

|

@@ -136,64 +191,52 @@ Use the code below to get started with the model.

|

|

| 136 |

|

| 137 |

<!-- Relevant interpretability work for the model goes here -->

|

| 138 |

|

| 139 |

-

[More Information Needed]

|

| 140 |

-

|

| 141 |

## Environmental Impact

|

| 142 |

|

| 143 |

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

|

| 144 |

|

| 145 |

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

|

| 146 |

|

| 147 |

-

- **Hardware Type:** [More Information Needed]

|

| 148 |

-

- **Hours used:** [More Information Needed]

|

| 149 |

-

- **Cloud Provider:** [More Information Needed]

|

| 150 |

-

- **Compute Region:** [More Information Needed]

|

| 151 |

-

- **Carbon Emitted:** [More Information Needed]

|

| 152 |

|

| 153 |

## Technical Specifications [optional]

|

| 154 |

|

| 155 |

### Model Architecture and Objective

|

| 156 |

|

| 157 |

-

[

|

| 158 |

|

| 159 |

### Compute Infrastructure

|

| 160 |

|

| 161 |

-

[More Information Needed]

|

| 162 |

-

|

| 163 |

#### Hardware

|

| 164 |

|

| 165 |

-

[More Information Needed]

|

| 166 |

-

|

| 167 |

#### Software

|

| 168 |

|

| 169 |

-

[More Information Needed]

|

| 170 |

-

|

| 171 |

## Citation [optional]

|

| 172 |

|

| 173 |

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

|

| 174 |

|

| 175 |

**BibTeX:**

|

| 176 |

|

| 177 |

-

| 178 |

|

| 179 |

**APA:**

|

| 180 |

|

| 181 |

-

[More Information Needed]

|

| 182 |

-

|

| 183 |

## Glossary [optional]

|

| 184 |

|

| 185 |

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

|

| 186 |

|

| 187 |

-

[More Information Needed]

|

| 188 |

-

|

| 189 |

## More Information [optional]

|

| 190 |

|

| 191 |

-

[More Information Needed]

|

| 192 |

-

|

| 193 |

## Model Card Authors [optional]

|

| 194 |

|

| 195 |

-

[

|

| 196 |

-

|

| 197 |

-

## Model Card Contact

|

| 198 |

|

| 199 |

-

|

|

|

|

| 1 |

---

|

| 2 |

library_name: transformers

|

| 3 |

+

license: apache-2.0

|

| 4 |

+

language:

|

| 5 |

+

- en

|

| 6 |

+

pipeline_tag: object-detection

|

| 7 |

+

tags:

|

| 8 |

+

- object-detection

|

| 9 |

+

- vision

|

| 10 |

+

datasets:

|

| 11 |

+

- coco

|

| 12 |

+

widget:

|

| 13 |

+

- src: >-

|

| 14 |

+

https://huggingface.co/datasets/mishig/sample_images/resolve/main/savanna.jpg

|

| 15 |

+

example_title: Savanna

|

| 16 |

+

- src: >-

|

| 17 |

+

https://huggingface.co/datasets/mishig/sample_images/resolve/main/football-match.jpg

|

| 18 |

+

example_title: Football Match

|

| 19 |

+

- src: >-

|

| 20 |

+

https://huggingface.co/datasets/mishig/sample_images/resolve/main/airport.jpg

|

| 21 |

+

example_title: Airport

|

| 22 |

---

|

| 23 |

|

| 24 |

+

|

| 25 |

# Model Card for Model ID

|

| 26 |

|

| 27 |

<!-- Provide a quick summary of what the model is/does. -->

|

|

|

|

| 32 |

|

| 33 |

### Model Description

|

| 34 |

|

| 35 |

+

The YOLO series has become the most popular framework for real-time object detection due to its reasonable trade-off between speed and accuracy.

|

| 36 |

+

However, we observe that the speed and accuracy of YOLOs are negatively affected by the NMS.

|

| 37 |

+

Recently, end-to-end Transformer-based detectors (DETRs) have provided an alternative that eliminates NMS.

|

| 38 |

+

Nevertheless, the high computational cost limits their practicality and hinders them from fully exploiting the advantage of excluding NMS.

|

| 39 |

+

In this paper, we propose the Real-Time DEtection TRansformer (RT-DETR), which is, to the best of our knowledge, the first real-time end-to-end object detector to address the above dilemma.

|

| 40 |

+

We build RT-DETR in two steps, drawing on the advanced DETR:

|

| 41 |

+

first we focus on maintaining accuracy while improving speed, followed by maintaining speed while improving accuracy.

|

| 42 |

+

Specifically, we design an efficient hybrid encoder to expeditiously process multi-scale features by decoupling intra-scale interaction and cross-scale fusion to improve speed.

|

| 43 |

+

Then, we propose the uncertainty-minimal query selection to provide high-quality initial queries to the decoder, thereby improving accuracy.

|

| 44 |

+

In addition, RT-DETR supports flexible speed tuning by adjusting the number of decoder layers to adapt to various scenarios without retraining.

|

| 45 |

+

Our RT-DETR-R50 / R101 achieves 53.1% / 54.3% AP on COCO and 108 / 74 FPS on T4 GPU, outperforming previously advanced YOLOs in both speed and accuracy.

|

| 46 |

+

We also develop scaled RT-DETRs that outperform the lighter YOLO detectors (S and M models).

|

| 47 |

+

Furthermore, RT-DETR-R50 outperforms DINO-R50 by 2.2% AP in accuracy and about 21 times in FPS.

|

| 48 |

+

After pre-training with Objects365, RT-DETR-R50 / R101 achieves 55.3% / 56.2% AP. Project page: [https://zhao-yian.github.io/RTDETR/](https://zhao-yian.github.io/RTDETR/).
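For readers unfamiliar with NMS, the post-processing step that the description says end-to-end detectors avoid can be sketched as follows. This is a minimal greedy NMS in plain Python, not code from the RT-DETR repository; the function names `iou` and `nms` and the default threshold of 0.5 are illustrative assumptions.

```python
def iou(a, b):
    """Intersection over union of two (x0, y0, x1, y1) boxes."""
    ix0, iy0 = max(a[0], b[0]), max(a[1], b[1])
    ix1, iy1 = min(a[2], b[2]), min(a[3], b[3])
    inter = max(0.0, ix1 - ix0) * max(0.0, iy1 - iy0)
    area_a = (a[2] - a[0]) * (a[3] - a[1])
    area_b = (b[2] - b[0]) * (b[3] - b[1])
    union = area_a + area_b - inter
    return inter / union if union > 0 else 0.0


def nms(boxes, scores, iou_threshold=0.5):
    """Greedy NMS: visit boxes by descending score, keep a box only if it
    does not overlap an already kept box by more than iou_threshold."""
    order = sorted(range(len(boxes)), key=lambda i: scores[i], reverse=True)
    keep = []
    for i in order:
        if all(iou(boxes[i], boxes[j]) <= iou_threshold for j in keep):
            keep.append(i)
    return keep
```

The loop compares every candidate against the boxes kept so far, so its cost grows with the number of candidate boxes, and its result depends on a hand-tuned threshold. A detector that predicts one box per object directly does not need this step.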

|

| 49 |

+

|

| 50 |

+

|

| 51 |

|

| 52 |

This is the model card of a 🤗 transformers model that has been pushed on the Hub. This model card has been automatically generated.

|

| 53 |

|

| 54 |

+

- **Developed by:** Yian Zhao and Sangbum Choi

|

| 55 |

+

- **Funded by [optional]:** National Key R&D Program of China (No.2022ZD0118201), Natural Science Foundation of China (No.61972217, 32071459, 62176249, 62006133, 62271465),

|

| 56 |

+

and the Shenzhen Medical Research Funds in China (No.

|

| 57 |

+

B2302037).

|

| 58 |

+

- **Shared by [optional]:** Sangbum Choi

|

| 59 |

+

- **Model type:**

|

| 60 |

+

- **Language(s) (NLP):**

|

| 61 |

+

- **License:** Apache-2.0

|

| 62 |

+

- **Finetuned from model [optional]:**

|

| 63 |

|

| 64 |

### Model Sources [optional]

|

| 65 |

|

| 66 |

<!-- Provide the basic links for the model. -->

|

| 67 |

|

| 68 |

+

- **Repository:** https://github.com/lyuwenyu/RT-DETR

|

| 69 |

+

- **Paper [optional]:** https://arxiv.org/abs/2304.08069

|

| 70 |

- **Demo [optional]:** [More Information Needed]

|

| 71 |

|

| 72 |

## Uses

|

|

|

|

| 77 |

|

| 78 |

<!-- This section is for the model use without fine-tuning or plugging into a larger ecosystem/app. -->

|

| 79 |

|

| 80 |

+

You can use the raw model for object detection. See the [model hub](https://huggingface.co/models?search=rtdetr) for all available RT-DETR models.

|

| 81 |

|

| 82 |

### Downstream Use [optional]

|

| 83 |

|

| 84 |

<!-- This section is for the model use when fine-tuned for a task, or when plugged into a larger ecosystem/app -->

|

| 85 |

|

|

|

|

|

|

|

| 86 |

### Out-of-Scope Use

|

| 87 |

|

| 88 |

<!-- This section addresses misuse, malicious use, and uses that the model will not work well for. -->

|

| 89 |

|

|

|

|

|

|

|

| 90 |

## Bias, Risks, and Limitations

|

| 91 |

|

| 92 |

<!-- This section is meant to convey both technical and sociotechnical limitations. -->

|

| 93 |

|

|

|

|

|

|

|

| 94 |

### Recommendations

|

| 95 |

|

| 96 |

<!-- This section is meant to convey recommendations with respect to the bias, risk, and technical limitations. -->

|

|

|

|

| 101 |

|

| 102 |

Use the code below to get started with the model.

|

| 103 |

|

| 104 |

+

```python

|

| 105 |

+

import torch

|

| 106 |

+

import requests

|

| 107 |

+

|

| 108 |

+

from PIL import Image

|

| 109 |

+

from transformers import RTDetrForObjectDetection, RTDetrImageProcessor

|

| 110 |

+

|

| 111 |

+

url = 'http://images.cocodataset.org/val2017/000000039769.jpg'

|

| 112 |

+

image = Image.open(requests.get(url, stream=True).raw)

|

| 113 |

+

|

| 114 |

+

image_processor = RTDetrImageProcessor.from_pretrained("PekingU/rtdetr_r101vd_coco_o365")

|

| 115 |

+

model = RTDetrForObjectDetection.from_pretrained("PekingU/rtdetr_r101vd_coco_o365")

|

| 116 |

+

|

| 117 |

+

inputs = image_processor(images=image, return_tensors="pt")

|

| 118 |

+

|

| 119 |

+

with torch.no_grad():

|

| 120 |

+

    outputs = model(**inputs)

|

| 121 |

+

|

| 122 |

+

results = image_processor.post_process_object_detection(outputs, target_sizes=torch.tensor([image.size[::-1]]), threshold=0.3)

|

| 123 |

+

|

| 124 |

+

for result in results:

|

| 125 |

+

    for score, label_id, box in zip(result["scores"], result["labels"], result["boxes"]):

|

| 126 |

+

        score, label = score.item(), label_id.item()

|

| 127 |

+

        box = [round(i, 2) for i in box.tolist()]

|

| 128 |

+

        print(f"{model.config.id2label[label]}: {score:.2f} {box}")

|

| 129 |

+

```
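The `target_sizes` argument in the snippet above is built from `image.size[::-1]` because a PIL image reports its size as `(width, height)`, while the post-processing step expects `(height, width)`. The rescaling it performs can be sketched roughly as below, assuming the model emits normalized center-format boxes as DETR-style detectors do. The helper `to_absolute_xyxy` is hypothetical and is not the library's implementation.

```python
def to_absolute_xyxy(box_cxcywh, target_size):
    """Convert a normalized (cx, cy, w, h) box to absolute (x0, y0, x1, y1) pixels.

    target_size is (height, width), the order produced by image.size[::-1]
    for a PIL image, whose .size is (width, height).
    """
    cx, cy, w, h = box_cxcywh
    height, width = target_size
    return [
        (cx - w / 2) * width,   # left edge
        (cy - h / 2) * height,  # top edge
        (cx + w / 2) * width,   # right edge
        (cy + h / 2) * height,  # bottom edge
    ]
```

For example, a centered box covering half of each dimension of a 640x480 image maps to `[160.0, 120.0, 480.0, 360.0]`.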

|

| 130 |

|

| 131 |

## Training Details

|

| 132 |

|

|

|

|

| 134 |

|

| 135 |

<!-- This should link to a Dataset Card, perhaps with a short stub of information on what the training data is all about as well as documentation related to data pre-processing or additional filtering. -->

|

| 136 |

|

| 137 |

+

The RT-DETR model was trained on [COCO 2017 object detection](https://cocodataset.org/#download), a dataset of 118k annotated training images and 5k annotated validation images.

|

| 138 |

|

| 139 |

### Training Procedure

|

| 140 |

|

| 141 |

<!-- This relates heavily to the Technical Specifications. Content here should link to that section when it is relevant to the training procedure. -->

|

| 142 |

|

| 143 |

+

We conduct experiments on

|

| 144 |

+

COCO [20] and Objects365 [35], where RT-DETR is trained

|

| 145 |

+

on COCO train2017 and validated on COCO val2017

|

| 146 |

+

dataset. We report the standard COCO metrics, including

|

| 147 |

+

AP (averaged over uniformly sampled IoU thresholds ranging from 0.50 to 0.95 with a step size of 0.05), AP50, AP75, as

|

| 148 |

+

well as AP at different scales: APS, APM, APL.
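The averaging in the primary COCO metric can be illustrated with a short sketch. It assumes the per-threshold AP values have already been computed elsewhere; the names `IOU_THRESHOLDS` and `coco_style_ap` are hypothetical and do not come from the COCO evaluation toolkit.

```python
# The ten IoU thresholds of the primary COCO metric: 0.50, 0.55, ..., 0.95.
IOU_THRESHOLDS = [round(0.50 + 0.05 * i, 2) for i in range(10)]


def coco_style_ap(ap_per_threshold):
    """Average per-threshold AP values over the ten COCO IoU thresholds.

    ap_per_threshold maps each IoU threshold to the AP measured at it.
    """
    missing = [t for t in IOU_THRESHOLDS if t not in ap_per_threshold]
    if missing:
        raise ValueError(f"missing AP for IoU thresholds: {missing}")
    return sum(ap_per_threshold[t] for t in IOU_THRESHOLDS) / len(IOU_THRESHOLDS)
```

In this picture, AP50 and AP75 are the single entries at thresholds 0.50 and 0.75, while the headline AP is the mean over all ten.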

|

| 149 |

|

| 150 |

+

#### Preprocessing [optional]

|

| 151 |

|

| 152 |

+

Images are resized to 640x640 pixels.

|

| 153 |

|

| 154 |

#### Training Hyperparameters

|

| 155 |

|

| 156 |

+

- **Training regime:** <!--fp32, fp16 mixed precision, bf16 mixed precision, bf16 non-mixed precision, fp16 non-mixed precision, fp8 mixed precision -->

|

| 157 |

+

|

| 158 |

+

|

| 159 |

|

| 160 |

#### Speeds, Sizes, Times [optional]

|

| 161 |

|

| 162 |

<!-- This section provides information about throughput, start/end time, checkpoint size if relevant, etc. -->

|

| 163 |

|

|

|

|

|

|

|

| 164 |

## Evaluation

|

| 165 |

|

| 166 |

<!-- This section describes the evaluation protocols and provides the results. -->

|

| 167 |

|

| 168 |

+

This model achieves an AP (average precision) of 53.1 on COCO 2017 validation. For more details on the evaluation results, see Table 2 of the original paper.

|

| 169 |

+

|

| 170 |

### Testing Data, Factors & Metrics

|

| 171 |

|

| 172 |

#### Testing Data

|

| 173 |

|

| 174 |

<!-- This should link to a Dataset Card if possible. -->

|

| 175 |

|

|

|

|

|

|

|

| 176 |

#### Factors

|

| 177 |

|

| 178 |

<!-- These are the things the evaluation is disaggregating by, e.g., subpopulations or domains. -->

|

| 179 |

|

|

|

|

|

|

|

| 180 |

#### Metrics

|

| 181 |

|

| 182 |

<!-- These are the evaluation metrics being used, ideally with a description of why. -->

|

| 183 |

|

|

|

|

|

|

|

| 184 |

### Results

|

| 185 |

|

|

|

|

|

|

|

| 186 |

#### Summary

|

| 187 |

|

| 188 |

|

|

|

|

| 191 |

|

| 192 |

<!-- Relevant interpretability work for the model goes here -->

|

| 193 |

|

|

|

|

|

|

|

| 194 |

## Environmental Impact

|

| 195 |

|

| 196 |

<!-- Total emissions (in grams of CO2eq) and additional considerations, such as electricity usage, go here. Edit the suggested text below accordingly -->

|

| 197 |

|

| 198 |

Carbon emissions can be estimated using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700).

|

| 199 |

| 200 |

|

| 201 |

## Technical Specifications [optional]

|

| 202 |

|

| 203 |

### Model Architecture and Objective

|

| 204 |

|

| 205 |

+

|

| 206 |

|

| 207 |

### Compute Infrastructure

|

| 208 |

|

|

|

|

|

|

|

| 209 |

#### Hardware

|

| 210 |

|

|

|

|

|

|

|

| 211 |

#### Software

|

| 212 |

|

|

|

|

|

|

|

| 213 |

## Citation [optional]

|

| 214 |

|

| 215 |

<!-- If there is a paper or blog post introducing the model, the APA and Bibtex information for that should go in this section. -->

|

| 216 |

|

| 217 |

**BibTeX:**

|

| 218 |

|

| 219 |

+

```bibtex

|

| 220 |

+

@misc{lv2023detrs,

|

| 221 |

+

title={DETRs Beat YOLOs on Real-time Object Detection},

|

| 222 |

+

author={Yian Zhao and Wenyu Lv and Shangliang Xu and Jinman Wei and Guanzhong Wang and Qingqing Dang and Yi Liu and Jie Chen},

|

| 223 |

+

year={2023},

|

| 224 |

+

eprint={2304.08069},

|

| 225 |

+

archivePrefix={arXiv},

|

| 226 |

+

primaryClass={cs.CV}

|

| 227 |

+

}

|

| 228 |

+

```

|

| 229 |

|

| 230 |

**APA:**

|

| 231 |

|

|

|

|

|

|

|

| 232 |

## Glossary [optional]

|

| 233 |

|

| 234 |

<!-- If relevant, include terms and calculations in this section that can help readers understand the model or model card. -->

|

| 235 |

|

|

|

|

|

|

|

| 236 |

## More Information [optional]

|

| 237 |

|

|

|

|

|

|

|

| 238 |

## Model Card Authors [optional]

|

| 239 |

|

| 240 |

+

[Sangbum Choi](https://huggingface.co/danelcsb)

|

|

|

|

|

|

|

| 241 |

|

| 242 |

+

## Model Card Contact

|