license: other

language:

- en

library_name: transformers

inference: false

thumbnail: >-

https://h2o.ai/etc.clientlibs/h2o/clientlibs/clientlib-site/resources/images/favicon.ico

tags:

- gpt

- llm

- large language model

- LLaMa

datasets:

- h2oai/h2ogpt-oig-oasst1-instruct-cleaned-v2

TheBloke's LLM work is generously supported by a grant from andreessen horowitz (a16z)

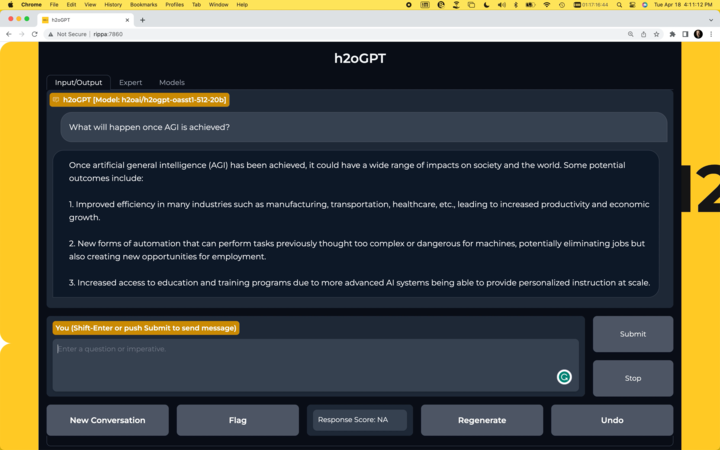

h2ogpt-oasst1-512-30B-GPTQ

These files are GPTQ format model files of H2O.ai's h2ogpt-research-oig-oasst1-512-30b.

It is the result of quantising to 4bit using GPTQ-for-LLaMa.

Repositories available

- 4bit GPTQ models for GPU inference.

- 4bit and 5bit GGML models for CPU inference.

- float16 HF format unquantised model for GPU inference and further conversions

Prompt Template

You need to use this template:

<human>: PROMPT

<bot>:

The model has no stopping token so it will keep talking to itself.

You will need to set up a custom stopping string of <bot>
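
As a rough illustration of the template and stopping string described above, the sketch below builds a prompt and trims a completion at the first stop string it finds. The helper names `build_prompt` and `truncate_at_stop` are hypothetical and not part of any library, and passing `<human>:` as an additional stop string is an assumption rather than something this card specifies.

```python
def build_prompt(user_message: str) -> str:
    """Wrap a user message in the <human>/<bot> template from this card."""
    return f"<human>: {user_message}\n<bot>:"


def truncate_at_stop(generated: str, stop_strings=("<bot>",)) -> str:
    """Cut newly generated text at the earliest occurrence of any stop string.

    `generated` is expected to hold only the new tokens, not the prompt.
    """
    cut = len(generated)
    for stop in stop_strings:
        idx = generated.find(stop)
        if idx != -1:
            cut = min(cut, idx)
    return generated[:cut].rstrip()


prompt = build_prompt("Why is drinking water so healthy?")

# Made-up completion text, only to show the trimming behaviour.
raw_completion = " Water keeps you hydrated.\n<human>: Thanks\n<bot>: You're welcome"
reply = truncate_at_stop(raw_completion, stop_strings=("<human>:", "<bot>"))
print(prompt)
print(reply)
```

In text-generation-webui the same effect is normally achieved by entering the custom stopping string in the UI, so a helper like this is only needed when calling the model from your own code.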

How to easily download and use this model in text-generation-webui

- Open the text-generation-webui UI as normal.

- Click the Model tab.

- Under Download custom model or LoRA, enter TheBloke/h2ogpt-oasst1-512-30B-GPTQ.

- Click Download.

- Wait until it says it's finished downloading.

- Click the Refresh icon next to Model in the top left.

- In the Model drop-down: choose the model you just downloaded, h2ogpt-oasst1-512-30B-GPTQ.

- If you see an error in the bottom right, ignore it - it's temporary.

- Fill out the GPTQ parameters on the right: Bits = 4, Groupsize = None, model_type = Llama

- Click Save settings for this model in the top right.

- Click Reload the Model in the top right.

- Once it says it's loaded, click the Text Generation tab and enter a prompt!
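
For scripted setups, the same files can probably be fetched without the web UI. The sketch below assumes a recent `huggingface_hub` package is installed and uses its `snapshot_download` function with the `local_dir` argument; the helper name `download_model` and the target directory are hypothetical.

```python
REPO_ID = "TheBloke/h2ogpt-oasst1-512-30B-GPTQ"
MODEL_FILE = "h2ogpt-oasst1-512-30B-GPTQ-4bit.ooba.act-order.safetensors"


def download_model(local_dir: str) -> str:
    """Download the main branch of the repository and return the local path.

    The import is done lazily so this module can be loaded even when
    huggingface_hub is not installed.
    """
    from huggingface_hub import snapshot_download

    return snapshot_download(repo_id=REPO_ID, revision="main", local_dir=local_dir)


# Example call (commented out because it downloads a multi-gigabyte file):
# path = download_model("models/h2ogpt-oasst1-512-30B-GPTQ")
# print(path)
```

The downloaded directory should then contain the safetensors file named in `MODEL_FILE`, and the GPTQ parameters still need to be set as described in the steps above.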

Provided files

Compatible file - h2ogpt-oasst1-512-30B-GPTQ-4bit.ooba.act-order.safetensors

In the main branch - the default one - you will find h2ogpt-oasst1-512-30B-GPTQ-4bit.ooba.act-order.safetensors

This will work with all versions of GPTQ-for-LLaMa. It has maximum compatibility.

It has no groupsize, so as to ensure the model can load on a 24GB VRAM card.

It was created with the --act-order parameter to get the best possible quantisation quality with the restriction of being able to load in 24GB.
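
To make the 24GB constraint more concrete, here is a back-of-the-envelope estimate of weight storage at different precisions. It is only a sketch: it uses the parameter counts printed in the architecture section of this card, treats every base weight as quantised, and ignores quantisation metadata, activations and the KV cache, all of which add to the real footprint.

```python
# Parameter counts quoted in the architecture section of this card.
ALL_PARAMS = 32_733_415_936   # base model plus LoRA adapters
LORA_PARAMS = 204_472_320     # trainable LoRA parameters
BASE_PARAMS = ALL_PARAMS - LORA_PARAMS


def weight_gigabytes(n_params: int, bits_per_weight: float) -> float:
    """Approximate weight storage in GB (10^9 bytes) at a given bit width."""
    return n_params * bits_per_weight / 8 / 1e9


fp16_gb = weight_gigabytes(BASE_PARAMS, 16)
int4_gb = weight_gigabytes(BASE_PARAMS, 4)
print(f"float16 weights: ~{fp16_gb:.1f} GB")
print(f"4bit weights:    ~{int4_gb:.1f} GB")
```

A finite groupsize stores extra scale and zero-point data for each group of weights, which is consistent with the choice of no groupsize here to leave as much headroom as possible on a 24GB card.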

h2ogpt-oasst1-512-30B-GPTQ-4bit.ooba.act-order.safetensors

- Works with all versions of GPTQ-for-LLaMa code, both Triton and CUDA branches

- Works with text-generation-webui one-click-installers

- Parameters: Groupsize = None. act-order.

- Command used to create the GPTQ:

python llama.py /workspace/drom-65b/HF c4 --wbits 4 --true-sequential --act-order --save_safetensors /workspace/drom-gptq/dromedary-65B-GPTQ-4bit.safetensors

Discord

For further support, and discussions on these models and AI in general, join us at:

Thanks, and how to contribute.

Thanks to the chirper.ai team!

I've had a lot of people ask if they can contribute. I enjoy providing models and helping people, and would love to be able to spend even more time doing it, as well as expanding into new projects like fine tuning/training.

If you're able and willing to contribute it will be most gratefully received and will help me to keep providing more models, and to start work on new AI projects.

Donors will get priority support on any and all AI/LLM/model questions and requests, access to a private Discord room, plus other benefits.

- Patreon: https://patreon.com/TheBlokeAI

- Ko-Fi: https://ko-fi.com/TheBlokeAI

Special thanks to: Aemon Algiz.

Patreon special mentions: Sam, theTransient, Jonathan Leane, Steven Wood, webtim, Johann-Peter Hartmann, Geoffrey Montalvo, Gabriel Tamborski, Willem Michiel, John Villwock, Derek Yates, Mesiah Bishop, Eugene Pentland, Pieter, Chadd, Stephen Murray, Daniel P. Andersen, terasurfer, Brandon Frisco, Thomas Belote, Sid, Nathan LeClaire, Magnesian, Alps Aficionado, Stanislav Ovsiannikov, Alex, Joseph William Delisle, Nikolai Manek, Michael Davis, Junyu Yang, K, J, Spencer Kim, Stefan Sabev, Olusegun Samson, transmissions 11, Michael Levine, Cory Kujawski, Rainer Wilmers, zynix, Kalila, Luke @flexchar, Ajan Kanaga, Mandus, vamX, Ai Maven, Mano Prime, Matthew Berman, subjectnull, Vitor Caleffi, Clay Pascal, biorpg, alfie_i, 阿明, Jeffrey Morgan, ya boyyy, Raymond Fosdick, knownsqashed, Olakabola, Leonard Tan, ReadyPlayerEmma, Enrico Ros, Dave, Talal Aujan, Illia Dulskyi, Sean Connelly, senxiiz, Artur Olbinski, Elle, Raven Klaugh, Fen Risland, Deep Realms, Imad Khwaja, Fred von Graf, Will Dee, usrbinkat, SuperWojo, Alexandros Triantafyllidis, Swaroop Kallakuri, Dan Guido, John Detwiler, Pedro Madruga, Iucharbius, Viktor Bowallius, Asp the Wyvern, Edmond Seymore, Trenton Dambrowitz, Space Cruiser, Spiking Neurons AB, Pyrater, LangChain4j, Tony Hughes, Kacper Wikieł, Rishabh Srivastava, David Ziegler, Luke Pendergrass, Andrey, Gabriel Puliatti, Lone Striker, Sebastain Graf, Pierre Kircher, Randy H, NimbleBox.ai, Vadim, danny, Deo Leter

Thank you to all my generous patrons and donors!

And thank you again to a16z for their generous grant.

Original h2oGPT Model Card

Summary

H2O.ai's h2oai/h2ogpt-research-oig-oasst1-512-30b is a 30 billion parameter instruction-following large language model for research use only.

Due to the license attached to LLaMA models by Meta AI it is not possible to directly distribute LLaMA-based models. Instead we provide LORA weights.

- Base model: decapoda-research/llama-30b-hf

- Fine-tuning dataset: h2oai/h2ogpt-oig-oasst1-instruct-cleaned-v2

- Data-prep and fine-tuning code: H2O.ai GitHub

- Training logs: zip

The model was trained using h2oGPT code as:

torchrun --nproc_per_node=8 finetune.py --base_model=decapoda-research/llama-30b-hf --micro_batch_size=1 --batch_size=8 --cutoff_len=512 --num_epochs=2.0 --val_set_size=0 --eval_steps=100000 --save_steps=17000 --save_total_limit=20 --prompt_type=plain --save_code=True --train_8bit=False --run_id=llama30b_17 --llama_flash_attn=True --lora_r=64 --lora_target_modules=['q_proj', 'k_proj', 'v_proj', 'o_proj'] --learning_rate=2e-4 --lora_alpha=32 --drop_truncations=True --data_path=h2oai/h2ogpt-oig-oasst1-instruct-cleaned-v2 --data_mix_in_path=h2oai/openassistant_oasst1_h2ogpt --data_mix_in_factor=1.0 --data_mix_in_prompt_type=plain --data_mix_in_col_dict={'input': 'input'}

On h2oGPT Hash: 131f6d098b43236b5f91e76fc074ad089d6df368

Only the last checkpoint, at epoch 2.0 and step 137,846, is provided in this model repository: the LORA state is large, and with all checkpoints the total run comes to 19GB. Feel free to request additional checkpoints and we can consider adding more.

Chatbot

- Run your own chatbot: H2O.ai GitHub

Usage:

Usage as LORA:

Build HF model:

Use: https://github.com/h2oai/h2ogpt/blob/main/export_hf_checkpoint.py and change:

BASE_MODEL = 'decapoda-research/llama-30b-hf'

LORA_WEIGHTS = '<lora_weights_path>'

OUTPUT_NAME = "local_h2ogpt-research-oasst1-512-30b"

where <lora_weights_path> is a directory of some name that contains the files in this HF model repository:

- adapter_config.json

- adapter_model.bin

- special_tokens_map.json

- tokenizer.model

- tokenizer_config.json
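
Before running the export script, it can help to confirm that the LORA directory is complete. The following is a minimal sketch using only the Python standard library; the function name `missing_lora_files` is hypothetical, and the file list is simply the one given above.

```python
from pathlib import Path

# Files this card says must be present in <lora_weights_path>.
REQUIRED_LORA_FILES = (
    "adapter_config.json",
    "adapter_model.bin",
    "special_tokens_map.json",
    "tokenizer.model",
    "tokenizer_config.json",
)


def missing_lora_files(lora_weights_path: str) -> list[str]:
    """Return the required file names that are absent from the directory."""
    root = Path(lora_weights_path)
    return [name for name in REQUIRED_LORA_FILES if not (root / name).is_file()]
```

Calling `missing_lora_files("<lora_weights_path>")` with your own directory returns an empty list when all five files are in place.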

Once the HF model is built, to use the model with the transformers library on a machine with GPUs, first make sure you have the transformers and accelerate libraries installed.

pip install transformers==4.28.1

pip install accelerate==0.18.0

import torch

from transformers import pipeline

generate_text = pipeline(model="local_h2ogpt-research-oasst1-512-30b", torch_dtype=torch.bfloat16, trust_remote_code=True, device_map="auto")

res = generate_text("Why is drinking water so healthy?", max_new_tokens=100)

print(res[0]["generated_text"])

Alternatively, if you prefer not to use trust_remote_code=True, you can download h2oai_pipeline.py, store it alongside your notebook, and construct the pipeline yourself from the loaded model and tokenizer:

import torch

from h2oai_pipeline import H2OTextGenerationPipeline

from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("local_h2ogpt-research-oasst1-512-30b", padding_side="left")

model = AutoModelForCausalLM.from_pretrained("local_h2ogpt-research-oasst1-512-30b", torch_dtype=torch.bfloat16, device_map="auto")

generate_text = H2OTextGenerationPipeline(model=model, tokenizer=tokenizer)

res = generate_text("Why is drinking water so healthy?", max_new_tokens=100)

print(res[0]["generated_text"])

Model Architecture with LORA and flash attention

PeftModelForCausalLM(

(base_model): LoraModel(

(model): LlamaForCausalLM(

(model): LlamaModel(

(embed_tokens): Embedding(32000, 6656, padding_idx=31999)

(layers): ModuleList(

(0-59): 60 x LlamaDecoderLayer(

(self_attn): LlamaAttention(

(q_proj): Linear(

in_features=6656, out_features=6656, bias=False

(lora_dropout): ModuleDict(

(default): Dropout(p=0.05, inplace=False)

)

(lora_A): ModuleDict(

(default): Linear(in_features=6656, out_features=64, bias=False)

)

(lora_B): ModuleDict(

(default): Linear(in_features=64, out_features=6656, bias=False)

)

)

(k_proj): Linear(

in_features=6656, out_features=6656, bias=False

(lora_dropout): ModuleDict(

(default): Dropout(p=0.05, inplace=False)

)

(lora_A): ModuleDict(

(default): Linear(in_features=6656, out_features=64, bias=False)

)

(lora_B): ModuleDict(

(default): Linear(in_features=64, out_features=6656, bias=False)

)

)

(v_proj): Linear(

in_features=6656, out_features=6656, bias=False

(lora_dropout): ModuleDict(

(default): Dropout(p=0.05, inplace=False)

)

(lora_A): ModuleDict(

(default): Linear(in_features=6656, out_features=64, bias=False)

)

(lora_B): ModuleDict(

(default): Linear(in_features=64, out_features=6656, bias=False)

)

)

(o_proj): Linear(

in_features=6656, out_features=6656, bias=False

(lora_dropout): ModuleDict(

(default): Dropout(p=0.05, inplace=False)

)

(lora_A): ModuleDict(

(default): Linear(in_features=6656, out_features=64, bias=False)

)

(lora_B): ModuleDict(

(default): Linear(in_features=64, out_features=6656, bias=False)

)

)

(rotary_emb): LlamaRotaryEmbedding()

)

(mlp): LlamaMLP(

(gate_proj): Linear(in_features=6656, out_features=17920, bias=False)

(down_proj): Linear(in_features=17920, out_features=6656, bias=False)

(up_proj): Linear(in_features=6656, out_features=17920, bias=False)

(act_fn): SiLUActivation()

)

(input_layernorm): LlamaRMSNorm()

(post_attention_layernorm): LlamaRMSNorm()

)

)

(norm): LlamaRMSNorm()

)

(lm_head): Linear(in_features=6656, out_features=32000, bias=False)

)

)

)

trainable params: 204472320 || all params: 32733415936 || trainable%: 0.6246592790675496
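
The parameter counts in the line above can be reproduced from the layer shapes in the printout. The sketch below does that arithmetic and then shows, on a toy matrix, how a LoRA pair is usually folded into a base weight as W + (lora_alpha / r) * B @ A. The values `lora_alpha=32` and `r=64` come from the training command; the assumption that each LlamaRMSNorm holds one weight vector of the hidden size, the helper names, and the toy matrices are mine and not taken from the h2oGPT code.

```python
HIDDEN = 6656          # in/out features of q_proj, k_proj, v_proj, o_proj
INTERMEDIATE = 17920   # width of the MLP projections
VOCAB = 32000
LAYERS = 60
LORA_RANK = 64
LORA_ALPHA = 32

# LoRA adapters: lora_A (HIDDEN x 64) plus lora_B (64 x HIDDEN) on 4 projections per layer.
lora_per_proj = HIDDEN * LORA_RANK + LORA_RANK * HIDDEN
trainable = lora_per_proj * 4 * LAYERS

# Base model: attention, MLP and two RMSNorm vectors per layer,
# plus embed_tokens, lm_head and the final norm.
attention = 4 * HIDDEN * HIDDEN
mlp = 3 * HIDDEN * INTERMEDIATE
norms_per_layer = 2 * HIDDEN
base = LAYERS * (attention + mlp + norms_per_layer) + 2 * VOCAB * HIDDEN + HIDDEN
all_params = base + trainable
print(trainable, all_params, 100 * trainable / all_params)

# Scaling applied to the low-rank update.
scaling = LORA_ALPHA / LORA_RANK


def matmul(a, b):
    """Multiply two matrices given as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def merged_weight(w, lora_a, lora_b, scale):
    """Return w + scale * (lora_b @ lora_a) for small list-based matrices."""
    delta = matmul(lora_b, lora_a)
    return [[wv + scale * dv for wv, dv in zip(w_row, d_row)]
            for w_row, d_row in zip(w, delta)]


# Toy example: 2x2 base weight with a rank-1 adapter.
toy_w = [[1.0, 0.0], [0.0, 1.0]]
toy_a = [[2.0, 4.0]]       # shape (r=1, in=2)
toy_b = [[1.0], [3.0]]     # shape (out=2, r=1)
print(merged_weight(toy_w, toy_a, toy_b, scaling))
```

The rotary embedding is left out of the count on the assumption that it contributes no trainable parameters.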

Model Configuration

{

"base_model_name_or_path": "decapoda-research/llama-30b-hf",

"bias": "none",

"fan_in_fan_out": false,

"inference_mode": true,

"init_lora_weights": true,

"lora_alpha": 32,

"lora_dropout": 0.05,

"modules_to_save": null,

"peft_type": "LORA",

"r": 64,

"target_modules": [

"q_proj",

"k_proj",

"v_proj",

"o_proj"

],

"task_type": "CAUSAL_LM"

}

Model Validation

Classical benchmarks align with the base LLaMA 30B model, but are not useful for conversational purposes. One could use GPT-3.5 or GPT-4 to evaluate responses; here we instead use an RLHF-based reward model. This is run using h2oGPT:

python generate.py --base_model=decapoda-research/llama-30b-hf --gradio=False --infer_devices=False --eval_sharegpt_prompts_only=100 --eval_sharegpt_as_output=False --lora_weights=llama-30b-hf.h2oaih2ogpt-oig-oasst1-instruct-cleaned-v2.2.0_epochs.131f6d098b43236b5f91e76fc074ad089d6df368.llama30b_17

The model achieves a mean reward model score of 0.55 and a median of 0.58. This compares to 0.49 mean and 0.48 median for our 20B model, and 0.37 mean and 0.27 median for Dollyv2.

Logs and prompt-response pairs

The full distribution of scores is shown here:

Same plot for our h2oGPT 20B:

Same plot for DB Dollyv2:

Disclaimer

Please read this disclaimer carefully before using the large language model provided in this repository. Your use of the model signifies your agreement to the following terms and conditions.

- The LORA contained in this repository is only for research (non-commercial) purposes.

- Biases and Offensiveness: The large language model is trained on a diverse range of internet text data, which may contain biased, racist, offensive, or otherwise inappropriate content. By using this model, you acknowledge and accept that the generated content may sometimes exhibit biases or produce content that is offensive or inappropriate. The developers of this repository do not endorse, support, or promote any such content or viewpoints.

- Limitations: The large language model is an AI-based tool and not a human. It may produce incorrect, nonsensical, or irrelevant responses. It is the user's responsibility to critically evaluate the generated content and use it at their discretion.

- Use at Your Own Risk: Users of this large language model must assume full responsibility for any consequences that may arise from their use of the tool. The developers and contributors of this repository shall not be held liable for any damages, losses, or harm resulting from the use or misuse of the provided model.

- Ethical Considerations: Users are encouraged to use the large language model responsibly and ethically. By using this model, you agree not to use it for purposes that promote hate speech, discrimination, harassment, or any form of illegal or harmful activities.

- Reporting Issues: If you encounter any biased, offensive, or otherwise inappropriate content generated by the large language model, please report it to the repository maintainers through the provided channels. Your feedback will help improve the model and mitigate potential issues.

- Changes to this Disclaimer: The developers of this repository reserve the right to modify or update this disclaimer at any time without prior notice. It is the user's responsibility to periodically review the disclaimer to stay informed about any changes.

By using the large language model provided in this repository, you agree to accept and comply with the terms and conditions outlined in this disclaimer. If you do not agree with any part of this disclaimer, you should refrain from using the model and any content generated by it.