---
license: llama2
metrics:
- code_eval
library_name: transformers
tags:
- code
model-index:
- name: WizardCoder-Python-34B-V1.0
  results:
  - task:
      type: text-generation
    dataset:
      type: openai_humaneval
      name: HumanEval
    metrics:
    - name: pass@1
      type: pass@1
      value: 0.732
      verified: false
---

WizardCoder: Empowering Code Large Language Models with Evol-Instruct

🤗 HF Repo • 🐱 Github Repo • 🐦 Twitter

📃 [WizardLM] • 📃 [WizardCoder] • 📃 [WizardMath]

👋 Join our Discord

News

[2024/01/04] 🔥 We released WizardCoder-33B-V1.1, trained from deepseek-coder-33b-base. It is the SOTA OSS Code LLM on the EvalPlus Leaderboard, achieving 79.9 pass@1 on HumanEval, 73.2 pass@1 on HumanEval-Plus, 78.9 pass@1 on MBPP, and 66.9 pass@1 on MBPP-Plus.

[2024/01/04] 🔥 WizardCoder-33B-V1.1 outperforms ChatGPT 3.5, Gemini Pro, and DeepSeek-Coder-33B-instruct on HumanEval and HumanEval-Plus pass@1.

[2024/01/04] 🔥 WizardCoder-33B-V1.1 is comparable with ChatGPT 3.5, and surpasses Gemini Pro on MBPP and MBPP-Plus pass@1.

| Model | Checkpoint | Paper | HumanEval | HumanEval+ | MBPP | MBPP+ | License |
|---|---|---|---|---|---|---|---|
| GPT-4-Turbo (Nov 2023) | - | - | 85.4 | 81.7 | 83.0 | 70.7 | - |
| GPT-4 (May 2023) | - | - | 88.4 | 76.8 | - | - | - |
| GPT-3.5-Turbo (Nov 2023) | - | - | 72.6 | 65.9 | 81.7 | 69.4 | - |
| Gemini Pro | - | - | 63.4 | 55.5 | 72.9 | 57.9 | - |
| DeepSeek-Coder-33B-instruct | - | - | 78.7 | 72.6 | 78.7 | 66.7 | - |
| WizardCoder-33B-V1.1 | 🤗 HF Link | 📃 [WizardCoder] | 79.9 | 73.2 | 78.9 | 66.9 | MSFTResearch |
| WizardCoder-Python-34B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 73.2 | 64.6 | 73.2 | 59.9 | Llama2 |
| WizardCoder-15B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 59.8 | 52.4 | -- | -- | OpenRAIL-M |
| WizardCoder-Python-13B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 64.0 | -- | -- | -- | Llama2 |
| WizardCoder-Python-7B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 55.5 | -- | -- | -- | Llama2 |
| WizardCoder-3B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 34.8 | -- | -- | -- | OpenRAIL-M |
| WizardCoder-1B-V1.0 | 🤗 HF Link | 📃 [WizardCoder] | 23.8 | -- | -- | -- | OpenRAIL-M |

- Our WizardMath-70B-V1.0 model slightly outperforms some closed-source LLMs on GSM8k, including ChatGPT 3.5, Claude Instant 1, and PaLM 2 540B.

- Our WizardMath-70B-V1.0 model achieves 81.6 pass@1 on the GSM8k benchmark, which is 24.8 points higher than the SOTA open-source LLM, and 22.7 pass@1 on the MATH benchmark, which is 9.2 points higher than the SOTA open-source LLM.

| Model | Checkpoint | Paper | GSM8k | MATH | Online Demo | License |
|---|---|---|---|---|---|---|
| WizardMath-70B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 81.6 | 22.7 | Demo | Llama 2 |
| WizardMath-13B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 63.9 | 14.0 | Demo | Llama 2 |
| WizardMath-7B-V1.0 | 🤗 HF Link | 📃 [WizardMath] | 54.9 | 10.7 | Demo | Llama 2 |

- [08/09/2023] We released the WizardLM-70B-V1.0 model with full model weights.

| Model | Checkpoint | Paper | MT-Bench | AlpacaEval | GSM8k | HumanEval | License |
|---|---|---|---|---|---|---|---|
| WizardLM-70B-V1.0 | 🤗 HF Link | 📃 Coming Soon | 7.78 | 92.91% | 77.6% | 50.6 | Llama 2 License |
| WizardLM-13B-V1.2 | 🤗 HF Link | -- | 7.06 | 89.17% | 55.3% | 36.6 | Llama 2 License |
| WizardLM-13B-V1.1 | 🤗 HF Link | -- | 6.76 | 86.32% | -- | 25.0 | Non-commercial |
| WizardLM-30B-V1.0 | 🤗 HF Link | -- | 7.01 | -- | -- | 37.8 | Non-commercial |
| WizardLM-13B-V1.0 | 🤗 HF Link | -- | 6.35 | 75.31% | -- | 24.0 | Non-commercial |
| WizardLM-7B-V1.0 | 🤗 HF Link | 📃 [WizardLM] | -- | -- | -- | 19.1 | Non-commercial |

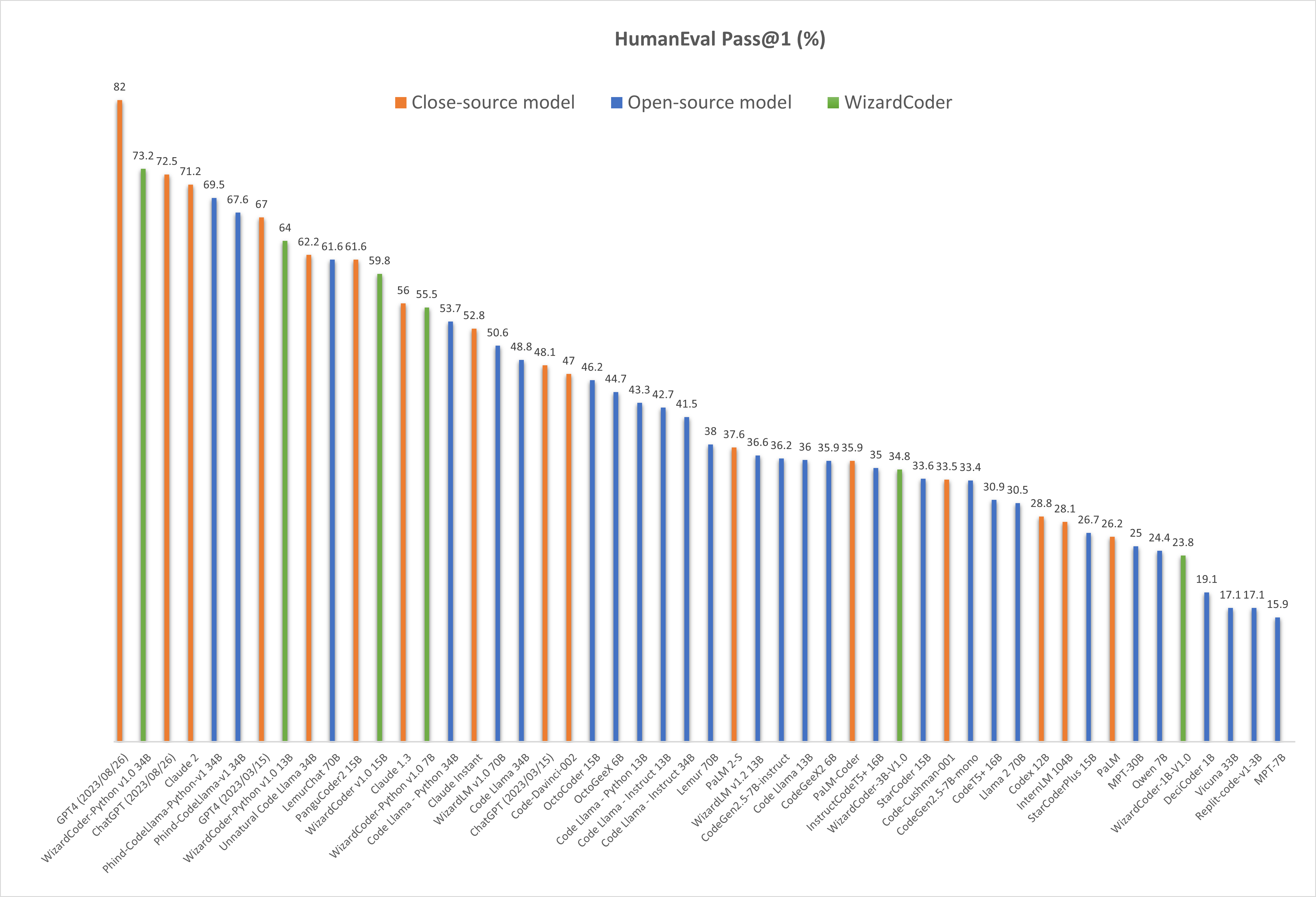

Comparing WizardCoder-Python-34B-V1.0 with Other LLMs.

🔥 The figure above shows that our WizardCoder-Python-34B-V1.0 attains second place on the HumanEval pass@1 benchmark, surpassing GPT4 (2023/03/15, 73.2 vs. 67.0), ChatGPT-3.5 (73.2 vs. 72.5), and Claude2 (73.2 vs. 71.2).
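The scores compared above are pass@1 values. This card does not state how many samples per problem were drawn, so the following is only a sketch of the commonly used unbiased pass@k estimator, where `n` is the number of samples generated for a problem and `c` is how many of them pass the unit tests. The function names are illustrative and do not come from the WizardCoder repo.

```python
from math import comb


def pass_at_k(n: int, c: int, k: int) -> float:
    """Unbiased pass@k estimate for one problem: 1 - C(n - c, k) / C(n, k)."""
    if not 0 <= c <= n or not 1 <= k <= n:
        raise ValueError("require 0 <= c <= n and 1 <= k <= n")
    # comb(n - c, k) is 0 when fewer than k samples fail, giving 1.0.
    return 1.0 - comb(n - c, k) / comb(n, k)


def benchmark_pass_at_k(results: list[tuple[int, int]], k: int) -> float:
    """Average the per-problem estimates; `results` holds (n, c) pairs."""
    return sum(pass_at_k(n, c, k) for n, c in results) / len(results)


if __name__ == "__main__":
    # Illustrative counts only: one sample per problem, 120 of 164 solved.
    outcomes = [(1, 1)] * 120 + [(1, 0)] * 44
    print(f"{benchmark_pass_at_k(outcomes, k=1):.3f}")
```

With a single sample per problem, pass@1 reduces to the fraction of problems solved. The `value: 0.732` in the metadata is the same quantity as the 73.2 in the tables, expressed as a fraction rather than a percentage.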

Prompt Format

"Below is an instruction that describes a task. Write a response that appropriately completes the request.\n\n### Instruction:\n{instruction}\n\n### Response:"

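The prompt format above can be filled in with plain string substitution. A minimal sketch follows; `PROMPT_TEMPLATE` and `build_prompt` are hypothetical names chosen for illustration, while the template text itself is copied from the prompt format.

```python
# Template text copied from the prompt format above.
PROMPT_TEMPLATE = (
    "Below is an instruction that describes a task. "
    "Write a response that appropriately completes the request.\n\n"
    "### Instruction:\n{instruction}\n\n### Response:"
)


def build_prompt(instruction: str) -> str:
    """Insert a user instruction into the prompt template."""
    # str.replace is used instead of str.format so that instructions
    # containing literal braces (common in code) do not raise errors.
    return PROMPT_TEMPLATE.replace("{instruction}", instruction)


if __name__ == "__main__":
    print(build_prompt("Write a Python function that reverses a string."))
```

The prompt ends at `### Response:` so that the model's completion starts directly with the answer.
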
Inference Demo Script

We provide the inference demo code here.
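For orientation, a rough sketch of what inference with the `transformers` library might look like is given below. It is not the linked demo script. The model ID, the greedy decoding settings, and the helper names `generate` and `extract_response` are assumptions made for illustration, and `device_map="auto"` additionally assumes the `accelerate` package is installed.

```python
def extract_response(generated_text: str) -> str:
    """Return the text after the last '### Response:' marker, if present."""
    marker = "### Response:"
    return generated_text.rsplit(marker, 1)[-1].strip()


def generate(
    prompt: str,
    model_id: str = "WizardLM/WizardCoder-Python-34B-V1.0",  # assumed repo ID
    max_new_tokens: int = 512,  # assumed generation budget
) -> str:
    """Load the model, run greedy decoding on `prompt`, return the response."""
    # Imported lazily so extract_response works without these packages.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForCausalLM.from_pretrained(
        model_id, torch_dtype=torch.float16, device_map="auto"
    )
    inputs = tokenizer(prompt, return_tensors="pt").to(model.device)
    with torch.no_grad():
        output_ids = model.generate(
            **inputs, max_new_tokens=max_new_tokens, do_sample=False
        )
    # The decoded text includes the prompt, so keep only the response part.
    text = tokenizer.decode(output_ids[0], skip_special_tokens=True)
    return extract_response(text)
```

`generate` expects a prompt that already follows the format in the Prompt Format section. A 34B model in float16 needs substantial GPU memory, so treat the loading options here as a starting point rather than a recommendation.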

Citation

Please cite the repo if you use the data, method or code in this repo.

@article{luo2023wizardcoder,
  title={WizardCoder: Empowering Code Large Language Models with Evol-Instruct},
  author={Luo, Ziyang and Xu, Can and Zhao, Pu and Sun, Qingfeng and Geng, Xiubo and Hu, Wenxiang and Tao, Chongyang and Ma, Jing and Lin, Qingwei and Jiang, Daxin},
  journal={arXiv preprint arXiv:2306.08568},
  year={2023}
}