metadata

license: apache-2.0
tags:
- afro-digits-speech
datasets:
- crowd-speech-africa
metrics:
- accuracy
model-index:
- name: afrospeech-wav2vec-all-6
  results:
  - task:
      name: Audio Classification
      type: audio-classification
    dataset:
      name: Afro Speech
      type: chrisjay/crowd-speech-africa
      args: 'no'
    metrics:
    - name: Validation Accuracy
      type: accuracy
      value: 0.6205

afrospeech-wav2vec-all-6

This model is a fine-tuned version of facebook/wav2vec2-base on the crowd-speech-africa dataset. It achieves the following results on the validation set:

- F1: 0.5787048581502744

- Accuracy: 0.6205357142857143

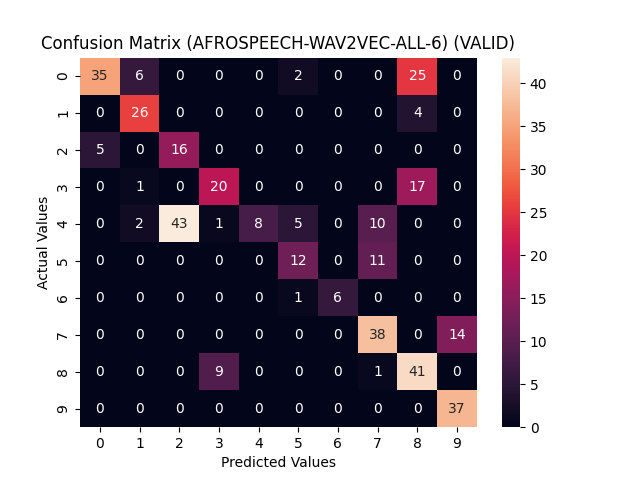

The confusion matrix gives a closer look at the model's performance across the digits. It shows per-digit precision and recall, as well as which digits the model confuses with one another.
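As a rough illustration of how precision, recall, accuracy, and F1 can be read off a confusion matrix, here is a minimal sketch in plain Python. It assumes rows are true classes and columns are predicted classes, and it assumes macro averaging for F1; this card does not state either convention. The `toy_cm` matrix is made-up data, not this model's results.

```python
from typing import List, Tuple


def per_class_precision_recall(cm: List[List[int]]) -> List[Tuple[float, float]]:
    """Return (precision, recall) per class.

    Assumption: cm[i][j] counts clips whose true class is i and whose
    predicted class is j (rows = true, columns = predicted).
    """
    n = len(cm)
    result = []
    for k in range(n):
        true_positives = cm[k][k]
        predicted_as_k = sum(cm[i][k] for i in range(n))  # column sum
        actually_k = sum(cm[k])                           # row sum
        precision = true_positives / predicted_as_k if predicted_as_k else 0.0
        recall = true_positives / actually_k if actually_k else 0.0
        result.append((precision, recall))
    return result


def accuracy(cm: List[List[int]]) -> float:
    """Fraction of all clips that lie on the diagonal."""
    total = sum(sum(row) for row in cm)
    correct = sum(cm[k][k] for k in range(len(cm)))
    return correct / total if total else 0.0


def macro_f1(cm: List[List[int]]) -> float:
    """Unweighted mean of per-class F1 (macro averaging is an assumption)."""
    scores = []
    for precision, recall in per_class_precision_recall(cm):
        denom = precision + recall
        scores.append(2 * precision * recall / denom if denom else 0.0)
    return sum(scores) / len(scores)


# Toy 3-class matrix with made-up counts, NOT taken from this model.
toy_cm = [
    [8, 1, 1],
    [2, 6, 2],
    [0, 3, 7],
]

if __name__ == "__main__":
    print("accuracy:", accuracy(toy_cm))
    print("macro F1:", macro_f1(toy_cm))
    for digit, (p, r) in enumerate(per_class_precision_recall(toy_cm)):
        print(f"class {digit}: precision={p:.2f} recall={r:.2f}")
```

Macro averaging weights every digit equally, so a digit with few validation clips affects the score as much as a frequent one. A weighted average would give a different number on imbalanced data.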

Model description

More information needed

Intended uses & limitations

More information needed

Training and evaluation data

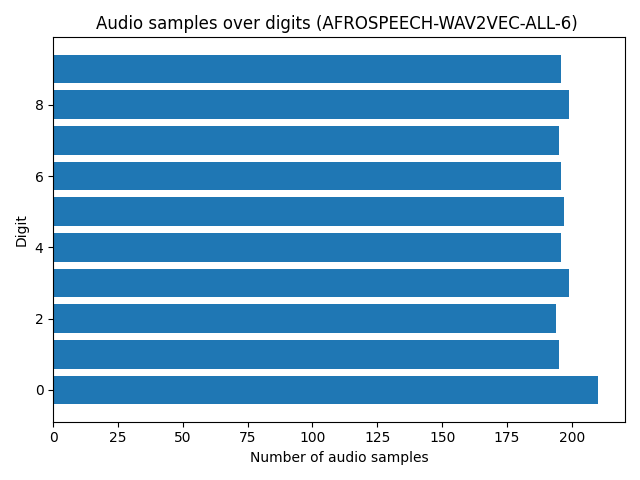

- Size of training set: 1977

- Size of validation set: 396

Training procedure

Training hyperparameters

The following hyperparameters were used during training:

- learning_rate: 3e-05

- train_batch_size: 64

- eval_batch_size: 64

- optimizer: Adam with betas=(0.9,0.999) and epsilon=1e-08

- num_epochs: 150
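To make the scale of this schedule concrete, the sketch below collects the listed hyperparameters in a small container and estimates the number of optimizer steps from the training-set size reported in this card (1977). `TrainConfig` and `steps_per_epoch` are hypothetical names used for illustration only, and the estimate assumes a single device, no gradient accumulation, and that the final partial batch is kept. None of these details are stated in the card.

```python
import math
from dataclasses import dataclass
from typing import Tuple


@dataclass(frozen=True)
class TrainConfig:
    """Hypothetical container mirroring the hyperparameters listed above."""
    learning_rate: float = 3e-05
    train_batch_size: int = 64
    eval_batch_size: int = 64
    adam_betas: Tuple[float, float] = (0.9, 0.999)
    adam_epsilon: float = 1e-08
    num_epochs: int = 150


def steps_per_epoch(num_examples: int, batch_size: int, drop_last: bool = False) -> int:
    """Number of batches in one pass over the data."""
    if drop_last:
        return num_examples // batch_size
    return math.ceil(num_examples / batch_size)


config = TrainConfig()
TRAIN_SET_SIZE = 1977  # from the "Training and evaluation data" section

# Assumption: single device, no gradient accumulation, last partial batch kept.
per_epoch = steps_per_epoch(TRAIN_SET_SIZE, config.train_batch_size)
total_steps = per_epoch * config.num_epochs

if __name__ == "__main__":
    print(f"steps per epoch: {per_epoch}")
    print(f"total optimizer steps: {total_steps}")
```

Under those assumptions the run takes 31 steps per epoch and 4650 steps in total. If the last partial batch were dropped instead, each epoch would have 30 steps.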

Training results

| Training Loss | Epoch | Validation Accuracy |
|---|---|---|
| 2.0466 | 1 | 0.1130 |
| 0.0468 | 50 | 0.6116 |
| 0.0292 | 100 | 0.5305 |
| 0.0155 | 150 | 0.5319 |
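One way to read this table is to look for the logged row with the highest validation accuracy and for stretches where training loss keeps falling while validation accuracy drops. The sketch below does this for the four rows above only. `LogRow` and the helper functions are hypothetical names, and epochs that are not listed in the table may tell a different story.

```python
from typing import List, NamedTuple, Tuple


class LogRow(NamedTuple):
    training_loss: float
    epoch: int
    validation_accuracy: float


# The four rows logged in the table above.
LOGGED_ROWS = [
    LogRow(2.0466, 1, 0.1130),
    LogRow(0.0468, 50, 0.6116),
    LogRow(0.0292, 100, 0.5305),
    LogRow(0.0155, 150, 0.5319),
]


def best_by_accuracy(rows: List[LogRow]) -> LogRow:
    """Return the logged row with the highest validation accuracy."""
    return max(rows, key=lambda row: row.validation_accuracy)


def loss_down_accuracy_down(rows: List[LogRow]) -> List[Tuple[int, int]]:
    """Return (start_epoch, end_epoch) pairs of consecutive logged rows where
    training loss decreased but validation accuracy also decreased."""
    flagged = []
    for prev, curr in zip(rows, rows[1:]):
        loss_fell = curr.training_loss < prev.training_loss
        accuracy_fell = curr.validation_accuracy < prev.validation_accuracy
        if loss_fell and accuracy_fell:
            flagged.append((prev.epoch, curr.epoch))
    return flagged


if __name__ == "__main__":
    best = best_by_accuracy(LOGGED_ROWS)
    print(f"best logged epoch: {best.epoch} (accuracy {best.validation_accuracy})")
    print("loss fell while accuracy fell between:", loss_down_accuracy_down(LOGGED_ROWS))
```

Among the logged rows, epoch 50 has the highest validation accuracy, and accuracy falls between epochs 50 and 100 even though training loss continues to decrease. That pattern may indicate overfitting, which is a common motivation for selecting a checkpoint by validation accuracy instead of using the final epoch.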

Framework versions

- Transformers 4.21.3

- PyTorch 1.12.0

- Datasets 1.14.0

- Tokenizers 0.12.1