CrisisTransformers

CrisisTransformers is a family of pre-trained language models and sentence encoders introduced in the paper "CrisisTransformers: Pre-trained language models and sentence encoders for crisis-related social media texts". The models were trained following the RoBERTa pre-training procedure on a corpus of over 15 billion word tokens drawn from tweets about more than 30 crisis events, including disease outbreaks, natural disasters, and conflicts. See the associated paper for more details.

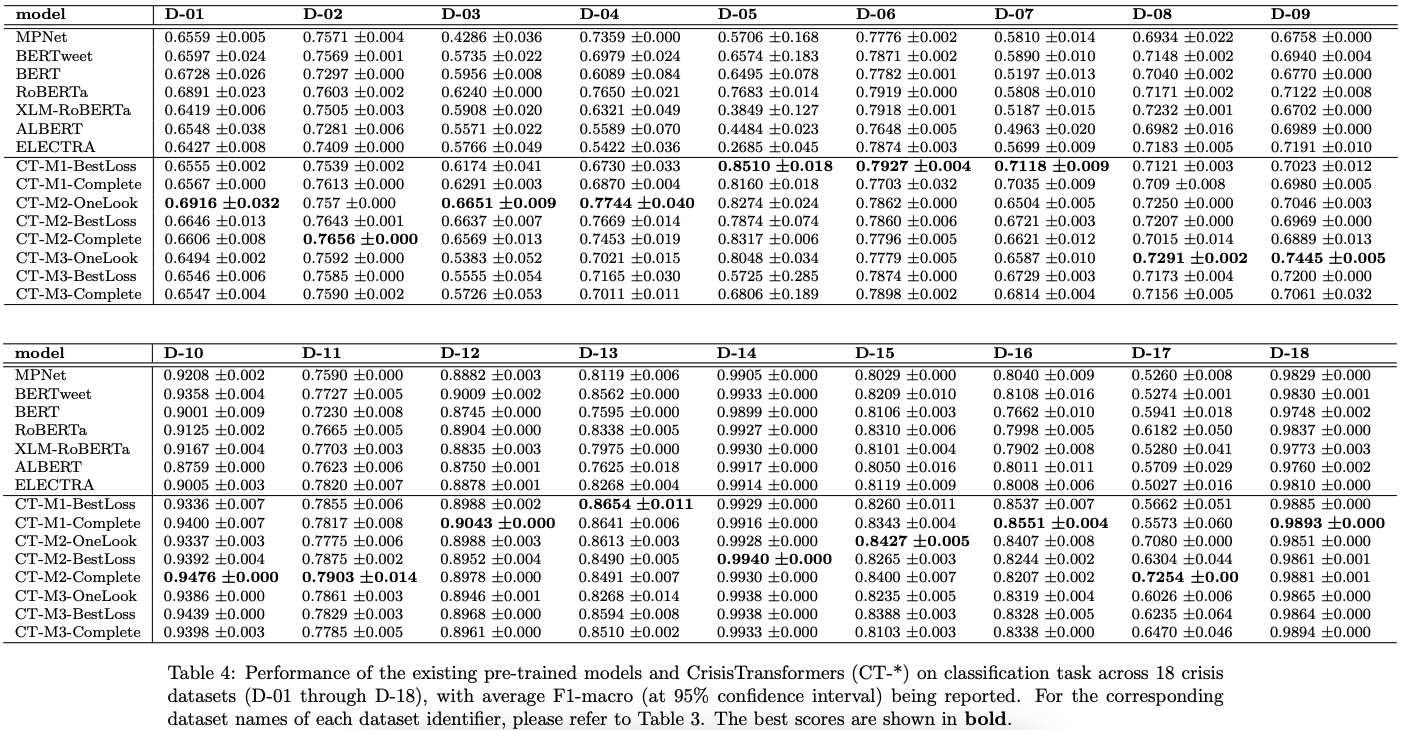

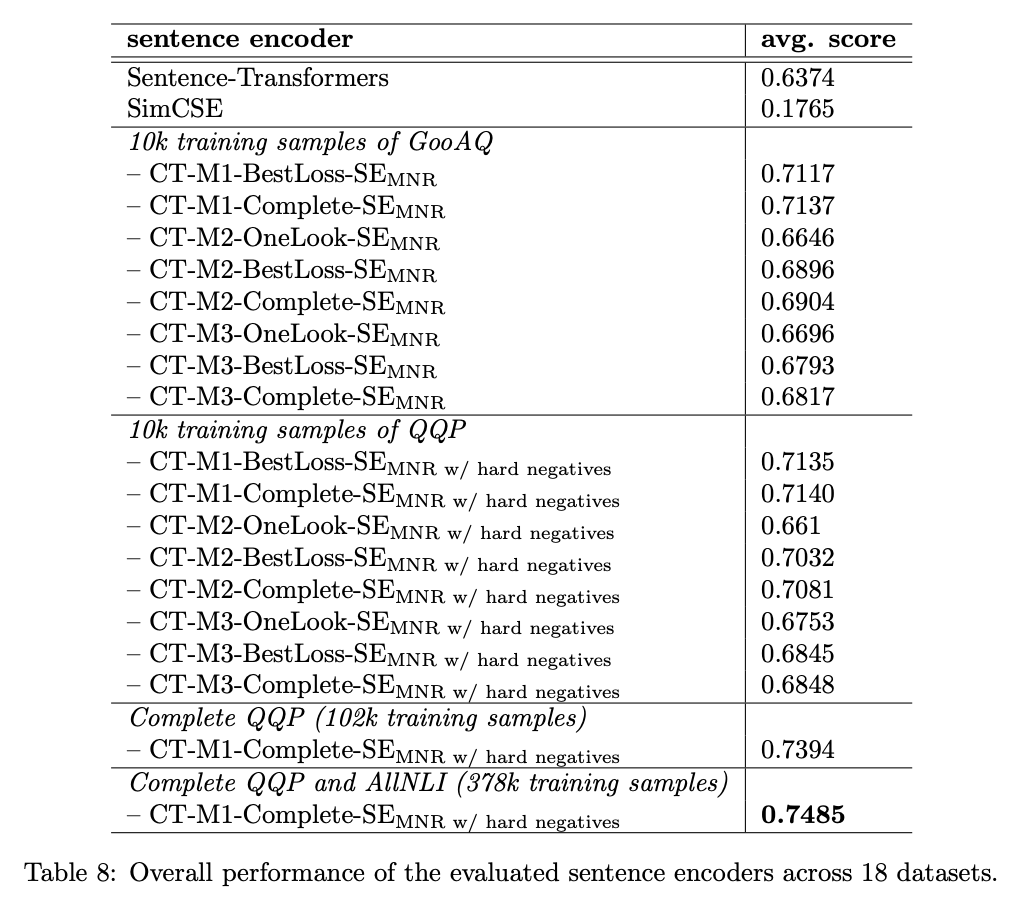

CrisisTransformers were evaluated on 18 public crisis-specific datasets against strong baselines, including BERT, RoBERTa, and BERTweet. Our pre-trained models outperform the baselines on all 18 datasets in classification tasks, and our best-performing sentence encoder outperforms the state of the art by more than 17% in sentence encoding tasks.

Uses

CrisisTransformers comprises 8 pre-trained models and a sentence encoder. The pre-trained models should be fine-tuned for downstream tasks, just like BERT and RoBERTa. The sentence encoder can be used out of the box, like models from Sentence-Transformers, to encode sentences for tasks such as semantic search, clustering, and topic modelling.
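The fine-tuning workflow can be sketched as follows. This minimal example assumes that the checkpoints load through the Hugging Face `transformers` Auto classes in the same way as any RoBERTa-style model. It attaches a fresh classification head and runs one training step. The helper names (`build_label_maps`, `load_classifier`, `training_step`) and the example labels are hypothetical. `crisistransformers/CT-M1-Complete` is one of the model IDs listed in this card.

```python
from typing import Dict, List, Tuple


def build_label_maps(labels: List[str]) -> Tuple[Dict[str, int], Dict[int, str]]:
    """Assign a stable integer id to each distinct label (sorted alphabetically)."""
    label2id = {label: i for i, label in enumerate(sorted(set(labels)))}
    id2label = {i: label for label, i in label2id.items()}
    return label2id, id2label


def load_classifier(model_id: str, labels: List[str]):
    """Load a checkpoint with a newly initialized classification head.

    Assumption: the checkpoint is compatible with the transformers Auto classes.
    Requires the `transformers` package and access to the Hugging Face Hub.
    """
    from transformers import AutoModelForSequenceClassification, AutoTokenizer

    label2id, id2label = build_label_maps(labels)
    tokenizer = AutoTokenizer.from_pretrained(model_id)
    model = AutoModelForSequenceClassification.from_pretrained(
        model_id,
        num_labels=len(label2id),
        label2id=label2id,
        id2label=id2label,
    )
    return tokenizer, model


def training_step(tokenizer, model, optimizer, texts: List[str], label_ids: List[int]) -> float:
    """Run one optimization step on a mini-batch and return the loss."""
    import torch

    model.train()
    batch = tokenizer(texts, padding=True, truncation=True, return_tensors="pt")
    # Passing `labels` makes the model compute a cross-entropy loss internally.
    outputs = model(**batch, labels=torch.tensor(label_ids))
    outputs.loss.backward()
    optimizer.step()
    optimizer.zero_grad()
    return outputs.loss.item()
```

For example, `tokenizer, model = load_classifier("crisistransformers/CT-M1-Complete", ["fire", "flood"])` loads a two-class model. A full run would wrap `training_step` in a loop over mini-batches with an optimizer such as `torch.optim.AdamW(model.parameters(), lr=2e-5)`. That learning rate is an illustrative value, not one reported in the paper.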

Models and naming conventions

CT-M1 models were trained from scratch for up to 40 epochs. CT-M2 models were initialized with pre-trained RoBERTa weights, and CT-M3 models with pre-trained BERTweet weights; both were trained for up to 20 epochs. The checkpoint suffixes are defined as follows: OneLook is the checkpoint after 1 epoch, BestLoss is the checkpoint with the lowest loss during training, and Complete is the checkpoint after all epochs. The SE suffix marks a sentence encoder.

| pre-trained model | source |
|---|---|
| CT-M1-BestLoss | crisistransformers/CT-M1-BestLoss |
| CT-M1-Complete | crisistransformers/CT-M1-Complete |
| CT-M2-OneLook | crisistransformers/CT-M2-OneLook |
| CT-M2-BestLoss | crisistransformers/CT-M2-BestLoss |
| CT-M2-Complete | crisistransformers/CT-M2-Complete |
| CT-M3-OneLook | crisistransformers/CT-M3-OneLook |
| CT-M3-BestLoss | crisistransformers/CT-M3-BestLoss |
| CT-M3-Complete | crisistransformers/CT-M3-Complete |
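The naming convention can be captured in a small data structure. This sketch, built around a hypothetical `parse_model_name` helper, decodes a model name into its initialization, maximum epoch count, checkpoint, and Hub ID, following the convention described above. It validates only the naming pattern, not whether a given checkpoint was actually published. For example, it accepts `CT-M1-OneLook`, which does not appear in the tables.

```python
from dataclasses import dataclass

HUB_ORG = "crisistransformers"

# Family -> (initialization, maximum training epochs), per the naming section.
FAMILIES = {
    "M1": ("none (trained from scratch)", 40),
    "M2": ("RoBERTa", 20),
    "M3": ("BERTweet", 20),
}

# Checkpoint suffix -> which checkpoint it denotes.
CHECKPOINTS = {
    "OneLook": "after 1 epoch",
    "BestLoss": "lowest loss during training",
    "Complete": "after all epochs",
}


@dataclass(frozen=True)
class ModelName:
    family: str
    checkpoint: str
    sentence_encoder: bool

    @property
    def hub_id(self) -> str:
        suffix = "-SE" if self.sentence_encoder else ""
        return f"{HUB_ORG}/CT-{self.family}-{self.checkpoint}{suffix}"

    @property
    def initialization(self) -> str:
        return FAMILIES[self.family][0]

    @property
    def max_epochs(self) -> int:
        return FAMILIES[self.family][1]


def parse_model_name(name: str) -> ModelName:
    """Split names like 'CT-M2-BestLoss' or 'CT-M1-Complete-SE' into parts."""
    parts = name.split("-")
    if len(parts) not in (3, 4) or parts[0] != "CT":
        raise ValueError(f"not a CrisisTransformers model name: {name!r}")
    family, checkpoint = parts[1], parts[2]
    sentence_encoder = len(parts) == 4
    if sentence_encoder and parts[3] != "SE":
        raise ValueError(f"unknown suffix in {name!r}")
    if family not in FAMILIES or checkpoint not in CHECKPOINTS:
        raise ValueError(f"unknown family or checkpoint in {name!r}")
    return ModelName(family, checkpoint, sentence_encoder)
```

For instance, `parse_model_name("CT-M3-OneLook").initialization` returns `"BERTweet"`.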

| sentence encoder | source |
|---|---|
| CT-M1-Complete-SE | crisistransformers/CT-M1-Complete-SE |
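Semantic search with the sentence encoder can be sketched in a few lines. This example assumes that `crisistransformers/CT-M1-Complete-SE` loads with the `sentence-transformers` library's `SentenceTransformer` class, consistent with the out-of-the-box usage described above. It ranks corpus sentences by cosine similarity to a query. The helpers `rank_by_similarity` and `semantic_search` are hypothetical names. The ranking step is plain NumPy, so it works with embeddings from any encoder.

```python
from typing import List, Sequence, Tuple

import numpy as np


def rank_by_similarity(query_vec, corpus_vecs, top_k: int = 3) -> List[Tuple[int, float]]:
    """Return (corpus index, cosine similarity) pairs, most similar first.

    Assumes no vector is all zeros (normalization would divide by zero).
    """
    q = np.asarray(query_vec, dtype=float)
    c = np.asarray(corpus_vecs, dtype=float)
    q = q / np.linalg.norm(q)
    c = c / np.linalg.norm(c, axis=1, keepdims=True)
    scores = c @ q  # cosine similarity of each corpus vector with the query
    order = np.argsort(-scores)[:top_k]
    return [(int(i), float(scores[i])) for i in order]


def semantic_search(
    query: str,
    corpus: Sequence[str],
    top_k: int = 3,
    model_id: str = "crisistransformers/CT-M1-Complete-SE",
) -> List[Tuple[str, float]]:
    """Encode the query and corpus with the sentence encoder, then rank.

    Requires the `sentence-transformers` package and access to the Hugging Face Hub.
    """
    from sentence_transformers import SentenceTransformer

    model = SentenceTransformer(model_id)
    embeddings = model.encode([query] + list(corpus))
    hits = rank_by_similarity(embeddings[0], embeddings[1:], top_k)
    return [(corpus[i], score) for i, score in hits]
```

The same embeddings can also feed clustering or topic-modelling pipelines. For those tasks, the ranking step would be replaced by a clustering algorithm applied to `model.encode(...)` output.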

Results

Here are the main results from the associated paper.

Citation

If you use CrisisTransformers, please cite the following paper:

@misc{lamsal2023crisistransformers,
  title={CrisisTransformers: Pre-trained language models and sentence encoders for crisis-related social media texts},
  author={Rabindra Lamsal and Maria Rodriguez Read and Shanika Karunasekera},
  year={2023},
  eprint={2309.05494},
  archivePrefix={arXiv},
  primaryClass={cs.CL}
}