repoName | tree | readme |

|---|---|---|

litmus-zhang_Near-Submission | README.md

asconfig.json

assembly

as_types.d.ts

index.ts

model.ts

tsconfig.json

package.json

| # Near-Submission

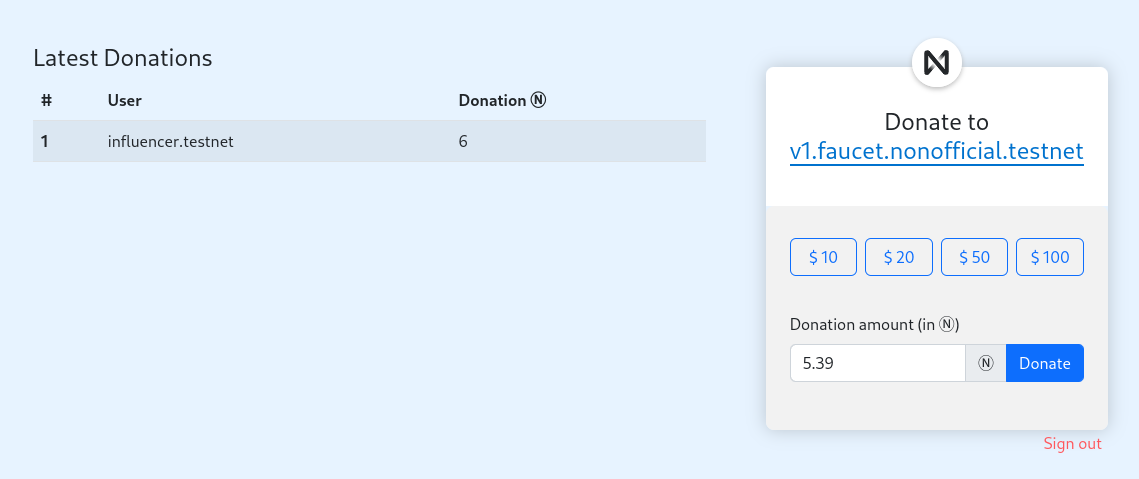

This is an e-commerce dapp built on the NEAR blockchain, where anyone can list products for sale and get paid directly to their account.

Link to demo: https://litmus-zhang.github.io/near-marketplace/

|

kateincoding_duckychain | Cargo.toml

README.md

neardev

dev-account.env

src

lib.rs

test_deploy.sh

| # duckychain

Yellow pages built in Rust with smart contracts.

# Presentation of our project:

[Presentation](https://www.canva.com/design/DAFH0rbSH0w/Za9FCwZD4FWsE-5fSdK3lA/view?utm_content=DAFH0rbSH0w&utm_campaign=designshare&utm_medium=link&utm_source=publishpresent)

# Contributors

* Katherine Soto | <img alt="GitHub" width="26px" src="https://raw.githubusercontent.com/github/explore/78df643247d429f6cc873026c0622819ad797942/topics/github/github.png" />[GitHub](https://github.com/kateincoding)

* Andre Iglesias | <img alt="GitHub" width="26px" src="https://raw.githubusercontent.com/github/explore/78df643247d429f6cc873026c0622819ad797942/topics/github/github.png" /> [GitHub](https://github.com/AndreIglesias/AndreIglesias)

|

Git-Leon_near-archive | near-project-template

as-pect.config.js

asconfig.json

assembly

__tests__

as-pect.d.ts

example.spec.ts

index.ts

package-lock.json

package.json

tsconfig.json

near-protocol-notes

1-monday

content.md

2-tuesday

content.md

3-wednesday

content.md

4-thursday

content.md

5-friday

content.md

README.md

_config.yml

index.md

overview.md

run.sh

near.my-first-unittest

README.md

as-pect.config.js

asconfig.json

assembly

__tests__

as-pect.d.ts

counter.spec.ts

counter

counter-test-implementation.ts

counter.spec.ts

example.spec.ts

as_types.d.ts

index.ts

tsconfig.json

package-lock.json

package.json

near.react.my-first-fullstack

.gitpod.yml

README.md

babel.config.js

contract

README.md

as-pect.config.js

asconfig.json

assembly

__tests__

as-pect.d.ts

main.spec.ts

as_types.d.ts

index.ts

tsconfig.json

compile.js

package-lock.json

package.json

package.json

src

App.js

__mocks__

fileMock.js

assets

logo-black.svg

logo-white.svg

config.js

global.css

index.html

index.js

jest.init.js

main.test.js

utils.js

wallet

login

index.html

near.react.token-sender

.gitpod.yml

.vscode

settings.json

README.md

babel.config.js

contract

README.md

as-pect.config.js

asconfig.json

assembly

TokenSenderFactory.ts

TokenSenderImpl.ts

TokenSenderInterface.ts

TokenSenderLogger.ts

__tests__

as-pect.d.ts

main.spec.ts

as_types.d.ts

index.ts

tsconfig.json

compile.js

package-lock.json

package.json

package.json

src

App.js

__mocks__

fileMock.js

assets

logo-black.svg

logo-white.svg

components

MetaData.js

NavbarComponent.js

TokenSender.js

config.js

global.css

index.html

index.js

jest.init.js

main.test.js

near-wallet-connection-model.js

near-wallet-connection.js

utils.js

wallet

login

index.html

near.reactjs.components

.gitpod.yml

README.md

babel.config.js

contract

README.md

as-pect.config.js

asconfig.json

assembly

__tests__

as-pect.d.ts

main.spec.ts

as_types.d.ts

index.ts

tsconfig.json

compile.js

package-lock.json

package.json

package.json

src

App.js

__mocks__

fileMock.js

assets

logo-black.svg

logo-white.svg

components

MetaData.js

NavbarComponent.js

config.js

global.css

index.html

index.js

jest.init.js

main.test.js

near-wallet-connection-model.js

near-wallet-connection.js

utils.js

wallet

login

index.html

near.reactjs.projecttemplate

.gitpod.yml

README.md

babel.config.js

contract

README.md

as-pect.config.js

asconfig.json

assembly

__tests__

as-pect.d.ts

main.spec.ts

as_types.d.ts

index.ts

tsconfig.json

compile.js

package-lock.json

package.json

package.json

src

App.js

__mocks__

fileMock.js

assets

logo-black.svg

logo-white.svg

config.js

global.css

index.html

index.js

jest.init.js

main.test.js

near-wallet-connection.js

utils.js

wallet

login

index.html

| [React]: https://reactjs.org/

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[jest]: https://jestjs.io/

[NEAR accounts]: https://docs.near.org/docs/concepts/account

[NEAR Wallet]: https://wallet.testnet.near.org/

[near-cli]: https://github.com/near/near-cli

[gh-pages]: https://github.com/tschaub/gh-pages

[tutorial]: https://curriculeon.github.io/Curriculeon/lectures/blockchain/near/my-first-react/content.html

# NEAR React Application

* **Objective**

* To create a reusable project template for NEAR-ReactJs applications

* **Description**

* This project was created by following [tutorial]

* This application was initialized with [create-near-app]

* **Prerequisite Software**

* [Node.js] ≥ 12

* `yarn`

## Quick Start

* Execute the command below to install `yarn` dependencies

* `yarn install`

* Execute the command below to run the local development server

* `yarn dev`

[<img src="./quickstart.gif">](./quickstart.gif)

## Application Architecture

* The "backend" code lives in the `/contract` folder.

* See the README there for more info.

* The frontend code lives in the `/src` folder.

* `/src/index.html` is a great place to start exploring.

* Note that it loads in `/src/index.js`, where you can learn how the frontend connects to the NEAR blockchain.

* Tests: there are different kinds of tests for the frontend and the smart contract.

* See `contract/README` for info about how it's tested.

* The frontend code gets tested with [jest].

* You can run both of these at once with `yarn run test`.

## Deployment

* Every smart contract in NEAR has its [own associated account][NEAR accounts].

* When you run `yarn dev`, your smart contract gets deployed to the live NEAR TestNet with a throwaway account.

* When you're ready to make it permanent, here's how.

### Step 1 - Create an account for the contract

* Create an account for the contract

* Each account on NEAR can have at most one contract deployed to it.

* If you've already created an account such as `your-name.testnet`, you can deploy your contract to `near.react.my-first-fullstack.your-name.testnet`.

* Assuming you've already created an account on [NEAR Wallet], here's how to create `near.react.my-first-fullstack.your-name.testnet`:

* Execute the command below to authorize NEAR

* `near login`

* Create a subaccount (replace `YOUR-NAME` below with your actual account name):

* `near create-account near.react.my-first-fullstack.YOUR-NAME.testnet --masterAccount YOUR-NAME.testnet`

### Step 2 - Set contract name in code

* Modify the line in `src/config.js` that sets the account name of the contract.

* Set the account name to the account id you used above.

* `const CONTRACT_NAME = process.env.CONTRACT_NAME || 'near.react.my-first-fullstack.YOUR-NAME.testnet'`
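The fallback expression in `src/config.js` can be illustrated with a minimal sketch. The helper name `resolveContractName` and the sample account id `dev-123.testnet` are hypothetical and only serve the illustration; the expression itself is the one quoted above.

```javascript
// Hypothetical helper mirroring the fallback expression from src/config.js.
// `env` is any object shaped like process.env.
function resolveContractName(env, fallback) {
  // `||` falls through on undefined AND on the empty string,
  // so CONTRACT_NAME="" also selects the fallback.
  return env.CONTRACT_NAME || fallback;
}

// Placeholder account id taken from the step above.
const FALLBACK = "near.react.my-first-fullstack.YOUR-NAME.testnet";

// With the variable unset, the hard-coded account id is used.
console.log(resolveContractName({}, FALLBACK));

// With the variable set, it takes precedence over the hard-coded id.
console.log(resolveContractName({ CONTRACT_NAME: "dev-123.testnet" }, FALLBACK));
```

Because the environment variable wins when it is set, a stale `CONTRACT_NAME` in the shell may silently override the id written in the file.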

### Step 3 - Deploy

* Execute the command below to build and deploy the smart contract to the NEAR TestNet

* `yarn deploy`

1. builds & deploys smart contract to NEAR TestNet

2. builds & deploys frontend code to GitHub using [gh-pages].

* This will only work if the project already has a repository set up on GitHub.

* Feel free to modify the `deploy` script in `package.json` to deploy elsewhere.

## Troubleshooting

* On Windows, if you're seeing an error containing `EPERM` it may be related to spaces in your path.

* Please see [this issue](https://github.com/zkat/npx/issues/209) for more details.

near.react.my-first-fullstack Smart Contract

==================

A [smart contract] written in [AssemblyScript] for an app initialized with [create-near-app]

Quick Start

===========

Before you compile this code, you will need to install [Node.js] ≥ 12

Exploring The Code

==================

1. The main smart contract code lives in `assembly/index.ts`. You can compile

it with the `./compile` script.

2. Tests: You can run smart contract tests with the `./test` script. This runs

standard AssemblyScript tests using [as-pect].

[smart contract]: https://docs.near.org/docs/develop/contracts/overview

[AssemblyScript]: https://www.assemblyscript.org/

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[as-pect]: https://www.npmjs.com/package/@as-pect/cli

# My First NEAR Unittest

* **Objective**

* to establish a reusable template for expressing simple unit test structures

* **Purpose**

* to become familiar with unit testing in the NEAR ecosystem

* **Description**

* this project was created by following [this tutorial](https://curriculeon.github.io/Curriculeon/lectures/blockchain/near/unittest/content.html)

## Prerequisites

* Ensure [NodeJS](https://curriculeon.github.io/Curriculeon/lectures/nodejs/installation/content.html) and [yarn](https://curriculeon.github.io/Curriculeon/lectures/nodejs/yarn-installation/content.html) are installed on your machine.

* Execute the command below to fetch all of the node modules specified in the `package.json`

* `npm install`

* Execute the command below to run the unit tests

* `yarn asp`

near.react.my-first-fullstack Smart Contract

==================

A [smart contract] written in [AssemblyScript] for an app initialized with [create-near-app]

Quick Start

===========

Before you compile this code, you will need to install [Node.js] ≥ 12

Exploring The Code

==================

1. The main smart contract code lives in `assembly/index.ts`. You can compile

it with the `./compile` script.

2. Tests: You can run smart contract tests with the `./test` script. This runs

standard AssemblyScript tests using [as-pect].

[smart contract]: https://docs.near.org/docs/develop/contracts/overview

[AssemblyScript]: https://www.assemblyscript.org/

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[as-pect]: https://www.npmjs.com/package/@as-pect/cli

near.react.my-first-fullstack

==================

This [React] app was initialized with [create-near-app]

Quick Start

===========

To run this project locally:

1. Prerequisites: Make sure you've installed [Node.js] ≥ 12

2. Install dependencies: `yarn install`

3. Run the local development server: `yarn dev` (see `package.json` for a

full list of `scripts` you can run with `yarn`)

Now you'll have a local development environment backed by the NEAR TestNet!

Go ahead and play with the app and the code. As you make code changes, the app will automatically reload.

Exploring The Code

==================

1. The "backend" code lives in the `/contract` folder. See the README there for

more info.

2. The frontend code lives in the `/src` folder. `/src/index.html` is a great

place to start exploring. Note that it loads in `/src/index.js`, where you

can learn how the frontend connects to the NEAR blockchain.

3. Tests: there are different kinds of tests for the frontend and the smart

contract. See `contract/README` for info about how it's tested. The frontend

code gets tested with [jest]. You can run both of these at once with `yarn

run test`.

Deploy

======

Every smart contract in NEAR has its [own associated account][NEAR accounts]. When you run `yarn dev`, your smart contract gets deployed to the live NEAR TestNet with a throwaway account. When you're ready to make it permanent, here's how.

Step 0: Install near-cli (optional)

-------------------------------------

[near-cli] is a command line interface (CLI) for interacting with the NEAR blockchain. It was installed to the local `node_modules` folder when you ran `yarn install`, but for best ergonomics you may want to install it globally:

yarn global add near-cli

Or, if you'd rather use the locally-installed version, you can prefix all `near` commands with `npx`

Ensure that it's installed with `near --version` (or `npx near --version`)

Step 1: Create an account for the contract

------------------------------------------

Each account on NEAR can have at most one contract deployed to it. If you've already created an account such as `your-name.testnet`, you can deploy your contract to `near.react.my-first-fullstack.your-name.testnet`. Assuming you've already created an account on [NEAR Wallet], here's how to create `near.react.my-first-fullstack.your-name.testnet`:

1. Authorize NEAR CLI, following the commands it gives you:

near login

2. Create a subaccount (replace `YOUR-NAME` below with your actual account name):

near create-account near.react.my-first-fullstack.YOUR-NAME.testnet --masterAccount YOUR-NAME.testnet

Step 2: set contract name in code

---------------------------------

Modify the line in `src/config.js` that sets the account name of the contract. Set it to the account id you used above.

const CONTRACT_NAME = process.env.CONTRACT_NAME || 'near.react.my-first-fullstack.YOUR-NAME.testnet'

Step 3: deploy!

---------------

One command:

yarn deploy

As you can see in `package.json`, this does two things:

1. builds & deploys smart contract to NEAR TestNet

2. builds & deploys frontend code to GitHub using [gh-pages]. This will only work if the project already has a repository set up on GitHub. Feel free to modify the `deploy` script in `package.json` to deploy elsewhere.

Troubleshooting

===============

On Windows, if you're seeing an error containing `EPERM` it may be related to spaces in your path. Please see [this issue](https://github.com/zkat/npx/issues/209) for more details.

[React]: https://reactjs.org/

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[jest]: https://jestjs.io/

[NEAR accounts]: https://docs.near.org/docs/concepts/account

[NEAR Wallet]: https://wallet.testnet.near.org/

[near-cli]: https://github.com/near/near-cli

[gh-pages]: https://github.com/tschaub/gh-pages

[tutorial]: https://curriculeon.github.io/Curriculeon/lectures/blockchain/near/my-first-react/content.html

# NEAR React Application

* **Objective**

* To create a reusable project template for NEAR-ReactJs applications

* **Description**

* This project was created by following [tutorial]

* This application was initialized with [create-near-app]

* **Prerequisite Software**

* [Node.js] ≥ 12

* `yarn`

## Quick Start

* Execute the command below to install `yarn` dependencies

* `yarn install`

* Execute the command below to run the local development server

* `yarn dev`

[<img src="./quickstart.gif">](./quickstart.gif)

## Application Architecture

* The "backend" code lives in the `/contract` folder.

* See the README there for more info.

* The frontend code lives in the `/src` folder.

* `/src/index.html` is a great place to start exploring.

* Note that it loads in `/src/index.js`, where you can learn how the frontend connects to the NEAR blockchain.

* Tests: there are different kinds of tests for the frontend and the smart contract.

* See `contract/README` for info about how it's tested.

* The frontend code gets tested with [jest].

* You can run both of these at once with `yarn run test`.

## Deployment

* Every smart contract in NEAR has its [own associated account][NEAR accounts].

* When you run `yarn dev`, your smart contract gets deployed to the live NEAR TestNet with a throwaway account.

* When you're ready to make it permanent, here's how.

### Step 1 - Create an account for the contract

* Create an account for the contract

* Each account on NEAR can have at most one contract deployed to it.

* If you've already created an account such as `your-name.testnet`, you can deploy your contract to `near.react.my-first-fullstack.your-name.testnet`.

* Assuming you've already created an account on [NEAR Wallet], here's how to create `near.react.my-first-fullstack.your-name.testnet`:

* Execute the command below to authorize NEAR

* `near login`

* Create a subaccount (replace `YOUR-NAME` below with your actual account name):

* `near create-account near.react.my-first-fullstack.YOUR-NAME.testnet --masterAccount YOUR-NAME.testnet`

### Step 2 - Set contract name in code

* Modify the line in `src/config.js` that sets the account name of the contract.

* Set the account name to the account id you used above.

* `const CONTRACT_NAME = process.env.CONTRACT_NAME || 'near.react.my-first-fullstack.YOUR-NAME.testnet'`

### Step 3 - Deploy

* Execute the command below to build and deploy the smart contract to the NEAR TestNet

* `yarn deploy`

1. builds & deploys smart contract to NEAR TestNet

2. builds & deploys frontend code to GitHub using [gh-pages].

* This will only work if the project already has a repository set up on GitHub.

* Feel free to modify the `deploy` script in `package.json` to deploy elsewhere.

## Send Funds

* Execute the command below from a browser terminal

* `await window.contract.addFunds({recipient:'test1', amount:10})`

## Troubleshooting

* On Windows, if you're seeing an error containing `EPERM` it may be related to spaces in your path.

* Please see [this issue](https://github.com/zkat/npx/issues/209) for more details.

near.react.my-first-fullstack Smart Contract

==================

A [smart contract] written in [AssemblyScript] for an app initialized with [create-near-app]

Quick Start

===========

Before you compile this code, you will need to install [Node.js] ≥ 12

Exploring The Code

==================

1. The main smart contract code lives in `assembly/index.ts`. You can compile

it with the `./compile` script.

2. Tests: You can run smart contract tests with the `./test` script. This runs

standard AssemblyScript tests using [as-pect].

[smart contract]: https://docs.near.org/docs/develop/contracts/overview

[AssemblyScript]: https://www.assemblyscript.org/

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[as-pect]: https://www.npmjs.com/package/@as-pect/cli

# Prerequisites

* Ensure [`Ruby` and `Jekyll` are installed](https://curriculeon.github.io/Curriculeon/lectures/jekyll/installation/content.html) on your machine.

* Execute the command below from the root directory of the project upon cloning

* `chmod u+x ./run.sh`

* `./run.sh`

* The application should serve on [`localhost:4000`](http://localhost:4000/) by default.

[React]: https://reactjs.org/

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[jest]: https://jestjs.io/

[NEAR accounts]: https://docs.near.org/docs/concepts/account

[NEAR Wallet]: https://wallet.testnet.near.org/

[near-cli]: https://github.com/near/near-cli

[gh-pages]: https://github.com/tschaub/gh-pages

[tutorial]: https://curriculeon.github.io/Curriculeon/lectures/blockchain/near/my-first-react/content.html

# NEAR React Application

* **Objective**

* To create a reusable project template for NEAR-ReactJs applications

* **Description**

* This project was created by following [tutorial]

* This application was initialized with [create-near-app]

* **Prerequisite Software**

* [Node.js] ≥ 12

* `yarn`

## Quick Start

* Execute the command below to install `yarn` dependencies

* `yarn install`

* Execute the command below to run the local development server

* `yarn dev`

[<img src="./quickstart.gif">](./quickstart.gif)

## Application Architecture

* The "backend" code lives in the `/contract` folder.

* See the README there for more info.

* The frontend code lives in the `/src` folder.

* `/src/index.html` is a great place to start exploring.

* Note that it loads in `/src/index.js`, where you can learn how the frontend connects to the NEAR blockchain.

* Tests: there are different kinds of tests for the frontend and the smart contract.

* See `contract/README` for info about how it's tested.

* The frontend code gets tested with [jest].

* You can run both of these at once with `yarn run test`.

## Deployment

* Every smart contract in NEAR has its [own associated account][NEAR accounts].

* When you run `yarn dev`, your smart contract gets deployed to the live NEAR TestNet with a throwaway account.

* When you're ready to make it permanent, here's how.

### Step 1 - Create an account for the contract

* Create an account for the contract

* Each account on NEAR can have at most one contract deployed to it.

* If you've already created an account such as `your-name.testnet`, you can deploy your contract to `near.react.my-first-fullstack.your-name.testnet`.

* Assuming you've already created an account on [NEAR Wallet], here's how to create `near.react.my-first-fullstack.your-name.testnet`:

* Execute the command below to authorize NEAR

* `near login`

* Create a subaccount (replace `YOUR-NAME` below with your actual account name):

* `near create-account near.react.my-first-fullstack.YOUR-NAME.testnet --masterAccount YOUR-NAME.testnet`

### Step 2 - Set contract name in code

* Modify the line in `src/config.js` that sets the account name of the contract.

* Set the account name to the account id you used above.

* `const CONTRACT_NAME = process.env.CONTRACT_NAME || 'near.react.my-first-fullstack.YOUR-NAME.testnet'`

### Step 3 - Deploy

* Execute the command below to build and deploy the smart contract to the NEAR TestNet

* `yarn deploy`

1. builds & deploys smart contract to NEAR TestNet

2. builds & deploys frontend code to GitHub using [gh-pages].

* This will only work if the project already has a repository set up on GitHub.

* Feel free to modify the `deploy` script in `package.json` to deploy elsewhere.

## Troubleshooting

* On Windows, if you're seeing an error containing `EPERM` it may be related to spaces in your path.

* Please see [this issue](https://github.com/zkat/npx/issues/209) for more details.

|

esaminu_test-rs-boilerplate-1003 | .eslintrc.yml

.github

ISSUE_TEMPLATE

01_BUG_REPORT.md

02_FEATURE_REQUEST.md

03_CODEBASE_IMPROVEMENT.md

04_SUPPORT_QUESTION.md

config.yml

PULL_REQUEST_TEMPLATE.md

labels.yml

workflows

codeql.yml

deploy-to-console.yml

labels.yml

lock.yml

pr-labels.yml

stale.yml

.gitpod.yml

README.md

contract

Cargo.toml

README.md

build.sh

deploy.sh

src

lib.rs

docs

CODE_OF_CONDUCT.md

CONTRIBUTING.md

SECURITY.md

frontend

App.js

assets

global.css

logo-black.svg

logo-white.svg

index.html

index.js

near-interface.js

near-wallet.js

package.json

start.sh

ui-components.js

integration-tests

Cargo.toml

src

tests.rs

package.json

| # Hello NEAR Contract

The smart contract exposes two methods to enable storing and retrieving a greeting in the NEAR network.

```rust

const DEFAULT_GREETING: &str = "Hello";

#[near_bindgen]

#[derive(BorshDeserialize, BorshSerialize)]

pub struct Contract {

greeting: String,

}

impl Default for Contract {

fn default() -> Self {

Self{greeting: DEFAULT_GREETING.to_string()}

}

}

#[near_bindgen]

impl Contract {

// Public: Returns the stored greeting, defaulting to 'Hello'

pub fn get_greeting(&self) -> String {

return self.greeting.clone();

}

// Public: Takes a greeting, such as 'howdy', and records it

pub fn set_greeting(&mut self, greeting: String) {

// Record a log permanently to the blockchain!

log!("Saving greeting {}", greeting);

self.greeting = greeting;

}

}

```
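To make the state behaviour concrete, here is a minimal sketch of the same logic as a plain JavaScript model. It is not NEAR SDK code and does not touch the network; the class name `GreetingModel` and its method names are hypothetical stand-ins for the Rust `Contract` above.

```javascript
// Plain JavaScript model of the contract's state; NOT NEAR SDK code.
const DEFAULT_GREETING = "Hello";

class GreetingModel {
  constructor() {
    // Mirrors `impl Default for Contract`: state starts at the default greeting.
    this.greeting = DEFAULT_GREETING;
  }

  // Read-only, like `get_greeting(&self)`.
  getGreeting() {
    return this.greeting;
  }

  // Mutating, like `set_greeting(&mut self, greeting: String)`.
  setGreeting(greeting) {
    this.greeting = greeting;
  }
}

const model = new GreetingModel();
const before = model.getGreeting(); // default value
model.setGreeting("howdy");
const after = model.getGreeting(); // value stored by setGreeting
console.log(before, after);
```

The split between a reading method and a mutating method corresponds to the `&self` and `&mut self` receivers in the Rust code.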

<br />

# Quickstart

1. Make sure you have installed [rust](https://rust.org/).

2. Install the [`NEAR CLI`](https://github.com/near/near-cli#setup)

<br />

## 1. Build and Deploy the Contract

You can automatically compile and deploy the contract in the NEAR testnet by running:

```bash

./deploy.sh

```

Once finished, check the `neardev/dev-account` file to find the account to which the contract was deployed:

```bash

cat ./neardev/dev-account

# e.g. dev-1659899566943-21539992274727

```

<br />

## 2. Retrieve the Greeting

`get_greeting` is a read-only method (aka `view` method).

`View` methods can be called for **free** by anyone, even people **without a NEAR account**!

```bash

# Use near-cli to get the greeting

near view <dev-account> get_greeting

```

<br />

## 3. Store a New Greeting

`set_greeting` changes the contract's state, so it is a `change` method.

`Change` methods can only be invoked using a NEAR account, since the account needs to pay GAS for the transaction.

```bash

# Use near-cli to set a new greeting

near call <dev-account> set_greeting '{"greeting":"howdy"}' --accountId <dev-account>

```

**Tip:** If you would like to call `set_greeting` using your own account, first log in to NEAR using:

```bash

# Use near-cli to log in to your NEAR account

near login

```

and then use the logged-in account to sign the transaction: `--accountId <your-account>`.
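The JSON passed to `near call` is keyed by the Rust parameter name, which is `greeting` in `set_greeting(&mut self, greeting: String)`. A minimal sketch of building that argument string follows; the helper names `greetingArgs` and `nearCallCommand` are hypothetical, and the sketch assumes a POSIX shell and a greeting that contains no single quote.

```javascript
// Hypothetical helper: builds the JSON argument string for `near call`.
// The key must match the Rust parameter name `greeting`.
function greetingArgs(greeting) {
  return JSON.stringify({ greeting });
}

// Wraps the JSON in single quotes for a POSIX shell.
// Assumption: the greeting itself contains no single quote.
function nearCallCommand(contractId, signerId, greeting) {
  return `near call ${contractId} set_greeting '${greetingArgs(greeting)}' --accountId ${signerId}`;
}

console.log(nearCallCommand("<dev-account>", "<your-account>", "howdy"));
```

Generating the JSON with `JSON.stringify` rather than by hand avoids quoting mistakes when the greeting contains double quotes or backslashes.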

<h1 align="center">

<a href="https://github.com/near/boilerplate-template-rs">

<picture>

<source media="(prefers-color-scheme: dark)" srcset="https://raw.githubusercontent.com/near/boilerplate-template-rs/main/docs/images/pagoda_logo_light.png">

<source media="(prefers-color-scheme: light)" srcset="https://raw.githubusercontent.com/near/boilerplate-template-rs/main/docs/images/pagoda_logo_dark.png">

<img alt="" src="https://raw.githubusercontent.com/near/boilerplate-template-rs/main/docs/images/pagoda_logo_dark.png">

</picture>

</a>

</h1>

<div align="center">

Rust Boilerplate Template

<br />

<br />

<a href="https://github.com/near/boilerplate-template-rs/issues/new?assignees=&labels=bug&template=01_BUG_REPORT.md&title=bug%3A+">Report a Bug</a>

·

<a href="https://github.com/near/boilerplate-template-rs/issues/new?assignees=&labels=enhancement&template=02_FEATURE_REQUEST.md&title=feat%3A+">Request a Feature</a>

·

<a href="https://github.com/near/boilerplate-template-rs/issues/new?assignees=&labels=question&template=04_SUPPORT_QUESTION.md&title=support%3A+">Ask a Question</a>

</div>

<div align="center">

<br />

</div>

<details open="open">

<summary>Table of Contents</summary>

- [About](#about)

- [Built With](#built-with)

- [Getting Started](#getting-started)

- [Prerequisites](#prerequisites)

- [Installation](#installation)

- [Usage](#usage)

- [Roadmap](#roadmap)

- [Support](#support)

- [Project assistance](#project-assistance)

- [Contributing](#contributing)

- [Authors & contributors](#authors--contributors)

- [Security](#security)

</details>

---

## About

This project is an easy-to-start React + Rust skeleton template for the Pagoda Gallery. It was initialized with [create-near-app]. Clone it and start building your own gallery project!

### Built With

[create-near-app], [amazing-github-template](https://github.com/dec0dOS/amazing-github-template)

Getting Started

==================

### Prerequisites

Make sure you have a [current version of Node.js](https://nodejs.org/en/about/releases/) installed – we are targeting versions `16+`.

Read about other [prerequisites](https://docs.near.org/develop/prerequisites) in our docs.
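If you want to check the Node.js requirement from a script, a minimal sketch could look like this. The function name `meetsMinimumNode` is hypothetical; the minimum of 16 is taken from the text above.

```javascript
// Hypothetical pre-flight check for the Node.js major version.
function meetsMinimumNode(version, minimumMajor) {
  // Accepts strings such as "16.14.0" or "v18.2.1".
  const major = Number.parseInt(version.replace(/^v/, "").split(".")[0], 10);
  return Number.isInteger(major) && major >= minimumMajor;
}

// process.versions.node holds the running version without a leading "v".
if (!meetsMinimumNode(process.versions.node, 16)) {
  console.warn(`Node.js ${process.versions.node} is older than 16`);
}
```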

### Installation

Install all dependencies:

npm install

Build your contract:

npm run build

Deploy your contract to TestNet with a temporary dev account:

npm run deploy

Usage

=====

Test your contract:

npm test

Start your frontend:

npm start

Exploring The Code

==================

1. The smart-contract code lives in the `/contract` folder. See the README there for

more info. In blockchain apps the smart contract is the "backend" of your app.

2. The frontend code lives in the `/frontend` folder. `/frontend/index.html` is a great

place to start exploring. Note that it loads `/frontend/index.js`,

which is your entry point for learning how the frontend connects to the NEAR blockchain.

3. Test your contract: `npm test` runs the tests in the `integration-tests` directory.

Deploy

======

Every smart contract in NEAR has its [own associated account][NEAR accounts].

When you run `npm run deploy`, your smart contract gets deployed to the live NEAR TestNet with a temporary dev account.

When you're ready to make it permanent, here's how:

Step 0: Install near-cli (optional)

-------------------------------------

[near-cli] is a command line interface (CLI) for interacting with the NEAR blockchain. It was installed to the local `node_modules` folder when you ran `npm install`, but for best ergonomics you may want to install it globally:

npm install --global near-cli

Or, if you'd rather use the locally-installed version, you can prefix all `near` commands with `npx`

Ensure that it's installed with `near --version` (or `npx near --version`)

Step 1: Create an account for the contract

------------------------------------------

Each account on NEAR can have at most one contract deployed to it. If you've already created an account such as `your-name.testnet`, you can deploy your contract to `near-blank-project.your-name.testnet`. Assuming you've already created an account on [NEAR Wallet], here's how to create `near-blank-project.your-name.testnet`:

1. Authorize NEAR CLI, following the commands it gives you:

near login

2. Create a subaccount (replace `YOUR-NAME` below with your actual account name):

near create-account near-blank-project.YOUR-NAME.testnet --masterAccount YOUR-NAME.testnet
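The naming scheme in this step can be sketched in a few lines. The helper `subaccountId` is hypothetical, and the sketch assumes the contract account sits exactly one level below your own account, as in the example above.

```javascript
// Hypothetical helper: composes a sub-account id as `<prefix>.<parent>`.
function subaccountId(prefix, parentAccount) {
  // A dot inside the prefix would add another account level, so reject it here.
  if (prefix.length === 0 || prefix.includes(".")) {
    throw new Error(`invalid sub-account prefix: "${prefix}"`);
  }
  return `${prefix}.${parentAccount}`;
}

const parentAccount = "your-name.testnet"; // placeholder account from the text
const contractId = subaccountId("near-blank-project", parentAccount);

console.log(contractId);
console.log(`near create-account ${contractId} --masterAccount ${parentAccount}`);
```

The same `contractId` is the value to pass as `--accountId` when deploying and to use for `CONTRACT_NAME` in the frontend configuration.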

Step 2: deploy the contract

---------------------------

Use the CLI to deploy the contract to TestNet with your account ID.

Replace `PATH_TO_WASM_FILE` with the path to the `.wasm` file generated in the `contract` build directory.

near deploy --accountId near-blank-project.YOUR-NAME.testnet --wasmFile PATH_TO_WASM_FILE

Step 3: set contract name in your frontend code

-----------------------------------------------

Modify the line in `src/config.js` that sets the account name of the contract. Set it to the account id you used above.

const CONTRACT_NAME = process.env.CONTRACT_NAME || 'near-blank-project.YOUR-NAME.testnet'

Troubleshooting

===============

On Windows, if you're seeing an error containing `EPERM` it may be related to spaces in your path. Please see [this issue](https://github.com/zkat/npx/issues/209) for more details.

[create-near-app]: https://github.com/near/create-near-app

[Node.js]: https://nodejs.org/en/download/package-manager/

[jest]: https://jestjs.io/

[NEAR accounts]: https://docs.near.org/concepts/basics/account

[NEAR Wallet]: https://wallet.testnet.near.org/

[near-cli]: https://github.com/near/near-cli

[gh-pages]: https://github.com/tschaub/gh-pages

## Roadmap

See the [open issues](https://github.com/near/boilerplate-template-rs/issues) for a list of proposed features (and known issues).

- [Top Feature Requests](https://github.com/near/boilerplate-template-rs/issues?q=label%3Aenhancement+is%3Aopen+sort%3Areactions-%2B1-desc) (Add your votes using the 👍 reaction)

- [Top Bugs](https://github.com/near/boilerplate-template-rs/issues?q=is%3Aissue+is%3Aopen+label%3Abug+sort%3Areactions-%2B1-desc) (Add your votes using the 👍 reaction)

- [Newest Bugs](https://github.com/near/boilerplate-template-rs/issues?q=is%3Aopen+is%3Aissue+label%3Abug)

## Support

Reach out to the maintainer:

- [GitHub issues](https://github.com/near/boilerplate-template-rs/issues/new?assignees=&labels=question&template=04_SUPPORT_QUESTION.md&title=support%3A+)

## Project assistance

If you want to say **thank you** and/or support active development of Rust Boilerplate Template:

- Add a [GitHub Star](https://github.com/near/boilerplate-template-rs) to the project.

- Tweet about the Rust Boilerplate Template.

- Write interesting articles about the project on [Dev.to](https://dev.to/), [Medium](https://medium.com/) or your personal blog.

Together, we can make Rust Boilerplate Template **better**!

## Contributing

First off, thanks for taking the time to contribute! Contributions are what make the open-source community such an amazing place to learn, inspire, and create. Any contributions you make will benefit everybody else and are **greatly appreciated**.

Please read [our contribution guidelines](docs/CONTRIBUTING.md), and thank you for being involved!

## Authors & contributors

The original setup of this repository is by [Dmitriy Sheleg](https://github.com/shelegdmitriy).

For a full list of all authors and contributors, see [the contributors page](https://github.com/near/boilerplate-template-rs/contributors).

## Security

Rust Boilerplate Template follows good security practices, but 100% security cannot be assured.

Rust Boilerplate Template is provided **"as is"** without any **warranty**. Use at your own risk.

_For more information and to report security issues, please refer to our [security documentation](docs/SECURITY.md)._

|

harshD42_near-evoting | .gitpod.yml

README.md

babel.config.js

contract

README.md

as-pect.config.js

asconfig.json

assembly

__tests__

as-pect.d.ts

main.spec.ts

as_types.d.ts

index.ts

tsconfig.json

compile.js

node_modules

.bin

acorn.cmd

acorn.ps1

asb.cmd

asb.ps1

asbuild.cmd

asbuild.ps1

asc.cmd

asc.ps1

asinit.cmd

asinit.ps1

asp.cmd

asp.ps1

aspect.cmd

aspect.ps1

assemblyscript-build.cmd

assemblyscript-build.ps1

eslint.cmd

eslint.ps1

esparse.cmd

esparse.ps1

esvalidate.cmd

esvalidate.ps1

js-yaml.cmd

js-yaml.ps1

mkdirp.cmd

mkdirp.ps1

near-vm-as.cmd

near-vm-as.ps1

near-vm.cmd

near-vm.ps1

nearley-railroad.cmd

nearley-railroad.ps1

nearley-test.cmd

nearley-test.ps1

nearley-unparse.cmd

nearley-unparse.ps1

nearleyc.cmd

nearleyc.ps1

node-which.cmd

node-which.ps1

resolve.cmd

resolve.ps1

rimraf.cmd

rimraf.ps1

semver.cmd

semver.ps1

shjs.cmd

shjs.ps1

wasm-opt.cmd

wasm-opt.ps1

.package-lock.json

@as-covers

assembly

CONTRIBUTING.md

README.md

index.ts

package.json

tsconfig.json

core

CONTRIBUTING.md

README.md

package.json

glue

README.md

lib

index.d.ts

index.js

package.json

transform

README.md

lib

index.d.ts

index.js

util.d.ts

util.js

node_modules

visitor-as

.github

workflows

test.yml

README.md

as

index.d.ts

index.js

asconfig.json

dist

astBuilder.d.ts

astBuilder.js

base.d.ts

base.js

baseTransform.d.ts

baseTransform.js

decorator.d.ts

decorator.js

examples

capitalize.d.ts

capitalize.js

exportAs.d.ts

exportAs.js

functionCallTransform.d.ts

functionCallTransform.js

includeBytesTransform.d.ts

includeBytesTransform.js

list.d.ts

list.js

toString.d.ts

toString.js

index.d.ts

index.js

path.d.ts

path.js

simpleParser.d.ts

simpleParser.js

transformRange.d.ts

transformRange.js

transformer.d.ts

transformer.js

utils.d.ts

utils.js

visitor.d.ts

visitor.js

package.json

tsconfig.json

package.json

@as-pect

assembly

README.md

assembly

index.ts

internal

Actual.ts

Expectation.ts

Expected.ts

Reflect.ts

ReflectedValueType.ts

Test.ts

assert.ts

call.ts

comparison

toIncludeComparison.ts

toIncludeEqualComparison.ts

log.ts

noOp.ts

package.json

types

as-pect.d.ts

as-pect.portable.d.ts

env.d.ts

cli

README.md

init

as-pect.config.js

env.d.ts

example.spec.ts

init-types.d.ts

portable-types.d.ts

lib

as-pect.cli.amd.d.ts

as-pect.cli.amd.js

help.d.ts

help.js

index.d.ts

index.js

init.d.ts

init.js

portable.d.ts

portable.js

run.d.ts

run.js

test.d.ts

test.js

types.d.ts

types.js

util

CommandLineArg.d.ts

CommandLineArg.js

IConfiguration.d.ts

IConfiguration.js

asciiArt.d.ts

asciiArt.js

collectReporter.d.ts

collectReporter.js

getTestEntryFiles.d.ts

getTestEntryFiles.js

removeFile.d.ts

removeFile.js

strings.d.ts

strings.js

writeFile.d.ts

writeFile.js

worklets

ICommand.d.ts

ICommand.js

compiler.d.ts

compiler.js

package.json

core

README.md

lib

as-pect.core.amd.d.ts

as-pect.core.amd.js

index.d.ts

index.js

reporter

CombinationReporter.d.ts

CombinationReporter.js

EmptyReporter.d.ts

EmptyReporter.js

IReporter.d.ts

IReporter.js

SummaryReporter.d.ts

SummaryReporter.js

VerboseReporter.d.ts

VerboseReporter.js

test

IWarning.d.ts

IWarning.js

TestContext.d.ts

TestContext.js

TestNode.d.ts

TestNode.js

transform

assemblyscript.d.ts

assemblyscript.js

createAddReflectedValueKeyValuePairsMember.d.ts

createAddReflectedValueKeyValuePairsMember.js

createGenericTypeParameter.d.ts

createGenericTypeParameter.js

createStrictEqualsMember.d.ts

createStrictEqualsMember.js

emptyTransformer.d.ts

emptyTransformer.js

hash.d.ts

hash.js

index.d.ts

index.js

util

IAspectExports.d.ts

IAspectExports.js

IWriteable.d.ts

IWriteable.js

ReflectedValue.d.ts

ReflectedValue.js

TestNodeType.d.ts

TestNodeType.js

rTrace.d.ts

rTrace.js

stringifyReflectedValue.d.ts

stringifyReflectedValue.js

timeDifference.d.ts

timeDifference.js

wasmTools.d.ts

wasmTools.js

package.json

csv-reporter

index.ts

lib

as-pect.csv-reporter.amd.d.ts

as-pect.csv-reporter.amd.js

index.d.ts

index.js

package.json

readme.md

tsconfig.json

json-reporter

index.ts

lib

as-pect.json-reporter.amd.d.ts

as-pect.json-reporter.amd.js

index.d.ts

index.js

package.json

readme.md

tsconfig.json

snapshots

__tests__

snapshot.spec.ts

jest.config.js

lib

Snapshot.d.ts

Snapshot.js

SnapshotDiff.d.ts

SnapshotDiff.js

SnapshotDiffResult.d.ts

SnapshotDiffResult.js

as-pect.core.amd.d.ts

as-pect.core.amd.js

index.d.ts

index.js

parser

grammar.d.ts

grammar.js

package.json

src

Snapshot.ts

SnapshotDiff.ts

SnapshotDiffResult.ts

index.ts

parser

grammar.ts

tsconfig.json

@assemblyscript

loader

README.md

index.d.ts

index.js

package.json

umd

index.d.ts

index.js

package.json

@babel

code-frame

README.md

lib

index.js

package.json

helper-validator-identifier

README.md

lib

identifier.js

index.js

keyword.js

package.json

scripts

generate-identifier-regex.js

highlight

README.md

lib

index.js

node_modules

ansi-styles

index.js

package.json

readme.md

chalk

index.js

package.json

readme.md

templates.js

types

index.d.ts

color-convert

CHANGELOG.md

README.md

conversions.js

index.js

package.json

route.js

color-name

.eslintrc.json

README.md

index.js

package.json

test.js

escape-string-regexp

index.js

package.json

readme.md

has-flag

index.js

package.json

readme.md

supports-color

browser.js

index.js

package.json

readme.md

package.json

@eslint

eslintrc

CHANGELOG.md

README.md

conf

config-schema.js

environments.js

eslint-all.js

eslint-recommended.js

lib

cascading-config-array-factory.js

config-array-factory.js

config-array

config-array.js

config-dependency.js

extracted-config.js

ignore-pattern.js

index.js

override-tester.js

flat-compat.js

index.js

shared

ajv.js

config-ops.js

config-validator.js

deprecation-warnings.js

naming.js

relative-module-resolver.js

types.js

package.json

@humanwhocodes

config-array

README.md

api.js

package.json

object-schema

.eslintrc.js

.github

workflows

nodejs-test.yml

release-please.yml

CHANGELOG.md

README.md

package.json

src

index.js

merge-strategy.js

object-schema.js

validation-strategy.js

tests

merge-strategy.js

object-schema.js

validation-strategy.js

acorn-jsx

README.md

index.d.ts

index.js

package.json

xhtml.js

acorn

CHANGELOG.md

README.md

dist

acorn.d.ts

acorn.js

acorn.mjs.d.ts

bin.js

package.json

ajv

.tonic_example.js

README.md

dist

ajv.bundle.js

ajv.min.js

lib

ajv.d.ts

ajv.js

cache.js

compile

async.js

equal.js

error_classes.js

formats.js

index.js

resolve.js

rules.js

schema_obj.js

ucs2length.js

util.js

data.js

definition_schema.js

dotjs

README.md

_limit.js

_limitItems.js

_limitLength.js

_limitProperties.js

allOf.js

anyOf.js

comment.js

const.js

contains.js

custom.js

dependencies.js

enum.js

format.js

if.js

index.js

items.js

multipleOf.js

not.js

oneOf.js

pattern.js

properties.js

propertyNames.js

ref.js

required.js

uniqueItems.js

validate.js

keyword.js

refs

data.json

json-schema-draft-04.json

json-schema-draft-06.json

json-schema-draft-07.json

json-schema-secure.json

package.json

scripts

.eslintrc.yml

bundle.js

compile-dots.js

ansi-colors

README.md

index.js

package.json

symbols.js

types

index.d.ts

ansi-regex

index.d.ts

index.js

package.json

readme.md

ansi-styles

index.d.ts

index.js

package.json

readme.md

argparse

CHANGELOG.md

README.md

index.js

lib

action.js

action

append.js

append

constant.js

count.js

help.js

store.js

store

constant.js

false.js

true.js

subparsers.js

version.js

action_container.js

argparse.js

argument

error.js

exclusive.js

group.js

argument_parser.js

const.js

help

added_formatters.js

formatter.js

namespace.js

utils.js

package.json

as-bignum

README.md

assembly

__tests__

as-pect.d.ts

i128.spec.as.ts

safe_u128.spec.as.ts

u128.spec.as.ts

u256.spec.as.ts

utils.ts

fixed

fp128.ts

fp256.ts

index.ts

safe

fp128.ts

fp256.ts

types.ts

globals.ts

index.ts

integer

i128.ts

i256.ts

index.ts

safe

i128.ts

i256.ts

i64.ts

index.ts

u128.ts

u256.ts

u64.ts

u128.ts

u256.ts

tsconfig.json

utils.ts

package.json

asbuild

README.md

dist

cli.d.ts

cli.js

commands

build.d.ts

build.js

fmt.d.ts

fmt.js

index.d.ts

index.js

init

cmd.d.ts

cmd.js

files

asconfigJson.d.ts

asconfigJson.js

aspecConfig.d.ts

aspecConfig.js

assembly_files.d.ts

assembly_files.js

eslintConfig.d.ts

eslintConfig.js

gitignores.d.ts

gitignores.js

index.d.ts

index.js

indexJs.d.ts

indexJs.js

packageJson.d.ts

packageJson.js

test_files.d.ts

test_files.js

index.d.ts

index.js

interfaces.d.ts

interfaces.js

run.d.ts

run.js

test.d.ts

test.js

index.d.ts

index.js

main.d.ts

main.js

utils.d.ts

utils.js

index.js

node_modules

cliui

CHANGELOG.md

LICENSE.txt

README.md

index.js

package.json

wrap-ansi

index.js

package.json

readme.md

y18n

CHANGELOG.md

README.md

index.js

package.json

yargs-parser

CHANGELOG.md

LICENSE.txt

README.md

index.js

lib

tokenize-arg-string.js

package.json

yargs

CHANGELOG.md

README.md

build

lib

apply-extends.d.ts

apply-extends.js

argsert.d.ts

argsert.js

command.d.ts

command.js

common-types.d.ts

common-types.js

completion-templates.d.ts

completion-templates.js

completion.d.ts

completion.js

is-promise.d.ts

is-promise.js

levenshtein.d.ts

levenshtein.js

middleware.d.ts

middleware.js

obj-filter.d.ts

obj-filter.js

parse-command.d.ts

parse-command.js

process-argv.d.ts

process-argv.js

usage.d.ts

usage.js

validation.d.ts

validation.js

yargs.d.ts

yargs.js

yerror.d.ts

yerror.js

index.js

locales

be.json

de.json

en.json

es.json

fi.json

fr.json

hi.json

hu.json

id.json

it.json

ja.json

ko.json

nb.json

nl.json

nn.json

pirate.json

pl.json

pt.json

pt_BR.json

ru.json

th.json

tr.json

zh_CN.json

zh_TW.json

package.json

yargs.js

package.json

assemblyscript-json

.eslintrc.js

.travis.yml

README.md

assembly

JSON.ts

decoder.ts

encoder.ts

index.ts

tsconfig.json

util

index.ts

index.js

package.json

temp-docs

README.md

classes

decoderstate.md

json.arr.md

json.bool.md

json.float.md

json.integer.md

json.null.md

json.num.md

json.obj.md

json.str.md

json.value.md

jsondecoder.md

jsonencoder.md

jsonhandler.md

throwingjsonhandler.md

modules

json.md

assemblyscript-regex

.eslintrc.js

.github

workflows

benchmark.yml

release.yml

test.yml

README.md

as-pect.config.js

asconfig.empty.json

asconfig.json

assembly

__spec_tests__

generated.spec.ts

__tests__

alterations.spec.ts

as-pect.d.ts

boundary-assertions.spec.ts

capture-group.spec.ts

character-classes.spec.ts

character-sets.spec.ts

characters.ts

empty.ts

quantifiers.spec.ts

range-quantifiers.spec.ts

regex.spec.ts

utils.ts

char.ts

env.ts

index.ts

nfa

matcher.ts

nfa.ts

types.ts

walker.ts

parser

node.ts

parser.ts

string-iterator.ts

walker.ts

regexp.ts

tsconfig.json

util.ts

benchmark

benchmark.js

package.json

spec

test-generator.js

ts

index.ts

tsconfig.json

assemblyscript-temporal

.github

workflows

node.js.yml

release.yml

.vscode

launch.json

README.md

as-pect.config.js

asconfig.empty.json

asconfig.json

assembly

__tests__

README.md

as-pect.d.ts

date.spec.ts

duration.spec.ts

empty.ts

plaindate.spec.ts

plaindatetime.spec.ts

plainmonthday.spec.ts

plaintime.spec.ts

plainyearmonth.spec.ts

timezone.spec.ts

zoneddatetime.spec.ts

constants.ts

date.ts

duration.ts

enums.ts

env.ts

index.ts

instant.ts

now.ts

plaindate.ts

plaindatetime.ts

plainmonthday.ts

plaintime.ts

plainyearmonth.ts

timezone.ts

tsconfig.json

tz

__tests__

index.spec.ts

rule.spec.ts

zone.spec.ts

iana.ts

index.ts

rule.ts

zone.ts

utils.ts

zoneddatetime.ts

development.md

package.json

tzdb

README.md

iana

theory.html

zoneinfo2tdf.pl

assemblyscript

README.md

cli

README.md

asc.d.ts

asc.js

asc.json

shim

README.md

fs.js

path.js

process.js

transform.d.ts

transform.js

util

colors.d.ts

colors.js

find.d.ts

find.js

mkdirp.d.ts

mkdirp.js

options.d.ts

options.js

utf8.d.ts

utf8.js

dist

asc.js

assemblyscript.d.ts

assemblyscript.js

sdk.js

index.d.ts

index.js

lib

loader

README.md

index.d.ts

index.js

package.json

umd

index.d.ts

index.js

package.json

rtrace

README.md

bin

rtplot.js

index.d.ts

index.js

package.json

umd

index.d.ts

index.js

package.json

package-lock.json

package.json

std

README.md

assembly.json

assembly

array.ts

arraybuffer.ts

atomics.ts

bindings

Date.ts

Math.ts

Reflect.ts

asyncify.ts

console.ts

wasi.ts

wasi_snapshot_preview1.ts

wasi_unstable.ts

builtins.ts

compat.ts

console.ts

crypto.ts

dataview.ts

date.ts

diagnostics.ts

error.ts

function.ts

index.d.ts

iterator.ts

map.ts

math.ts

memory.ts

number.ts

object.ts

polyfills.ts

process.ts

reference.ts

regexp.ts

rt.ts

rt

README.md

common.ts

index-incremental.ts

index-minimal.ts

index-stub.ts

index.d.ts

itcms.ts

rtrace.ts

stub.ts

tcms.ts

tlsf.ts

set.ts

shared

feature.ts

target.ts

tsconfig.json

typeinfo.ts

staticarray.ts

string.ts

symbol.ts

table.ts

tsconfig.json

typedarray.ts

uri.ts

util

casemap.ts

error.ts

hash.ts

math.ts

memory.ts

number.ts

sort.ts

string.ts

uri.ts

vector.ts

wasi

index.ts

portable.json

portable

index.d.ts

index.js

types

assembly

index.d.ts

package.json

portable

index.d.ts

package.json

tsconfig-base.json

astral-regex

index.d.ts

index.js

package.json

readme.md

axios

CHANGELOG.md

README.md

UPGRADE_GUIDE.md

dist

axios.js

axios.min.js

index.d.ts

index.js

lib

adapters

README.md

http.js

xhr.js

axios.js

cancel

Cancel.js

CancelToken.js

isCancel.js

core

Axios.js

InterceptorManager.js

README.md

buildFullPath.js

createError.js

dispatchRequest.js

enhanceError.js

mergeConfig.js

settle.js

transformData.js

defaults.js

helpers

README.md

bind.js

buildURL.js

combineURLs.js

cookies.js

deprecatedMethod.js

isAbsoluteURL.js

isURLSameOrigin.js

normalizeHeaderName.js

parseHeaders.js

spread.js

utils.js

package.json

balanced-match

.github

FUNDING.yml

LICENSE.md

README.md

index.js

package.json

base-x

LICENSE.md

README.md

package.json

src

index.d.ts

index.js

binary-install

README.md

example

binary.js

package.json

run.js

index.js

package.json

src

binary.js

binaryen

README.md

index.d.ts

package-lock.json

package.json

wasm.d.ts

bn.js

CHANGELOG.md

README.md

lib

bn.js

package.json

brace-expansion

README.md

index.js

package.json

bs58

CHANGELOG.md

README.md

index.js

package.json

callsites

index.d.ts

index.js

package.json

readme.md

camelcase

index.d.ts

index.js

package.json

readme.md

chalk

index.d.ts

package.json

readme.md

source

index.js

templates.js

util.js

chownr

README.md

chownr.js

package.json

cliui

CHANGELOG.md

LICENSE.txt

README.md

build

lib

index.js

string-utils.js

package.json

color-convert

CHANGELOG.md

README.md

conversions.js

index.js

package.json

route.js

color-name

README.md

index.js

package.json

commander

CHANGELOG.md

Readme.md

index.js

package.json

typings

index.d.ts

concat-map

.travis.yml

example

map.js

index.js

package.json

test

map.js

cross-spawn

CHANGELOG.md

README.md

index.js

lib

enoent.js

parse.js

util

escape.js

readShebang.js

resolveCommand.js

package.json

csv-stringify

README.md

lib

browser

index.js

sync.js

es5

index.d.ts

index.js

sync.d.ts

sync.js

index.d.ts

index.js

sync.d.ts

sync.js

package.json

debug

README.md

package.json

src

browser.js

common.js

index.js

node.js

decamelize

index.js

package.json

readme.md

deep-is

.travis.yml

example

cmp.js

index.js

package.json

test

NaN.js

cmp.js

neg-vs-pos-0.js

diff

CONTRIBUTING.md

README.md

dist

diff.js

lib

convert

dmp.js

xml.js

diff

array.js

base.js

character.js

css.js

json.js

line.js

sentence.js

word.js

index.es6.js

index.js

patch

apply.js

create.js

merge.js

parse.js

util

array.js

distance-iterator.js

params.js

package.json

release-notes.md

runtime.js

discontinuous-range

.travis.yml

README.md

index.js

package.json

test

main-test.js

doctrine

CHANGELOG.md

README.md

lib

doctrine.js

typed.js

utility.js

package.json

emoji-regex

LICENSE-MIT.txt

README.md

es2015

index.js

text.js

index.d.ts

index.js

package.json

text.js

enquirer

CHANGELOG.md

README.md

index.d.ts

index.js

lib

ansi.js

combos.js

completer.js

interpolate.js

keypress.js

placeholder.js

prompt.js

prompts

autocomplete.js

basicauth.js

confirm.js

editable.js

form.js

index.js

input.js

invisible.js

list.js

multiselect.js

numeral.js

password.js

quiz.js

scale.js

select.js

snippet.js

sort.js

survey.js

text.js

toggle.js

render.js

roles.js

state.js

styles.js

symbols.js

theme.js

timer.js

types

array.js

auth.js

boolean.js

index.js

number.js

string.js

utils.js

package.json

env-paths

index.d.ts

index.js

package.json

readme.md

escalade

dist

index.js

index.d.ts

package.json

readme.md

sync

index.d.ts

index.js

escape-string-regexp

index.d.ts

index.js

package.json

readme.md

eslint-scope

CHANGELOG.md

README.md

lib

definition.js

index.js

pattern-visitor.js

reference.js

referencer.js

scope-manager.js

scope.js

variable.js

package.json

eslint-utils

README.md

index.js

node_modules

eslint-visitor-keys

CHANGELOG.md

README.md

lib

index.js

visitor-keys.json

package.json

package.json

eslint-visitor-keys

CHANGELOG.md

README.md

lib

index.js

visitor-keys.json

package.json

eslint

CHANGELOG.md

README.md

bin

eslint.js

conf

category-list.json

config-schema.js

default-cli-options.js

eslint-all.js

eslint-recommended.js

replacements.json

lib

api.js

cli-engine

cli-engine.js

file-enumerator.js

formatters

checkstyle.js

codeframe.js

compact.js

html.js

jslint-xml.js

json-with-metadata.js

json.js

junit.js

stylish.js

table.js

tap.js

unix.js

visualstudio.js

hash.js

index.js

lint-result-cache.js

load-rules.js

xml-escape.js

cli.js

config

default-config.js

flat-config-array.js

flat-config-schema.js

rule-validator.js

eslint

eslint.js

index.js

init

autoconfig.js

config-file.js

config-initializer.js

config-rule.js

npm-utils.js

source-code-utils.js

linter

apply-disable-directives.js

code-path-analysis

code-path-analyzer.js

code-path-segment.js

code-path-state.js

code-path.js

debug-helpers.js

fork-context.js

id-generator.js

config-comment-parser.js

index.js

interpolate.js

linter.js

node-event-generator.js

report-translator.js

rule-fixer.js

rules.js

safe-emitter.js

source-code-fixer.js

timing.js

options.js

rule-tester

index.js

rule-tester.js

rules

accessor-pairs.js

array-bracket-newline.js

array-bracket-spacing.js

array-callback-return.js

array-element-newline.js

arrow-body-style.js

arrow-parens.js

arrow-spacing.js

block-scoped-var.js

block-spacing.js

brace-style.js

callback-return.js

camelcase.js

capitalized-comments.js

class-methods-use-this.js

comma-dangle.js

comma-spacing.js

comma-style.js

complexity.js

computed-property-spacing.js

consistent-return.js

consistent-this.js

constructor-super.js

curly.js

default-case-last.js

default-case.js

default-param-last.js

dot-location.js

dot-notation.js

eol-last.js

eqeqeq.js

for-direction.js

func-call-spacing.js

func-name-matching.js

func-names.js

func-style.js

function-call-argument-newline.js

function-paren-newline.js

generator-star-spacing.js

getter-return.js

global-require.js

grouped-accessor-pairs.js

guard-for-in.js

handle-callback-err.js

id-blacklist.js

id-denylist.js

id-length.js

id-match.js

implicit-arrow-linebreak.js

indent-legacy.js

indent.js

index.js

init-declarations.js

jsx-quotes.js

key-spacing.js

keyword-spacing.js

line-comment-position.js

linebreak-style.js

lines-around-comment.js

lines-around-directive.js

lines-between-class-members.js

max-classes-per-file.js

max-depth.js

max-len.js

max-lines-per-function.js

max-lines.js

max-nested-callbacks.js

max-params.js

max-statements-per-line.js

max-statements.js

multiline-comment-style.js

multiline-ternary.js

new-cap.js

new-parens.js

newline-after-var.js

newline-before-return.js

newline-per-chained-call.js

no-alert.js

no-array-constructor.js

no-async-promise-executor.js

no-await-in-loop.js

no-bitwise.js

no-buffer-constructor.js

no-caller.js

no-case-declarations.js

no-catch-shadow.js

no-class-assign.js

no-compare-neg-zero.js

no-cond-assign.js

no-confusing-arrow.js

no-console.js

no-const-assign.js

no-constant-condition.js

no-constructor-return.js

no-continue.js

no-control-regex.js

no-debugger.js

no-delete-var.js

no-div-regex.js

no-dupe-args.js

no-dupe-class-members.js

no-dupe-else-if.js

no-dupe-keys.js

no-duplicate-case.js

no-duplicate-imports.js

no-else-return.js

no-empty-character-class.js

no-empty-function.js

no-empty-pattern.js

no-empty.js

no-eq-null.js

no-eval.js

no-ex-assign.js

no-extend-native.js

no-extra-bind.js

no-extra-boolean-cast.js

no-extra-label.js

no-extra-parens.js

no-extra-semi.js

no-fallthrough.js

no-floating-decimal.js

no-func-assign.js

no-global-assign.js

no-implicit-coercion.js

no-implicit-globals.js

no-implied-eval.js

no-import-assign.js

no-inline-comments.js

no-inner-declarations.js

no-invalid-regexp.js

no-invalid-this.js

no-irregular-whitespace.js

no-iterator.js

no-label-var.js

no-labels.js

no-lone-blocks.js

no-lonely-if.js

no-loop-func.js

no-loss-of-precision.js

no-magic-numbers.js

no-misleading-character-class.js

no-mixed-operators.js

no-mixed-requires.js

no-mixed-spaces-and-tabs.js

no-multi-assign.js

no-multi-spaces.js

no-multi-str.js

no-multiple-empty-lines.js

no-native-reassign.js

no-negated-condition.js

no-negated-in-lhs.js

no-nested-ternary.js

no-new-func.js

no-new-object.js

no-new-require.js

no-new-symbol.js

no-new-wrappers.js

no-new.js

no-nonoctal-decimal-escape.js

no-obj-calls.js

no-octal-escape.js

no-octal.js

no-param-reassign.js

no-path-concat.js

no-plusplus.js

no-process-env.js

no-process-exit.js

no-promise-executor-return.js

no-proto.js

no-prototype-builtins.js

no-redeclare.js

no-regex-spaces.js

no-restricted-exports.js

no-restricted-globals.js

no-restricted-imports.js

no-restricted-modules.js

no-restricted-properties.js

no-restricted-syntax.js

no-return-assign.js

no-return-await.js

no-script-url.js

no-self-assign.js

no-self-compare.js

no-sequences.js

no-setter-return.js

no-shadow-restricted-names.js

no-shadow.js

no-spaced-func.js

no-sparse-arrays.js

no-sync.js

no-tabs.js

no-template-curly-in-string.js

no-ternary.js

no-this-before-super.js

no-throw-literal.js

no-trailing-spaces.js

no-undef-init.js

no-undef.js

no-undefined.js

no-underscore-dangle.js

no-unexpected-multiline.js

no-unmodified-loop-condition.js

no-unneeded-ternary.js

no-unreachable-loop.js

no-unreachable.js

no-unsafe-finally.js

no-unsafe-negation.js

no-unsafe-optional-chaining.js

no-unused-expressions.js

no-unused-labels.js

no-unused-vars.js

no-use-before-define.js

no-useless-backreference.js

no-useless-call.js

no-useless-catch.js

no-useless-computed-key.js

no-useless-concat.js

no-useless-constructor.js

no-useless-escape.js

no-useless-rename.js

no-useless-return.js

no-var.js

no-void.js

no-warning-comments.js

no-whitespace-before-property.js

no-with.js

nonblock-statement-body-position.js

object-curly-newline.js

object-curly-spacing.js

object-property-newline.js

object-shorthand.js

one-var-declaration-per-line.js

one-var.js

operator-assignment.js

operator-linebreak.js

padded-blocks.js

padding-line-between-statements.js

prefer-arrow-callback.js

prefer-const.js

prefer-destructuring.js

prefer-exponentiation-operator.js

prefer-named-capture-group.js

prefer-numeric-literals.js

prefer-object-spread.js

prefer-promise-reject-errors.js

prefer-reflect.js

prefer-regex-literals.js

prefer-rest-params.js

prefer-spread.js

prefer-template.js

quote-props.js

quotes.js

radix.js

require-atomic-updates.js

require-await.js

require-jsdoc.js

require-unicode-regexp.js

require-yield.js

rest-spread-spacing.js

semi-spacing.js

semi-style.js

semi.js

sort-imports.js

sort-keys.js

sort-vars.js

space-before-blocks.js

space-before-function-paren.js

space-in-parens.js

space-infix-ops.js

space-unary-ops.js

spaced-comment.js

strict.js

switch-colon-spacing.js

symbol-description.js

template-curly-spacing.js

template-tag-spacing.js

unicode-bom.js

use-isnan.js

utils

ast-utils.js

fix-tracker.js

keywords.js

lazy-loading-rule-map.js

patterns

letters.js

unicode

index.js

is-combining-character.js

is-emoji-modifier.js

is-regional-indicator-symbol.js

is-surrogate-pair.js

valid-jsdoc.js

valid-typeof.js

vars-on-top.js

wrap-iife.js

wrap-regex.js

yield-star-spacing.js

yoda.js

shared

ajv.js

ast-utils.js

config-validator.js

deprecation-warnings.js

logging.js

relative-module-resolver.js

runtime-info.js

string-utils.js

traverser.js

types.js

source-code

index.js

source-code.js

token-store

backward-token-comment-cursor.js

backward-token-cursor.js

cursor.js

cursors.js

decorative-cursor.js

filter-cursor.js

forward-token-comment-cursor.js

forward-token-cursor.js

index.js

limit-cursor.js

padded-token-cursor.js

skip-cursor.js

utils.js

messages

all-files-ignored.js

extend-config-missing.js

failed-to-read-json.js

file-not-found.js

no-config-found.js

plugin-conflict.js

plugin-invalid.js

plugin-missing.js

print-config-with-directory-path.js

whitespace-found.js

package.json

espree

CHANGELOG.md

README.md

espree.js

lib

ast-node-types.js

espree.js

features.js

options.js

token-translator.js

visitor-keys.js

node_modules

eslint-visitor-keys

CHANGELOG.md

README.md

lib

index.js

visitor-keys.json

package.json

package.json

esprima

README.md

bin

esparse.js

esvalidate.js

dist

esprima.js

package.json

esquery

README.md

dist

esquery.esm.js

esquery.esm.min.js

esquery.js

esquery.lite.js

esquery.lite.min.js

esquery.min.js

license.txt

node_modules

estraverse

README.md

estraverse.js

gulpfile.js

package.json

package.json

parser.js

esrecurse

README.md

esrecurse.js

gulpfile.babel.js

node_modules

estraverse

README.md

estraverse.js

gulpfile.js

package.json

package.json

estraverse

README.md

estraverse.js

gulpfile.js

package.json

esutils

README.md

lib

ast.js

code.js

keyword.js

utils.js

package.json

fast-deep-equal

README.md

es6

index.d.ts

index.js

react.d.ts

react.js

index.d.ts

index.js

package.json

react.d.ts

react.js

fast-json-stable-stringify

.eslintrc.yml

.github

FUNDING.yml

.travis.yml

README.md

benchmark

index.js

test.json

example

key_cmp.js

nested.js

str.js

value_cmp.js

index.d.ts

index.js

package.json

test

cmp.js

nested.js

str.js

to-json.js

fast-levenshtein

LICENSE.md

README.md

levenshtein.js

package.json

file-entry-cache

README.md

cache.js

changelog.md

package.json

find-up

index.d.ts

index.js

package.json

readme.md

flat-cache

README.md

changelog.md

package.json

src

cache.js

del.js

utils.js

flatted

.github

FUNDING.yml

workflows

node.js.yml

README.md

SPECS.md

cjs

index.js

package.json

es.js

esm

index.js

index.js

min.js

package.json

php

flatted.php

types.d.ts

follow-redirects

README.md

http.js

https.js

index.js

node_modules

debug

.coveralls.yml

.travis.yml

CHANGELOG.md

README.md

karma.conf.js

node.js

package.json

src

browser.js

debug.js

index.js

node.js

ms

index.js

license.md

package.json

readme.md

package.json

fs-minipass

README.md

index.js

package.json

fs.realpath

README.md

index.js

old.js

package.json

function-bind

.jscs.json

.travis.yml

README.md

implementation.js

index.js

package.json

test

index.js

functional-red-black-tree

README.md

bench

test.js

package.json

rbtree.js

test

test.js

get-caller-file

LICENSE.md

README.md

index.d.ts

index.js

package.json

glob-parent

CHANGELOG.md

README.md

index.js

package.json

glob

README.md

common.js

glob.js

package.json

sync.js

globals

globals.json

index.d.ts

index.js

package.json

readme.md

has-flag

index.d.ts

index.js

package.json

readme.md

has

README.md

package.json

src

index.js

test

index.js

hasurl

README.md

index.js

package.json

ignore

CHANGELOG.md

README.md

index.d.ts

index.js

legacy.js

package.json

import-fresh

index.d.ts

index.js

package.json

readme.md

imurmurhash

README.md

imurmurhash.js

imurmurhash.min.js

package.json

inflight

README.md

inflight.js

package.json

inherits

README.md

inherits.js

inherits_browser.js

package.json

interpret

README.md

index.js

mjs-stub.js

package.json

is-core-module

CHANGELOG.md

README.md

core.json

index.js

package.json

test

index.js

is-extglob

README.md

index.js

package.json

is-fullwidth-code-point

index.d.ts

index.js

package.json

readme.md

is-glob

README.md

index.js

package.json

isarray

.travis.yml

README.md

component.json

index.js

package.json

test.js

isexe

README.md

index.js

mode.js

package.json

test

basic.js

windows.js

isobject

README.md

index.js

package.json

js-base64

LICENSE.md

README.md

base64.d.ts

base64.js