The viewer is disabled because this dataset repo requires arbitrary Python code execution. Please consider

removing the

loading script

and relying on

automated data support

(you can use

convert_to_parquet

from the datasets library). If this is not possible, please

open a discussion

for direct help.

Dataset Card for XGLUE

Dataset Summary

XGLUE is a new benchmark dataset to evaluate the performance of cross-lingual pre-trained models with respect to cross-lingual natural language understanding and generation.

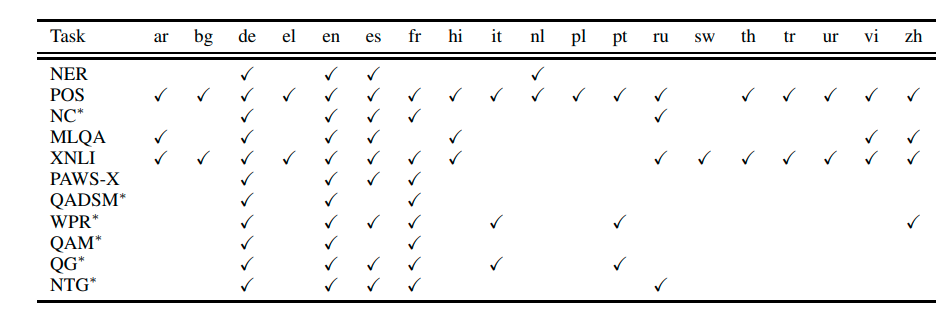

XGLUE is composed of 11 tasks spans 19 languages. For each task, the training data is only available in English. This means that to succeed at XGLUE, a model must have a strong zero-shot cross-lingual transfer capability to learn from the English data of a specific task and transfer what it learned to other languages. Comparing to its concurrent work XTREME, XGLUE has two characteristics: First, it includes cross-lingual NLU and cross-lingual NLG tasks at the same time; Second, besides including 5 existing cross-lingual tasks (i.e. NER, POS, MLQA, PAWS-X and XNLI), XGLUE selects 6 new tasks from Bing scenarios as well, including News Classification (NC), Query-Ad Matching (QADSM), Web Page Ranking (WPR), QA Matching (QAM), Question Generation (QG) and News Title Generation (NTG). Such diversities of languages, tasks and task origin provide a comprehensive benchmark for quantifying the quality of a pre-trained model on cross-lingual natural language understanding and generation.

The training data of each task is in English while the validation and test data is present in multiple different languages. The following table shows which languages are present as validation and test data for each config.

Therefore, for each config, a cross-lingual pre-trained model should be fine-tuned on the English training data, and evaluated on for all languages.

Supported Tasks and Leaderboards

The XGLUE leaderboard can be found on the homepage and

consists of a XGLUE-Understanding Score (the average of the tasks ner, pos, mlqa, nc, xnli, paws-x, qadsm, wpr, qam) and a XGLUE-Generation Score (the average of the tasks qg, ntg).

Languages

For all tasks (configurations), the "train" split is in English (en).

For each task, the "validation" and "test" splits are present in these languages:

- ner:

en,de,es,nl - pos:

en,de,es,nl,bg,el,fr,pl,tr,vi,zh,ur,hi,it,ar,ru,th - mlqa:

en,de,ar,es,hi,vi,zh - nc:

en,de,es,fr,ru - xnli:

en,ar,bg,de,el,es,fr,hi,ru,sw,th,tr,ur,vi,zh - paws-x:

en,de,es,fr - qadsm:

en,de,fr - wpr:

en,de,es,fr,it,pt,zh - qam:

en,de,fr - qg:

en,de,es,fr,it,pt - ntg:

en,de,es,fr,ru

Dataset Structure

Data Instances

ner

An example of 'test.nl' looks as follows.

{

"ner": [

"O",

"O",

"O",

"B-LOC",

"O",

"B-LOC",

"O",

"B-LOC",

"O",

"O",

"O",

"O",

"O",

"O",

"O",

"B-PER",

"I-PER",

"O",

"O",

"B-LOC",

"O",

"O"

],

"words": [

"Dat",

"is",

"in",

"Itali\u00eb",

",",

"Spanje",

"of",

"Engeland",

"misschien",

"geen",

"probleem",

",",

"maar",

"volgens",

"'",

"Der",

"Kaiser",

"'",

"in",

"Duitsland",

"wel",

"."

]

}

pos

An example of 'test.fr' looks as follows.

{

"pos": [

"PRON",

"VERB",

"SCONJ",

"ADP",

"PRON",

"CCONJ",

"DET",

"NOUN",

"ADP",

"NOUN",

"CCONJ",

"NOUN",

"ADJ",

"PRON",

"PRON",

"AUX",

"ADV",

"VERB",

"PUNCT",

"PRON",

"VERB",

"VERB",

"DET",

"ADJ",

"NOUN",

"ADP",

"DET",

"NOUN",

"PUNCT"

],

"words": [

"Je",

"sens",

"qu'",

"entre",

"\u00e7a",

"et",

"les",

"films",

"de",

"m\u00e9decins",

"et",

"scientifiques",

"fous",

"que",

"nous",

"avons",

"d\u00e9j\u00e0",

"vus",

",",

"nous",

"pourrions",

"emprunter",

"un",

"autre",

"chemin",

"pour",

"l'",

"origine",

"."

]

}

mlqa

An example of 'test.hi' looks as follows.

{

"answers": {

"answer_start": [

378

],

"text": [

"\u0909\u0924\u094d\u0924\u0930 \u092a\u0942\u0930\u094d\u0935"

]

},

"context": "\u0909\u0938\u0940 \"\u090f\u0930\u093f\u092f\u093e XX \" \u0928\u093e\u092e\u0915\u0930\u0923 \u092a\u094d\u0930\u0923\u093e\u0932\u0940 \u0915\u093e \u092a\u094d\u0930\u092f\u094b\u0917 \u0928\u0947\u0935\u093e\u0926\u093e \u092a\u0930\u0940\u0915\u094d\u0937\u0923 \u0938\u094d\u0925\u0932 \u0915\u0947 \u0905\u0928\u094d\u092f \u092d\u093e\u0917\u094b\u0902 \u0915\u0947 \u0932\u093f\u090f \u0915\u093f\u092f\u093e \u0917\u092f\u093e \u0939\u0948\u0964\u092e\u0942\u0932 \u0930\u0942\u092a \u092e\u0947\u0902 6 \u092c\u091f\u0947 10 \u092e\u0940\u0932 \u0915\u093e \u092f\u0939 \u0906\u092f\u0924\u093e\u0915\u093e\u0930 \u0905\u0921\u094d\u0921\u093e \u0905\u092c \u0924\u0925\u093e\u0915\u0925\u093f\u0924 '\u0917\u094d\u0930\u0942\u092e \u092c\u0949\u0915\u094d\u0938 \" \u0915\u093e \u090f\u0915 \u092d\u093e\u0917 \u0939\u0948, \u091c\u094b \u0915\u093f 23 \u092c\u091f\u0947 25.3 \u092e\u0940\u0932 \u0915\u093e \u090f\u0915 \u092a\u094d\u0930\u0924\u093f\u092c\u0902\u0927\u093f\u0924 \u0939\u0935\u093e\u0908 \u0915\u094d\u0937\u0947\u0924\u094d\u0930 \u0939\u0948\u0964 \u092f\u0939 \u0915\u094d\u0937\u0947\u0924\u094d\u0930 NTS \u0915\u0947 \u0906\u0902\u0924\u0930\u093f\u0915 \u0938\u0921\u093c\u0915 \u092a\u094d\u0930\u092c\u0902\u0927\u0928 \u0938\u0947 \u091c\u0941\u0921\u093c\u093e \u0939\u0948, \u091c\u093f\u0938\u0915\u0940 \u092a\u0915\u094d\u0915\u0940 \u0938\u0921\u093c\u0915\u0947\u0902 \u0926\u0915\u094d\u0937\u093f\u0923 \u092e\u0947\u0902 \u092e\u0930\u0915\u0930\u0940 \u0915\u0940 \u0913\u0930 \u0914\u0930 \u092a\u0936\u094d\u091a\u093f\u092e \u092e\u0947\u0902 \u092f\u0941\u0915\u094d\u0915\u093e \u092b\u094d\u0932\u0948\u091f \u0915\u0940 \u0913\u0930 \u091c\u093e\u0924\u0940 \u0939\u0948\u0902\u0964 \u091d\u0940\u0932 \u0938\u0947 \u0909\u0924\u094d\u0924\u0930 \u092a\u0942\u0930\u094d\u0935 \u0915\u0940 \u0913\u0930 \u092c\u0922\u093c\u0924\u0947 \u0939\u0941\u090f \u0935\u094d\u092f\u093e\u092a\u0915 \u0914\u0930 \u0914\u0930 \u0938\u0941\u0935\u094d\u092f\u0935\u0938\u094d\u0925\u093f\u0924 \u0917\u094d\u0930\u0942\u092e \u091d\u0940\u0932 \u0915\u0940 \u0938\u0921\u093c\u0915\u0947\u0902 \u090f\u0915 \u0926\u0930\u094d\u0930\u0947 \u0915\u0947 \u091c\u0930\u093f\u092f\u0947 \u092a\u0947\u091a\u0940\u0926\u093e \u092a\u0939\u093e\u0921\u093c\u093f\u092f\u094b\u0902 \u0938\u0947 \u0939\u094b\u0915\u0930 \u0917\u0941\u091c\u0930\u0924\u0940 \u0939\u0948\u0902\u0964 \u092a\u0939\u0932\u0947 \u0938\u0921\u093c\u0915\u0947\u0902 \u0917\u094d\u0930\u0942\u092e \u0918\u093e\u091f\u0940",

"question": "\u091d\u0940\u0932 \u0915\u0947 \u0938\u093e\u092a\u0947\u0915\u094d\u0937 \u0917\u094d\u0930\u0942\u092e \u0932\u0947\u0915 \u0930\u094b\u0921 \u0915\u0939\u093e\u0901 \u091c\u093e\u0924\u0940 \u0925\u0940?"

}

nc

An example of 'test.es' looks as follows.

{

"news_body": "El bizcocho es seguramente el producto m\u00e1s b\u00e1sico y sencillo de toda la reposter\u00eda : consiste en poco m\u00e1s que mezclar unos cuantos ingredientes, meterlos al horno y esperar a que se hagan. Por obra y gracia del impulsor qu\u00edmico, tambi\u00e9n conocido como \"levadura de tipo Royal\", despu\u00e9s de un rato de calorcito esta combinaci\u00f3n de harina, az\u00facar, huevo, grasa -aceite o mantequilla- y l\u00e1cteo se transforma en uno de los productos m\u00e1s deliciosos que existen para desayunar o merendar . Por muy manazas que seas, es m\u00e1s que probable que tu bizcocho casero supere en calidad a cualquier infamia industrial envasada. Para lograr un bizcocho digno de admiraci\u00f3n s\u00f3lo tienes que respetar unas pocas normas que afectan a los ingredientes, proporciones, mezclado, horneado y desmoldado. Todas las tienes resumidas en unos dos minutos el v\u00eddeo de arriba, en el que adem \u00e1s aprender\u00e1s alg\u00fan truquillo para que tu bizcochaco quede m\u00e1s fino, jugoso, esponjoso y amoroso. M\u00e1s en MSN:",

"news_category": "foodanddrink",

"news_title": "Cocina para lerdos: las leyes del bizcocho"

}

xnli

An example of 'validation.th' looks as follows.

{

"hypothesis": "\u0e40\u0e02\u0e32\u0e42\u0e17\u0e23\u0e2b\u0e32\u0e40\u0e40\u0e21\u0e48\u0e02\u0e2d\u0e07\u0e40\u0e02\u0e32\u0e2d\u0e22\u0e48\u0e32\u0e07\u0e23\u0e27\u0e14\u0e40\u0e23\u0e47\u0e27\u0e2b\u0e25\u0e31\u0e07\u0e08\u0e32\u0e01\u0e17\u0e35\u0e48\u0e23\u0e16\u0e42\u0e23\u0e07\u0e40\u0e23\u0e35\u0e22\u0e19\u0e2a\u0e48\u0e07\u0e40\u0e02\u0e32\u0e40\u0e40\u0e25\u0e49\u0e27",

"label": 1,

"premise": "\u0e41\u0e25\u0e30\u0e40\u0e02\u0e32\u0e1e\u0e39\u0e14\u0e27\u0e48\u0e32, \u0e21\u0e48\u0e32\u0e21\u0e4a\u0e32 \u0e1c\u0e21\u0e2d\u0e22\u0e39\u0e48\u0e1a\u0e49\u0e32\u0e19"

}

paws-x

An example of 'test.es' looks as follows.

{

"label": 1,

"sentence1": "La excepci\u00f3n fue entre fines de 2005 y 2009 cuando jug\u00f3 en Suecia con Carlstad United BK, Serbia con FK Borac \u010ca\u010dak y el FC Terek Grozny de Rusia.",

"sentence2": "La excepci\u00f3n se dio entre fines del 2005 y 2009, cuando jug\u00f3 con Suecia en el Carlstad United BK, Serbia con el FK Borac \u010ca\u010dak y el FC Terek Grozny de Rusia."

}

qadsm

An example of 'train' looks as follows.

{

"ad_description": "Your New England Cruise Awaits! Holland America Line Official Site.",

"ad_title": "New England Cruises",

"query": "cruise portland maine",

"relevance_label": 1

}

wpr

An example of 'test.zh' looks as follows.

{

"query": "maxpro\u5b98\u7f51",

"relavance_label": 0,

"web_page_snippet": "\u5728\u7ebf\u8d2d\u4e70\uff0c\u552e\u540e\u670d\u52a1\u3002vivo\u667a\u80fd\u624b\u673a\u5f53\u5b63\u660e\u661f\u673a\u578b\u6709NEX\uff0cvivo X21\uff0cvivo X20\uff0c\uff0cvivo X23\u7b49\uff0c\u5728vivo\u5b98\u7f51\u8d2d\u4e70\u624b\u673a\u53ef\u4ee5\u4eab\u53d712 \u671f\u514d\u606f\u4ed8\u6b3e\u3002 \u54c1\u724c Funtouch OS \u4f53\u9a8c\u5e97 | ...",

"wed_page_title": "vivo\u667a\u80fd\u624b\u673a\u5b98\u65b9\u7f51\u7ad9-AI\u975e\u51e1\u6444\u5f71X23"

}

qam

An example of 'validation.en' looks as follows.

{

"annswer": "Erikson has stated that after the last novel of the Malazan Book of the Fallen was finished, he and Esslemont would write a comprehensive guide tentatively named The Encyclopaedia Malazica.",

"label": 0,

"question": "main character of malazan book of the fallen"

}

qg

An example of 'test.de' looks as follows.

{

"answer_passage": "Medien bei WhatsApp automatisch speichern. Tippen Sie oben rechts unter WhatsApp auf die drei Punkte oder auf die Men\u00fc-Taste Ihres Smartphones. Dort wechseln Sie in die \"Einstellungen\" und von hier aus weiter zu den \"Chat-Einstellungen\". Unter dem Punkt \"Medien Auto-Download\" k\u00f6nnen Sie festlegen, wann die WhatsApp-Bilder heruntergeladen werden sollen.",

"question": "speichenn von whats app bilder unterbinden"

}

ntg

An example of 'test.en' looks as follows.

{

"news_body": "Check out this vintage Willys Pickup! As they say, the devil is in the details, and it's not every day you see such attention paid to every last area of a restoration like with this 1961 Willys Pickup . Already the Pickup has a unique look that shares some styling with the Jeep, plus some original touches you don't get anywhere else. It's a classy way to show up to any event, all thanks to Hollywood Motors . A burgundy paint job contrasts with white lower panels and the roof. Plenty of tasteful chrome details grace the exterior, including the bumpers, headlight bezels, crossmembers on the grille, hood latches, taillight bezels, exhaust finisher, tailgate hinges, etc. Steel wheels painted white and chrome hubs are a tasteful addition. Beautiful oak side steps and bed strips add a touch of craftsmanship to this ride. This truck is of real showroom quality, thanks to the astoundingly detailed restoration work performed on it, making this Willys Pickup a fierce contender for best of show. Under that beautiful hood is a 225 Buick V6 engine mated to a three-speed manual transmission, so you enjoy an ideal level of control. Four wheel drive is functional, making it that much more utilitarian and downright cool. The tires are new, so you can enjoy a lot of life out of them, while the wheels and hubs are in great condition. Just in case, a fifth wheel with a tire and a side mount are included. Just as important, this Pickup runs smoothly, so you can go cruising or even hit the open road if you're interested in participating in some classic rallies. You might associate Willys with the famous Jeep CJ, but the automaker did produce a fair amount of trucks. The Pickup is quite the unique example, thanks to distinct styling that really turns heads, making it a favorite at quite a few shows. Source: Hollywood Motors Check These Rides Out Too: Fear No Trails With These Off-Roaders 1965 Pontiac GTO: American Icon For Sale In Canada Low-Mileage 1955 Chevy 3100 Represents Turn In Pickup Market",

"news_title": "This 1961 Willys Pickup Will Let You Cruise In Style"

}

Data Fields

ner

In the following each data field in ner is explained. The data fields are the same among all splits.

words: a list of words composing the sentence.ner: a list of entitity classes corresponding to each word respectively.

pos

In the following each data field in pos is explained. The data fields are the same among all splits.

words: a list of words composing the sentence.pos: a list of "part-of-speech" classes corresponding to each word respectively.

mlqa

In the following each data field in mlqa is explained. The data fields are the same among all splits.

context: a string, the context containing the answer.question: a string, the question to be answered.answers: a string, the answer toquestion.

nc

In the following each data field in nc is explained. The data fields are the same among all splits.

news_title: a string, to the title of the news report.news_body: a string, to the actual news report.news_category: a string, the category of the news report, e.g.foodanddrink

xnli

In the following each data field in xnli is explained. The data fields are the same among all splits.

premise: a string, the context/premise, i.e. the first sentence for natural language inference.hypothesis: a string, a sentence whereas its relation topremiseis to be classified, i.e. the second sentence for natural language inference.label: a class catory (int), natural language inference relation class betweenhypothesisandpremise. One of 0: entailment, 1: contradiction, 2: neutral.

paws-x

In the following each data field in paws-x is explained. The data fields are the same among all splits.

sentence1: a string, a sentence.sentence2: a string, a sentence whereas the sentence is either a paraphrase ofsentence1or not.label: a class label (int), whethersentence2is a paraphrase ofsentence1One of 0: different, 1: same.

qadsm

In the following each data field in qadsm is explained. The data fields are the same among all splits.

query: a string, the search query one would insert into a search engine.ad_title: a string, the title of the advertisement.ad_description: a string, the content of the advertisement, i.e. the main body.relevance_label: a class label (int), how relevant the advertisementad_title+ad_descriptionis to the search queryquery. One of 0: Bad, 1: Good.

wpr

In the following each data field in wpr is explained. The data fields are the same among all splits.

query: a string, the search query one would insert into a search engine.web_page_title: a string, the title of a web page.web_page_snippet: a string, the content of a web page, i.e. the main body.relavance_label: a class label (int), how relevant the web pageweb_page_snippet+web_page_snippetis to the search queryquery. One of 0: Bad, 1: Fair, 2: Good, 3: Excellent, 4: Perfect.

qam

In the following each data field in qam is explained. The data fields are the same among all splits.

question: a string, a question.answer: a string, a possible answer toquestion.label: a class label (int), whether theansweris relevant to thequestion. One of 0: False, 1: True.

qg

In the following each data field in qg is explained. The data fields are the same among all splits.

answer_passage: a string, a detailed answer to thequestion.question: a string, a question.

ntg

In the following each data field in ntg is explained. The data fields are the same among all splits.

news_body: a string, the content of a news article.news_title: a string, the title corresponding to the news articlenews_body.

Data Splits

ner

The following table shows the number of data samples/number of rows for each split in ner.

| train | validation.en | validation.de | validation.es | validation.nl | test.en | test.de | test.es | test.nl | |

|---|---|---|---|---|---|---|---|---|---|

| ner | 14042 | 3252 | 2874 | 1923 | 2895 | 3454 | 3007 | 1523 | 5202 |

pos

The following table shows the number of data samples/number of rows for each split in pos.

| train | validation.en | validation.de | validation.es | validation.nl | validation.bg | validation.el | validation.fr | validation.pl | validation.tr | validation.vi | validation.zh | validation.ur | validation.hi | validation.it | validation.ar | validation.ru | validation.th | test.en | test.de | test.es | test.nl | test.bg | test.el | test.fr | test.pl | test.tr | test.vi | test.zh | test.ur | test.hi | test.it | test.ar | test.ru | test.th | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| pos | 25376 | 2001 | 798 | 1399 | 717 | 1114 | 402 | 1475 | 2214 | 987 | 799 | 499 | 551 | 1658 | 563 | 908 | 578 | 497 | 2076 | 976 | 425 | 595 | 1115 | 455 | 415 | 2214 | 982 | 799 | 499 | 534 | 1683 | 481 | 679 | 600 | 497 |

mlqa

The following table shows the number of data samples/number of rows for each split in mlqa.

| train | validation.en | validation.de | validation.ar | validation.es | validation.hi | validation.vi | validation.zh | test.en | test.de | test.ar | test.es | test.hi | test.vi | test.zh | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| mlqa | 87599 | 1148 | 512 | 517 | 500 | 507 | 511 | 504 | 11590 | 4517 | 5335 | 5253 | 4918 | 5495 | 5137 |

nc

The following table shows the number of data samples/number of rows for each split in nc.

| train | validation.en | validation.de | validation.es | validation.fr | validation.ru | test.en | test.de | test.es | test.fr | test.ru | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| nc | 100000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 |

xnli

The following table shows the number of data samples/number of rows for each split in xnli.

| train | validation.en | validation.ar | validation.bg | validation.de | validation.el | validation.es | validation.fr | validation.hi | validation.ru | validation.sw | validation.th | validation.tr | validation.ur | validation.vi | validation.zh | test.en | test.ar | test.bg | test.de | test.el | test.es | test.fr | test.hi | test.ru | test.sw | test.th | test.tr | test.ur | test.vi | test.zh | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| xnli | 392702 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 |

nc

The following table shows the number of data samples/number of rows for each split in nc.

| train | validation.en | validation.de | validation.es | validation.fr | validation.ru | test.en | test.de | test.es | test.fr | test.ru | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| nc | 100000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 |

xnli

The following table shows the number of data samples/number of rows for each split in xnli.

| train | validation.en | validation.ar | validation.bg | validation.de | validation.el | validation.es | validation.fr | validation.hi | validation.ru | validation.sw | validation.th | validation.tr | validation.ur | validation.vi | validation.zh | test.en | test.ar | test.bg | test.de | test.el | test.es | test.fr | test.hi | test.ru | test.sw | test.th | test.tr | test.ur | test.vi | test.zh | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| xnli | 392702 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 2490 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 | 5010 |

paws-x

The following table shows the number of data samples/number of rows for each split in paws-x.

| train | validation.en | validation.de | validation.es | validation.fr | test.en | test.de | test.es | test.fr | |

|---|---|---|---|---|---|---|---|---|---|

| paws-x | 49401 | 2000 | 2000 | 2000 | 2000 | 2000 | 2000 | 2000 | 2000 |

qadsm

The following table shows the number of data samples/number of rows for each split in qadsm.

| train | validation.en | validation.de | validation.fr | test.en | test.de | test.fr | |

|---|---|---|---|---|---|---|---|

| qadsm | 100000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 |

wpr

The following table shows the number of data samples/number of rows for each split in wpr.

| train | validation.en | validation.de | validation.es | validation.fr | validation.it | validation.pt | validation.zh | test.en | test.de | test.es | test.fr | test.it | test.pt | test.zh | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| wpr | 99997 | 10008 | 10004 | 10004 | 10005 | 10003 | 10001 | 10002 | 10004 | 9997 | 10006 | 10020 | 10001 | 10015 | 9999 |

qam

The following table shows the number of data samples/number of rows for each split in qam.

| train | validation.en | validation.de | validation.fr | test.en | test.de | test.fr | |

|---|---|---|---|---|---|---|---|

| qam | 100000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 |

qg

The following table shows the number of data samples/number of rows for each split in qg.

| train | validation.en | validation.de | validation.es | validation.fr | validation.it | validation.pt | test.en | test.de | test.es | test.fr | test.it | test.pt | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| qg | 100000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 |

ntg

The following table shows the number of data samples/number of rows for each split in ntg.

| train | validation.en | validation.de | validation.es | validation.fr | validation.ru | test.en | test.de | test.es | test.fr | test.ru | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| ntg | 300000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 | 10000 |

Dataset Creation

Curation Rationale

[More Information Needed]

Source Data

Initial Data Collection and Normalization

[More Information Needed]

Who are the source language producers?

[More Information Needed]

Annotations

[More Information Needed]

Annotation process

[More Information Needed]

Who are the annotators?

[More Information Needed]

Personal and Sensitive Information

[More Information Needed]

Considerations for Using the Data

Social Impact of Dataset

[More Information Needed]

Discussion of Biases

[More Information Needed]

Other Known Limitations

[More Information Needed]

Additional Information

Dataset Curators

The dataset is maintained mainly by Yaobo Liang, Yeyun Gong, Nan Duan, Ming Gong, Linjun Shou, and Daniel Campos from Microsoft Research.

Licensing Information

The XGLUE datasets are intended for non-commercial research purposes only to promote advancement in the field of artificial intelligence and related areas, and is made available free of charge without extending any license or other intellectual property rights. The dataset is provided “as is” without warranty and usage of the data has risks since we may not own the underlying rights in the documents. We are not be liable for any damages related to use of the dataset. Feedback is voluntarily given and can be used as we see fit. Upon violation of any of these terms, your rights to use the dataset will end automatically.

If you have questions about use of the dataset or any research outputs in your products or services, we encourage you to undertake your own independent legal review. For other questions, please feel free to contact us.

Citation Information

If you use this dataset, please cite it. Additionally, since XGLUE is also built out of exiting 5 datasets, please ensure you cite all of them.

An example:

We evaluate our model using the XGLUE benchmark \cite{Liang2020XGLUEAN}, a cross-lingual evaluation benchmark

consiting of Named Entity Resolution (NER) \cite{Sang2002IntroductionTT} \cite{Sang2003IntroductionTT},

Part of Speech Tagging (POS) \cite{11234/1-3105}, News Classification (NC), MLQA \cite{Lewis2019MLQAEC},

XNLI \cite{Conneau2018XNLIEC}, PAWS-X \cite{Yang2019PAWSXAC}, Query-Ad Matching (QADSM), Web Page Ranking (WPR),

QA Matching (QAM), Question Generation (QG) and News Title Generation (NTG).

@article{Liang2020XGLUEAN,

title={XGLUE: A New Benchmark Dataset for Cross-lingual Pre-training, Understanding and Generation},

author={Yaobo Liang and Nan Duan and Yeyun Gong and Ning Wu and Fenfei Guo and Weizhen Qi and Ming Gong and Linjun Shou and Daxin Jiang and Guihong Cao and Xiaodong Fan and Ruofei Zhang and Rahul Agrawal and Edward Cui and Sining Wei and Taroon Bharti and Ying Qiao and Jiun-Hung Chen and Winnie Wu and Shuguang Liu and Fan Yang and Daniel Campos and Rangan Majumder and Ming Zhou},

journal={arXiv},

year={2020},

volume={abs/2004.01401}

}

@misc{11234/1-3105,

title={Universal Dependencies 2.5},

author={Zeman, Daniel and Nivre, Joakim and Abrams, Mitchell and Aepli, No{\"e}mi and Agi{\'c}, {\v Z}eljko and Ahrenberg, Lars and Aleksandravi{\v c}i{\=u}t{\.e}, Gabriel{\.e} and Antonsen, Lene and Aplonova, Katya and Aranzabe, Maria Jesus and Arutie, Gashaw and Asahara, Masayuki and Ateyah, Luma and Attia, Mohammed and Atutxa, Aitziber and Augustinus, Liesbeth and Badmaeva, Elena and Ballesteros, Miguel and Banerjee, Esha and Bank, Sebastian and Barbu Mititelu, Verginica and Basmov, Victoria and Batchelor, Colin and Bauer, John and Bellato, Sandra and Bengoetxea, Kepa and Berzak, Yevgeni and Bhat, Irshad Ahmad and Bhat, Riyaz Ahmad and Biagetti, Erica and Bick, Eckhard and Bielinskien{\.e}, Agn{\.e} and Blokland, Rogier and Bobicev, Victoria and Boizou, Lo{\"{\i}}c and Borges V{\"o}lker, Emanuel and B{\"o}rstell, Carl and Bosco, Cristina and Bouma, Gosse and Bowman, Sam and Boyd, Adriane and Brokait{\.e}, Kristina and Burchardt, Aljoscha and Candito, Marie and Caron, Bernard and Caron, Gauthier and Cavalcanti, Tatiana and Cebiro{\u g}lu Eryi{\u g}it, G{\"u}l{\c s}en and Cecchini, Flavio Massimiliano and Celano, Giuseppe G. A. and {\v C}{\'e}pl{\"o}, Slavom{\'{\i}}r and Cetin, Savas and Chalub, Fabricio and Choi, Jinho and Cho, Yongseok and Chun, Jayeol and Cignarella, Alessandra T. and Cinkov{\'a}, Silvie and Collomb, Aur{\'e}lie and {\c C}{\"o}ltekin, {\c C}a{\u g}r{\i} and Connor, Miriam and Courtin, Marine and Davidson, Elizabeth and de Marneffe, Marie-Catherine and de Paiva, Valeria and de Souza, Elvis and Diaz de Ilarraza, Arantza and Dickerson, Carly and Dione, Bamba and Dirix, Peter and Dobrovoljc, Kaja and Dozat, Timothy and Droganova, Kira and Dwivedi, Puneet and Eckhoff, Hanne and Eli, Marhaba and Elkahky, Ali and Ephrem, Binyam and Erina, Olga and Erjavec, Toma{\v z} and Etienne, Aline and Evelyn, Wograine and Farkas, Rich{\'a}rd and Fernandez Alcalde, Hector and Foster, Jennifer and Freitas, Cl{\'a}udia and Fujita, Kazunori and Gajdo{\v s}ov{\'a}, Katar{\'{\i}}na and Galbraith, Daniel and Garcia, Marcos and G{\"a}rdenfors, Moa and Garza, Sebastian and Gerdes, Kim and Ginter, Filip and Goenaga, Iakes and Gojenola, Koldo and G{\"o}k{\i}rmak, Memduh and Goldberg, Yoav and G{\'o}mez Guinovart, Xavier and Gonz{\'a}lez Saavedra, Berta and Grici{\=u}t{\.e}, Bernadeta and Grioni, Matias and Gr{\=u}z{\={\i}}tis, Normunds and Guillaume, Bruno and Guillot-Barbance, C{\'e}line and Habash, Nizar and Haji{\v c}, Jan and Haji{\v c} jr., Jan and H{\"a}m{\"a}l{\"a}inen, Mika and H{\`a} M{\~y}, Linh and Han, Na-Rae and Harris, Kim and Haug, Dag and Heinecke, Johannes and Hennig, Felix and Hladk{\'a}, Barbora and Hlav{\'a}{\v c}ov{\'a}, Jaroslava and Hociung, Florinel and Hohle, Petter and Hwang, Jena and Ikeda, Takumi and Ion, Radu and Irimia, Elena and Ishola, {\d O}l{\'a}j{\'{\i}}d{\'e} and Jel{\'{\i}}nek, Tom{\'a}{\v s} and Johannsen, Anders and J{\o}rgensen, Fredrik and Juutinen, Markus and Ka{\c s}{\i}kara, H{\"u}ner and Kaasen, Andre and Kabaeva, Nadezhda and Kahane, Sylvain and Kanayama, Hiroshi and Kanerva, Jenna and Katz, Boris and Kayadelen, Tolga and Kenney, Jessica and Kettnerov{\'a}, V{\'a}clava and Kirchner, Jesse and Klementieva, Elena and K{\"o}hn, Arne and Kopacewicz, Kamil and Kotsyba, Natalia and Kovalevskait{\.e}, Jolanta and Krek, Simon and Kwak, Sookyoung and Laippala, Veronika and Lambertino, Lorenzo and Lam, Lucia and Lando, Tatiana and Larasati, Septina Dian and Lavrentiev, Alexei and Lee, John and L{\^e} H{\`{\^o}}ng, Phương and Lenci, Alessandro and Lertpradit, Saran and Leung, Herman and Li, Cheuk Ying and Li, Josie and Li, Keying and Lim, {KyungTae} and Liovina, Maria and Li, Yuan and Ljube{\v s}i{\'c}, Nikola and Loginova, Olga and Lyashevskaya, Olga and Lynn, Teresa and Macketanz, Vivien and Makazhanov, Aibek and Mandl, Michael and Manning, Christopher and Manurung, Ruli and M{\u a}r{\u a}nduc, C{\u a}t{\u a}lina and Mare{\v c}ek, David and Marheinecke, Katrin and Mart{\'{\i}}nez Alonso, H{\'e}ctor and Martins, Andr{\'e} and Ma{\v s}ek, Jan and Matsumoto, Yuji and {McDonald}, Ryan and {McGuinness}, Sarah and Mendon{\c c}a, Gustavo and Miekka, Niko and Misirpashayeva, Margarita and Missil{\"a}, Anna and Mititelu, C{\u a}t{\u a}lin and Mitrofan, Maria and Miyao, Yusuke and Montemagni, Simonetta and More, Amir and Moreno Romero, Laura and Mori, Keiko Sophie and Morioka, Tomohiko and Mori, Shinsuke and Moro, Shigeki and Mortensen, Bjartur and Moskalevskyi, Bohdan and Muischnek, Kadri and Munro, Robert and Murawaki, Yugo and M{\"u}{\"u}risep, Kaili and Nainwani, Pinkey and Navarro Hor{\~n}iacek, Juan Ignacio and Nedoluzhko, Anna and Ne{\v s}pore-B{\=e}rzkalne, Gunta and Nguy{\~{\^e}}n Th{\d i}, Lương and Nguy{\~{\^e}}n Th{\d i} Minh, Huy{\`{\^e}}n and Nikaido, Yoshihiro and Nikolaev, Vitaly and Nitisaroj, Rattima and Nurmi, Hanna and Ojala, Stina and Ojha, Atul Kr. and Ol{\'u}{\`o}kun, Ad{\'e}day{\d o}̀ and Omura, Mai and Osenova, Petya and {\"O}stling, Robert and {\O}vrelid, Lilja and Partanen, Niko and Pascual, Elena and Passarotti, Marco and Patejuk, Agnieszka and Paulino-Passos, Guilherme and Peljak-{\L}api{\'n}ska, Angelika and Peng, Siyao and Perez, Cenel-Augusto and Perrier, Guy and Petrova, Daria and Petrov, Slav and Phelan, Jason and Piitulainen, Jussi and Pirinen, Tommi A and Pitler, Emily and Plank, Barbara and Poibeau, Thierry and Ponomareva, Larisa and Popel, Martin and Pretkalni{\c n}a, Lauma and Pr{\'e}vost, Sophie and Prokopidis, Prokopis and Przepi{\'o}rkowski, Adam and Puolakainen, Tiina and Pyysalo, Sampo and Qi, Peng and R{\"a}{\"a}bis, Andriela and Rademaker, Alexandre and Ramasamy, Loganathan and Rama, Taraka and Ramisch, Carlos and Ravishankar, Vinit and Real, Livy and Reddy, Siva and Rehm, Georg and Riabov, Ivan and Rie{\ss}ler, Michael and Rimkut{\.e}, Erika and Rinaldi, Larissa and Rituma, Laura and Rocha, Luisa and Romanenko, Mykhailo and Rosa, Rudolf and Rovati, Davide and Roșca, Valentin and Rudina, Olga and Rueter, Jack and Sadde, Shoval and Sagot, Beno{\^{\i}}t and Saleh, Shadi and Salomoni, Alessio and Samard{\v z}i{\'c}, Tanja and Samson, Stephanie and Sanguinetti, Manuela and S{\"a}rg, Dage and Saul{\={\i}}te, Baiba and Sawanakunanon, Yanin and Schneider, Nathan and Schuster, Sebastian and Seddah, Djam{\'e} and Seeker, Wolfgang and Seraji, Mojgan and Shen, Mo and Shimada, Atsuko and Shirasu, Hiroyuki and Shohibussirri, Muh and Sichinava, Dmitry and Silveira, Aline and Silveira, Natalia and Simi, Maria and Simionescu, Radu and Simk{\'o}, Katalin and {\v S}imkov{\'a}, M{\'a}ria and Simov, Kiril and Smith, Aaron and Soares-Bastos, Isabela and Spadine, Carolyn and Stella, Antonio and Straka, Milan and Strnadov{\'a}, Jana and Suhr, Alane and Sulubacak, Umut and Suzuki, Shingo and Sz{\'a}nt{\'o}, Zsolt and Taji, Dima and Takahashi, Yuta and Tamburini, Fabio and Tanaka, Takaaki and Tellier, Isabelle and Thomas, Guillaume and Torga, Liisi and Trosterud, Trond and Trukhina, Anna and Tsarfaty, Reut and Tyers, Francis and Uematsu, Sumire and Ure{\v s}ov{\'a}, Zde{\v n}ka and Uria, Larraitz and Uszkoreit, Hans and Utka, Andrius and Vajjala, Sowmya and van Niekerk, Daniel and van Noord, Gertjan and Varga, Viktor and Villemonte de la Clergerie, Eric and Vincze, Veronika and Wallin, Lars and Walsh, Abigail and Wang, Jing Xian and Washington, Jonathan North and Wendt, Maximilan and Williams, Seyi and Wir{\'e}n, Mats and Wittern, Christian and Woldemariam, Tsegay and Wong, Tak-sum and Wr{\'o}blewska, Alina and Yako, Mary and Yamazaki, Naoki and Yan, Chunxiao and Yasuoka, Koichi and Yavrumyan, Marat M. and Yu, Zhuoran and {\v Z}abokrtsk{\'y}, Zden{\v e}k and Zeldes, Amir and Zhang, Manying and Zhu, Hanzhi},

url={http://hdl.handle.net/11234/1-3105},

note={{LINDAT}/{CLARIAH}-{CZ} digital library at the Institute of Formal and Applied Linguistics ({{\'U}FAL}), Faculty of Mathematics and Physics, Charles University},

copyright={Licence Universal Dependencies v2.5},

year={2019}

}

@article{Sang2003IntroductionTT,

title={Introduction to the CoNLL-2003 Shared Task: Language-Independent Named Entity Recognition},

author={Erik F. Tjong Kim Sang and Fien De Meulder},

journal={ArXiv},

year={2003},

volume={cs.CL/0306050}

}

@article{Sang2002IntroductionTT,

title={Introduction to the CoNLL-2002 Shared Task: Language-Independent Named Entity Recognition},

author={Erik F. Tjong Kim Sang},

journal={ArXiv},

year={2002},

volume={cs.CL/0209010}

}

@inproceedings{Conneau2018XNLIEC,

title={XNLI: Evaluating Cross-lingual Sentence Representations},

author={Alexis Conneau and Guillaume Lample and Ruty Rinott and Adina Williams and Samuel R. Bowman and Holger Schwenk and Veselin Stoyanov},

booktitle={EMNLP},

year={2018}

}

@article{Lewis2019MLQAEC,

title={MLQA: Evaluating Cross-lingual Extractive Question Answering},

author={Patrick Lewis and Barlas Oguz and Ruty Rinott and Sebastian Riedel and Holger Schwenk},

journal={ArXiv},

year={2019},

volume={abs/1910.07475}

}

@article{Yang2019PAWSXAC,

title={PAWS-X: A Cross-lingual Adversarial Dataset for Paraphrase Identification},

author={Yinfei Yang and Yuan Zhang and Chris Tar and Jason Baldridge},

journal={ArXiv},

year={2019},

volume={abs/1908.11828}

}

Contributions

Thanks to @patrickvonplaten for adding this dataset.

- Downloads last month

- 520