DepthPro: Monocular Depth Estimation

Model Details

DepthPro is a foundation model for zero-shot metric monocular depth estimation, designed to generate high-resolution depth maps with remarkable sharpness and fine-grained details. It employs a multi-scale Vision Transformer (ViT)-based architecture, where images are downsampled, divided into patches, and processed using a shared Dinov2 encoder. The extracted patch-level features are merged, upsampled, and refined using a DPT-like fusion stage, enabling precise depth estimation.

The abstract from the paper is the following:

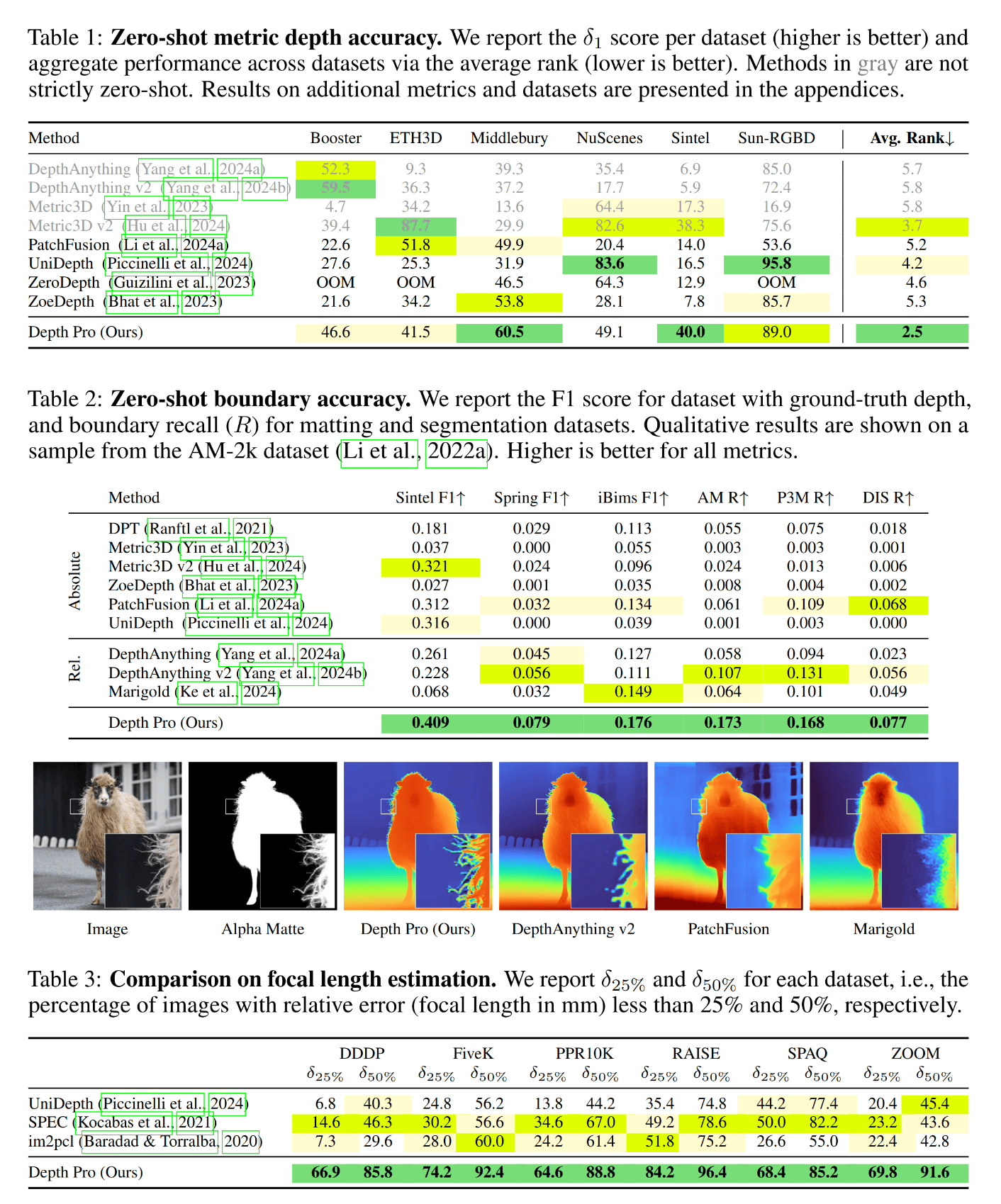

We present a foundation model for zero-shot metric monocular depth estimation. Our model, Depth Pro, synthesizes high-resolution depth maps with unparalleled sharpness and high-frequency details. The predictions are metric, with absolute scale, without relying on the availability of metadata such as camera intrinsics. And the model is fast, producing a 2.25-megapixel depth map in 0.3 seconds on a standard GPU. These characteristics are enabled by a number of technical contributions, including an efficient multi-scale vision transformer for dense prediction, a training protocol that combines real and synthetic datasets to achieve high metric accuracy alongside fine boundary tracing, dedicated evaluation metrics for boundary accuracy in estimated depth maps, and state-of-the-art focal length estimation from a single image. Extensive experiments analyze specific design choices and demonstrate that Depth Pro outperforms prior work along multiple dimensions.

This is the model card of a 🤗 transformers model that has been pushed to the Hub.

- Developed by: Aleksei Bochkovskii, Amaël Delaunoy, Hugo Germain, Marcel Santos, Yichao Zhou, Stephan R. Richter, Vladlen Koltun.

- Model type: DepthPro

- License: Apple-ASCL

Model Sources

- HF Docs: DepthPro

- Repository: https://github.com/apple/ml-depth-pro

- Paper: https://arxiv.org/abs/2410.02073

How to Get Started with the Model

Use the code below to get started with the model.

import requests
import torch
from PIL import Image
from transformers import DepthProImageProcessorFast, DepthProForDepthEstimation

# Download a sample image
url = 'https://huggingface.co/datasets/mishig/sample_images/resolve/main/tiger.jpg'
image = Image.open(requests.get(url, stream=True).raw)

# Load the image processor and the model from the Hub
image_processor = DepthProImageProcessorFast.from_pretrained("geetu040/DepthPro")
model = DepthProForDepthEstimation.from_pretrained("geetu040/DepthPro")

# Preprocess the image and run inference without tracking gradients
inputs = image_processor(images=image, return_tensors="pt")
with torch.no_grad():
    outputs = model(**inputs)

# Resize the prediction back to the original image size
post_processed_output = image_processor.post_process_depth_estimation(
    outputs, target_sizes=[(image.height, image.width)],
)

fov = post_processed_output[0]["fov"]
depth = post_processed_output[0]["predicted_depth"]

# Min-max normalize to [0, 255] and convert to an 8-bit image for visualization
depth = (depth - depth.min()) / (depth.max() - depth.min())
depth = depth * 255.
depth = depth.detach().cpu().numpy()
depth = Image.fromarray(depth.astype("uint8"))

Training Details

Training Data

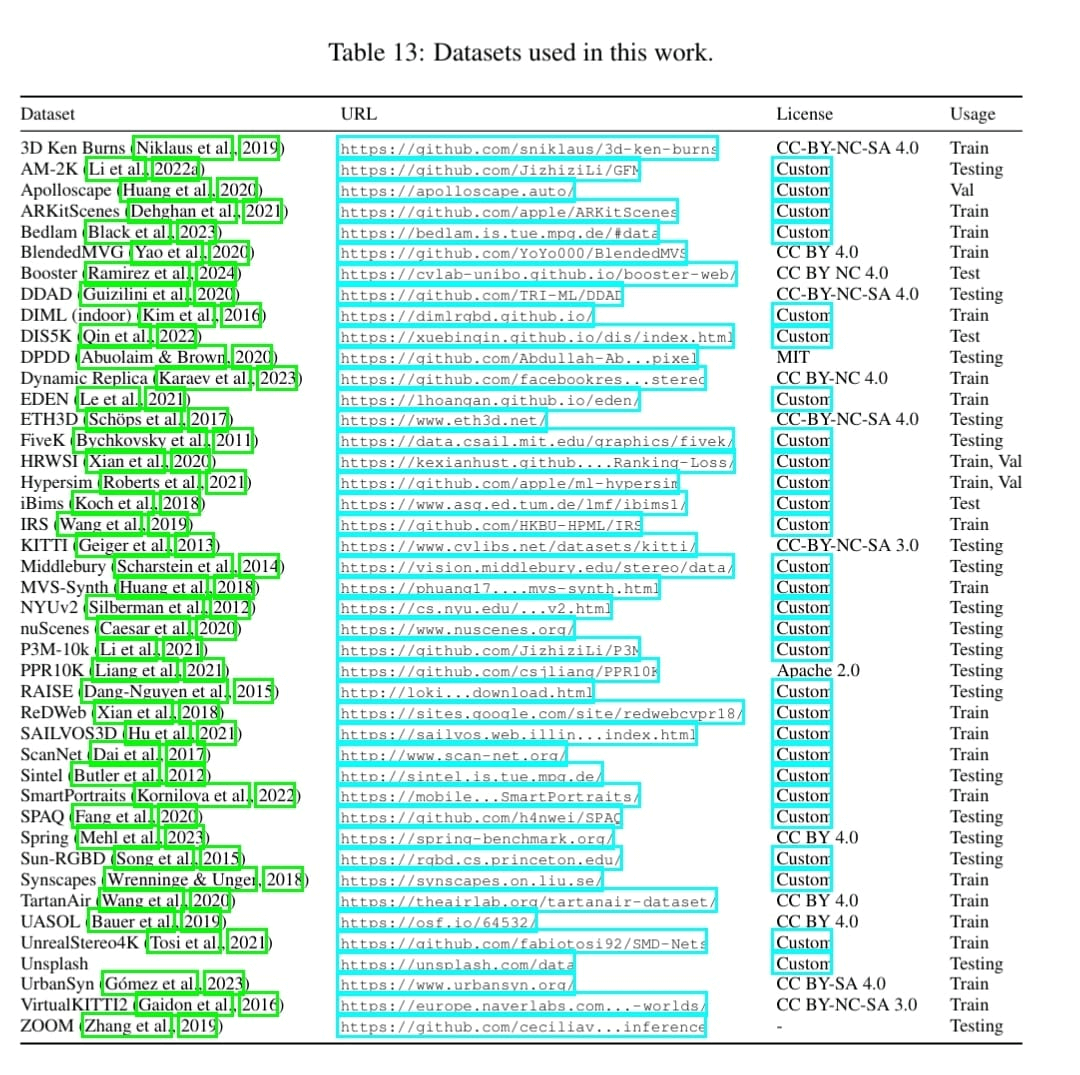

The DepthPro model was trained on the following datasets:

Preprocessing

Images go through the following preprocessing steps:

- rescaled by 1/255.
- normalized with mean=[0.5, 0.5, 0.5] and std=[0.5, 0.5, 0.5]
- resized to 1536x1536 pixels
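The rescale and normalize steps can be sketched with NumPy as follows. This is a minimal illustration, not the image processor's actual code: the helper name rescale_and_normalize is hypothetical, the rescale factor is assumed to be 1/255 (the usual factor for 8-bit images), and the resize step is left out.

```python
import numpy as np


def rescale_and_normalize(pixels, rescale_factor=1 / 255, mean=0.5, std=0.5):
    """Hypothetical helper: map 8-bit pixel values to roughly [-1, 1]."""
    # Assumed rescale factor of 1/255: [0, 255] -> [0, 1]
    scaled = pixels.astype(np.float32) * rescale_factor
    # Normalization with mean=0.5 and std=0.5: [0, 1] -> [-1, 1]
    return (scaled - mean) / std


# A tiny 1x3 "image" holding a black, a mid-gray, and a white pixel
example = np.array([[0, 128, 255]], dtype=np.uint8)
normalized = rescale_and_normalize(example)
```

Under these assumptions a black pixel maps to about -1 and a white pixel to about 1, so the model sees inputs centered around zero.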

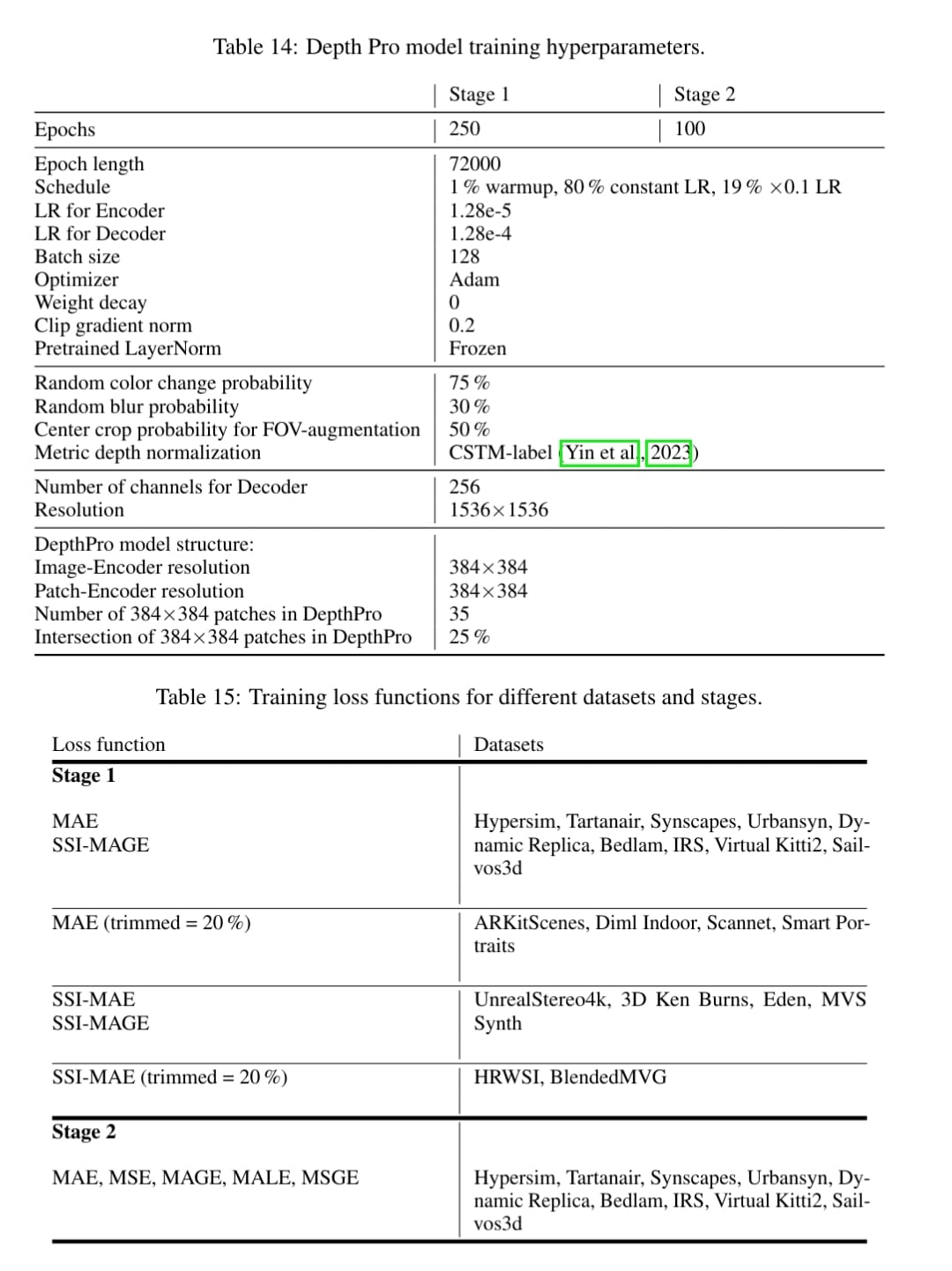

Training Hyperparameters

Evaluation

Model Architecture and Objective

The DepthProForDepthEstimation model uses a DepthProEncoder to encode the input image and a FeatureFusionStage to fuse the features output by the encoder.

The DepthProEncoder further uses two encoders:

- patch_encoder
  - Input image is scaled with multiple ratios, as specified in the scaled_images_ratios configuration.
  - Each scaled image is split into smaller patches of size patch_size with overlapping areas determined by scaled_images_overlap_ratios.
  - These patches are processed by the patch_encoder

- image_encoder
  - Input image is also rescaled to patch_size and processed by the image_encoder
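The multi-scale patching described for the patch_encoder can be sketched as a plain sliding window. This is a rough illustration rather than the library's implementation: split_into_patches and count_patches are hypothetical helpers, the stride rule (patch size times one minus the overlap ratio) is an assumption, downscaling is approximated by pixel subsampling, and the sizes and ratios are illustrative values chosen to keep the arrays small.

```python
import numpy as np


def split_into_patches(image, patch_size, overlap_ratio):
    """Hypothetical helper: cut an (H, W, C) array into overlapping square patches."""
    height, width = image.shape[:2]
    # Assumed stride rule: a larger overlap ratio means a smaller step between patches
    stride = int(patch_size * (1 - overlap_ratio))
    patches = []
    for top in range(0, height - patch_size + 1, stride):
        for left in range(0, width - patch_size + 1, stride):
            patches.append(image[top:top + patch_size, left:left + patch_size])
    return np.stack(patches)


def count_patches(image, patch_size, ratios, overlap_ratios):
    """Hypothetical helper: number of patches produced at each scale."""
    counts = []
    for ratio, overlap in zip(ratios, overlap_ratios):
        step = round(1 / ratio)
        # Crude subsampling stands in for proper interpolation
        scaled = image[::step, ::step]
        counts.append(len(split_into_patches(scaled, patch_size, overlap)))
    return counts


# Illustrative values only: a 32x32 image with 8x8 patches at three scales
image = np.zeros((32, 32, 3), dtype=np.float32)
counts = count_patches(
    image, patch_size=8, ratios=[0.25, 0.5, 1], overlap_ratios=[0.0, 0.5, 0.25]
)
```

With these illustrative values, counts evaluates to [1, 9, 25]: the smallest scale fits in a single patch, while the larger scales are covered by 3x3 and 5x5 grids of overlapping patches.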

Both encoders can be configured via patch_model_config and image_model_config, respectively; by default, each is a separate Dinov2Model.

Outputs from both encoders (last_hidden_state) and selected intermediate states (hidden_states) from patch_encoder are fused by a DPT-based FeatureFusionStage for depth estimation.

The network is supplemented with a focal length estimation head. A small convolutional head ingests frozen features from the depth estimation network and task-specific features from a separate ViT image encoder to predict the horizontal angular field-of-view.
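A predicted horizontal field-of-view can be turned into a focal length in pixels with the standard pinhole-camera relation. The sketch below is illustrative: focal_length_from_fov is a hypothetical helper, and it assumes the field-of-view is given in degrees and the image width in pixels.

```python
import math


def focal_length_from_fov(fov_degrees, image_width):
    """Hypothetical helper: pinhole relation f = (W / 2) / tan(fov / 2).

    Assumes fov_degrees is the horizontal field-of-view in degrees and
    image_width is in pixels; the result is a focal length in pixels.
    """
    return 0.5 * image_width / math.tan(0.5 * math.radians(fov_degrees))


# Example: a 90-degree horizontal field-of-view on a 1536-pixel-wide image
focal_length_px = focal_length_from_fov(fov_degrees=90.0, image_width=1536)
```

For a 90-degree field-of-view the focal length equals half the image width (about 768 pixels here), which is a quick sanity check on the relation.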

Citation

BibTeX:

@misc{bochkovskii2024depthprosharpmonocular,
  title={Depth Pro: Sharp Monocular Metric Depth in Less Than a Second},
  author={Aleksei Bochkovskii and Amaël Delaunoy and Hugo Germain and Marcel Santos and Yichao Zhou and Stephan R. Richter and Vladlen Koltun},
  year={2024},
  eprint={2410.02073},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2410.02073},
}

Model Card Authors
