---

license: apache-2.0

language:

- en

library_name: transformers

inference: false

thumbnail: https://h2o.ai/etc.clientlibs/h2o/clientlibs/clientlib-site/resources/images/favicon.ico

tags:

- gpt

- llm

- large language model

- open-source

datasets:

- h2oai/openassistant_oasst1

---

# h2oGPT Model Card

## Summary

H2O.ai's `h2ogpt-oasst1-512-12b` is a 12 billion parameter instruction-following large language model licensed for commercial use.

- Base model: [EleutherAI/pythia-12b](https://huggingface.co/EleutherAI/pythia-12b)

- Fine-tuning dataset: [h2oai/openassistant_oasst1](https://huggingface.co/datasets/h2oai/openassistant_oasst1)

- Data-prep and fine-tuning code: [H2O.ai GitHub](https://github.com/h2oai/h2ogpt)

- Training logs: [zip](https://huggingface.co/h2oai/h2ogpt-oasst1-512-12b/blob/main/pythia-12b.openassistant_oasst1.json.1_epochs.d45a9d34d34534e076cc6797614b322bd0efb11c.15.zip)

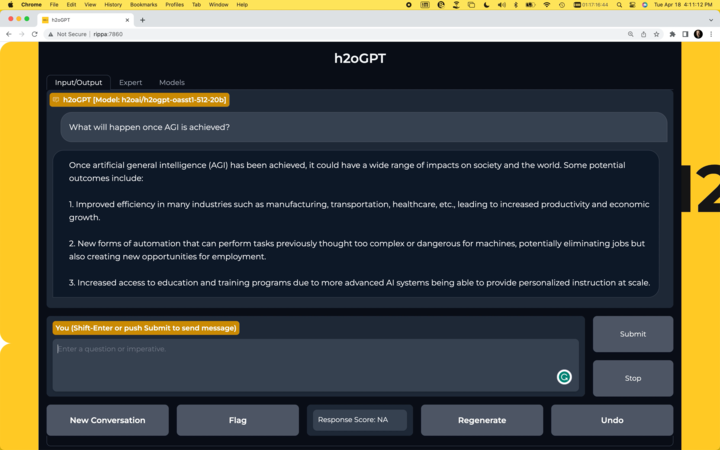

## Chatbot

- Run your own chatbot: [H2O.ai GitHub](https://github.com/h2oai/h2ogpt)


## Usage

To use the model with the `transformers` library on a machine with GPUs, first make sure you have the `transformers` and `accelerate` libraries installed.

```bash

pip install transformers==4.28.1

pip install accelerate==0.18.0

```

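```python
import torch
from transformers import pipeline

# trust_remote_code=True lets transformers load the custom pipeline class
# shipped in the model repository; device_map="auto" relies on accelerate.
generate_text = pipeline(
    model="h2oai/h2ogpt-oasst1-512-12b",
    torch_dtype=torch.bfloat16,
    trust_remote_code=True,
    device_map="auto",
)

res = generate_text("Why is drinking water so healthy?", max_new_tokens=100)
print(res[0]["generated_text"])
```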

Alternatively, if you prefer not to use `trust_remote_code=True`, you can download [h2oai_pipeline.py](https://huggingface.co/h2oai/h2ogpt-oasst1-512-12b/blob/main/h2oai_pipeline.py), store it alongside your notebook, and construct the pipeline yourself from the loaded model and tokenizer:

```python
import torch
from h2oai_pipeline import H2OTextGenerationPipeline
from transformers import AutoModelForCausalLM, AutoTokenizer

tokenizer = AutoTokenizer.from_pretrained("h2oai/h2ogpt-oasst1-512-12b", padding_side="left")
model = AutoModelForCausalLM.from_pretrained("h2oai/h2ogpt-oasst1-512-12b", torch_dtype=torch.bfloat16, device_map="auto")
generate_text = H2OTextGenerationPipeline(model=model, tokenizer=tokenizer)

res = generate_text("Why is drinking water so healthy?", max_new_tokens=100)
print(res[0]["generated_text"])
```

## Model Architecture

```
GPTNeoXForCausalLM(
  (gpt_neox): GPTNeoXModel(
    (embed_in): Embedding(50688, 5120)
    (layers): ModuleList(
      (0-35): 36 x GPTNeoXLayer(
        (input_layernorm): LayerNorm((5120,), eps=1e-05, elementwise_affine=True)
        (post_attention_layernorm): LayerNorm((5120,), eps=1e-05, elementwise_affine=True)
        (attention): GPTNeoXAttention(
          (rotary_emb): RotaryEmbedding()
          (query_key_value): Linear(in_features=5120, out_features=15360, bias=True)
          (dense): Linear(in_features=5120, out_features=5120, bias=True)
        )
        (mlp): GPTNeoXMLP(
          (dense_h_to_4h): Linear(in_features=5120, out_features=20480, bias=True)
          (dense_4h_to_h): Linear(in_features=20480, out_features=5120, bias=True)
          (act): GELUActivation()
        )
      )
    )
    (final_layer_norm): LayerNorm((5120,), eps=1e-05, elementwise_affine=True)
  )
  (embed_out): Linear(in_features=5120, out_features=50688, bias=False)
)
```
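As a sanity check on the "12 billion parameter" figure, the trainable parameter count can be tallied from the layer shapes printed above. This is a minimal sketch, not part of the h2oGPT code: the helper names `linear` and `layer_norm` are hypothetical, and it assumes that `RotaryEmbedding` and `GELUActivation` contribute no trainable parameters.

```python
# Shapes copied from the printed architecture above.
vocab_size = 50688
hidden = 5120
ffn = 20480
n_layers = 36


def linear(n_in, n_out, bias=True):
    """Parameter count of a Linear layer (hypothetical helper)."""
    return n_in * n_out + (n_out if bias else 0)


def layer_norm(dim):
    """Parameter count of an affine LayerNorm: one weight and one bias per feature."""
    return 2 * dim


# One GPTNeoXLayer: two LayerNorms, fused QKV, attention output, and the MLP.
per_layer = (
    2 * layer_norm(hidden)
    + linear(hidden, 3 * hidden)   # query_key_value (15360 = 3 * 5120)
    + linear(hidden, hidden)       # attention dense
    + linear(hidden, ffn)          # dense_h_to_4h
    + linear(ffn, hidden)          # dense_4h_to_h
)

total = (
    vocab_size * hidden                       # embed_in
    + n_layers * per_layer                    # 36 transformer layers
    + layer_norm(hidden)                      # final_layer_norm
    + linear(hidden, vocab_size, bias=False)  # embed_out (untied)
)

print(f"parameters: {total:,}")
print(f"approx. bfloat16 weight size: {total * 2 / 1e9:.1f} GB")
```

Under these assumptions the tally comes to roughly 11.85 billion parameters, so the weights alone need on the order of 24 GB in `bfloat16`, before activations and the key/value cache.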

## Model Configuration

```json
GPTNeoXConfig {
  "_name_or_path": "h2oai/h2ogpt-oasst1-512-12b",
  "architectures": [
    "GPTNeoXForCausalLM"
  ],
  "bos_token_id": 0,
  "custom_pipelines": {
    "text-generation": {
      "impl": "h2oai_pipeline.H2OTextGenerationPipeline",
      "pt": "AutoModelForCausalLM"
    }
  },
  "eos_token_id": 0,
  "hidden_act": "gelu",
  "hidden_size": 5120,
  "initializer_range": 0.02,
  "intermediate_size": 20480,
  "layer_norm_eps": 1e-05,
  "max_position_embeddings": 2048,
  "model_type": "gpt_neox",
  "num_attention_heads": 40,
  "num_hidden_layers": 36,
  "rotary_emb_base": 10000,
  "rotary_pct": 0.25,
  "tie_word_embeddings": false,
  "torch_dtype": "float16",
  "transformers_version": "4.28.1",
  "use_cache": true,
  "use_parallel_residual": true,
  "vocab_size": 50688
}
```
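A few quantities that are not listed explicitly can be derived from the configuration values above. The sketch below uses a plain dictionary rather than loading the model; the variable names are illustrative, and the rotary computation assumes the convention of applying rotary embeddings to the first `rotary_pct` fraction of each head's dimensions.

```python
# Values copied from the configuration above.
config = {
    "hidden_size": 5120,
    "intermediate_size": 20480,
    "num_attention_heads": 40,
    "rotary_pct": 0.25,
}

# Each attention head works on an equal slice of the hidden state.
head_dim = config["hidden_size"] // config["num_attention_heads"]

# Assumption: rotary position embeddings cover only this many dims per head.
rotary_ndims = int(head_dim * config["rotary_pct"])

# The MLP expands the hidden state by this factor before projecting back.
ffn_ratio = config["intermediate_size"] // config["hidden_size"]

print(f"head_dim={head_dim}, rotary_ndims={rotary_ndims}, ffn_ratio={ffn_ratio}")
```

With these values each of the 40 heads has 128 dimensions, of which 32 would receive rotary embeddings, and the MLP uses a 4x expansion.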
