ZeroSwot ✨🤖✨

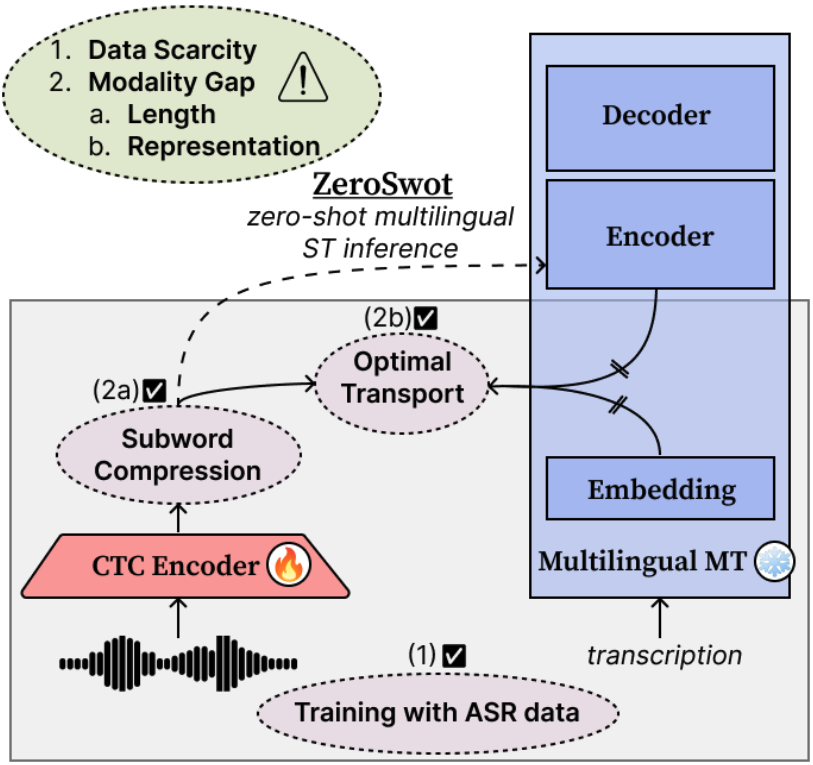

ZeroSwot is a state-of-the-art zero-shot end-to-end Speech Translation system.

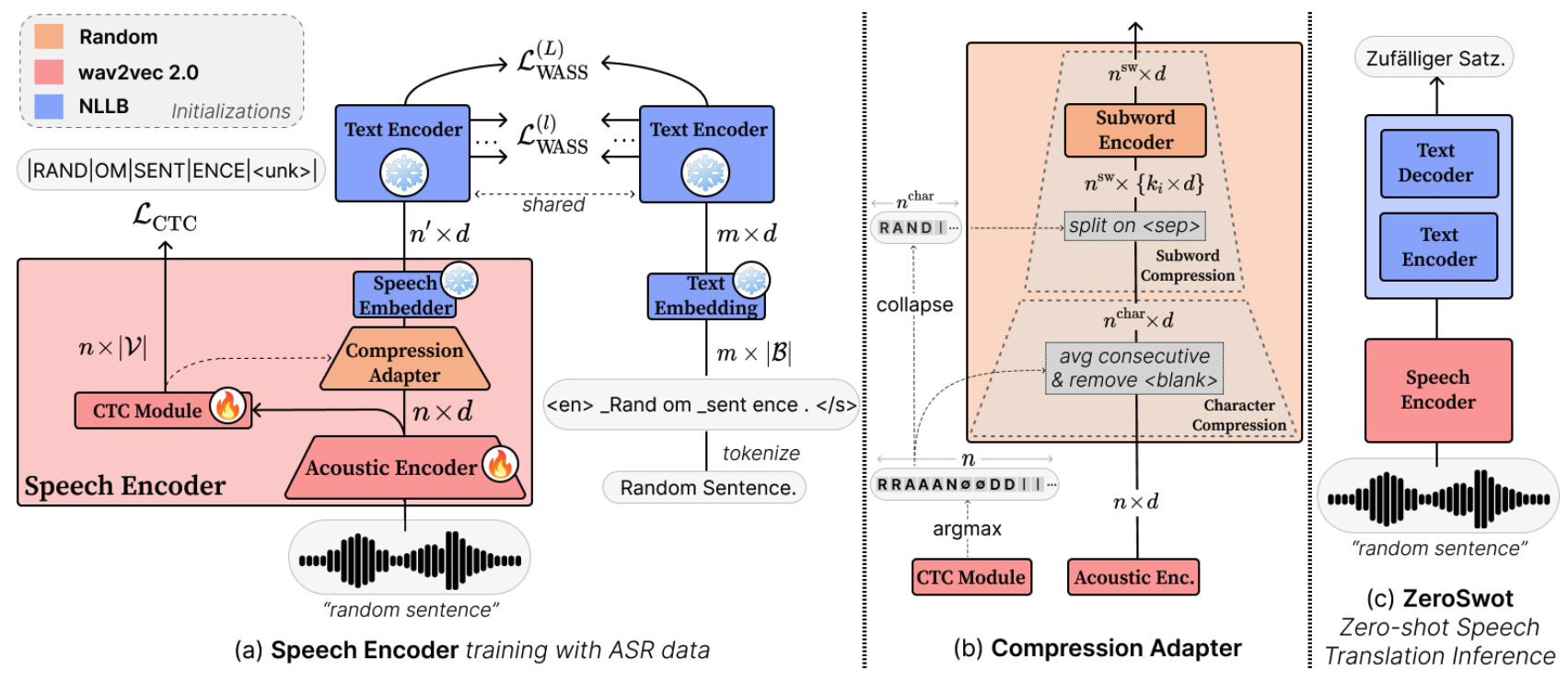

The model is created by adapting a wav2vec2.0-based encoder to the embedding space of NLLB, using a novel subword compression module and Optimal Transport, while utilizing only ASR data. It thus enables zero-shot E2E Speech Translation into all 200 languages supported by NLLB.

For more details, please refer to our paper and the original repo built on fairseq.

Architecture

The compression module is a lightweight transformer that takes as input the hidden states of wav2vec2.0 and the corresponding CTC predictions. It compresses them into subword-like embeddings similar to those NLLB expects, and these embeddings are aligned with the NLLB embedding space using Optimal Transport. For inference, we simply pass the output of the speech encoder to the NLLB encoder.
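
The core idea of CTC-based compression can be illustrated with a much simpler stand-in than the actual module. The sketch below is an assumption-laden simplification, not the ZeroSwot implementation: it replaces the transformer with plain mean-pooling, operates on Python lists instead of tensors, and assumes the CTC blank has id 0. The function name `ctc_compress` is hypothetical.

```python
from itertools import groupby

BLANK = 0  # assumed id of the CTC blank token (hypothetical choice)


def ctc_compress(hidden_states, ctc_predictions, blank_id=BLANK):
    """Mean-pool consecutive frames that share a CTC prediction, dropping blanks.

    hidden_states: list of frame vectors (each a list of floats).
    ctc_predictions: list of per-frame token ids, same length as hidden_states.
    Returns (token_ids, pooled_vectors), one entry per non-blank run.
    """
    if len(hidden_states) != len(ctc_predictions):
        raise ValueError("need exactly one CTC prediction per frame")

    token_ids, pooled = [], []
    frame = 0
    for token_id, run in groupby(ctc_predictions):
        run_length = len(list(run))
        segment = hidden_states[frame:frame + run_length]
        frame += run_length
        if token_id == blank_id:
            continue  # blank frames carry no token, so they are skipped
        dim = len(segment[0])
        mean = [sum(vec[d] for vec in segment) / run_length for d in range(dim)]
        token_ids.append(token_id)
        pooled.append(mean)
    return token_ids, pooled


# Six frames: two predicted as token 5, one blank, three predicted as token 7.
frames = [[1.0, 0.0], [3.0, 0.0], [9.0, 9.0], [0.0, 2.0], [0.0, 4.0], [0.0, 6.0]]
preds = [5, 5, 0, 7, 7, 7]
ids, vectors = ctc_compress(frames, preds)
print(ids)      # [5, 7]
print(vectors)  # [[2.0, 0.0], [0.0, 4.0]]
```

Six input frames shrink to two vectors, one per predicted token, which is what brings the speech sequence length closer to that of a text sequence. The real module goes further by learning the compression and producing subword-level embeddings.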

Version

This version of ZeroSwot is trained with ASR data from CommonVoice. It adapts wav2vec2.0-large to the embedding space of the nllb-200-distilled-600M_covost2 model, an NLLB model multilingually finetuned on CoVoST2 MT data. More versions are available:

| Models | ASR data | NLLB version |
|---|---|---|
| ZeroSwot-Medium_asr-mustc | MuST-C v1.0 | distilled-600M original |
| ZeroSwot-Medium_asr-mustc_mt-mustc | MuST-C v1.0 | distilled-600M finetuned w/ MuST-C |
| ZeroSwot-Large_asr-mustc | MuST-C v1.0 | distilled-1.3B original |
| ZeroSwot-Large_asr-mustc_mt-mustc | MuST-C v1.0 | distilled-1.3B finetuned w/ MuST-C |
| ZeroSwot-Medium_asr-cv | CommonVoice | distilled-600M original |
| ZeroSwot-Medium_asr-cv_mt-covost2 | CommonVoice | distilled-600M finetuned w/ CoVoST2 |
| ZeroSwot-Large_asr-cv | CommonVoice | distilled-1.3B original |
| ZeroSwot-Large_asr-cv_mt-covost2 | CommonVoice | distilled-1.3B finetuned w/ CoVoST2 |
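
The names in the table appear to follow a `ZeroSwot-<Size>_asr-<data>[_mt-<data>]` pattern, where a missing `mt` part corresponds to the original NLLB model. The helper below is a small sketch under that assumption; `parse_zeroswot_name` is a hypothetical name, and the function only handles names exactly as they appear in the table (no hub prefix or language-pair suffix).

```python
def parse_zeroswot_name(name):
    """Split a checkpoint name from the table into size, ASR data and MT data.

    Assumes the pattern ZeroSwot-<Size>_asr-<data>[_mt-<data>].
    """
    head, *fields = name.split("_")
    prefix, _, size = head.partition("-")
    if prefix != "ZeroSwot" or not size:
        raise ValueError(f"unexpected checkpoint name: {name}")

    info = {"size": size, "asr": None, "mt": None}
    for field in fields:
        key, _, value = field.partition("-")
        if key not in ("asr", "mt") or not value:
            raise ValueError(f"unexpected field {field!r} in {name}")
        info[key] = value
    return info


print(parse_zeroswot_name("ZeroSwot-Medium_asr-cv_mt-covost2"))
# {'size': 'Medium', 'asr': 'cv', 'mt': 'covost2'}
print(parse_zeroswot_name("ZeroSwot-Large_asr-mustc"))
# {'size': 'Large', 'asr': 'mustc', 'mt': None}
```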

Usage

The model is tested with Python 3.9.16 and Transformers v4.41.2. Also install torchaudio and sentencepiece for audio loading and tokenization.

pip install transformers torchaudio sentencepiece

from transformers import Wav2Vec2Processor, NllbTokenizer, AutoModel, AutoModelForSeq2SeqLM
import torchaudio

def load_and_resample_audio(audio_path, target_sr=16000):
    # Load the waveform and resample it to the rate wav2vec2.0 expects
    audio, orig_freq = torchaudio.load(audio_path)
    if orig_freq != target_sr:
        audio = torchaudio.functional.resample(audio, orig_freq=orig_freq, new_freq=target_sr)
    # Drop the channel dimension (assumes single-channel audio)
    audio = audio.squeeze(0).numpy()
    return audio

# Load processors and tokenizers
processor = Wav2Vec2Processor.from_pretrained("facebook/wav2vec2-large-960h-lv60-self")
tokenizer = NllbTokenizer.from_pretrained("johntsi/nllb-200-distilled-600M_covost2_en-to-15")

# Load ZeroSwot Encoder
commit_hash = "4cebecf19220ee375078046392290fcc12d1e321"
zeroswot_encoder = AutoModel.from_pretrained(
    "johntsi/ZeroSwot-Medium_asr-cv_mt-covost2_en-to-15", trust_remote_code=True, revision=commit_hash,
)
zeroswot_encoder.eval()
zeroswot_encoder.to("cuda")

# Load NLLB Model
nllb_model = AutoModelForSeq2SeqLM.from_pretrained("johntsi/nllb-200-distilled-600M_covost2_en-to-15")
nllb_model.eval()
nllb_model.to("cuda")

# Load audio file
path_to_audio_file = "resources/sample.wav"  # sample file for testing; replace with your own audio
audio = load_and_resample_audio(path_to_audio_file)
input_values = processor(audio, sampling_rate=16000, return_tensors="pt").to("cuda")

# Translation to German
compressed_embeds, attention_mask = zeroswot_encoder(**input_values)
predicted_ids = nllb_model.generate(
    inputs_embeds=compressed_embeds,
    attention_mask=attention_mask,
    forced_bos_token_id=tokenizer.lang_code_to_id["deu_Latn"],
    num_beams=5,
)
translation = tokenizer.decode(predicted_ids[0], skip_special_tokens=True)
print(translation)
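
To translate into a language other than German, only the language code passed to `forced_bos_token_id` needs to change. The lookup below is a convenience sketch, not part of ZeroSwot: the mapping from the two-letter abbreviations used for the CoVoST2 target languages to NLLB (FLORES-200) codes is an assumption and should be checked against the tokenizer's vocabulary, and `nllb_code` is a hypothetical helper name.

```python
# Assumption: FLORES-200 codes for the 15 CoVoST2 En-X target languages.
COVOST2_TO_NLLB = {
    "ar": "arb_Arab",
    "ca": "cat_Latn",
    "cy": "cym_Latn",
    "de": "deu_Latn",
    "et": "est_Latn",
    "fa": "pes_Arab",
    "id": "ind_Latn",
    "ja": "jpn_Jpan",
    "lv": "lvs_Latn",
    "mn": "khk_Cyrl",
    "sl": "slv_Latn",
    "sv": "swe_Latn",
    "ta": "tam_Taml",
    "tr": "tur_Latn",
    "zh": "zho_Hans",
}


def nllb_code(lang):
    """Return the NLLB language code for a two-letter abbreviation such as 'De'."""
    key = lang.strip().lower()
    if key not in COVOST2_TO_NLLB:
        raise KeyError(f"no NLLB code registered for {lang!r}")
    return COVOST2_TO_NLLB[key]


print(nllb_code("De"))  # deu_Latn
print(nllb_code("ja"))  # jpn_Jpan
```

With this helper, Japanese output would use `forced_bos_token_id=tokenizer.lang_code_to_id[nllb_code("ja")]`. On Transformers releases where `lang_code_to_id` is not available, `tokenizer.convert_tokens_to_ids(nllb_code("ja"))` should yield the same id.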

Results

BLEU scores on CoVoST-2 test compared to supervised SOTA models XLS-R-1B and SeamlessM4T-Medium. You can refer to Table 5 of the Results section in the paper for more details.

| Models | ZS | Size (B) | Ar | Ca | Cy | De | Et | Fa | Id | Ja | Lv | Mn | Sl | Sv | Ta | Tr | Zh | Average |
|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|
| XLS-R-1B | ✗ | 1.0 | 19.2 | 32.1 | 31.8 | 26.2 | 22.4 | 21.3 | 30.3 | 39.9 | 22.0 | 14.9 | 25.4 | 32.3 | 18.1 | 17.1 | 36.7 | 26.0 |
| SeamlessM4T-Medium | ✗ | 1.2 | 20.8 | 37.3 | 29.9 | 31.4 | 23.3 | 17.2 | 34.8 | 37.5 | 19.5 | 12.9 | 29.0 | 37.3 | 18.9 | 19.8 | 30.0 | 26.6 |
| ZeroSwot-M_asr-cv | ✓ | 0.35/0.95 | 17.6 | 32.5 | 18.0 | 29.9 | 20.4 | 16.3 | 32.4 | 32.0 | 13.3 | 10.0 | 25.2 | 34.4 | 17.8 | 15.6 | 30.5 | 23.1 |
| ZeroSwot-M_asr-cv_mt-covost2 | ✓ | 0.35/0.95 | 24.4 | 38.7 | 28.8 | 31.2 | 26.2 | 26.0 | 36.0 | 46.0 | 24.8 | 19.0 | 31.6 | 37.8 | 24.4 | 18.6 | 39.0 | 30.2 |
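
The Average column is the unweighted mean of the 15 per-language BLEU scores. As a quick check, the snippet below recomputes it from the rows of the table; the variable names are illustrative only.

```python
# Per-language BLEU scores copied from the table, in column order Ar ... Zh.
scores = {
    "XLS-R-1B": [19.2, 32.1, 31.8, 26.2, 22.4, 21.3, 30.3, 39.9,
                 22.0, 14.9, 25.4, 32.3, 18.1, 17.1, 36.7],
    "SeamlessM4T-Medium": [20.8, 37.3, 29.9, 31.4, 23.3, 17.2, 34.8, 37.5,
                           19.5, 12.9, 29.0, 37.3, 18.9, 19.8, 30.0],
    "ZeroSwot-M_asr-cv": [17.6, 32.5, 18.0, 29.9, 20.4, 16.3, 32.4, 32.0,
                          13.3, 10.0, 25.2, 34.4, 17.8, 15.6, 30.5],
    "ZeroSwot-M_asr-cv_mt-covost2": [24.4, 38.7, 28.8, 31.2, 26.2, 26.0, 36.0, 46.0,
                                     24.8, 19.0, 31.6, 37.8, 24.4, 18.6, 39.0],
}

# Unweighted mean over languages, rounded to one decimal as in the table.
averages = {model: round(sum(bleu) / len(bleu), 1) for model, bleu in scores.items()}

for model, average in averages.items():
    print(f"{model}: {average}")
# XLS-R-1B: 26.0
# SeamlessM4T-Medium: 26.6
# ZeroSwot-M_asr-cv: 23.1
# ZeroSwot-M_asr-cv_mt-covost2: 30.2
```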

Citation

If you find ZeroSwot useful for your research, please cite our paper :)

@inproceedings{tsiamas-etal-2024-pushing,
    title = {{Pushing the Limits of Zero-shot End-to-End Speech Translation}},
    author = "Tsiamas, Ioannis and
      G{\'a}llego, Gerard and
      Fonollosa, Jos{\'e} and
      Costa-juss{\`a}, Marta",
    editor = "Ku, Lun-Wei and
      Martins, Andre and
      Srikumar, Vivek",
    booktitle = "Findings of the Association for Computational Linguistics ACL 2024",
    month = aug,
    year = "2024",
    address = "Bangkok, Thailand and virtual meeting",
    publisher = "Association for Computational Linguistics",
    url = "https://aclanthology.org/2024.findings-acl.847",
    pages = "14245--14267",
}
