NASA Solar Dynamics Observatory Vision Transformer v.1 (SDO_VT1)

Authors:

Frank Soboczenski, University of York & King's College London, UK

Paul Wright, Wright AI Ltd, Leeds, UK

General:

This Vision Transformer (ViT) model has been fine-tuned on Solar Dynamics Observatory (SDO) data for an active region classification task. The images used are available from the Solar Dynamics Observatory Gallery. We aim to highlight the ease of use of the HuggingFace platform, its integration with popular deep learning frameworks such as PyTorch, TensorFlow, and JAX, performance monitoring with Weights and Biases, and the ability to use large pre-trained Transformer models for targeted fine-tuning. To our knowledge, this is the first Vision Transformer model trained on NASA SDO mission data, and we are working on additional versions to address challenges in this domain.

The data used was provided courtesy of NASA/SDO and the AIA, EVE, and HMI science teams.

The authors gratefully acknowledge the entire NASA Solar Dynamics Observatory Mission Team.

For the SDO team: this model is a first version for demonstration purposes. It is currently trained only on the SDO Gallery data, and we are working on incorporating additional data.

We will include more technical details here soon.

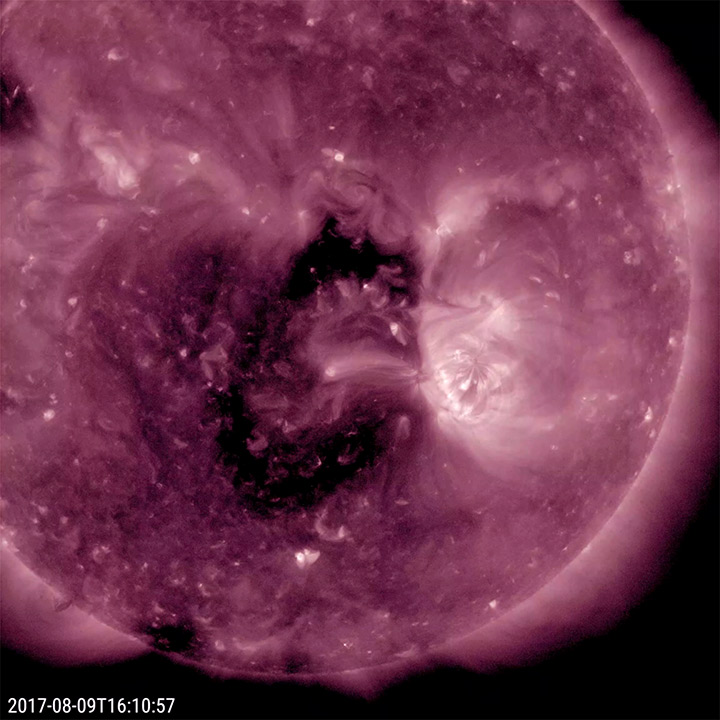

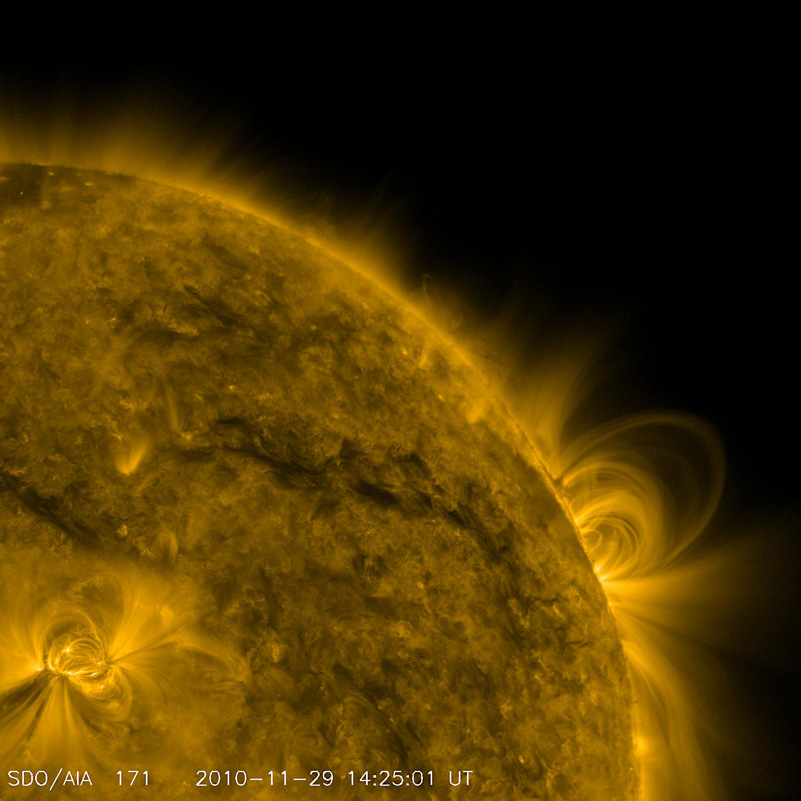

Example Images

Use one of the images below in the Inference API field at the upper right.

Additional images for testing can be found in the Solar Dynamics Observatory Gallery. You can use the tags "coronal holes", "loops", or "flares" to narrow the selection, or choose "active regions" to get a general pool for testing.

NASA_SDO_Coronal_Hole

NASA_SDO_Coronal_Loop

NASA_SDO_Solar_Flare
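The three names above are the class labels the classifier returns. As a minimal sketch, they can be mapped to integer ids as shown below. The index order used here is an assumption for illustration only; the actual mapping is stored in the model's `config.id2label` and may differ.

```python
# Class names as listed in this model card.
# ASSUMPTION: the index order is illustrative; the real mapping is whatever
# model.config.id2label contains for the published checkpoint.
labels = [
    "NASA_SDO_Coronal_Hole",
    "NASA_SDO_Coronal_Loop",
    "NASA_SDO_Solar_Flare",
]

# Forward and reverse lookups between integer ids and label names.
id2label = {idx: name for idx, name in enumerate(labels)}
label2id = {name: idx for idx, name in id2label.items()}

print(id2label)
print(label2id["NASA_SDO_Solar_Flare"])
```

Dictionaries of this shape are what `from_pretrained` accepts through its `id2label` and `label2id` keyword arguments when a classification head is set up for fine-tuning.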

Training data

The ViT model was pretrained on ImageNet-21k, a dataset consisting of 14 million images and 21k classes. More information on the base model can be found here: https://huggingface.co/google/vit-base-patch16-224-in21k
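The base checkpoint name encodes its input geometry: `patch16` and `224` indicate 16x16-pixel patches on 224x224-pixel inputs. The short sketch below works out how many tokens the Transformer sees per image under that reading. The variable names are illustrative and not taken from the model code.

```python
# Input geometry read from the checkpoint name "vit-base-patch16-224".
image_size = 224   # input images are resized to 224 x 224 pixels
patch_size = 16    # each patch covers 16 x 16 pixels

# Number of non-overlapping patches along one side and in total.
patches_per_side = image_size // patch_size
num_patches = patches_per_side ** 2

# ViT prepends one classification ([CLS]) token to the patch sequence.
sequence_length = num_patches + 1

print(patches_per_side, num_patches, sequence_length)
```

This is why the feature extractor resizes every SDO image to the same fixed resolution before it reaches the model.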

How to use this Model

(Quick snippet for Google Colab. For local use, comment out the pip install line if you already have transformers installed.)

```python
!pip install transformers --quiet

from transformers import AutoFeatureExtractor, AutoModelForImageClassification
from PIL import Image
import requests

url = 'https://sdo.gsfc.nasa.gov/assets/gallery/preview/211_coronalhole.jpg'
image = Image.open(requests.get(url, stream=True).raw)

feature_extractor = AutoFeatureExtractor.from_pretrained("kenobi/SDO_VT1")
model = AutoModelForImageClassification.from_pretrained("kenobi/SDO_VT1")

inputs = feature_extractor(images=image, return_tensors="pt")
outputs = model(**inputs)
logits = outputs.logits

# model predicts one of the three fine-tuned classes (NASA_SDO_Coronal_Hole, NASA_SDO_Coronal_Loop or NASA_SDO_Solar_Flare)
predicted_class_idx = logits.argmax(-1).item()
print("Predicted class:", model.config.id2label[predicted_class_idx])
```
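The snippet above reports only the top class. To see how the score is distributed across all three classes, the logits can be passed through a softmax. Below is a minimal sketch in plain Python; the logit values are made up and the label order is assumed, so neither reflects real model output.

```python
import math

def softmax(values):
    """Convert raw scores to probabilities that sum to 1."""
    peak = max(values)
    # Subtract the maximum before exponentiating for numerical stability.
    exps = [math.exp(v - peak) for v in values]
    total = sum(exps)
    return [e / total for e in exps]

# HYPOTHETICAL logits standing in for outputs.logits[0].tolist()
example_logits = [2.1, 0.3, -1.2]

# ASSUMPTION: illustrative label order; use model.config.id2label in practice.
id2label = {
    0: "NASA_SDO_Coronal_Hole",
    1: "NASA_SDO_Coronal_Loop",
    2: "NASA_SDO_Solar_Flare",
}

probs = softmax(example_logits)

# Print classes from most to least likely.
for idx, prob in sorted(enumerate(probs), key=lambda pair: pair[1], reverse=True):
    print(f"{id2label[idx]}: {prob:.3f}")
```

With the real model, calling `outputs.logits.softmax(-1)` on the PyTorch tensor should give the same kind of per-class probabilities without the helper function.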

BibTeX & References

A publication on this work is currently in preparation. In the meantime, please refer to this model by using the following citation:

```bibtex
@misc{sdovt2022,
  author = {Frank Soboczenski and Paul J Wright},
  title = {SDOVT: A Vision Transformer Model for Solar Dynamics Observatory (SDO) Data},
  url = {https://huggingface.co/kenobi/SDO_VT1/},
  version = {1.0},
  year = {2022},
}
```

For the base ViT model used please refer to:

```bibtex
@misc{wu2020visual,
  title={Visual Transformers: Token-based Image Representation and Processing for Computer Vision},
  author={Bichen Wu and Chenfeng Xu and Xiaoliang Dai and Alvin Wan and Peizhao Zhang and Zhicheng Yan and Masayoshi Tomizuka and Joseph Gonzalez and Kurt Keutzer and Peter Vajda},
  year={2020},
  eprint={2006.03677},
  archivePrefix={arXiv},
  primaryClass={cs.CV}
}
```

For ImageNet, please refer to:

```bibtex
@inproceedings{deng2009imagenet,
  title={Imagenet: A large-scale hierarchical image database},
  author={Deng, Jia and Dong, Wei and Socher, Richard and Li, Li-Jia and Li, Kai and Fei-Fei, Li},
  booktitle={2009 IEEE conference on computer vision and pattern recognition},
  pages={248--255},
  year={2009},
  organization={IEEE}
}
```


Evaluation results

- Accuracy (self-reported): 0.870