---
license: mit
tags:
- stable-diffusion
- stable-diffusion-diffusers
inference: false
---

SDXL-VAE-FP16-Fix

SDXL-VAE-FP16-Fix is the SDXL VAE, but modified to run in fp16 precision without generating NaNs.

| VAE | Decoding in float32 / bfloat16 precision | Decoding in float16 precision |
|---|---|---|
| SDXL-VAE | ✅ | ⚠️ |
| SDXL-VAE-FP16-Fix | ✅ | ✅ |

🧨 Diffusers Usage

Just load this checkpoint via AutoencoderKL:

```python
import torch
from diffusers import DiffusionPipeline, AutoencoderKL

# Load the fixed VAE in fp16. force_upcast=False keeps decoding in fp16
# instead of letting the pipeline upcast the VAE to float32.
vae = AutoencoderKL.from_pretrained("madebyollin/sdxl-vae-fp16-fix", torch_dtype=torch.float16, force_upcast=False)

# Base pipeline, using the fixed VAE.
pipe = DiffusionPipeline.from_pretrained("stabilityai/stable-diffusion-xl-base-0.9", vae=vae, torch_dtype=torch.float16, variant="fp16", use_safetensors=True)
pipe.to("cuda")

# Refiner pipeline, sharing the same VAE.
refiner = DiffusionPipeline.from_pretrained("stabilityai/stable-diffusion-xl-refiner-0.9", vae=vae, torch_dtype=torch.float16, use_safetensors=True, variant="fp16")
refiner.to("cuda")
```

Details

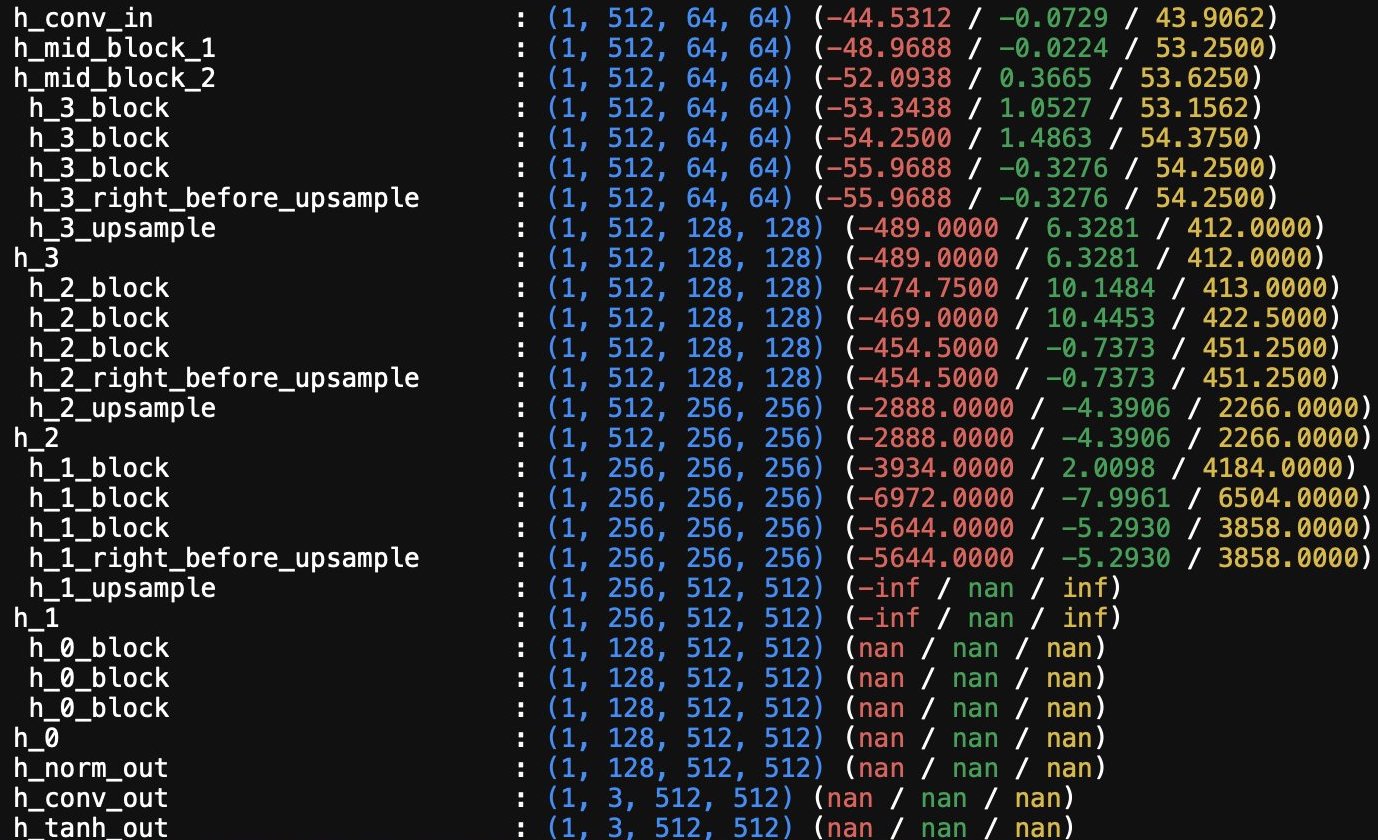

SDXL-VAE generates NaNs in fp16 because the internal activation values are too big:
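To make the failure mode concrete, here is a minimal sketch of how oversized values turn into NaNs in float16. It uses NumPy rather than the VAE itself, and the activation magnitudes are made up purely for illustration; they are not measurements from SDXL-VAE.

```python
import numpy as np

# Largest finite float16 value.
FP16_MAX = float(np.finfo(np.float16).max)

def cast_to_fp16(values):
    """Cast float32 values to float16, silencing the overflow warning."""
    with np.errstate(over="ignore"):
        return np.asarray(values, dtype=np.float32).astype(np.float16)

# Hypothetical activation magnitudes, chosen only for illustration.
activations = cast_to_fp16([1_000.0, 70_000.0, -70_000.0])

# Values beyond the float16 range become +inf or -inf ...
overflowed = np.isinf(activations)

# ... and combining infinities of opposite sign yields NaN.
with np.errstate(invalid="ignore"):
    total = activations[1] + activations[2]

print(FP16_MAX, activations, overflowed, total)
```

Once a single NaN appears, it propagates through every later operation that touches it, which is why one overflowing layer can ruin a whole decoded image.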

SDXL-VAE-FP16-Fix was created by finetuning the SDXL-VAE to:

- keep the final output the same, but

- make the internal activation values smaller, by

- scaling down weights and biases within the network
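The idea of shrinking internal activations while preserving the output can be illustrated on a toy network. The sketch below is not the finetuning procedure used for SDXL-VAE-FP16-Fix; it assumes a hypothetical two-layer ReLU network, where scaling the first layer down by a factor `s` and the second layer's weights up by `s` leaves the output unchanged. The real VAE has a more complex architecture, so it was finetuned rather than rescaled in closed form.

```python
import numpy as np

rng = np.random.default_rng(0)

# Toy two-layer ReLU network with deliberately large first-layer weights.
W1 = rng.normal(size=(8, 4)) * 1e4
b1 = rng.normal(size=8) * 1e4
W2 = rng.normal(size=(3, 8)) * 1e-4
b2 = rng.normal(size=3)

def forward(x, W1, b1, W2, b2):
    """Return (hidden activations, output) of the toy network."""
    hidden = np.maximum(W1 @ x + b1, 0.0)  # ReLU
    return hidden, W2 @ hidden + b2

s = 1024.0  # hypothetical scale factor, not a value from the model
x = rng.normal(size=4)

# Original network.
hidden_a, out_a = forward(x, W1, b1, W2, b2)

# Rescaled network: first-layer weights and biases shrink by s,
# second-layer weights grow by s to compensate.
hidden_b, out_b = forward(x, W1 / s, b1 / s, W2 * s, b2)

print("max hidden before:", hidden_a.max())
print("max hidden after: ", hidden_b.max())
print("outputs match:    ", np.allclose(out_a, out_b))
```

The compensation works here because ReLU satisfies `relu(a / s) == relu(a) / s` for positive `s`, so the hidden activations shrink by exactly `s` while the final output stays the same.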

There are slight discrepancies between the output of SDXL-VAE-FP16-Fix and SDXL-VAE, but the decoded images should be close enough for most purposes.
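If you want to check whether the discrepancy matters for your use case, one option is to decode the same latents with both VAEs and compare the resulting images numerically. The helper below is a hypothetical sketch: `image_discrepancy` is not part of diffusers, and the two arrays are synthetic stand-ins rather than real decoder outputs.

```python
import numpy as np

def image_discrepancy(a, b):
    """Return (max abs diff, mean abs diff, PSNR in dB) for two uint8 images."""
    a = np.asarray(a, dtype=np.float64)
    b = np.asarray(b, dtype=np.float64)
    diff = np.abs(a - b)
    mse = np.mean((a - b) ** 2)
    psnr = float("inf") if mse == 0 else 10.0 * np.log10(255.0 ** 2 / mse)
    return float(diff.max()), float(diff.mean()), float(psnr)

# Synthetic stand-ins for two decoded images (NOT real VAE outputs):
# a random reference image and a copy perturbed by at most 2 levels per channel.
rng = np.random.default_rng(0)
reference = rng.integers(0, 256, size=(64, 64, 3), dtype=np.uint8)
noise = rng.integers(-2, 3, size=reference.shape)
candidate = np.clip(reference.astype(np.int16) + noise, 0, 255).astype(np.uint8)

max_diff, mean_diff, psnr = image_discrepancy(reference, candidate)
print(f"max diff: {max_diff}, mean diff: {mean_diff:.3f}, PSNR: {psnr:.1f} dB")
```

In practice you would replace `reference` and `candidate` with images decoded by SDXL-VAE in float32 and SDXL-VAE-FP16-Fix in float16, converted to `uint8` arrays of the same shape.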