SmolLM2-MedIT-Upscale-2B

Model Summary

SmolLM2-MedIT-Upscale-2B is an expanded version of the SmolLM2-1.7B-Instruct model, increasing its parameter count to 2 billion. This expansion was achieved by doubling the number of heads in the q_proj, k_proj, v_proj, and o_proj layers, resulting in vectors of length 4096, compared to 2048 in the original model.
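The parameter increase can be roughly checked with a short calculation. The sketch below takes the 2048 and 4096 widths from the summary above; the layer count (24) and the base parameter count (about 1.71 billion) are assumptions about SmolLM2-1.7B-Instruct that the summary does not state, and biases are ignored.

```python
# Back-of-the-envelope estimate of the parameters added by widening attention.
# HIDDEN_SIZE and EXPANDED_WIDTH come from the model summary; NUM_LAYERS and
# BASE_PARAMS are assumptions about SmolLM2-1.7B-Instruct, not stated there.
HIDDEN_SIZE = 2048        # original projection width (from the summary)
EXPANDED_WIDTH = 4096     # projection width after doubling the heads
NUM_LAYERS = 24           # assumed number of decoder layers
BASE_PARAMS = 1.71e9      # assumed parameter count of the base model


def attention_params(hidden_size: int, attn_width: int) -> int:
    """Weights in q_proj, k_proj, v_proj, and o_proj of one layer (no biases)."""
    qkv = 3 * hidden_size * attn_width   # hidden -> attention width, three times
    out = attn_width * hidden_size       # attention width -> hidden
    return qkv + out


added_per_layer = (
    attention_params(HIDDEN_SIZE, EXPANDED_WIDTH)
    - attention_params(HIDDEN_SIZE, HIDDEN_SIZE)
)
added_total = added_per_layer * NUM_LAYERS
estimated_total = BASE_PARAMS + added_total

print(f"added per layer: {added_per_layer:,}")
print(f"added in total:  {added_total:,}")
print(f"estimated size:  {estimated_total / 1e9:.2f}B parameters")
```

Under these assumptions the wider projections add about 0.4 billion weights, which is consistent with the rounded figure of 2 billion parameters.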

Purpose of Expansion

This model was developed to test the hypothesis that self-attention layers do not extend the "memory" of the model. By broadening the attention layers, we aim to observe the impact on the model's performance and memory capabilities.

Training Status

This model underwent instruction fine-tuning for 8,800 steps with a batch size of 4, 32 gradient accumulation steps, a maximum sequence length of 1,280 tokens, and a learning rate of 1e-5. Additionally, it was fine-tuned for 1,600 steps with Direct Preference Optimization (DPO) under the same configuration.
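To put these settings in perspective, the following sketch derives the effective batch size and an upper bound on the data seen. It assumes that one "step" is one optimizer update on a single device, which the text does not confirm; with several devices or a different definition of a step, the totals would scale accordingly.

```python
# Training settings quoted in the text above.
BATCH_SIZE = 4
GRAD_ACCUM_STEPS = 32
MAX_SEQ_LEN = 1280
SFT_STEPS = 8800
DPO_STEPS = 1600

# Assumption: one "step" is one optimizer update on a single device.
sequences_per_update = BATCH_SIZE * GRAD_ACCUM_STEPS

# Upper bound only: it is reached if every sequence fills the maximum length.
max_tokens_per_update = sequences_per_update * MAX_SEQ_LEN

sft_sequences = SFT_STEPS * sequences_per_update
dpo_examples = DPO_STEPS * sequences_per_update

print(f"sequences per update:     {sequences_per_update}")
print(f"max tokens per update:    {max_tokens_per_update:,}")
print(f"instruction-tuning total: {sft_sequences:,} sequences")
print(f"DPO total:                {dpo_examples:,} examples")
```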

Note: The model has undergone preliminary training focused on assessing the effects of the expanded attention layers. It is not fully trained to its maximum potential. We encourage the community to contribute to its further training; pull requests are welcome.

Analysis of Expanded Layers

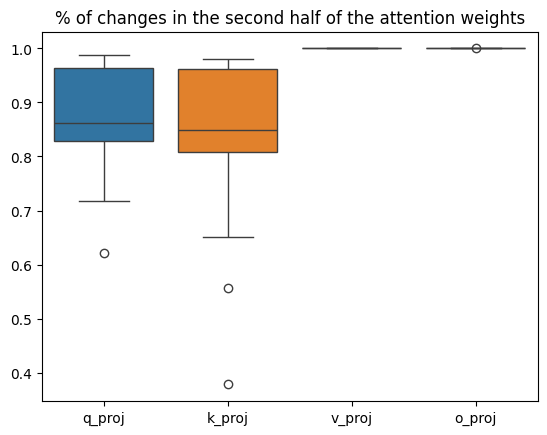

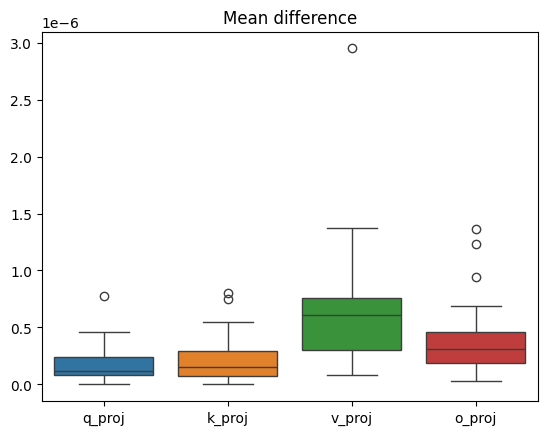

During fine-tuning, we analyzed the changes in the new parameters of the expanded layers:

Minimum percentage of new parameters that changed:

- q_proj: 62.17%
- k_proj: 37.85%
- v_proj: 99.99%
- o_proj: 99.98%

Maximum percentage of new parameters that changed:

- q_proj: 98.86%
- k_proj: 97.99%
- v_proj: 99.99%
- o_proj: 99.99%

Average change in new parameters after fine-tuning:

- q_proj: 1.838e-07
- k_proj: 2.277e-07
- v_proj: 6.490e-07
- o_proj: 3.924e-07
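Statistics of this kind can be computed by comparing the new parameters before and after fine-tuning. The sketch below is only an illustration, not the procedure used for the numbers above: the helper `change_stats`, the toy values, and the reading of "minimum" and "maximum" as taken across layers are all assumptions. It uses plain Python lists; with real checkpoints the same comparison would be done on the flattened weight tensors.

```python
from statistics import mean
from typing import Sequence


def change_stats(before: Sequence[float], after: Sequence[float]) -> tuple[float, float]:
    """Return (percentage of changed values, mean absolute change)."""
    if len(before) != len(after) or not before:
        raise ValueError("inputs must be non-empty and of equal length")
    deltas = [abs(a - b) for b, a in zip(before, after)]
    changed = sum(1 for d in deltas if d > 0.0)
    return 100.0 * changed / len(deltas), mean(deltas)


# Hypothetical toy data: the *new* q_proj parameters of two layers, flattened,
# before and after fine-tuning. These are not the model's actual values.
new_params_before = {
    "layer_0": [0.0, 0.0, 0.0, 0.0],
    "layer_1": [0.0, 0.0, 0.0, 0.0],
}
new_params_after = {
    "layer_0": [0.0, 0.5, 0.0, -0.5],
    "layer_1": [0.25, 0.25, 0.0, 0.5],
}

per_layer = {
    name: change_stats(new_params_before[name], new_params_after[name])
    for name in new_params_before
}
percentages = [pct for pct, _ in per_layer.values()]

print("min % changed:", min(percentages))
print("max % changed:", max(percentages))
print("average change:", mean(avg for _, avg in per_layer.values()))
```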

These results are illustrated in the charts above.

Usage

To use this model, follow the instructions provided for the original SmolLM2-1.7B-Instruct model, allowing for the larger memory footprint of the expanded model.
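As a minimal sketch of what that might look like with the `transformers` library: the example assumes the checkpoint loads with the stock `AutoModelForCausalLM` and `AutoTokenizer` classes and ships a chat template, as the original SmolLM2-1.7B-Instruct does. The helper `generate_reply` is a hypothetical name introduced here for illustration.

```python
MODEL_ID = "meditsolutions/SmolLM2-MedIT-Upscale-2B"


def generate_reply(prompt: str, max_new_tokens: int = 128) -> str:
    """Generate one chat reply; the weights are downloaded on first use."""
    # Imported here so that defining the function does not load the libraries.
    import torch
    from transformers import AutoModelForCausalLM, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(MODEL_ID)
    model = AutoModelForCausalLM.from_pretrained(MODEL_ID)

    # Format the prompt with the tokenizer's chat template (assumed to exist).
    messages = [{"role": "user", "content": prompt}]
    inputs = tokenizer.apply_chat_template(
        messages,
        add_generation_prompt=True,
        return_tensors="pt",
        return_dict=True,
    )

    with torch.no_grad():
        output_ids = model.generate(**inputs, max_new_tokens=max_new_tokens)

    # Decode only the newly generated tokens, not the prompt.
    prompt_length = inputs["input_ids"].shape[-1]
    return tokenizer.decode(output_ids[0, prompt_length:], skip_special_tokens=True)
```

Calling `generate_reply("What is self-attention?")` would then return the model's answer as a string. The function reloads the model on every call to keep the sketch short; in practice the tokenizer and model would be loaded once and reused.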

Contributing

We welcome contributions to further train and evaluate this model. Please submit pull requests with your improvements.

License

This model is licensed under the Apache 2.0 License.

Citation

If you use this model in your research, please cite it as follows:

@misc{SmolLM2-MedIT-Upscale-2B,
  author = {Mariusz Kurman, MedIT Solutions},
  title = {SmolLM2-MedIT-Upscale-2B: An Expanded Version of SmolLM2-1.7B-Instruct},
  year = {2024},
  publisher = {Hugging Face},
  url = {https://huggingface.co/meditsolutions/SmolLM2-MedIT-Upscale-2B},
}

Open LLM Leaderboard Evaluation Results

Detailed results can be found here

| Metric | Value |
|---|---|
| Avg. | 15.17 |
| IFEval (0-Shot) | 64.29 |
| BBH (3-Shot) | 10.51 |
| MATH Lvl 5 (4-Shot) | 1.06 |
| GPQA (0-shot) | 1.90 |
| MuSR (0-shot) | 2.45 |
| MMLU-PRO (5-shot) | 10.78 |
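The "Avg." row appears to be the unweighted mean of the six benchmark scores. The short check below recomputes it from the rounded values in the table, on the assumption that the leaderboard weights all benchmarks equally; small differences from the published average are expected because the inputs are already rounded.

```python
# Benchmark scores copied from the table above.
scores = {
    "IFEval (0-Shot)": 64.29,
    "BBH (3-Shot)": 10.51,
    "MATH Lvl 5 (4-Shot)": 1.06,
    "GPQA (0-shot)": 1.90,
    "MuSR (0-shot)": 2.45,
    "MMLU-PRO (5-shot)": 10.78,
}

# Assumption: the reported average weights all six benchmarks equally.
average = sum(scores.values()) / len(scores)
print(f"recomputed average: {average:.3f}")
```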

