license: apache-2.0

language:

- en

library_name: open_clip

tags:

- clip

- genshin-impact

- game

base_model:

- laion/CLIP-ViT-B-16-laion2B-s34B-b88K

GenshinCLIP

A simple, small open-source CLIP model fine-tuned on Genshin Impact image-text pairs.

Visit the GitHub repository for case studies and data pair examples.

The model is far from perfect, but it can still improve text-image matching in some Genshin Impact scenarios.

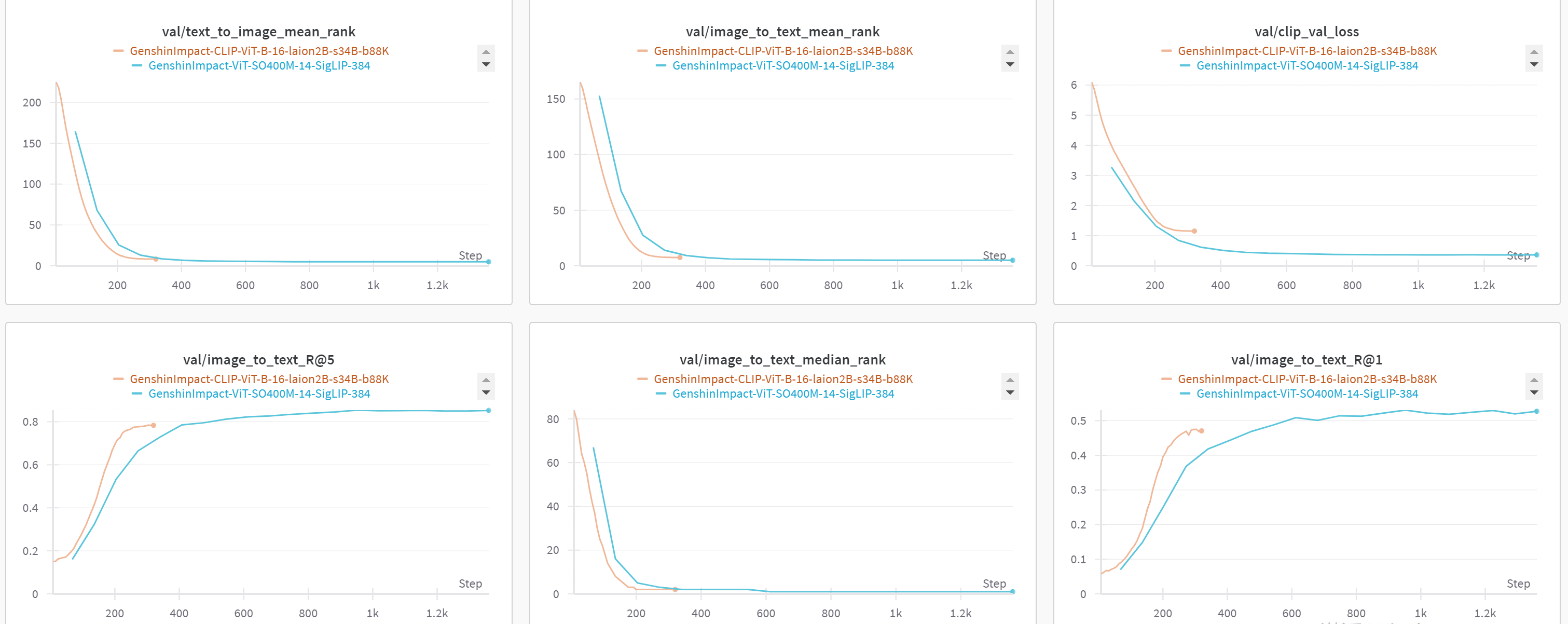

| Model | Checkpoint Size | Val Loss |
|---|---|---|
| GenshinImpact-CLIP-ViT-B-16-laion2B-s34B-b88K | 0.59 GB | 1.152 |
| GenshinImpact-ViT-SO400M-14-SigLIP-384 | 3.51 GB | 0.362 |

Intended uses & limitations

You can use the raw model for tasks like zero-shot image classification and image-text retrieval.
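For image-text retrieval, one common pattern is to embed the gallery images once with `model.encode_image`, embed the query text with `model.encode_text`, and rank the gallery by cosine similarity. The following is a minimal, framework-free sketch of the ranking step only. The file names and the three-dimensional vectors are hypothetical stand-ins for real embeddings, and `retrieve` is an illustrative helper, not part of OpenCLIP.

```python
import math


def cosine(a, b):
    """Cosine similarity between two equal-length vectors."""
    dot = sum(x * y for x, y in zip(a, b))
    norm_a = math.sqrt(sum(x * x for x in a))
    norm_b = math.sqrt(sum(y * y for y in b))
    return dot / (norm_a * norm_b)


def retrieve(query_vec, gallery, top_k=3):
    """Return the top_k (name, score) pairs, best match first."""
    scored = [(name, cosine(query_vec, vec)) for name, vec in gallery.items()]
    scored.sort(key=lambda item: item[1], reverse=True)
    return scored[:top_k]


# Hypothetical toy embeddings; real ones would come from model.encode_image.
gallery = {
    "qingce_village.png": [0.9, 0.1, 0.0],
    "mondstadt_city.png": [0.1, 0.9, 0.1],
    "sumeru_city.png": [0.0, 0.2, 0.9],
}
# Hypothetical text embedding; a real one would come from model.encode_text.
query = [1.0, 0.0, 0.0]

for name, score in retrieve(query, gallery, top_k=2):
    print(f"{name}: {score:.3f}")
```

Because the gallery embeddings do not depend on the query, they can be computed once and reused for every new text query.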

How to use (With OpenCLIP)

Here is how to use this model to perform zero-shot image classification:

import torch
from PIL import Image
import requests
from open_clip import create_model_from_pretrained, get_tokenizer

def preprocess_text(string):
    # prepend the same "Genshin Impact" prefix that the training texts use
    return "Genshin Impact\n" + string

device = torch.device("cuda:0" if torch.cuda.is_available() else "cpu")

# load checkpoint from local path
# model_path = "path/to/open_clip_pytorch_model.bin"
# model_name = "ViT-B-16"
# model, preprocess = create_model_from_pretrained(model_name=model_name, pretrained=model_path, device=device)
# tokenizer = get_tokenizer(model_name)

# or load from hub (pass device so the model sits on the same device as the inputs)
model, preprocess = create_model_from_pretrained('hf-hub:mrzjy/GenshinImpact-CLIP-ViT-B-16-laion2B-s34B-b88K', device=device)
tokenizer = get_tokenizer('hf-hub:mrzjy/GenshinImpact-CLIP-ViT-B-16-laion2B-s34B-b88K')
model.eval()  # inference mode

# image
image_url = "https://static.wikia.nocookie.net/gensin-impact/images/3/33/Qingce_Village.png"
image = Image.open(requests.get(image_url, stream=True).raw)
image = preprocess(image).unsqueeze(0).to(device)

# text choices
labels = [
    "This is an area of Liyue",
    "This is an area of Mondstadt",
    "This is an area of Sumeru",
    "This is Qingce Village"
]
labels = [preprocess_text(l) for l in labels]
text = tokenizer(labels, context_length=model.context_length).to(device)

with torch.autocast(device_type=device.type):
    with torch.no_grad():
        image_features = model.encode_image(image)
        text_features = model.encode_text(text)
        # L2-normalize so the dot product below is a cosine similarity
        image_features /= image_features.norm(dim=-1, keepdim=True)
        text_features /= text_features.norm(dim=-1, keepdim=True)
        text_probs = (100.0 * image_features @ text_features.T).softmax(dim=-1)

print(text_probs) # [0.0319, 0.0062, 0.0012, 0.9608]
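The final step of the snippet above multiplies the cosine similarities by a fixed factor of 100.0 before the softmax. A small, framework-free sketch can show why that matters: even a small gap between two cosine similarities turns into a confident probability once it is scaled. The function name and the example cosine values below are illustrative assumptions, not outputs of the model.

```python
import math


def probabilities_from_cosines(cosines, scale=100.0):
    """Softmax over scaled cosine similarities, as in the last line of the snippet."""
    logits = [scale * c for c in cosines]
    peak = max(logits)  # subtract the max for numerical stability
    exps = [math.exp(z - peak) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]


# Hypothetical cosine similarities for two candidate labels.
cosines = [0.30, 0.25]
print(probabilities_from_cosines(cosines))             # scaled by 100
print(probabilities_from_cosines(cosines, scale=1.0))  # unscaled, for comparison
```

With the factor of 100, a cosine gap of 0.05 becomes a logit gap of 5, so the first label receives about 0.993 of the probability mass. Without scaling, the two labels would be nearly tied.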

Model Card

CLIP for GenshinImpact

CLIP-ViT-B-16-laion2B-s34B-b88K model further fine-tuned on 17k Genshin Impact English text-image pairs at resolution 384x384.

Training data description

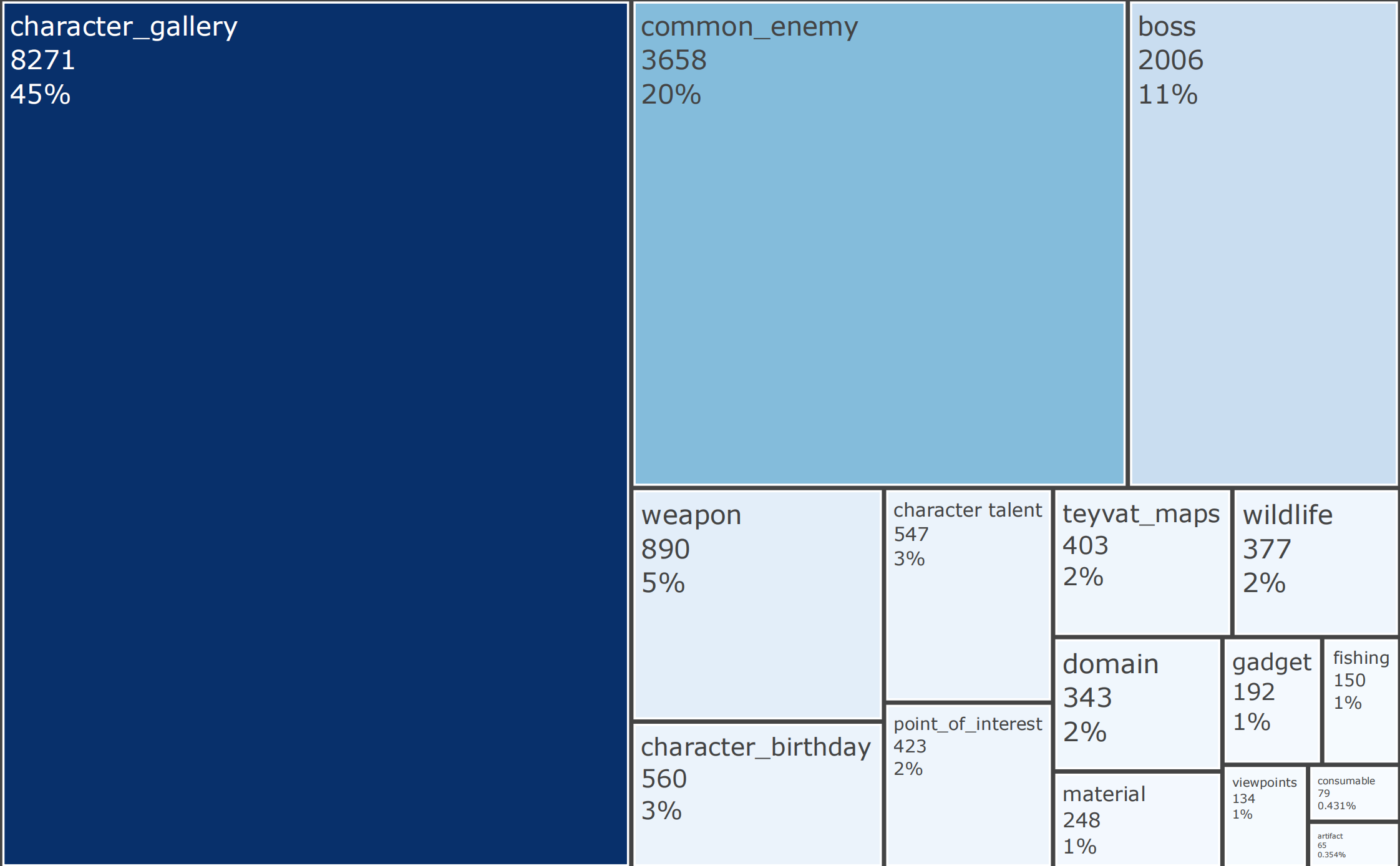

There are currently 17,428 training and 918 validation text-image pairs.

All images and texts were crawled from the Genshin Fandom Wiki and manually parsed into text-image pairs.
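The two counts add up to 18,346 pairs, so the validation set is roughly 5% of the data. The source does not say how the split was made; the sketch below assumes a seeded random shuffle, and both `split_pairs` and the placeholder file names are hypothetical.

```python
import random


def split_pairs(pairs, val_size, seed=0):
    """Shuffle deterministically, then hold out val_size pairs for validation."""
    shuffled = list(pairs)
    random.Random(seed).shuffle(shuffled)
    return shuffled[val_size:], shuffled[:val_size]


# Placeholder pairs standing in for the crawled (image, text) data.
pairs = [(f"image_{i}.png", f"caption {i}") for i in range(17428 + 918)]
train, val = split_pairs(pairs, val_size=918)
print(len(train), len(val), f"{len(val) / len(pairs):.1%}")
```

Fixing the seed keeps the validation set stable across runs, which makes validation losses from different training runs comparable.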

Image Processing:

- Size: Resize all images to 384x384 pixels to match the original model training settings.

- Format: Accept images in PNG or GIF format. For GIFs, extract a random frame to create a static image for text-image pairs.
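The two image steps above could be sketched with Pillow as follows. This is an illustration under assumptions rather than the actual preprocessing script: the helper name `load_static_image`, the bicubic resampling filter, and the conversion to RGB are choices made here, not details given in the description.

```python
import random

from PIL import Image

TARGET_SIZE = 384  # resolution stated in the description above


def load_static_image(source, size=TARGET_SIZE, rng=None):
    """Open a PNG or GIF and return one RGB frame resized to size x size."""
    rng = rng or random.Random()
    img = Image.open(source)
    # Animated GIFs expose n_frames > 1; still images fall back to 1.
    n_frames = getattr(img, "n_frames", 1)
    if n_frames > 1:
        img.seek(rng.randrange(n_frames))  # jump to a random frame
    # Plain resize, which does not preserve the aspect ratio.
    return img.convert("RGB").resize((size, size), Image.BICUBIC)
```

Passing a seeded `random.Random` instance as `rng` makes the frame choice reproducible, for example `load_static_image("some.gif", rng=random.Random(0))`.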

Text Processing:

- Source: Text can come from the simple caption attribute of an HTML `<img>` tag or from specified web content.

- Format: Prepend all texts with "Genshin Impact" along with some simple template to form natural language sentences.
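As a rough illustration of the formatting step, the sketch below combines the "Genshin Impact" prefix with a simple template. The actual templates are not listed in this card, so the `TEMPLATES` entries and the `build_caption` helper are hypothetical. The newline after the prefix follows the `preprocess_text` helper in the usage example.

```python
PREFIX = "Genshin Impact"

# Hypothetical templates; the card only says "some simple template".
TEMPLATES = {
    "location": "This is {name}",
    "character": "This is a picture of the character {name}",
}


def build_caption(name, kind):
    """Fill the template for the given kind and prepend the shared prefix."""
    sentence = TEMPLATES[kind].format(name=name)
    return f"{PREFIX}\n{sentence}"


print(build_caption("Qingce Village", "location"))
```

Using the same prefix at inference time, as `preprocess_text` does, keeps query texts in the same format as the training texts.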

Data Distribution:

Validation Loss Curve