Our models are intended for academic use only. If you are not affiliated with an academic institution, please provide a rationale for using our models. Please allow us a few business days to manually review subscriptions.

xlm-roberta-large-manifesto

Model description

An xlm-roberta-large model finetuned on multilingual training data labeled using the Manifesto Project's coding scheme.

How to use the model

    from transformers import AutoTokenizer, pipeline

    # Load the tokenizer from the base xlm-roberta-large checkpoint.
    tokenizer = AutoTokenizer.from_pretrained("xlm-roberta-large")

    pipe = pipeline(
        model="poltextlab/xlm-roberta-large-manifesto",
        task="text-classification",
        tokenizer=tokenizer,
        use_fast=False,
        # The model is gated, so an access token is required.
        token="<your_hf_read_only_token>",
    )

    text = "We will place an immediate 6-month halt on the finance driven closure of beds and wards, and set up an independent audit of needs and facilities."

    pipe(text)
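A text-classification pipeline returns a list of dictionaries, each holding a `label` and a `score`. The sketch below shows one way to rank such output; the helper name `top_labels` and the sample labels and scores are invented for illustration and are not actual model output.

```python
from typing import Dict, List, Union

Prediction = Dict[str, Union[str, float]]


def top_labels(predictions: List[Prediction], k: int = 3) -> List[str]:
    """Return the k highest-scoring labels, best first."""
    ranked = sorted(predictions, key=lambda p: p["score"], reverse=True)
    return [str(p["label"]) for p in ranked[:k]]


# Made-up predictions in the pipeline's output shape (hypothetical labels).
sample = [
    {"label": "LABEL_A", "score": 0.12},
    {"label": "LABEL_B", "score": 0.71},
    {"label": "LABEL_C", "score": 0.17},
]
print(top_labels(sample, k=2))
```

To get more than one candidate per input from the pipeline itself, passing `top_k` to the pipeline call should work in recent Transformers versions; the exact nesting of the returned list may differ between versions.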

Gated access

Due to the gated access, you must pass the token parameter when loading the model. In earlier versions of the Transformers package, you may need to use the use_auth_token parameter instead.

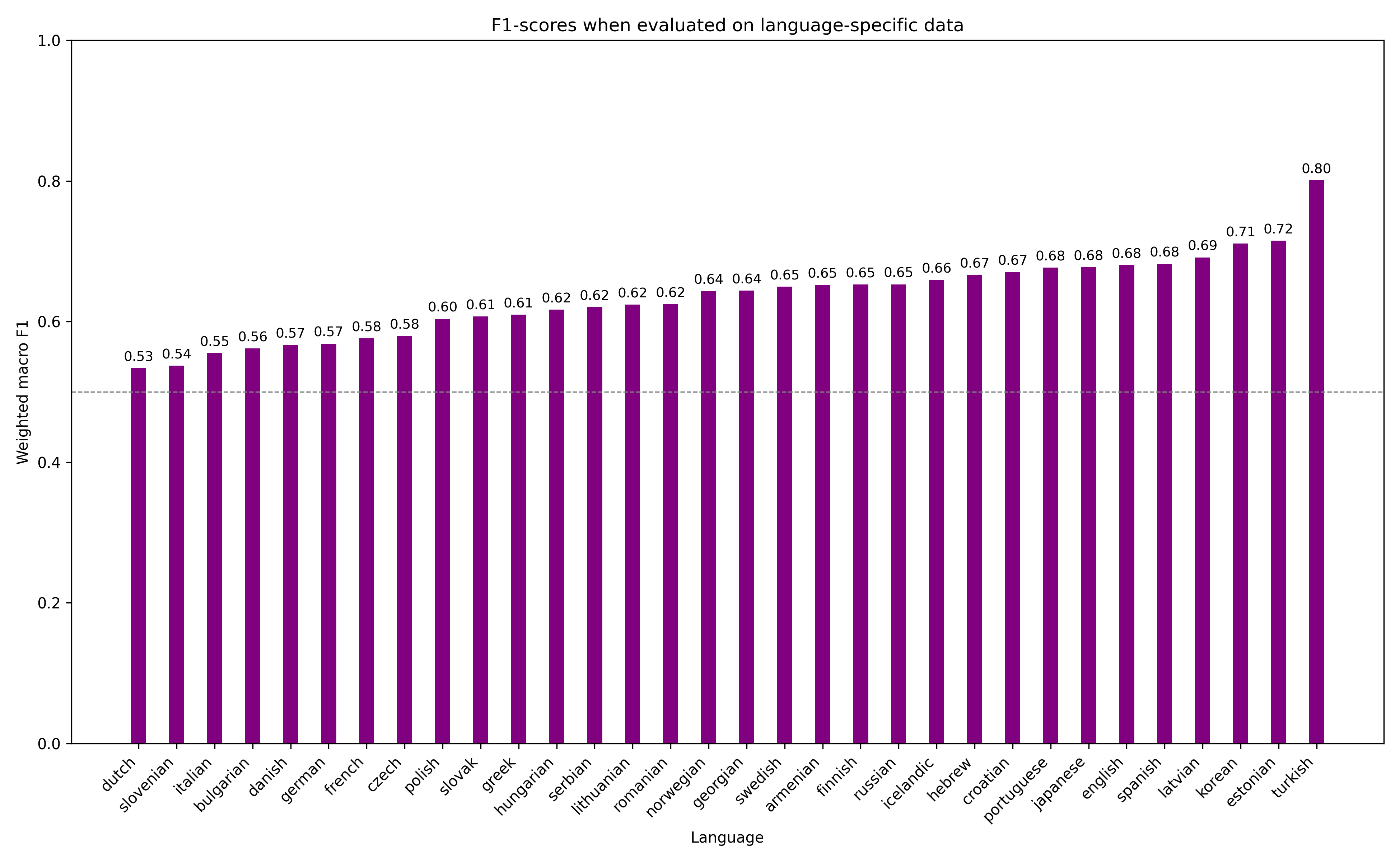
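The token handling described in this section can be kept out of the source code by reading the token from an environment variable. This is a minimal sketch: the helper `auth_kwargs` and its `legacy` flag are hypothetical, and deciding when the older `use_auth_token` keyword is needed is left to the caller.

```python
import os
from typing import Dict, Optional


def auth_kwargs(
    token: Optional[str] = None,
    legacy: bool = False,
    env_var: str = "HF_TOKEN",
) -> Dict[str, str]:
    """Build the keyword argument that carries the access token.

    legacy=True selects `use_auth_token` for older Transformers versions;
    otherwise the `token` keyword is used.
    """
    token = token or os.environ.get(env_var)
    if not token:
        raise ValueError(f"No token given and {env_var} is not set.")
    key = "use_auth_token" if legacy else "token"
    return {key: token}


# Placeholder token for illustration only.
print(auth_kwargs("<your_hf_read_only_token>"))
print(auth_kwargs("<your_hf_read_only_token>", legacy=True))
```

The resulting dictionary can then be unpacked into the loading call, for example `pipeline(model=..., task=..., **auth_kwargs())`.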

Model performance

The model was evaluated on a test set of 305141 examples. The split was stratified: for every label, 20% of all occurrences were randomly selected for the test set.
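A per-label 20% split of this kind can be sketched with the standard library alone. The function below is an illustration, not the authors' actual procedure: the name `stratified_split`, the rounding rule, and the fixed seed are all assumptions.

```python
import random
from collections import defaultdict
from typing import Dict, List, Sequence, Tuple


def stratified_split(
    labels: Sequence[str], test_fraction: float = 0.2, seed: int = 0
) -> Tuple[List[int], List[int]]:
    """Return (train_indices, test_indices), sampling each label separately."""
    rng = random.Random(seed)

    # Group example indices by their label.
    by_label: Dict[str, List[int]] = defaultdict(list)
    for index, label in enumerate(labels):
        by_label[label].append(index)

    # Randomly draw the requested share of every label for the test set.
    test: List[int] = []
    for indices in by_label.values():
        n_test = round(len(indices) * test_fraction)  # assumed rounding rule
        test.extend(rng.sample(indices, n_test))

    test_set = set(test)
    train = [i for i in range(len(labels)) if i not in test_set]
    return train, sorted(test)


# Toy data: 10 examples of label "a" and 20 of label "b".
labels = ["a"] * 10 + ["b"] * 20
train_idx, test_idx = stratified_split(labels)
print(len(train_idx), len(test_idx))
```

Sampling within each label keeps rare labels represented in the test set in the same proportion as frequent ones.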

Metrics (precision, recall, and F1-score are averaged across labels, weighted by label support):

| Precision | Recall | F1-Score | Accuracy | Top3_Acc | Top5_Acc |
|---|---|---|---|---|---|
| 0.6495 | 0.6547 | 0.6507 | 0.6547 | 0.8505 | 0.9073 |
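The following sketch illustrates how top-k accuracy and a support-weighted recall can be computed. It is not the authors' evaluation code; the function names and toy data are hypothetical. It also shows that support-weighted recall coincides with plain accuracy, which is consistent with the identical Recall and Accuracy values in the table.

```python
from collections import Counter
from typing import List, Sequence


def top_k_accuracy(
    true_labels: Sequence[str], ranked_predictions: Sequence[List[str]], k: int
) -> float:
    """Share of examples whose true label is among the k best-ranked labels."""
    hits = sum(
        1
        for truth, ranked in zip(true_labels, ranked_predictions)
        if truth in ranked[:k]
    )
    return hits / len(true_labels)


def weighted_recall(true_labels: Sequence[str], predicted: Sequence[str]) -> float:
    """Per-label recall, averaged with weights proportional to label support."""
    support = Counter(true_labels)
    correct = Counter(t for t, p in zip(true_labels, predicted) if t == p)
    total = len(true_labels)
    return sum(
        (support[label] / total) * (correct[label] / support[label])
        for label in support
    )


# Toy data: each inner list ranks labels from most to least likely.
truth = ["a", "a", "b", "c"]
ranked = [["a", "b", "c"], ["b", "a", "c"], ["b", "c", "a"], ["a", "b", "c"]]
best = [r[0] for r in ranked]

print(top_k_accuracy(truth, ranked, k=1))
print(top_k_accuracy(truth, ranked, k=2))
print(weighted_recall(truth, best))
```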

Debugging and issues

This architecture uses the sentencepiece tokenizer. To run the model with transformers versions earlier than 4.27, you need to install sentencepiece manually.
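A quick way to check for the dependency before loading the model is sketched below. The helper name `is_installed` is hypothetical, and the suggested `pip` command assumes a standard pip-based environment.

```python
import importlib.util


def is_installed(module_name: str) -> bool:
    """Return True if a top-level module can be found without importing it."""
    return importlib.util.find_spec(module_name) is not None


if not is_installed("sentencepiece"):
    print("sentencepiece is missing; install it with: pip install sentencepiece")
```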
