Fine-tuning CodeLlama-7b on a text-to-SQL task

This model is a fine-tuned version of codellama/CodeLlama-7b-hf on b-mc2/sql-create-context.

Training and evaluation data

The model was trained on 10,000 random samples from b-mc2/sql-create-context, following the approach described by Phil Schmid.
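As a rough illustration of the data preparation, the sketch below draws a seeded random subset and turns one record into chat-style messages. The field names `question`, `context`, and `answer` match the dataset's columns. The system prompt wording, the helper names `to_messages` and `pick_random`, and the use of a chat format are assumptions made for illustration, not the exact setup used for this model.

```python
import random

# Hypothetical system prompt; the wording actually used for this model
# is not stated in the card.
SYSTEM_TEMPLATE = (
    "You are a text-to-SQL translator. Given the schema below, "
    "write a SQL query that answers the user's question.\n"
    "SCHEMA:\n{schema}"
)


def to_messages(sample):
    """Convert one dataset record into system/user/assistant messages."""
    return [
        {"role": "system", "content": SYSTEM_TEMPLATE.format(schema=sample["context"])},
        {"role": "user", "content": sample["question"]},
        {"role": "assistant", "content": sample["answer"]},
    ]


def pick_random(rows, k, seed=42):
    """Draw k rows without replacement, reproducibly for a given seed."""
    rng = random.Random(seed)
    return rng.sample(rows, k)


# Illustrative record in the dataset's column layout.
example = {
    "question": "How many heads of the departments are older than 56 ?",
    "context": "CREATE TABLE head (age INTEGER)",
    "answer": "SELECT COUNT(*) FROM head WHERE age > 56",
}
messages = to_messages(example)
```

With the real dataset, `pick_random(rows, 10_000)` would stand in for the 10,000-sample selection; the seed of 42 mirrors the training seed but may not match the seed used for sampling.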

Training hyperparameters

| Hyperparameter | Value |
|---|---|
| learning_rate | 0.0002 |
| train_batch_size | 50 |
| eval_batch_size | 8 |
| seed | 42 |
| gradient_accumulation_steps | 2 |
| total_train_batch_size | 100 |
| optimizer | Adam with betas=(0.9,0.999) and epsilon=1e-08 |
| lr_scheduler_type | constant |
| lr_scheduler_warmup_ratio | 0.03 |
| num_epochs | 3 |
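The relationship between the batch-size rows can be checked with a little arithmetic. The sketch below assumes a single device and assumes that all 10,000 samples are used for training; neither is stated in the card. The function name `training_schedule` is hypothetical.

```python
import math


def training_schedule(num_samples, train_batch_size, grad_accum_steps,
                      num_epochs, num_devices=1):
    """Return (effective batch size, optimizer steps per epoch, total steps)."""
    # Samples consumed per optimizer update.
    effective_batch = train_batch_size * grad_accum_steps * num_devices
    # Forward/backward passes per epoch on each device.
    micro_batches = math.ceil(num_samples / (train_batch_size * num_devices))
    # One optimizer update every grad_accum_steps micro-batches.
    steps_per_epoch = max(micro_batches // grad_accum_steps, 1)
    return effective_batch, steps_per_epoch, steps_per_epoch * num_epochs


effective_batch, steps_per_epoch, total_steps = training_schedule(
    num_samples=10_000,
    train_batch_size=50,
    grad_accum_steps=2,
    num_epochs=3,
)
print(effective_batch, steps_per_epoch, total_steps)
```

Under these assumptions the effective batch size comes out to 100, matching total_train_batch_size in the table.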

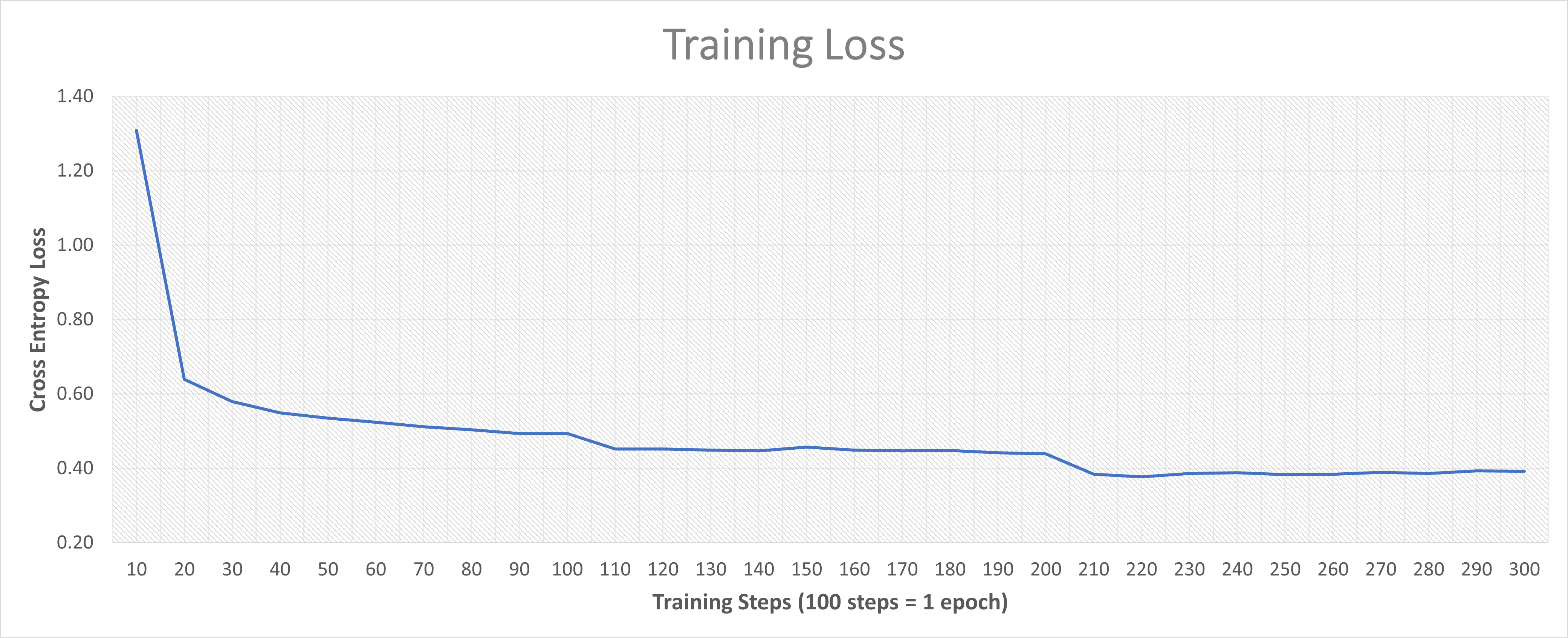

Training results

Framework versions

- PEFT 0.7.2.dev0

- Transformers 4.36.2

- Pytorch 2.1.2+cu121

- Datasets 2.16.1

- Tokenizers 0.15.2
