StoryVisualizationaTask

#193, opened by Anyou

- README.md +0 -207

- __init__.py +0 -0

- config.yaml +63 -0

- data_script/flintstones_hdf5.py +51 -0

- data_script/pororo_hdf5.py +83 -0

- data_script/vist_hdf5.py +111 -0

- data_script/vist_img_download.py +61 -0

- datasets/flintstones.py +93 -0

- datasets/pororo.py +144 -0

- datasets/vistdii.py +94 -0

- datasets/vistsis.py +94 -0

- environment.yml +271 -0

- fid_utils.py +41 -0

- main.py +537 -0

- models/blip_override/blip.py +240 -0

- models/blip_override/med.py +955 -0

- models/blip_override/med_config.json +21 -0

- models/blip_override/vit.py +302 -0

- models/diffusers_override/attention.py +669 -0

- models/diffusers_override/unet_2d_blocks.py +1602 -0

- models/diffusers_override/unet_2d_condition.py +359 -0

- models/inception.py +314 -0

- v1-5-pruned-emaonly.ckpt → pororo_100.h5 +2 -2

- readme-storyvisualization.md +123 -0

- requirements.txt +10 -0

- run.sh +1 -0

- test.py +94 -0

- transtoyolo.py +320 -0

- v1-5-pruned-emaonly.safetensors +0 -3

- v1-5-pruned.safetensors +0 -3

README.md

DELETED

|

@@ -1,207 +0,0 @@

|

|

| 1 |

-

---

|

| 2 |

-

license: creativeml-openrail-m

|

| 3 |

-

tags:

|

| 4 |

-

- stable-diffusion

|

| 5 |

-

- stable-diffusion-diffusers

|

| 6 |

-

- text-to-image

|

| 7 |

-

inference: true

|

| 8 |

-

extra_gated_prompt: |-

|

| 9 |

-

This model is open access and available to all, with a CreativeML OpenRAIL-M license further specifying rights and usage.

|

| 10 |

-

The CreativeML OpenRAIL License specifies:

|

| 11 |

-

|

| 12 |

-

1. You can't use the model to deliberately produce nor share illegal or harmful outputs or content

|

| 13 |

-

2. CompVis claims no rights on the outputs you generate, you are free to use them and are accountable for their use which must not go against the provisions set in the license

|

| 14 |

-

3. You may re-distribute the weights and use the model commercially and/or as a service. If you do, please be aware you have to include the same use restrictions as the ones in the license and share a copy of the CreativeML OpenRAIL-M to all your users (please read the license entirely and carefully)

|

| 15 |

-

Please read the full license carefully here: https://huggingface.co/spaces/CompVis/stable-diffusion-license

|

| 16 |

-

|

| 17 |

-

extra_gated_heading: Please read the LICENSE to access this model

|

| 18 |

-

---

|

| 19 |

-

|

| 20 |

-

# Stable Diffusion v1-5 Model Card

|

| 21 |

-

|

| 22 |

-

Stable Diffusion is a latent text-to-image diffusion model capable of generating photo-realistic images given any text input.

|

| 23 |

-

For more information about how Stable Diffusion functions, please have a look at [🤗's Stable Diffusion blog](https://huggingface.co/blog/stable_diffusion).

|

| 24 |

-

|

| 25 |

-

The **Stable-Diffusion-v1-5** checkpoint was initialized with the weights of the [Stable-Diffusion-v1-2](https:/steps/huggingface.co/CompVis/stable-diffusion-v1-2)

|

| 26 |

-

checkpoint and subsequently fine-tuned on 595k steps at resolution 512x512 on "laion-aesthetics v2 5+" and 10% dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

|

| 27 |

-

|

| 28 |

-

You can use this both with the [🧨Diffusers library](https://github.com/huggingface/diffusers) and the [RunwayML GitHub repository](https://github.com/runwayml/stable-diffusion).

|

| 29 |

-

|

| 30 |

-

### Diffusers

|

| 31 |

-

```py

|

| 32 |

-

from diffusers import StableDiffusionPipeline

|

| 33 |

-

import torch

|

| 34 |

-

|

| 35 |

-

model_id = "runwayml/stable-diffusion-v1-5"

|

| 36 |

-

pipe = StableDiffusionPipeline.from_pretrained(model_id, torch_dtype=torch.float16)

|

| 37 |

-

pipe = pipe.to("cuda")

|

| 38 |

-

|

| 39 |

-

prompt = "a photo of an astronaut riding a horse on mars"

|

| 40 |

-

image = pipe(prompt).images[0]

|

| 41 |

-

|

| 42 |

-

image.save("astronaut_rides_horse.png")

|

| 43 |

-

```

|

| 44 |

-

For more detailed instructions, use-cases and examples in JAX follow the instructions [here](https://github.com/huggingface/diffusers#text-to-image-generation-with-stable-diffusion)

|

| 45 |

-

|

| 46 |

-

### Original GitHub Repository

|

| 47 |

-

|

| 48 |

-

1. Download the weights

|

| 49 |

-

- [v1-5-pruned-emaonly.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned-emaonly.ckpt) - 4.27GB, ema-only weight. uses less VRAM - suitable for inference

|

| 50 |

-

- [v1-5-pruned.ckpt](https://huggingface.co/runwayml/stable-diffusion-v1-5/resolve/main/v1-5-pruned.ckpt) - 7.7GB, ema+non-ema weights. uses more VRAM - suitable for fine-tuning

|

| 51 |

-

|

| 52 |

-

2. Follow instructions [here](https://github.com/runwayml/stable-diffusion).

|

| 53 |

-

|

| 54 |

-

## Model Details

|

| 55 |

-

- **Developed by:** Robin Rombach, Patrick Esser

|

| 56 |

-

- **Model type:** Diffusion-based text-to-image generation model

|

| 57 |

-

- **Language(s):** English

|

| 58 |

-

- **License:** [The CreativeML OpenRAIL M license](https://huggingface.co/spaces/CompVis/stable-diffusion-license) is an [Open RAIL M license](https://www.licenses.ai/blog/2022/8/18/naming-convention-of-responsible-ai-licenses), adapted from the work that [BigScience](https://bigscience.huggingface.co/) and [the RAIL Initiative](https://www.licenses.ai/) are jointly carrying in the area of responsible AI licensing. See also [the article about the BLOOM Open RAIL license](https://bigscience.huggingface.co/blog/the-bigscience-rail-license) on which our license is based.

|

| 59 |

-

- **Model Description:** This is a model that can be used to generate and modify images based on text prompts. It is a [Latent Diffusion Model](https://arxiv.org/abs/2112.10752) that uses a fixed, pretrained text encoder ([CLIP ViT-L/14](https://arxiv.org/abs/2103.00020)) as suggested in the [Imagen paper](https://arxiv.org/abs/2205.11487).

|

| 60 |

-

- **Resources for more information:** [GitHub Repository](https://github.com/CompVis/stable-diffusion), [Paper](https://arxiv.org/abs/2112.10752).

|

| 61 |

-

- **Cite as:**

|

| 62 |

-

|

| 63 |

-

@InProceedings{Rombach_2022_CVPR,

|

| 64 |

-

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

|

| 65 |

-

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

|

| 66 |

-

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

|

| 67 |

-

month = {June},

|

| 68 |

-

year = {2022},

|

| 69 |

-

pages = {10684-10695}

|

| 70 |

-

}

|

| 71 |

-

|

| 72 |

-

# Uses

|

| 73 |

-

|

| 74 |

-

## Direct Use

|

| 75 |

-

The model is intended for research purposes only. Possible research areas and

|

| 76 |

-

tasks include

|

| 77 |

-

|

| 78 |

-

- Safe deployment of models which have the potential to generate harmful content.

|

| 79 |

-

- Probing and understanding the limitations and biases of generative models.

|

| 80 |

-

- Generation of artworks and use in design and other artistic processes.

|

| 81 |

-

- Applications in educational or creative tools.

|

| 82 |

-

- Research on generative models.

|

| 83 |

-

|

| 84 |

-

Excluded uses are described below.

|

| 85 |

-

|

| 86 |

-

### Misuse, Malicious Use, and Out-of-Scope Use

|

| 87 |

-

_Note: This section is taken from the [DALLE-MINI model card](https://huggingface.co/dalle-mini/dalle-mini), but applies in the same way to Stable Diffusion v1_.

|

| 88 |

-

|

| 89 |

-

|

| 90 |

-

The model should not be used to intentionally create or disseminate images that create hostile or alienating environments for people. This includes generating images that people would foreseeably find disturbing, distressing, or offensive; or content that propagates historical or current stereotypes.

|

| 91 |

-

|

| 92 |

-

#### Out-of-Scope Use

|

| 93 |

-

The model was not trained to be factual or true representations of people or events, and therefore using the model to generate such content is out-of-scope for the abilities of this model.

|

| 94 |

-

|

| 95 |

-

#### Misuse and Malicious Use

|

| 96 |

-

Using the model to generate content that is cruel to individuals is a misuse of this model. This includes, but is not limited to:

|

| 97 |

-

|

| 98 |

-

- Generating demeaning, dehumanizing, or otherwise harmful representations of people or their environments, cultures, religions, etc.

|

| 99 |

-

- Intentionally promoting or propagating discriminatory content or harmful stereotypes.

|

| 100 |

-

- Impersonating individuals without their consent.

|

| 101 |

-

- Sexual content without consent of the people who might see it.

|

| 102 |

-

- Mis- and disinformation

|

| 103 |

-

- Representations of egregious violence and gore

|

| 104 |

-

- Sharing of copyrighted or licensed material in violation of its terms of use.

|

| 105 |

-

- Sharing content that is an alteration of copyrighted or licensed material in violation of its terms of use.

|

| 106 |

-

|

| 107 |

-

## Limitations and Bias

|

| 108 |

-

|

| 109 |

-

### Limitations

|

| 110 |

-

|

| 111 |

-

- The model does not achieve perfect photorealism

|

| 112 |

-

- The model cannot render legible text

|

| 113 |

-

- The model does not perform well on more difficult tasks which involve compositionality, such as rendering an image corresponding to “A red cube on top of a blue sphere”

|

| 114 |

-

- Faces and people in general may not be generated properly.

|

| 115 |

-

- The model was trained mainly with English captions and will not work as well in other languages.

|

| 116 |

-

- The autoencoding part of the model is lossy

|

| 117 |

-

- The model was trained on a large-scale dataset

|

| 118 |

-

[LAION-5B](https://laion.ai/blog/laion-5b/) which contains adult material

|

| 119 |

-

and is not fit for product use without additional safety mechanisms and

|

| 120 |

-

considerations.

|

| 121 |

-

- No additional measures were used to deduplicate the dataset. As a result, we observe some degree of memorization for images that are duplicated in the training data.

|

| 122 |

-

The training data can be searched at [https://rom1504.github.io/clip-retrieval/](https://rom1504.github.io/clip-retrieval/) to possibly assist in the detection of memorized images.

|

| 123 |

-

|

| 124 |

-

### Bias

|

| 125 |

-

|

| 126 |

-

While the capabilities of image generation models are impressive, they can also reinforce or exacerbate social biases.

|

| 127 |

-

Stable Diffusion v1 was trained on subsets of [LAION-2B(en)](https://laion.ai/blog/laion-5b/),

|

| 128 |

-

which consists of images that are primarily limited to English descriptions.

|

| 129 |

-

Texts and images from communities and cultures that use other languages are likely to be insufficiently accounted for.

|

| 130 |

-

This affects the overall output of the model, as white and western cultures are often set as the default. Further, the

|

| 131 |

-

ability of the model to generate content with non-English prompts is significantly worse than with English-language prompts.

|

| 132 |

-

|

| 133 |

-

### Safety Module

|

| 134 |

-

|

| 135 |

-

The intended use of this model is with the [Safety Checker](https://github.com/huggingface/diffusers/blob/main/src/diffusers/pipelines/stable_diffusion/safety_checker.py) in Diffusers.

|

| 136 |

-

This checker works by checking model outputs against known hard-coded NSFW concepts.

|

| 137 |

-

The concepts are intentionally hidden to reduce the likelihood of reverse-engineering this filter.

|

| 138 |

-

Specifically, the checker compares the class probability of harmful concepts in the embedding space of the `CLIPTextModel` *after generation* of the images.

|

| 139 |

-

The concepts are passed into the model with the generated image and compared to a hand-engineered weight for each NSFW concept.

|

| 140 |

-

|

| 141 |

-

|

| 142 |

-

## Training

|

| 143 |

-

|

| 144 |

-

**Training Data**

|

| 145 |

-

The model developers used the following dataset for training the model:

|

| 146 |

-

|

| 147 |

-

- LAION-2B (en) and subsets thereof (see next section)

|

| 148 |

-

|

| 149 |

-

**Training Procedure**

|

| 150 |

-

Stable Diffusion v1-5 is a latent diffusion model which combines an autoencoder with a diffusion model that is trained in the latent space of the autoencoder. During training,

|

| 151 |

-

|

| 152 |

-

- Images are encoded through an encoder, which turns images into latent representations. The autoencoder uses a relative downsampling factor of 8 and maps images of shape H x W x 3 to latents of shape H/f x W/f x 4

|

| 153 |

-

- Text prompts are encoded through a ViT-L/14 text-encoder.

|

| 154 |

-

- The non-pooled output of the text encoder is fed into the UNet backbone of the latent diffusion model via cross-attention.

|

| 155 |

-

- The loss is a reconstruction objective between the noise that was added to the latent and the prediction made by the UNet.

|

| 156 |

-

|

| 157 |

-

Currently six Stable Diffusion checkpoints are provided, which were trained as follows.

|

| 158 |

-

- [`stable-diffusion-v1-1`](https://huggingface.co/CompVis/stable-diffusion-v1-1): 237,000 steps at resolution `256x256` on [laion2B-en](https://huggingface.co/datasets/laion/laion2B-en).

|

| 159 |

-

194,000 steps at resolution `512x512` on [laion-high-resolution](https://huggingface.co/datasets/laion/laion-high-resolution) (170M examples from LAION-5B with resolution `>= 1024x1024`).

|

| 160 |

-

- [`stable-diffusion-v1-2`](https://huggingface.co/CompVis/stable-diffusion-v1-2): Resumed from `stable-diffusion-v1-1`.

|

| 161 |

-

515,000 steps at resolution `512x512` on "laion-improved-aesthetics" (a subset of laion2B-en,

|

| 162 |

-

filtered to images with an original size `>= 512x512`, estimated aesthetics score `> 5.0`, and an estimated watermark probability `< 0.5`. The watermark estimate is from the LAION-5B metadata, the aesthetics score is estimated using an [improved aesthetics estimator](https://github.com/christophschuhmann/improved-aesthetic-predictor)).

|

| 163 |

-

- [`stable-diffusion-v1-3`](https://huggingface.co/CompVis/stable-diffusion-v1-3): Resumed from `stable-diffusion-v1-2` - 195,000 steps at resolution `512x512` on "laion-improved-aesthetics" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

|

| 164 |

-

- [`stable-diffusion-v1-4`](https://huggingface.co/CompVis/stable-diffusion-v1-4) Resumed from `stable-diffusion-v1-2` - 225,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

|

| 165 |

-

- [`stable-diffusion-v1-5`](https://huggingface.co/runwayml/stable-diffusion-v1-5) Resumed from `stable-diffusion-v1-2` - 595,000 steps at resolution `512x512` on "laion-aesthetics v2 5+" and 10 % dropping of the text-conditioning to improve [classifier-free guidance sampling](https://arxiv.org/abs/2207.12598).

|

| 166 |

-

- [`stable-diffusion-inpainting`](https://huggingface.co/runwayml/stable-diffusion-inpainting) Resumed from `stable-diffusion-v1-5` - then 440,000 steps of inpainting training at resolution 512x512 on “laion-aesthetics v2 5+” and 10% dropping of the text-conditioning. For inpainting, the UNet has 5 additional input channels (4 for the encoded masked-image and 1 for the mask itself) whose weights were zero-initialized after restoring the non-inpainting checkpoint. During training, we generate synthetic masks and in 25% mask everything.

|

| 167 |

-

|

| 168 |

-

- **Hardware:** 32 x 8 x A100 GPUs

|

| 169 |

-

- **Optimizer:** AdamW

|

| 170 |

-

- **Gradient Accumulations**: 2

|

| 171 |

-

- **Batch:** 32 x 8 x 2 x 4 = 2048

|

| 172 |

-

- **Learning rate:** warmup to 0.0001 for 10,000 steps and then kept constant

|

| 173 |

-

|

| 174 |

-

## Evaluation Results

|

| 175 |

-

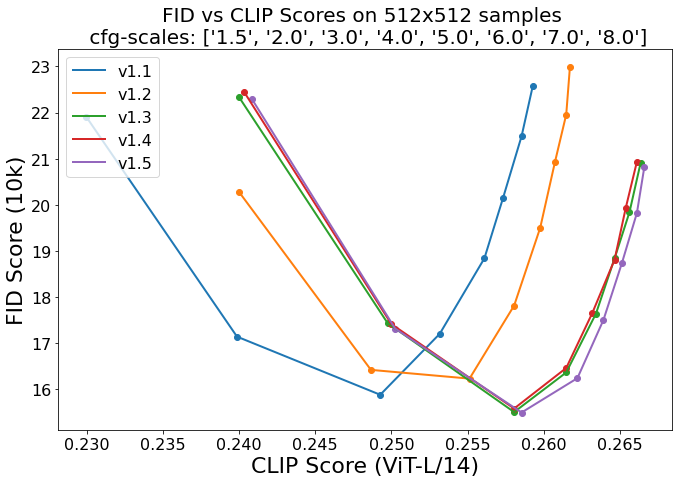

Evaluations with different classifier-free guidance scales (1.5, 2.0, 3.0, 4.0,

|

| 176 |

-

5.0, 6.0, 7.0, 8.0) and 50 PNDM/PLMS sampling

|

| 177 |

-

steps show the relative improvements of the checkpoints:

|

| 178 |

-

|

| 179 |

-

|

| 180 |

-

|

| 181 |

-

Evaluated using 50 PLMS steps and 10000 random prompts from the COCO2017 validation set, evaluated at 512x512 resolution. Not optimized for FID scores.

|

| 182 |

-

## Environmental Impact

|

| 183 |

-

|

| 184 |

-

**Stable Diffusion v1** **Estimated Emissions**

|

| 185 |

-

Based on that information, we estimate the following CO2 emissions using the [Machine Learning Impact calculator](https://mlco2.github.io/impact#compute) presented in [Lacoste et al. (2019)](https://arxiv.org/abs/1910.09700). The hardware, runtime, cloud provider, and compute region were utilized to estimate the carbon impact.

|

| 186 |

-

|

| 187 |

-

- **Hardware Type:** A100 PCIe 40GB

|

| 188 |

-

- **Hours used:** 150000

|

| 189 |

-

- **Cloud Provider:** AWS

|

| 190 |

-

- **Compute Region:** US-east

|

| 191 |

-

- **Carbon Emitted (Power consumption x Time x Carbon produced based on location of power grid):** 11250 kg CO2 eq.

|

| 192 |

-

|

| 193 |

-

|

| 194 |

-

## Citation

|

| 195 |

-

|

| 196 |

-

```bibtex

|

| 197 |

-

@InProceedings{Rombach_2022_CVPR,

|

| 198 |

-

author = {Rombach, Robin and Blattmann, Andreas and Lorenz, Dominik and Esser, Patrick and Ommer, Bj\"orn},

|

| 199 |

-

title = {High-Resolution Image Synthesis With Latent Diffusion Models},

|

| 200 |

-

booktitle = {Proceedings of the IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR)},

|

| 201 |

-

month = {June},

|

| 202 |

-

year = {2022},

|

| 203 |

-

pages = {10684-10695}

|

| 204 |

-

}

|

| 205 |

-

```

|

| 206 |

-

|

| 207 |

-

*This model card was written by: Robin Rombach and Patrick Esser and is based on the [DALL-E Mini model card](https://huggingface.co/dalle-mini/dalle-mini).*
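The training notes in the removed model card give a downsampling factor of 8 with 4 latent channels, and a batch of 32 x 8 x 2 x 4 = 2048. As a quick sanity check, that arithmetic can be sketched as below. The function names `latent_shape` and `effective_batch` are hypothetical, and reading the four batch factors as nodes, GPUs per node, gradient-accumulation steps and per-GPU batch is an assumption inferred from the hardware and gradient-accumulation lines, not something the card states.

```python
def latent_shape(height, width, factor=8, latent_channels=4):
    """Map an H x W x 3 image shape to the H/f x W/f x 4 latent shape."""
    if height % factor or width % factor:
        raise ValueError("height and width must be multiples of the factor")
    return (height // factor, width // factor, latent_channels)


def effective_batch(nodes, gpus_per_node, grad_accum, per_gpu_batch):
    """Total samples per optimizer step (assumed meaning of the four factors)."""
    return nodes * gpus_per_node * grad_accum * per_gpu_batch


print(latent_shape(512, 512))        # (64, 64, 4)
print(effective_batch(32, 8, 2, 4))  # 2048
```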


__init__.py

ADDED

Binary file (2 Bytes)

config.yaml

ADDED

@@ -0,0 +1,63 @@
+# device
+mode: sample # train sample
+gpu_ids: [3] # gpu ids
+batch_size: 1 # batch size each item denotes one story
+num_workers: 4 # number of workers
+num_cpu_cores: -1 # number of cpu cores
+seed: 0 # random seed
+ckpt_dir: /root/lihui/StoryVisualization/save_ckpt_epoch5_new # checkpoint directory
+run_name: ARLDM # name for this run
+
+# task
+dataset: pororo # pororo flintstones vistsis vistdii
+task: visualization # continuation visualization
+
+# train
+init_lr: 1e-5 # initial learning rate
+warmup_epochs: 1 # warmup epochs
+max_epochs: 5 #50 # max epochs
+train_model_file: /root/lihui/StoryVisualization/save_ckpt_3last50/ARLDM/last.ckpt # model file for resume, none for train from scratch
+freeze_clip: True #False # whether to freeze clip
+freeze_blip: True #False # whether to freeze blip
+freeze_resnet: True #False # whether to freeze resnet
+
+# sample
+test_model_file: /root/lihui/StoryVisualization/save_ckpt_3last50/ARLDM/last.ckpt # model file for test
+calculate_fid: True # whether to calculate FID scores
+scheduler: ddim # ddim pndm
+guidance_scale: 6 # guidance scale
+num_inference_steps: 250 # number of inference steps
+sample_output_dir: /root/lihui/StoryVisualization/save_samples_128_epoch50 # output directory
+
+pororo:
+  hdf5_file: /root/lihui/StoryVisualization/pororo.h5
+  max_length: 85
+  new_tokens: [ "pororo", "loopy", "eddy", "harry", "poby", "tongtong", "crong", "rody", "petty" ]
+  clip_embedding_tokens: 49416
+  blip_embedding_tokens: 30530
+
+flintstones:
+  hdf5_file: /path/to/flintstones.h5
+  max_length: 91
+  new_tokens: [ "fred", "barney", "wilma", "betty", "pebbles", "dino", "slate" ]
+  clip_embedding_tokens: 49412
+  blip_embedding_tokens: 30525
+
+vistsis:
+  hdf5_file: /path/to/vist.h5
+  max_length: 100
+  clip_embedding_tokens: 49408
+  blip_embedding_tokens: 30524
+
+vistdii:
+  hdf5_file: /path/to/vist.h5
+  max_length: 65
+  clip_embedding_tokens: 49408
+  blip_embedding_tokens: 30524
+
+hydra:
+  run:
+    dir: .
+  output_subdir: null
+hydra/job_logging: disabled
+hydra/hydra_logging: disabled
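The inline comments in `config.yaml` list the accepted values for several keys (`mode`, `dataset`, `task`, `scheduler`). A small check like the one below could catch a typo before a long run starts. It is only a sketch: `validate_config` and `ALLOWED` are hypothetical names that do not appear in this PR, and the config is passed as a plain dict rather than loaded through Hydra.

```python
# Allowed values, copied from the inline comments in config.yaml.
ALLOWED = {
    "mode": {"train", "sample"},
    "dataset": {"pororo", "flintstones", "vistsis", "vistdii"},
    "task": {"continuation", "visualization"},
    "scheduler": {"ddim", "pndm"},
}


def validate_config(cfg):
    """Return a list of human-readable problems; an empty list means the config passed."""
    problems = []
    for key, allowed in ALLOWED.items():
        value = cfg.get(key)
        if value not in allowed:
            problems.append(f"{key}={value!r} is not one of {sorted(allowed)}")
    # The per-dataset section (for example `pororo:`) must exist for the chosen dataset.
    dataset = cfg.get("dataset")
    if dataset in ALLOWED["dataset"] and dataset not in cfg:
        problems.append(f"missing section for dataset {dataset!r}")
    return problems


cfg = {
    "mode": "sample",
    "dataset": "pororo",
    "task": "visualization",
    "scheduler": "ddim",
    "pororo": {"max_length": 85},
}
print(validate_config(cfg))  # []
```

Returning a list instead of raising on the first problem lets a caller report every bad key at once.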

data_script/flintstones_hdf5.py

ADDED

@@ -0,0 +1,51 @@
+import argparse
+import json
+import os
+import pickle
+
+import cv2
+import h5py
+import numpy as np
+from tqdm import tqdm
+
+
+def main(args):
+    splits = json.load(open(os.path.join(args.data_dir, 'train-val-test_split.json'), 'r'))
+    train_ids, val_ids, test_ids = splits["train"], splits["val"], splits["test"]
+    followings = pickle.load(open(os.path.join(args.data_dir, 'following_cache4.pkl'), 'rb'))
+    annotations = json.load(open(os.path.join(args.data_dir, 'flintstones_annotations_v1-0.json')))
+    descriptions = dict()
+    for sample in annotations:
+        descriptions[sample["globalID"]] = sample["description"]
+
+    f = h5py.File(args.save_path, "w")
+    for subset, ids in {'train': train_ids, 'val': val_ids, 'test': test_ids}.items():
+        ids = [i for i in ids if i in followings and len(followings[i]) == 4]
+        length = len(ids)
+
+        group = f.create_group(subset)
+        images = list()
+        for i in range(5):
+            images.append(
+                group.create_dataset('image{}'.format(i), (length,), dtype=h5py.vlen_dtype(np.dtype('uint8'))))
+        text = group.create_dataset('text', (length,), dtype=h5py.string_dtype(encoding='utf-8'))
+        for i, item in enumerate(tqdm(ids, leave=True, desc="saveh5")):
+            globalIDs = [item] + followings[item]
+            txt = list()
+            for j, globalID in enumerate(globalIDs):
+                img = np.load(os.path.join(args.data_dir, 'video_frames_sampled', '{}.npy'.format(globalID)))
+                img = np.concatenate(img, axis=0).astype(np.uint8)
+                img = cv2.imencode('.png', img)[1].tobytes()
+                img = np.frombuffer(img, np.uint8)
+                images[j][i] = img
+                txt.append(descriptions[globalID])
+            text[i] = '|'.join([t.replace('\n', '').replace('\t', '').strip() for t in txt])
+    f.close()
+
+
+if __name__ == '__main__':
+    parser = argparse.ArgumentParser(description='arguments for flintstones hdf5 file saving')
+    parser.add_argument('--data_dir', type=str, required=True, help='flintstones data directory')
+    parser.add_argument('--save_path', type=str, required=True, help='path to save hdf5')
+    args = parser.parse_args()
+    main(args)
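Each story's five captions are stored as a single string joined with `|`, after newlines and tabs are removed. The round trip can be sketched without HDF5 as follows. `pack_captions` and `unpack_captions` are hypothetical helper names, the sample captions are made up, and the sketch assumes no caption contains a literal `|`, which the script itself does not check.

```python
def pack_captions(captions):
    """Join captions with '|' after removing newlines/tabs and trimming, as the script does."""
    return '|'.join(t.replace('\n', '').replace('\t', '').strip() for t in captions)


def unpack_captions(packed):
    """Recover the per-frame captions from the stored string."""
    return packed.split('|')


# Made-up captions for one five-frame story.
story = ["Fred is talking.\n", "\tBarney walks in. ", "Wilma laughs.", "Dino barks.", "Fred sits."]
packed = pack_captions(story)
print(packed)
print(unpack_captions(packed))
```

A dataset reader would presumably split on `|` in the same way to pair each caption with `image0` through `image4`.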

data_script/pororo_hdf5.py

ADDED

|

@@ -0,0 +1,83 @@

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

|

| 1 |

+

import argparse

|

| 2 |

+

import os

|

| 3 |

+

|

| 4 |

+

import cv2

|

| 5 |

+

import h5py

|

| 6 |

+

import numpy as np

|

| 7 |

+

from PIL import Image

|

| 8 |

+

from tqdm import tqdm

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

def main(args):

|

| 12 |

+

# 使用numpy库的load函数来加载名为descriptions.npy的文件。该文件是一个Python字典对象,因此我们使用item()方法将其转换为字典对象。

|

| 13 |

+

# ——os.path.join函数用于连接文件路径

|

| 14 |

+

# ——args.data_dir作为基础目录,将'descriptions.npy'添加到该目录中

|

| 15 |

+

# ——指定allow_pickle=True,表示允许加载包含Python对象的文件

|

| 16 |

+

# ——指定encoding='latin1',表示使用拉丁字符编码加载该文件

|

| 17 |

+

descriptions = np.load(os.path.join(args.data_dir, 'descriptions.npy'), allow_pickle=True, encoding='latin1').item()

|

| 18 |

+

# imgs_list包含一组图像文件的路径,

|

| 19 |

+

# followings_list包含每个图像的一些附加信息

|

| 20 |

+

imgs_list = np.load(os.path.join(args.data_dir, 'img_cache4.npy'), encoding='latin1')

|

| 21 |

+

followings_list = np.load(os.path.join(args.data_dir, 'following_cache4.npy'))

|

| 22 |

+

# 使用numpy库的load函数来加载名为train_seen_unseen_ids.npy的文件

|

| 23 |

+

# 该文件包含三个numpy数组:train_ids、val_ids和test_ids,分别代表训练集、验证集和测试集的ID列表。

|

| 24 |

+

# 使用元组来一次性加载这三个数组,并将它们赋值给相应的变量。

|

| 25 |

+

train_ids, val_ids, test_ids = np.load(os.path.join(args.data_dir, 'train_seen_unseen_ids.npy'), allow_pickle=True)

|

| 26 |

+

# 按照ID的顺序逐一排序

|

| 27 |

+

train_ids = np.sort(train_ids)

|

| 28 |

+

val_ids = np.sort(val_ids)

|

| 29 |

+

test_ids = np.sort(test_ids)

|

| 30 |

+

|

| 31 |

+

# 创建一个新的HDF5文件,并指定文件名为args.save_path。

|

| 32 |

+

# 使用h5py库的File函数来创建文件对象,指定打开方式为写模式("w")。

|

| 33 |

+

# 在这个文件中存储处理后的图像和文本数据。

|

| 34 |

+

f = h5py.File(args.save_path, "w")

|

| 35 |

+

for subset, ids in {'train': train_ids, 'val': val_ids, 'test': test_ids}.items():

|

| 36 |

+

length = len(ids)

|

| 37 |

+

|

| 38 |

+

# 为每个数据集(train、val和test)创建一个组

|

| 39 |

+

# 针对每个数据集都创建了5个数据集,名为'image0'、'image1'、'image2'、'image3'、'image4',分别对应于当前图像及其相关联的4个图像。

|

| 40 |

+

# 目的:将每个图像及其相关联的图像数据保存到同一个HDF5文件中,并按照一定的组织方式存储,方便后续的数据读取和处理。

|

| 41 |

+

group = f.create_group(subset)

|

| 42 |

+

# 创建一个长度为ids列表长度的空列表images,按照image0-4顺序添加了5个HDF5数据集对象

|

| 43 |

+

images = list()

|

| 44 |

+

# 为当前数据集中的每个图像创建了五个数据集。

|

| 45 |

+

# 每个数据集都使用vlen_dtype(np.dtype('uint8'))作为数据类型,并将其添加到当前组group中。

|

| 46 |

+

# ——vlen_dtype(np.dtype('uint8'))表示可变长度的无符号8位整数数组。

|

| 47 |

+

for i in range(5):

|

| 48 |

+

images.append(

|

| 49 |

+

group.create_dataset('image{}'.format(i), (length,), dtype=h5py.vlen_dtype(np.dtype('uint8'))))

|

| 50 |

+

# 创建一个数据集text,用于存储与当前数据集中图像相关的文本描述。该数据集的数据类型为字符串,编码方式为utf-8,并将其添加到当前组group中。

|

| 51 |

+

text = group.create_dataset('text', (length,), dtype=h5py.string_dtype(encoding='utf-8'))

|

| 52 |

+

# 遍历当前数据集中的每个图像,并将相关数据保存到HDF5文件中

|

| 53 |

+

for i, item in enumerate(tqdm(ids, leave=True, desc="saveh5")):

|

| 54 |

+

# 获取与当前图像相关的所有图像的路径,存储到列表img_paths中。

|

| 55 |

+

# ——imgs_list是一个字典,存储了所有图像的路径

|

| 56 |

+

# ——followings_list是一个字典,存储了与每个图像相关的四张图像的路径

|

| 57 |

+

img_paths = [str(imgs_list[item])[2:-1]] + [str(followings_list[item][i])[2:-1] for i in range(4)]

|

| 58 |

+

# 打开img_paths列表中的每个图像,并将其转换为RGB格式的PIL图像对象。

|

| 59 |

+

imgs = [Image.open(os.path.join(args.data_dir, img_path)).convert('RGB') for img_path in img_paths]

|

| 60 |

+

# 将每个PIL图像对象转换为numpy数组

|

| 61 |

+

for j, img in enumerate(imgs):

|

| 62 |

+

img = np.array(img).astype(np.uint8)

|

| 63 |

+

# 使用OpenCV将其编码为png格式的二进制数据

|

| 64 |

+

img = cv2.imencode('.png', img)[1].tobytes()

|

| 65 |

+

# 将该二进制数据转换为numpy数组

|

| 66 |

+

img = np.frombuffer(img, np.uint8)

|

| 67 |

+

# 将其存储到images列表中与当前图像相关的数据集中

|

| 68 |

+

images[j][i] = img

|

| 69 |

+

# 获取与当前图像相关的所有图像的文件名,并将其存储到列表tgt_img_ids中

|

| 70 |

+

tgt_img_ids = [str(img_path).replace('.png', '') for img_path in img_paths]

|

| 71 |

+

# 根据目标图像的文件名,获取其对应的文本描述,并将其存储到列表txt中。

|

| 72 |

+

txt = [descriptions[tgt_img_id][0] for tgt_img_id in tgt_img_ids]

|

| 73 |

+

# 将txt列表中的所有文本描述合并为一个字符串,并将其中的"\n"、"\t"等无关字符替换为空格。然后,将该字符串存储到数据集text中

|

| 74 |

+

text[i] = '|'.join([t.replace('\n', '').replace('\t', '').strip() for t in txt])

|

| 75 |

+

f.close()

|

| 76 |

+

|

| 77 |

+

|

| 78 |

+

if __name__ == '__main__':

|

| 79 |

+

parser = argparse.ArgumentParser(description='arguments for pororo hdf5 file saving')

|

| 80 |

+

parser.add_argument('--data_dir', type=str, required=True, help='pororo data directory')

|

| 81 |

+

parser.add_argument('--save_path', type=str, required=True, help='path to save hdf5')

|

| 82 |

+

args = parser.parse_args()

|

| 83 |

+

main(args)

|
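The caption handling in the script above can be illustrated in isolation. The following is a minimal sketch, not part of the PR: the helper names `pack_captions` and `unpack_captions` and the sample captions are made up for illustration. It mirrors the `'|'.join(...)` call used when writing the `text` dataset and the `split('|')` call used when reading it back.

```python
def pack_captions(captions):
    """Join per-frame captions into one '|'-separated string.

    Newlines and tabs are removed and surrounding whitespace is stripped,
    matching the expression used for text[i] in the script.
    """
    cleaned = [c.replace('\n', '').replace('\t', '').strip() for c in captions]
    return '|'.join(cleaned)


def unpack_captions(packed):
    """Split a packed string back into per-frame captions."""
    return packed.split('|')


# Hypothetical captions, for illustration only.
story = ["Pororo waves.\n", "\tCrong laughs. ", "Loopy bakes a cake."]
packed = pack_captions(story)
print(packed)
print(unpack_captions(packed))
```

One consequence of this design is that a caption containing a literal `|` would not survive the round trip, so the scheme assumes captions never contain that character.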

data_script/vist_hdf5.py

ADDED

|

@@ -0,0 +1,111 @@

|


| 1 |

+

import argparse

|

| 2 |

+

import json

|

| 3 |

+

import os

|

| 4 |

+

|

| 5 |

+

import cv2

|

| 6 |

+

import h5py

|

| 7 |

+

import numpy as np

|

| 8 |

+

from PIL import Image

|

| 9 |

+

from tqdm import tqdm

|

| 10 |

+

|

| 11 |

+

|

| 12 |

+

def main(args):

|

| 13 |

+

train_data = json.load(open(os.path.join(args.sis_json_dir, 'train.story-in-sequence.json')))

|

| 14 |

+

val_data = json.load(open(os.path.join(args.sis_json_dir, 'val.story-in-sequence.json')))

|

| 15 |

+

test_data = json.load(open(os.path.join(args.sis_json_dir, 'test.story-in-sequence.json')))

|

| 16 |

+

|

| 17 |

+

prefix = ["train", "val", "test"]

|

| 18 |

+

whole_album = {}

|

| 19 |

+

for i, data in enumerate([train_data, val_data, test_data]):

|

| 20 |

+

album_mapping = {}

|

| 21 |

+

for annot_new in data["annotations"]:

|

| 22 |

+

annot = annot_new[0]

|

| 23 |

+

assert len(annot_new) == 1

|

| 24 |

+

if annot['story_id'] not in album_mapping:

|

| 25 |

+

album_mapping[annot['story_id']] = {"flickr_id": [annot['photo_flickr_id']],

|

| 26 |

+

"sis": [annot['original_text']],

|

| 27 |

+

"length": 1}

|

| 28 |

+

else:

|

| 29 |

+

album_mapping[annot['story_id']]["flickr_id"].append(annot['photo_flickr_id'])

|

| 30 |

+

album_mapping[annot['story_id']]["sis"].append(

|

| 31 |

+

annot['original_text'])

|

| 32 |

+

album_mapping[annot['story_id']]["length"] += 1

|

| 33 |

+

whole_album[prefix[i]] = album_mapping

|

| 34 |

+

|

| 35 |

+

for p in prefix:

|

| 36 |

+

deletables = []

|

| 37 |

+

for story_id, story in whole_album[p].items():

|

| 38 |

+

if story['length'] != 5:

|

| 39 |

+

print("deleting {}".format(story_id))

|

| 40 |

+

deletables.append(story_id)

|

| 41 |

+

continue

|

| 42 |

+

d = [os.path.exists(os.path.join(args.img_dir, "{}.jpg".format(_))) for _ in story["flickr_id"]]

|

| 43 |

+

if sum(d) < 5:

|

| 44 |

+

print("deleting {}".format(story_id))

|

| 45 |

+

deletables.append(story_id)

|

| 46 |

+

else:

|

| 47 |

+

pass

|

| 48 |

+

for i in deletables:

|

| 49 |

+

del whole_album[p][i]

|

| 50 |

+

|

| 51 |

+

train_data = json.load(open(os.path.join(args.dii_json_dir, 'train.description-in-isolation.json')))

|

| 52 |

+

val_data = json.load(open(os.path.join(args.dii_json_dir, 'val.description-in-isolation.json')))

|

| 53 |

+

test_data = json.load(open(os.path.join(args.dii_json_dir, 'test.description-in-isolation.json')))

|

| 54 |

+

|

| 55 |

+

flickr_id2text = {}

|

| 56 |

+

for i, data in enumerate([train_data, val_data, test_data]):

|

| 57 |

+

for l in data['annotations']:

|

| 58 |

+

assert len(l) == 1

|

| 59 |

+

if l[0]['photo_flickr_id'] in flickr_id2text:

|

| 60 |

+

flickr_id2text[l[0]['photo_flickr_id']] = \

|

| 61 |

+

max([flickr_id2text[l[0]['photo_flickr_id']], l[0]['original_text']], key=len)

|

| 62 |

+

else:

|

| 63 |

+

flickr_id2text[l[0]['photo_flickr_id']] = l[0]['original_text']

|

| 64 |

+

|

| 65 |

+

for p in prefix:

|

| 66 |

+

deletables = []

|

| 67 |

+

for story_id, story in whole_album[p].items():

|

| 68 |

+

story['dii'] = []

|

| 69 |

+

for i, flickr_id in enumerate(story['flickr_id']):

|

| 70 |

+

if flickr_id not in flickr_id2text:

|

| 71 |

+

print("{} not found in story {}".format(flickr_id, story_id))

|

| 72 |

+

deletables.append(story_id)

|

| 73 |

+

break

|

| 74 |

+

story['dii'].append(flickr_id2text[flickr_id])

|

| 75 |

+

for i in deletables:

|

| 76 |

+

del whole_album[p][i]

|

| 77 |

+

|

| 78 |

+

f = h5py.File(args.save_path, "w")

|

| 79 |

+

for p in prefix:

|

| 80 |

+

group = f.create_group(p)

|

| 81 |

+

story_dict = whole_album[p]

|

| 82 |

+

length = len(story_dict)

|

| 83 |

+

images = list()

|

| 84 |

+

for i in range(5):

|

| 85 |

+

images.append(

|

| 86 |

+

group.create_dataset('image{}'.format(i), (length,), dtype=h5py.vlen_dtype(np.dtype('uint8'))))

|

| 87 |

+

sis = group.create_dataset('sis', (length,), dtype=h5py.string_dtype(encoding='utf-8'))

|

| 88 |

+

dii = group.create_dataset('dii', (length,), dtype=h5py.string_dtype(encoding='utf-8'))

|

| 89 |

+

for i, (story_id, story) in enumerate(tqdm(story_dict.items(), leave=True, desc="saveh5")):

|

| 90 |

+

imgs = [Image.open('{}/{}.jpg'.format(args.img_dir, flickr_id)).convert('RGB') for flickr_id in

|

| 91 |

+

story['flickr_id']]

|

| 92 |

+

for j, img in enumerate(imgs):

|

| 93 |

+

img = np.array(img).astype(np.uint8)

|

| 94 |

+

img = cv2.imencode('.png', img)[1].tobytes()

|

| 95 |

+

img = np.frombuffer(img, np.uint8)

|

| 96 |

+

images[j][i] = img

|

| 97 |

+

sis[i] = '|'.join([t.replace('\n', '').replace('\t', '').strip() for t in story['sis']])

|

| 98 |

+

txt_dii = [t.replace('\n', '').replace('\t', '').strip() for t in story['dii']]

|

| 99 |

+

txt_dii = sorted(set(txt_dii), key=txt_dii.index)

|

| 100 |

+

dii[i] = '|'.join(txt_dii)

|

| 101 |

+

f.close()

|

| 102 |

+

|

| 103 |

+

|

| 104 |

+

if __name__ == '__main__':

|

| 105 |

+

parser = argparse.ArgumentParser(description='arguments for vist hdf5 file saving')

|

| 106 |

+

parser.add_argument('--sis_json_dir', type=str, required=True, help='sis json file directory')

|

| 107 |

+

parser.add_argument('--dii_json_dir', type=str, required=True, help='dii json file directory')

|

| 108 |

+

parser.add_argument('--img_dir', type=str, required=True, help='image file directory')

|

| 109 |

+

parser.add_argument('--save_path', type=str, required=True, help='path to save hdf5')

|

| 110 |

+

args = parser.parse_args()

|

| 111 |

+

main(args)

|
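The story-building steps in the script above (group annotations by `story_id`, drop stories that do not have exactly five frames, de-duplicate descriptions while keeping their order) can be sketched without any I/O. This is an illustrative sketch only: the helper names `group_stories`, `keep_complete`, and `dedupe_keep_order` and the toy annotations are assumptions, not code from the PR. `dict.fromkeys` is swapped in as an order-preserving alternative to the script's `sorted(set(x), key=x.index)`.

```python
def group_stories(annotations):
    """Group single-element annotation lists by story_id."""
    stories = {}
    for annot_list in annotations:
        annot = annot_list[0]
        story = stories.setdefault(annot['story_id'], {'flickr_id': [], 'sis': []})
        story['flickr_id'].append(annot['photo_flickr_id'])
        story['sis'].append(annot['original_text'])
    return stories


def keep_complete(stories, frames=5):
    """Keep only stories with exactly `frames` images."""
    return {sid: s for sid, s in stories.items() if len(s['flickr_id']) == frames}


def dedupe_keep_order(items):
    """Remove duplicates while preserving first-occurrence order."""
    return list(dict.fromkeys(items))


# Toy annotations: story 's1' has five frames, story 's2' only two.
annotations = [[{'story_id': 's1', 'photo_flickr_id': str(k), 'original_text': 'frame %d' % k}]
               for k in range(5)]
annotations += [[{'story_id': 's2', 'photo_flickr_id': str(k), 'original_text': 'x'}]
                for k in range(2)]

complete = keep_complete(group_stories(annotations))
print(sorted(complete))
print(dedupe_keep_order(['a dog', 'a cat', 'a dog']))
```

Both de-duplication variants give the same result; `dict.fromkeys` avoids the repeated linear `index` lookups, which matters little for five captions per story.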

data_script/vist_img_download.py

ADDED

|

@@ -0,0 +1,61 @@

|


| 1 |

+

import json

|

| 2 |

+

import requests

|

| 3 |

+

from io import BytesIO

|

| 4 |

+

from PIL import Image

|

| 5 |

+

from tqdm import tqdm

|

| 6 |

+

from multiprocessing import Process

|

| 7 |

+

import os

|

| 8 |

+

import argparse

|

| 9 |

+

|

| 10 |

+

|

| 11 |

+

def download_subprocess(dii, save_dir):

|

| 12 |

+

for image in tqdm(dii):

|

| 13 |

+

key, value = image.popitem()

|

| 14 |

+

try:

|

| 15 |

+

img_data = requests.get(value).content

|

| 16 |

+

img = Image.open(BytesIO(img_data)).convert('RGB')

|

| 17 |

+

h = img.size[0]  # PIL's Image.size is (width, height), so h holds the width here

|

| 18 |

+

w = img.size[1]  # PIL's Image.size is (width, height), so w holds the height here

|

| 19 |

+

if min(h, w) > 512:

|

| 20 |

+

img = img.resize((int(h / (w / 512)), 512) if h > w else (512, int(w / (h / 512))))

|

| 21 |

+

img.save('{}/{}.jpg'.format(save_dir, key))

|

| 22 |

+

except Exception:

|

| 23 |

+

print(key, value)

|

| 24 |

+

|

| 25 |

+

|

| 26 |

+

def main(args):

|

| 27 |

+

train_data = json.load(open(os.path.join(args.json_dir, 'train.description-in-isolation.json')))

|

| 28 |

+

val_data = json.load(open(os.path.join(args.json_dir, 'val.description-in-isolation.json')))

|

| 29 |

+

test_data = json.load(open(os.path.join(args.json_dir, 'test.description-in-isolation.json')))

|

| 30 |

+

dii = []

|

| 31 |

+

for subset in [train_data, val_data, test_data]:

|

| 32 |

+

for image in subset["images"]:

|

| 33 |

+

try:

|

| 34 |

+

dii.append({image['id']: image['url_o']})

|

| 35 |

+

except KeyError:

|

| 36 |

+

dii.append({image['id']: image['url_m']})

|

| 37 |

+

|

| 38 |

+

dii = [image for image in dii if not os.path.exists('{}/{}.jpg'.format(args.img_dir, list(image)[0]))]

|

| 39 |

+

print('total images: {}'.format(len(dii)))

|

| 40 |

+

|

| 41 |

+

def splitlist(inlist, chunksize):

|

| 42 |

+

return [inlist[x:x + chunksize] for x in range(0, len(inlist), chunksize)]

|

| 43 |

+

|

| 44 |

+

dii_splitted = splitlist(dii, max(1, len(dii) // args.num_process))

|

| 45 |

+

process_list = []

|

| 46 |

+

for dii_sub_list in dii_splitted:

|

| 47 |

+

p = Process(target=download_subprocess, args=(dii_sub_list, args.img_dir))

|

| 48 |

+

process_list.append(p)

|

| 49 |

+

p.daemon = True

|

| 50 |

+

p.start()

|

| 51 |

+

for p in process_list:

|

| 52 |

+

p.join()

|

| 53 |

+

|

| 54 |

+

|

| 55 |

+

if __name__ == "__main__":

|

| 56 |

+

parser = argparse.ArgumentParser(description='arguments for vist images downloading')

|

| 57 |

+

parser.add_argument('--json_dir', type=str, required=True, help='dii json file directory')

|

| 58 |

+

parser.add_argument('--img_dir', type=str, required=True, help='images saving directory')

|

| 59 |

+

parser.add_argument('--num_process', type=int, default=32)

|

| 60 |

+

args = parser.parse_args()

|

| 61 |

+

main(args)

|
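Two pieces of arithmetic in the download script above are easy to check in isolation: splitting the work list into per-process chunks, and scaling an image so its shorter side becomes 512 pixels. The sketch below is illustrative only; `split_list` and `shorter_side_to` are hypothetical helpers, and the ceiling-based chunk size is an assumed variant of the script's integer division, chosen so that the chunk size never reaches zero and the number of chunks never exceeds the requested process count.

```python
import math


def split_list(items, num_chunks):
    """Split items into at most num_chunks contiguous chunks."""
    if not items:
        return []
    chunk_size = max(1, math.ceil(len(items) / num_chunks))
    return [items[i:i + chunk_size] for i in range(0, len(items), chunk_size)]


def shorter_side_to(width, height, target=512):
    """Return the (width, height) after scaling the shorter side to target.

    Images whose shorter side is already at or below target are left
    unchanged, matching the `min(h, w) > 512` check in the script.
    """
    if min(width, height) <= target:
        return width, height
    if width > height:
        return int(width / (height / target)), target
    return target, int(height / (width / target))


print(split_list(list(range(10)), 3))
print(shorter_side_to(2048, 1024))
print(shorter_side_to(1024, 2048))
```

Naming the values `width` and `height` in the order that Pillow's `Image.size` returns them keeps the tuple passed to `resize` easy to verify.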

datasets/flintstones.py

ADDED

|

@@ -0,0 +1,93 @@

|


| 1 |

+

import random

|

| 2 |

+

|

| 3 |

+

import cv2

|

| 4 |

+

import h5py

|

| 5 |

+

import numpy as np

|

| 6 |

+

import torch

|

| 7 |

+

from torch.utils.data import Dataset

|

| 8 |

+

from torchvision import transforms

|

| 9 |

+

from transformers import CLIPTokenizer

|

| 10 |

+

|

| 11 |

+

from models.blip_override.blip import init_tokenizer

|

| 12 |

+

|

| 13 |

+

|

| 14 |

+

class StoryDataset(Dataset):

|

| 15 |

+

"""

|

| 16 |

+

A custom dataset class for the Flintstones story dataset (covers the train, val, and test subsets)

|

| 17 |

+

"""

|

| 18 |

+

|

| 19 |

+

def __init__(self, subset, args):

|

| 20 |

+

super(StoryDataset, self).__init__()

|

| 21 |

+

self.args = args

|

| 22 |

+

|

| 23 |

+

self.h5_file = args.get(args.dataset).hdf5_file

|

| 24 |

+

self.subset = subset

|

| 25 |

+

|

| 26 |

+

self.augment = transforms.Compose([

|

| 27 |

+

transforms.ToPILImage(),

|

| 28 |

+

transforms.Resize([512, 512]),

|

| 29 |

+

transforms.ToTensor(),

|

| 30 |

+

transforms.Normalize([0.5], [0.5])

|

| 31 |

+

])

|

| 32 |

+

self.dataset = args.dataset

|

| 33 |

+

self.max_length = args.get(args.dataset).max_length

|

| 34 |

+

self.clip_tokenizer = CLIPTokenizer.from_pretrained('runwayml/stable-diffusion-v1-5', subfolder="tokenizer")

|

| 35 |

+

self.blip_tokenizer = init_tokenizer()

|

| 36 |

+

msg = self.clip_tokenizer.add_tokens(list(args.get(args.dataset).new_tokens))

|

| 37 |

+

print("clip {} new tokens added".format(msg))

|

| 38 |

+

msg = self.blip_tokenizer.add_tokens(list(args.get(args.dataset).new_tokens))

|

| 39 |

+

print("blip {} new tokens added".format(msg))

|

| 40 |

+

|

| 41 |

+

self.blip_image_processor = transforms.Compose([

|

| 42 |

+

transforms.ToPILImage(),

|

| 43 |

+

transforms.Resize([224, 224]),

|

| 44 |

+

transforms.ToTensor(),

|

| 45 |

+

transforms.Normalize([0.48145466, 0.4578275, 0.40821073], [0.26862954, 0.26130258, 0.27577711])

|

| 46 |

+

])

|

| 47 |

+

|

| 48 |

+

def open_h5(self):

|

| 49 |

+

h5 = h5py.File(self.h5_file, "r")

|

| 50 |

+

self.h5 = h5[self.subset]

|

| 51 |

+

|

| 52 |

+

def __getitem__(self, index):

|

| 53 |

+

if not hasattr(self, 'h5'):

|

| 54 |

+

self.open_h5()

|

| 55 |

+

|

| 56 |

+

images = list()

|

| 57 |

+

for i in range(5):

|

| 58 |

+

im = self.h5['image{}'.format(i)][index]

|

| 59 |

+

im = cv2.imdecode(im, cv2.IMREAD_COLOR)

|

| 60 |

+

idx = random.randint(0, 4)

|

| 61 |

+

images.append(im[idx * 128: (idx + 1) * 128])

|

| 62 |

+

|

| 63 |

+

source_images = torch.stack([self.blip_image_processor(im) for im in images])

|

| 64 |

+

images = images[1:] if self.args.task == 'continuation' else images

|

| 65 |

+

images = torch.stack([self.augment(im) for im in images]) \

|

| 66 |

+

if self.subset in ['train', 'val'] else torch.from_numpy(np.array(images)).permute(0, 3, 1, 2)

|

| 67 |

+

|

| 68 |

+

texts = self.h5['text'][index].decode('utf-8').split('|')

|

| 69 |

+

|

| 70 |

+

# tokenize caption using default tokenizer

|

| 71 |

+

tokenized = self.clip_tokenizer(

|

| 72 |

+

texts[1:] if self.args.task == 'continuation' else texts,

|

| 73 |

+

padding="max_length",

|

| 74 |

+

max_length=self.max_length,

|

| 75 |

+

truncation=False,

|

| 76 |

+

return_tensors="pt",

|

| 77 |

+

)

|

| 78 |

+

captions, attention_mask = tokenized['input_ids'], tokenized['attention_mask']

|

| 79 |

+

|

| 80 |

+

tokenized = self.blip_tokenizer(

|

| 81 |

+

texts,

|

| 82 |

+

padding="max_length",

|

| 83 |

+

max_length=self.max_length,

|

| 84 |

+

truncation=False,

|

| 85 |

+

return_tensors="pt",

|

| 86 |

+

)

|

| 87 |

+

source_caption, source_attention_mask = tokenized['input_ids'], tokenized['attention_mask']

|

| 88 |

+

return images, captions, attention_mask, source_images, source_caption, source_attention_mask

|

| 89 |

+

|

| 90 |

+

def __len__(self):

|

| 91 |

+

if not hasattr(self, 'h5'):

|

| 92 |

+

self.open_h5()

|

| 93 |

+

return len(self.h5['text'])

|
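The dataset class above opens its HDF5 file lazily, on the first call to `__getitem__` or `__len__`, rather than in `__init__`. A common reason for this pattern is to let each `DataLoader` worker process open its own file handle; the PR does not state its motivation, so treat that as an assumption. The sketch below shows the pattern with a plain dictionary standing in for the HDF5 file; `LazySubset`, its `opener` argument, and the `open_count` counter are hypothetical names used only for illustration.

```python
class LazySubset:
    """Open the backing store on first access instead of at construction."""

    def __init__(self, opener, subset):
        self._opener = opener      # callable returning the opened store
        self._subset = subset      # e.g. 'train', 'val' or 'test'
        self.open_count = 0        # illustration only: counts real opens

    def _ensure_open(self):
        # Same check as `if not hasattr(self, 'h5')` in the dataset class.
        if not hasattr(self, '_handle'):
            self._handle = self._opener()[self._subset]
            self.open_count += 1

    def __getitem__(self, index):
        self._ensure_open()
        return self._handle['text'][index].split('|')

    def __len__(self):
        self._ensure_open()
        return len(self._handle['text'])


# A dictionary stands in for the HDF5 file in this sketch.
fake_file = {'train': {'text': ['a|b', 'c|d']}}
ds = LazySubset(lambda: fake_file, 'train')
print(ds.open_count, len(ds), ds[1], ds.open_count)
```

With the real file, the `opener` would correspond to the `h5py.File(self.h5_file, "r")` call in `open_h5`.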

datasets/pororo.py

ADDED

|

@@ -0,0 +1,144 @@

|


| 1 |

+

import copy

|

| 2 |

+

import os

|

| 3 |

+

import random

|

| 4 |

+

from PIL import Image

|

| 5 |

+

import cv2

|

| 6 |

+

import h5py

|

| 7 |

+

import numpy as np

|

| 8 |

+

import torch

|

| 9 |

+

from torch.utils.data import Dataset

|

| 10 |

+

from torchvision import transforms

|

| 11 |

+

from transformers import CLIPTokenizer

|

| 12 |

+

|

| 13 |

+

from models.blip_override.blip import init_tokenizer

|

| 14 |

+

|

| 15 |

+

|

| 16 |

+

class StoryDataset(Dataset):

|

| 17 |

+

"""

|

| 18 |

+

A custom dataset class for the Pororo story dataset (covers the train, val, and test subsets)

|

| 19 |

+

"""

|

| 20 |

+

# Constructor of the StoryDataset class

|

| 21 |

+

def __init__(self, subset, args):

|