---
base_model: CompVis/stable-diffusion-v-1-4-original
license: creativeml-openrail-m
library_name: "stable-diffusion"
inference: false
model_creator: CompVis
model_name: stable-diffusion-v-1-4-original
quantized_by: Second State Inc.
tags:
- stable-diffusion
- text-to-image
---

<!-- header start -->
<!-- 200823 -->
<div style="width: auto; margin-left: auto; margin-right: auto">
<img src="https://github.com/LlamaEdge/LlamaEdge/raw/dev/assets/logo.svg" style="width: 100%; min-width: 400px; display: block; margin: auto;">
</div>
<hr style="margin-top: 1.0em; margin-bottom: 1.0em;">
<!-- header end -->

# stable-diffusion-v-1-4-GGUF

## Original Model

[CompVis/stable-diffusion-v-1-4-original](https://huggingface.co/CompVis/stable-diffusion-v-1-4-original)

## Run with LlamaEdge-StableDiffusion

- Version: [v0.2.0](https://github.com/LlamaEdge/sd-api-server/releases/tag/0.2.0)

- Run as LlamaEdge service

  ```bash
  wasmedge --dir .:. sd-api-server.wasm \
    --model-name sd-v1.4 \
    --model stable-diffusion-v1-4-Q8_0.gguf
  ```
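Once the service is running, a client can send it a text prompt. The sketch below is only illustrative: the endpoint path `/v1/images/generations`, port `8080`, and the `model`/`prompt` JSON fields are assumptions based on the OpenAI-style images API and are not documented in this card. The helper `build_request` and the variables `SD_API_URL` and `SEND_REQUEST` are hypothetical names introduced for this example.

```bash
# Hypothetical helper: build the JSON body for an image-generation request.
# The field names ("model", "prompt") are assumed, not taken from this card.
# Note: the prompt is inserted verbatim, so it must not contain double quotes.
build_request() {
  local model="$1" prompt="$2"
  printf '{"model": "%s", "prompt": "%s"}\n' "$model" "$prompt"
}

# Assumed endpoint and port; override SD_API_URL if your server differs.
SD_API_URL="${SD_API_URL:-http://localhost:8080/v1/images/generations}"

# Send the request only when explicitly enabled, so the snippet is safe to source.
if [ "${SEND_REQUEST:-0}" = "1" ]; then
  curl -s -X POST "$SD_API_URL" \
    -H 'Content-Type: application/json' \
    -d "$(build_request sd-v1.4 'A cute baby sea otter')"
fi
```

The `model` value should match the name passed to `--model-name` when starting the server. Set `SEND_REQUEST=1` to actually issue the request.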

## Quantized GGUF Models

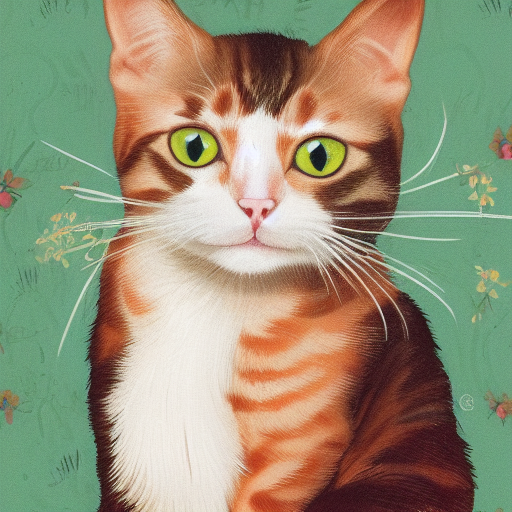

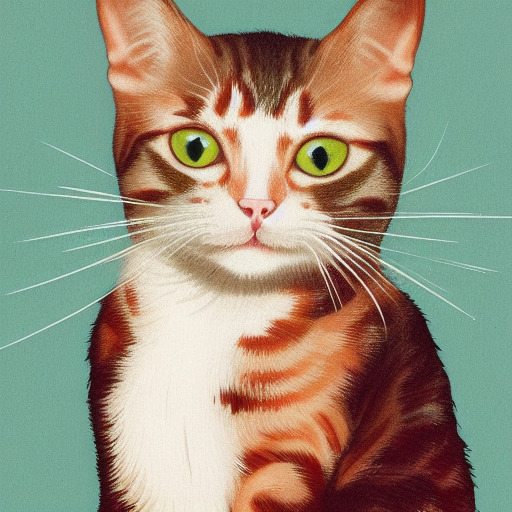

Using formats of different precisions will yield results of varying quality.

| f32 | f16 | q8_0 | q5_0 | q5_1 | q4_0 | q4_1 |
| ---- | ---- | ---- | ---- | ---- | ---- | ---- |
|  |  |  |  |  |  |  |

| Name | Quant method | Bits | Size | Use case |
| ---- | ---- | ---- | ---- | ----- |
| [stable-diffusion-v1-4-Q4_0.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-Q4_0.gguf) | Q4_0 | 4 | 1.57 GB | |
| [stable-diffusion-v1-4-Q4_1.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-Q4_1.gguf) | Q4_1 | 4 | 1.59 GB | |
| [stable-diffusion-v1-4-Q5_0.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-Q5_0.gguf) | Q5_0 | 5 | 1.62 GB | |
| [stable-diffusion-v1-4-Q5_1.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-Q5_1.gguf) | Q5_1 | 5 | 1.64 GB | |
| [stable-diffusion-v1-4-Q8_0.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-Q8_0.gguf) | Q8_0 | 8 | 1.76 GB | |
| [stable-diffusion-v1-4-f16.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-f16.gguf) | f16 | 16 | 2.13 GB | |
| [stable-diffusion-v1-4-f32.gguf](https://huggingface.co/second-state/stable-diffusion-v-1-4-GGUF/blob/main/stable-diffusion-v1-4-f32.gguf) | f32 | 32 | 4.27 GB | |
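To fetch one of the listed files from the command line, the direct-download URL can be built from the quantization label. This is a minimal sketch that assumes the files live in the `second-state/stable-diffusion-v-1-4-GGUF` repository named in this card's title and uses the usual Hugging Face `/resolve/main/` download path. `REPO` and `gguf_url` are hypothetical names introduced for this example.

```bash
# Assumed repository: the one named in this card's title.
REPO="second-state/stable-diffusion-v-1-4-GGUF"

# Print the direct-download URL for a quantization label such as Q8_0 or f16.
gguf_url() {
  local quant="$1"
  printf 'https://huggingface.co/%s/resolve/main/stable-diffusion-v1-4-%s.gguf\n' "$REPO" "$quant"
}

# Example: print the URL of the Q8_0 file used in the run command.
gguf_url Q8_0
```

To download the file, pass the result to `curl`, for example `curl -LO "$(gguf_url Q8_0)"`.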