Update README.md

README.md

CHANGED

|

@@ -9,7 +9,6 @@ base_model:

|

|

| 9 |

- CultriX/SeQwence-14B-EvolMerge

|

| 10 |

- CultriX/Qwen2.5-14B-Wernicke

|

| 11 |

- sometimesanotion/lamarck-14b-prose-model_stock

|

| 12 |

-

- sometimesanotion/lamarck-14b-if-model_stock

|

| 13 |

- sometimesanotion/lamarck-14b-reason-model_stock

|

| 14 |

language:

|

| 15 |

- en

|

|

@@ -19,25 +18,27 @@ language:

|

|

| 19 |

|

| 20 |

### Overview:

|

| 21 |

|

| 22 |

-

Lamarck-14B version 0.3 is the product of a

|

| 23 |

|

| 24 |

-

|

| 25 |

|

| 26 |

-

|

| 27 |

- For refinement on Virtuoso as a base model, DELLA and SLERP include the model_stocks while re-emphasizing selected ancestors.

|

| 28 |

- For integration, a SLERP merge of Virtuoso with the converged branches.

|

| 29 |

- For finalization, a TIES merge.

|

| 30 |

|

| 31 |

|

| 32 |

|

| 33 |

-

While most censorship is unwelcome in Lamarck, and the author believes that adjacent services and not language models themselves are where guardrails are best placed, no effort has been made to de-censor this release of Lamarck. That is reserved for future merges and fine-tuning.

|

| 34 |

|

| 35 |

### Thanks go to:

|

| 36 |

|

| 37 |

- @arcee-ai's team for the bounties of mergekit and the exceptional Virtuoso Small model

|

| 38 |

- @CultriX for the helpful examples of memory-efficient sliced merges and evolutionary merging. Their contribution of tinyevals on version 0.1 of Lamarck did much to validate the hypotheses of the process used here.

|

| 39 |

|

| 40 |

-

###

|

| 41 |

|

| 42 |

**Top influences:** These ancestors are base models and present in the model_stocks, but are heavily re-emphasized in the DELLA and SLERP merges.

|

| 43 |

|

|

@@ -47,7 +48,15 @@ While most censorship is unwelcome in Lamarck, and the author believes that adja

|

|

| 47 |

|

| 48 |

- **[CultriX/Qwen2.5-14B-Wernicke](http://huggingface.co/CultriX/Qwen2.5-14B-Wernicke)** - A top performer for ARC and GPQA, Wernicke is re-emphasized in small but highly ranked portions of the model.

|

| 49 |

|

| 50 |

-

|

| 51 |

|

| 52 |

```yaml

|

| 53 |

name: lamarck-14b-reason-della # This contributes the knowledge and reasoning pool, later to be merged

|

|

|

|

| 9 |

- CultriX/SeQwence-14B-EvolMerge

|

| 10 |

- CultriX/Qwen2.5-14B-Wernicke

|

| 11 |

- sometimesanotion/lamarck-14b-prose-model_stock

|

|

|

|

| 12 |

- sometimesanotion/lamarck-14b-reason-model_stock

|

| 13 |

language:

|

| 14 |

- en

|

|

|

|

| 18 |

|

| 19 |

### Overview:

|

| 20 |

|

| 21 |

+

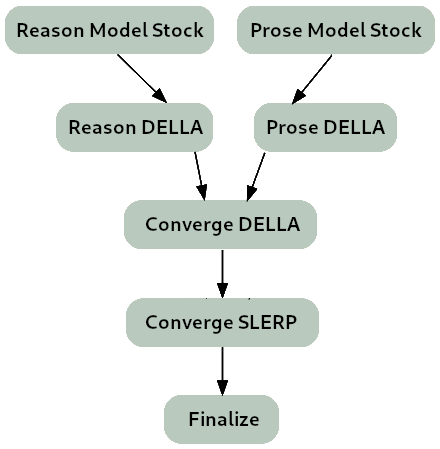

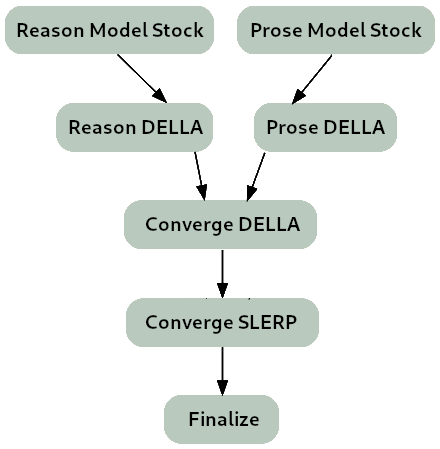

Lamarck-14B version 0.3 is the product of a carefully planned sequence of templated merges. It draws heavily on [arcee-ai/Virtuoso-Small](https://huggingface.co/arcee-ai/Virtuoso-Small) as a diffuse influence on prose and reasoning. Arcee's pioneering use of distillation and its innovative merge techniques create a diverse knowledge pool for its models.

|

| 22 |

|

| 23 |

+

It inherits, and is intended to feed into, an evolutionary merge strategy used for its reasoning-heavy components.

|

| 24 |

|

| 25 |

+

**The merge strategy of Lamarck 0.3 can be summarized as:**

|

| 26 |

+

|

| 27 |

+

- Two model_stocks are the starting point for specialized branches for reasoning and prose quality.

|

| 28 |

- For refinement on Virtuoso as a base model, DELLA and SLERP include the model_stocks while re-emphasizing selected ancestors.

|

| 29 |

- For integration, a SLERP merge of Virtuoso with the converged branches.

|

| 30 |

- For finalization, a TIES merge.
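
As a rough illustration of the SLERP step named above, the sketch below interpolates between two weight vectors along the arc between them rather than along a straight line. It is a minimal example on plain Python lists, not mergekit's implementation; the function name `slerp`, the `eps` tolerance, and the toy vectors are assumptions made for illustration only.

```python
import math


def slerp(t, v0, v1, eps=1e-8):
    """Spherical linear interpolation between two equal-length vectors.

    t = 0 returns v0, t = 1 returns v1. Falls back to ordinary linear
    interpolation when a vector is near zero or the two are nearly
    (anti)parallel, where the spherical formula is numerically unstable.
    """
    lerp = [(1 - t) * a + t * b for a, b in zip(v0, v1)]

    norm0 = math.sqrt(sum(x * x for x in v0))
    norm1 = math.sqrt(sum(x * x for x in v1))
    if norm0 < eps or norm1 < eps:
        return lerp

    # Cosine of the angle between the two vectors, clamped for acos.
    dot = sum(a * b for a, b in zip(v0, v1)) / (norm0 * norm1)
    dot = max(-1.0, min(1.0, dot))
    theta = math.acos(dot)
    sin_theta = math.sin(theta)
    if abs(sin_theta) < eps:
        return lerp

    # Weights follow the arc between the two directions.
    w0 = math.sin((1 - t) * theta) / sin_theta
    w1 = math.sin(t * theta) / sin_theta
    return [w0 * a + w1 * b for a, b in zip(v0, v1)]


# Hypothetical toy "weights" standing in for two models' parameters.
base = [1.0, 0.0]
branch = [0.0, 1.0]
halfway = slerp(0.5, base, branch)
```

In a real merge, the interpolation factor would typically vary by layer or parameter group, which is how a merge can re-emphasize one ancestor in some parts of the model and another elsewhere.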

|

| 31 |

|

| 32 |

|

| 33 |

|

| 34 |

+

**Note on censorship/abliteration:** While most censorship is unwelcome in Lamarck, and the author believes that adjacent services and not language models themselves are where guardrails are best placed, no effort has been made to de-censor this release of Lamarck. That is reserved for future merges and fine-tuning.

|

| 35 |

|

| 36 |

### Thanks go to:

|

| 37 |

|

| 38 |

- @arcee-ai's team for the bounties of mergekit and the exceptional Virtuoso Small model

|

| 39 |

- @CultriX for the helpful examples of memory-efficient sliced merges and evolutionary merging. Their contribution of tinyevals on version 0.1 of Lamarck did much to validate the hypotheses of the process used here.

|

| 40 |

|

| 41 |

+

### Models Merged:

|

| 42 |

|

| 43 |

**Top influences:** These ancestors are base models and present in the model_stocks, but are heavily re-emphasized in the DELLA and SLERP merges.

|

| 44 |

|

|

|

|

| 48 |

|

| 49 |

- **[CultriX/Qwen2.5-14B-Wernicke](http://huggingface.co/CultriX/Qwen2.5-14B-Wernicke)** - A top performer for ARC and GPQA, Wernicke is re-emphasized in small but highly ranked portions of the model.

|

| 50 |

|

| 51 |

+

**Secondary influences:** Two model_stock merges, specialized for specific aspects of performance, are used to mildly influence a large range of the model.

|

| 52 |

+

|

| 53 |

+

- **[sometimesanotion/lamarck-14b-reason-model_stock](https://huggingface.co/sometimesanotion/lamarck-14b-reason-model_stock)**

|

| 54 |

+

|

| 55 |

+

- **[sometimesanotion/lamarck-14b-prose-model_stock](https://huggingface.co/sometimesanotion/lamarck-14b-prose-model_stock)**

|

| 56 |

+

|

| 57 |

+

### Configuration:

|

| 58 |

+

|

| 59 |

+

The following YAML configurations were used to produce this model:

|

| 60 |

|

| 61 |

```yaml

|

| 62 |

name: lamarck-14b-reason-della # This contributes the knowledge and reasoning pool, later to be merged

|