Spaces:

Runtime error

A newer version of the Gradio SDK is available:

5.13.0

title: Lesion-Cells DET

emoji: 🤗

colorFrom: indigo

colorTo: indigo

sdk: gradio

sdk_version: 4.2.0

app_file: app.py

pinned: false

license: mit

Check out the configuration reference at https://huggingface.co/docs/hub/spaces-config-reference

Description

Description

Lesion-Cells DET stands for Multi-granularity Lesion Cells Detection.

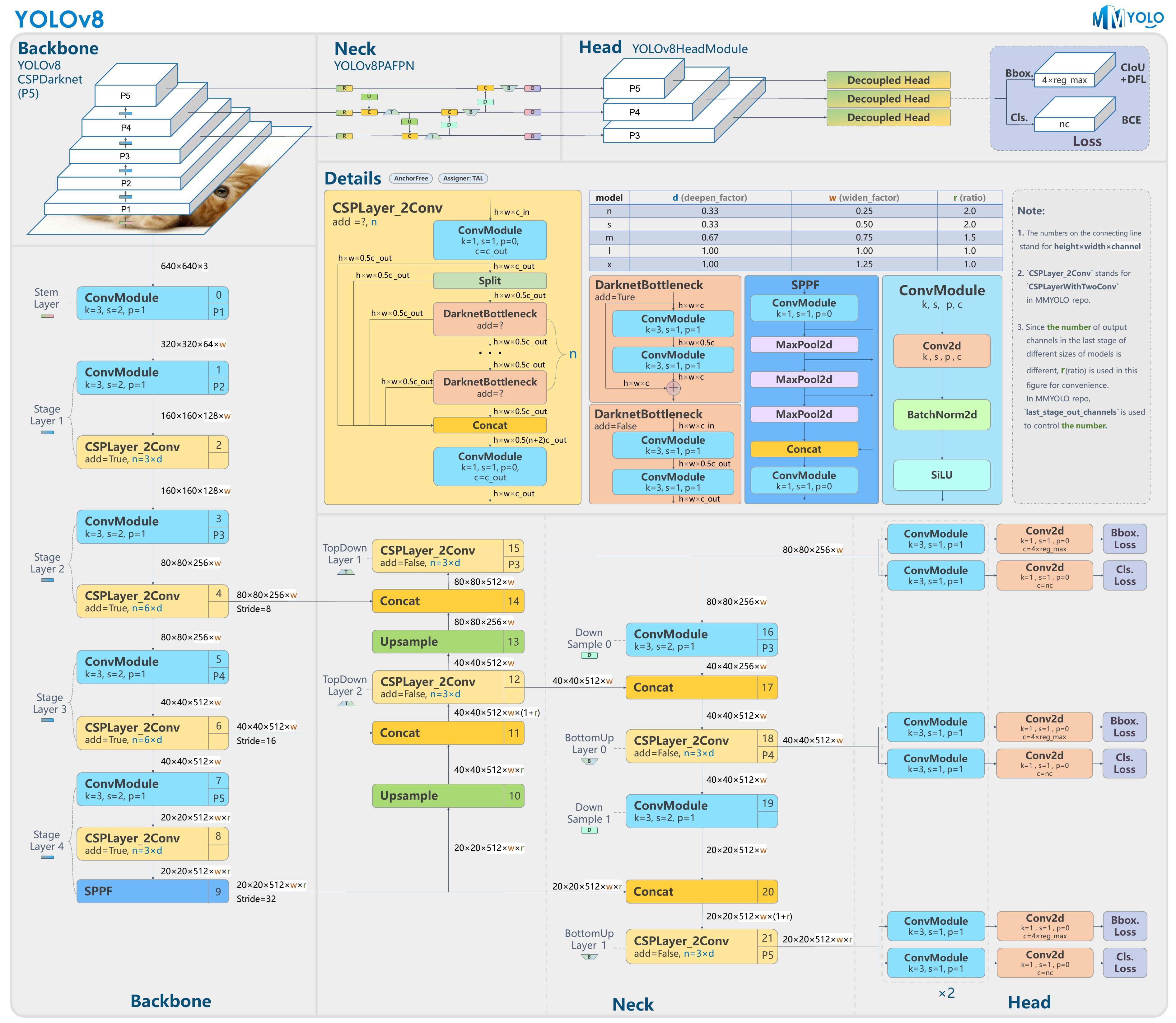

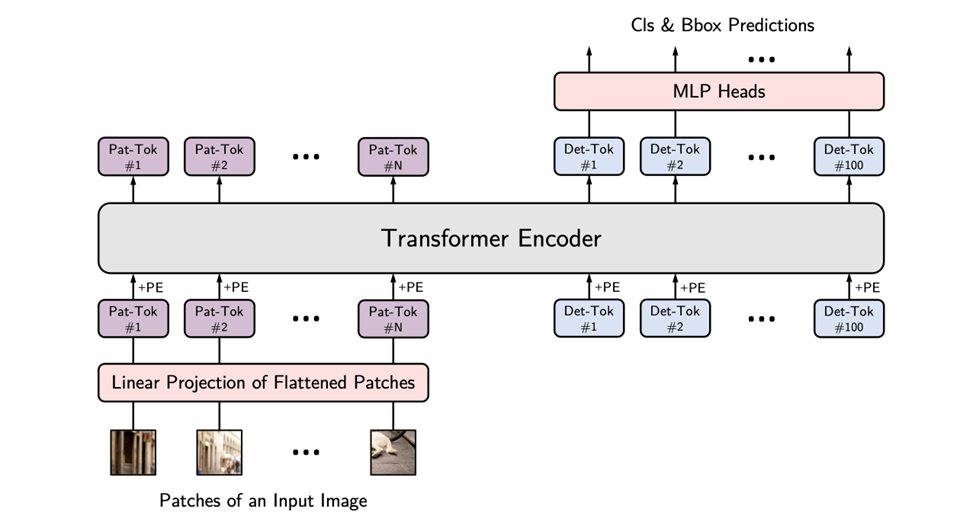

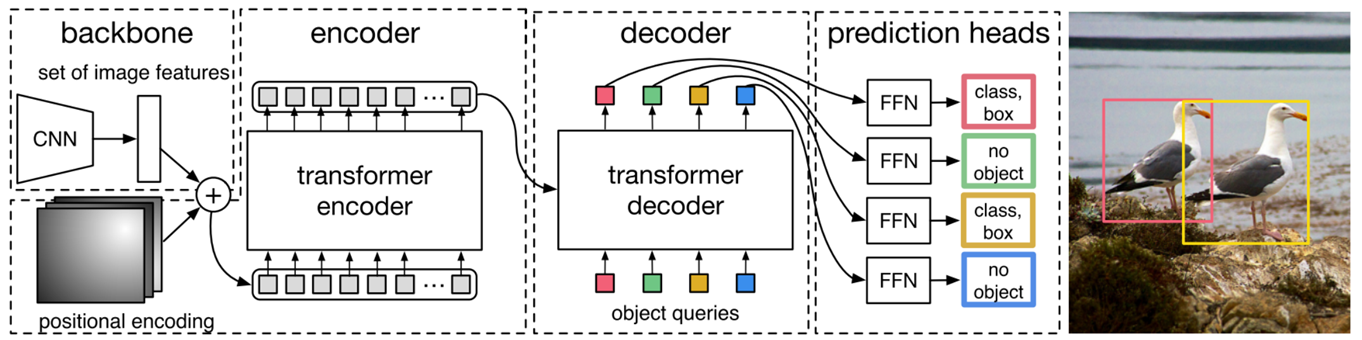

The projects employs both CNN-based and Transformer-based neural networks for Object Detection.

The system excels at detecting 7 types of cells with varying granularity in images. Additionally, it provides statistical information on the relative sizes and lesion degree distribution ratios of the identified cells.

Acknowledgements

Acknowledgements

I would like to express my sincere gratitude to Professor Lio for his invaluable guidance in Office Hour and supports throughout the development of this project. Professor's expertise and insightful feedback played a crucial role in shaping the direction of the project.

Demonstration

Demonstration

ToDo

ToDo

-

Change the large weights files with Google Drive sharing link -

Add Professor Lio's brief introduction -

Add a .gif demonstration instead of a static image - deploy the demo on HuggingFace

- Train models that have better performance

- Upload part of the datasets, so that everyone can train their own customized models

Quick Start

Quick Start

Installation

I strongly recommend you to use conda. Both Anaconda and miniconda is OK!

- create a virtual conda environment for the demo 😆

$ conda create -n demo python==3.8

$ conda activate demo

- install essential requirements by run the following command in the CLI 😊

$ git clone https://github.com/Tsumugii24/lesion-cells-det

$ cd lesion-cells-det

$ pip install -r requirements.txt

download the weights files from Google Drive that have already been trained properly

here is the link, from where you can download your preferred model and then test its performance 🤗

https://drive.google.com/drive/folders/1-H4nN8viLdH6nniuiGO-_wJDENDf-BkL?usp=sharing

- remember to put the weights files under the root of the project 😉

Run

$ python gradio_demo.py

Now, if everything is OK, your default browser will open automatically, and Gradio is running on local URL: http://127.0.0.1:7860

Datasets

The original datasets origins from Kaggle, iFLYTEK AI algorithm competition and other open source sources.

Anyway, we annotated an object detection dataset of more than 2000 cells for a total of 7 categories.

| class number | class name |

|---|---|

| 0 | normal_columnar |

| 1 | normal_intermediate |

| 2 | normal_superficiel |

| 3 | carcinoma_in_situ |

| 4 | light_dysplastic |

| 5 | moderate_dysplastic |

| 6 | severe_dysplastic |

We decided to share about 800 of them, which should be an adequate number for further test and study.

Train custom models

You can train your own custom model as long as it can work properly.

Training

Training

example weights

Example models of the project are trained with different methods, ranging from Convolutional Neutral Network to Vision Transformer.

| Model Name | Training Device | Open Source Repository for Reference | Average AP |

|---|---|---|---|

| yolov5_based | NVIDIA GeForce RTX 4090, 24563.5MB | https://github.com/ultralytics/yolov5.git | 0.721 |

| yolov8_based | NVIDIA GeForce RTX 4090, 24563.5MB | https://github.com/ultralytics/ultralytics.git | 0.810 |

| vit_based | NVIDIA GeForce RTX 4090, 24563.5MB | https://github.com/hustvl/YOLOS.git | 0.834 |

| detr_based | NVIDIA GeForce RTX 4090, 24563.5MB | https://github.com/lyuwenyu/RT-DETR.git | 0.859 |

References

References

Jocher, G., Chaurasia, A., & Qiu, J. (2023). YOLO by Ultralytics (Version 8.0.0) [Computer software]. https://github.com/ultralytics/ultralytics

Jocher, G. (2020). YOLOv5 by Ultralytics (Version 7.0) [Computer software]. https://doi.org/10.5281/zenodo.3908559

[GitHub - hustvl/YOLOS: NeurIPS 2021] You Only Look at One Sequence

[GitHub - ViTAE-Transformer/ViTDet: Unofficial implementation for ECCV'22] "Exploring Plain Vision Transformer Backbones for Object Detection"

Touvron, H., Cord, M., Douze, M., Massa, F., Sablayrolles, A., & J'egou, H. (2020). Training data-efficient image transformers & distillation through attention. International Conference on Machine Learning.

Fang, Y., Liao, B., Wang, X., Fang, J., Qi, J., Wu, R., Niu, J., & Liu, W. (2021). You Only Look at One Sequence: Rethinking Transformer in Vision through Object Detection. Neural Information Processing Systems.

Lv, W., Xu, S., Zhao, Y., Wang, G., Wei, J., Cui, C., Du, Y., Dang, Q., & Liu, Y. (2023). DETRs Beat YOLOs on Real-time Object Detection. ArXiv, abs/2304.08069.

GitHub - facebookresearch/detr: End-to-End Object Detection with Transformers

PaddleDetection/configs/rtdetr at develop · PaddlePaddle/PaddleDetection · GitHub

J. Hu, L. Shen and G. Sun, "Squeeze-and-Excitation Networks," 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, 2018, pp. 7132-7141, doi: 10.1109/CVPR.2018.00745.

Carion, N., Massa, F., Synnaeve, G., Usunier, N., Kirillov, A., & Zagoruyko, S. (2020). End-to-End Object Detection with Transformers. ArXiv, abs/2005.12872.

Beal, J., Kim, E., Tzeng, E., Park, D., Zhai, A., & Kislyuk, D. (2020). Toward Transformer-Based Object Detection. ArXiv, abs/2012.09958.

Liu, Z., Lin, Y., Cao, Y., Hu, H., Wei, Y., Zhang, Z., Lin, S., & Guo, B. (2021). Swin Transformer: Hierarchical Vision Transformer using Shifted Windows. 2021 IEEE/CVF International Conference on Computer Vision (ICCV), 9992-10002.

Zong, Z., Song, G., & Liu, Y. (2022). DETRs with Collaborative Hybrid Assignments Training. ArXiv, abs/2211.12860.

Contact

Contact

Feel free to contact me through GitHub issues or directly send me a mail if you have any questions about the project. 🐼

My Gmail Address 👉 jsf002016@gmail.com