title: catvton-flux

emoji: 🖥️

colorFrom: yellow

colorTo: pink

sdk: gradio

sdk_version: 5.0.1

app_file: app.py

pinned: false

catvton-flux

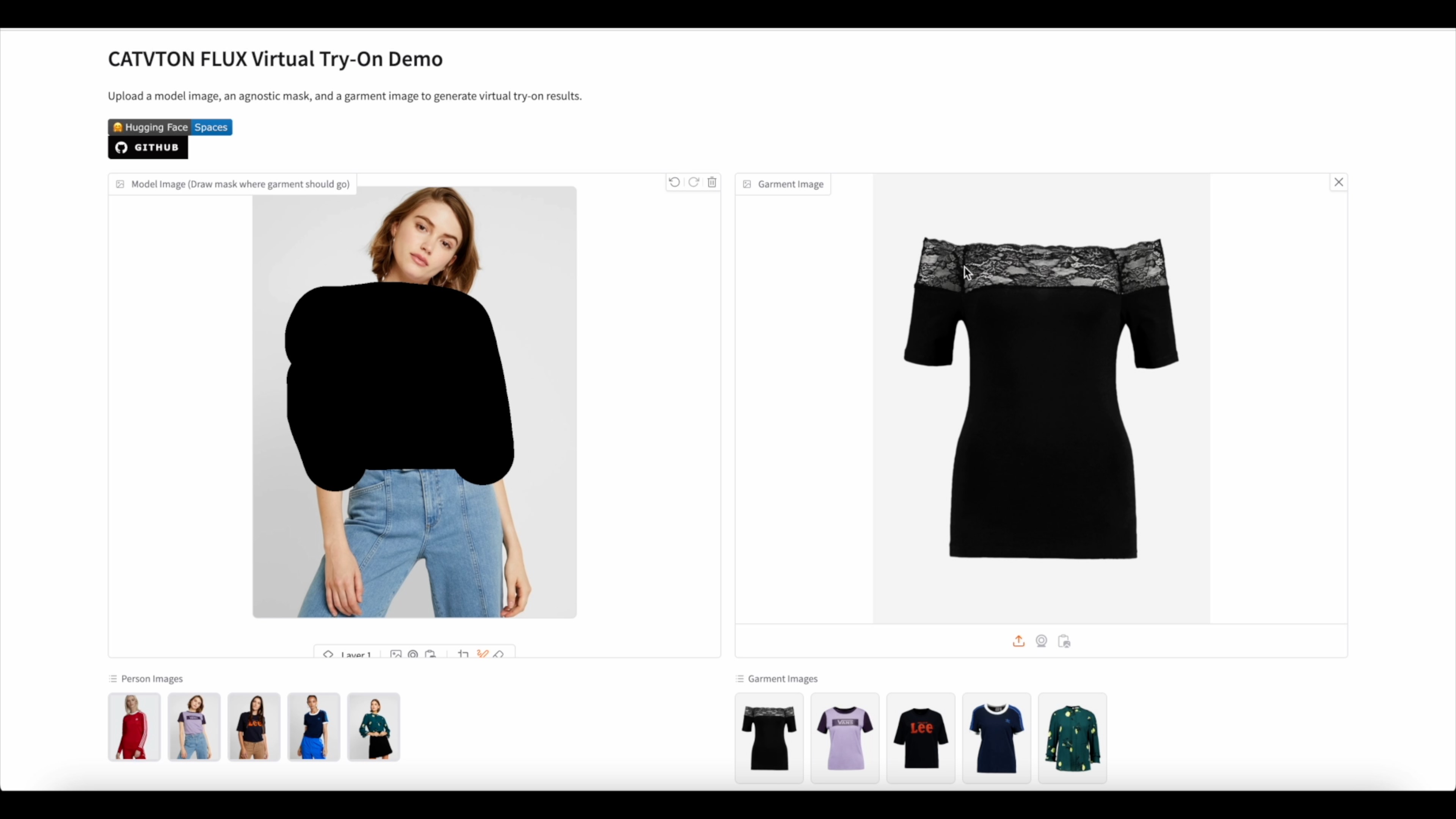

A state-of-the-art virtual try-on solution that combines CatVTON (CatVTON: Concatenation Is All You Need for Virtual Try-On with Diffusion Models) with the Flux Fill inpainting model for realistic and accurate clothing transfer. The prompt engineering is also inspired by In-Context LoRA.

Try it now in the browser: CATVTON-FLUX-TRY-ON

Update

Latest Achievement

(2024/12/6):

- Released new weights for try-off. The model, cat-tryoff-flux, extracts and reconstructs the front view of a clothing item from an image of a person wearing it. Showcase examples are here.

- Try-off Hugging Face: 🤗 CAT-TRYOFF-FLUX

(2024/12/1):

(2024/11/26):

- Updated the weights. (Still trained on the VITON-HD dataset only.)

- Reduced the size of the fine-tuning weights (46GB -> 23GB).

- The weights perform better on small garment details and text.

- Added Hugging Face ZeroGPU support. You can now run CATVTON-FLUX-TRY-ON on Hugging Face Spaces here.

(2024/11/25):

- Released LoRA weights. The LoRA weights achieved FID: 6.0675811767578125 on the VITON-HD dataset. Test configuration: scale 30, step 30.

- Revised the gradio demo. Added Hugging Face Spaces support.

- Cleaned up requirements.txt.

(2024/11/24):

- Released the FID score and the gradio demo.

- CatVton-Flux-Alpha achieved SOTA performance with FID: 5.593255043029785 on the VITON-HD dataset. Test configuration: scale 30, step 30. My VITON-HD test inference results are available here.
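For readers unfamiliar with the metric behind the scores above: FID (Fréchet Inception Distance) compares the statistics of Inception features extracted from real and generated images, and lower is better. The standard definition is given below. The symbols are conventional notation rather than anything defined in this repository, and the exact evaluation script behind the reported numbers is not specified here.

```latex
\mathrm{FID} = \lVert \mu_r - \mu_g \rVert_2^2
  + \operatorname{Tr}\!\left( \Sigma_r + \Sigma_g - 2\,(\Sigma_r \Sigma_g)^{1/2} \right)
```

Here $\mu_r, \Sigma_r$ are the mean and covariance of the features of the real images, and $\mu_g, \Sigma_g$ are those of the generated images.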

Showcase

Try-on examples

Try-off examples

Model Weights

Tryon

Fine-tuning weights in Hugging Face: 🤗 catvton-flux-alpha

LoRA weights in Hugging Face: 🤗 catvton-flux-lora-alpha

Tryoff

Fine-tuning weights in Hugging Face: 🤗 cat-tryoff-flux

Dataset

The model weights are trained on the VITON-HD dataset.

Prerequisites

Make sure you run the code on a GPU with at least 40GB of VRAM. (I run all my experiments on an 80GB GPU; less VRAM will cause OOM errors. Support for lower VRAM is planned.)

conda create -n flux python=3.10

conda activate flux

pip install -r requirements.txt

huggingface-cli login
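Before launching inference, it can help to check whether the GPU meets the 40GB requirement stated above. The following is a minimal sketch, not part of this repository: the function names are hypothetical, and reading "40GB" as 40 × 10^9 bytes is an assumption. It uses `torch.cuda.get_device_properties` when PyTorch and a CUDA device are present, and reports nothing otherwise.

```python
"""Pre-flight VRAM check (illustrative sketch; helper names are hypothetical)."""

GB = 10 ** 9          # assumption: "GB" in the README means decimal gigabytes
REQUIRED_GB = 40      # threshold taken from the Prerequisites section


def has_enough_vram(total_bytes: int, required_gb: float = REQUIRED_GB) -> bool:
    """Return True if a device with `total_bytes` of memory meets the threshold."""
    return total_bytes >= required_gb * GB


def detect_total_vram_bytes(device_index: int = 0):
    """Return the total memory of a CUDA device in bytes, or None if unavailable."""
    try:
        import torch  # imported lazily so the check still runs without PyTorch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_properties(device_index).total_memory


if __name__ == "__main__":
    total = detect_total_vram_bytes()
    if total is None:
        print("No CUDA device detected (or torch is not installed).")
    elif has_enough_vram(total):
        print(f"OK: {total / GB:.1f} GB of VRAM detected.")
    else:
        print(f"Warning: only {total / GB:.1f} GB of VRAM detected; expect OOM errors.")
```

Total memory is only an upper bound: other processes on the same GPU reduce what is actually free.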

Usage

Tryoff

Run the following command to reconstruct the front view of the garment from an image of a person wearing it:

python tryoff_inference.py \
  --image ./example/person/00069_00.jpg \
  --mask ./example/person/00069_00_mask.png \
  --seed 41 \
  --output_tryon test_original.png \
  --output_garment restored_garment6.png \
  --steps 30
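Because the result depends on `--seed`, it can be useful to run the same input with several seeds and compare the restored garments. The sketch below only builds the command lines, using the flags shown in the command above; the helper names and the output file naming are hypothetical, and the script is assumed to be run from the repository root.

```python
import shlex


def build_tryoff_command(image, mask, seed, output_tryon, output_garment, steps=30):
    """Build the argument list for tryoff_inference.py using the README's flags."""
    return [
        "python", "tryoff_inference.py",
        "--image", str(image),
        "--mask", str(mask),
        "--seed", str(seed),
        "--output_tryon", str(output_tryon),
        "--output_garment", str(output_garment),
        "--steps", str(steps),
    ]


def seed_sweep(image, mask, seeds, steps=30):
    """Return one command per seed, with seed-specific output names (hypothetical naming)."""
    return [
        build_tryoff_command(
            image, mask, seed,
            output_tryon=f"tryon_seed{seed}.png",
            output_garment=f"garment_seed{seed}.png",
            steps=steps,
        )
        for seed in seeds
    ]


if __name__ == "__main__":
    commands = seed_sweep(
        "./example/person/00069_00.jpg",
        "./example/person/00069_00_mask.png",
        seeds=[41, 42, 43],
    )
    for cmd in commands:
        # Print a shell-safe command line; to execute instead, use
        # subprocess.run(cmd, check=True) from the repository root.
        print(shlex.join(cmd))
```

Each invocation starts a new process, so the model is presumably reloaded for every seed; for large sweeps that cost may dominate.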

Tryon

Run the following command to try on an image:

LoRA version:

python tryon_inference_lora.py \
  --image ./example/person/00008_00.jpg \
  --mask ./example/person/00008_00_mask.png \
  --garment ./example/garment/00034_00.jpg \
  --seed 4096 \
  --output_tryon test_lora.png \
  --steps 30

Fine-tuning version:

python tryon_inference.py \
  --image ./example/person/00008_00.jpg \
  --mask ./example/person/00008_00_mask.png \
  --garment ./example/garment/00034_00.jpg \
  --seed 42 \
  --output_tryon test.png \
  --steps 30
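To try several garments on several people, the commands above can be generated in a loop. This is a rough sketch under stated assumptions: it assumes every person image `NAME.jpg` has its mask next to it as `NAME_mask.png` (the pattern used by the example files), the helper names and the `outputs` directory are hypothetical, and the output directory is assumed to exist already.

```python
from pathlib import Path


def mask_path_for(image_path):
    """Derive the mask path from a person image, assuming the NAME_mask.png pattern."""
    p = Path(image_path)
    return p.with_name(f"{p.stem}_mask.png")


def build_tryon_command(image, garment, output, seed=42, steps=30, use_lora=False):
    """Build the argument list for the try-on scripts using the README's flags."""
    script = "tryon_inference_lora.py" if use_lora else "tryon_inference.py"
    return [
        "python", script,
        "--image", str(image),
        "--mask", str(mask_path_for(image)),
        "--garment", str(garment),
        "--seed", str(seed),
        "--output_tryon", str(output),
        "--steps", str(steps),
    ]


def batch_commands(pairs, out_dir="outputs", use_lora=False):
    """Return one command per (person, garment) pair with a descriptive output name."""
    out_dir = Path(out_dir)
    commands = []
    for person, garment in pairs:
        name = f"{Path(person).stem}__{Path(garment).stem}.png"
        commands.append(
            build_tryon_command(person, garment, out_dir / name, use_lora=use_lora)
        )
    return commands


if __name__ == "__main__":
    pairs = [("./example/person/00008_00.jpg", "./example/garment/00034_00.jpg")]
    for cmd in batch_commands(pairs, use_lora=True):
        # Print only; run with subprocess.run(cmd, check=True) from the repository root.
        print(" ".join(cmd))
```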

Run the following command to start a gradio demo with LoRA weights:

python app.py

Run the following command to start a gradio demo without LoRA weights:

python app_no_lora.py

Gradio demo:

- Try-on Hugging Face: 🤗 CATVTON-FLUX-TRY-ON

- Try-off Hugging Face: 🤗 CAT-TRYOFF-FLUX

TODO:

- Release the FID score

- Add gradio demo

- Release updated weights with better performance

- Train a smaller model

- Support comfyui

- Release tryoff weights

Citation

@misc{chong2024catvtonconcatenationneedvirtual,
  title={CatVTON: Concatenation Is All You Need for Virtual Try-On with Diffusion Models},
  author={Zheng Chong and Xiao Dong and Haoxiang Li and Shiyue Zhang and Wenqing Zhang and Xujie Zhang and Hanqing Zhao and Xiaodan Liang},
  year={2024},
  eprint={2407.15886},
  archivePrefix={arXiv},
  primaryClass={cs.CV},
  url={https://arxiv.org/abs/2407.15886},
}

@article{lhhuang2024iclora,
  title={In-Context LoRA for Diffusion Transformers},
  author={Huang, Lianghua and Wang, Wei and Wu, Zhi-Fan and Shi, Yupeng and Dou, Huanzhang and Liang, Chen and Feng, Yutong and Liu, Yu and Zhou, Jingren},
  journal={arXiv preprint arXiv:2410.23775},
  year={2024}
}

Thanks to Jim for insisting on spatial concatenation. Thanks to dingkang, MoonBlvd, and Stevada for the helpful discussions.

License

- The code is licensed under the MIT License.

- The model weights have the same license as Flux.1 Fill and VITON-HD.