---
base_model:
- tiiuae/falcon-11B
library_name: transformers
tags:
- mergekit
- merge
- lazymergekit
- tiiuae/falcon-11B
license: apache-2.0
language:
- es
- fr
- de
- 'no'
- sv
- da
- nl
- pt
- pl
- ro
- it
- cs
---

## Why prune? |

|

|

|

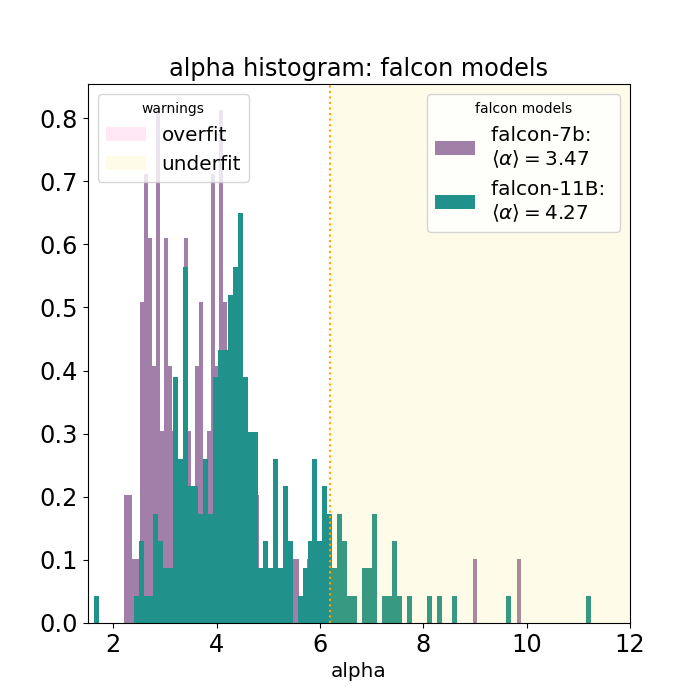

Even though [Falcon-11B](https://huggingface.co/tiiuae/falcon-11B) is trained on 5T tokens, it is still undertrained, as can be seen by this graph: |

|

|

|

This is why the choice is made to prune 50% of the layers. |

|

Note that \~1B of continued pre-training (\~1M rows of 1k tokens) is still required to restore the perplexity of this model in the desired language. |

|

I'm planning on doing that for certain languages when fineweb-edu-{specific_language} will be available, depending on how much compute will be available. |

|

|

|

# sliced

This is a merge of pre-trained language models created using [mergekit](https://github.com/cg123/mergekit).

## Merge Details

### Merge Method

This model was pruned using the passthrough merge method.

### Models Merged

The following models were included in the merge:

* [tiiuae/falcon-11B](https://huggingface.co/tiiuae/falcon-11B)

### Configuration

The following YAML configuration was used to produce this model:

```yaml
slices:
  - sources:
    - model: tiiuae/falcon-11B
      layer_range: [0, 24]
  - sources:
    - model: tiiuae/falcon-11B
      layer_range: [55, 59]
merge_method: passthrough
dtype: bfloat16
```
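
As a rough sanity check on the configuration above, the retained layers can be counted with a few lines of Python. This is only a sketch under two assumptions that this card does not state: that mergekit treats `layer_range` as a half-open interval `[start, end)`, and that the base model has 60 decoder layers. The helper name `kept_layers` is made up for illustration.

```python
def kept_layers(slices):
    """Expand half-open [start, end) layer ranges into a flat list of layer indices."""
    layers = []
    for start, end in slices:
        layers.extend(range(start, end))
    return layers


# Layer ranges copied from the YAML configuration.
SLICES = [(0, 24), (55, 59)]

# Assumption: the base model has 60 decoder layers (not stated in this card).
BASE_NUM_LAYERS = 60

layers = kept_layers(SLICES)
kept_fraction = len(layers) / BASE_NUM_LAYERS
print(f"kept {len(layers)} of {BASE_NUM_LAYERS} layers ({kept_fraction:.1%})")
```

Under those assumptions the two slices keep 28 layers, which is a little under half of the base model.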

[PruneMe](https://github.com/arcee-ai/PruneMe) was used to analyze layer similarity on the wikimedia/wikipedia subsets of 11 languages, with 2000 samples per language. The layer ranges to prune were chosen from the average of the per-language analyses, to maintain performance while reducing model size.
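
The idea behind a layer-similarity analysis can be illustrated with a small, dependency-free sketch. It is not PruneMe's code: the angular-distance measure, the function names, and the toy vectors are all assumptions chosen for illustration. The sketch compares the representation entering a block of layers with the representation leaving it, and picks the block where the two are most similar, since such a block changes the hidden state the least.

```python
import math


def angular_distance(u, v):
    """Angular distance in [0, 1]: arccos of the cosine similarity, divided by pi."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    cos = max(-1.0, min(1.0, dot / norm))  # clamp against rounding error
    return math.acos(cos) / math.pi


def most_redundant_block(hidden_states, block_size):
    """Return (start, distance) of the block whose input and output differ least.

    hidden_states[i] is the representation entering layer i; the final
    entry is the output of the last layer.
    """
    best_start, best_dist = None, float("inf")
    for start in range(len(hidden_states) - block_size):
        dist = angular_distance(hidden_states[start], hidden_states[start + block_size])
        if dist < best_dist:
            best_start, best_dist = start, dist
    return best_start, best_dist


# Toy hidden states for a hypothetical 4-layer model (5 entries: 4 inputs + final output).
states = [
    [1.0, 0.0],
    [0.0, 1.0],
    [0.1, 1.0],
    [0.2, 1.0],
    [1.0, 1.0],
]

start, dist = most_redundant_block(states, block_size=2)
print(f"most redundant block starts at layer {start} (distance {dist:.3f})")
```

In a real analysis the hidden states would come from running the model on text samples, and the per-block distances would be averaged over samples and languages before the minimum is taken.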

```python
from transformers import AutoTokenizer
import transformers
import torch

model = "ssmits/Falcon2-5.5B-multilingual"

# Load the tokenizer and build a text-generation pipeline in bfloat16.
tokenizer = AutoTokenizer.from_pretrained(model)
pipeline = transformers.pipeline(
    "text-generation",
    model=model,
    tokenizer=tokenizer,
    torch_dtype=torch.bfloat16,
)

# Sample one completion with top-k sampling; max_length counts the prompt tokens too.
sequences = pipeline(
    "Can you explain the concepts of Quantum Computing?",
    max_length=200,
    do_sample=True,
    top_k=10,
    num_return_sequences=1,
    eos_token_id=tokenizer.eos_token_id,
)
for seq in sequences:
    print(f"Result: {seq['generated_text']}")
```

💥 **Falcon LLMs require PyTorch 2.0 for use with `transformers`!**

For fast inference with Falcon, check out [Text Generation Inference](https://github.com/huggingface/text-generation-inference)! Read more in this [blog post](https://huggingface.co/blog/falcon).

## Direct Use

Research on large language models; as a foundation for further specialization and finetuning for specific use cases (e.g., summarization, text generation, chatbots).

## Out-of-Scope Use

Production use without adequate assessment of risks and mitigation; any use cases which may be considered irresponsible or harmful.

## Bias, Risks, and Limitations

Falcon2-5.5B is trained mostly on English, but also on German, Spanish, French, Italian, Portuguese, Polish, Dutch, Romanian, Czech, and Swedish. It will not generalize appropriately to other languages. Furthermore, as it is trained on large-scale corpora representative of the web, it will carry the stereotypes and biases commonly encountered online.

## Recommendations

We recommend that users of Falcon2-5.5B consider finetuning it for their specific tasks of interest, and that guardrails and appropriate precautions be taken for any production use.