SauerkrautLM Mixtral 8X7B Instruct - AWQ

- Model creator: VAGO solutions

- Original model: SauerkrautLM Mixtral 8X7B Instruct

Description

This repo contains AWQ model files for VAGO solutions's SauerkrautLM Mixtral 8X7B Instruct.

MIXTRAL AWQ

This is a Mixtral AWQ model. It uses a slightly better 4-bit quantisation with a group size of 32, compared to TheBloke's AWQ quant with a group size of 128.

For AutoAWQ inference, please install AutoAWQ 0.1.8 or later.

Support via Transformers is also available, but currently requires installing Transformers from GitHub: pip3 install git+https://github.com/huggingface/transformers.git

vLLM: version 0.2.6 is confirmed to support Mixtral AWQs.

TGI: I tested version 1.3.3 and it loaded the model fine, but I was not able to get any output back. Further testing/debug is required. (Let me know if you get it working!)
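The minimum versions listed above can be checked before loading the model. The following is a minimal sketch and not part of the original card: the helper names `version_tuple` and `check_minimums` are hypothetical, the distribution names `autoawq` and `vllm` are assumed to match what pip installed, and pre-release suffixes are simply ignored when comparing.

```python
import re
from importlib import metadata

# Minimum versions taken from the notes above (distribution names are assumed).
MINIMUMS = {"autoawq": "0.1.8", "vllm": "0.2.6"}


def version_tuple(version: str) -> tuple:
    """Turn '0.1.8+cu118' or '0.2.6.post1' into a comparable tuple like (0, 1, 8)."""
    match = re.match(r"\d+(?:\.\d+)*", version)
    if match is None:
        raise ValueError(f"unrecognised version string: {version!r}")
    return tuple(int(part) for part in match.group(0).split("."))


def check_minimums(minimums: dict) -> dict:
    """Map each package to True (new enough), False (too old) or None (not installed)."""
    status = {}
    for name, minimum in minimums.items():
        try:
            installed = metadata.version(name)
        except metadata.PackageNotFoundError:
            status[name] = None
            continue
        status[name] = version_tuple(installed) >= version_tuple(minimum)
    return status


if __name__ == "__main__":
    labels = {True: "OK", False: "too old", None: "not installed"}
    for package, ok in check_minimums(MINIMUMS).items():
        print(package, labels[ok])
```

Only the backends you actually plan to use need to report "OK".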

About AWQ

AWQ is an efficient, accurate and fast low-bit weight quantization method, currently supporting 4-bit quantization. Compared to GPTQ, it offers faster Transformers-based inference, with quality equivalent to or better than the most commonly used GPTQ settings.
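To make the "group size" setting more concrete, here is a rough sketch of plain group-wise 4-bit round-to-nearest quantization. It is deliberately not AWQ itself: AWQ additionally rescales weights using activation statistics before quantizing, and that step is omitted here. All function names are hypothetical and the weights are made-up numbers.

```python
def quantize_group(weights, bits=4):
    """Asymmetric round-to-nearest quantization of one group of weights."""
    levels = (1 << bits) - 1              # 15 integer steps for 4-bit
    lo, hi = min(weights), max(weights)
    scale = (hi - lo) / levels or 1.0     # fall back to 1.0 for constant groups
    codes = [round((w - lo) / scale) for w in weights]
    return codes, scale, lo


def quantize(weights, group_size=32, bits=4):
    """Split the weights into groups; each group gets its own scale and offset."""
    return [
        quantize_group(weights[start:start + group_size], bits)
        for start in range(0, len(weights), group_size)
    ]


def dequantize(groups):
    """Rebuild approximate weights from (codes, scale, offset) triples."""
    out = []
    for codes, scale, lo in groups:
        out.extend(lo + c * scale for c in codes)
    return out


if __name__ == "__main__":
    # 128 small weights plus one outlier that stretches the range of its group.
    weights = [i / 100 for i in range(128)]
    weights[5] = 10.0
    for gs in (32, 128):
        restored = dequantize(quantize(weights, group_size=gs))
        error = sum(abs(a - b) for a, b in zip(weights, restored)) / len(weights)
        print(f"group size {gs}: mean absolute error {error:.4f}")
```

With a smaller group size, an outlier only degrades the precision of its own group, which is the intuition behind preferring 32 over 128 when file size allows.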

AWQ models are currently supported on Linux and Windows, with NVIDIA GPUs only. macOS users: please use GGUF models instead.

AWQ models are supported by (note that not all of these may support Mixtral models yet - see above):

- Text Generation Webui - using Loader: AutoAWQ

- vLLM - version 0.2.2 or later for support for all model types.

- Hugging Face Text Generation Inference (TGI)

- Transformers version 4.35.0 and later, from any code or client that supports Transformers

- AutoAWQ - for use from Python code

Prompt template: Mistral

[INST] {prompt} [/INST]

Provided files, and AWQ parameters

I currently release a single 4-bit model with group size 32 (see the table below). The addition of GEMV kernel models is being actively considered.

Models are released as sharded safetensors files.

| Branch | Bits | Group size (GS) | AWQ dataset | Sequence length | Size |
|---|---|---|---|---|---|
| main | 4 | 32 | German Quad | 8192 | 24.65 GB |
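The group size in the table also affects file size, because every group stores its own quantization metadata. The arithmetic can be sketched as follows, assuming one 16-bit scale and one 4-bit zero point per group. That layout is an assumption for illustration, the real packing in AutoAWQ may differ, and the function name is hypothetical.

```python
def effective_bits_per_weight(bits=4, group_size=32, scale_bits=16, zero_bits=4):
    """Average storage per weight when each group stores one scale and one zero point."""
    return bits + (scale_bits + zero_bits) / group_size


if __name__ == "__main__":
    for gs in (32, 128):
        bpw = effective_bits_per_weight(group_size=gs)
        print(f"group size {gs}: {bpw:.3f} bits per weight")
```

Under these assumptions, group size 32 costs about 4.63 bits per weight versus about 4.16 for group size 128, which is the price paid for the finer-grained scales.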

How to easily download and use this model in text-generation-webui

Please make sure you're using the latest version of text-generation-webui.

It is strongly recommended to use the text-generation-webui one-click-installers unless you're sure you know how to make a manual install.

- Click the Model tab.

- Under Download custom model or LoRA, enter LHC88/SauerkrautLM-Mixtral-8x7B-Instruct-AWQ.

- Click Download.

- The model will start downloading. Once it's finished it will say "Done".

- In the top left, click the refresh icon next to Model.

- In the Model dropdown, choose the model you just downloaded: SauerkrautLM-Mixtral-8x7B-Instruct-AWQ

- Select Loader: AutoAWQ.

- Click Load. The model will load and is then ready for use.

- If you want any custom settings, set them and then click Save settings for this model followed by Reload the Model in the top right.

- Once you're ready, click the Text Generation tab and enter a prompt to get started!

Multi-user inference server: vLLM

Documentation on installing and using vLLM can be found here.

- Please ensure you are using vLLM version 0.2.6 or later, the version confirmed above to support Mixtral AWQ models.

- When using vLLM as a server, pass the --quantization awq parameter.

For example:

python3 -m vllm.entrypoints.api_server --model LHC88/SauerkrautLM-Mixtral-8x7B-Instruct-AWQ --quantization awq --dtype auto

- When using vLLM from Python code, again set quantization="awq".

For example:

from vllm import LLM, SamplingParams

prompts = [

    "Tell me about AI",

    "Write a story about llamas",

    "What is 291 - 150?",

    "How much wood would a woodchuck chuck if a woodchuck could chuck wood?",

]

# Plain string (not an f-string), so that .format() below can fill in {prompt}

prompt_template = '''[INST] {prompt} [/INST]

'''

prompts = [prompt_template.format(prompt=prompt) for prompt in prompts]

sampling_params = SamplingParams(temperature=0.8, top_p=0.95)

llm = LLM(model="LHC88/SauerkrautLM-Mixtral-8x7B-Instruct-AWQ", quantization="awq", dtype="auto")

outputs = llm.generate(prompts, sampling_params)

# Print the outputs.

for output in outputs:

    prompt = output.prompt

    generated_text = output.outputs[0].text

    print(f"Prompt: {prompt!r}, Generated text: {generated_text!r}")
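When the model is served with the api_server command shown above, it can be queried over HTTP. The following is a sketch under assumptions that should be checked against your installed vLLM version: that the demo server listens on port 8000, exposes a POST /generate endpoint taking a JSON body with "prompt" plus sampling parameters, and returns a JSON object with a "text" list. The helper names are hypothetical.

```python
import json
import urllib.request

# Assumed default address of vllm.entrypoints.api_server; adjust to your deployment.
SERVER_URL = "http://localhost:8000/generate"


def build_payload(prompt, max_tokens=256, temperature=0.8, top_p=0.95):
    """Wrap the prompt in the Mistral template and attach sampling parameters."""
    return {
        "prompt": f"[INST] {prompt} [/INST]",
        "max_tokens": max_tokens,
        "temperature": temperature,
        "top_p": top_p,
    }


def generate(prompt, url=SERVER_URL):
    """POST one request and return the list of generated strings."""
    data = json.dumps(build_payload(prompt)).encode("utf-8")
    request = urllib.request.Request(
        url, data=data, headers={"Content-Type": "application/json"}
    )
    with urllib.request.urlopen(request) as response:
        return json.loads(response.read())["text"]


# Example (needs a running server):
#     print(generate("Tell me about AI")[0])
```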

Multi-user inference server: Hugging Face Text Generation Inference (TGI)

Use TGI version 1.1.0 or later. The official Docker container is: ghcr.io/huggingface/text-generation-inference:1.1.0

Example Docker parameters:

--model-id LHC88/SauerkrautLM-Mixtral-8x7B-Instruct-AWQ --port 3000 --quantize awq --max-input-length 3696 --max-total-tokens 4096 --max-batch-prefill-tokens 4096
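The example parameters imply a token budget: the prompt length plus the requested number of new tokens has to fit within --max-total-tokens. A small sketch of that arithmetic follows, assuming both limits are inclusive. The helper name is hypothetical.

```python
MAX_INPUT_LENGTH = 3696   # --max-input-length from the example above
MAX_TOTAL_TOKENS = 4096   # --max-total-tokens from the example above


def max_new_tokens_allowed(prompt_tokens,
                           max_input=MAX_INPUT_LENGTH,
                           max_total=MAX_TOTAL_TOKENS):
    """Largest max_new_tokens a request with this prompt length can ask for."""
    if prompt_tokens > max_input:
        raise ValueError(f"prompt has {prompt_tokens} tokens, limit is {max_input}")
    return max_total - prompt_tokens


if __name__ == "__main__":
    for length in (128, 1000, 3696):
        print(length, "prompt tokens ->", max_new_tokens_allowed(length), "new tokens at most")
```

With these settings, a prompt that uses the full 3696 input tokens leaves room for 400 generated tokens.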

Example Python code for interfacing with TGI (requires huggingface-hub 0.17.0 or later):

pip3 install huggingface-hub

from huggingface_hub import InferenceClient

endpoint_url = "https://your-endpoint-url-here"

prompt = "Tell me about AI"

prompt_template=f'''[INST] {prompt} [/INST]

'''

client = InferenceClient(endpoint_url)

# Send the templated prompt, not the raw prompt

response = client.text_generation(

    prompt_template,

    max_new_tokens=128,

    do_sample=True,

    temperature=0.7,

    top_p=0.95,

    top_k=40,

    repetition_penalty=1.1,

)

print("Model output:", response)

Inference from Python code using Transformers

Install the necessary packages

- Requires: Transformers 4.35.0 or later.

- Requires: AutoAWQ 0.1.6 or later (0.1.8 or later for Mixtral AWQ models, as noted above).

pip3 install --upgrade "autoawq>=0.1.6" "transformers>=4.35.0"

Note that if you are using PyTorch 2.0.1, the above AutoAWQ command will automatically upgrade you to PyTorch 2.1.0.

If you are using CUDA 11.8 and wish to continue using PyTorch 2.0.1, instead run this command:

pip3 install https://github.com/casper-hansen/AutoAWQ/releases/download/v0.1.6/autoawq-0.1.6+cu118-cp310-cp310-linux_x86_64.whl

If you have problems installing AutoAWQ using the pre-built wheels, install it from source instead:

pip3 uninstall -y autoawq

git clone https://github.com/casper-hansen/AutoAWQ

cd AutoAWQ

pip3 install .

Transformers example code (requires Transformers 4.35.0 and later)

from transformers import AutoModelForCausalLM, AutoTokenizer, TextStreamer

model_name_or_path = "LHC88/SauerkrautLM-Mixtral-8x7B-Instruct-AWQ"

tokenizer = AutoTokenizer.from_pretrained(model_name_or_path)

model = AutoModelForCausalLM.from_pretrained(

    model_name_or_path,

    low_cpu_mem_usage=True,

    device_map="cuda:0"

)

# Using the text streamer to stream output one token at a time

streamer = TextStreamer(tokenizer, skip_prompt=True, skip_special_tokens=True)

prompt = "Tell me about AI"

prompt_template=f'''[INST] {prompt} [/INST]

'''

# Convert prompt to tokens

tokens = tokenizer(

    prompt_template,

    return_tensors='pt'

).input_ids.cuda()

generation_params = {

    "do_sample": True,

    "temperature": 0.7,

    "top_p": 0.95,

    "top_k": 40,

    "max_new_tokens": 512,

    "repetition_penalty": 1.1

}

# Generate streamed output, visible one token at a time

generation_output = model.generate(

    tokens,

    streamer=streamer,

    **generation_params

)

# Generation without a streamer, which will include the prompt in the output

generation_output = model.generate(

    tokens,

    **generation_params

)

# Get the tokens from the output, decode them, print them

token_output = generation_output[0]

text_output = tokenizer.decode(token_output)

print("model.generate output: ", text_output)

# Inference is also possible via Transformers' pipeline

from transformers import pipeline

pipe = pipeline(

    "text-generation",

    model=model,

    tokenizer=tokenizer,

    **generation_params

)

pipe_output = pipe(prompt_template)[0]['generated_text']

print("pipeline output: ", pipe_output)
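As the comment in the example notes, the non-streamed generate call returns the prompt tokens followed by the new tokens. One common way to keep only the completion is to slice off the first prompt-length tokens before decoding. The sketch below shows the idea with plain lists and made-up token ids. The helper name is hypothetical.

```python
def strip_prompt(output_ids, prompt_length):
    """Return only the newly generated token ids (everything after the prompt)."""
    return output_ids[prompt_length:]


if __name__ == "__main__":
    prompt_ids = [1, 733, 16289, 28793]        # made-up ids standing in for the prompt
    output_ids = prompt_ids + [5230, 349, 2]   # made-up ids for prompt + completion
    print(strip_prompt(output_ids, len(prompt_ids)))
```

In the Transformers example, the equivalent would be decoding generation_output[0][tokens.shape[1]:] with tokenizer.decode(..., skip_special_tokens=True).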

Compatibility

The files provided are tested to work with:

- text-generation-webui using Loader: AutoAWQ.

- vLLM version 0.2.0 and later.

- Hugging Face Text Generation Inference (TGI) version 1.1.0 and later.

- Transformers version 4.35.0 and later.

- AutoAWQ version 0.1.1 and later.

Original model card: VAGO solutions's SauerkrautLM Mixtral 8X7B Instruct

VAGO solutions SauerkrautLM-Mixtral-8x7B-Instruct

Introducing SauerkrautLM-Mixtral-8x7B-Instruct – our Sauerkraut version of the powerful Mixtral-8x7B-Instruct! Aligned with DPO

Table of Contents

- Overview of all SauerkrautLM-Mixtral models

- Model Details

- Evaluation

- Disclaimer

- Contact

- Collaborations

- Acknowledgement

All SauerkrautLM-Mixtral Models

| Model | HF | GPTQ | GGUF | AWQ |
|---|---|---|---|---|
| SauerkrautLM-Mixtral-8x7B-Instruct | Link | coming soon | coming soon | coming soon |
| SauerkrautLM-Mixtral-8x7B | Link | coming soon | coming soon | coming soon |

Model Details

SauerkrautLM-Mixtral-8x7B-Instruct

- Model Type: SauerkrautLM-Mixtral-8x7B-Instruct-v0.1 is a Mixture of Experts (MoE) Model based on mistralai/Mixtral-8x7B-Instruct-v0.1

- Language(s): English, German, French, Italian, Spanish

- License: APACHE 2.0

- Contact: Website David Golchinfar

Training Dataset:

SauerkrautLM-Mixtral-8x7B-Instruct was trained with a mix of German data augmentation and translated data.

It was aligned through DPO with our new German SauerkrautLM-DPO dataset, which uses parts of the SFT SauerkrautLM dataset as chosen answers and answers from Sauerkraut-7b-HerO as rejected answers. We added further translated parts of HuggingFaceH4/ultrafeedback_binarized (our dataset does not contain any TruthfulQA prompts; see Data Contamination Test Results) and argilla/distilabel-math-preference-dpo.

We found that a simple translation of training data alone can lead to unnatural German phrasing.

Data augmentation techniques were used to ensure grammatical and syntactical correctness and more natural German wording in our training data.
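DPO trains on pairs of chosen and rejected answers, pushing the model to prefer the chosen one relative to a frozen reference model. Below is a minimal sketch of the standard DPO loss for a single pair. It is not VAGO solutions' training code: the value beta=0.1 is an illustrative assumption, and the log-probabilities in the demo are made-up numbers.

```python
import math


def dpo_loss(policy_chosen_logp, policy_rejected_logp,
             ref_chosen_logp, ref_rejected_logp, beta=0.1):
    """Standard DPO loss for one (chosen, rejected) pair of sequence log-probabilities."""
    chosen_reward = beta * (policy_chosen_logp - ref_chosen_logp)
    rejected_reward = beta * (policy_rejected_logp - ref_rejected_logp)
    margin = chosen_reward - rejected_reward
    # -log(sigmoid(margin)), written as log(1 + exp(-margin))
    return math.log1p(math.exp(-margin))


if __name__ == "__main__":
    # Policy identical to the reference: no preference learned yet.
    print(dpo_loss(-10.0, -10.0, -10.0, -10.0))
    # Policy now favours the chosen answer: the loss goes down.
    print(dpo_loss(-5.0, -15.0, -10.0, -10.0))
```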

Data Contamination Test Results

Some models on the HuggingFace leaderboard had problems with wrong data getting mixed in. We checked our SauerkrautLM-DPO dataset with a special test [1] on a smaller model for this problem. The HuggingFace team used the same methods [2, 3].

Our results, with all "result < 0.1, %" values well below 0.9, indicate that our dataset is free from contamination.

The data contamination test results for HellaSwag and Winogrande will be added once [1] supports them.

| Dataset | ARC | MMLU | TruthfulQA | GSM8K |
|---|---|---|---|---|
| SauerkrautLM-DPO | result < 0.1, %: 0.0 | result < 0.1, %: 0.09 | result < 0.1, %: 0.13 | result < 0.1, %: 0.16 |

[1] https://github.com/swj0419/detect-pretrain-code-contamination

Prompt Template:

[INST] Instruction [/INST] Model answer [INST] Follow-up instruction [/INST]
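The multi-turn template above can be assembled programmatically. Here is a minimal sketch with a hypothetical helper `build_prompt`. It follows the template exactly as written in this card and does not add any begin- or end-of-sequence tokens, which a tokenizer or chat template may handle separately.

```python
def build_prompt(turns, next_instruction):
    """Build a prompt from earlier (instruction, model_answer) pairs plus a new instruction."""
    parts = [f"[INST] {instruction} [/INST] {answer}" for instruction, answer in turns]
    parts.append(f"[INST] {next_instruction} [/INST]")
    return " ".join(parts)


if __name__ == "__main__":
    history = [("Instruction", "Model answer")]
    print(build_prompt(history, "Follow-up instruction"))
```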

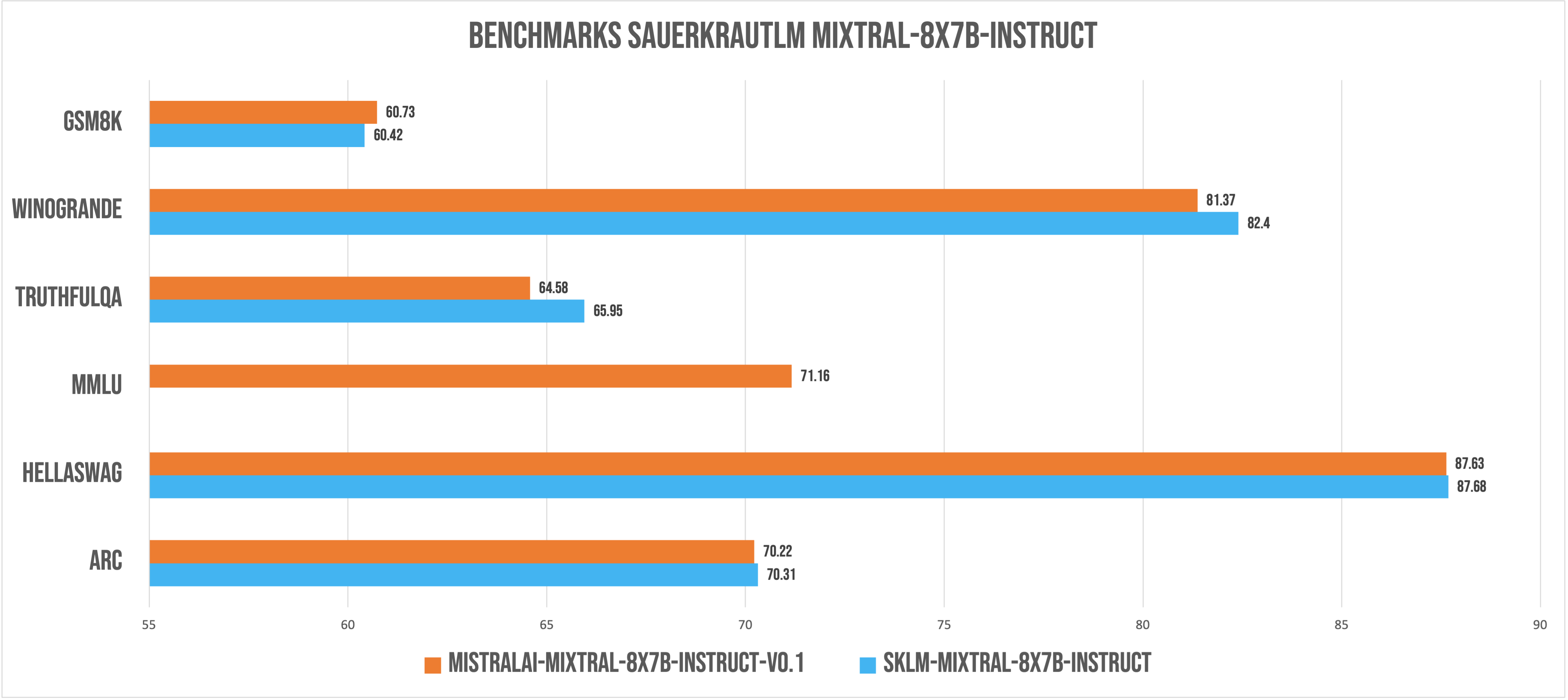

Evaluation

*evaluated with lm-evaluation-harness v0.3.0 - mmlu coming soon

*All benchmarks were performed with a sliding window of 4096. New Benchmarks with Sliding Window null coming soon

Disclaimer

We must inform users that, despite our best efforts in data cleansing, the possibility of uncensored content slipping through cannot be entirely ruled out, and we cannot guarantee consistently appropriate behavior. Therefore, if you encounter any issues or come across inappropriate content, we kindly request that you inform us through the contact information provided. Additionally, it is essential to understand that the licensing of these models does not constitute legal advice. We are not held responsible for the actions of third parties who utilize our models. These models may be employed for commercial purposes, and the Apache 2.0 license remains applicable and is included with the model files.

Contact

If you are interested in customized LLMs for business applications, please get in contact with us via our website or contact Dr. Daryoush Vaziri. We are also grateful for your feedback and suggestions.

Collaborations

We are also keenly seeking support and investment for our startup, VAGO solutions, where we continuously advance the development of robust language models designed to address a diverse range of purposes and requirements. If the prospect of collaboratively navigating future challenges excites you, we warmly invite you to reach out to us.

Acknowledgement

Many thanks to argilla and Huggingface for providing such valuable datasets to the Open-Source community. And of course a big thanks to MistralAI for providing the open source community with their latest technology!
